5 Steps for Effective Competitive Content Analysis

42% of B2B marketers say lack of high-quality data is one of the biggest barriers to proving marketing impact, according to LinkedIn and Ipsos. And if you're a Demand Gen Manager trying to build effective competitive content, you've probably felt the weekly version of this: one stakeholder wants more comparison pages, another wants brand safety, and somehow you're the one stitching together scraps in a doc at 6:30 PM.

A lot of teams think competitive content is a writing problem. It isn't. It's an execution problem. And now that AI engines are part of the buying journey, that mistake costs more than it used to.

Key Takeaways:

- Effective competitive content starts with verified inputs, not better prompts.

- If your team can't explain a competitor fairly in 3 sentences, you're not ready to publish a comparison page.

- The 3-Layer Gap framework explains why competitive pages slow down as teams grow: strategy gap, context gap, and review gap.

- For most B2B SaaS teams, the right benchmark is 6-10 high-intent competitive pages before trying to scale to 50.

- GEO changes the game: consistency and structure now matter as much as rankings.

- Competitive content works best when it's tied to funnel stages, use cases, and real objections.

- If you're trying to build this without a system, you'll usually trade speed for trust.

If you want to see what a governed approach to this looks like in practice, you can request a demo.

Why Most Competitive Content Falls Apart Before It Starts

Competitive content fails because most teams treat it like an isolated asset instead of a repeatable operating motion. The page looks simple on the surface. A few comparisons. A feature table. Some positioning. But the actual work behind effective competitive content involves product truth, market framing, legal caution, SEO structure, buyer objections, and internal alignment. Miss one layer and the whole thing gets shaky.

The real bottleneck is not writing speed

Back in the day when I was running content-heavy teams, the pattern was always the same. Writing was rarely the true blocker. The blocker was context transfer. One person knew the product nuance. Another knew the buyer objection. Someone else knew what legal would push back on. And the writer sat in the middle trying to turn half-complete knowledge into something authoritative.

That same thing shows up in competitive content all the time. A demand gen manager opens a doc on Tuesday morning, grabs a few notes from sales, pulls screenshots from old decks, checks a competitor's homepage, then waits two days for product marketing to confirm one claim. By Friday, the draft exists, but no one trusts it enough to publish. You feel productive because words got written. But nothing actually shipped.

That's the first hidden rule of effective competitive content: if truth is scattered across Slack, decks, old battlecards, and memory, content will stall. I call this the Fragmented Truth Trap. If your inputs live in 4 or more places, review cycles almost always double.

The old SEO playbook doesn't carry you far enough

A few years ago, you could get away with thinner comparison pages. Rank for "[competitor] alternatives," add a table, mention pricing, call it a day. That still gets you indexed. Sometimes it even gets you clicks. But GEO raises the bar because AI engines aren't just matching keywords. They're synthesizing who sounds credible, consistent, and specific.

Google rankings are table stakes now. That's the part a lot of teams still haven't fully absorbed. The real fight is whether search engines and LLMs see your brand as a reliable source worth surfacing when buyers ask comparative questions. That takes more than stuffing in competitor names. It takes coherent positioning across many pages, repeated clearly enough that machines can trust the pattern.

Fair point if you're thinking, "We've ranked before without all this structure." Plenty of teams have. But ranking one page and building an effective competitive content program are two very different things. One is a win. The other is a system.

The cost shows up in rework, not just missed traffic

Most teams measure the wrong cost. They look at whether the page ranked. They don't look at how many people had to touch it. They don't track how many claims got softened, how many rewrites happened, or how long the page sat in review.

At one SaaS company, we had strong founder-led ideas and solid opinions, but once content had to move through more people, quality got weird. Not terrible. Just diluted. Same thing happens with comparison content. A bold, useful point turns into a mushy paragraph because three reviewers each trim one sharp edge off it. By the end, the page is safe and forgettable.

That hurts more than most people realize. You lose time. You lose trust internally. You lose the habit of shipping. And eventually you stop trying to build competitive pages at all, which is brutal when those are often some of the highest-intent assets in your funnel. So the real question becomes obvious: what do the steps for effective competitive content actually look like when you want pages that ship and hold up?

Steps for Effective Competitive Content That Actually Scale

Effective competitive content comes from a system with clear inputs, clear boundaries, and clear publishing rules. Not from heroic effort. If you want pages that support pipeline, you need a process that can survive growth, new stakeholders, and more volume without drifting. That's why the most useful steps for effective competitive content are less about writing tricks and more about operational design.

Diagnose whether you're ready before you publish anything

Before you create a single comparison page, you need to know what state you're in. This is the Readiness Ladder. It has 4 levels, and most teams overestimate where they are.

- Level 1 is Reactive. Sales asks for a page, marketing scrambles, nobody agrees on positioning.

- Level 2 is Documented. You have battlecards, rough narratives, maybe some approved claims.

- Level 3 is Governed. Product truth, buyer objections, use cases, and narrative framing are centralized.

- Level 4 is Scalable. You can publish multiple competitive assets per month without dragging executives into every review.

You can diagnose your level with 4 questions:

- Can your team explain each competitor fairly without opening five tabs?

- Do you have approved boundaries on what you will and won't claim?

- Are your core use cases mapped to competitor comparisons?

- Can a non-founder publish a draft without executive rewrite?

If you answered no to 3 or more, don't scale yet. Build the inputs first. Honestly, this saves a ridiculous amount of pain later.
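If it helps to make that check mechanical, here is a minimal sketch in Python. Only the four questions and the "3 or more no's" rule come from the ladder above. The function name and the question keys are made up for illustration.

```python
# Hypothetical sketch of the 4-question readiness check.
# The keys and the function name are illustrative assumptions, not a real tool.

READINESS_QUESTIONS = {
    "explain_fairly": "Can your team explain each competitor fairly without opening five tabs?",
    "approved_boundaries": "Do you have approved boundaries on what you will and won't claim?",
    "use_cases_mapped": "Are your core use cases mapped to competitor comparisons?",
    "non_founder_publish": "Can a non-founder publish a draft without executive rewrite?",
}


def should_hold_off_scaling(answers: dict[str, bool]) -> bool:
    """Return True when 3 or more of the 4 questions were answered 'no'."""
    missing = set(READINESS_QUESTIONS) - set(answers)
    if missing:
        raise ValueError(f"Unanswered questions: {sorted(missing)}")
    no_count = sum(1 for key in READINESS_QUESTIONS if not answers[key])
    return no_count >= 3


answers = {
    "explain_fairly": False,
    "approved_boundaries": False,
    "use_cases_mapped": True,
    "non_founder_publish": False,
}
print(should_hold_off_scaling(answers))  # True: three "no" answers, so build inputs first
```

The sketch only flags the "don't scale yet" case. Fewer than three no's doesn't prove you're ready. It just means this particular check didn't stop you.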

Start with buyer intent, not competitor obsession

A lot of teams build pages around competitors as if the competitor is the story. That's backwards. Buyers don't wake up thinking, "I'd love to read a balanced comparison matrix." They're trying to solve a problem, reduce risk, or justify a purchase.

So the first real step for effective competitive content is buyer-intent mapping. Take each competitor topic and force it through what I call the 3Q filter:

- What triggered this comparison search?

- What risk is the buyer trying to reduce?

- What proof would help them move forward?

If the trigger is "we've outgrown a tool," the page should focus on migration friction, depth, and operational limits. If the trigger is "my team needs better governance," then the comparison should lean into control, consistency, and review burden. Same competitor. Totally different page angle.

This is where a lot of content gets generic. It names features but ignores the buying context. And buying context is what makes content persuasive. Not hype. Not chest-beating. Relevance.

Build the page from verified truth blocks

This is where teams usually want to jump straight into drafting. Bad move. Competitive content is one of the few formats where writing should come after evidence assembly, not before.

The framework I like here is Truth Blocks. Every page should be built from 5 blocks:

- product facts you can defend

- competitor facts you can verify

- buyer objections you hear repeatedly

- use case differences that matter in evaluation

- clear boundaries on what you won't claim

Once you have those blocks, the page gets much easier to write. Without them, every paragraph becomes a debate.

There's good reason for this. According to Google's guidance on helpful content, content that shows firsthand expertise and clear purpose tends to align better with what search systems want to reward. Competitive pages need that even more, because weak claims get spotted fast.

A simple threshold helps here: if more than 20% of a page depends on unverified assumptions, don't publish it. Park it. Fix the fact base. That one rule alone will improve the quality of your competitive content more than most style guides.
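The 20% rule is easy to turn into a quick pre-publish check. This is a rough sketch, and it rests on one big assumption: that you measure "20% of a page" by counting individual claims. The article doesn't say how to measure it, so the `Claim` class and both function names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    verified: bool  # True only if someone can point to a source for it


def unverified_share(claims: list[Claim]) -> float:
    """Fraction of the page's claims that are still unverified."""
    if not claims:
        return 0.0
    return sum(1 for claim in claims if not claim.verified) / len(claims)


def should_park(claims: list[Claim], threshold: float = 0.20) -> bool:
    """Park the page when more than `threshold` of its claims are unverified.

    Assumption: share is measured by claim count, not by word count.
    """
    return unverified_share(claims) > threshold


draft = [
    Claim("We support role-based review queues", verified=True),
    Claim("Competitor X has no audit log", verified=False),
    Claim("Competitor X pricing starts per seat", verified=False),
    Claim("Our onboarding takes under a week", verified=True),
    Claim("Buyers often ask about migration effort", verified=True),
]
print(should_park(draft))  # True: 2 of 5 claims (40%) are unverified
```

The claim texts are placeholders. The point is the shape: list the claims, mark what's verified, and let the ratio decide whether the page moves forward or gets parked.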

Use a repeatable page architecture, not one-off creativity

Creativity matters. But in competitive content, consistency matters more. Buyers compare pages. Search engines compare pages. AI engines compare patterns. If each page uses a different logic, your library won't compound.

A solid architecture for effective competitive content usually includes:

- a direct framing of who each tool is for

- use-case differences

- strengths and limitations on both sides

- workflow or governance implications

- decision guidance for a specific buyer type

That's it. Clean. Predictable. Useful.

Back when I saw content libraries really work, the big unlock was always structure. At Steamfeed, traffic jumps happened at 500 pages, 1,000 pages, 2,500 pages, 5,000 pages, then 10,000 pages because depth and breadth started reinforcing each other. Most pages weren't blockbusters. Far from it. But the library had shape. That same principle applies here. You don't need 100 random comparison pages. You need 20 pages built on the same bones.

Critics might say this makes content feel templated. Fair. It can, if the examples and insight are lazy. But a repeatable structure with sharp inputs beats a custom mess every single time.

Tie every comparison page to one use case and one funnel job

This is the step most teams skip, and I think it's one of the costliest mistakes. They create a comparison page for traffic, then hope sales or campaigns can use it later. That's upside down.

Each page should have one primary use case and one funnel job. One. Not five. If a page is for "teams trying to replace scattered AI prompting with governed execution," say that clearly. If it's for "demand gen managers building a competitive content engine," build around that workflow. Don't cram in every possible reader.

I use a simple rule here. If the page can't answer "who is this for?" in 12 words or fewer, it's too broad.

A before-and-after makes this obvious. Before: "This page compares Platform A and Platform B across features, pricing, and use cases." After: "This page helps lean SaaS teams decide which system can produce governed competitive content without adding review chaos." The second one gives the writer direction. It also gives the buyer a reason to care.
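The 12-word rule itself is simple enough to check automatically. A minimal sketch, assuming words are whatever is separated by whitespace (the function name is hypothetical):

```python
def audience_is_focused(answer: str, max_words: int = 12) -> bool:
    """True when the 'who is this for?' answer fits in `max_words` words."""
    word_count = len(answer.split())  # words = whitespace-separated tokens
    return 0 < word_count <= max_words


print(audience_is_focused("demand gen managers building a competitive content engine"))  # True (8 words)
print(audience_is_focused(
    "marketers, founders, sales leaders, product teams, agencies, and anyone "
    "else who compares software tools"
))  # False (15 words)
```

It won't tell you whether the answer is any good, only whether it's focused enough to fit.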

That focus carries into distribution too. Sales can use it. Demand gen can route traffic to it. AI engines have a cleaner signal. Everybody wins. If you're curious what this looks like in an actual governed system, you can request a demo.

Publish in clusters, not one-offs

One comparison page rarely changes much. A cluster does. That's because effective competitive content isn't just about winning a single keyword. It's about building a body of evidence around your market position.

The cluster model I like is 6-10 pages to start:

- 2 direct competitor comparisons

- 2 alternatives pages

- 1 category definition page

- 1 objection-handling or FAQ page

- 1 use-case-specific decision page

- 1 buyer enablement page tied to implementation or migration

- optional 1-2 pages for adjacent competitor angles

That's your Minimum Effective Set. Below 6 pages, it's hard to establish a strong narrative footprint. Above 10 pages, you start getting real pattern recognition across search and buyer journeys.

Not every team needs that on day one. Pre-product startups don't. Solo creators don't. Big enterprises with huge in-house editorial teams may solve this differently too. But for scaling B2B SaaS teams sitting in that awkward middle, 6-10 governed assets is usually where competitive content starts pulling its weight.
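To see how far a library is from the Minimum Effective Set, you can compare what's published against the mix listed above. This is a sketch under stated assumptions: the page-type labels are invented keys, the optional 1-2 adjacent-angle pages are left out, and the counts are simply the ones from the list.

```python
from collections import Counter

# Starting mix from the cluster list above. The keys are made-up labels.
MINIMUM_EFFECTIVE_SET = {
    "competitor_comparison": 2,
    "alternatives": 2,
    "category_definition": 1,
    "objection_faq": 1,
    "use_case_decision": 1,
    "buyer_enablement": 1,
}


def cluster_gaps(page_types: list[str]) -> dict[str, int]:
    """Return how many pages of each type are still missing from the cluster."""
    have = Counter(page_types)
    return {
        page_type: needed - have[page_type]
        for page_type, needed in MINIMUM_EFFECTIVE_SET.items()
        if have[page_type] < needed
    }


published = ["competitor_comparison", "alternatives", "alternatives", "objection_faq"]
print(cluster_gaps(published))
# {'competitor_comparison': 1, 'category_definition': 1,
#  'use_case_decision': 1, 'buyer_enablement': 1}
```

An empty result means the core mix is covered. It says nothing about whether the pages are any good, which is what the earlier steps are for.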

How Oleno Turns Competitive Content Into a System

Oleno turns competitive content from scattered work into a governed production system. Instead of relying on whoever has context this week, it centralizes the truth, applies your market point of view, and runs structured content jobs against that foundation. That's a very different model from asking AI for a draft and hoping review catches what went wrong.

Governance first, then competitive execution

The first thing Oleno does well is separate governance from output. That matters a lot in competitive content because this is where teams get nervous. For good reason. One sloppy claim can create a mess internally and externally.

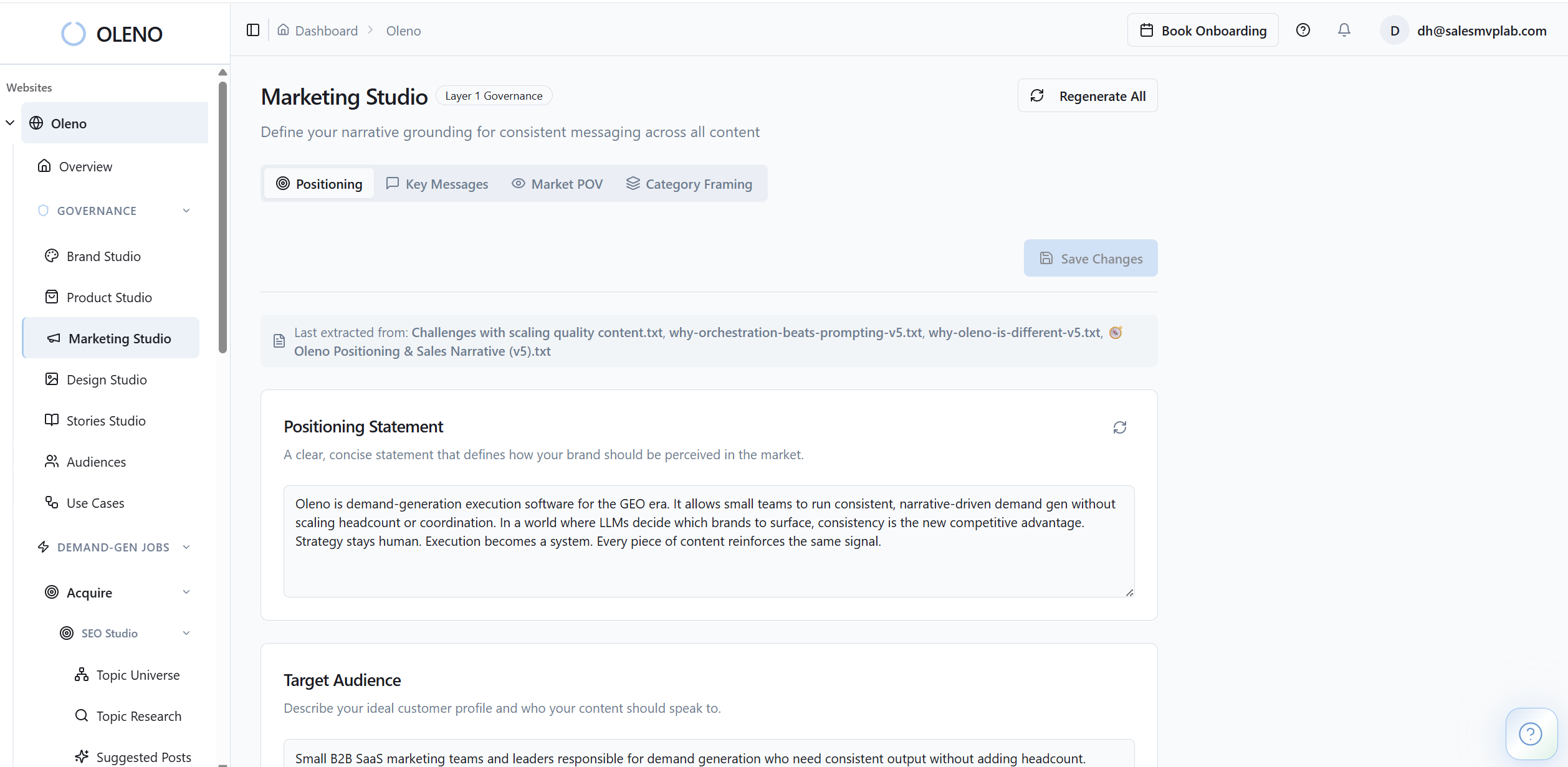

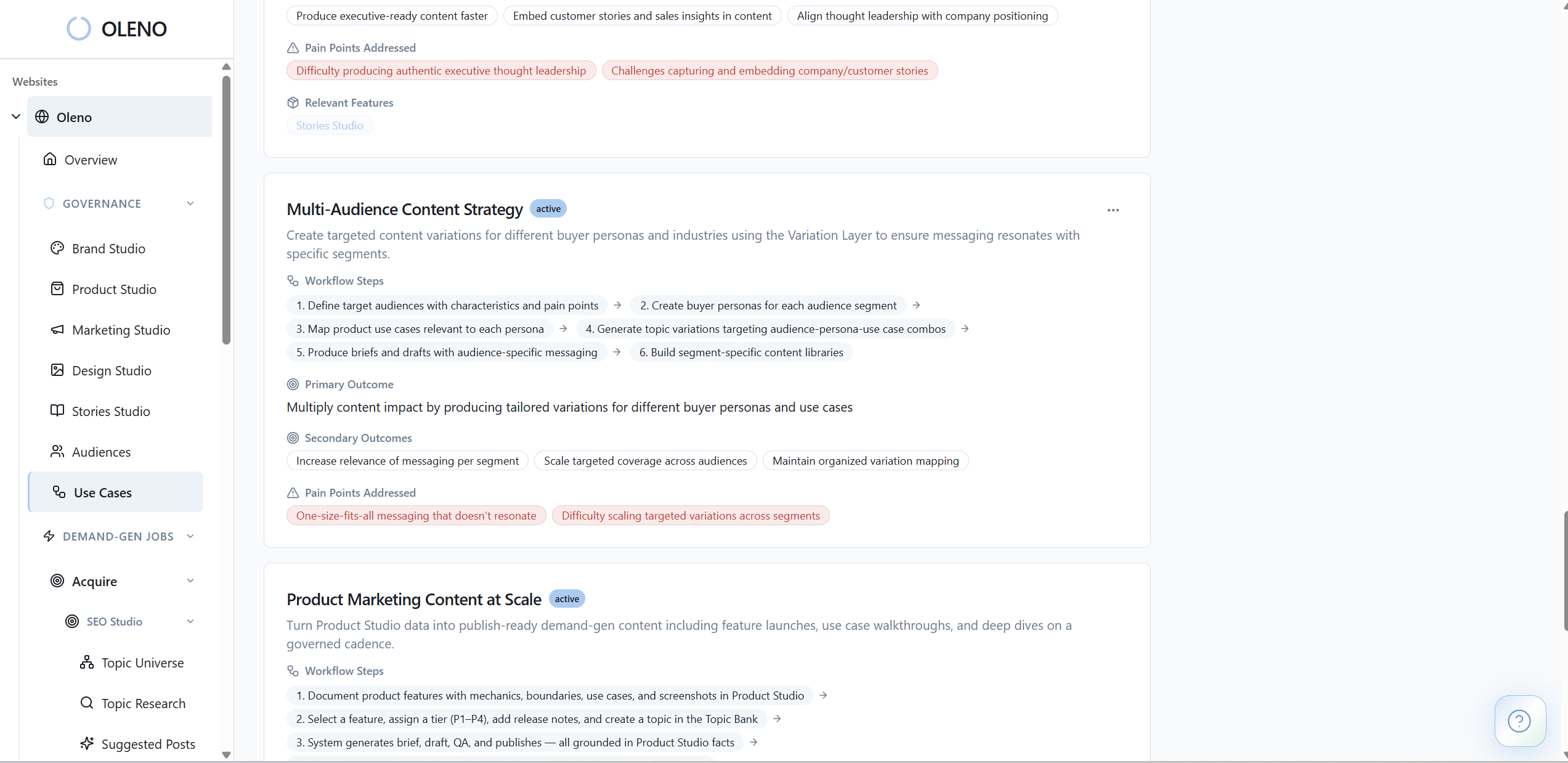

Marketing Studio gives the system your category framing, key messages, and narrative stance, so your comparisons don't drift into bland neutral copy. Product Studio keeps approved product truth and boundaries in one place, which reduces the risk of invented claims or outdated positioning. And Use Case Studio plus Audience & Persona Targeting makes the same comparison topic land differently for a demand gen manager than it would for a CMO or PMM.

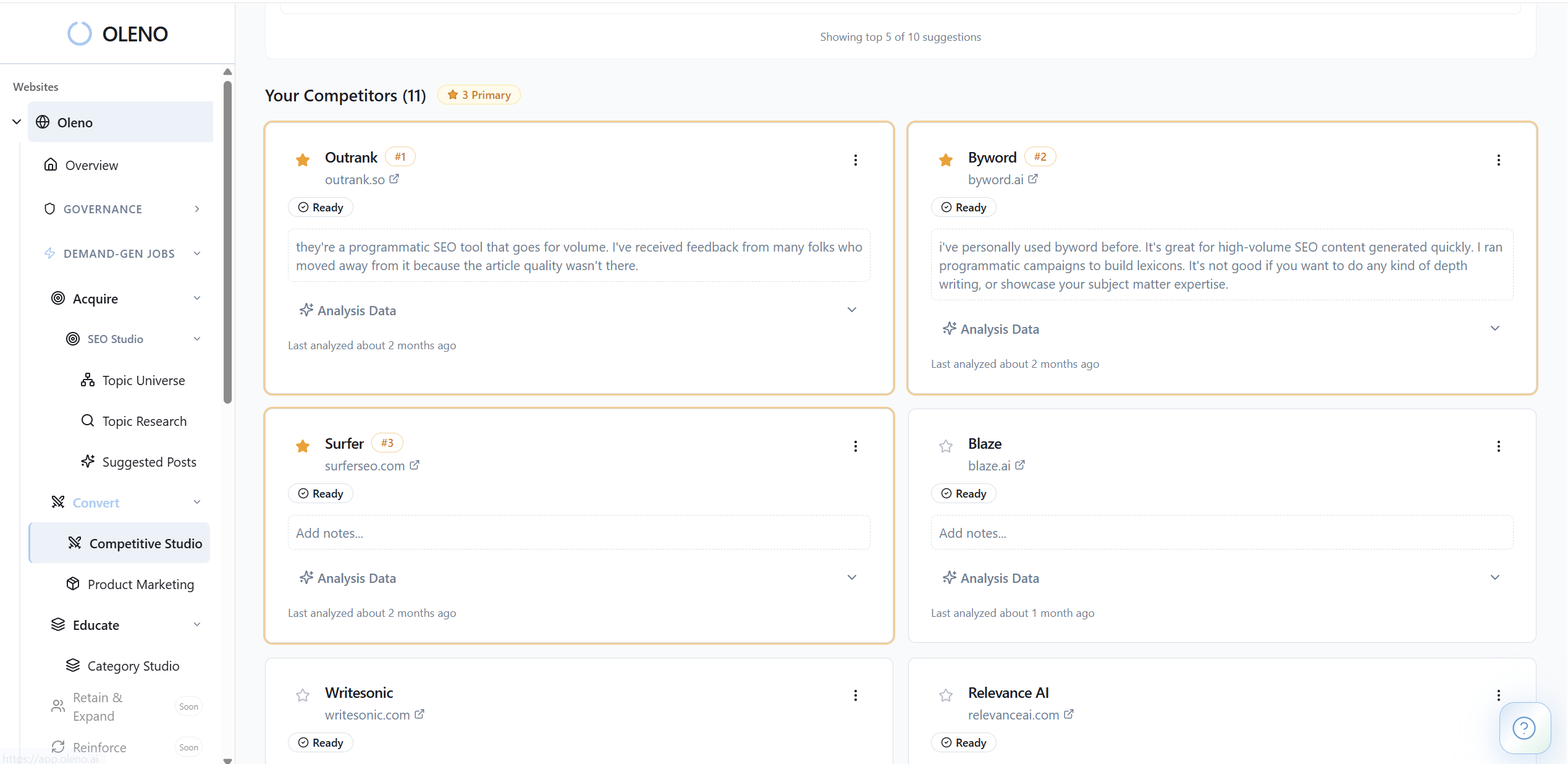

Then Competitive Studio runs on top of that. You define competitors once, it builds a competitive knowledge base from those configured sources, and the studio generates structured evaluation content with fairness rules and attribution built in. That means you're not recreating the fact base from scratch every time. Oleno is doing the repetitive coordination work that normally slows teams down.

The pipeline is built for volume without narrative drift

This is the second big thing. Oleno isn't trying to replace strategy. It's trying to make strategy executable at scale.

Programmatic SEO Studio helps build acquisition content at a steady cadence, while Buyer Enablement Studio handles decision-support content like FAQ pages and objection-handling assets. Orchestrator schedules approved topics by blueprint and quota, so the system can keep moving without someone manually pushing every task forward. And Quality Gate runs 80+ automated checks before anything reaches the review queue, including voice, structure, and grounding checks.

That combo matters because the real cost in competitive content isn't drafting. It's rework. It's waiting. It's fixing drift. Oleno cuts into that by using governed inputs up front and automated QA before publication. Worth saying clearly: it doesn't do technical SEO, rank tracking, paid media, or campaign analytics. You still need those pieces in your stack. But on the content execution side, it gives lean teams a much more reliable way to produce competitive pages that hold together.

If you want to see how Oleno handles the governed side of competitive content production, book a demo.

Build Fewer Competitive Pages, But Make Them Hold Up

Effective competitive content is not about publishing faster for the sake of it. It's about making sure every comparison page can survive scrutiny from buyers, sales, product marketing, search engines, and now AI engines too.

Most teams don't need more prompts. They need a tighter system. One that starts with verified truth, ties pages to real buyer intent, and keeps execution from drifting as more people get involved.

That's the shift. Competitive content stops being a recurring fire drill and starts becoming an asset library that compounds. And once that happens, you stop guessing what to publish next. You start building a position the market can actually recognize.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions