7-Step Brief Checklist to Prevent Content Repetition in Saturated Markets

Most teams don’t notice repetition until the metrics flatten and the team starts debating headlines. By then, it’s late. You’ve already paid the cost in time, attention, and internal trust. The fix doesn’t sit in editing or “writing harder.” It lives in the brief. That’s the upstream lever.

I learned this the slow way. At PostBeyond, we could move fast when I wrote because I held the context in my head. Once I wasn’t writing every post, the drift started. Great people. Good writing. But the inputs were the same, so the output looked like everything else on the SERP. The lesson: stop hoping drafts surprise you. Engineer originality before writing starts.

Key Takeaways:

- Put differentiation gates in the brief so weak ideas never enter drafting

- Treat repetition as overlap across claims, sources, and structure—not word choice

- Quantify information gain at the outline stage and set pass/fail thresholds

- Add cooldown and saturation labels per cluster to stop cannibalization

- Tie novelty to specific H2/H3s and require first‑party examples or data

- Pre-spec visual requirements to show the new angle, not decorate it

- Use automated blockers so briefs fail fast when they lack new inputs

Why Your Content Team Keeps Repeating Itself

Teams repeat themselves because briefs don’t force novelty. When inputs mirror the SERP (same claims, same sources, same structure), you get familiar outlines with nicer prose. Rewrites help, but they’re slow and subjective. Hard acceptance criteria in the brief fix this at the source.

The real failure starts before writing

Repetition isn’t a drafting problem. It’s a brief problem. If your brief doesn’t require new claims, fresh sources, or a distinct angle, the fastest path is mimicry. Writers follow the outline to the letter, and you end up editing tone while the substance stays the same.

I’ve watched this play out. You send a writer “top pages to review,” plus a vague goal like “be original.” That pairing works like handcuffs: the references anchor the outline, and the goal gives no direction. They deliver clean sentences wrapped around the same arguments. You get stuck in rewrites over phrasing, not ideas. The fix isn’t more talent or more time. It’s stricter inputs.

So put the burden where it belongs. Demand a unique claim tied to a specific section. Require at least one first‑party example. Call out one ignored use‑case the SERP misses. When these show up in the brief, drafts finally have something new to say.

What counts as repetition in a saturated market?

Repetition is measurable. If your outline restates the top five results’ claims, cites the same sources, and mirrors their section order, you’re duplicating value—no matter how clever the wording. Change the verbs, and you still deliver the same argument.

Make this objective. Track coverage overlap across H2s. Count primary sources beyond “the usual suspects.” Identify novelty gaps by section, not just the article level. When you quantify sameness, you remove debates about “voice” masking shallow inputs.

And what about “write better”? Good prose can’t fix missing information gain; it only makes repetition more pleasant to read. In saturated markets, pleasant isn’t enough. The content needs to be new. If you want a quick lens on the dynamics at play, Parse.ly’s view on content saturation challenges is a useful backdrop. It won’t solve the problem for you, but it frames it well.

The Real Root Cause Lives Upstream in the Brief

Briefs often optimize for volume and length, not originality. Without rules for new claims, distinct sources, or use‑case specificity, teams copy what already “works.” That choice locks your post into the same proof points, so humans and AI treat it as interchangeable.

How do briefs become the bottleneck?

It’s easy to reward speed. “We need five posts this week.” So briefs lean on SEO keywords, generic H2 lists, and a couple of links to “inspiration.” No requirement for new inputs. No acceptance criteria tied to novelty. The path of least resistance becomes copy‑the‑winners.

What’s missing is governance. Set explicit thresholds—information gain, source diversity, angle specificity—before the draft starts. Add a field for “first‑party proof” and another for the exact section it appears in. Give editors a number to approve against, not a vibe to chase.

When briefs carry that weight, the draft flips from “summarize the SERP” to “defend our unique claim with evidence.” It’s a different assignment. And it’s finally measurable.

What traditional approaches miss

Most teams “do research” by scanning top results, then importing the winner’s outline. That’s structure theft. It bakes sameness into your narrative and citations before you’ve written a word. It also discourages risk; you’re anchoring to what’s already been said.

Try dual discovery instead. Identify the SERP’s blind spots and define your section‑level answers in snippet‑ready format. Simultaneously collect new inputs—data, examples, use‑cases—so you’re not just cleaning the same windows. If you want a quick market lens on spotting saturation patterns, this breakdown on identifying market saturation and staying ahead is a helpful frame, even if you’ll set stricter rules than it suggests.

Want to see how a governed pipeline changes this dynamic end to end? You can Generate 3 Free Test Articles and compare the inputs with the outputs.

The Hidden Cost of Shipping Another Lookalike Post

Repetition burns hours, fragments clusters, and inflates editorial rework. The costs aren’t abstract. They show up as lost weeks, muddled internal links, and missed windows. A simple cooldown plus a score threshold at the brief stage prevents most of it.

The math you can feel

Let’s pretend your average post takes 12 hours across research, writing, editing, and design. If a duplicate earns half the engagement and never ranks, you’ve burned 6 hours in opportunity cost. Four repeats in a month? That’s 24 hours you could’ve spent on gap‑filling work.
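If you want to rerun that math with your own numbers, here’s a tiny Python sketch. It assumes, like the example above, that a lookalike post’s wasted effort scales linearly with its engagement shortfall. The function name and parameters are illustrative, not a standard formula.

```python
def opportunity_cost_hours(hours_per_post: float, engagement_ratio: float, repeats: int) -> float:
    """Hours of effort lost to `repeats` lookalike posts.

    Assumption (matching the example): a post that earns `engagement_ratio`
    of normal engagement wastes the remaining share of its production time.
    """
    wasted_per_post = hours_per_post * (1 - engagement_ratio)
    return wasted_per_post * repeats


if __name__ == "__main__":
    print(opportunity_cost_hours(12, 0.5, 1))  # one lookalike post
    print(opportunity_cost_hours(12, 0.5, 4))  # four repeats in a month
```

Plug in your own hours and engagement ratio; the point is to make the cost visible before the calendar fills up.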

There’s also the cost of attention. Editors triage sameness late in the process. Designers rebuild visuals to “make it feel new.” Sales never sees a narrative they can use. All of that is drift you can reduce with brief‑level rules.

Zooming out, wasted content spend compounds. Marketing Week’s analysis arguing that advertising drives ROI per pound invested isn’t a one‑to‑one comparison, but its logic points to the real tradeoff: money and time redeployed to proven inputs pay you back. Repetition doesn’t.

When cannibalization drags clusters down

Lookalike posts split intent and siphon internal links. Crawlers see noise. Your “pillar” loses clarity, and authority stalls. The solution’s boring on purpose: label cluster saturation and enforce a cooldown. If you don’t have a new sub‑intent, you wait.

A simple governance rule—90 days before re‑covering the same intent—prevents accidental duplicates. More important, it forces the team to make a decision: refresh the canonical, or create a distinct spoke with clear angle shifts. Either outcome beats another fuzzy rewrite.

Editorial rework is the quiet tax here. You feel it last. But it’s the one that breaks trust internally. After the third “this reads like our March post” comment, people stop pushing. Clear rules bring the push back.

The Moment You Know It Is Repetitive

You can feel it the minute you hit publish. The headline, the sections, even the quotes—familiar. It won’t rank. It won’t move pipeline. That moment tells you something simple: your pre‑write checks were optional. Make them mandatory and it stops happening.

The 3pm publish you regret

We’ve all shipped it. By 3pm the doc is live, and you’re scrolling through it with a nagging sense of déjà vu. You recognize the section order from last quarter. The sources are the same. It’s new text, not new ideas.

That sinking feeling is a systems problem. If novelty isn’t required in the brief, it won’t appear in the draft. Give editors veto power with a numeric gate. Give PMMs a field to attach proprietary proof. Make “what’s new” visible before a word is written.

And remember, audiences notice repetition more than we admit. In advertising, for example, Epsilon found 88% of consumers notice repetitive ads. Content isn’t immune. The takeaway isn’t “never repeat.” It’s “repeat on purpose and add value.”

A quick story from the trenches

In a prior SaaS role, a sharp writer took twice as long to ship a weaker piece, not for lack of talent but for lack of upstream rules. They did what the brief allowed. We spent days editing around sameness because the inputs were generic. That’s the expensive way to learn.

When we added decision rules to the brief—unique claim, first‑party proof, ignored use‑case tied to a specific H3—the drift dropped. Timelines improved. Fewer political debates. People trusted the process because the criteria were visible and objective.

Who should own the stop button? You should. But give people handles. Editors need numeric thresholds. PMMs need a slot for proof. Designers need a checklist for the solution section. When rules are clear, speed returns.

A 7-Step Brief Checklist That Blocks Repetition Before Drafting

A brief can block repetition by enforcing novelty at the input level. Map saturation, choose measurable signals, score information gain, and set pass/fail rules. Then tie unique claims and visuals to specific sections so the new angle shows up where readers actually see it.

Step 1: Map the topic landscape and label saturation

Start with a quick topic audit. Group content into clusters, tag each as underserved, healthy, well‑covered, or saturated, and note the last publish date per intent. This isn’t about keywords; it’s about coverage clarity. If a cluster is saturated, the burden shifts to “what’s genuinely new?”

Record the cluster label and last publish date in the brief. Add a simple rule: saturated clusters require a new sub‑intent or a 90‑day cooldown. No exceptions without an explicit angle shift. You’re not blocking creativity—you’re making it visible.
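Here’s one way the Step 1 rule could look as code: a minimal Python sketch. `ClusterRecord` and `cluster_gate` are hypothetical names. The only rules taken from the checklist are the four saturation labels and the requirement that saturated clusters need either a new sub‑intent or a 90‑day cooldown.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

LABELS = {"underserved", "healthy", "well-covered", "saturated"}
COOLDOWN = timedelta(days=90)  # default cooldown for saturated clusters


@dataclass
class ClusterRecord:  # hypothetical record kept in the brief
    name: str
    label: str            # one of LABELS
    last_published: date  # last publish date for this intent


def cluster_gate(cluster: ClusterRecord, today: date,
                 new_sub_intent: Optional[str] = None) -> str:
    """Return "pass", or the reason a brief in this cluster is blocked."""
    if cluster.label not in LABELS:
        raise ValueError(f"unknown saturation label: {cluster.label!r}")
    if cluster.label != "saturated" or new_sub_intent:
        return "pass"  # not saturated, or the brief names a distinct sub-intent
    elapsed = today - cluster.last_published
    if elapsed >= COOLDOWN:
        return "pass"  # cooldown has run its course
    return f"blocked: saturated cluster, {(COOLDOWN - elapsed).days} days of cooldown left"


if __name__ == "__main__":
    c = ClusterRecord("brief checklists", "saturated", date(2024, 1, 1))
    print(cluster_gate(c, date(2024, 2, 1)))
    print(cluster_gate(c, date(2024, 2, 1), new_sub_intent="agency onboarding briefs"))
```

The explicit sub‑intent argument is the “explicit angle shift”: if nobody can fill it in, the gate holds.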

Step 2: Select three competitive research signals

Pick the signals you’ll measure every time. For example: coverage overlap across the top five pages, novelty gaps by section, and the count of primary sources beyond Wikipedia‑level references. These give you a quantitative read on sameness, not a gut feel.

Design a capture process you can repeat. Compute H2/H3 overlap, list missing claims, and tally the sources you’ll bring to the table. The brief should carry these raw values. Editors shouldn’t need a second tab to see where the novelty lives.
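Here’s a rough Python sketch of the overlap and source tally. It assumes two headings count as “the same” when their word sets have a Jaccard similarity of 0.5 or more, and that sources are compared as plain strings such as domains. Both choices are assumptions to tune, and every function name here is hypothetical.

```python
import re


def _words(heading: str) -> set[str]:
    """Lowercase word tokens with punctuation dropped."""
    return set(re.findall(r"[a-z0-9]+", heading.lower()))


def _jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def heading_overlap(ours: list[str], competitors: list[list[str]],
                    match_at: float = 0.5) -> float:
    """Share of our H2/H3s that closely match a heading in any competing outline."""
    theirs = [_words(h) for outline in competitors for h in outline]
    matched = sum(
        1 for h in ours
        if any(_jaccard(_words(h), t) >= match_at for t in theirs)
    )
    return matched / len(ours) if ours else 0.0


def new_sources(ours: set[str], competitors: list[set[str]]) -> set[str]:
    """Sources we cite that none of the competing pages cite."""
    return ours - set().union(*competitors)


if __name__ == "__main__":
    ours = ["What is content repetition?", "How to score information gain", "Our 2023 brief audit"]
    competitor_outlines = [["What is content repetition", "Why it matters"],
                           ["How to score information gain?", "Tools to try"]]
    print(f"H2/H3 overlap: {heading_overlap(ours, competitor_outlines):.0%}")
    print("New sources:", new_sources({"parse.ly", "internal-crm-export"},
                                      [{"parse.ly", "wikipedia.org"}]))
```

Paste the two printed values straight into the brief so editors see the raw numbers without opening another tab.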

Step 3: Calculate information gain and set thresholds

Score the brief 0–100 before drafting. New claims carry the most weight, then new sources, then under‑served use‑cases. Keep it simple at first: 30+ ships, 20–29 revises, 0–19 blocks. You can refine weights later as you learn.

Include a worked example in the brief so reviewers can see how the score was earned. Transparency prevents gaming, and it shortens approvals. You’ll find teams start aiming higher once the scoring is visible. Make the math public; it changes behavior.
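Here’s a hedged Python sketch of that scoring, including the worked‑example line a reviewer would see. The weight ordering (claims, then sources, then use‑cases) and the 30 / 20–29 / 0–19 bands come from this step. The specific weights of 15, 8, and 5 points are placeholders chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights: new claims count most, then new sources, then use-cases.
WEIGHTS = {"claims": 15, "sources": 8, "use_cases": 5}


@dataclass
class BriefInputs:
    new_claims: int
    new_sources: int
    underserved_use_cases: int


def information_gain(b: BriefInputs) -> int:
    """Weighted 0-100 score; anything above 100 is clamped."""
    raw = (WEIGHTS["claims"] * b.new_claims
           + WEIGHTS["sources"] * b.new_sources
           + WEIGHTS["use_cases"] * b.underserved_use_cases)
    return min(raw, 100)


def decision(score: int) -> str:
    """30+ ships, 20-29 revises, 0-19 blocks."""
    if score >= 30:
        return "ship"
    if score >= 20:
        return "revise"
    return "block"


def worked_example(b: BriefInputs) -> str:
    """The line reviewers see, so the score is never a black box."""
    score = information_gain(b)
    return (f"{b.new_claims} claims x {WEIGHTS['claims']} + "
            f"{b.new_sources} sources x {WEIGHTS['sources']} + "
            f"{b.underserved_use_cases} use-cases x {WEIGHTS['use_cases']} "
            f"= {score} -> {decision(score)}")


if __name__ == "__main__":
    print(worked_example(BriefInputs(new_claims=1, new_sources=1, underserved_use_cases=1)))
    print(worked_example(BriefInputs(new_claims=2, new_sources=1, underserved_use_cases=0)))
```

With these placeholder weights, one of each input lands just short of shipping. That is roughly the pressure you want: a single new claim isn’t enough on its own.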

Step 4: Enforce outline-level differentiation

Don’t stop at “we’ll be different.” Specify where and how. Require at least one unique claim, one proprietary data point or first‑party example, and one use‑case the SERP ignores. Tie each to a specific H2/H3 in the outline. That’s how novelty moves from a promise to a plan.

Add acceptance criteria in plain English: “H3 X introduces Y with Z data source.” If the outline can’t hit these, the brief doesn’t pass. You’ll write fewer pieces at first. Then better ones. That trade is usually worth it.
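Those acceptance criteria translate cleanly into a check. This Python sketch assumes each differentiator is recorded with its kind, its target H2/H3, what it introduces, and its source. The field names, `criterion`, and `outline_failures` are hypothetical, and so are the example values.

```python
from dataclasses import dataclass

REQUIRED_KINDS = ("unique_claim", "first_party_proof", "ignored_use_case")


@dataclass
class Differentiator:  # hypothetical brief field
    kind: str      # one of REQUIRED_KINDS
    heading: str   # the H2/H3 where it must appear
    claim: str     # what the section introduces
    source: str    # the data source or first-party example behind it


def criterion(d: Differentiator) -> str:
    """Plain-English acceptance line in the 'H3 X introduces Y with Z' shape."""
    return f'"{d.heading}" introduces {d.claim} with {d.source}.'


def outline_failures(outline: list[str], items: list[Differentiator]) -> list[str]:
    """Unmet acceptance criteria; an empty list means the outline passes."""
    failures = [f"missing {k}" for k in REQUIRED_KINDS
                if not any(d.kind == k for d in items)]
    for d in items:
        if d.heading not in outline:
            failures.append(f"{d.kind}: heading {d.heading!r} is not in the outline")
        if not d.source.strip():
            failures.append(f"{d.kind}: no source given")
    return failures


if __name__ == "__main__":
    outline = ["Why briefs fail", "Scoring information gain", "When agencies inherit your briefs"]
    items = [
        Differentiator("unique_claim", "Why briefs fail", "the brief-not-draft argument", "our rework logs"),
        Differentiator("first_party_proof", "Scoring information gain", "a scored brief", "an internal audit"),
    ]
    for d in items:
        print(criterion(d))
    print(outline_failures(outline, items))  # the ignored use-case is still missing
```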

Step 5: Mandate visual and brand differentiation

Tell the story visually, on purpose. Decide the visual types and placements before writing—comparison table in the reframe, diagram in the new way, product screenshot in the solution. This isn’t decoration; it’s how you surface the new angle fast.

Include brand constraints for colors, aspect ratios, alt text patterns, and filename rules right in the brief. Designers move faster when the rules are known. More important, visuals stop drifting into generic stock land, which quietly erodes credibility.
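Brand constraints are easiest to enforce when a script can check them. Here’s a minimal Python sketch built on hypothetical rules: a short list of allowed aspect ratios, kebab‑case `.png`/`.webp` filenames, and alt text capped at 125 characters. Swap in your own brand guide. Colors are left out to keep it short.

```python
import re
from dataclasses import dataclass

# Hypothetical brand rules for illustration; replace with your own guide.
ALLOWED_RATIOS = {(16, 9), (4, 3), (1, 1)}
FILENAME_RULE = re.compile(r"^[a-z0-9]+(-[a-z0-9]+)*\.(png|webp)$")  # kebab-case
MAX_ALT_CHARS = 125


@dataclass
class VisualSpec:
    section: str            # e.g. "reframe", "new way", "solution"
    kind: str               # e.g. "comparison table", "diagram", "product screenshot"
    ratio: tuple[int, int]  # width:height
    filename: str
    alt_text: str


def visual_problems(v: VisualSpec) -> list[str]:
    """Brand-rule violations for one planned visual; empty means it passes."""
    problems = []
    if v.ratio not in ALLOWED_RATIOS:
        problems.append(f"{v.section}: ratio {v.ratio[0]}:{v.ratio[1]} is not allowed")
    if not FILENAME_RULE.match(v.filename):
        problems.append(f"{v.section}: filename {v.filename!r} breaks the kebab-case rule")
    if not v.alt_text.strip() or len(v.alt_text) > MAX_ALT_CHARS:
        problems.append(f"{v.section}: alt text is missing or over {MAX_ALT_CHARS} chars")
    return problems


if __name__ == "__main__":
    plan = [
        VisualSpec("reframe", "comparison table", (16, 9), "brief-vs-draft-table.png",
                   "Table comparing brief-level and draft-level fixes"),
        VisualSpec("solution", "product screenshot", (3, 2), "Screenshot_Final.PNG", ""),
    ]
    for spec in plan:
        print(spec.section, visual_problems(spec) or "ok")
```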

Step 6: Apply cooldown and cluster governance

Add a per‑cluster cooldown. Default to 90 days before re‑covering the same intent. Then write your repurpose versus rewrite rule. For example: if coverage overlap is greater than 60%, refresh the canonical. If overlap is less than 30% with a distinct persona, create a new spoke.

Record the decision in the brief with a one‑line rationale. That sentence prevents calendar‑driven repeats. It also helps you defend the choice later when someone asks, “Why not another post on X this month?”
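Here’s a Python sketch of that Step 6 decision. It returns both the decision and the one‑line rationale for the brief, and it treats `overlap` as coverage overlap with your existing page on the intent. The >60% and <30%‑with‑distinct‑persona rules come from the text. The text doesn’t say what happens in between, so routing those cases to the 90‑day cooldown and then to an editor is an assumption.

```python
from datetime import date

COOLDOWN_DAYS = 90


def cluster_decision(overlap: float, distinct_persona: bool,
                     last_published: date, today: date) -> tuple[str, str]:
    """Return (decision, one-line rationale) to record in the brief.

    `overlap` is coverage overlap with our existing page on this intent, 0.0-1.0.
    """
    if overlap > 0.60:
        return "refresh canonical", f"{overlap:.0%} overlap: update the existing page instead"
    if overlap < 0.30 and distinct_persona:
        return "new spoke", f"{overlap:.0%} overlap and a distinct persona"
    # Assumption: the rules leave this band open, so apply the cooldown, then escalate.
    days_since = (today - last_published).days
    if days_since < COOLDOWN_DAYS:
        return "wait", f"{COOLDOWN_DAYS - days_since} days left in the 90-day cooldown"
    return "editor call", f"{overlap:.0%} overlap falls between the refresh and spoke rules"


if __name__ == "__main__":
    print(cluster_decision(0.72, False, date(2024, 1, 1), date(2024, 1, 15)))
    print(cluster_decision(0.45, False, date(2024, 1, 1), date(2024, 1, 31)))
```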

Step 7: Add QA gates and automated blockers

Insert pre‑draft checks that fail the brief when thresholds aren’t met. Examples: an information gain (IG) score under 30 blocks; a missing unique claim blocks; no new source blocks. Use a short JSON‑style checklist your CMS or pipeline can read. Failing fast beats editing late.

Give editors a one‑click approve or reject tied to these fields. You’re removing subjectivity without killing judgment. If you want to see how these kinds of gates feel in a live system, you can Try Using An Autonomous Content Engine For Always-On Publishing and compare inputs to outputs over a few runs.
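What might that JSON‑style checklist look like? Here’s a hypothetical schema and a Python validator that returns every blocker at once, so the editor’s approve or reject comes down to one check. The field names are invented for illustration; no particular CMS reads this format out of the box.

```python
import json

# Hypothetical checklist schema a CMS or pipeline could read.
CHECKLIST_JSON = """
{
  "ig_score": 24,
  "unique_claim": "Briefs, not drafts, decide originality",
  "new_sources": []
}
"""

MIN_IG_SCORE = 30


def blockers(checklist: dict) -> list[str]:
    """Every rule the brief breaks; an empty list means it can go to drafting."""
    found = []
    score = checklist.get("ig_score", 0)
    if score < MIN_IG_SCORE:
        found.append(f"IG score {score} is under {MIN_IG_SCORE}")
    if not str(checklist.get("unique_claim", "")).strip():
        found.append("missing unique claim")
    if not checklist.get("new_sources"):
        found.append("no new source")
    return found


if __name__ == "__main__":
    problems = blockers(json.loads(CHECKLIST_JSON))
    print("REJECT" if problems else "APPROVE", problems)
```

Returning the full list, rather than stopping at the first failure, lets the writer fix everything in one pass.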

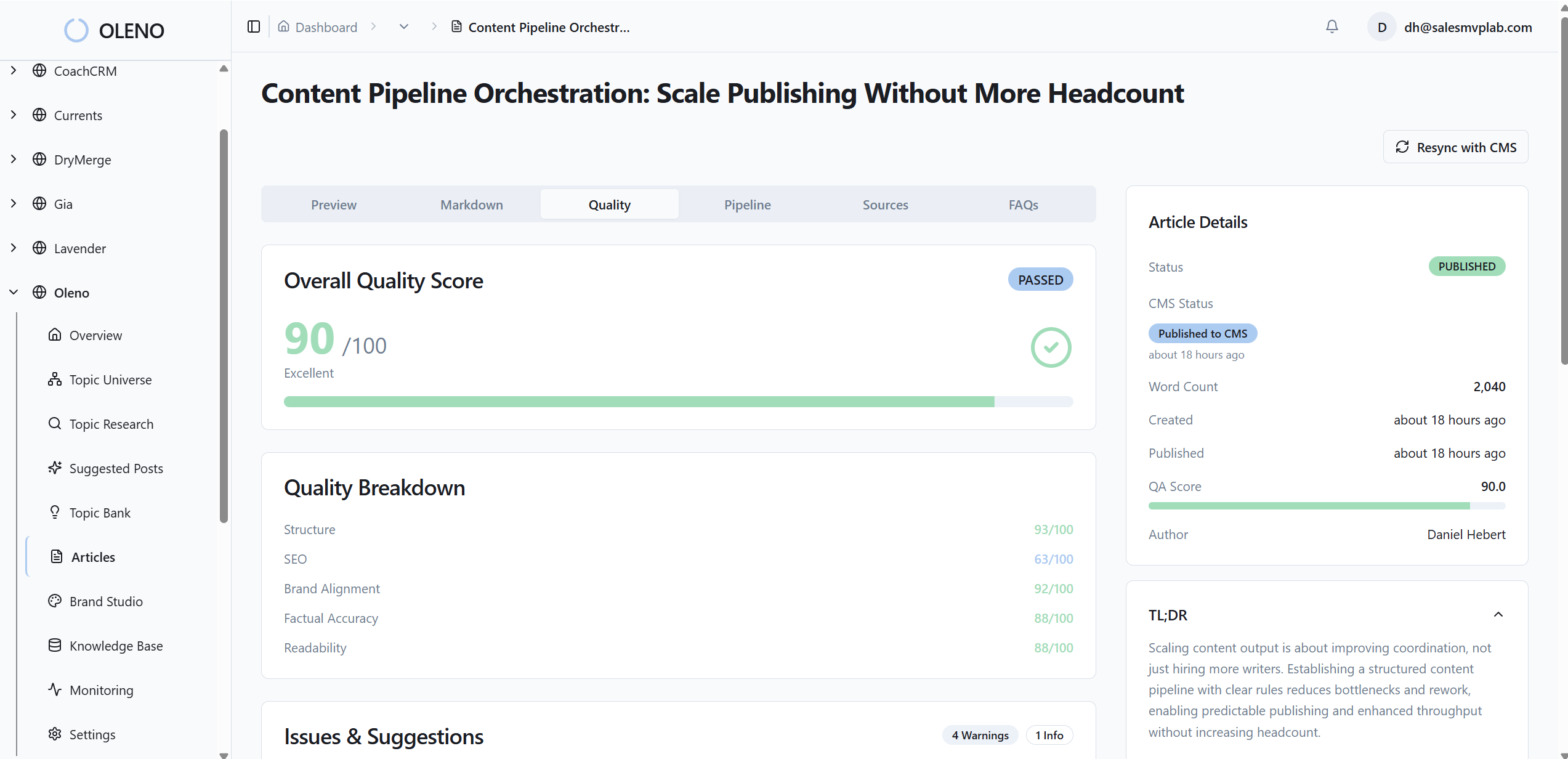

How Oleno Enforces Differentiation From Brief to Publish

Oleno makes the “new way” enforceable by turning it into a pipeline. Topic Universe maps clusters and applies cooldowns. Information Gain Scoring flags low‑differentiation briefs before drafting. Visuals, links, and schema are handled deterministically. The result isn’t just a draft—it’s a governed, publish‑ready article.

Oleno starts with Topic Universe, which maps your topics into clusters, tracks coverage, and labels saturation in real time. It enforces a 90‑day cooldown so you don’t recirculate the same intent and cannibalize yourself. That’s the governance we wanted earlier but didn’t have baked into the system.

During brief generation, Oleno runs competitive research and calculates a 0–100 Information Gain Score. Low scores trigger warnings before anyone writes. You can require a unique claim and first‑party proof tied to a specific section. When those rules live in the brief, you stop paying the “frustrating rework” tax later.

With Visual Studio, Oleno generates brand‑consistent hero and inline images, then matches product screenshots to relevant sections using semantic similarity. Solution sections get priority. Alt text and filenames are handled automatically. You’re not bolting on visuals at the end; you’re showing differentiation where it matters.

On the “accuracy in code” side, Oleno injects internal links only from your verified sitemap, generates schema programmatically, and runs every article through an 80+ checkpoint QA gate. Structure stays snippet‑ready across sections, which makes your pages clearer for both readers and machines.

If any of the costs described earlier hit home (lost hours on repeats, cannibalized clusters, endless triage), this is the relief valve. Oleno doesn’t promise perfection. It gives you a system that blocks sameness at the source and ships complete, on‑brand articles consistently. Want to see it yourself? Try Oleno For Free. Or if you prefer starting smaller, Generate 3 Free Test Articles and study the information gain score and brief rules in action.

Conclusion

Repetition isn’t a sentence problem. It’s a system problem. When briefs don’t enforce novelty, drafts can’t help but echo the SERP. Put gates in the brief—map saturation, quantify overlap, score information gain, tie claims to sections, and fail fast. If you want those rules to run every day without you carrying the clipboard, Oleno makes the pipeline do the work.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've spent 13+ years in B2B SaaS in sales and marketing leadership roles. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions