A/B Testing Content Variations by Audience: SEO‑Safe Experiment Playbook

Most teams treat audience variations like art direction, not science. So they ship copy swaps, eyeball a few charts, then call a winner. When you are A/B testing content variations without SEO guardrails, you are guessing, splitting signals, and sometimes hurting rankings you worked hard to earn. I have made that mistake. It is costly and avoidable.

The whole point of testing is to learn what really moves a metric for a segment. That requires clean hypotheses, stable URLs, and decision rules you can defend in a meeting. If you want confidence, build experiments that are SEO safe, statistically sound, and tied to a rollout plan you can execute next week, not next quarter.

Key Takeaways:

- Treat audience variations as experiments with one variable, a clear metric, and a stopping rule

- Keep one indexable URL, use canonicals correctly, and give crawlers a stable baseline

- Write hypotheses that name segment, variable, metric, and expected lift with a minimum detectable effect

- Randomize assignment inside segments, then analyze by segment and overall to avoid bias

- Instrument persona attribution at exposure time, not after the fact

- Use decision thresholds and a rollback checklist so winners ship safely and losers end cleanly

Why A/B testing content variations goes wrong when SEO is ignored

Testing without SEO discipline often creates duplicate content, splits link equity, and confuses crawlers. Search engines tolerate responsible testing when you present a single preferred URL and avoid cloaking. The fix is simple in theory: keep the page stable for bots, randomize users, and consolidate signals with canonicals.

What most teams get wrong about audience variations

Teams swap headlines, intros, or section order without a control or a real hypothesis, then read too much into normal variance. A few prettier charts do not make that science. You need to isolate one change, hold everything else constant, and define what lift counts as meaningful before traffic hits the page. Otherwise you risk chasing noise.

In my experience, the fastest way to stop wasting time is to write the decision rule in plain English. Name the audience. Name the variable. Name the metric. State the lift you expect and why it matters to the business. If you cannot do that in one sentence, you are not ready to test.

A simple rule set helps once the page is live:

- One variable per test, no exceptions

- Predefined duration and sample size, no peeking for early wins

- Rollout only when the lift clears your threshold with power to back it up

SEO duplicate content traps to avoid

Near clones fight each other in the index and can cause cannibalization. Crawlers need a stable representative URL, consistent internal links, and coherent metadata. Randomizing by URL is a common mistake that splits authority across paths. Randomize users, not paths, and keep testing URLs out of the index while you learn.

You can stay safe without killing velocity. Use a single canonical that points to the preferred URL. Keep title tags and schema stable unless those are the variables you are testing. If you truly need temporary variant paths, set them to noindex and block them from your internal linking. That protects rankings while you run the experiment.

Google has spelled out how to handle website testing responsibly, including cloaking warnings and canonical guidance. If you need a refresher, start with their post on website testing and search from Search Central, which is still the clearest baseline on this topic. You can find it here: Website testing and Google Search, Search Central.

How do search engines handle test variants?

Search engines accept that teams test, provided you give them one version to index and you do not serve bots different content than users on purpose. Present the same primary content to crawlers as to users, consolidate signals with canonicals, and avoid permanent forks. Randomize assignment at the user level and keep URLs stable.

If your approach leads crawlers down two paths that look the same, you have a problem. If canonicals disagree across variants, you have a bigger problem. Keep the representative URL consistent across variants, and make sure internal links point to that URL. Your crawl budget and rankings will thank you.
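A quick pre-launch check can catch disagreeing canonicals before crawlers do. The sketch below uses only the Python standard library. The page HTML, the variant names, and the `example.com` URL are hypothetical, and in practice you would feed it the rendered head of each variant rather than inline strings.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr_map.get("href"):
            self.canonicals.append(attr_map["href"])


def canonical_of(html):
    """Return the page's canonical URL, or None if it declares zero or several."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals[0] if len(finder.canonicals) == 1 else None


def canonicals_agree(variant_html, preferred_url):
    """True only when every variant declares exactly the preferred URL."""
    return all(canonical_of(html) == preferred_url for html in variant_html.values())


# Hypothetical pages for illustration only.
PREFERRED = "https://example.com/pricing-guide"
pages = {
    "control": '<head><link rel="canonical" href="https://example.com/pricing-guide"></head>',
    "variant_b": '<head><link rel="canonical" href="https://example.com/pricing-guide"></head>',
}
print(canonicals_agree(pages, PREFERRED))  # prints True
```

A variant with a missing, duplicated, or different canonical makes the check return False, which is the cue to fix the template before any traffic is assigned.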

The root cause is weak experiment design, not copy output

Poor results usually come from messy setups, not bad writing. Effective tests start with a clear question, one variable, and a metric that ties to money or qualified demand. Teams that treat experiments like creative swaps miss the truth: they needed better design long before they needed a better headline.

Hypotheses that are actually testable

A good hypothesis names the audience, variable, metric, and direction of change. For example: for mid-market buyers, tightening the value prop headline will increase demo CTR by fifteen percent within fourteen days, with the test sized for 90 percent power. Pair that with a stopping rule and a minimum detectable effect, then commit.

Numbers matter here. Precomputing sample size and duration saves you from false winners. If you need a quick primer on statistical power and test planning, the classic write-up from Microsoft Research is a strong foundation for web experiments. It is worth a read, even if you have run dozens of tests already. Here is the reference: Seven rules of thumb for online controlled experiments.

A checklist I like to use after the hypothesis is drafted:

- Minimum detectable effect defined and tied to business value

- Power and duration calculated in advance

- A single decision threshold for rollout or rollback
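To make "power and duration calculated in advance" concrete, here is a minimal sketch of the standard normal-approximation sample size formula for comparing two conversion rates. The 4 percent baseline CTR and the 2,000 daily visitors are assumptions for illustration, and the function names are mine, not from any particular tool.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Visitors needed in each arm to detect the lift with a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_power = NormalDist().inv_cdf(power)          # critical value for power
    pooled = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def days_needed(n_per_arm, daily_visitors, arms=2):
    """Days required to fill every arm at the given eligible daily traffic."""
    return ceil(n_per_arm * arms / daily_visitors)


# Assumed inputs: 4% baseline demo CTR, 15% relative lift as the minimum
# detectable effect, 90% power, 2,000 eligible visitors per day.
n = sample_size_per_arm(baseline_rate=0.04, relative_lift=0.15, power=0.90)
print(n, days_needed(n, daily_visitors=2000))
```

If the duration comes back longer than the window you planned, that is a signal to raise the minimum detectable effect or test on a higher-traffic page, not to peek early.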

Segmentation versus randomization, the fairness model

Analyze by segment, but randomize within segments. That is the fairness model. Pre-register your persona logic, lock it before the test starts, then assign users randomly inside those buckets. Cherry-picking cohorts after the fact is a fast path to bias. It feels good and looks smart, but it is wrong.

Persona tags should be stable and based on data you trust, not hand-waving. For anonymous traffic, use intent signals like referrer, query intent, and on-site behavior. For known users, use product usage or CRM properties. The goal is simple: keep the assignment random and the analysis faithful to how buyers actually differ.
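One common way to randomize inside a locked segment is deterministic hashing, so the same user always sees the same variant without a lookup. This is a sketch under assumptions: the experiment ID, segment labels, and variant names are hypothetical, and a 50/50 split is assumed.

```python
import hashlib

VARIANTS = ("control", "variant_b")


def assign_variant(user_id, experiment_id, segment, variants=VARIANTS):
    """Deterministically map a user to a variant within their segment."""
    # Salting with the experiment and segment keeps assignments independent
    # across experiments and across persona buckets.
    key = f"{experiment_id}:{segment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


# The same inputs always produce the same assignment.
first = assign_variant("user-123", "headline-test-01", "mid_market")
second = assign_variant("user-123", "headline-test-01", "mid_market")
print(first == second)  # prints True
```

Because the segment is decided before the hash is taken, persona logic cannot be bent after the fact to flatter a variant.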

What variables belong in scope versus held constant?

Scope one variable per test, like headline, hero image, or CTA label. Hold everything else constant, including layout, schema, internal links, and primary keyword usage. If you must bundle micro changes, tie them to one underlying hypothesis, such as clarity of the first screen. Too many moving parts create noise you cannot untangle later.

A simple separation helps teams stay honest:

- In scope: one change tied to the hypothesis

- Out of scope: technical SEO, internal links, and metadata

- Frozen: anything that affects crawlability or indexation

If you need to brush up on power calculations for planning, Evan Miller’s primer is still a favorite among experimenters and easy to follow. You can grab it here, Sample size and power primer.

The hidden costs of A/B testing content variations the wrong way

Bad tests hurt rankings, waste traffic, and create false confidence. Duplicate variants split signals and burn crawl budget. Underpowered tests declare fake winners. Persona tagging leaks, which turns mix shifts into phantom lifts. The cost is real: slower growth now and a credibility hit that lingers.

SEO impact, cannibalization, and crawl budget waste

Duplicative variants compete with each other in the index, which drags down the page you actually want to rank. If both versions are indexable, you are inviting cannibalization. Keep one indexable URL, route internal links to it, and pin canonicals correctly. Crawlers should see a stable baseline that represents your site.

When you ignore this, the penalty is quiet. Impressions slip. Clicks drift. Traffic erodes week by week and no one can explain why. Google’s duplicate content guidance is clear on consolidation and intent, and it is a useful reminder that consolidation is your friend. Here is the doc, Duplicate content guidance.

Statistical waste, underpowered tests, and false winners

Underpowered tests light up your dashboard with random spikes that look like wins. You roll out noise, then watch conversions sag a month later. Protect yourself by computing power and duration before launch. Use sequential methods carefully and limit concurrent tests per template. Fewer, stronger tests beat a pile of maybes.

A solid explainer on power and sample size in practical A/B testing is available from Statsig, which walks through trade-offs and common mistakes. It is a quick read that can save you from preventable errors: Power and sample size in A/B testing.

Attribution errors with personas and intent leaks

Attribution breaks when persona tagging depends on flimsy heuristics or last-click alone. If you assign persona after the fact, you will confuse traffic mix with performance. The fix: stamp assignment and persona at exposure time, then analyze overall and by segment. Without that, you will celebrate lifts that are just audience shifts.

It is tempting to rely on UTM chaos and hope the CDP sorts it out later. That is a mistake. Define persona signals upfront, mirror them in analytics user properties, and keep the logic consistent across campaigns. Clean inputs make honest reads.
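A small worked example shows how a shift in audience mix can pass for a lift. The exposure counts below are invented for illustration, and the persona labels are hypothetical. Each row assumes persona was stamped at exposure time.

```python
from collections import defaultdict

# Hypothetical exposure log: (variant, persona at exposure, users, conversions)
EXPOSURES = [
    ("control", "smb", 100, 10),
    ("control", "enterprise", 100, 2),
    ("variant_b", "smb", 150, 15),
    ("variant_b", "enterprise", 50, 1),
]


def conversion_rates(rows, by_segment):
    """Aggregate conversion rate per variant, optionally split by persona."""
    totals = defaultdict(lambda: [0, 0])  # key -> [users, conversions]
    for variant, persona, users, conversions in rows:
        key = (variant, persona) if by_segment else (variant,)
        totals[key][0] += users
        totals[key][1] += conversions
    return {key: conv / users for key, (users, conv) in totals.items()}


overall = conversion_rates(EXPOSURES, by_segment=False)
per_segment = conversion_rates(EXPOSURES, by_segment=True)

print(overall)      # control converts at 6%, variant_b at 8%
print(per_segment)  # smb is 10% in both arms, enterprise is 2% in both arms
```

The overall read suggests the variant won. The segment read shows it changed nothing: the variant simply saw more SMB traffic, which converts better regardless of the copy. That is why both reads belong in the analysis.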

When tests backfire, teams lose time, trust, and momentum

The human cost is real. Constant variant shipping creates editorial whiplash, underpowered results feel like busywork, and leaders push to ship a thin win. You lose weekends to rewrites and your team’s energy drains. A few bad runs and people stop believing the process works.

Editorial whiplash and approval fatigue

Shipping and unshipping copy variants every week creates churn. Editors burn cycles. Stakeholders lose patience. Calendars slip. The fix is simple: limit active tests, set a review SLA, and add a freeze window during live runs. Structure protects focus and keeps your calendar intact.

I have seen teams bounce between three headline ideas in a single week because a chart moved two points. That is not learning. That is panic. Commit to a window, give the test room to breathe, and stop rewriting in flight. You will save time and keep your sanity.

Why do inconclusive tests feel worse than no test?

Because inconclusive work drains effort without teaching anything. People did the work, so they want a win. Set expectations up front. Decide what you will learn if the result is neutral. Use a neutral read to refine the hypothesis or the variable, not to rerun the same idea in circles.

Decision fatigue is a risk here. Too much data without clear rules actually slows you down, which HBR has written about in detail. It is a good reminder to define decisions before you drown in charts, How too much data can hurt decision making.

Stakeholder pressure and the rush to ship a winner

Leaders want movement. If you rush a thin result into production, you risk the wrong rollout and a trust hit that is hard to recover from. Protect the process with thresholds, pre-agreed metrics, and a stop/go calendar. The discipline buys you credibility, which is the only way to scale experiments.

There is a reason high-growth companies treat experimentation as a system. McKinsey’s perspective on running experiments at scale echoes this: process and governance prevent waste and accelerate learning. Worth reading if you are building a program: Experiment at scale for growth.

SEO-safe A/B testing content variations, the end-to-end playbook

A safe, reliable program has five parts: a testable hypothesis, one variable, a single indexable URL, clean assignment and tagging, and clear decision rules. When you do that, you find real winners without harming rankings. Run this for six to eight weeks and you will know what to ship.

Define the hypothesis, metric, and decision rule

Document a single variable, target audience, primary metric, guardrails, and a stopping rule. Pre-register minimum detectable effect and test length. Add a sample ratio mismatch check to catch traffic issues early. Decide in advance what triggers rollout, a retest, or an archive. That removes wiggle room.
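A sample ratio mismatch check can be as small as a chi-square goodness-of-fit test on the assignment counts. The sketch below assumes a planned 50/50 split and uses a 0.001 alert threshold, which is a common convention but still an assumption you should set for your own program.

```python
from math import erfc, sqrt


def srm_p_value(control_users, variant_users, expected_control_share=0.5):
    """P-value that the observed split matches the planned split."""
    total = control_users + variant_users
    expected_control = total * expected_control_share
    expected_variant = total - expected_control
    chi_square = (
        (control_users - expected_control) ** 2 / expected_control
        + (variant_users - expected_variant) ** 2 / expected_variant
    )
    # Upper tail of a chi-square distribution with 1 degree of freedom.
    return erfc(sqrt(chi_square / 2))


def has_sample_ratio_mismatch(control_users, variant_users, threshold=0.001):
    """Flag the test when the split is very unlikely under the planned ratio."""
    return srm_p_value(control_users, variant_users) < threshold


print(has_sample_ratio_mismatch(5000, 5050))  # prints False
print(has_sample_ratio_mismatch(5000, 5500))  # prints True
```

A flagged mismatch usually points to an assignment or tracking bug, so treat the results as untrustworthy until the cause is found.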

A simple sequence works well:

- Write the hypothesis with audience, variable, metric, and expected lift

- Compute sample size, power, and duration for your traffic

- Define decision thresholds and a rollback plan

- Set freeze windows and review SLAs for the team

- Log all of this in a shared experiment record
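The decision thresholds in the sequence above can be written down as code so nobody renegotiates them after the data arrives. This is one possible rule, not the only one: it assumes a 95 percent confidence interval on the absolute difference in conversion rate, a pre-registered minimum lift, and a single evaluation at the planned stopping point. The three outcome labels mirror rollout, retest, and archive.

```python
from math import sqrt
from statistics import NormalDist


def decide(control_conv, control_n, variant_conv, variant_n, min_lift, alpha=0.05):
    """Return 'rollout', 'retest', or 'archive' from final test counts.

    min_lift is the pre-registered minimum absolute lift in conversion rate.
    """
    p_control = control_conv / control_n
    p_variant = variant_conv / variant_n
    diff = p_variant - p_control
    std_err = sqrt(
        p_control * (1 - p_control) / control_n
        + p_variant * (1 - p_variant) / variant_n
    )
    margin = NormalDist().inv_cdf(1 - alpha / 2) * std_err
    lower, upper = diff - margin, diff + margin

    if lower > 0 and diff >= min_lift:
        return "rollout"  # reliably positive and large enough to matter
    if upper < min_lift:
        return "archive"  # even the optimistic bound misses the threshold
    return "retest"       # inconclusive: refine the hypothesis or add power


# Hypothetical final counts with a 0.6 percentage point minimum lift.
print(decide(400, 10000, 520, 10000, min_lift=0.006))  # prints rollout
print(decide(400, 10000, 400, 10000, min_lift=0.006))  # prints archive
print(decide(40, 1000, 50, 1000, min_lift=0.006))      # prints retest
```

Running this rule repeatedly during the test would reintroduce the peeking problem, so it is meant for the predefined end date only.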

Construct SEO safe variants with real difference, not clones

Keep one indexable URL and apply proper canonicalization. Avoid fragmenting schema or title tags unless that element is the variable. Make variants meaningfully different within on-page constraints that protect SEO, such as an alternative headline plus hero copy, not five micro-edits that do nothing but add risk.

A quick do and do not list helps here:

- Do keep internal links and navigation identical across variants

- Do noindex any temporary variant paths if you must use them

- Do keep meta titles stable unless you are testing titles

- Do not randomize by URL or create permanent forks

Instrument assignment and persona attribution correctly

Assign users randomly and store the assignment server-side. Stamp exposure with persona or intent tags, then track performance overall and by segment. Use consistent UTMs for campaigns that feed the test. In GA4 or your CDP, create saved reports or explorations that mirror your decision rules so analysis is fast and reproducible.

If you need to refresh analytics setup, Google’s docs on user properties and audiences explain how to carry persona signals cleanly, GA4 user properties and audiences. For SEO safety during testing, Optimizely’s developer guidance covers the main pitfalls for web experiments, SEO considerations for testing.

Ready to see this running without risking rankings and traffic? Request a Demo.

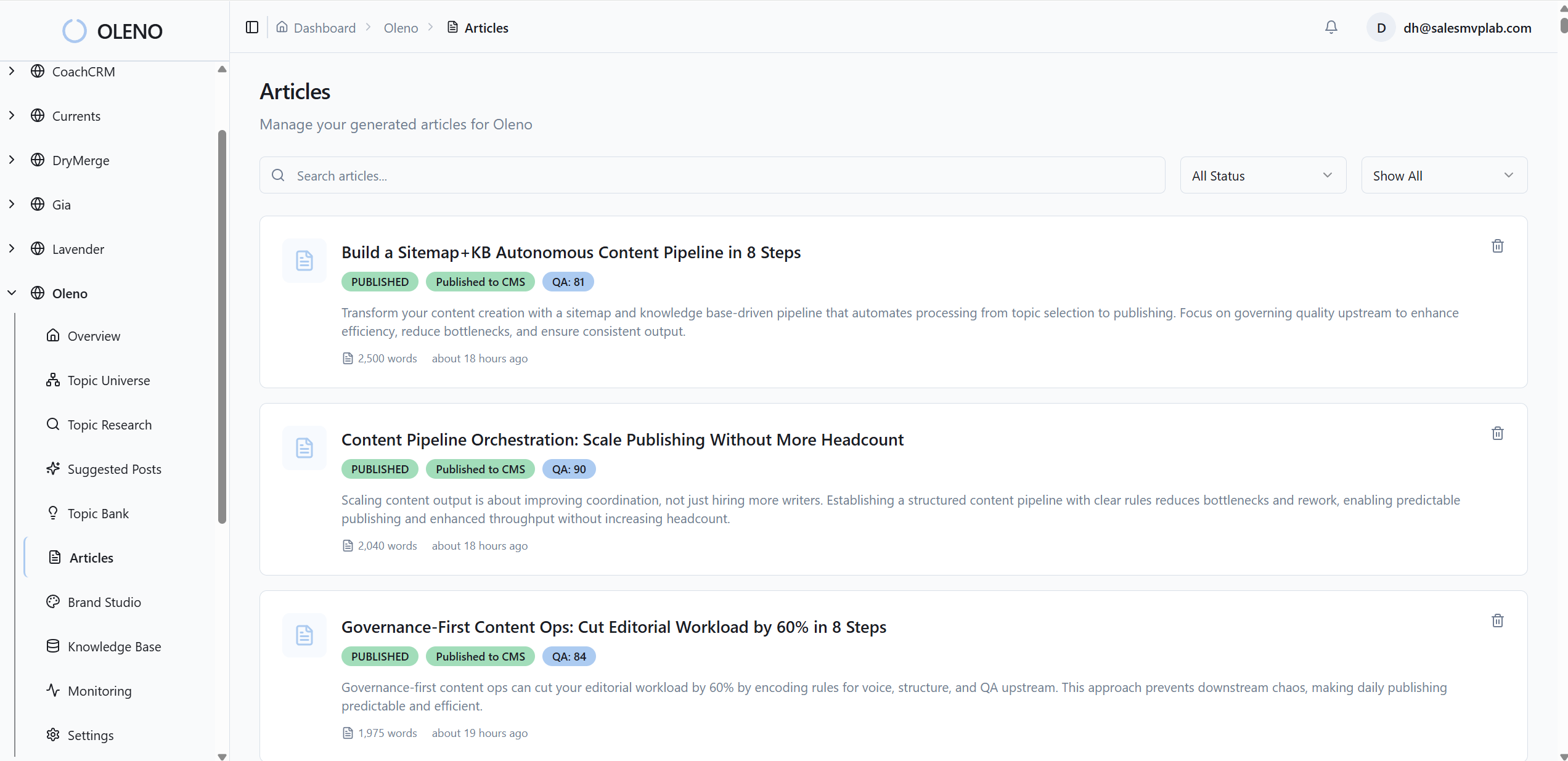

How Oleno automates SEO-safe A/B testing content variations

Oleno turns the playbook above into a system. Governance defines guardrails, the variation layer creates controlled differences, and the execution engine applies tracking and SEO rules on publish. You stop guessing, avoid duplicate content mistakes, and move winners into production without breaking URLs or internal links.

Governance studios turn rules into guardrails

Oleno’s Brand, Marketing, and Product Studios codify voice, claims, and constraints so variants stay true to your narrative and product facts. No more invented rules in meetings. Governance also locks CTA style, structure rules, and words to avoid, which reduces drift and prevents duplicate content lookalikes that confuse crawlers.

I like how this shifts work from memory to system. Instead of reminding writers to avoid certain phrases or formats, the rules live where content gets made. That cuts review time and stops slow, silent erosion of positioning. Accuracy improves because approved claims and boundaries are right there while you work.

Variation layer and audience targeting, built for experiments

Oleno’s variation layer creates persona-targeted drafts with controlled difference while holding everything else constant. You define the variable to test, and the system freezes layout, schema, internal links, and primary keyword usage. Assignment and exposure are logged automatically for clean segment analysis later. Noise drops. Signal shows up faster.

Teams that struggle with underpowered tests often try to test too many things at once. Oleno forces one variable per run, so you get honest reads. It also preserves a single indexable URL by default, with correct canonicals, which protects crawl stability during the test.

Publish variants safely in minutes, not days. That is what Oleno delivers. Request a Demo.

Execution engine and governed rollout to your CMS

From draft to QA to publish, Oleno automates test setup, tracking snippets, and rollback plans. Canonicals and indexation rules are applied consistently on push. When a winner meets your thresholds, Oleno rolls it out to production without breaking URLs or internal links. The whole handoff to your CMS is clean and predictable.

You can think of it as orchestration for content experiments. The system applies your rules every time, so quality does not drop when you scale volume. If you want to go deeper on experimentation lifecycle practices, LaunchDarkly’s overview is a helpful mental model, Experimentation lifecycle. For CMS integration patterns, Contentful’s webhook concepts show how to connect events cleanly, Content management webhooks.

Key capabilities you get out of the box:

- Governed briefs that pull brand voice, messaging, and product truth into every draft

- Controlled variation that locks constants and changes only your declared variable

- SEO safety with stable URLs, correct canonicals, and preserved schema on publish

- Clean analytics with server side assignment, exposure stamps, and segment views

- One click rollout when a variant clears your decision threshold, with rollback rules ready

Want the variation layer, governance, and rollout handled for you end to end? Book a Demo.

Conclusion

Audience personalization without experiments is guesswork. Clean, SEO-safe tests flip that into learning you can trust. If you define testable hypotheses, change one variable while holding everything else constant, instrument attribution at exposure, and protect a single indexable URL, you can detect meaningful lifts in six to eight weeks. Then standardize rollout so winners move into production without harming rankings. That is how you stop wasting effort, keep trust, and turn A/B testing content variations into a real growth lever.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I have been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions