AI-Assisted Category Discovery: Validate New Narratives from Data

Most teams stumble on category work because it looks like branding, not operations. You brainstorm, debate, get a clever line, then hope the market cares. Sometimes it lands. Often it drifts. And when it drifts, you burn cycles on rewrites and collateral fixes, and put more pressure on sales to “translate” on the fly.

I’ve been on every side of that. At PostBeyond, we hit volume and voice, but some content sat too far from the product, so leads stalled. At LevelJump, we learned the hard way that “everything software” is nothing in the market, and focus is what compounds. When we aligned narrative with the real use case buyers felt, we moved from stuck to steady. Category isn’t a tagline problem. It’s a signal and execution problem.

Key Takeaways:

- Brainstormed narratives drift because they ignore buyer phrasing and real search behavior

- Your constraint isn’t creativity, it’s the quality and coverage of the signals you mine

- Guesswork creates expensive false positives and coordination debt across teams

- Sales friction is usually a language mismatch, not a product failure

- AI can read, cluster, and surface patterns fast, but governance must set the rails

- Validate candidate narratives cheaply with staged tests before you scale

- Lock the winner into governance, jobs, and QA so the story compounds

Why Brainstormed Categories Miss the Mark

Most category narratives miss because they’re built on internal opinions instead of market signals. A working category frame is short, repeatable, and matches the phrasing buyers already use. If it’s built on vibes, it confuses sales and dilutes content, and you end up re-explaining it on every call.

What is a category narrative and why does it matter?

A category narrative is your compact, repeatable frame for problem, solution, and why now, written in the buyer’s words. It sets the lens for how customers evaluate you and compare options, long before your demo. When it’s clear and repeatable, customers echo it in searches, talk tracks, and budget conversations, which shortens sales cycles and reduces rework.

In practice, this means you need a story you can say in one breath that still carries proof and contrast. The kind of line a rep can repeat verbatim on call two, and the prospect nods. The market won’t memorize your paragraph, but it will remember your angle if it matches how they describe their pain. Aim there.

Workshops overfit to internal myths

Workshops align people. They rarely align with reality. You get loud opinions, the last big deal, and the upcoming launch all fighting for airtime. That overfits to internal narratives instead of buyer language. Use workshops to set guardrails, not to fabricate the story.

The raw material should come from outside the room. Support logs, sales call transcripts, product docs, and search queries carry the phrases buyers already use. When those phrases shape your options, you avoid clever-but-confusing frames. You also avoid the trap of designing a category around a feature name you love.

Why data-first discovery outperforms intuition

AI can read at scale, find patterns, and generate options quickly, which raises the average quality of what you review. The point isn’t to let models decide. It’s to feed your judgment with better candidates. When your inputs are customer-facing sources and real search behavior, your shortlist reflects reality.

That’s why validation gets cheaper. Instead of arguing hypotheticals, you’re testing phrasing the market already uses. Think of it like hypothesis generation. Drug discovery teams use machine learning to surface non-obvious patterns, then stage validation to decide what’s real. Marketing can borrow the same discipline, as seen in the deep learning approach to antibiotic discovery.

The Real Constraint Is Signal Quality, Not Creativity

Creativity isn’t your bottleneck. Signal is. The best category lines start with messy, high-coverage inputs, not a clean whiteboard. When you increase the volume and diversity of quality signals, the right phrases keep showing up, which reduces risk and speeds alignment.

Where does the best signal live?

Start with support tickets, sales call transcripts, product docs, and search queries, then add forum threads where your users actually hang out. It’s noisy. That’s fine. The noise contains phrasing that customers reach for under pressure. Those phrases become seeds for credible names, benefits, and objections.

You’ll see recurring metaphors and consistent “why now” triggers if you look at enough examples. New deal objections. Renewal risk themes. The questions that stall pilots. Stack these by segment, persona, and product tier. High-frequency pain across your best-fit customers beats any clever turn of phrase from a brainstorm.

What traditional approaches miss about evidence

Most teams pull a handful of anecdotes and leap to branding. The miss is coverage. You need volume and diversity to avoid overfitting to recent deals or internal preferences. Normalize formats, strip PII, and separate problem language from feature language so you don’t bias toward what you just shipped.

Then look for pains, desired outcomes, and the shorthand customers use. You’re not writing copy yet. You’re mining raw clay and labeling it. A systematic screening workflow reduces subjective bias, which is why researchers lean on structured evidence reviews like those described in systematic evidence screening workflows.

Governance sets the safety rails

Before discovery, define forbidden claims, compliance boundaries, and product truth. That gives you permission to explore confidently without stepping on legal landmines. You’re not censoring. You’re constraining drafts so early options don’t get torpedoed in review.

Simple rule sets work best. Approved use cases, disallowed comparisons, claim limits, and banned terms. With rails in place, you’ll iterate faster because reviewers are checking adherence, not rewriting from scratch. Momentum matters here.
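To make "reviewers are checking adherence" concrete, here is a minimal sketch of a rule set expressed as data, plus a check that flags banned terms and disallowed comparisons in a draft. The `Governance` class, its fields, and the comparison phrasing it looks for ("vs", "versus", "better than") are hypothetical choices for illustration, not a prescribed schema; approved use cases and claim limits usually still need a human reviewer.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Governance:
    """Hypothetical rule set: banned terms and competitors you must not compare against."""
    banned_terms: list[str] = field(default_factory=list)
    disallowed_comparisons: list[str] = field(default_factory=list)


def check_draft(draft: str, rules: Governance) -> list[str]:
    """Return a list of violations; an empty list means the draft passes these rules."""
    violations = []
    text = draft.lower()
    for term in rules.banned_terms:
        # Lookarounds instead of \b so terms like "#1" still match as whole tokens.
        if re.search(rf"(?<!\w){re.escape(term.lower())}(?!\w)", text):
            violations.append(f"banned term: {term}")
    for competitor in rules.disallowed_comparisons:
        # Flag explicit head-to-head phrasing aimed at a named competitor.
        pattern = rf"(?<!\w)(?:vs\.?|versus|better than)\s+{re.escape(competitor.lower())}(?!\w)"
        if re.search(pattern, text):
            violations.append(f"disallowed comparison: {competitor}")
    return violations
```

For example, with `Governance(banned_terms=["guaranteed", "#1"], disallowed_comparisons=["AcmeCRM"])` (a made-up competitor), the draft "The #1 platform, guaranteed. Better than AcmeCRM." returns three violations, while plain, grounded copy returns an empty list. Keeping the rules as data means updating them does not require rewriting the check.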

The Hidden Costs of Guesswork in Category Work

Guessing wrong on a category frame is expensive and distracting. The direct cost is content you have to rewrite. The indirect cost is pipeline noise, slower sales cycles, and the morale hit from redoing work. A tighter, staged validation loop avoids most of it.

How much does a false positive cost?

Let’s pretend you rally around a catchy frame that’s misaligned. Three months later, you’ve got 20 posts, a landing page, and paid copy that flatlines. If each asset costs 800 dollars and 10 hours of team time, you wasted 16,000 dollars and about 200 hours. That’s before counting the cost of misqualified leads you now need to recycle.
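The arithmetic is simple enough to keep as a reusable back-of-envelope check. This sketch uses only the figures from the example above (20 assets, 800 dollars and 10 hours each) and, like the paragraph, leaves out the cost of recycling misqualified leads.

```python
def false_positive_cost(assets: int, cost_per_asset: float, hours_per_asset: float) -> tuple[float, float]:
    """Direct cost of assets built on a frame that flatlines, as (dollars, hours)."""
    return assets * cost_per_asset, assets * hours_per_asset


dollars, hours = false_positive_cost(assets=20, cost_per_asset=800, hours_per_asset=10)
print(f"${dollars:,.0f} and {hours:.0f} hours")  # $16,000 and 200 hours
```

Plugging in your own per-asset costs before a launch makes the case for a cheap validation loop in numbers rather than opinions.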

Now add the opportunity cost. Those 200 hours could have generated a 6 to 8 piece starter pack for a working narrative. Small teams feel this more. When one wrong bet eats a quarter, you lose compounding momentum. Not fatal, but painful.

Coordination debt and rework stack up

When narrative drifts, everyone edits. Sales updates talk tracks. Product marketing rewrites decks. Content retrofits articles. Each cycle adds more exceptions and one-off fixes that make your system brittle. This is how execution stalls even when ideas are good.

You want those hours pointed at compounding, not cleanup. The more fragmented the process, the more debt you create. The fix is upstream. Align narrative to signals before rollout, then lock the rules so you don’t rewrite every time a new idea pops up.

Legal and privacy risks increase under pressure

Rushed pivots stretch claims. Pulling raw transcripts without redaction can create privacy issues. It’s avoidable. Redact PII, set simple claim boundaries, and pre-approve how you’ll name competitors or categories. Moving fast doesn’t mean moving careless.

Keep a short checklist tied to review. If an experiment needs raw quotes, keep provenance and access controls tight. High-stakes fields that use AI to accelerate hypothesis testing don’t scale validation without rails, which is why they rely on staged loops.

If you’d rather skip the guesswork and see a system run end to end, you can Request A Demo. It’s easier to evaluate this when you see the guardrails in action.

When Your Narrative Fights Reality, Everything Feels Hard

You can feel narrative friction before you can measure it. Higher bounce on core pages. Low reply on outbound. Reps translating your headlines into different words. That’s not a product problem. It’s a language and framing problem that you can fix with better signal.

A quick story from the trenches

We shipped sharp thought leadership that hit traffic goals but sat too far from our solution. Sales liked the attention, hated the leads. Conversations stalled in the first five minutes, and we spent time translating our own story. That’s the feeling of a frame the market didn’t ask for.

When we anchored to a real entry point buyers cared about, everything got easier. We didn’t change the product. We changed the story to match how buyers described their first use case. Meetings felt different. Messaging clicked. Pipeline moved.

Why sales pushback sounds vague

“Prospects don’t get it” usually means the language is wrong, not the product. Reps will say “they weren’t a fit,” but listen to the words they use to translate your message. Objections repeat. Metaphors repeat. Surprising synonyms surface.

Collect those and compare them to your website and ads. If reps are running a different vocabulary on calls, your narrative is making them work harder than it should. That’s a fixable problem, and a fast one once you see the pattern.

What buyers tell you without saying it

Metrics whisper. Higher bounce on core pages, low time-on-page for problem explainers, and outbound replies that shift to a different topic. Support tickets that restate the same confusion. These are narrative problems in disguise.

Pull the signals in. Tighten the frame. Test again. You’ll see friction drop when the phrasing matches the way buyers already think. The best part is you don’t need a big swing. You need a better angle that your customers will repeat.

An AI-Assisted Workflow for Category Discovery and Validation

An effective workflow reads widely, encodes governance, and validates cheaply before scale. You collect and secure signals, normalize them, cluster themes, draft concise options, then pressure-test the best candidates in the wild. Each loop takes a week, not a quarter.

Collect and secure the right signals

Extract support tickets, sales calls, product docs, search queries, and forum threads. Make it safe first. Redact PII, tag source and date, and isolate problem statements from feature requests. Standardize formats to JSON or CSV so downstream steps are deterministic and traceable.
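A minimal sketch of the "make it safe first" step might look like the following: redact obvious PII, tag source and date, attach a provenance ID, label each snippet as a problem statement or a feature request, and emit JSON Lines. The regex patterns, the cue phrases, and the record fields are all assumptions for illustration. Regex redaction is a crude simplification: it misses names and addresses and may over-redact number-like strings such as dates, so treat it as a first pass, not a compliance control.

```python
import hashlib
import json
import re

# Simplified PII patterns (assumption): emails and phone-like digit runs only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")
# Hypothetical cue phrases used to separate feature requests from problem statements.
FEATURE_CUES = ("please add", "feature request", "would be nice", "can you support")


def redact(text: str) -> str:
    """Replace emails and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def to_record(source: str, date: str, raw: str) -> dict:
    """Build one normalized, redacted record with a traceable provenance ID."""
    clean = redact(raw)
    kind = "feature_request" if any(cue in clean.lower() for cue in FEATURE_CUES) else "problem"
    # Hash the redacted text (not the raw text) so the ID itself carries no PII.
    pid = hashlib.sha256(f"{source}|{date}|{clean}".encode()).hexdigest()[:12]
    return {"id": pid, "source": source, "date": date, "kind": kind, "text": clean}


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSON Lines with stable key order for deterministic diffs."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)
```

For example, a support ticket reading "Reach me at jane@example.com or +1 415 555 0100. Reports take hours to build." becomes a `problem` record whose text keeps the pain ("Reports take hours to build.") and drops the contact details.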

Document access controls and keep provenance IDs with every record so you can trace quotes back to their source during review. That traceability builds trust with legal and leadership. It also makes it easier to explain why a phrase made the cut. Safety and trust are part of speed here.

A quick aside: if you skip this step, the rest is guesswork.

Normalize, embed, and deduplicate for discovery

Pick an embedding model that balances cost with semantic accuracy. Chunk docs by meaning, not arbitrary length, and include a small overlap for context. Remove near-duplicates using cosine similarity thresholds so one popular thread doesn’t flood your clustering with echoes.
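One way to approximate "chunk by meaning" without extra dependencies is to respect sentence boundaries: group whole sentences up to a word budget and carry the last sentence into the next chunk as overlap. This is a sketch under that assumption; the `max_words` and `overlap_sentences` defaults are placeholders, and dedicated semantic chunkers split on embedding shifts rather than punctuation.

```python
import re


def chunk_by_sentence(doc: str, max_words: int = 120, overlap_sentences: int = 1) -> list[str]:
    """Group whole sentences into chunks of roughly max_words, repeating the last
    `overlap_sentences` sentences at the start of the next chunk for context.
    A chunk can exceed max_words if the overlap plus one long sentence is large."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", doc) if s.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for sentence in sentences:
        words_so_far = sum(len(s.split()) for s in current)
        if current and words_so_far + len(sentence.split()) > max_words:
            chunks.append(" ".join(current))
            # Start the next chunk with the overlap sentences (none if overlap is 0).
            current = current[-overlap_sentences:] if overlap_sentences else []
        current.append(sentence)
    if current:
        chunks.append(" ".join(current))
    return chunks
```

With `max_words=6`, the text "A b c. D e f. G h i." splits into "A b c. D e f." and "D e f. G h i.", so the middle sentence appears in both chunks as context.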

Keep provenance intact. Store the ID, source, and timestamps alongside embeddings so you can pull representative quotes into review later. This is where you reduce noise without losing signal. In practice, that’s what makes clustering useful instead of just interesting.
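A greedy near-duplicate filter that keeps provenance might look like this sketch. It assumes each record already carries an `embedding` vector from whatever model you chose, plus its `id`, `source`, and timestamp; the 0.95 threshold is a placeholder to tune. Instead of discarding echoes, it records their IDs on the kept record, so clustering sees one copy while frequency and traceability survive. The pairwise loop is quadratic, so large corpora would call for an approximate nearest-neighbor index.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def dedupe(records: list[dict], threshold: float = 0.95) -> list[dict]:
    """Keep the first record of each near-duplicate group; attach later matches'
    IDs under "duplicate_ids" so echoes stay traceable instead of vanishing."""
    kept: list[dict] = []
    for rec in records:
        match = next(
            (k for k in kept if cosine(rec["embedding"], k["embedding"]) >= threshold),
            None,
        )
        if match is None:
            kept.append({**rec, "duplicate_ids": []})  # copy, so inputs are not mutated
        else:
            match["duplicate_ids"].append(rec["id"])
    return kept
```

With toy two-dimensional vectors, a forum post embedded at `[0.99, 0.01]` folds into a support ticket at `[1.0, 0.0]`, while a sales note at `[0.0, 1.0]` stays separate.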

Cluster to themes and extract emergent phrases

Use hierarchical or density-based clustering to group pains and outcomes. Within each cluster, extract recurring phrases, metaphors, and “why now” triggers. Score themes by frequency, recency, and customer segment weight so you don’t over-index on outliers.
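One way to combine frequency, recency, and segment weight into a single theme score is to sum each mention's segment weight multiplied by an exponential recency decay. This is a sketch, not a standard formula: the segment names, their weights, and the 90-day half-life are assumptions you would set from your own ideal customer profile.

```python
from datetime import date

# Hypothetical segment weights: best-fit customers count most.
SEGMENT_WEIGHT = {"best_fit": 1.0, "adjacent": 0.5, "out_of_profile": 0.1}


def theme_score(mentions: list[tuple[date, str]], today: date, half_life_days: float = 90.0) -> float:
    """Sum over mentions of segment weight x recency decay.
    Frequency enters through the sum; a mention loses half its weight every
    half_life_days, and unknown segments contribute nothing."""
    score = 0.0
    for mentioned_on, segment in mentions:
        age_days = (today - mentioned_on).days
        decay = 0.5 ** (age_days / half_life_days)
        score += SEGMENT_WEIGHT.get(segment, 0.0) * decay
    return score
```

For example, one best-fit mention today plus one from exactly 90 days ago scores 1.5, while five out-of-profile mentions today score about 0.5, which is how the weighting keeps a noisy outlier segment from dominating.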

Save cluster exemplars and quotes. You’re not writing copy yet. You’re assembling the raw parts for a story that sounds familiar to your buyer. This mirrors how structured evidence reviews work to reduce bias before scale, as in systematic evidence screening workflows.

Draft and validate candidate narratives fast

Synthesize three concise POVs per priority cluster. Force a structure: benefits, proof points, and disqualifiers in 120 to 180 words. Run a human rubric across clarity, distinctiveness, and claim safety. Then run three quick tests: email subject A/B, small paid search, and social CTA lift.
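The forced structure is easy to check mechanically before a draft reaches the human rubric. In this sketch, the `PovDraft` class and its three fields are hypothetical names mirroring the benefits, proof points, and disqualifiers structure; the 120 to 180 word bounds come from the paragraph above. Clarity, distinctiveness, and claim safety still need human judgment.

```python
from dataclasses import dataclass


@dataclass
class PovDraft:
    """One candidate narrative, split into the three required parts."""
    benefits: str
    proof_points: str
    disqualifiers: str

    def word_count(self) -> int:
        return sum(len(part.split()) for part in (self.benefits, self.proof_points, self.disqualifiers))


def structure_problems(draft: PovDraft, min_words: int = 120, max_words: int = 180) -> list[str]:
    """List structural problems; an empty list means the draft is ready for the rubric."""
    parts = (
        ("benefits", draft.benefits),
        ("proof points", draft.proof_points),
        ("disqualifiers", draft.disqualifiers),
    )
    problems = [f"missing {name}" for name, text in parts if not text.strip()]
    n = draft.word_count()
    if not min_words <= n <= max_words:
        problems.append(f"{n} words, outside {min_words}-{max_words}")
    return problems
```

Running this gate first means reviewers spend their time scoring the argument, not counting words or chasing a missing disqualifier section.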

Keep it cheap, one week per loop. You’re looking for directional wins, not final copy. The point is to kill the weakest frames quickly and move the best candidate into a deeper test. Short loops reduce the cost of false positives and keep the team confident.
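For the email subject A/B test, a pooled two-proportion z-test is one common way to tell whether a lift is more than noise. The sketch below is a standard formulation using only the standard library; the counts in the usage note are hypothetical. With one-week loops, samples are often small, so read the p-value as directional evidence for killing or promoting a frame, not as a final verdict.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test comparing variant B against A.
    Returns (z, two-sided p-value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # all or no conversions in both arms: no evidence either way
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF via erf, doubled for a two-sided test.
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value
```

For example, `two_proportion_z(50, 1000, 80, 1000)`, where the second subject line draws 80 opens per 1,000 sends against 50, gives a z of roughly 2.7 and a p-value under 0.01: a clear directional win worth moving into a deeper test.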

Operationalize the winner so it compounds

Freeze the winning frame as a governance object: message, proof, banned terms, and claim limits. Update briefs, templates, and CTAs. Seed a 6 to 8 piece starter pack: a pillar explainer, objection posts, comparison angles, and sales one-pagers. Then set a quarterly review sprint to check for drift and new signals.

This is the difference between a one-off campaign and a system that compounds. When the frame lives in governance and jobs, new content reinforces the narrative instead of reinventing it. That’s how you get steady, low-drama execution.

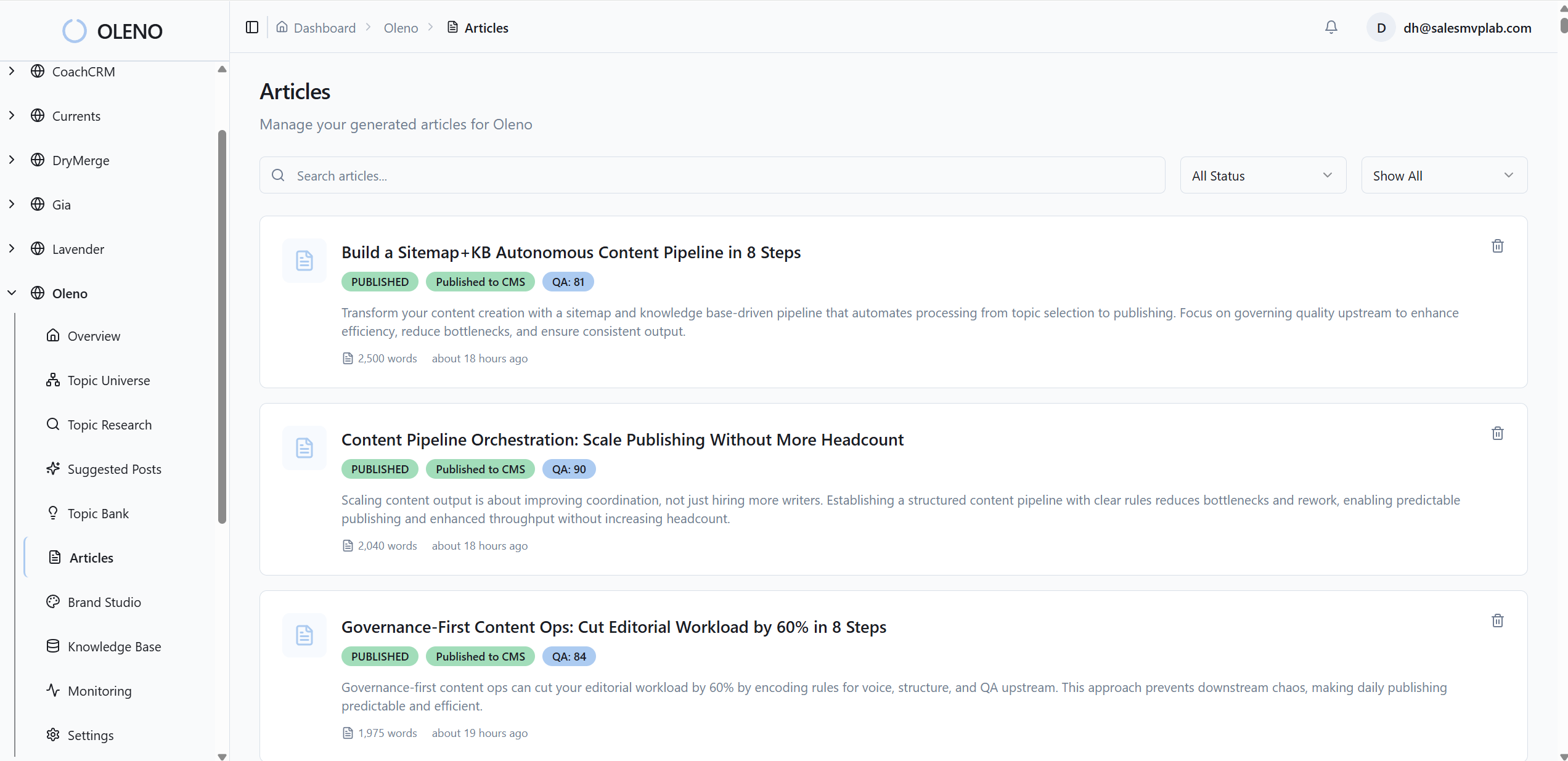

How Oleno Codifies and Scales Your Winning Narrative

Oleno turns your narrative into a system that runs daily. You define truth and boundaries once, then Oleno produces category education, frameworks, and evaluation content on a cadence, with quality gates that block drift. The result is consistent output that compounds.

Governance that locks in your narrative and claim boundaries

Codify voice, positioning, product truth, approved claims, and banned terms once. Oleno applies these rules everywhere during creation, so drafts respect legal and brand constraints by default. You see fewer risky edits, faster approvals, and a cleaner handoff to publishing when the rails are built in.

Because governance changes slowly, it becomes the anchor. Oleno uses your approved language and claim limits to keep new work aligned, even as volume increases. You direct the system with rules, not constant rewrites. That’s where small teams regain time.

Job-based execution to publish category education repeatedly

Turn the narrative into jobs, not ad-hoc tasks. With Oleno, you enable POV and category education, frameworks and guides, and buyer evaluation content as ongoing jobs. Each job runs the same flow (discover, angle, brief, draft, QA, publish), so coverage grows across the funnel without resets.

You decide what jobs to run based on goals. Oleno handles the pipeline and cadence. That separation keeps strategy human while execution stays predictable. It also prevents the “burst and burnout” pattern that kills momentum every quarter.

QA gates and knowledge grounding that reduce risk

All drafts pass automated checks for voice, structure, clarity, grounding against your knowledge base, and adherence to product truth. Claims stay inside your configured boundaries. Oleno blocks anything that fails until it’s revised, which reduces rework and protects narrative integrity as volume rises.

As output scales, manual review becomes a bottleneck. Oleno’s deterministic gates keep the quality bar steady without adding headcount, so the story stays intact from article one to article one hundred. That’s the reliability most teams miss.

CMS publishing and reuse so the story compounds

Publish directly to your CMS in draft or live mode without duplicates, then reuse approved content across channels with formatting rules. Distribution uses your approved messaging so it doesn’t invent new phrasing. Cadence holds, coverage expands, and the category frame gets reinforced, not reinvented.

This is where compounding shows up. The more consistent your publishing, the more your narrative becomes the default lens for your buyers. Oleno keeps it steady so you don’t have to manage every step manually.

If you want to see how this looks with your narrative and sources, you can Request A Demo. We’ll walk through governance, jobs, and the QA gates on real examples.

Conclusion

You don’t need a better brainstorm. You need better signal and a tighter loop. Mine the language buyers already use, validate fast, then lock the winner into governance and jobs so execution compounds. That’s how small teams compete. Not with louder opinions, but with a system that keeps shipping the right story.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've spent 13+ years in B2B SaaS in both sales and marketing leadership, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions