Audit Content Coverage with Information Gain Scoring (Practical Guide)

Most teams score content after it’s written. That’s backwards. If the idea is a repeat, no amount of editing or design turns it into something readers, or machines, should care about. I learned this the hard way. At one company, our best-written piece stalled for three weeks. It looked great. It said nothing new.

Here’s my take: originality is a pre-draft decision, not a post-edit debate. Score the brief’s uniqueness against your own knowledge base and what already ranks. If the delta is small, pause. Add missing angles, examples, or facts, then move forward. It sounds simple because it is. The discipline is doing it every time.

Key Takeaways:

- Gate briefs with a 0–100 information gain score before drafting

- Compare your outline against KB, sitemap, and top-10 SERP paragraphs

- Use cosine similarity and entity overlap to detect hidden duplication

- Label cluster saturation and enforce cooldowns to avoid over-publishing

- Reward high-gain drafts in QA; re-brief low-gain sections with specifics

Why Originality Should Be Scored Before You Write

Originality should be quantified before any words hit the page because duplication hides in familiar phrasing and recycled examples. A pre-draft score exposes overlap with your knowledge base and what already ranks, saving hours downstream. Treat it like a uniqueness delta you govern with policy, not vibes.

What Is Information Gain In Content Audits?

Information gain measures how much new, non-redundant value your draft adds relative to your own assets and current search results. You’re looking for net-new claims, examples, explanations, or synthesis, not just new sentences. When the score is low, you’re likely restating what’s on your site and the SERP. Pause the draft. Fix the brief.

Think inputs and outputs. Inputs: your KB, your sitemap, and paragraph snapshots from the top-10 results. Output: a 0–100 score that acts as a gate. Keep the math explainable. No black boxes. If someone asks why a draft was blocked, you should be able to point to specific overlapping entities and high-similarity sections. Even Google leans into “helpful, original content” as a baseline, which supports the spirit of this approach, though not the implementation specifics. See Google’s Creating Helpful Content guidelines.
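
To make "point to specific overlapping entities and high-similarity sections" concrete, here is a minimal Python sketch of an explainable result record. The `Evidence` and `GainReport` names and their fields are hypothetical; the only idea is that a score never travels without the overlaps that produced it.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    """One overlap that cost a brief points (hypothetical structure)."""
    section: str        # outline section heading
    source_type: str    # "kb", "sitemap", or "serp"
    source_id: str      # KB chunk ID, sitemap URL, or SERP snapshot ID
    similarity: float   # cosine similarity to the matched source paragraph
    shared_entities: set[str] = field(default_factory=set)


@dataclass
class GainReport:
    score: float                                          # 0-100 information gain
    evidence: list[Evidence] = field(default_factory=list)

    def explain(self, top_n: int = 3) -> list[str]:
        """Return the strongest overlaps as lines a reviewer can check."""
        ranked = sorted(self.evidence, key=lambda e: e.similarity, reverse=True)
        return [
            f"{e.section!r} ~ {e.source_type}:{e.source_id} "
            f"(cos={e.similarity:.2f}, entities={sorted(e.shared_entities)})"
            for e in ranked[:top_n]
        ]


# Usage: a blocked brief carries its reasons with it.
report = GainReport(
    score=22.0,
    evidence=[Evidence("What is information gain?", "serp", "rank-2#4", 0.91, {"cosine similarity"})],
)
print(report.explain())
```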

The Metrics That Actually Matter For Originality

You don’t need a lab. Start with semantic overlap, entity novelty, and cross-source coverage. Compute embedding similarity between your outline sections and source paragraphs, then add a simple entity overlap ratio. This catches mirrors where the wording changes but the ideas don’t. A single cosine score paired with entity overlap will usually beat intuition.
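
A minimal sketch of the two signals, in plain Python so it runs without dependencies. It assumes you already have embedding vectors from whatever model you use and entity sets from your own extractor. `entity_overlap` here is the share of a section's entities already present in a source, which is one reasonable choice (Jaccard similarity is another).

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty vector carries no signal
    return dot / (norm_a * norm_b)


def entity_overlap(section_entities: set[str], source_entities: set[str]) -> float:
    """Share of the section's entities that already appear in the source (0-1)."""
    if not section_entities:
        return 0.0
    return len(section_entities & source_entities) / len(section_entities)


print(cosine_similarity([1.0, 0.0], [1.0, 0.0]))             # 1.0
print(entity_overlap({"Google", "SERP"}, {"SERP", "KB"}))     # 0.5
```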

Translate this into a stable 0–100 score. For example: base score equals 100 minus weighted similarity; subtract penalties for repeated entities; add small bonuses for sections with low matches across all sources. The key is consistency. If you need framing or external reference points, iBeam’s overview of information gain gives a clear introduction to the concepts. Use it to align your team on terminology, not to replace your own scoring.
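
Written out, one way to formalize that example is below. Here $s_{i,t}\in[0,1]$ is the highest cosine similarity (negative values clamped to 0) between outline section $i$ and any paragraph of source type $t$ (KB, sitemap, SERP), $w_t$ are source weights summing to 1, $e_i$ is the section's entity overlap ratio, $n$ is the number of sections, and $\lambda$, $\beta$, $\tau$ are tuning constants you choose. The symbols are illustrative, not a standard definition.

```latex
G = \min\Big(100,\ \max\big(0,\ 100 - 100\,\bar{S} - \lambda\,\bar{E} + \beta\,N_{\text{novel}}\big)\Big)

\bar{S} = \frac{1}{n}\sum_{i=1}^{n}\sum_{t} w_t\, s_{i,t}, \qquad
\bar{E} = \frac{1}{n}\sum_{i=1}^{n} e_i, \qquad
N_{\text{novel}} = \bigl|\{\, i : \max_{t} s_{i,t} < \tau \,\}\bigr|
```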

Where Duplication Actually Creeps In

Duplication creeps in at the idea stage, not during editing. It happens when briefs mirror entities and claims already spread across your KB, sitemap, and top results. A cross-compare step that checks embeddings and entities reveals mirrors even when the sentences sound fresh.

What Traditional Coverage Audits Miss

Traditional audits count URLs, keywords, or publish dates. They rarely evaluate whether a proposed draft adds a novel claim or example versus what you’ve already said. So the inventory looks thorough, but redundant angles slide through. That’s how teams end up with three pages that differ in tone, not substance.

Add a cross-compare step to your briefing. Take each proposed section and compare it to your KB facts, relevant sitemap pages, and top-SERP paragraphs. If similarity and entity overlap spike across sources, you’re rewriting an idea you already “own” publicly or one that already ranks. Think of it as audit discipline applied to ideas. Even in classic audit work, structured components and clear criteria reduce subjectivity; ISACA’s audit basics on structured reporting offer helpful context on why clear criteria matter.

The Hidden Overlap Between Your KB, Sitemap, And SERP

Redundancy isn’t just identical phrases. It’s the same entities, examples, and claims in slightly different clothes. Your KB says X. Two blog posts say X with a different example. The top five results say versions of X. Your next draft pitches X again with a clever intro. Net-new value? Not really.

Use embeddings for paragraphs and named-entity extraction for people, products, features, and claims. Compute pairwise similarity between brief sections and source paragraphs, then aggregate by source type. If your overlap spikes in multiple sources, the draft needs new angles or evidence. This is boring to set up once, and it saves dozens of hours later.
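
The pairwise step might look like the sketch below, assuming each paragraph has already been embedded and tagged with its source type. The function names, the 0.8 threshold, and the "two or more source types" rule are placeholders to calibrate, not recommendations.

```python
import math
from collections import defaultdict


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na, nb = math.hypot(*a), math.hypot(*b)
    return dot / (na * nb) if na and nb else 0.0


def closest_by_source(
    sections: dict[str, list[float]],
    paragraphs: list[tuple[str, str, list[float]]],
) -> dict[str, dict[str, tuple[float, str]]]:
    """For each outline section, keep the closest paragraph per source type.

    `paragraphs` holds (source_type, paragraph_id, embedding) tuples,
    where source_type is e.g. "kb", "sitemap", or "serp".
    """
    best: dict[str, dict[str, tuple[float, str]]] = defaultdict(dict)
    for name, vec in sections.items():
        for source_type, pid, pvec in paragraphs:
            sim = cosine(vec, pvec)
            current = best[name].get(source_type)
            if current is None or sim > current[0]:
                best[name][source_type] = (sim, pid)
    return dict(best)


def overlaps_multiple_sources(
    per_type: dict[str, tuple[float, str]], threshold: float = 0.8, min_types: int = 2
) -> bool:
    """True when a section closely matches content in at least `min_types` source types."""
    return sum(1 for sim, _ in per_type.values() if sim >= threshold) >= min_types
```

Aggregating by source type (rather than one global maximum) is what lets you say "this section mirrors both our KB and the SERP," which is the signal that calls for new angles or evidence.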

The Cost Of Rewriting What Already Exists

Rewriting familiar takes burns time, budget, and patience. Low-gain briefs quietly consume research hours, editor reviews, and design cycles that could close real gaps. Quantifying this drag turns a vague annoyance into a predictable line item you can remove with a score and a gate.

Engineering And Editorial Hours Lost To Low-Gain Briefs

Suppose a draft takes 10 hours across research, writing, editing, and publishing. If 40 percent of briefs are low-gain, four out of every ten drafts go to restatements. At 10 hours each, that’s 400 of every 1,000 hours of team capacity, not counting the context switching and rework fatigue you’ll never see on a dashboard.
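
The same back-of-the-envelope math as a tiny function, so you can plug in your own numbers. It deliberately ignores context switching and rework, which makes it a floor rather than a full cost.

```python
def low_gain_cost(capacity_hours: float, hours_per_draft: float, low_gain_share: float) -> tuple[int, float]:
    """Return (number of low-gain drafts, hours they consume) for one planning period."""
    drafts = int(capacity_hours // hours_per_draft)      # drafts the capacity can fund
    low_gain_drafts = round(drafts * low_gain_share)     # drafts that restate existing content
    return low_gain_drafts, low_gain_drafts * hours_per_draft


print(low_gain_cost(1_000, 10, 0.40))  # (40, 400)
```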

In my teams, this looked like endless review threads: “Didn’t we cover this already?” It’s deflating. A simple originality gate upstream changes the dynamic. Writers stop guessing. Editors stop patching. Designers stop polishing duplicates. You won’t need a big performance study to feel the difference.

The Opportunity Cost On Clusters And Authority

Every redundant page is a slot you didn’t spend on a true gap. Clusters build authority when claims and examples expand, not repeat. Redundancy drags that signal toward sameness. Internal links have less to point to. Sections are harder to cite because they don’t stand alone.

Here’s the practical call: if a cluster trends toward “well-covered,” require higher gain scores to proceed. If saturation creeps in, enforce cooldowns. It’s not punishment. It’s focus. And if you want more background on why “information gain” matters for discoverability, this primer on information gain SEO from Supple offers additional language you can adapt internally. Want a low-risk way to see the difference in your workflow? Run a small pilot and try using an autonomous content engine for always-on publishing.

When Teams Realize The Page Adds Nothing New

Teams usually notice duplication too late, during a handoff or exec review. The content reads fine but adds nothing relative to what you’ve shipped and what currently ranks. An upstream originality score prevents those awkward, demoralizing post-mortems that stall momentum.

The 3pm Handoff That Turns Into Three Weeks Of Rework

I’ve lived this. Strong voice. Beautiful visuals. Then feedback lands: feels familiar. Did we say this already? The piece ping-pongs between writer and editor and eventually stalls with design. It’s not a talent problem. It’s a system gap. A brief-level score would have stopped the cycle before a single mockup.

What stings most is the sunk cost. By the time teams notice, everyone’s already invested. That’s why the gate belongs before writing and design. You’ll still miss occasionally. But not week-ending, three-rounds-of-edits misses.

When Leadership Asks Why This Page Exists

A fair question. If it repeats your KB and mirrors the SERP, why publish it? This isn’t perfectionism. It’s intent. A passable article that adds zero new information is noise in your system. Tie every draft to a gain score and that conversation shifts from opinion to policy.

When I was supporting sales, this exact debate showed up in pipeline terms: what job does this page do? “Awareness” is not a job if the page is redundant. A score, a threshold, and clear exceptions beat taste-based arguments. If this feels familiar and faster drafting hasn’t helped, you’re not alone; Google’s Creating Helpful Content guidelines can also help reset expectations when “fast” becomes “fuzzy.” If you’re curious how a governed pipeline feels in practice, you can try generating 3 free test articles and judge the friction reduction yourself.

Build The Information Gain Pipeline That Stops Redundant Drafts

An information gain pipeline scores briefs, not people, and blocks redundancy before writing starts. The method is straightforward: assemble sources, compute overlap, convert to a score, then govern with thresholds and cooldowns. With clear labels, you focus on gaps rather than churning similar pages.

Step 1: Assemble Your Sources Cleanly

Gather three inputs for every proposed brief: knowledge base chunks with stable IDs, live sitemap pages by section, and a top-10 SERP snapshot per target query captured as paragraphs. Normalize the text, strip boilerplate, and tag each source type. You can’t score what you can’t retrieve reliably.
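
A minimal sketch of the normalization and tagging step. The boilerplate patterns and the `doc_id#index` chunk ID scheme are assumptions; swap in whatever your CMS and crawler actually produce. Chunk IDs keep the original paragraph index, so skipping boilerplate doesn't renumber the chunks that survive.

```python
import re
from dataclasses import dataclass

SOURCE_TYPES = {"kb", "sitemap", "serp"}

# Example boilerplate patterns; replace with whatever your own pages repeat.
BOILERPLATE = [
    re.compile(r"^(share this|subscribe|related posts|accept cookies)", re.IGNORECASE),
]


@dataclass(frozen=True)
class SourceChunk:
    source_type: str   # one of SOURCE_TYPES
    chunk_id: str      # stable ID: document ID plus original paragraph index
    text: str


def normalize(text: str) -> str:
    """Unify curly quotes and collapse whitespace so comparisons are fair."""
    text = text.replace("\u2019", "'").replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip()


def to_chunks(source_type: str, doc_id: str, paragraphs: list[str]) -> list[SourceChunk]:
    """Normalize paragraphs, drop boilerplate, and tag each chunk with its source type."""
    if source_type not in SOURCE_TYPES:
        raise ValueError(f"unknown source type: {source_type}")
    chunks = []
    for i, para in enumerate(paragraphs):
        clean = normalize(para)
        if not clean or any(p.search(clean) for p in BOILERPLATE):
            continue  # skip empty lines and navigation/footer text
        chunks.append(SourceChunk(source_type, f"{doc_id}#{i}", clean))
    return chunks
```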

I like to version these snapshots. When someone challenges a score, you can show exactly what the system compared against on that date. It avoids “but I don’t see that page now” debates. Small operational habits like this keep the process calm and predictable.
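
One lightweight way to version a snapshot is to store the capture date alongside a hash of the paragraphs, as sketched below. The record layout is hypothetical; the point is that identical content always gets the same version ID, so you can show exactly what a score was computed against.

```python
import hashlib
import json
from datetime import date


def snapshot_record(query: str, paragraphs: list[str], captured_on: date) -> dict:
    """Freeze a SERP snapshot so a score can be re-explained later."""
    payload = json.dumps(paragraphs, ensure_ascii=False)
    return {
        "query": query,
        "captured_on": captured_on.isoformat(),
        # Content hash: identical paragraphs yield an identical version ID.
        "version": hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12],
        "paragraphs": paragraphs,
    }
```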

Step 2: Compute Semantic Similarity The Right Way

Use modern embeddings for paragraphs and brief sections. Cosine similarity works fine. Add entity extraction to track overlap across people, products, features, and claims. Compute similarity between the outline and each source paragraph, then aggregate by source type. Keep dedup thresholds strict. Catch mirrors, not just identical strings.

Two notes. First, calibrate thresholds by topic area; “how-to” and “definitions” behave differently from “original research.” Second, document choices. You don’t need a thesis, just enough detail so future you remembers why a 0.78 cosine plus three shared entities triggers a penalty.
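
Documented, per-topic thresholds can be as simple as a dictionary with a note beside each value. Only the how-to entry reuses the 0.78 cosine plus three-entity example above; the other numbers are placeholders to replace with your own calibration.

```python
# Hypothetical per-topic calibration. Keep the rationale next to each value.
THRESHOLDS = {
    "how-to": {
        "cosine": 0.78, "shared_entities": 3,
        "note": "0.78 cosine plus three shared entities triggers a penalty",
    },
    "definition": {
        "cosine": 0.82, "shared_entities": 2,
        "note": "placeholder: calibrate against your own corpus",
    },
    "original-research": {
        "cosine": 0.70, "shared_entities": 4,
        "note": "placeholder: calibrate against your own corpus",
    },
}


def triggers_penalty(topic_type: str, cosine: float, shared_entities: int) -> bool:
    """Penalize a section only when both similarity and entity overlap are high."""
    t = THRESHOLDS[topic_type]
    return cosine >= t["cosine"] and shared_entities >= t["shared_entities"]
```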

Step 3: Convert Similarity To A 0–100 Gain Score

Translate overlap into a simple score that everyone understands. One approach: gain equals 100 minus weighted similarity; subtract entity overlap penalties; add novelty bonuses for sections with low matches across all sources. Keep the math boring and explainable.
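
Here is a minimal Python sketch of that approach. The source weights, the 20-point entity penalty, the 5-point novelty bonus, and the 0.5 "low match" cut-off are all assumptions to tune. Each section is expected to carry its best similarity per source type and an entity overlap ratio, computed however your pipeline does it.

```python
from typing import Optional

# Assumed source weights; they should sum to 1.
DEFAULT_WEIGHTS = {"kb": 0.3, "sitemap": 0.3, "serp": 0.4}


def gain_score(
    sections: list[dict],
    weights: Optional[dict[str, float]] = None,
    entity_penalty: float = 20.0,    # points removed at 100% entity overlap
    novelty_bonus: float = 5.0,      # points added per low-match section
    novelty_threshold: float = 0.5,  # "low match" = best similarity below this
) -> float:
    """Score an outline 0-100.

    Each section is a dict like
    {"sim": {"kb": 0.4, "sitemap": 0.2, "serp": 0.7}, "entity_overlap": 0.5},
    where "sim" holds the best cosine similarity per source type.
    """
    if not sections:
        return 0.0  # nothing to evaluate, so nothing to approve
    w = weights or DEFAULT_WEIGHTS
    n = len(sections)

    def clamped(s: dict, t: str) -> float:
        # Negative cosine values count as "no overlap".
        return max(0.0, s["sim"].get(t, 0.0))

    weighted_sim = sum(sum(w[t] * clamped(s, t) for t in w) for s in sections) / n
    mean_entity_overlap = sum(s["entity_overlap"] for s in sections) / n
    novel_sections = sum(
        1 for s in sections if max(clamped(s, t) for t in w) < novelty_threshold
    )
    raw = (
        100
        - 100 * weighted_sim
        - entity_penalty * mean_entity_overlap
        + novelty_bonus * novel_sections
    )
    return round(min(100.0, max(0.0, raw)), 1)
```

Clamping to 0–100 keeps the gate thresholds meaningful even when bonuses or penalties pile up on a long outline.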

Scores under 30 usually signal restatement; 30–50 probably needs new angles or evidence; above 60 tends to carry new explanations, examples, or synthesis. Your mileage may vary. The point is consistency over cleverness. If you plan to socialize the method broadly, an accessible explainer like iBeam’s overview of information gain can help non-technical stakeholders align on the concept.

Step 4: Set Thresholds And Governance Gates

Apply gates before drafting. Reject briefs under 30. Escalate 30–50 for human review with notes on what to add. Auto-approve above 50, then reward above 70 in QA. Require evidence for low-gain sections: new data, examples, or a synthesis angle.
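
The gate itself is a few lines. This sketch treats a score of exactly 50 as "escalate" and exactly 70 as plain approval, since the ranges above leave those boundaries open; adjust to match your own policy.

```python
def gate(score: float) -> str:
    """Map a brief's 0-100 gain score to a pre-draft decision."""
    if score < 30:
        return "reject"
    if score <= 50:
        return "escalate"        # human review with notes on what to add
    if score > 70:
        return "approve+reward"  # flag for recognition in QA
    return "approve"
```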

Policy beats persuasion here. If a draft somehow sails through and the score drops after writing, QA should push it back for re-brief. You’ll ship fewer pages initially. You’ll also ship fewer rewrites, which is the actual win. One more thing: celebrate the “no-go” decisions. They protect your time.
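
A post-draft check can compare the draft's score with the brief's. The 5-point tolerance below is an assumed allowance for run-to-run scoring noise, not a recommended value.

```python
def qa_recheck(brief_score: float, draft_score: float, tolerance: float = 5.0) -> str:
    """Send a draft back for re-brief when its gain drops below the brief's."""
    if brief_score - draft_score > tolerance:
        return "re-brief"
    return "pass"
```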

Step 5: Label Cluster Saturation And Enforce Cooldowns

Label clusters as underserved, healthy, well-covered, or saturated based on coverage ratios and gain trends. Enforce a 90-day cooldown on saturated topics. If a proposed brief in a saturated cluster scores under 50, auto-reject and suggest consolidation with existing pages.
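
A sketch of the labeling and cooldown rules, assuming `coverage_ratio` means covered subtopics divided by known subtopics and `recent_gain_avg` is the mean gain of the cluster's latest pieces. Those definitions and the 0.3 / 0.7 / 50 labeling cut-offs are hypothetical; the 90-day cooldown and the under-50 auto-reject come from this step.

```python
from datetime import date, timedelta

COOLDOWN = timedelta(days=90)


def label_cluster(coverage_ratio: float, recent_gain_avg: float) -> str:
    """Label a cluster from coverage and recent gain (hypothetical cut-offs)."""
    if coverage_ratio < 0.3:
        return "underserved"
    if coverage_ratio < 0.7:
        return "healthy"
    if recent_gain_avg >= 50:
        return "well-covered"  # mostly covered, but recent pieces still add something
    return "saturated"


def admit_brief(label: str, score: float, last_published: date, today: date) -> str:
    """Apply the saturated-cluster rules before the normal score gate."""
    if label == "saturated":
        if today - last_published < COOLDOWN:
            return "blocked: cooldown"
        if score < 50:
            return "reject: consolidate with existing pages"
    return "continue to standard gate"
```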

This stops over-publishing the same idea while neglecting others. It also makes internal linking cleaner because you’re pointing to true “nodes,” not near-duplicates. You’ll feel the difference in planning meetings: more clarity, fewer circular debates about “another piece on X.”

Step 6: Run A 30- And 90-Day Cadence With Queries And Snippets

Create a lightweight job to re-sample SERPs, refresh embeddings, and re-score hot clusters every 30 days. Every 90 days, audit saturation and cooldown expiries. Keep a couple of reusable code snippets for fetching SERP paragraphs and recomputing gain so reviews don’t stall.
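
The cadence logic can be a single function your scheduler calls daily. The job names here are hypothetical placeholders for your own SERP sampling, embedding, and audit tasks.

```python
from datetime import date, timedelta


def due_jobs(today: date, last_rescore: date, last_audit: date) -> list[str]:
    """Which recurring jobs should run today (30-day re-score, 90-day audit)."""
    jobs = []
    if today - last_rescore >= timedelta(days=30):
        jobs += ["resample_serps", "refresh_embeddings", "rescore_hot_clusters"]
    if today - last_audit >= timedelta(days=90):
        jobs += ["audit_saturation", "expire_cooldowns"]
    return jobs
```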

This rhythm keeps your scoring honest. Markets move. New sources appear. Thin coverage thickens. Regular refreshes ensure your “originality” bar reflects today’s landscape, not last quarter’s. And because the system is simple, the overhead stays low.

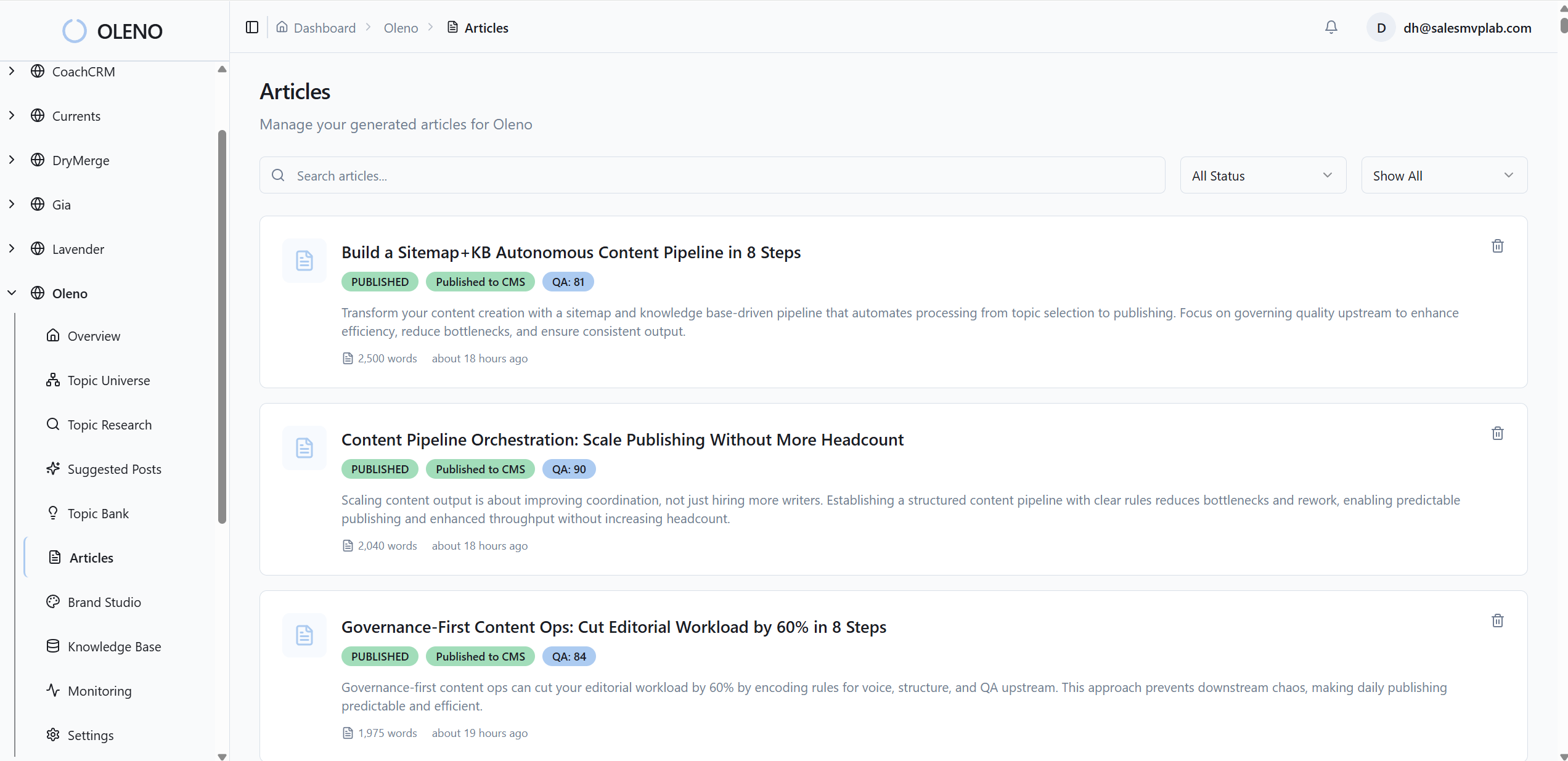

How Oleno Enforces Information Gain From Brief To Publish

Oleno enforces originality by scoring briefs during strategy and gating publication with automated QA. It compares your KB, sitemap, and current results in the brief, then preserves structure designed for citation. The result is fewer rewrites and more sections that genuinely stand on their own.

How Oleno Scores Briefs For Originality

Oleno calculates a 0–100 Information Gain score during brief generation, comparing top-ranking coverage against your knowledge base and live sitemap content. Low-gain outlines are flagged before drafting, so teams don’t burn cycles on redundancy. This keeps differentiation explicit and mechanical, not subjective.

Because the score lives in the brief, it travels with the draft. If a section drifts toward restatement, the system calls it out. Writers see what to add. Editors see why a piece was escalated or blocked. It’s simple governance that avoids taste-driven debates and protects time.

How Topic Universe And QA Gates Work Together

Topic Universe tracks clusters with saturation labels and enforces a 90-day cooldown before re-covering the same topic. Suggestions prioritize underserved areas, and saturated clusters face stricter gates. This aligns planning with originality, so the next page actually moves a pillar forward.

On the back end, Oleno’s QA system evaluates drafts against 80-plus criteria, including information gain and snippet readiness. High-gain sections are rewarded, and low-gain areas trigger refinement loops. Deterministic enhancements (snippet-ready H2 openings, internal links injected from a verified sitemap, and JSON-LD schema) make unique sections easier to reference without introducing monitoring or analytics. If you want to experience this flow without committing a team, you can try Oleno for free.

Conclusion

Scoring originality upstream sounds pedantic until you try it. Then the noise drops. Fewer rewrites. Clearer cluster growth. Fewer “why does this page exist” moments. Whether you build the pipeline yourself or let Oleno enforce it, the principle is the same: gate for new information before you write, and your system gets stronger with every publish.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions