Automated QA Gate: 80-Criteria Checklist for Publish-Ready Content

Most teams treat “QA” like a quick skim before publish. A checkbox. A polite “looks good.” Then something slips. A fabricated link. An off-brand hero. A breadcrumb that breaks your rich results. The truth is simple: if quality depends on who reviewed the draft on Tuesday, your system isn’t built for consistency. It’s gambling.

We learned this the hard way. Over the years I’ve shipped a wide range of content: scrappy founder posts, polished editorial, and yes, the occasional 9pm rollback. The fix wasn’t more hours. It was a gate. A deterministic policy that turns your rules into code and blocks anything under the bar. Less arguing about taste. More repeatable outcomes.

Key Takeaways:

- Treat QA as a production gate that enforces a passing score, not a last-minute edit

- Codify 80+ checks across structure, voice, information gain, visuals, links, and schema

- Use determinism where accuracy matters: internal links from a verified sitemap, programmatic schema, rule-based visuals

- Quantify the waste of manual cleanup and rollbacks to build urgency for change

- Design remediation loops that auto-fix safe issues, escalate edge cases, and retry

- Structure each H2 with snippet-ready openers to improve eligibility for citations

- Use an autonomous system to reduce rework and ship brand-consistent articles reliably

QA Is Not An Editorial Checkbox, It Is A Production Gate

QA is a production gate that programmatically enforces your content standards before publish. It evaluates a draft against 80+ criteria, calculates a score, and decides pass or fail. Think CI for articles: deterministic checks, automatic retries, and no go-live if critical rules break. A team shipping daily needs that kind of backbone.

The Checkbox Mindset Guarantees Inconsistency

When QA is a “courtesy pass,” quality turns subjective fast. One editor prioritizes voice, another fixates on commas, and your CMS becomes the place where structure gets patched after the fact. That variability isn’t anyone’s fault; it’s the inevitable outcome of relying on taste instead of tests. Convert the checklist into code. Set a passing score and blocking failures. You’ll feel the difference the first week you don’t have to debate what “done” means.

Here’s the kicker: once the bar is explicit, your team rightsizes effort. Writers aim for the score. Producers stop doing line edits at 5pm. And leadership gets predictability instead of vibes. It’s the content version of a Definition of Done that actually controls quality, not just describes it. For sanity, not ceremony.

What Changes When QA Becomes A Production Gate?

A gate turns preferences into policy. Passing score defined. Critical fail categories identified. Non-critical issues trigger remediation loops, not Slack arguments. The workflow tightens too: writers know what to hit, producers verify outcomes, and the CMS only receives publishable content. The biggest shift is cultural: you stop re-litigating the same defects every week because the system handles them the same way every time.

I’ve watched teams breathe easier once the gate exists. Fewer last-mile surprises. Fewer “who approved this?” threads. You still coach voice and story, but the machine handles structure, links, and schema. That’s the right split of labor.

What Is A QA Gate For Content, Really?

Think of it like continuous integration for articles. A policy that evaluates structure, voice, information gain, visuals, links, and schema against clear rules, computes a score, and gates publish. The process runs automatically and, if anything fails, files the fix or performs an auto-correction, then retests. If you want a model for the checks themselves, traditional QA checklists offer a useful blueprint: objective, repeatable, binary.

Ready to skip theory and see a system run the gate end to end? Build the muscle once and reuse it daily. Try Generating 3 Free Test Articles Now.

The Real Causes Of Unreliable Content Quality

Unreliable quality comes from variability, not laziness. Manual reviews drift, editors interpret rules differently, and LLM prompts produce inconsistent structure. Determinism fixes the failure modes. Put correctness in code so style can live in writing, not operations. That’s the separation most teams miss when they scale.

What Traditional Approaches Miss About Determinism

Prompts are great at words, weak at workflows. You can ask for “structured, on-brand content,” but you’re still rolling the dice on links, schema, and paragraph rhythm. Deterministic systems do the opposite: they guarantee structure and mechanics, then let writers focus on narrative. Programmatic schema generation, sitemap-verified internal links, and fixed section openers remove noise where accuracy matters most.

In practice, this looks boring, and that’s the point. The boring parts happen the same way every time. Internal links only from your verified sitemap. Schema generated as valid JSON-LD and attached correctly. Visuals that actually use your palette and aspect ratios. Save your creativity for arguments that should be creative.

Where Subjectivity Sneaks Into Structure And Voice

Subjectivity creeps in at the edges: bloated paragraphs, fluffy section openers, passive voice that dulls momentum. That’s manageable with rules, not reminders. Enforce 40–60 word openers per H2. Lint for banned terms. Keep readability within a band. Reject passive voice beyond a threshold. This isn’t about red pens; it’s about making clarity a requirement, not a wish.
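To make "rules, not reminders" concrete, here is a minimal sketch of a section linter. The banned terms and the sentence-length band are hypothetical placeholders rather than recommended values, and average sentence length is only a crude stand-in for a real readability metric.

```python
import re

# Hypothetical policy values; tune them to your own style guide.
BANNED_TERMS = {"leverage", "synergy", "game-changing"}
MIN_AVG_SENTENCE_WORDS = 8   # crude readability band, lower bound
MAX_AVG_SENTENCE_WORDS = 22  # crude readability band, upper bound


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., !, or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def lint_section(text: str) -> list[str]:
    """Return human-readable violations; an empty list means the section passes."""
    violations = []
    words = re.findall(r"[A-Za-z][A-Za-z'-]*", text.lower())
    for term in sorted(BANNED_TERMS):
        if term in words:
            violations.append(f"banned term: {term}")
    sentences = split_sentences(text)
    if sentences:
        avg = len(words) / len(sentences)
        if not MIN_AVG_SENTENCE_WORDS <= avg <= MAX_AVG_SENTENCE_WORDS:
            violations.append(f"average sentence length {avg:.1f} outside band")
    return violations


print(lint_section("Short. Very short."))
```

A passive-voice threshold would slot in as one more check that appends to `violations`, but detecting passive voice reliably needs a proper parser, so it is left out of this sketch.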

The bonus effect is consistency that search engines and AI assistants can parse cleanly. Snippet-ready openers help machines understand your answers quickly. And humans appreciate the same clarity. Everyone wins when your structure is consistent.

The Hidden Complexity Behind Links, Schema, And Visuals

Links, schema, and visuals are where “looks fine to me” breaks down. Random internal links split crawl equity. “Close enough” schema doesn’t validate. Images without brand guardrails chip at credibility. Each is testable. Each benefits from determinism. Pull links from a verified sitemap only. Generate and validate JSON-LD. Enforce brand palette, alt text, filenames, and aspect ratios. That’s how a design system works at the content layer: objective rules, repeatable outcomes, fewer surprises. If you need a checklist mindset for this, the design system maintenance checklist maps nicely to content components.
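As one illustration of "generate and validate JSON-LD," the sketch below builds a schema.org `BreadcrumbList` from a list of (name, URL) pairs and runs a few structural checks. The function names and the example.com URLs are made up for illustration, and the checks are a sanity pass, not a substitute for a full structured-data validator.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> dict:
    """Build a schema.org BreadcrumbList from (name, url) pairs, root first."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }


def breadcrumb_errors(doc: dict) -> list[str]:
    """Structural checks only; an empty list means nothing obvious is wrong."""
    errors = []
    if doc.get("@type") != "BreadcrumbList":
        errors.append("wrong @type")
    items = doc.get("itemListElement") or []
    if not items:
        errors.append("empty itemListElement")
    for expected, item in enumerate(items, start=1):
        if item.get("position") != expected:
            errors.append(f"position {item.get('position')} should be {expected}")
        if not item.get("name"):
            errors.append(f"item {expected} missing name")
        if not str(item.get("item", "")).startswith("https://"):
            errors.append(f"item {expected} URL is not https")
    return errors


doc = breadcrumb_jsonld([
    ("Blog", "https://example.com/blog"),
    ("QA Gate", "https://example.com/blog/qa-gate"),
])
assert breadcrumb_errors(doc) == []
print(json.dumps(doc, indent=2))
```

Because the markup is built from data instead of typed by hand, positions can't drift out of order and a missing field shows up as a failed check, not as a dropped rich result weeks later.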

The Compounding Cost Of Manual QA And Rework

The cost of manual QA shows up as hours, missed opportunities, and subtle trust erosion. You don’t feel it on Tuesday; you feel it when a quarter passes and goals slip. If quality is a negotiation every week, you’re paying for the same fixes forever. Let’s quantify it, even roughly, so the trade-offs become visible.

Engineering And Editorial Hours Lost To Fixes

Let’s pretend you ship 20 articles a month. Manual cleanup averages 90 minutes per piece across structure, images, and links. That’s 30 hours. Add 15 more for schema and formatting and you’re at 45 hours, more than a full workweek lost to predictable, preventable fixes. A deterministic gate slashes that by catching issues before the CMS work begins, when changes are cheap and automated.
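The numbers are illustrative, so it helps to rerun them with your own. A tiny sketch of the same arithmetic, with a hypothetical helper name:

```python
def monthly_rework_hours(articles: int, cleanup_minutes_each: float,
                         extra_hours: float) -> float:
    """Hours per month spent on manual cleanup plus schema and formatting work."""
    return articles * cleanup_minutes_each / 60 + extra_hours


# The example from the text: 20 articles, 90 minutes each, 15 extra hours.
print(monthly_rework_hours(20, 90, 15))  # 45.0
```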

The second-order effect matters too. Editors pulled into cleanup aren’t working on narrative or product storytelling. Developers dragged into schema validation aren’t building features. Opportunity cost is real, and it stacks.

The Cascading Impact On Brand Trust And SEO

Inconsistent visuals suggest rushed execution. Broken or invalid schema reduces eligibility for rich results. Thin or duplicative sections signal low information gain to machines and readers. None of these kills you in a day. But they chip away at trust and discoverability over time. Over a quarter, you’ll likely see fewer featured snippet wins and less AI citation potential, all while spending the same (or more) time to ship.

This is the quiet tax. You pay it in missed impressions and muted click-through rates. A gate doesn’t guarantee wins, but it removes self-inflicted losses.

What Does Inconsistent Linking And Schema Actually Cost?

Links placed randomly split crawl equity across pages that don’t need it. Schema errors can drop FAQ or breadcrumb enhancements for weeks. Let’s pretend two posts a week ship with invalid JSON-LD. Over 12 weeks, that’s 24 misses. If those enhancements add even a modest CTR lift, the compounding loss is obvious. The fix is straightforward: enforce correctness once, then never regress. The pre-release QA checklist mindset, once automated, pays back every month.

If you’re already feeling the drag of manual cleanups, it’s time to change the system, not the people. Try Using An Autonomous Content Engine For Always-On Publishing.

The Human Friction Everyone Feels But No One Measures

The operational pain isn’t just technical. It’s emotional. Ambiguity creates anxiety, and subjectivity breeds rework. When your team dreads “publish day,” you’re burning energy on the process, not the story. The fix is clarity, enforced by a gate that removes the debate.

The 3pm Publish That Turns Into A 9pm Rollback

You’ve lived this. A post goes live at 3pm. At 7:30, someone spots a broken breadcrumb or a fabricated link. Now it’s a scramble: rollback, thread, patch, republish. No one planned for it; everyone pays for it. A production gate blocks the publish before the scramble starts, files the fix, and retries. Predictability beats heroics.

There’s also the reputation hit you don’t see. Every rollback trains the team to anticipate fire drills. That’s not a culture anyone wants.

When Your Best Article Looks Off Brand

I’ve been there. At scale (hundreds or thousands of posts), visual consistency depends on whoever handled images that day. Strong ideas lose credibility because the hero looked like a stock photo collage. A simple visual policy, enforced by code, would’ve saved hours and preserved trust: palette, aspect ratios, alt text, filenames, and screenshot placement in the sections that sell. The fix wasn’t taste. It was rules and a check.

The most common miss? Product screenshots show up where they’re convenient, not where they persuade. That’s solvable with semantic matching and a rule that prioritizes solution sections for product visuals.

Why Your Team Dreads Releases More Than Launches

People dread ambiguity. “Good enough” is hard to hit when the target moves. A gate gives safety rails: writers aim for a score, producers see a pass, leadership knows the brand ships consistently. You trade second-guessing for forward motion: more time on story, less time on structure. If you want a parallel, the content equivalent of a clear Definition of Done removes the fear.

Build An Automated QA Gate That Enforces 80 Criteria

An automated QA gate encodes your standards as tests, sets a passing score, and controls publish. It enforces structure, voice, information gain, visuals, links, and schema, then runs remediation loops and retries as needed. This isn’t theoretical. It’s a model you can implement incrementally and evolve as your content footprint grows.

Define Your QA Gate Model: Thresholds, Score, And Gating Policy

Start with a passing score, say 85, and explicit critical failures that block publish, like invalid schema or fabricated links. Weight criteria groups based on risk: structure and schema often carry more impact than minor tone drift. Decide which failures auto-fix and which require human escalation (for example, product claims). Document the “publishable” contract so everyone understands the bar and how the gate enforces it.
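Here is one way that policy could look in code. It is a sketch under stated assumptions: the group weights, the check identifiers, and the `GateResult` shape are all hypothetical, and only the passing score of 85 and the two critical failures come from the example above.

```python
from dataclasses import dataclass

PASSING_SCORE = 85  # example threshold from the text
# Hypothetical group weights; they must sum to 1.0.
WEIGHTS = {"structure": 0.25, "schema": 0.25, "links": 0.20,
           "information_gain": 0.15, "visuals": 0.10, "voice": 0.05}
CRITICAL = {"invalid_schema", "fabricated_link"}  # always block publish


@dataclass
class GateResult:
    score: float
    blocking: list[str]
    passed: bool


def evaluate(group_scores: dict[str, float], failures: set[str]) -> GateResult:
    """group_scores maps each group to 0-100; failures holds failed check ids."""
    score = sum(WEIGHTS[group] * group_scores[group] for group in WEIGHTS)
    blocking = sorted(failures & CRITICAL)
    passed = score >= PASSING_SCORE and not blocking
    return GateResult(round(score, 1), blocking, passed)


# A perfect score still fails when a critical check is broken.
result = evaluate({group: 100 for group in WEIGHTS}, {"invalid_schema"})
print(result.passed, result.blocking)  # False ['invalid_schema']
```

Keeping critical failures separate from the weighted score is the point of the design: a high average can never buy its way past invalid schema or a fabricated link.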

You’ll want versioning and transparency. When criteria change, log it. When a draft fails, record the why. That audit trail pays dividends during postmortems and keeps the team aligned.

Convert Your Checklist To Validators Across Structure, Information Gain, And Snippets

Translate every rule into a validator. Verify each H2 opens with a 40–60 word, three-sentence paragraph. Enforce paragraph counts per H3 and cap list density. Score information gain against the approved brief; flag low differentiation early, not after draft. Validate that headings answer the question they introduce. If you use tables, enforce consistency. Fail any drift from the outline.
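The opener rule is a good first validator because it is easy to state and easy to test. A minimal sketch, assuming the first paragraph under each H2 has already been extracted as plain text; the function name is hypothetical and the sentence counter is deliberately naive (it will miscount abbreviations such as "e.g.").

```python
import re


def opener_errors(paragraph: str, min_words: int = 40, max_words: int = 60,
                  sentences_required: int = 3) -> list[str]:
    """Check the first paragraph under an H2 against the opener rule."""
    errors = []
    word_count = len(paragraph.split())
    if not min_words <= word_count <= max_words:
        errors.append(
            f"opener has {word_count} words, expected {min_words}-{max_words}")
    # Naive count: terminal punctuation followed by whitespace or end of text.
    sentence_count = len(re.findall(r"[.!?](?=\s|$)", paragraph.strip()))
    if sentence_count != sentences_required:
        errors.append(
            f"opener has {sentence_count} sentences, expected {sentences_required}")
    return errors


print(opener_errors("Too short."))
```

Every other structural rule (paragraph counts per H3, list density, outline drift) can follow the same shape: take extracted text, return a list of failures, and let the gate decide what to do with them.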

This is where teams get leverage. Once the validators exist, they work every time. They also scale: validators don’t get tired at 4:45pm. If you want a template for tool selection and test design, an evaluation checklist for test automation provides a useful mental model.

Enforce Visuals, Links, And Schema With Code Not Taste

Define brand palette, logo usage, and permitted aspect ratios. Generate alt text and SEO-friendly filenames. Match product screenshots to relevant sections using semantic rules. Inject internal links only from a verified sitemap and require anchor text to match page titles exactly. Generate Article, FAQ, and Breadcrumb JSON-LD, validate it, and attach before the post hits your CMS.
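The link rule in particular reduces to a lookup. A minimal sketch, assuming the draft's links have already been extracted as (anchor text, URL) pairs and that the verified sitemap is available as a URL-to-title mapping; the `SITEMAP` contents and example.com URLs are invented for illustration.

```python
# Hypothetical verified sitemap: URL -> page title. In practice this would be
# loaded from your real sitemap plus a title lookup.
SITEMAP = {
    "https://example.com/blog/qa-gate": "Automated QA Gate",
    "https://example.com/blog/schema-basics": "Schema Basics",
}


def link_errors(links: list[tuple[str, str]], sitemap: dict[str, str]) -> list[str]:
    """links holds (anchor_text, url) pairs extracted from the draft."""
    errors = []
    for anchor, url in links:
        title = sitemap.get(url)
        if title is None:
            errors.append(f"unverified URL: {url}")
        elif anchor != title:
            errors.append(f"anchor '{anchor}' should read '{title}'")
    return errors


draft_links = [
    ("Automated QA Gate", "https://example.com/blog/qa-gate"),
    ("schema guide", "https://example.com/blog/schema-basics"),
    ("Pricing", "https://example.com/pricing-2019"),
]
for error in link_errors(draft_links, SITEMAP):
    print(error)
```

Any URL missing from the mapping fails outright, which is what makes a fabricated link a blocking failure instead of a judgment call.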

One interjection. If any of these steps rely on “eyeballing,” you’ll reintroduce drift. Keep the mechanics deterministic; let judgment live in the narrative.

Design Remediation Loops, Retries, And Audit Trails

When a validator fails, trigger an auto-fix if it’s safe: reformatting a paragraph, revalidating schema, or swapping an anchor to match a page title. If not, file a task with failing criteria, suggested fixes, and local context so a human can make the call. After every fix, retry the gate. Keep audit logs of inputs, outputs, QA events, retries, and version history. Send debounced alerts so the team stays informed without noise. For additional structure inspiration, a mandatory checklist for evaluating test automation mirrors the mindset required here.
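The loop itself is small. The sketch below assumes two hypothetical callables: `validate` returns the failing criteria for a draft, and `auto_fix` returns a corrected draft or `None` when no safe fix exists. Filing the task and sending alerts are left out; the returned audit list is where those would hook in.

```python
from typing import Callable, Optional

Validator = Callable[[str], list[str]]     # draft -> failing criteria
AutoFix = Callable[[str], Optional[str]]   # draft -> fixed draft, or None if unsafe


def run_gate(draft: str, validate: Validator, auto_fix: AutoFix,
             max_retries: int = 3) -> dict:
    """Validate, auto-fix when safe, and retry; escalate when fixes run out."""
    audit = []
    failures: list[str] = []
    for attempt in range(max_retries + 1):
        failures = validate(draft)
        audit.append({"attempt": attempt, "failures": failures})
        if not failures:
            return {"status": "pass", "draft": draft, "audit": audit}
        if attempt == max_retries:
            break  # retries exhausted
        fixed = auto_fix(draft)
        if fixed is None or fixed == draft:
            break  # no safe fix available, hand off to a human
        draft = fixed
    return {"status": "escalate", "draft": draft,
            "failures": failures, "audit": audit}


# Toy example: the only rule is "no double spaces", and the fix is safe.
result = run_gate(
    "Hello  world",
    validate=lambda d: ["double space"] if "  " in d else [],
    auto_fix=lambda d: d.replace("  ", " "),
)
print(result["status"], len(result["audit"]))  # pass 2
```

Capping retries matters: without a limit, a fix that never converges would loop forever instead of reaching a human.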

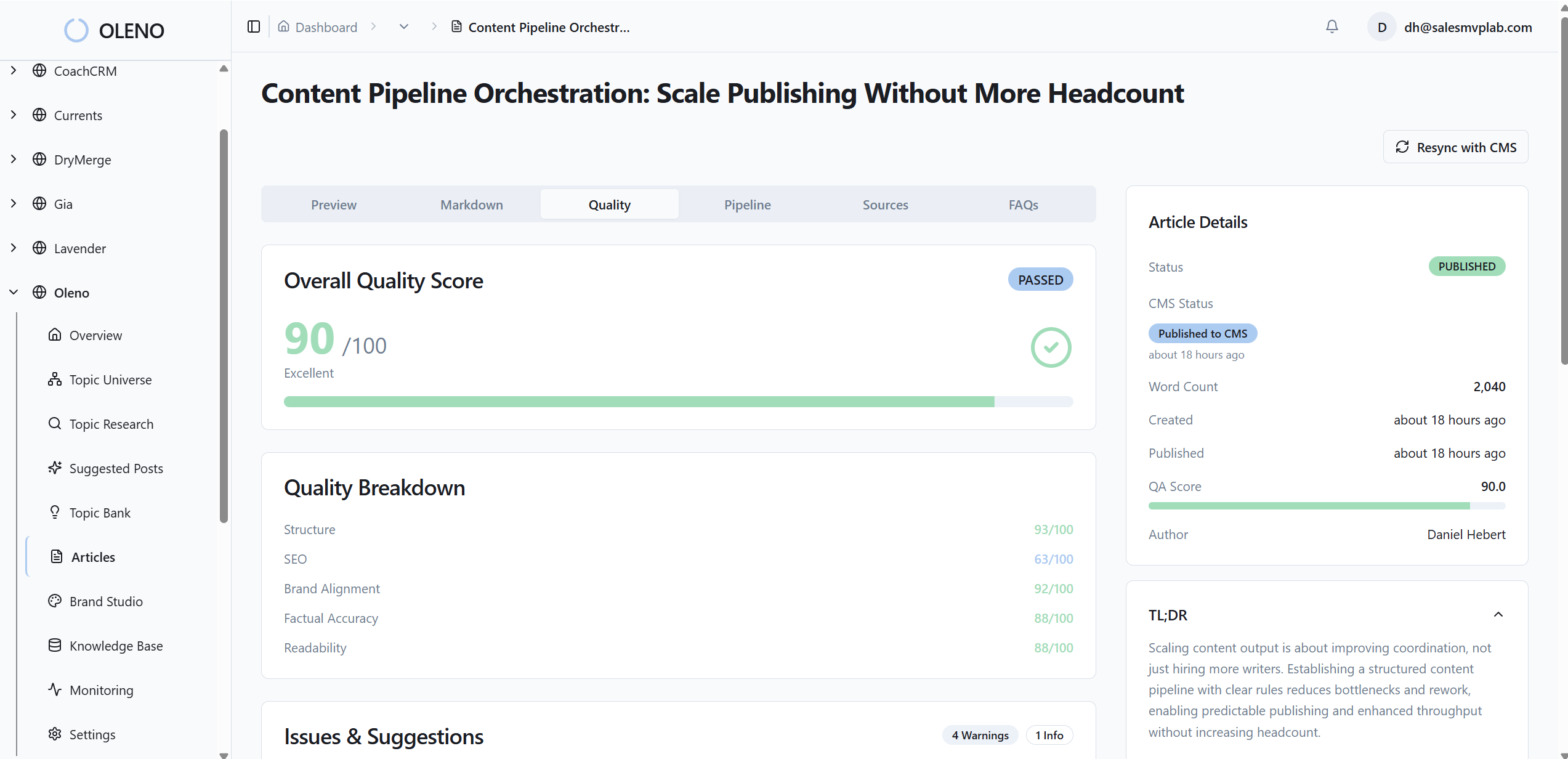

How Oleno Enforces An Automated QA Gate End To End

Oleno runs a closed-loop system that evaluates every draft against 80+ criteria, refines weak areas automatically, and only publishes once thresholds are met. Deterministic internal links, validated schema, and brand-consistent visuals are handled in code, not by handoffs. The outcome isn’t a draft; it’s a publish-ready article delivered to your stack.

80 Plus Criteria With Automated Refinement Loops

Oleno evaluates structure, information gain, brand voice, visuals, links, and schema against a comprehensive rule set. Low-scoring areas trigger refinement loops that rewrite thin sections, normalize tone, and remove AI-sounding language. The system enforces snippet-ready openers and paragraph rhythm, then retests until the passing score is achieved. Drafts that don’t meet the bar don’t move forward.

This approach shifts your team’s effort to narrative and product story, where humans add the most value. The gate handles the repeatable parts, reliably.

Deterministic Internal Links And Schema Generation

Internal links are injected after drafting, and only from your verified sitemap. Because every URL comes from that sitemap and anchor text matches page titles exactly, fabricated URLs are impossible by design. Oleno generates valid JSON-LD for Article, FAQ, and Breadcrumb, validates it, and attaches it as metadata, with no copy-paste errors. Two chronic failure modes, broken links and invalid schema, are removed from the process, not just corrected after the fact.

That predictability is hard to overstate. It protects your eligibility for rich results and keeps crawl equity focused where it should be.

Visual Studio For Brand-Consistent Images And Alt Text

Visuals aren’t bolted on. Oleno’s Visual Studio uses your brand palette, logos, and style references to generate a hero image and 2–3 inline visuals per article, then matches product screenshots to relevant sections using semantic similarity. It writes SEO-friendly alt text and filenames, and enforces aspect ratios and resolution guidelines. Solution sections are prioritized for product visuals where they matter most.

The output looks like your brand every time, without a design handoff. That’s credibility you can feel.

Monitoring And Notifications With Debounced Alerts

Once the gate passes, Oleno prepares CMS-ready HTML and delivers directly to WordPress, Webflow, HubSpot, or even Google Sheets for custom workflows. Duplicate posts are prevented by design. The system sends email notifications for draft ready, publish success, generation failures, and low topic inventory, with debounced alerts to avoid noise. If you prefer to map your “Definition of Done” to events, this is where the process becomes visible and predictable without dashboards.
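If you are building alerts yourself, debouncing can be as simple as remembering when each alert type last went out. This is a generic sketch, not a description of how Oleno implements it; the class name, the event keys, and the five-minute window are assumptions. Strictly speaking it is a leading-edge variant: the first alert goes out immediately and repeats inside the window are dropped.

```python
class DebouncedAlerts:
    """Suppress repeat alerts for the same key inside a quiet window."""

    def __init__(self, window_seconds: float):
        self.window = window_seconds
        self.last_sent: dict[str, float] = {}

    def should_send(self, key: str, now: float) -> bool:
        """Return True and record the send time unless the key is still quiet."""
        last = self.last_sent.get(key)
        if last is not None and now - last < self.window:
            return False
        self.last_sent[key] = now
        return True


alerts = DebouncedAlerts(window_seconds=300)
print(alerts.should_send("generation_failure", now=0))    # True
print(alerts.should_send("generation_failure", now=120))  # False
print(alerts.should_send("generation_failure", now=300))  # True
```

Passing `now` in explicitly keeps the class easy to test; in production you would supply something like `time.monotonic()`.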

If you want to see where this gate sits in an orchestrated pipeline, the mechanics are straightforward: Topic → Brief → Draft → QA → Visuals → Links/Schema → Publish. The system runs daily so you don’t have to babysit it. Try Oleno For Free.

Conclusion

You don’t need more editing. You need a gate. Treat quality as a production decision, not a hallway conversation, and the rework headache fades. Determinism handles links, schema, and visuals; your team focuses on story and strategy. Whether you build it yourself or use Oleno, the outcome is the same: fewer rollbacks, tighter standards, and content that ships ready, every time.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions