Build a Deterministic Demand‑Gen Scheduler with Idempotent Publishing

Stop treating your editorial calendar like a control panel. It is a list of ideas, not a system. If you want deterministic demand-gen that hits dates without heroics, you need jobs, SLAs, and an engine that never guesses. I learned this the hard way: missed publishes, duplicate posts, and last-minute rewrites, all because the calendar looked busy and nothing was actually moving.

When you model demand-gen as schedulable jobs with clear inputs and outputs, the whole vibe changes. You set acceptable latency, define error budgets, and run on queues, not vibes. Then idempotent publishing removes the fear of retries. The result is boring reliability. Which is exactly what you want when the CEO asks why the blog stalled last Tuesday.

Key Takeaways:

- Replace the calendar with queued jobs, SLAs, throughput targets, and error budgets

- Define success in code, not docs, including inputs, preconditions, QA thresholds, and publish criteria

- Use idempotency keys and a publish ledger so retries are safe and duplicates never happen

- Apply backpressure with rate limits and jittered retries to avoid overwhelming your CMS or APIs

- Operate from one source of truth for voice and product facts to stop drift and risky claims

- Runbooks, dashboards, and incident math make risk visible and fixes predictable

Why you must build a deterministic demand-gen scheduler now

Deterministic demand-gen means outputs land on time because the system enforces rules, not because people push harder. You define SLAs, model capacity, and run everything through a queue. When the plan meets reality, SLIs and error budgets guide choices, not opinions. Predictability beats velocity when trust is on the line.

The calendar illusion: why editorial planning fails SLAs

Calendars sequence ideas, not work. A date on a box says nothing about capacity, queue depth, or p95 durations. If you want a schedule you can defend, define throughput targets, acceptable latency, and error budgets up front. Service levels need math, not color coding on a spreadsheet.

I used to stare at a packed calendar and still miss half the publishes. Priorities shifted, approvals slipped, assets arrived late. A queue with SLAs fixes that. You can see backlog growth, you can throttle intake, you can borrow capacity with clear tradeoffs. The SRE Workbook’s guidance on SLOs maps cleanly here and keeps everyone honest.

Deterministic jobs, not vibes: define success criteria

A job should either meet its SLA or trigger a clear fallback. No debates. Put inputs, preconditions, QA thresholds, and publish criteria in code where the engine can enforce them. Capture decision rules for audience, voice, and product boundaries so drafts are right before a human ever looks.
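To make "success criteria in code" concrete, here is a minimal sketch. The `JobSpec` fields, the `decide` function, and the thresholds are hypothetical names chosen for illustration; your own rules will differ.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class JobSpec:
    """Hypothetical job definition; every field name here is illustrative."""
    slug: str
    required_inputs: tuple      # inputs that must be present before work starts
    min_qa_score: int           # QA threshold required to publish
    sla_hours: float            # acceptable latency from enqueue to publish

def decide(spec, inputs, qa_score, elapsed_hours):
    """Return the next action from rules alone, with no human judgment call."""
    missing = [name for name in spec.required_inputs if not inputs.get(name)]
    if missing:
        return "blocked: missing " + ", ".join(missing)
    if elapsed_hours > spec.sla_hours:
        return "fallback"       # SLA breached, so trigger the agreed fallback
    if qa_score < spec.min_qa_score:
        return "revise"         # below the QA threshold, so back to drafting
    return "publish"

spec = JobSpec("idempotent-publishing", ("brief", "approved_claims"), 85, 48.0)
print(decide(spec, {"brief": "outline", "approved_claims": "list"}, 92, 12.0))  # publish
```

The point of the design is that every outcome is one of a small, named set. A reviewer never has to interpret a deck to know what happens next.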

Once jobs carry their own rules, reviews get faster and arguments fade. You remove taste from the loop and focus on outcomes. Most teams fail here. They try to remember rules from a deck. That is fragile. Put the system in charge so rework drops and consistency climbs.

What does idempotent publishing prevent?

Idempotent publishing blocks duplicate posts, race conditions, and broken revisions. The pattern is simple. Generate a stable idempotency key, for example a content hash plus canonical slug, then write to a publish ledger. On retry, return the original result if the key already exists. One intent, one artifact.
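The pattern can be sketched in a few lines. This is an in-memory stand-in, not a production ledger: `PublishLedger`, `publish_once`, and `fake_cms_publish` are made-up names, and a real system would back the ledger with a durable table and a unique constraint on the key so concurrent workers cannot both win.

```python
import hashlib

def idempotency_key(slug, body):
    """Stable key: the same slug and the same content always give the same key."""
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return f"{slug}:{digest}"

class PublishLedger:
    """In-memory stand-in for a durable publish ledger (an assumption)."""

    def __init__(self):
        self._results = {}

    def publish_once(self, slug, body, publish_fn):
        key = idempotency_key(slug, body)
        if key in self._results:
            return self._results[key]    # retry: return the original result
        result = publish_fn(slug, body)  # first attempt: actually publish
        self._results[key] = result
        return result

calls = []

def fake_cms_publish(slug, body):
    """Hypothetical CMS call that records how often it was invoked."""
    calls.append(slug)
    return {"url": f"/blog/{slug}", "revision": len(calls)}

ledger = PublishLedger()
first = ledger.publish_once("idempotent-publishing", "Hello", fake_cms_publish)
second = ledger.publish_once("idempotent-publishing", "Hello", fake_cms_publish)
```

Here `second` is the same result as `first`, and the CMS was called once. Changing the body produces a new key, which is the behavior you want for a genuine revision.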

When you ship under load, retries happen. Networks blip. APIs time out. Without idempotency, a single hiccup can produce two posts, conflicting URLs, or a revision mess. Martin Fowler’s take on idempotence explains the core idea well, and it applies directly here. See Idempotence by Martin Fowler for the principles.

To build a deterministic demand-gen machine, fix these root causes

The symptom is missed dates and messy publishes. The root cause is a broken system. Voice lives in slides, product truth lives in Slack, and workflows bounce across tools with no transaction boundary. You are optimizing for activity, not reliability. Change that, and cadence stabilizes.

No single source of truth for voice and product truth

When voice and product facts scatter across decks and chats, every job becomes guesswork. Writers guess tone. AI guesses claims. Reviewers catch some, miss others, and trust erodes. Centralize voice rules, approved claims, and golden examples so generation and QA resolve against the same truth.

I have seen teams cut review time in half just by unifying voice and product truth. Fewer edits. Fewer risky statements. Fewer “can we say that?” threads. The payoff is huge for small teams. You get the same brain in every draft without adding headcount.

Non-atomic workflows that cross too many tools

Handoffs across docs, sheets, and email break atomicity. You want one transaction that goes from Ready to Publish through QA to Published, or rolls back cleanly. That requires an outbox table or message log so the publish step is replayable with the same inputs and the same outcome.
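A minimal sketch of an outbox, using Python's built-in `sqlite3` with an in-memory database. The table and column names are assumptions for illustration. The idea is that the state change and the publish intent commit together or not at all.

```python
import json
import sqlite3

def open_db():
    """Create hypothetical posts and outbox tables in an in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE posts (slug TEXT PRIMARY KEY, state TEXT NOT NULL)")
    conn.execute(
        "CREATE TABLE outbox ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " slug TEXT NOT NULL,"
        " payload TEXT NOT NULL,"
        " sent INTEGER NOT NULL DEFAULT 0)"
    )
    return conn

def mark_ready_to_publish(conn, slug, payload):
    """Record the state change and the publish intent in one transaction."""
    with conn:  # commits on success, rolls back if anything raises
        conn.execute(
            "INSERT OR REPLACE INTO posts (slug, state) VALUES (?, 'ready')",
            (slug,),
        )
        conn.execute(
            "INSERT INTO outbox (slug, payload) VALUES (?, ?)",
            (slug, json.dumps(payload, sort_keys=True)),
        )

def pending_intents(conn):
    """Return unsent intents in insertion order so replays see the same inputs."""
    rows = conn.execute(
        "SELECT id, slug, payload FROM outbox WHERE sent = 0 ORDER BY id"
    )
    return [(row[0], row[1], json.loads(row[2])) for row in rows]

conn = open_db()
mark_ready_to_publish(conn, "idempotent-publishing", {"title": "Idempotent Publishing"})
```

A separate worker would read `pending_intents`, publish each one, and flip `sent` to 1. Because the payload is stored with the intent, a replay uses exactly the inputs that were recorded.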

Either you can retry safely, or you do not ship. Glue work across tools invites partial states and silent failures. A single system of record with a transactional outbox removes that risk and keeps history clear. Nothing beats being able to say exactly what happened and why, with one log entry.

Fail to build a deterministic demand-gen pipeline, pay these costs

The costs are not vague. You can measure them. Breach risk shows up in queue math. Duplicates waste reach and damage brand. Manual triage burns hours and attention you never get back. Put numbers on it and the decision to fix the system becomes obvious.

The SLA math: from queue depth to breach risk

Breach risk is visible if you do the math. Track arrival rate, worker capacity, and job durations at p50 and p95. As a rule of thumb, if the backlog exceeds your daily arrival rate multiplied by the p95 duration in days, your SLA is in trouble. Alert when the backlog crosses that threshold, then either add workers or cut intake.
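Here is that check as a rough sketch. It assumes durations are expressed in days so the units match a daily arrival rate. The function name, parameters, and example numbers are illustrative, not taken from a real pipeline.

```python
def breach_risk(backlog, arrivals_per_day, p95_days, workers, jobs_per_worker_per_day):
    """Apply the backlog rule of thumb and compare intake with capacity."""
    threshold = arrivals_per_day * p95_days        # jobs you expect in flight
    capacity = workers * jobs_per_worker_per_day   # jobs completed per day
    return {
        "threshold": threshold,
        "at_risk": backlog > threshold,            # backlog past the threshold
        "backlog_growing": arrivals_per_day > capacity,
    }

# Example: 4 new jobs a day, p95 of 2 days, 2 workers finishing 1.5 jobs a day each.
report = breach_risk(backlog=10, arrivals_per_day=4, p95_days=2,
                     workers=2, jobs_per_worker_per_day=1.5)
```

With these numbers the threshold is 8 jobs, the backlog of 10 is past it, and intake (4 a day) exceeds capacity (3 a day), so the backlog keeps growing until you pull a lever.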

Dashboards matter here. Leaders should see risk before it bites. A simple panel with queue depth, p95 duration, and error budget left changes conversations. Folks stop asking “are we on track” and start asking “what lever do we pull.” It defuses blame and focuses the team on flow.

Duplicate publishes create brand damage and SEO waste

Duplicates are not harmless. Subscribers get the same piece twice. Rankings cannibalize themselves. Attribution gets muddy. One duplicate can trigger cleanup across email, social, and the CMS. Add up the hours you lose and compare them to the effort of implementing idempotency and dedupe keys. The fix usually pays for itself within a quarter.

A stable idempotency key solves the root problem. If the CMS sees the same key, it returns the same result. No new artifact. No race condition. No cleanup. Stripe’s guidance on idempotency is the gold standard here, and the pattern translates cleanly to content. Review Stripe’s Idempotency Keys for the mechanics.

What is the cost of manual triage during incidents?

Manual triage burns focus. Someone hunts logs. Someone writes updates. Someone reverses a bad publish. Then a rewrite lands on someone else. Measure it. Track time to root cause, rollback time, and communication overhead. Then build an incident template with an owner, a rollback checklist, and a postmortem that drives hard fixes.

When you run incidents like muscle memory, stress drops. A 20-minute routine replaces a two-hour scramble. The PagerDuty incident response guide lays out a solid baseline. Borrow it, then tailor it to content operations. You will feel the difference within a month.

What running a deterministic scheduler feels like in a small team

Calm. Predictable. Boring in the best way. You move from opinion to metrics. From “who has the file” to “job 18324 is in QA with a 92 score.” Confidence shows up in the little things. Fewer status meetings. Fewer fire drills. More steady throughput without adding people.

Calm ops replace heroics

Work-in-progress is visible, SLAs are predictable, and exceptions are rare. You stop begging for approvals because rules are enforced upstream. When leadership asks for status, you point to a dashboard with throughput, backlog, and quality scores. Folks relax because the system is doing what it promised.

I have lived on both sides. The heroics side burns people out. The calm side compounds trust. You get Saturdays back. You get to plan. You stop fearing Tuesdays because that is “publish day” and the machine has it covered. That is the real win for small teams.

Runbooks that stop firefighting

Write runbooks for the top five failures. CMS timeout. Failed QA score. Asset mismatch. Source link outage. Publish conflict. Each one needs an owner, diagnostic steps, a simple decision tree, and a rollback. Practice them. Repetition turns scary incidents into short, boring tasks anyone can handle.

When folks know exactly what to do, incidents shrink. You also get cleaner postmortems because steps are documented. That creates a steady stream of system fixes that further reduce repeat issues. The loop feeds itself. Reliability creeps up one small change at a time.

How to build a deterministic demand-gen scheduler step by step

You can ship this in weeks, not quarters. Start with core primitives, make the publish path transactional, then put guardrails around retries. Prove idempotency in staging before go-live. Keep payloads small and move large assets out of band. The goal is safe, steady flow.

Choose primitives: FIFO queues, dedupe keys, and an outbox

Start with a FIFO queue for ordering and a dedupe key to prevent double enqueues. Add an outbox or write-ahead log so the publish intent is recorded before execution. Use a dead-letter queue for poison messages. Keep payloads lean. Store assets in object storage and pass references in jobs.

Small details matter. Content-based deduplication protects you from repeated submit clicks. A FIFO queue preserves sequence for related jobs. The outbox pattern ensures retries replay the same inputs. AWS documents these building blocks well. Study AWS SQS FIFO and content-based deduplication before you wire it up.
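To see how these primitives fit together, here is a toy in-memory version. It is a sketch for reasoning about behavior, not a replacement for a managed queue: `FifoQueue` and its methods are invented names, and the dedupe set here never expires, whereas SQS FIFO deduplicates within a 5-minute interval.

```python
import hashlib
import json
from collections import deque

class FifoQueue:
    """Toy FIFO queue with content-based dedupe and a dead-letter list."""

    def __init__(self, max_attempts=3):
        self._items = deque()
        self._seen = set()
        self.dead_letters = []
        self.max_attempts = max_attempts

    @staticmethod
    def dedupe_key(job):
        """Hash the job content so identical submissions share one key."""
        return hashlib.sha256(json.dumps(job, sort_keys=True).encode()).hexdigest()

    def enqueue(self, job):
        key = self.dedupe_key(job)
        if key in self._seen:
            return False                 # repeated submit click: ignore it
        self._seen.add(key)
        self._items.append((job, 0))
        return True

    def process_next(self, handler):
        if not self._items:
            return None
        job, attempts = self._items.popleft()
        try:
            return handler(job)
        except Exception as exc:
            attempts += 1
            if attempts >= self.max_attempts:
                self.dead_letters.append((job, repr(exc)))  # poison message
            else:
                self._items.appendleft((job, attempts))     # keep ordering
            return None

queue = FifoQueue(max_attempts=2)
# Jobs carry a reference to the asset, not the asset itself (hypothetical path).
queue.enqueue({"slug": "post-a", "asset_ref": "assets/post-a/hero.png"})
queue.enqueue({"slug": "post-a", "asset_ref": "assets/post-a/hero.png"})  # deduped
```

A failed job goes back to the front of the queue so related jobs stay in order. After `max_attempts` failures it moves to `dead_letters` with the error attached.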

Design the publish transaction and QA gate

Make publishing transactional. The job drafts, validates voice and product claims, scores quality, then attempts publish only if QA passes. Generate and store an idempotency key, compute a content checksum, and log the intent. If publish succeeds, mark committed. If a step fails, roll back cleanly and return to Ready with context.

You want a single decision point that says “ship” or “retry later.” No partial states. No silent failures. A recorded intent with a stable key makes retries safe and fast. It also gives you a clear audit trail when you need to explain why a piece moved or paused.
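One way to sketch that single decision point is below. The `Job` and `Ledger` classes, the state names, and the assumption that the CMS call accepts an idempotency key are all hypothetical. The QA scorer and CMS client are passed in as plain functions so the flow is easy to test.

```python
import hashlib
from dataclasses import dataclass, field

@dataclass
class Job:
    slug: str
    body: str
    state: str = "ready"
    context: str = ""

@dataclass
class Ledger:
    """In-memory stand-in for a durable intent log (an assumption)."""
    status: dict = field(default_factory=dict)   # key -> intent state
    results: dict = field(default_factory=dict)  # key -> publish result

def run_publish(job, ledger, qa_score_fn, cms_publish_fn, min_score=85):
    """Ship or return to ready with context; never leave a partial state."""
    checksum = hashlib.sha256(job.body.encode("utf-8")).hexdigest()
    key = f"{job.slug}:{checksum}"
    if ledger.status.get(key) == "committed":
        job.state, job.context = "published", ""
        return ledger.results[key]               # retry returns the original
    score = qa_score_fn(job.body)
    if score < min_score:                        # QA gate: no publish attempt
        job.state, job.context = "ready", f"qa_score={score} below {min_score}"
        return None
    ledger.status[key] = "intent"                # log intent before executing
    try:
        result = cms_publish_fn(job.slug, job.body, key)
    except Exception as exc:
        ledger.status[key] = "rolled_back"
        job.state, job.context = "ready", f"publish failed: {exc}"
        return None
    ledger.status[key] = "committed"
    ledger.results[key] = result
    job.state, job.context = "published", ""
    return result
```

Every path ends in either `published` or `ready`, and the `context` field says why a job came back. The ledger keeps the rolled-back intent, which gives you the audit trail.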

Backpressure and retries without pileups

Protect downstream systems with token bucket or leaky bucket rate limits. Implement exponential backoff with jitter for transient errors. Cap retries at a sane number, then route to DLQ with a clear reason code. Expose backpressure metrics and auto-scale workers when backlog crosses thresholds tied to SLAs.
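A minimal sketch of both guardrails follows, assuming a token bucket for rate limiting and "full jitter" backoff (a uniform random delay up to an exponentially growing cap). Class and function names, defaults, and the choice of `TimeoutError` as the transient error are illustrative.

```python
import random
import time

class TokenBucket:
    """Allow short bursts up to `capacity`, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.updated = clock()

    def try_acquire(self):
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False                     # caller should wait: backpressure

def backoff_delay(attempt, base=1.0, cap=60.0, rng=random):
    """Full jitter: uniform between 0 and min(cap, base * 2**attempt)."""
    return rng.uniform(0, min(cap, base * 2 ** attempt))

def call_with_retries(fn, max_attempts=5, sleep=time.sleep, rng=random):
    """Retry transient timeouts, then give up with a reason code for the DLQ."""
    reason = "no attempts made"
    for attempt in range(max_attempts):
        try:
            return ("ok", fn())
        except TimeoutError as exc:
            reason = f"timeout: {exc}"
            if attempt + 1 < max_attempts:
                sleep(backoff_delay(attempt, rng=rng))
    return ("dlq", reason)
```

Jitter matters because workers that fail together otherwise retry together, which recreates the spike that caused the failure. Passing `clock` and `sleep` in as parameters keeps the logic testable without real waiting.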

Prove idempotency before production. Run the same job twice with identical inputs in staging. Verify only one publish occurs and the second call returns the original result. Intentionally corrupt one step to confirm rollback. Log everything. These tests save you from painful surprises later. Confluent’s work on idempotent producers is a helpful reference. See Confluent on exactly-once semantics.

Ready to cut missed publishes by 90% while keeping cadence predictable? Request a Demo

How Oleno automates a deterministic demand-gen scheduler end to end

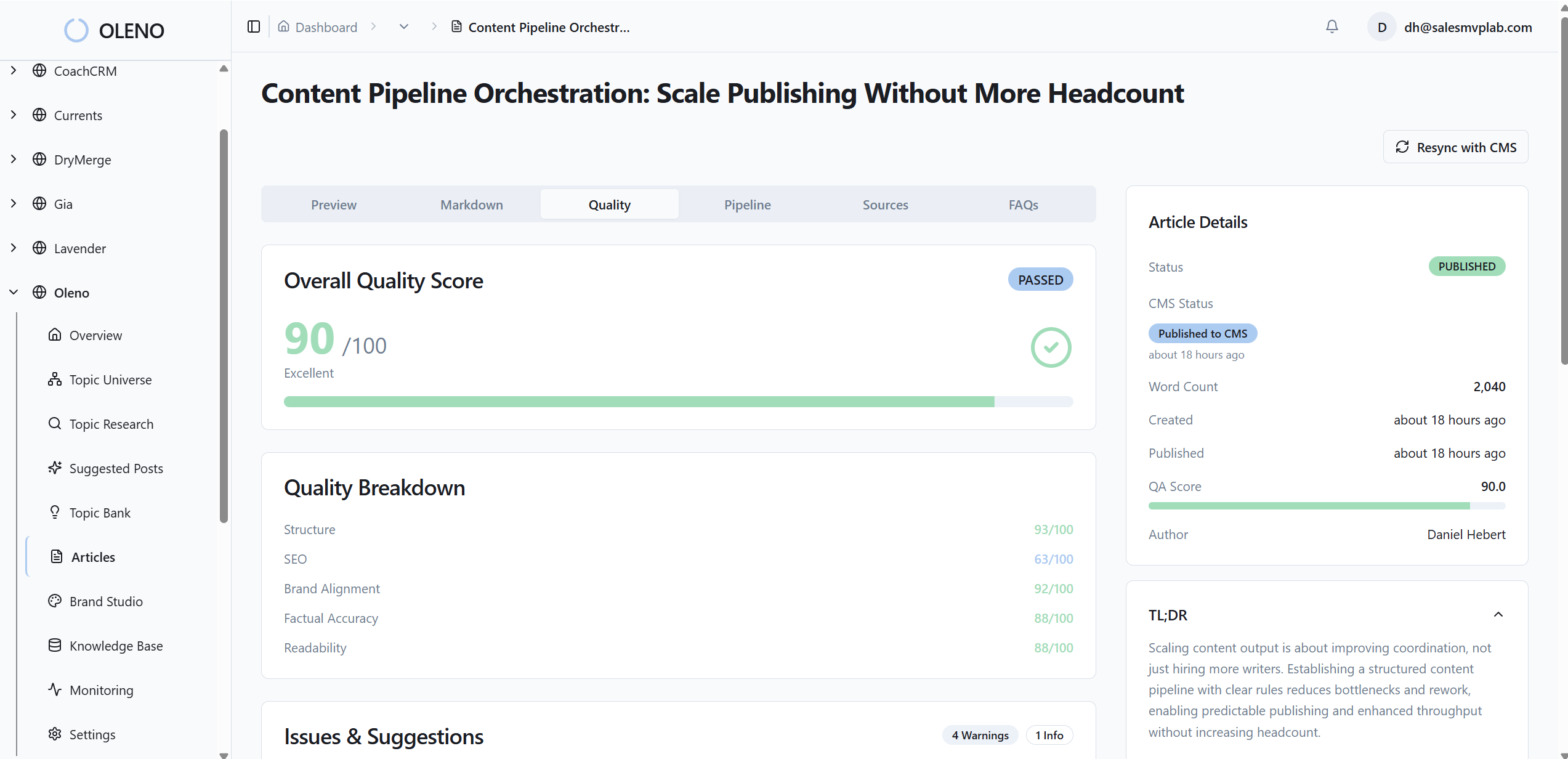

Oleno runs demand-gen like a system. Governance codifies voice and product truth. Jobs move through queues with QA gates. CMS adapters publish with idempotency keys and checksums. Observability tracks throughput, durations, and pass rates so you see risk early and stay inside error budgets.

Execution engine: jobs, queues, QA gates, and CMS adapters

Oleno turns briefs into queued jobs, validates voice and approved claims against your rules, and scores quality before any publish attempt. The engine then publishes through CMS adapters using stable idempotency keys and content checksums. A publish ledger records attempts and outcomes so retries return the original result instead of creating duplicates.

In practice, that means what used to take hours across tools now completes in minutes. No duplicate URLs. No race conditions. No mystery edits. Just one intent mapped to one artifact with a recorded history. When a transient error appears, Oleno retries safely and moves on. The flow keeps moving without human babysitting.

Observability and SLAs you can trust

Oleno exposes throughput, backlog, p95 durations, and QA pass rates on a live dashboard. Alerts tie to error budgets, not gut feel. If backlog growth threatens an SLA, you see it and act, either by adding capacity or reducing intake. Most transient failures self-heal via controlled retries. The ones that do not self-heal land, with a clear reason, in a queue your runbooks already cover.

This is where the costs you saw earlier get paid back. Duplicate cleanup disappears. Manual triage drops to a measured routine. Leadership questions get quick, clean answers with numbers. And your team gets a calm, predictable cadence that compounds.

3-minute QA checks and safe retries. That is what Oleno delivers. Request a Demo

Conclusion

You can build a deterministic demand-gen scheduler without a big team. Treat cadence like SLAs, not calendar slots. Define jobs with clear inputs and publish criteria. Make the publish path transactional and idempotent. Apply backpressure so you never overwhelm downstream systems. Then run it with dashboards and runbooks that remove drama.

In my experience, teams see the change fast. Within 6 to 8 weeks, missed publishes drop by about 90 percent, duplicates vanish, and manual coordination shrinks by 5 to 10 hours a week. The best part: trust returns. Folks stop guessing because the system tells the truth in real time.

Oleno’s idempotent CMS adapters and publish ledger handle retries automatically, so you keep cadence without cleanup. If that is the reliability you want, book a demo.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I have worked in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions