Build a Retrieval-Augmented KB for Factual AI Writing

Most teams ask the model to be smarter. The model’s fine. The problem is your knowledge. If your ground truth lives in ad hoc docs, wikis with conflicting versions, and random Notion pages, you’re feeding the system a moving target. You don’t get factual writing from clever prompts. You get it from a retrieval-ready knowledge base with rules.

I learned this the hard way running lean teams. At PostBeyond, I could write quickly because I carried the “true” product story in my head. When I handed it off, the facts got fuzzy. Not because the writers were bad. Because the source wasn’t governed. Same thing later: videos transcribed into posts were fast, but structure, citations, and search intent weren’t encoded upstream. We fixed output by fixing inputs.

Key Takeaways:

- Treat your KB like a product: canonical sources, versions, and deprecations

- Build hybrid retrieval (BM25 + embeddings) with reranking and metadata filters

- Redesign the KB when the same claims keep breaking, not the prompts

- Add QA hooks that block publish when citations or provenance are missing

- Log retrieval sets and versions so you can answer “where did this come from?”

- Use snippet-ready structure to reduce editorial lift and improve citation clarity

Ready to skip the theory and see a governed pipeline work end to end? Try Oleno For Free.

Why Your KB Design, Not The Model, Drives Factual Output

Most factual issues trace back to vague or conflicting sources, not weak models. A governed KB provides stable truth, version tags, and clear provenance so retrieval returns the right passages consistently. Think of a pricing change: the model doesn’t guess; the KB points to the one page you trust.

Most teams treat knowledge as prompt context, not a governed source

If you paste docs into prompts, you’ll get variability, drift, and frantic edits later. Prompts are direction, not governance. When you design the KB with canonical sources, version tags, and deprecation rules, your “truth” stops changing mid-sentence. Retrieval becomes a repeatable entry point instead of a coin toss.

Here’s the shift: treat knowledge like a product. Give it ownership. Track lineage. Audit what enters and exits. We did this on content at scale years ago by enforcing structure before writing—same idea here. You’ll still tune prompts for tone and format. But the KB carries the weight, not the model. That’s the point.

What is a retrieval-augmented KB and why now?

A retrieval-augmented KB is a curated corpus the LLM queries at generation time, limited to sources you’ll stand behind. It’s indexed for high recall, labeled with metadata, and paired with prompts that constrain answers to retrieved passages. You’re not hoping for memory. You’re asserting ground truth.

Why now? Because content ops need predictability across topics and weeks. Models change, prompts evolve, and teams rotate. Retrieval stabilizes output when everything else moves. Research summarizing RAG patterns shows improved factuality when answers are limited to evidence slices, especially with freshness and source constraints built in. See the empirical findings on RAG efficacy for a broad overview of what actually moves accuracy.

Why hybrid retrieval beats pure semantic search for content ops

Dense vectors capture meaning. BM25 catches exact tokens—feature names, SKUs, product nouns. You probably need both. Hybrid retrieval lifts recall on domain terms while reducing semantic false positives. Then reranking narrows to the most precise spans. That’s the pragmatic default for factual drafts.

In practice, the stack looks simple: lexical + embeddings → rerank on recency and canonical flags. You can weight by document type (specs over marketing pages) and filter by version. Many teams start with “just embeddings.” They hit edge cases immediately. Hybrid gives you smarter recall without sacrificing exactness. It’s the middle path for busy teams.
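Here's a minimal sketch of that stack in Python. It assumes you already have two ranked lists of doc IDs, one from a BM25 index and one from an embedding index. The source doesn't name a fusion method, so this uses reciprocal rank fusion (RRF), a common choice. A small rules pass then nudges canonical docs upward. `hybrid_rank`, the `k=60` constant, and the boost value are illustrative assumptions, not tuned settings.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse several ranked lists of doc IDs with RRF: score = sum of 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return dict(scores)


def hybrid_rank(bm25_ids, dense_ids, metadata, canonical_boost=0.01):
    """Fuse lexical and dense results, then add a fixed boost for canonical docs.

    `metadata` maps doc_id -> dict with a boolean "canonical" field (hypothetical schema).
    """
    fused = reciprocal_rank_fusion([bm25_ids, dense_ids])
    for doc_id in fused:
        if metadata.get(doc_id, {}).get("canonical"):
            fused[doc_id] += canonical_boost
    # Highest fused score first; ties keep their first-seen order.
    return sorted(fused, key=fused.get, reverse=True)


if __name__ == "__main__":
    bm25 = ["pricing-2023", "pricing-2024", "blog-launch"]
    dense = ["blog-launch", "pricing-2024", "faq"]
    print(hybrid_rank(bm25, dense, {"pricing-2024": {"canonical": True}}))
```

With these toy inputs, plain RRF ranks `blog-launch` first by a hair. The canonical boost lifts `pricing-2024` above it, which is exactly the kind of tie-break you want on a pricing question.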

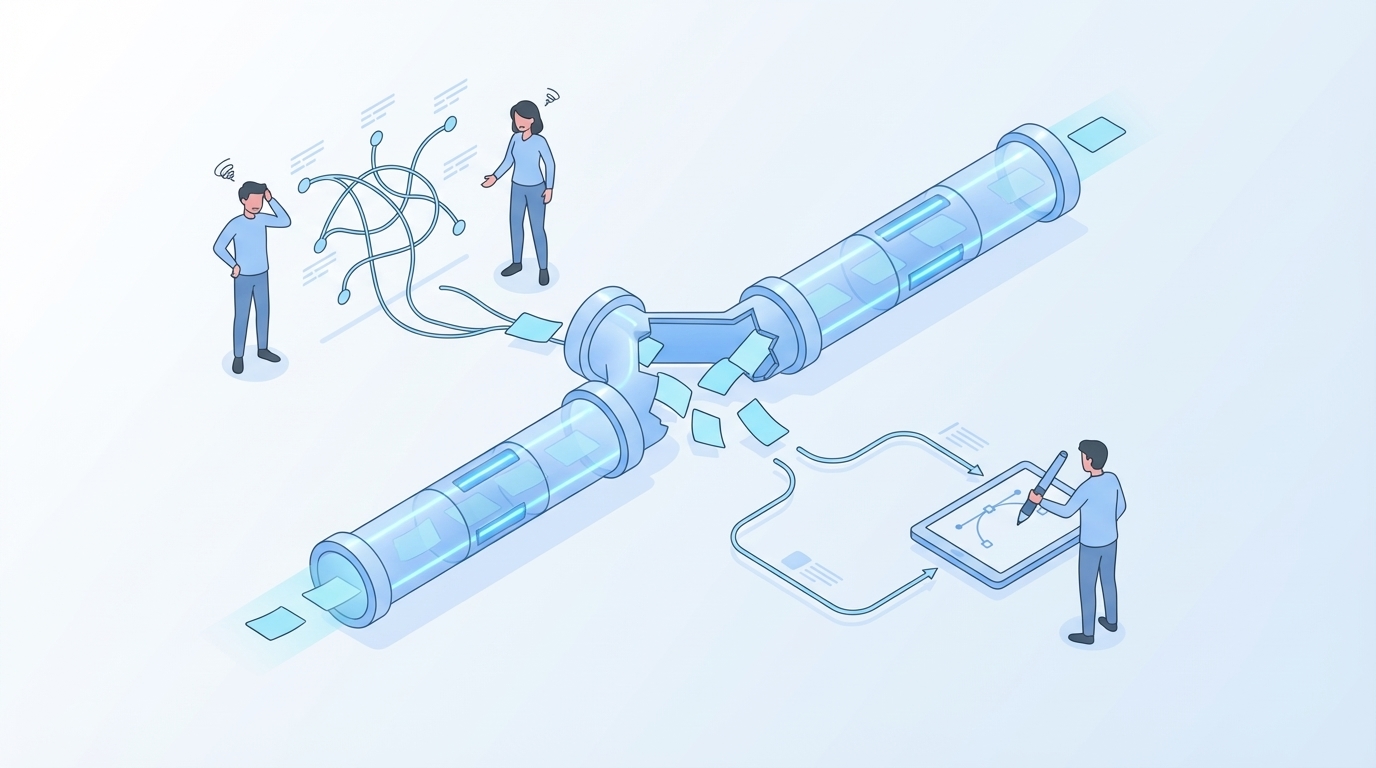

The Operational Root Causes Behind Hallucinated Drafts

Hallucinations rarely start in generation; they start at ingestion. Without canonical sources, version indicators, and clear lineage, your retrieval system will surface contradictory passages. The fix is upstream: curate, tag, deprecate, and make provenance obvious to both machines and editors. Then generation can be strict.

What traditional approaches miss in KB design

Most teams collect everything and call it a “knowledge base.” That’s an archive, not a KB. When two pages disagree and neither is marked canonical, retrieval picks wrong truths. Sometimes the old page is simply written better, so it scores higher in retrieval than the current one. That’s how “facts” drift without anyone noticing.

Fix it at ingestion. Set canonical sources per topic. Add version metadata and freshness windows. Deprecate stale docs so they’re excluded from recall. Also surface provenance in the draft—the editor should see which source drove which claim. Studies surveying RAG pipelines note the outsized impact of source selection and curation on factual output. Governance isn’t overhead. It’s accuracy.

The hidden complexity across ingestion and versioning

Scraping is the easy part. The real work is parsing structure, extracting metadata, deduplicating near copies, and tracking versions over time. If you skip that, your embeddings will index noise and your reranker will fight contradictions it can’t resolve. That’s not a model problem. It’s a pipeline problem.

Build an ingestion layer that emits stable IDs, doc types, product areas, and version fields. Include effective_date and a canonical flag. Store checksums so you can detect silent edits. Index metadata alongside the text so filters and reranking rules can do the heavy lifting. For a sense of why this matters, see work on data curation effects—garbage in, fuzzy out still applies.
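A sketch of what one ingested record might look like, with field names taken from the paragraph above. `DocRecord` and `detect_silent_edit` are hypothetical names. The "silent edit" rule shown here is one reasonable definition: the text changed, but nobody bumped the version.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DocRecord:
    """One ingested document; fields mirror the ones suggested in the text."""
    doc_id: str           # stable ID that survives re-ingestion
    doc_type: str         # e.g. "spec", "pricing", "marketing"
    product_area: str
    version: str
    effective_date: date
    canonical: bool
    text: str

    @property
    def checksum(self) -> str:
        """SHA-256 of the body text, stored for audit and change detection."""
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()


def detect_silent_edit(previous: DocRecord, current: DocRecord) -> bool:
    """True when the text changed but the version field did not."""
    return previous.checksum != current.checksum and previous.version == current.version
```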

When should you redesign the KB instead of tuning prompts?

Simple test. When editors fix the same claim twice. When retrieval keeps returning outdated SKUs. When latency spikes because your semantic index is bloated with duplicates. Stop tweaking prompt language. Redesign the KB. It’s the only lever that changes the evidence the model is allowed to cite.

Where do you start? Canonicalize sources, prune weak content, revise chunking boundaries, and fix metadata gaps. Prompts can constrain tone and instruct “cite or say you don’t know,” which helps. They can’t recover missing or conflicting ground truth. Policy beats prompts when the truth is messy.

The Hidden Costs Of A Weak KB In Production

Weak KBs burn time, budget, and credibility. The costs show up as verification loops, rework after publication, and avoidable risk in regulated categories. You feel it in hours lost, not just in abstract “accuracy.” Quantify the drag, then make the KB the fix. That’s how you get buy-in.

Engineering hours lost to manual fact checks

Let’s pretend you publish 30 articles a month. Each one gets 30 minutes of SME pings and doc digging to confirm features and pricing. That’s 15 hours monthly before edits. Multiply by revisions and you end up funding an engineer to read docs, not build product. It’s slow and it’s demoralizing.

A tighter KB reduces this overhead. Not to zero, since products change and nuance shifts, but enough to feel it in cycle time. Research reviewing RAG efficacy points to lower verification burden when drafts are grounded with constrained retrieval and visible provenance. It’s not fancy. It’s just fewer back-and-forths.

The rework tax from hallucinated claims

One wrong claim bounces through Slack, legal, design, and publishing. You rewrite copy, update screenshots, and republish. If that takes two hours per article across ten percent of your output, you’ve just burned six hours a month on cleanup. Small on paper. Big when the calendar’s packed.
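If you want to rerun the back-of-envelope math from these two scenarios with your own numbers, it fits in one small function. The inputs below are the hypothetical figures from the text: 30 articles, 30 minutes of checking each, and 2 hours of rework on 10% of them.

```python
def monthly_hours(articles: int, check_minutes: float, rework_share: float,
                  rework_hours: float) -> tuple[float, float]:
    """Return (verification_hours, rework_hours) per month."""
    verification = articles * check_minutes / 60       # minutes of checking -> hours
    rework = articles * rework_share * rework_hours    # only articles that shipped a bad claim
    return verification, rework


verification, rework = monthly_hours(articles=30, check_minutes=30, rework_share=0.10, rework_hours=2)
print(f"verification: {verification:.1f} h, rework: {rework:.1f} h, total: {verification + rework:.1f} h")
# -> verification: 15.0 h, rework: 6.0 h, total: 21.0 h
```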

The solution isn’t hero editing. It’s upstream constraints. Govern the KB. Use strict prompts like “answer only from citations or say you don’t know.” Add QA checks that block publish on missing citations. This doesn’t eliminate rework, but it reduces the messy kind—the kind that resets timelines.

The compounding risk in regulated content

If you publish in finance or health, sloppiness isn’t just a headache. It’s risk. No one wants an enforcement letter because retrieval surfaced an outdated claim. Versioned sources, visible citations, and audit logs create traceability. You can answer who changed what, and when, for any paragraph.

That traceability matters. It lowers organizational anxiety and speeds approvals. Reviews of applied RAG in sensitive settings discuss how governance and provenance support safer deployment, especially when combined with conservative prompting. Add compliance partners early. Then design the KB so it answers their questions by default. That’s how you ship faster without crossing lines. See an overview of governance in sensitive domains for patterns worth adopting.

The Human Side Of Broken Grounding

Bad grounding doesn’t just waste time—it frays trust. People stop believing the system and start rewriting by hand. The fix isn’t telling folks to try harder. It’s changing the pipeline so they’re not set up to fail. Clear sources, visible citations, and predictable handoffs make teams breathe again.

The 3am fix you should not ship again

We’ve all patched a post after a customer email. It’s stressful. It erodes credibility. The root cause is upstream: two docs disagreed, retrieval pulled the wrong one, and no one saw it before publish. You need guardrails so 3am never happens in the first place.

That means canonical sources, strict retrieval templates, and QA that blocks publish when evidence is missing. Use prompts that say “do not speculate” and “cite the source or decline.” Wire logs so you can see which passages were used. You’ll still miss occasionally. But not at 3am. And not for silent contradictions.

When your SME calls out a wrong claim in Slack

It stings. Use it. Capture the correction in the KB, bump the doc version, reindex, and add a regression test for the query that surfaced the wrong claim. That’s how you turn a bad moment into a fix the system remembers. No shame, just governance.

Operationally, treat it like a bug. Tag the failing query. Store the expected passage. Add a rule to reward the canonical doc in reranking. Next time, the system returns the right slice. That’s progress. Not perfect, but measurable.
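Treating it like a bug can be as simple as a list of stored cases checked on every reindex. The sketch below assumes a hypothetical `RetrievalRegression` record and a `retrieve` callable that returns ranked doc IDs. It doesn't depend on any particular search library.

```python
from dataclasses import dataclass


@dataclass
class RetrievalRegression:
    """A failing query captured from an SME correction (hypothetical structure)."""
    query: str
    expected_doc_id: str
    top_k: int = 3


def run_regressions(cases, retrieve):
    """Return the cases whose expected doc is missing from the top-k results.

    `retrieve` is any callable mapping a query string to a ranked list of doc IDs.
    """
    failures = []
    for case in cases:
        results = retrieve(case.query)[: case.top_k]
        if case.expected_doc_id not in results:
            failures.append(case)
    return failures
```

An empty return means every captured correction still holds. A non-empty one is your cue to adjust reranking rules before anything ships.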

What does good feel like for writers and editors?

Good feels… predictable. Writers see retrieval snippets that line up with claims. Editors get inline citations and a short list of open questions. SMEs review small, specific changes, not vague rewrites. Publishing turns into a handoff, not a chase.

There’s a side effect: teams get bolder with thought leadership because the foundation is solid. You can argue a point while staying factual on product truths. Section by section, the KB lends confidence. That confidence compounds.

Still dealing with fact checks that never end? See what a governed pipeline feels like in practice. Try Generating 3 Free Test Articles Now.

A Practical Build For A Retrieval-Augmented KB

A practical build scopes trusted sources, adds governance, then encodes that structure into ingestion, indexing, and prompts. Start small with canonical docs and versioning. Layer hybrid retrieval, reranking, and QA hooks. You’re building a system that makes the right answer likely, not guaranteed.

Define scope, canonical sources, and governance

Start with a short list of sources you trust: product specs, pricing, PRDs, release notes. Mark one canonical per topic and assign an owner. Add version tags and deprecation rules. Then set rules for what never enters the KB: opinionated FAQs, outdated pitch decks, ambiguous field notes.

Governance reduces noise at index time and ambiguity at generation time. Keep it light but strict. Owners approve changes. Deprecations remove recall eligibility. A weekly sweep catches orphans. If you prefer templates, encode the metadata you’ll need later: doc_type, product_area, effective_date, and canonical flag.

You don’t need a committee. You need rules.
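Those rules can live in code. Here's a minimal sketch, assuming each doc is a dict with hypothetical `owner`, `deprecated`, and `doc_type` fields. Requiring an owner for recall eligibility is an assumption here. The excluded type labels are made up to stand in for opinionated FAQs, pitch decks, and field notes.

```python
# Hypothetical labels for content that never enters the KB.
EXCLUDED_TYPES = {"opinion_faq", "pitch_deck", "field_note"}


def recall_eligible(doc: dict) -> bool:
    """A doc is retrievable only if it has an owner, isn't deprecated, and is an allowed type."""
    return (
        bool(doc.get("owner"))
        and not doc.get("deprecated", False)
        and doc.get("doc_type") not in EXCLUDED_TYPES
    )


def weekly_sweep(docs: list[dict]) -> list[str]:
    """Return IDs of orphaned docs (no owner) so someone can claim or deprecate them."""
    return [d["doc_id"] for d in docs if not d.get("owner")]
```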

Ingest, parse, and version with metadata that matters

Automate scraping and parsing. Extract headings, tables, callouts, and frontmatter. Emit fields like doc_type, product_area, effective_date, version, canonical, and source_url. Deduplicate by stable IDs and shingled similarity. Store checksums for audit. This is boring work. It creates magical stability later.
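Shingled similarity sounds fancier than it is. The sketch below uses word-level shingles and Jaccard similarity, keeping the first doc of each near-duplicate cluster. The shingle size `k=5` and `threshold=0.8` are illustrative assumptions. At real scale you'd likely switch to MinHash rather than comparing every pair.

```python
def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Word-level k-shingles (overlapping runs of k words), lowercased."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i : i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity len(a & b) / len(a | b); 0.0 when both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def dedupe(docs: dict[str, str], threshold: float = 0.8, k: int = 5) -> list[str]:
    """Keep the first doc of each near-duplicate group; return kept IDs in input order."""
    kept: list[tuple[str, set]] = []
    for doc_id, text in docs.items():
        sig = shingles(text, k)
        if all(jaccard(sig, other) < threshold for _, other in kept):
            kept.append((doc_id, sig))
    return [doc_id for doc_id, _ in kept]
```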

Index metadata alongside text, not after. Retrieval and reranking can filter aggressively when the signals are present: “prefer canonical=true,” “exclude effective_date < last_cutover,” “rank specs over marketing.” It’s amazing how many accuracy issues vanish when you give the system simple rules to follow.
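Those three rules translate almost word for word into a filter plus a sort. This sketch assumes candidates arrive as dicts with `canonical`, `doc_type`, and `effective_date` fields. The `DOC_TYPE_PRIORITY` table is a hypothetical ordering you'd set yourself.

```python
from datetime import date

# Hypothetical priority table: lower numbers rank first ("specs over marketing").
DOC_TYPE_PRIORITY = {"spec": 0, "pricing": 0, "release_notes": 1, "marketing": 2}


def apply_metadata_rules(candidates: list[dict], last_cutover: date) -> list[dict]:
    """Drop docs effective before the last cutover, then order canonical-first by doc type.

    Python's sort is stable, so ties keep the order retrieval returned them in.
    """
    fresh = [c for c in candidates if c["effective_date"] >= last_cutover]
    return sorted(
        fresh,
        key=lambda c: (
            not c["canonical"],                         # canonical=True sorts first
            DOC_TYPE_PRIORITY.get(c["doc_type"], 99),   # unknown types go last
        ),
    )
```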

For more on preprocessing patterns that move the needle, see this survey of ingestion and curation techniques.

Embeddings, chunking, and metadata-aware vectors

Pick an embedding model that fits your domain and latency budget. Chunk by semantic boundaries—200 to 500 tokens—with small overlaps to preserve context. Embed content plus key metadata (product name, version, type) so domain terms are first-class signals. You’re not gaming keywords; you’re making claims retrievable.
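A simplified sketch of windowed chunking with overlap, plus a metadata prefix before embedding. Two assumptions keep it short. Whitespace-separated words stand in for model tokens, and fixed windows stand in for true semantic boundaries. A production chunker would use the embedding model's tokenizer and split on headings or paragraphs first. `chunk_words` and `with_metadata` are hypothetical names.

```python
def chunk_words(text: str, max_tokens: int = 400, overlap: int = 40) -> list[str]:
    """Split text into windows of at most `max_tokens` words, overlapping by `overlap` words."""
    if overlap >= max_tokens:
        raise ValueError("overlap must be smaller than max_tokens")
    words = text.split()
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start : start + max_tokens]))
        if start + max_tokens >= len(words):
            break  # this window already reached the end of the text
    return chunks


def with_metadata(chunk: str, product: str, version: str, doc_type: str) -> str:
    """Prefix a chunk with key metadata so the embedding sees domain terms directly."""
    return f"[product: {product} | version: {version} | type: {doc_type}]\n{chunk}"
```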

Then test recall on tricky queries: legacy feature names, SKU variations, near-duplicate copy. Measure whether hybrid retrieval pulls the right source and whether reranking lifts freshness and canonical flags. If your chunks drift, reduce size or improve boundary detection. Chunking errors look like “almost right” answers.
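One way to make "measure whether hybrid retrieval pulls the right source" concrete is a hit rate at k. This is the share of tricky queries where at least one known-correct doc shows up in the top k. It's a stricter cousin of what's often loosely called recall@k. The eval set and `retrieve` callable here are assumptions you'd supply, so you can run the same set against a dense-only and a hybrid retriever and compare.

```python
def hit_rate_at_k(eval_set: dict[str, set[str]], retrieve, k: int = 5) -> float:
    """Fraction of queries whose top-k results include at least one relevant doc ID.

    `eval_set` maps a tricky query (legacy name, SKU variant, ...) to the doc IDs
    that should answer it; `retrieve` returns a ranked list of doc IDs.
    """
    if not eval_set:
        return 0.0
    hits = sum(
        1 for query, relevant in eval_set.items()
        if relevant & set(retrieve(query)[:k])
    )
    return hits / len(eval_set)
```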

Retrieval, reranking, and grounded prompts with QA hooks

Run hybrid search: BM25 plus embeddings. Rerank with either a lightweight learned model or a simple rules stack that rewards recency, canonical flags, and doc_type priority. Limit generation strictly to retrieved spans. Prompts should say: “Answer only from these citations. If uncertain, say you don’t know.”
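Limiting generation to retrieved spans starts with how the prompt is assembled. This sketch numbers each passage and tags it with its doc ID and version so every claim has something to cite. The instruction line is the one quoted above. The passage dict shape and the `[n]` citation convention are assumptions.

```python
def build_grounded_prompt(question: str, passages: list[dict]) -> str:
    """Assemble a prompt that restricts the model to numbered, retrieved passages.

    Each passage is a dict with "doc_id", "version", and "text" (hypothetical schema).
    """
    cited = "\n\n".join(
        f"[{i}] ({p['doc_id']}, {p['version']})\n{p['text']}"
        for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer only from these citations. If uncertain, say you don't know.\n"
        "Cite passages by their bracketed number, e.g. [1].\n\n"
        f"Citations:\n{cited}\n\n"
        f"Question: {question}\n"
    )
```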

Log retrieval sets and outputs. Wire QA to check for missing citations, outdated versions, and tone mismatches. Block publish if checks fail. This is where ops discipline creates creative freedom—writers can focus on story knowing the structure will keep them out of trouble. For a concise overview of retrieval templates and reranking, see this overview of RAG best practices.
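A minimal publish gate covering two of those checks: missing citations and outdated versions. It assumes drafts cite passages as `[n]`, matching a numbered retrieval set, and that you can look up each doc's current version. Tone checks are left out. This sketch also treats every paragraph as a claim, which is stricter than most teams need. `qa_gate` is a hypothetical name.

```python
import re

CITATION = re.compile(r"\[(\d+)\]")


def qa_gate(draft_paragraphs: list[str], passages: list[dict],
            current_versions: dict[str, str]) -> list[str]:
    """Return blocking issues; an empty list means the draft may publish."""
    issues = []
    for n, para in enumerate(draft_paragraphs, start=1):
        refs = [int(m) for m in CITATION.findall(para)]
        if not refs:
            issues.append(f"paragraph {n}: no citation")
        for ref in refs:
            if not 1 <= ref <= len(passages):
                issues.append(f"paragraph {n}: citation [{ref}] not in retrieval set")
                continue
            p = passages[ref - 1]
            if current_versions.get(p["doc_id"]) != p["version"]:
                issues.append(f"paragraph {n}: [{ref}] cites outdated {p['doc_id']} {p['version']}")
    return issues
```

Wire the return value to your publish step: any issue blocks it, and the messages tell the editor exactly which paragraph to fix.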

How Oleno Implements KB-Grounded Content Operations

Oleno operationalizes this approach by making the KB the first-class input across the pipeline. Facts are injected during drafting, structure is enforced for clarity, and code handles the accuracy-sensitive parts—links, schema, and publishing. The result is fewer fact checks, fewer rewrites, and clearer provenance without adding headcount.

KB-first drafting and fact anchoring

Oleno processes your Knowledge Base, embeds it, and injects facts during drafting so each section writes against the same truth. Because the KB is present at every stage, phrasing around product details stays consistent, and drift is reduced. You’ll notice it in fewer SME interrupts and faster approvals.

This isn’t a “paste into prompt” trick. It’s a governed flow: topic → brief with research → draft grounded in the KB → QA enforcing accuracy. The KB’s job is to stabilize claims; the pipeline’s job is to make that stability automatic. Oleno does both.

Deterministic internal links and schema

After drafting, Oleno injects internal links from your verified sitemap and generates JSON-LD programmatically. There’s no LLM guessing. Link anchors match page titles, and schema is validated before delivery. These code-based steps improve citation clarity for both search and assistants, which also cuts manual cleanup.
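To show what "code, not the model" means for these two steps, here is a generic sketch. It is not Oleno's implementation. The JSON-LD is built from known fields using standard schema.org `Article` properties. The link helper only links to URLs present in a verified sitemap, assumed here to be a dict of URL to page title, and fails loudly otherwise.

```python
import json


def article_jsonld(headline: str, author: str, date_published: str, url: str) -> str:
    """Build schema.org Article JSON-LD from known fields; nothing is model-generated."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


def internal_link(url: str, sitemap: dict[str, str]) -> str:
    """Return a Markdown link whose anchor text is the page title from the sitemap.

    Raises KeyError for URLs that aren't in the sitemap instead of guessing.
    """
    title = sitemap[url]
    return f"[{title}]({url})"
```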

Determinism matters here. Accuracy lives in code, not probability. When links and schema follow rules, editors stop fixing the same structural issues every time. That’s real time back in your week.

Snippet-ready sections that reduce editorial lift

Every H2 opens with a 40–60 word, three-sentence paragraph designed to stand alone: direct answer, key context, specific example. This structure is validated during QA, which means editors spend their time on nuance, not rearranging paragraphs for clarity. It also increases eligibility for featured snippets and AI citations.
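A rule like this is easy to check mechanically. The sketch below is a generic illustration, not Oleno's validator. It counts whitespace words and splits sentences with a naive regex, so abbreviations ending in a period would throw it off. The 40 to 60 word and three-sentence limits come from the paragraph above.

```python
import re


def is_snippet_ready(paragraph: str, min_words: int = 40, max_words: int = 60,
                     sentences: int = 3) -> bool:
    """Check the opening-paragraph rule: word count within bounds and exact sentence count.

    Sentences are split on ., !, or ? followed by whitespace or end of text.
    """
    words = paragraph.split()
    parts = [s for s in re.split(r"[.!?](?:\s+|$)", paragraph.strip()) if s.strip()]
    return min_words <= len(words) <= max_words and len(parts) == sentences
```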

You’ll feel this in cycle time. Drafts arrive organized. Review focuses on what the piece argues, not how it’s stitched together. It’s a better use of scarce attention.

Auditable logs across retrieval and QA

Oleno keeps system-level logs for KB retrieval events, QA scoring, retries, and version history. You can trace where a claim came from and why it passed. It’s not a performance analytics suite—it’s operational traceability so you can debug and improve without guessing.
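If you're building this yourself, a retrieval log doesn't need to be elaborate to answer "where did this come from?" Here is a generic JSON-lines sketch, not Oleno's log format. Each event records which doc versions grounded a draft. You'd append each line to an append-only file and filter by draft later. Function and field names are hypothetical.

```python
import json
from datetime import datetime, timezone


def retrieval_event(draft_id: str, query: str, passages: list[dict]) -> str:
    """Serialize one retrieval event as a JSON line: which doc versions grounded a draft."""
    return json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "draft_id": draft_id,
        "query": query,
        "retrieved": [{"doc_id": p["doc_id"], "version": p["version"]} for p in passages],
    })


def trace(log_lines, draft_id: str) -> list[dict]:
    """Answer 'where did this come from?' by filtering a JSON-lines log for one draft."""
    return [e for e in map(json.loads, log_lines) if e["draft_id"] == draft_id]
```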

Tie this back to your costs. Those 15+ hours of monthly fact checks and the rework tax? They drop when you can see and fix root causes in the pipeline. That’s the change that compounds. If you want to put this to work without building it all in-house, Oleno is built to run it for you: KB-first drafting, snippet-ready structure, deterministic links and schema, and quality gates that won’t let weak grounding ship.

Curious how this feels with your docs and tone? Try Using An Autonomous Content Engine For Always-On Publishing. Oleno runs the workflow end to end so your team can focus on story, not structure.

Conclusion

You won’t edit your way to factual AI writing at scale. You need a governed KB, hybrid retrieval, and QA that enforces citations and versions. The model is the last mile. The system is the work. Build the upstream rules, then let the pipeline do its job. Your writers will feel the difference, and your calendar will show it.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've spent 13+ years in B2B SaaS, in both sales and marketing leadership. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions