Building a Cohesive Developer Marketing Narrative from RFCs

I’ve seen both sides of this. At Proposify, my team lived with the downstream effects of content that didn’t map cleanly to product truth—traffic looked great, but we paid for vague claims with longer sales cycles and skeptical engineers. Earlier at LevelJump, we hacked content from leadership transcripts, which gave us speed, but we lost structure. Devs noticed. They always do.

Here’s the punchline. Your RFCs already contain the credibility. Constraints, tradeoffs, version boundaries, and guarantees. If you translate those into a reusable narrative spine—with rules, not vibes—you convert developers without overselling. Not overnight. But fast enough to see fewer support tickets and better time-to-first-call.

Key Takeaways:

- Start from constraints and guarantees, then wrap a story around them

- Keep a living product truth sheet with safe claims, examples, and evidence

- Use one pipeline from artifact to output so narrative can’t drift

- Bake in QA gates that block inaccurate claims before publish

- Measure with developer signals (time-to-first-call, snippet error rate), not pageviews

- Encode rules once; reuse across posts, docs, landing pages, and demos

Why Technical Artifacts Fail To Convert Developers Into Believers

Your RFCs capture the truth; marketing often removes the nuance that makes engineers trust you. Conversion improves when the market-facing story preserves constraints, examples, and timelines. For example, a launch post should never promise a parameter the reference marks private, or quote a limit per second when the reference defines it per minute.

The gap between RFC truth and market story

RFCs and API specs exist to record decisions, edge cases, and non-goals. Marketing exists to make meaning from those decisions. When the bridge between the two collapses, devs call it immediately. The language feels soft. The examples feel “too perfect.” The result is a shrug and a tab close.

In practice, I see three leaks again and again. First, claims that skip limits—everything works at scale until rate limits surface in docs three clicks deep. Second, examples using unsupported params, often because a beta flag wasn’t documented in the brief. Third, timelines that ignore deprecation windows, which makes migrations look easier than they are. Small misses. Big trust hit.

The fix isn’t complicated. Preserve the constraints in the narrative. Keep the guarantees explicit. And stop smoothing the rough edges that make engineers believe the rest of your story. According to the Thorough team guide to RFCs, the point of the artifact is shared understanding. Your marketing should extend that, not erase it. Teams who follow RFC writing best practices tend to have fewer surprises downstream.

Why separate systems erode trust with engineers

When truth lives in RFCs, usage patterns in telemetry, examples in docs, and “the story” in a slide deck, you get drift. Not because people are sloppy. Because they’re busy. Each handoff adds interpretation. Each interpretation adds risk. And when the clock’s ticking, shortcuts win.

I’ve watched this play out inside small teams. Product ships the spec, docs publish new examples, marketing writes the launch, and social tees up snippets. Three weeks later, someone notices two rate-limit values. Now you’ve got duplicated edits, new review threads, and that dreaded “can we pause publish?” message. Meanwhile, engineers see the mismatch and assume you’re either sloppy or selling. Neither helps.

You also pay a coordination tax. People spend cycles chasing the latest “truth,” not building the next artifact. Worse, fixes bounce between tools—CMS drafts, doc PRs, and chat threads. Fragmentation isn’t a moral failing. It’s a system design issue.

The Real Bottleneck Is Product Truth Translation, Not Copywriting

The real bottleneck is turning specs into safe, reusable claims that can travel across formats. Teams move faster when they decide boundaries, examples, and guarantees first, then layer narrative on top. A crisp truth sheet shortens review cycles and reduces “is this allowed?” ping-pong.

What traditional approaches miss when turning specs into stories

Most companies jump straight to the headline and CTA. I get it. Velocity matters. But skipping the truth inventory creates soft language engineers don’t trust. You end up editing adjectives instead of aligning on what’s actually true in production. That’s expensive and slow.

Start with constraints and guarantees. What does this API never do? What will it always do within a version? What’s the safe payload to demonstrate? Once those are settled, the story writes itself. You keep the specificity, then explain why it matters. This flips the usual process—truth first, narrative second. It sounds slower. It’s not.

A simple way to test whether you’re doing this: read the first three paragraphs of your launch post out loud to your lead engineer. If they flinch at a verb or ask “which build did that come from?”, you’re storytelling before truth-telling. The remedy is a shared sheet of approved claims, examples, and evidence. No slides. No flourish.

Safe claims and boundaries are your raw materials

Think of a product truth sheet as your source of reusable atoms. It should include approved features, version boundaries, SLAs or non-goals, and code examples that are allowed to be public. Each line needs a citation—RFC paragraph, doc URL, or telemetry window. Not to be academic. To be fast.

This is where teams feel the payoff. When marketing can pull from pre-approved claims, drafts don’t grind to a halt waiting on “is this okay to say?” feedback. Engineering reviews shift from “what’s wrong here?” to “does this capture the tradeoff we made?” You cut the number of cycles without cutting quality. The art of tailoring RFCs makes the same point: clarity upstream reduces churn downstream.

You’ll want tags for each item: docs-only, blogs-and-docs, or launch-post. Add “sunset by” for deprecations. This lets you avoid the awkward moment where a great example from three versions ago sneaks into a new quickstart. Engineers notice. They always do.
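To make the tagging concrete, here is a minimal sketch of a truth sheet entry in Python. The field names, the `TruthItem` class, and the `usable` helper are all hypothetical; the channel tags and the "sunset by" date come from the scheme described above. Treat it as one possible shape, not a prescribed format.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Channel tags from the scheme above; the exact spellings are an assumption.
CHANNELS = {"docs-only", "blogs-and-docs", "launch-post"}

@dataclass(frozen=True)
class TruthItem:
    claim: str                        # the approved statement or example
    citation: str                     # RFC paragraph, doc URL, or telemetry window
    channel: str                      # one of CHANNELS
    sunset_by: Optional[date] = None  # set only for deprecated items

    def __post_init__(self):
        if self.channel not in CHANNELS:
            raise ValueError(f"unknown channel tag: {self.channel}")

def usable(items, channel, today):
    """Return items tagged for `channel` that have not reached their sunset date."""
    return [
        item for item in items
        if item.channel == channel
        and (item.sunset_by is None or today < item.sunset_by)
    ]
```

With a filter like this, an example from three versions ago drops out of the pool on its sunset date instead of relying on someone to remember it.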

The Cost Of Drift, Rework, And Slow Sign-Offs

Drift compounds cost across review cycles, support, and reputation. Even small inaccuracies create outsized cleanup work and longer time-to-first-call. Quantify the tax and leadership stops treating “one more review” as free. For example, a single bad snippet can ripple into support tickets and community threads for months.

Hours lost to SME cycles and rework

Let’s pretend you ship two launch posts and a quickstart per month. Three reviewers per asset. Two rounds each. Forty-five minutes a round. You’re already at 13.5 hours of SME time monthly, not counting context switching. That’s one to two workdays from your most expensive people, before edits and Slack back-and-forth.

Stretch that across a quarter. Now you’re at 40+ hours of expert time that didn’t build, test, or improve the product. It just kept the narrative from accidentally saying the wrong thing. Add last-minute pauses and weekend fixes, and morale drops. This isn’t hypothetical. I’ve seen reviews stack up while a team waited on one ambiguous claim about rate limits.
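If you want to plug in your own numbers, the arithmetic above fits in a few lines of Python. The function name is made up for illustration; the inputs are the hypothetical figures from this section.

```python
def monthly_review_hours(assets, reviewers, rounds, minutes_per_round):
    """SME review time per month, in hours."""
    return assets * reviewers * rounds * minutes_per_round / 60

# Two launch posts plus one quickstart, three reviewers, two rounds, 45 minutes each.
monthly = monthly_review_hours(assets=3, reviewers=3, rounds=2, minutes_per_round=45)
quarterly = monthly * 3

print(monthly, quarterly)  # 13.5 40.5
```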

And here’s the kicker—most of this is preventable. When product truth is decided upfront, reviewers approve in minutes, not hours. People stop re-litigating decisions buried in a doc somewhere. They focus on whether the story helps the developer, not whether the numbers are right. For broader context on why this matters in dev marketing, the Product Marketing Alliance’s best practices emphasize credibility over catchiness.

The risk of inaccurate claims and credibility debt

One misleading code example can burn weeks of goodwill. Imagine a snippet that uses a beta enum without labeling it. A developer copies, gets a 400, and spends an hour debugging before realizing the truth. They don’t file a ticket. They just leave. Worse, they answer a forum question later with, “Don’t trust their quickstarts.”

What’s the cost? Let’s be conservative. Five community threads referencing that mismatch. Twenty support touches to clarify behavior. One integration partner who pauses rollout because “the docs and marketing don’t match.” Credibility debt lingers. You fix the post, but chatter remains, and your next launch starts behind.

There’s also a compliance angle. Public documentation standards expect alignment between examples and references. When you drift, you increase the odds of a public correction that you didn’t plan. Not a crisis, but avoidable. If you need a reference point on the basics, here’s a short overview of public documentation standards.


Engineers Tune Out When The Story Feels Wrong

Developers don’t rage when your story is off; they disengage. Fixing this starts with eliminating mismatches—examples that 400, limits that don’t match, enums that don’t exist. A pre-publish accuracy check, even a simple one, preserves trust and keeps your next announcement worth their time.

The frustrated dev who hits a misleading example

Picture it. A developer tries your quickstart on a Tuesday afternoon. They paste your snippet, swap the API key, and run it. 400. The “sort_order” param in your example is private beta. Not in the reference. Not labeled as such. They delete the tab. Maybe they tell a coworker. Maybe they don’t. Either way, you lost the room.

What’s frustrating is how avoidable this is. If your examples inherit from a pre-approved truth sheet, and your weekly review removes anything not ready for daylight, this never ships. I’ve been in those triage calls. You don’t want to be apologizing for a parameter typo while your community PM drafts a forum note.

There’s a simple rule worth writing down: never publish examples that aren’t allowed or versioned correctly. Treat it as a non-negotiable. You won’t catch everything, but you’ll catch most of it. And your audience will notice the difference.

When your launch post contradicts the API reference

Contradictions create doubt fast. Docs say rate limits per minute. Launch post says per second. Docs show “status: queued.” Post says “status: pending.” Small? Sure. But developers treat conflicts as a signal: if the basics don’t match, what else is off? That doubt slows adoption.

Run a pre-publish diff pass on anything numeric, enum, or limit-related. If it’s in the post, it should match the reference exactly—names, units, ranges. This doesn’t require a new platform. A checklist and a linked source of truth handle 80% of issues. You’ll ship fewer corrections, and your team avoids those “wait, which one is right?” threads.
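A diff pass like this can start as a short script. The sketch below is an assumption about how you might structure it: `REFERENCE` stands in for values pulled from your API reference, and the claim format is invented for illustration. It reuses the two mismatches from this section (per second vs. per minute, "pending" vs. "queued").

```python
# Hypothetical reference values; in practice, load these from your API reference.
REFERENCE = {
    "rate_limit": {"value": 100, "unit": "per minute"},
    "status": {"allowed": {"queued", "running", "done"}},
}

def diff_claims(post_claims, reference):
    """Return readable mismatches between claims in a post and the reference."""
    problems = []
    for name, claim in post_claims.items():
        ref = reference.get(name)
        if ref is None:
            problems.append(f"{name}: not found in reference")
        elif "allowed" in ref:
            # Enum-style field: the value must be one of the documented names.
            if claim.get("value") not in ref["allowed"]:
                problems.append(f"{name}: {claim.get('value')!r} is not a documented value")
        else:
            # Numeric field: value and unit must both match exactly.
            for key in ("value", "unit"):
                if claim.get(key) != ref.get(key):
                    problems.append(
                        f"{name}: post says {key}={claim.get(key)!r}, "
                        f"reference says {ref.get(key)!r}"
                    )
    return problems

post = {
    "rate_limit": {"value": 100, "unit": "per second"},
    "status": {"value": "pending"},
}
for problem in diff_claims(post, REFERENCE):
    print(problem)
```

An empty result means the post and the reference agree on everything you listed; it says nothing about claims you forgot to list, which is why the checklist still matters.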

And if you do miss something, own it. Update the post, add a clear edit note at the top, and link to the corrected quickstart. You won’t win everyone back, but you’ll stop the slide. For broader perspective on dev audiences, a short developer marketing overview is a useful refresher: clarity and accuracy beat flair every time.

A Hands-On Workflow To Build One Narrative From RFCs

You build a cohesive developer narrative by mapping RFCs to buyer problems, extracting safe claims, and gating publishing with light, automated checks. The key is deciding truth upstream, then letting it flow through templates and briefs. For example, a weekly truth sheet review keeps launches moving without re-litigating decisions.

Map RFCs and specs to buyer problems with a conversion template

Start with a one-page mapping. Left column: RFC section. Middle: the buyer or developer problem it solves. Right: the safe market-facing statement you can make. Add two fields—measurable impact and acceptable metaphor. This becomes the spine for every brief, across blogs, docs, and landing pages.

This structure prevents overreach. If the RFC documents a tradeoff that sacrifices burst writes for consistency, your market statement can’t claim “sub-millisecond writes at any volume.” Instead, you say “read-after-write consistency within X ms under Y TPS.” Then explain why that matters. You preserve the engineering reality and make it legible.

The template also shortens review. The moment a PM checks the mapping, you’ve aligned on what can be said before anyone writes a paragraph. Now the launch post isn’t the first place truth gets debated—it’s just where the chosen truth gets explained.
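The mapping does not need tooling, but if you keep it in code or a structured file, a row might look like the sketch below. The `MappingRow` class and its field names are hypothetical, chosen to mirror the three columns and two extra fields described above; the example values are placeholders, with X and Y left unfilled on purpose.

```python
from dataclasses import dataclass, fields

@dataclass
class MappingRow:
    rfc_section: str          # left column
    buyer_problem: str        # middle column
    safe_statement: str       # right column
    measurable_impact: str = ""
    acceptable_metaphor: str = ""

def missing_fields(row):
    """Names of fields still blank, so a brief is not started from a half-filled row."""
    return [f.name for f in fields(row) if not getattr(row, f.name).strip()]

row = MappingRow(
    rfc_section="Consistency vs. burst writes",  # hypothetical section title
    buyer_problem="Stale reads right after a write",
    safe_statement="Read-after-write consistency within X ms under Y TPS",
)
print(missing_fields(row))  # ['measurable_impact', 'acceptable_metaphor']
```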

Extract product truth and safe claims developers can trust

Stand up a product truth sheet with approved features, boundaries, and public code examples. Each item needs a citation (RFC paragraph or reference doc) and a channel tag: docs-only, blogs-and-docs, or launch-post. This avoids the classic “blog showed a shortcut the SDK doesn’t support” glitch.

Keep this sheet living, not precious. Roadmaps shift. Preview flags move. Deprecations inch forward. A weekly 20-minute review with PM and docs keeps it fresh without ceremony. You’ll be surprised how much speed returns when “is this claim safe?” is answered before writing starts.

Tag examples by version and SDK language. Engineers love to spot a language-specific pitfall (looking at you, default timeouts). Labeling removes ambiguity and saves support from answering the same “why does the Python SDK behave differently here?” thread three times.

Verification and governance that preserves speed

Insert two gates that won’t slow you down. First, SME sign-off on the truth sheet. It’s the upstream control that saves everyone from downstream churn. Second, an automated citation check in your pipeline—if a claim lacks a source, it gets flagged. No drama. Just a nudge that prevents a public correction later.
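The citation check can be very small. Here is a minimal sketch, assuming claims are stored as dictionaries with `text` and `source` keys; that format and the function name are my assumptions, not a standard.

```python
def uncited_claims(claims):
    """Return the text of every claim that has no non-empty source."""
    return [c["text"] for c in claims if not (c.get("source") or "").strip()]

draft_claims = [
    {"text": "Rate limit is 100 requests per minute", "source": "API reference, Limits"},
    {"text": "Webhooks retry automatically", "source": ""},
    {"text": "Pagination defaults to 50 items"},
]

flagged = uncited_claims(draft_claims)
for text in flagged:
    print(f"needs a source: {text}")
```

In a pipeline, a non-empty `flagged` list would fail the step or post a comment on the draft. Which one you choose depends on how hard you want the gate to be.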

Add a lightweight example linter that runs your snippets against current versions. You don’t need a fancy setup; run integration tests on your quickstart where possible and static checks for enumerations and limits elsewhere. Use numeric checks for rate limits and pagination defaults. Engineers smell when these are off by one.
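For the static side, a sketch like the one below covers the most common miss: a query parameter that is not public, or a page size outside the documented range. `PUBLIC_PARAMS`, `MAX_PAGE_SIZE`, and the example URL are hypothetical stand-ins for your own reference. It only inspects URLs in a snippet, so it complements integration tests rather than replacing them.

```python
import re
from urllib.parse import urlsplit, parse_qsl

# Hypothetical values; in practice, derive these from your API reference.
PUBLIC_PARAMS = {"limit", "cursor"}
MAX_PAGE_SIZE = 100

URL_RE = re.compile(r"https?://[^\s\"'<>)]+")

def lint_snippet(snippet):
    """Flag query parameters that are not public or fall outside documented limits."""
    findings = []
    for url in URL_RE.findall(snippet):
        query = urlsplit(url).query
        for name, value in parse_qsl(query, keep_blank_values=True):
            if name not in PUBLIC_PARAMS:
                findings.append(f"{name}: not a public parameter")
            elif name == "limit":
                if not value.isdecimal() or not 1 <= int(value) <= MAX_PAGE_SIZE:
                    findings.append(f"limit={value}: outside documented range 1..{MAX_PAGE_SIZE}")
    return findings

snippet = 'curl "https://api.example.com/v1/items?limit=500&sort_order=desc"'
for finding in lint_snippet(snippet):
    print(finding)
```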

Governance here isn’t “process theater.” It’s insurance that protects your pace. Define the allowed claims once. Apply them everywhere—briefs, drafts, posts, and docs—so you don’t burn cycles debating language that’s already been decided. The net effect is fewer reviews, faster ship, more trust.

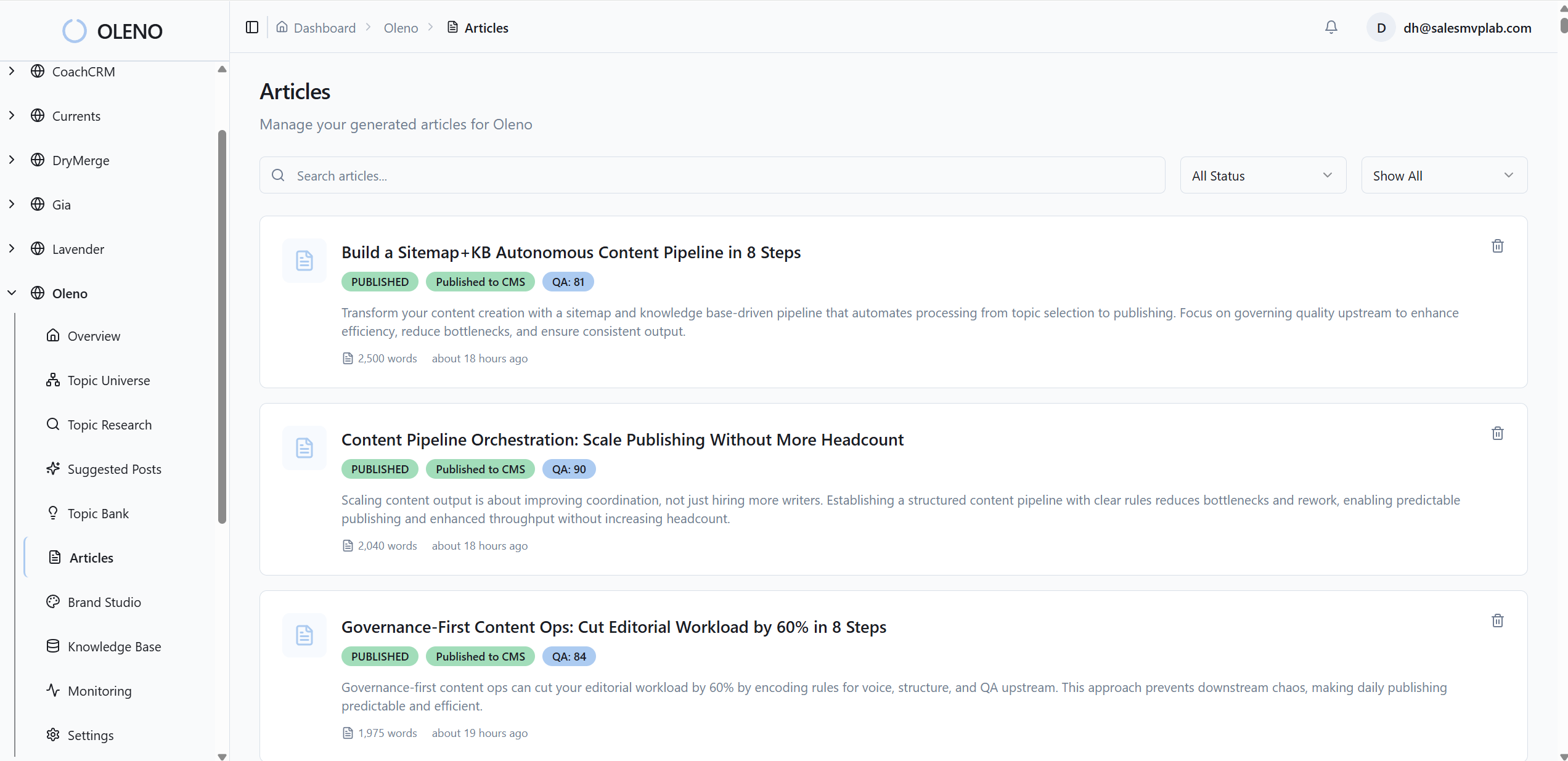

How Oleno Operationalizes Developer Narratives From RFCs

Oleno turns the truth-first workflow into a repeatable content system. You define the rules—voice, product truth, and allowed claims—then execution follows a deterministic pipeline from discovery to publish. For example, QA gates block inaccurate claims, which reduces the review burden on your engineers.

Governance that encodes product truth and claim control

Oleno starts with governance. You define brand voice, positioning, and product truth—approved features, claims, screenshots, and code examples. Those rules apply automatically to every asset the system creates, from technical guides to product explainers. This isn’t generic templating. It’s guardrails that keep drafts anchored to what’s true.

Because claim boundaries are explicit, marketing doesn’t need to ping engineering line by line. Oleno uses your knowledge base grounding so drafts stay aligned with real docs and internal materials. You control what’s allowed and what isn’t. Nothing new gets invented. Nothing wanders off narrative. In my experience, that’s where hours are saved—before the first draft even lands.

This governance step connects directly to the truth sheet approach. You define it once and reuse it across studios. The benefit is practical: fewer review cycles, less drift, and less “which version is this?” confusion during launches.

Deterministic execution that turns artifacts into consistent outputs

Oleno follows the same execution flow every time: Discover → Angle → Brief → Draft → QA → Enhance → Visuals → Publish. No prompt roulette. No one-off workflows that behave differently week to week. Studios then produce the formats you need across the funnel—programmatic SEO, category education, competitive evaluation, and product marketing content.

Determinism matters when the pressure is on. You don’t pause for new instructions or babysit drafts. The system keeps moving along a pipeline you trust. That reliability reduces rework and helps the team focus on the narrative decisions that require human judgment, not on orchestration. When I ran lean teams, this was always the gap—steady execution without constant coordination.

Quality is enforced at every step. Structure rules and voice guardrails aren’t an afterthought; they’re part of the pipeline. The output becomes predictable, not because it’s bland, but because it’s grounded and consistent.

QA gate and grounding that block inaccurate claims before publish

Oleno’s QA gate checks voice, structure, clarity, and—critically—accuracy and grounding before anything goes live. Claims are validated against your product truth and knowledge base. If a snippet references a beta-only param or a limit that doesn’t match the reference, it gets flagged for fix. Publishing waits until it passes.

This is where the rework headache drops. SMEs stop doing line edits and start doing high-leverage reviews. You avoid the embarrassment of contradictions between a launch post and API reference that your community will screenshot. And when you want visuals, Oleno can generate brand-consistent images under your design rules, then publish directly to your CMS with draft or live control.

Put simply, Oleno connects governance to execution without adding busywork. It lets a small team keep shipping narrative-driven, developer-trustworthy content on a steady cadence while the system handles accuracy and structure checks behind the scenes. If that’s the system you’ve been trying to duct-tape together, Try Oleno for Free.

Conclusion

A cohesive developer narrative doesn’t come from better adjectives. It comes from translating product truth into reusable claims, then letting a system apply those rules across formats without drift. Decide the boundaries once. Map them to buyer problems. Gate for accuracy. When you do, engineers believe you sooner—and your launches stop feeling like a scramble.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions