CI/CD for Content: Canary Publishing, Staging & Safe Rollbacks

When content ships like a one-shot launch, the anxiety is earned. You’re rolling the dice on layout, links, canonicals, and brand voice, live. I’ve done the midnight scramble after a pricing tweak nuked a CTA. Not fun. The fix wasn’t “be more careful.” It was changing how we release.

Think of publishing like deploying code. Staging, canaries, rollbacks, logs: the basic safety rails developers take for granted. You don’t need a giant engineering team to adopt this mindset for content. You need a pipeline, with gates you trust, versions you can point to, and a way to reverse without panic.

Key Takeaways:

- Treat publishing as a pipeline: draft → staging → canary → production, with gates and logs at each stage

- Use immutable versions and idempotent publish APIs to stop duplicates and enable safe rollbacks

- Decouple templates, content, and distribution to prevent one change from breaking everything

- Define canaries by region/channel and roll forward based on SLO-style thresholds

- Block unsafe promotions with automated QA on voice, accuracy, schema, and links

- Plan rollbacks that restore exact versions and purge caches predictably

Ready to skip the theory and see an always-on pipeline? Run a quick pilot: Try Generating 3 Free Test Articles Now.

Publishing Without Release Discipline Creates Most Content Incidents

Publishing incidents mostly come from treating “Publish” as a single, irreversible act instead of a controlled process. A CI/CD approach spreads risk across environments with checks and promotion rules. You get observability, a real rollback button, and fewer fire drills. Think fewer hotfixes, fewer duplicates, and fewer unexplained drops.

The Single Publish Moment Is Your Biggest Risk

A single publish concentrates risk in one keystroke. There’s no place to validate links at scale, no place to exercise redirects, and no place to catch voice drift before it’s live. When something breaks, you’re debugging in production with stakeholders Slacking “what happened?” every five minutes.

I’ve watched teams ship a homepage hero straight to production because “we’re behind.” Then they spend two hours undoing a single variable mismatch. The lesson wasn’t “don’t rush.” It was “don’t have a single publish moment.” Give yourself runway. If edits move through staging and a small canary, you find issues in safe places. Then you promote or revert with intent, not hope.

Why Conventional CMS Workflows Miss The Safety Rails

Most CMS workflows optimize for writing and layout. Draft and publish. Maybe a scheduled publish. That’s fine for small blogs. It’s fragile at scale. What’s missing are gates that assert: this version is immutable, these links resolve, this structure passes schema checks, and if a retry happens, we won’t create duplicates.

When those pieces are absent, you get silent failures. Duplicate pages. Conflicting canonicals. Broken feeds. And zero audit trail. Add a release pipeline and your “CMS” becomes a destination, not the system of record. In other words, your process, not your people, prevents the mistake. For a primer on the release philosophy, see IBM’s overview of continuous integration.

What Is CI/CD For Content And Why Should You Care?

CI/CD for content borrows a simple pattern from engineering: build artifacts once, promote them through environments with checks, and keep a ledger of what moved where and when. Content becomes versioned, immutable, and auditable. Promotion replaces “edit live.”

You publish to staging, run canaries to specific channels or regions, and evaluate against clear thresholds. If it passes, promote. If not, roll back to the exact prior version. This isn’t heavy process. It’s a small amount of structure that turns “publishing” into a repeatable operation. You’ll feel the difference when nobody fears the button.

The Root Cause Is Mutability, Coupling, And Missing Environments

Content incidents often trace back to mutable edits in production, tight coupling between content and templates, and no distinct environments. Without isolation and immutability, you can’t observe, test, or revert precisely. Branching and semantic versioning remove the guesswork and make rollbacks targeted.

Mutable Content And Live Edits Make Reversibility Hard

Live edits mutate the only version that production serves. Now you can’t revert cleanly because there is nothing to revert to, just a pile of incremental changes layered in unknown order. When something breaks, you’re diffing snapshots you never formally created.

Shift to immutable artifacts: content IDs, semantic versions, and tags that map version to promotion history. The production environment serves a specific artifact, not a mutable idea. Rollbacks become a pointer switch to the prior version. Pair this with a ledger that records write attempts and promotions so you know exactly what happened and when. No blame. Just facts.
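Here's a minimal sketch of the pointer-switch idea, assuming an in-memory store. The names `ArtifactStore`, `add_version`, `promote`, and `rollback` are hypothetical, not any CMS's API; a real system would persist the ledger somewhere durable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Artifact:
    """An immutable content version; frozen=True blocks in-place edits."""
    content_id: str
    version: str
    body: str


@dataclass
class ArtifactStore:
    versions: dict = field(default_factory=dict)  # (content_id, version) -> Artifact
    live: dict = field(default_factory=dict)      # content_id -> version in production
    history: dict = field(default_factory=dict)   # content_id -> previously live versions
    ledger: list = field(default_factory=list)    # append-only record of pointer moves

    def add_version(self, artifact: Artifact) -> None:
        key = (artifact.content_id, artifact.version)
        if key in self.versions:
            raise ValueError(f"{key} already exists; create a new version instead")
        self.versions[key] = artifact

    def promote(self, content_id: str, version: str, actor: str) -> None:
        if (content_id, version) not in self.versions:
            raise KeyError(f"unknown artifact {content_id}@{version}")
        if content_id in self.live:
            self.history.setdefault(content_id, []).append(self.live[content_id])
        self.live[content_id] = version
        self._log("promote", content_id, version, actor)

    def rollback(self, content_id: str, actor: str) -> str:
        """Point production back at the previously live version."""
        previous = self.history[content_id].pop()
        self.live[content_id] = previous
        self._log("rollback", content_id, previous, actor)
        return previous

    def _log(self, action: str, content_id: str, version: str, actor: str) -> None:
        self.ledger.append({
            "action": action, "content_id": content_id, "version": version,
            "actor": actor, "at": datetime.now(timezone.utc).isoformat(),
        })


store = ArtifactStore()
store.add_version(Artifact("article-123", "1.2.0", "Original copy"))
store.add_version(Artifact("article-123", "1.2.1", "Copy fix"))
store.promote("article-123", "1.2.0", actor="editor")
store.promote("article-123", "1.2.1", actor="editor")
restored = store.rollback("article-123", actor="on-call")
```

Nothing is rewritten during the rollback. The stored artifacts never change; only the `live` pointer moves, and the ledger records each move.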

Tight Coupling Between CMS, Templates, And Distribution

When templates, content, and distribution rules are tightly coupled, a tiny tweak ripples everywhere. Change a metadata field? Suddenly the feed breaks because a template assumed a non-null value. Update a component? A regional page renders differently because the content and layout versions drifted apart.

Decoupling prevents this. Treat content as a versioned artifact. Deploy templates separately with their own versions. Keep distribution routing independent, so publishing to email, blog, or homepage hero doesn’t depend on authoring assumptions. That separation means you can change one layer without breaking the others. It’s not theory; this is how teams reduce “mysterious” regressions.
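One way to picture the separation is a release manifest that pins each layer on its own. This is a sketch under assumptions: `ReleaseManifest`, `changed_layers`, and the `post-layout@4.0.0` template reference are made up for illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ReleaseManifest:
    content: str     # e.g. "article-123@1.2.1"
    template: str    # hypothetical template reference, versioned separately
    channels: tuple  # distribution routing, kept independent of authoring


def changed_layers(old: ReleaseManifest, new: ReleaseManifest) -> list:
    """List which layers differ between two releases."""
    return [name for name in ("content", "template", "channels")
            if getattr(old, name) != getattr(new, name)]


current = ReleaseManifest("article-123@1.2.0", "post-layout@4.0.0", ("blog", "email"))
copy_fix = replace(current, content="article-123@1.2.1")
layers = changed_layers(current, copy_fix)
```

A gate could then refuse any release where more than one layer changes at once, which keeps a regression traceable to a single layer.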

How Branching And Versioning Remove Ambiguity

Branching isn’t just for code. Use content branches for larger initiatives (say, a new category explainer series), then create semantic versions as milestones pass checks. For example, article-123 at 1.2.0 passes QA and staging; 1.2.1 is a minor copy fix. Promotions reference the version explicitly, not “latest.”

This eliminates ambiguity in moments that matter. Need to roll back? Restore 1.2.0 to production and purge caches tied to that artifact. No cherry-picking production edits. No “what changed since Tuesday?” confusion. For a broader view of these patterns, read the GitLab CI/CD topic overview.
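To make "explicit version, not latest" enforceable, a promotion request can be parsed and rejected up front. This is a rough sketch: the `content_id@version` reference format and the helpers `parse_ref` and `is_rollback` are assumptions, and it only handles plain MAJOR.MINOR.PATCH versions.

```python
import re

# MAJOR.MINOR.PATCH only; pre-release and build tags are left out of this sketch.
SEMVER = re.compile(r"(\d+)\.(\d+)\.(\d+)")


def parse_ref(ref: str) -> tuple:
    """Split 'article-123@1.2.1' into (content_id, (major, minor, patch))."""
    content_id, sep, version = ref.partition("@")
    match = SEMVER.fullmatch(version)
    if not sep or not content_id or match is None:
        raise ValueError(f"promotion must name an explicit version, got {ref!r}")
    return content_id, tuple(int(part) for part in match.groups())


def is_rollback(current_ref: str, target_ref: str) -> bool:
    """True when the target version is older than what is live."""
    current_id, current_version = parse_ref(current_ref)
    target_id, target_version = parse_ref(target_ref)
    if current_id != target_id:
        raise ValueError("refs point at different content")
    return target_version < current_version
```

Comparing versions as integer tuples avoids the string-comparison trap where "1.10.0" sorts before "1.9.0".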

Unsafe Publishing Drains Time, Money, And Trust

Bad releases burn hours, create rework, and erode trust with readers and search engines. Duplicate URLs, race conditions, and rollback confusion are preventable with idempotent writes, promotion gates, and a clear reversion job. A small amount of discipline saves real money.

Duplicates And Race Conditions Hurt SEO And Readers

Concurrent publishes without idempotency lead to duplicate URLs and clashing canonicals. Search engines index both versions and devalue both. Readers land on out-of-date copy. Your internal links point in two directions. It’s a mess, and the cleanup is slow.

Add deduplication keys and treat “publish” as an idempotent write. Only one request with that content_id and version should win. Everything else returns a conflict or resolves to the existing artifact. It’s cheaper to block the second write than to unwind cross-linked pages later. Your SEO team stops doing triage, and your writers stop explaining why a post “blinked.”

Let’s Pretend A Small Team Blows A Launch

Let’s pretend you’re three people. A pricing update goes live at 5 p.m. No staging. No canary. The CTA disappears for 90 minutes. If your site averages 20 signups an hour, that’s roughly 30 signups gone. Then add the rework (meetings, screenshots, “what changed?”) and the hidden cost beats the obvious one.

I’ve seen versions of this at multiple companies. Not because people are careless, but because the system allows a single failure point. A simple canary (Canada only, blog and email only, 10% rollout first) would have caught it in minutes. Then a one-click rollback restores the prior version without touching structure. That’s the point: the incident is reduced to a tiny surface area.

The Hidden Cost Of Rollback Confusion

Rollbacks fail when teams can’t answer two questions: which version are we restoring, and which caches must be invalidated? Without that, someone “recreates” the page, accidentally changing a slug or canonical. Now organic traffic drops for a week.

Define a reversion job that restores the exact prior artifact while preserving URLs, canonicals, and redirects. Publish the purge list tied to the content object, not a guesswork path. This takes minutes to design and hours to test. It saves days later. If you want more depth on CI/CD vocabulary while you plan it, see the Codefresh CI/CD overview.

Still dealing with manual publishes and late-night rollbacks? It doesn’t have to be this brittle. Try Using An Autonomous Content Engine For Always-On Publishing.

The Human Side Of Bad Releases

Bad releases don’t just cost traffic. They cost energy. When the system is brittle, people delay publishes, skip canaries, and push risky fixes to “just get it done.” You feel the drag in cadence and team morale. Discipline removes fear, which restores speed.

When The Late Night Fix Makes It Worse

Tired people make risky changes. A midnight hotfix feels urgent and heroic. It’s often how image paths get changed, schema gets broken, or redirects get overwritten. Then you wake up to two issues, not one. The pattern isn’t sustainable.

Make it hard to promote outside of a safe path. Either block after-hours promotions unless the full pipeline can run, or define a limited hotfix route that only touches copy and requires post-hoc validation. If that sounds strict, good. Guardrails buy sleep. They also buy fewer Monday-morning apologies.
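A guardrail like this can be a few lines of policy code. The sketch below combines both options: full pipeline during working hours, a copy-only hotfix route after hours. The working window, the `HOTFIX_FIELDS` set, and the function name `promotion_route` are all assumptions to adapt.

```python
from datetime import datetime, time

BUSINESS_START = time(9, 0)        # assumed working window, local time
BUSINESS_END = time(17, 0)
HOTFIX_FIELDS = {"body", "title"}  # assumed definition of "copy only"


def promotion_route(requested_at: datetime, changed_fields: set) -> str:
    """Return 'full-pipeline', 'hotfix', or 'blocked' for a promotion request."""
    is_weekday = requested_at.weekday() < 5  # Monday is 0
    in_hours = is_weekday and BUSINESS_START <= requested_at.time() < BUSINESS_END
    if in_hours:
        return "full-pipeline"
    if changed_fields and changed_fields <= HOTFIX_FIELDS:
        return "hotfix"  # copy-only change; still needs validation afterwards
    return "blocked"
```

A midnight change to `slug` or `canonical` comes back `blocked`, which is exactly the class of edit that tends to create the second incident.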

Who Carries The Pager When Brand Voice Drifts?

Voice drift isn’t a 500 error, but it is an incident. Claims creep. Tone shifts from confident to generic. It happens when edits bypass rules and nobody “owns” enforcement. This isn’t about micro-managing writers. It’s about system-level checks that catch the drift before readers do.

Put voice, accuracy, and structure rules into the pipeline so the “pager” becomes automated checks, not a person reading every line at 9 p.m. Humans handle exceptions, not the baseline. You’ll reduce the awkward “did we really say that?” moments and protect trust over time.
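Even a crude automated check catches a lot of drift. This sketch assumes a tiny rule set: the banned phrases and the "Nx faster" claim pattern are invented examples, and a real rule set would come from your own style guide and approved claims.

```python
import re

# Hypothetical rules for illustration only.
BANNED_PHRASES = ["best-in-class", "guaranteed results"]
UNAPPROVED_CLAIM = re.compile(r"\b\d+x faster\b", re.IGNORECASE)


def voice_violations(text: str) -> list:
    """Return a list of human-readable rule violations; empty means clean."""
    lowered = text.lower()
    found = [f"banned phrase: {phrase}" for phrase in BANNED_PHRASES
             if phrase in lowered]
    found += [f"unapproved claim: {match.group(0)}"
              for match in UNAPPROVED_CLAIM.finditer(text)]
    return found
```

Substring and regex rules won't judge tone, so treat this as the baseline that pages a human only when something trips.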

Why Teams Start To Fear The Publish Button

If publishing equals risk, teams hesitate. They wait for “perfect,” which never comes. Cadence slows. Coverage thins. Then search performance suffers, and the cause is inconsistency, not incompetence. The solution isn’t pep talks. It’s visible mechanics.

When you can see a clean canary, a green set of checks, and a rollback that really restores, publishing becomes routine. The emotional load drops. You’ll notice it in the language: more “ship it,” less “are we sure?” That confidence compounds.

A Safer Pattern For Content Releases That Are Repeatable, Observable, And Reversible

A safer release pattern defines environments, gates, and idempotent writes. You stage changes, exercise a canary, and roll forward or back based on thresholds. The process is observable end to end, and reversibility is built in. Fewer surprises. Less drama.

Define Stages And Gates: Draft, Staging, Canary, Production

Create explicit environments with promotion rules. Draft for authoring. Staging for QA, schema checks, and link validation. Canary to a small region or channel slice. Production once thresholds pass. Each promotion should be logged with who, what version, and why.
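The stage order and the promotion log fit in a small state machine. This is a sketch with assumed names (`Release`, `STAGES`), not a prescribed design.

```python
from dataclasses import dataclass, field

STAGES = ["draft", "staging", "canary", "production"]


@dataclass
class Release:
    content_id: str
    version: str
    stage: str = "draft"
    log: list = field(default_factory=list)

    def promote(self, who: str, why: str) -> str:
        """Advance exactly one stage and record who, what version, and why."""
        index = STAGES.index(self.stage)
        if index == len(STAGES) - 1:
            raise ValueError("already in production")
        self.stage = STAGES[index + 1]
        self.log.append({"who": who, "version": self.version,
                         "to": self.stage, "why": why})
        return self.stage


release = Release("article-123", "1.2.0")
release.promote("editor", "QA and link checks passed")
release.promote("editor", "staging review approved")
```

Because `promote` only moves one step forward, there is no code path that jumps a draft straight to production.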

Gates can be simple: link integrity, structured data validation, claim/voice compliance, and a quick design pass. Promotions are additive: no edits in production. That one principle cuts the majority of “who touched this?” incidents. You’re asserting state, not guessing.
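As a sketch of what "simple" can mean, here are two gates and a runner. The document shape (a dict with `html`, `title`, `slug`, `canonical`) and the required-field list are assumptions, and the metadata check is only a stand-in for real structured data validation.

```python
import re

LINK = re.compile(r'href="(/[^"]*)"')             # internal links only
REQUIRED_FIELDS = ("title", "slug", "canonical")  # assumed minimum metadata


def check_links(doc: dict, known_paths: set) -> list:
    """Flag internal links that point at paths the site does not serve."""
    return [f"broken link: {path}" for path in LINK.findall(doc["html"])
            if path not in known_paths]


def check_metadata(doc: dict) -> list:
    """Flag required fields that are missing or empty."""
    return [f"missing field: {name}" for name in REQUIRED_FIELDS
            if not doc.get(name)]


def run_gates(doc: dict, known_paths: set) -> list:
    """Collect failures from every gate; an empty list means promotable."""
    return check_links(doc, known_paths) + check_metadata(doc)
```

Returning every failure, instead of stopping at the first, gives the author one complete fix list per run.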

Target Canaries By Region, Channel, And Segment

Start canaries where routing is easiest. Channel is usually simplest: blog and email before homepage hero. Region is next: Canada before global. Roll percentages on a schedule (10%, then 50%, then 100%) with clear hold times at each step.
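Percentage rollouts need two pieces: a stable way to decide who sees the canary, and a schedule that respects hold times. This is a sketch under assumptions; the hold times in `ROLLOUT_STEPS` and the hash-based `bucket` function are illustrative choices.

```python
import hashlib

# (percent of audience, minimum hold in minutes); hold times are assumptions.
ROLLOUT_STEPS = [(10, 30), (50, 60), (100, 0)]


def bucket(visitor_id: str, content_id: str) -> int:
    """Map a visitor to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{content_id}:{visitor_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def sees_canary(visitor_id: str, content_id: str, percent: int) -> bool:
    return bucket(visitor_id, content_id) < percent


def next_step(current_percent: int, minutes_held: int):
    """Return the next percent, or None while holding or once at 100%."""
    for index, (percent, hold) in enumerate(ROLLOUT_STEPS):
        if percent == current_percent:
            if minutes_held < hold or index == len(ROLLOUT_STEPS) - 1:
                return None
            return ROLLOUT_STEPS[index + 1][0]
    raise ValueError(f"{current_percent}% is not a rollout step")
```

Hashing the visitor and content IDs together means a visitor who saw the canary at 10% still sees it at 50%, so nobody flips between versions as the rollout widens.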

Measure leading indicators appropriate to content: engagement deltas, 404s, render errors, and basic latency. Compare canary versus control. If thresholds hold, promote. If not, roll back confidently, because you’re restoring a known version. For a practical release lens on this approach, see the Octopus CI/CD overview.
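The promote-or-rollback call can be reduced to a comparison function. In this sketch the threshold values, the `Metrics` fields, and the `hold` outcome for empty samples are assumptions; latency is left out to keep it short.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    views: int
    not_found: int      # 404 responses
    render_errors: int
    engaged: int        # views counted as engaged, however you define it


# Assumed limits; tune them against your own baseline.
MAX_ERROR_RATE_INCREASE = 0.005  # absolute: canary rate minus control rate
MAX_ENGAGEMENT_DROP = 0.10       # relative drop versus control


def error_rate(m: Metrics) -> float:
    return (m.not_found + m.render_errors) / m.views


def engagement(m: Metrics) -> float:
    return m.engaged / m.views


def canary_decision(canary: Metrics, control: Metrics) -> str:
    """Return 'promote', 'rollback', or 'hold' when there is no data yet."""
    if canary.views == 0 or control.views == 0:
        return "hold"
    if error_rate(canary) - error_rate(control) > MAX_ERROR_RATE_INCREASE:
        return "rollback"
    if engagement(canary) < engagement(control) * (1 - MAX_ENGAGEMENT_DROP):
        return "rollback"
    return "promote"
```

With small canary samples these rates are noisy, so pairing the thresholds with a minimum view count before deciding is a sensible addition.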

How Do You Design Idempotent Publish APIs?

Design for collisions. Use an idempotency key composed of content_id + version + environment. Persist a write ledger so retries return the same result and don’t create duplicates. Enforce unique constraints at the CMS boundary and return conflicts deterministically.
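Here's a minimal sketch of that design using SQLite from Python's standard library as the write ledger. The table layout, the `publish` function, and the placeholder URL are assumptions; the real CMS write would replace the placeholder.

```python
import hashlib
import sqlite3

db = sqlite3.connect(":memory:")
db.execute("""
    CREATE TABLE write_ledger (
        idempotency_key TEXT PRIMARY KEY,  -- the unique constraint does the dedup
        payload_hash    TEXT NOT NULL,
        result_url      TEXT NOT NULL
    )
""")


def publish(content_id: str, version: str, environment: str, body: str) -> dict:
    key = f"{content_id}:{version}:{environment}"
    payload_hash = hashlib.sha256(body.encode()).hexdigest()
    url = f"/{environment}/{content_id}"  # placeholder for the real CMS write
    try:
        with db:  # commits on success, rolls back on error
            db.execute("INSERT INTO write_ledger VALUES (?, ?, ?)",
                       (key, payload_hash, url))
        return {"status": "created", "url": url}
    except sqlite3.IntegrityError:
        stored_hash, stored_url = db.execute(
            "SELECT payload_hash, result_url FROM write_ledger "
            "WHERE idempotency_key = ?", (key,)).fetchone()
        if stored_hash == payload_hash:
            return {"status": "replayed", "url": stored_url}  # safe retry
        return {"status": "conflict", "url": stored_url}      # same key, new body


first = publish("article-123", "1.2.0", "staging", "Hello")
retry = publish("article-123", "1.2.0", "staging", "Hello")
clash = publish("article-123", "1.2.0", "staging", "Edited after the fact")
```

The retry gets the original result back and the changed body gets a deterministic conflict, so the ledger never holds more than one row per key.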

Add retry with backoff for transient errors and a dead-letter queue for failures that need human attention. When duplicates can’t win the race, they stop creating downstream headaches: no dupe URLs, no clashing canonicals, no mystery pages to clean up later.
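A retry wrapper with a dead-letter list might look like the sketch below. `TransientError`, the attempt count, and the delay values are assumptions, and the `sleep` parameter exists only so the wait can be stubbed out in tests.

```python
import random
import time


class TransientError(Exception):
    """Raised by the publish call for failures worth retrying (assumed)."""


def publish_with_retry(publish, job, dead_letters, attempts=4,
                       base_delay=0.5, sleep=time.sleep):
    """Retry transient failures with exponential backoff plus jitter."""
    for attempt in range(attempts):
        try:
            return publish(job)
        except TransientError as error:
            if attempt == attempts - 1:
                # Out of retries: park the job for a human to inspect.
                dead_letters.append({"job": job, "error": str(error)})
                return None
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
```

This only stays safe because the publish call underneath is idempotent; retrying a non-idempotent write is how the duplicates get created in the first place.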

Plan Rollbacks That Preserve URLs, Canonicals, And Caches

Rollbacks should restore the exact previous version, not a fresh hotfix copy. Preserve slugs, canonicals, and redirects. Maintain a cache purge list tied to the content object, so you don’t guess paths under time pressure.
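A rollback job can refuse to run if it would move the URL. This sketch assumes a `Version` record and a `PURGE_LIST` keyed by content ID; the paths shown are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Version:
    version: str
    slug: str
    canonical: str
    body: str


# Hypothetical purge list, maintained alongside the content object.
PURGE_LIST = {
    "article-123": ["/blog/pricing-update", "/feed.xml", "/sitemap.xml"],
}


def plan_rollback(content_id: str, live: Version, previous: Version) -> dict:
    """Build a rollback plan, refusing any restore that would move the URL."""
    if previous.slug != live.slug or previous.canonical != live.canonical:
        raise ValueError("rollback would change slug or canonical; review redirects first")
    return {"restore": previous.version, "purge": list(PURGE_LIST.get(content_id, []))}
```

The plan is data, not action, so it can be printed into the runbook or reviewed before anything is purged.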

Document a short runbook: how to trigger rollback, how to validate restoration, and who to notify. Create prewritten comms templates to avoid wordsmithing mid-incident. A 30-minute investment now avoids a 3-hour scramble later.

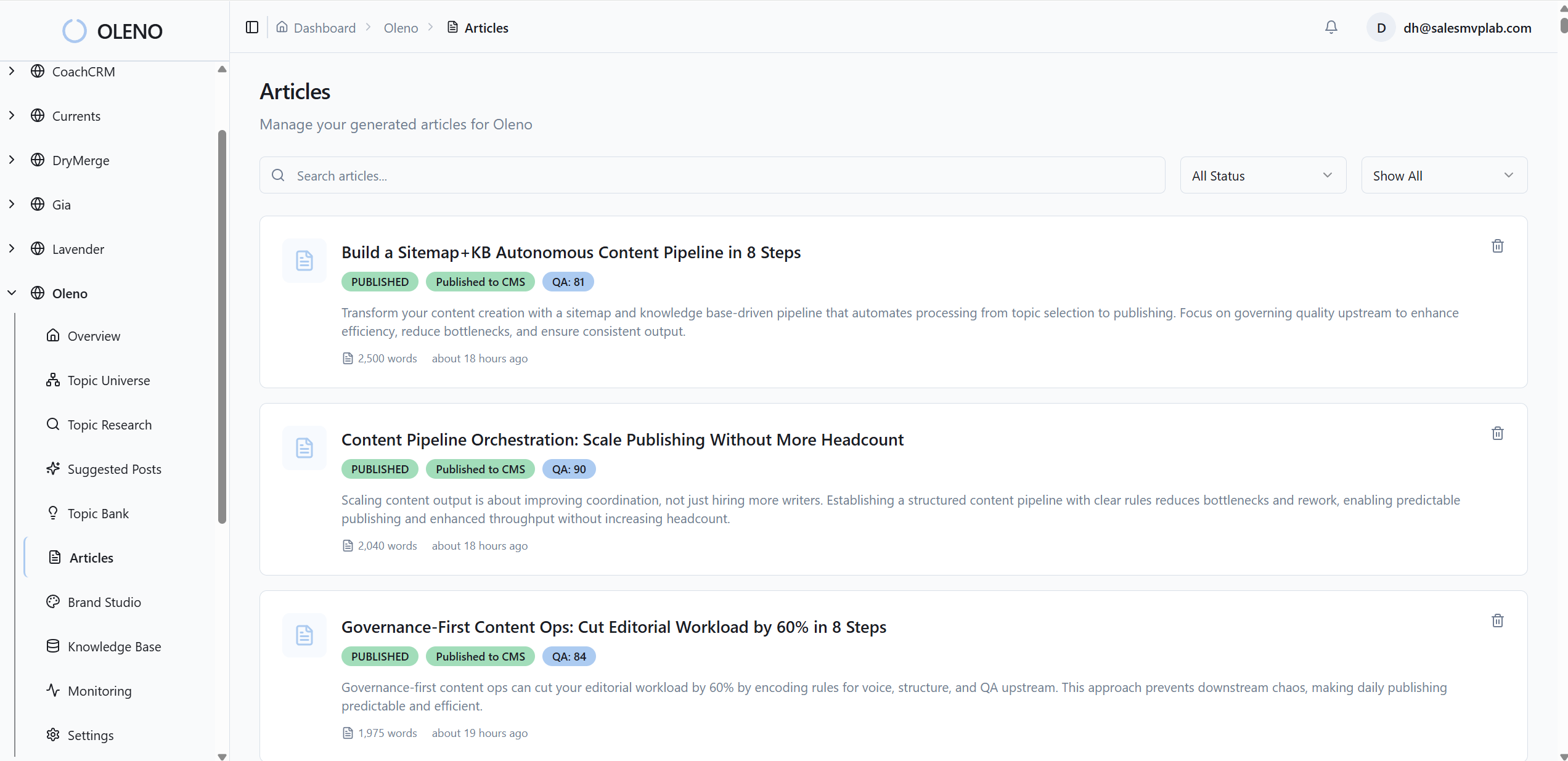

How Oleno Implements Staging, Canary Gates, And Safe Rollbacks

Oleno applies CI/CD principles to content without forcing you to build the plumbing. You define governance, rules, and jobs. The system turns those into a deterministic pipeline with QA gates, idempotent publishing, distribution controls, and operational signals. The result: repeatable, observable, reversible releases.

CMS Publishing With Idempotency, Retry, And No Duplicates

Oleno publishes directly to common CMSs as draft or live and enforces idempotent writes, so the same publish request won’t create duplicates. Retries with backoff handle transient errors; conflicts are returned cleanly with a ledger you can inspect. This is where duplicate URLs and canonicals stop before they start.

Because writes are idempotent and logged, you can trace what moved where and when. That’s your audit trail during “what happened?” moments, and your safety net when traffic or editors spike activity. It reduces the exact race conditions and SEO headaches we talked about earlier.

QA Gates That Block Unsafe Promotions

Nothing moves forward until it passes quality checks. Oleno validates voice rules, narrative structure, factual claims against your approved product truth, and schema and link integrity. If something fails, it’s revised automatically and rechecked against the same rules.

You set the standards once. Oleno applies them every time. This is the difference between relying on late-night intuition and relying on a system that catches drift before it ships. It doesn’t replace strategy. It replaces the risky parts of manual oversight.

Distribution Controls To Stage And Selectively Release

Distribution in Oleno reuses approved content across channels with scheduling, channel formatting, and cadence enforcement. Use it to stage content, sequence a rollout, and target specific channels or regions first: your canary, without custom deploy code.

Because distribution uses already-approved artifacts, you aren’t inventing new messaging mid-flight. You’re promoting known-good versions to defined slices, then expanding as thresholds hold. That keeps releases predictable while giving you fine-grained control.

Operational Health Signals And SLO Style Checks For Reliability

Oleno provides visibility into operational reliability: output cadence, quality trends over time, and common failure patterns the QA gate can miss. It’s not traffic analytics; it’s a signal on whether the content engine is running well.

Pair those signals with SLO-style thresholds for publish success rate and latency. Now canary decisions are less subjective, and rollbacks are less emotional. You can point to a threshold, not a hunch. For more CI/CD background as you set those thresholds, the GitLab CI/CD topic is a helpful reference.
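An SLO-style check on publish success rate and latency is a small calculation. This is a generic sketch, not Oleno's implementation: the targets in `SLO`, the nearest-rank percentile, and the input shape are all assumptions.

```python
SLO = {"min_success_rate": 0.99, "max_p95_latency_s": 5.0}  # assumed targets


def p95(values: list) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def slo_report(publishes: list) -> dict:
    """publishes: non-empty list of (succeeded, latency_seconds) tuples."""
    success_rate = sum(1 for ok, _ in publishes if ok) / len(publishes)
    latency = p95([seconds for _, seconds in publishes])
    return {
        "success_rate": success_rate,
        "p95_latency_s": latency,
        "within_slo": (success_rate >= SLO["min_success_rate"]
                       and latency <= SLO["max_p95_latency_s"]),
    }
```

The `within_slo` flag is the thing to point at during a canary review: one boolean derived from agreed numbers.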

Here’s the simple next step if you want this in your world: configure governance, enable a few jobs, and let the system run for a week. You’ll see fewer duplicates, cleaner rollouts, and a calmer team. Want to experience it hands-on? Try Oleno For Free.

Conclusion

Most teams don’t have a content problem. They have a release problem. When publishing is a single, risky push, small mistakes become incidents and incidents become fear. Shift to a pipeline (immutable versions, environments, canaries, rollbacks) and the mood changes. Publishing becomes routine. Quality rises. And your team ships more, with less drama.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions