CMS‑Agnostic Editorial Orchestration: Webhooks, Idempotency, Retry

Shipping content across a messy stack isn’t hard because writing is hard. It’s hard because the last mile is noisy. Two webhooks fire. One retries. Your CMS says “published,” your logs say “failed.” Now you’re diffing versions at 3am, deleting duplicates, and hoping the redirect rules don’t take down the homepage.

I’ve been on teams where we did the same dance. Small crew, big goals, zero time for cleanup. We hacked together a workflow, shipped a lot, and paid for it with rollbacks and rework. The pattern is always the same: speed first, safety later. Then later shows up with a bill.

Key Takeaways:

- Treat each publish as idempotent with a stable key and version-aware upserts

- Use webhooks to notify, not execute; route to a queue and process with retries

- Make the orchestration layer the source of truth with append-only logs

- Enforce QA as a blocking gate before publish attempts

- Add non-blocking approvals with timeouts, requeues, and resubmission tokens

- Keep contracts explicit and versioned so change doesn’t break everything

Ready to stop cleaning up duplicates? See a safer approach in action. Try Generating 3 Free Test Articles Now.

Why Teams Ship Duplicates And Broken Pages

Most teams ship duplicates because their publishes aren’t idempotent. Each request is treated like a fresh attempt, so retries create new records or overwrite newer versions; a launch-day hiccup can trigger a double publish for the same slug. The antidote is a stable idempotency key plus version-aware upserts.

The hidden failure in most pipelines is non-idempotent publishes

If every publish acts like a brand-new write, you’re inviting collisions. Network hiccup? Your connector retries and now there are two posts with the same slug—one with outdated metadata. This isn’t theoretical; it’s how most “simple” integrations behave under pressure because they weren’t built for retries.

The fix is boring and effective. Use a stable idempotency key per logical publish and enforce version-aware upserts. Lock on a canonical identifier (canonical_slug, content_id) and reject writes when the incoming version is stale. That way, a retry can’t override a newer edit. And if a second request arrives with the same key, you return the prior outcome instead of creating another record.

I know why teams skip this. Deadlines, MVP thinking, pressure to “just ship.” But non-idempotent writes don’t fail fast—they fail later, when traffic spikes and everything is harder to unwind. Build idempotency in first, not as a “we’ll add it later” story.

What webhook-first automation misses

Webhooks are great for speed, not safety. They’re delivery, not execution. When a webhook handler does the work directly—no queue, no dedupe—it will race itself under load. Two events arrive, both try to publish, one wins, the other clobbers or duplicates. You didn’t automate; you multiplied chances to collide.

Use explicit event types, signatures, and replay protection. Verify source with HMAC, acknowledge receipt quickly, and put the payload onto a queue. Workers process with idempotency, backoff, and observability. This keeps “delivery” concerns separate from “execution” concerns, which is exactly what you need when things get noisy.
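Here is a minimal sketch of that notify-then-queue handler, using only the Python standard library. The secret, the in-memory queue, the in-memory set of seen event IDs, and the function names are all assumptions for illustration; a real deployment would use a durable broker and a persistent dedupe store.

```python
import hashlib
import hmac
import json
import queue

# Hypothetical shared secret and in-process queue (assumptions for the sketch).
WEBHOOK_SECRET = b"replace-me"
publish_queue: "queue.Queue[dict]" = queue.Queue()
seen_event_ids: set[str] = set()  # replay protection, in-memory only


def sign(body: bytes, secret: bytes = WEBHOOK_SECRET) -> str:
    """Hex HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str) -> int:
    """Verify, dedupe, enqueue, and return an HTTP-style status code."""
    # Constant-time comparison avoids leaking signature prefixes via timing.
    if not hmac.compare_digest(sign(body), signature):
        return 401  # signature mismatch: do not trust the payload
    event = json.loads(body)
    if event["event_id"] in seen_event_ids:
        return 200  # replayed delivery: acknowledge, do not enqueue again
    seen_event_ids.add(event["event_id"])
    publish_queue.put(event)  # workers do the actual publishing later
    return 202  # accepted for processing
```

The handler never talks to the CMS. It only decides whether the delivery is authentic and new, which is what keeps two simultaneous deliveries from racing each other into a publish.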

Teams that treat webhooks like a fire-and-forget API call tend to chase ghosts. Debugging becomes guesswork: did the CMS time out, or did two handlers publish at once? Move to notify-then-queue and those mysteries mostly disappear.

What is CMS-agnostic orchestration and why should you care?

CMS-agnostic orchestration means a stable event language and safety rules that don’t change when the CMS changes. The orchestrator translates uniform events into connector-specific calls, applies QA, handles retries, and writes the audit trail. New CMS, same contract. Your pipeline stays consistent while tools evolve.

This matters because every CMS has quirks. One treats slugs differently, another handles versions oddly, a third returns 200 on partial failures. A thin orchestration layer absorbs those differences. You maintain one system of record—not five. For a deeper look at modern CMS landscapes, this headless CMS overview provides useful context.

The Real Root Causes Behind Unreliable Publishing

Publishing breaks because integrations jump straight to API calls without contracts, QA gates, or version rules. The CMS is treated as workflow, not storage, so approvals and quality live in the wrong place. The fix is a stable orchestration layer that becomes the source of truth while the CMS renders.

What traditional CMS integrations overlook

Most teams start by wiring “publish” directly to “create post.” It works until you add drafts, approvals, retries, and partial failures. Without an event contract, you’ll smuggle decisions into handler code: If draft, do this; if publish, do that; if 429, sleep. It scales poorly and breaks in weird ways.

Move approvals and QA into your orchestration layer. Treat the CMS as a datastore and renderer. The orchestrator owns sequence, safety, and truth. That means you get consistent behavior across connectors, and you can change CMSs without rewriting your entire workflow. The CMS should render content. Your pipeline should decide when content is ready.

I’ve seen teams keep “approval” inside the CMS because “that’s where editors live.” Understandable. But it creates per-tool behavior and tribal knowledge. One CMS uses checkboxes, another uses states, a third uses “workflow apps.” Put the rules in one place and let connectors map to it.

Designing event contracts that survive change

Contracts fail when they lack durable identifiers and explicit status transitions. Include fields like event_id, content_id, version, idempotency_key, occurred_at, and a signature. Name events explicitly: draft_ready, qa_passed, approved, publish_request, publish_succeeded, publish_failed. That clarity makes routing straightforward and lets you troubleshoot from the events themselves, without an analytics tool.
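One way to pin that contract down in code is a typed event plus a strict parser. This is a sketch under assumptions: the class and function names are hypothetical, and the field types (an integer version, an ISO 8601 string for occurred_at) are choices made for the example, not something the contract above dictates.

```python
from dataclasses import dataclass
from enum import Enum


class EventType(str, Enum):
    DRAFT_READY = "draft_ready"
    QA_PASSED = "qa_passed"
    APPROVED = "approved"
    PUBLISH_REQUEST = "publish_request"
    PUBLISH_SUCCEEDED = "publish_succeeded"
    PUBLISH_FAILED = "publish_failed"


@dataclass(frozen=True)
class Event:
    event_id: str
    type: EventType
    content_id: str
    version: int          # assumption: monotonically increasing integer
    idempotency_key: str
    occurred_at: str      # assumption: ISO 8601 timestamp in UTC
    signature: str = ""


def parse_event(payload: dict) -> Event:
    """Build an Event from a raw payload.

    A missing required field raises KeyError; an unknown event type
    raises ValueError, so malformed events fail at the boundary.
    """
    return Event(
        event_id=str(payload["event_id"]),
        type=EventType(payload["type"]),
        content_id=str(payload["content_id"]),
        version=int(payload["version"]),
        idempotency_key=str(payload["idempotency_key"]),
        occurred_at=str(payload["occurred_at"]),
        signature=str(payload.get("signature", "")),
    )
```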

Change happens. Version your schemas and keep older consumers working: a v2 payload should still be consumable by a v1 consumer, ideally through an adapter. And document retry behavior clearly: which events are safe to replay, which aren’t, and how deduplication works. These are architecture basics, the same ideas you’ll find in gateway architecture notes.
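An adapter can be as small as one function. The sketch below is purely illustrative: the schema_version field and the idea that v2 nests identifiers under a content object are invented for the example, since the actual difference between versions depends on your own contract.

```python
def to_v1(payload: dict) -> dict:
    """Return a v1-shaped payload, downgrading a v2 payload when necessary."""
    schema = payload.get("schema_version", 1)
    if schema == 1:
        return dict(payload)
    if schema == 2:
        # Hypothetical v2 change: identifiers moved under a nested "content" object.
        content = payload["content"]
        flat = {k: v for k, v in payload.items() if k != "content"}
        flat.update(
            content_id=content["id"],
            version=content["version"],
            schema_version=1,
        )
        return flat
    # Unknown versions fail loudly instead of being half-understood.
    raise ValueError(f"unsupported schema_version: {schema}")
```

Putting the adapter in front of the consumer means the v1 consumer code never changes when a v2 producer ships.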

Treat contracts like code. They deserve reviews, versioning, and tests. If your event contract lives only in a Confluence page, you’ll eventually debug a production outage with a screenshot from six months ago. Not fun.

Who owns the source of truth when systems disagree?

Pick one. Your orchestration layer. If the CMS says “published” but your event store shows “failed,” the orchestrator must reconcile or roll back. That requires append-only logs, correlation by idempotency key, and deterministic conflict rules.

When systems disagree, humans arbitrate with the audit trail, not opinions. If an event timeline shows the last good state and subsequent failures, rollback is obvious. Without that trail, you’re guessing. Content federation patterns echo this philosophy of one interface over many backends, as outlined in this content federation perspective.

We don’t need dashboards to do this well. Internal logs and a simple viewer are enough to reconstruct what happened. Keep it boring. Boring is reliable.

Still relying on manual checks and ad-hoc fixes? You don’t have to. Try Using an Autonomous Content Engine for Always‑On Publishing.

The Hidden Costs Of Manual Recovery And Rework

Manual recovery burns hours across roles, splits SEO equity, and erodes trust. Duplicates and broken pages trigger rework that slows future releases. The cost isn’t just cleanup—it’s momentum. Teams move from creating to cleaning.

Let’s pretend it is launch week: duplicates cost hours

Let’s pretend two publish requests race. You end up with duplicate slugs, broken canonicals, and confused internal links. One engineer spends two hours cleaning the CMS, another scrubs CDN caches, a marketer fixes metadata and social cards. Three people, half a day, gone.

Multiply that by a busy quarter and you’ve quietly lost weeks. A canonical slug constraint and idempotent upserts could have prevented the mess. This is exactly the kind of “small” detail that pays big dividends when stress is high and time is short.

The part people miss is the ripple. Fix one post; five others linked to the wrong one. Now you’re chasing references. The cleanup always takes longer than you think.

The ripple effects on SEO and support

Duplicate URLs split authority. Broken pages trigger support tickets and internal pings that disrupt deep work. The longer the mess lives, the more it compounds—crawl budgets wasted, mismatched canonicals, and a cache that refuses to forget the old version.

Editors lose trust, so they hesitate next time. That hesitation becomes a bottleneck. Even if traffic recovers, momentum doesn’t. A safer pipeline is as much about preserving team energy as it is about avoiding SEO hits. For headless context, this guide to headless CMS concepts explains why decoupled stacks magnify last‑mile mistakes.

Engineering hours lost to rollback and triage

Without a real audit trail, rollback becomes archaeology. You’re grepping logs, clicking through CMS histories, and guessing the order of events. It’s slow and brittle. Instead, store each event append-only with correlation IDs and outcomes. Reconstruct timelines in minutes, not hours.
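As a rough sketch, an append-only log needs two operations: add a record, and read a timeline back by correlation ID. The class below keeps records in memory as JSON lines; the class name, the field names, and the use of the idempotency key as the correlation ID are assumptions for the example. A real store would write to durable storage.

```python
import json


class EventLog:
    """Append-only log: records are only ever added, never edited or removed."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def append(self, *, idempotency_key: str, content_id: str, event: str,
               outcome: str, occurred_at: str) -> None:
        record = {
            "idempotency_key": idempotency_key,
            "content_id": content_id,
            "event": event,
            "outcome": outcome,
            "occurred_at": occurred_at,
        }
        self._lines.append(json.dumps(record, sort_keys=True))

    def timeline(self, idempotency_key: str) -> list[dict]:
        """All records for one logical publish, oldest first.

        Sorting relies on occurred_at being ISO 8601 strings in one
        consistent format and timezone, which sort chronologically as text.
        """
        records = [json.loads(line) for line in self._lines]
        matching = [r for r in records if r["idempotency_key"] == idempotency_key]
        return sorted(matching, key=lambda r: r["occurred_at"])
```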

Put failed items into a dead-letter queue with reasons engineers can act on. Not “it failed,” but “stale version; incoming 12 < stored 14; reject.” That phrasing turns a mystery into a to-do. Technology-agnostic workflows make this pattern portable across tools; CrafterCMS describes the benefits of this approach in their note on technology-agnostic workflows.
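A sketch of what that looks like in a worker: retry transient failures with exponential backoff and jitter, stop after a threshold, and dead-letter the item with a reason someone can act on. The exception classes, the in-memory dead_letters list, and the default attempt count and delay are hypothetical choices for the example.

```python
import random
import time
from typing import Callable


class TransientError(Exception):
    """Worth retrying, e.g. a timeout or rate limit."""


class PermanentError(Exception):
    """Retrying cannot help, e.g. a stale version."""


dead_letters: list[dict] = []  # stand-in for a real dead-letter queue


def process_with_retries(item, publish: Callable, max_attempts: int = 4,
                         base_delay: float = 0.5,
                         sleep: Callable[[float], None] = time.sleep) -> bool:
    """Return True on success; otherwise dead-letter the item and return False."""
    last_reason = ""
    for attempt in range(1, max_attempts + 1):
        try:
            publish(item)
            return True
        except PermanentError as exc:
            # No retry: record the reason exactly as the connector reported it.
            dead_letters.append({"item": item, "attempts": attempt, "reason": str(exc)})
            return False
        except TransientError as exc:
            last_reason = str(exc)
            if attempt < max_attempts:
                delay = base_delay * 2 ** (attempt - 1)
                sleep(delay + random.uniform(0, delay / 2))  # backoff plus jitter
    dead_letters.append({
        "item": item,
        "attempts": max_attempts,
        "reason": f"gave up after {max_attempts} attempts: {last_reason}",
    })
    return False
```

Separating permanent from transient errors matters here. A stale version will never succeed on retry, so it goes straight to the dead-letter queue with its explanation attached.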

The Human Side Of A Bad Publish

The human cost is real: late nights, stalled approvals, and reluctance to automate. Guardrails don’t remove judgment; they make it safe to move faster. When the system catches issues, confidence returns.

The 3am rollback nobody volunteers for

You know the one. A redirect loop lands on the homepage. Someone hotfixes templates while another flushes caches and hopes for the best. Fatigue sets in. At 3am, even obvious fixes feel risky.

This is where version-aware upserts and a recorded last-good state matter. With a proper event store, rollback becomes a controlled operation instead of a guess. You select the last publish_succeeded for that content_id and reapply it. Night work becomes morning routine.
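The selection step is small enough to sketch. The function below assumes events are dicts with content_id, type, and an ISO 8601 occurred_at string; the function name is hypothetical, and how you reapply the selected state depends on your connector.

```python
from typing import Optional


def last_good_publish(events: list[dict], content_id: str) -> Optional[dict]:
    """Return the most recent publish_succeeded event for content_id, or None."""
    candidates = [
        e for e in events
        if e["content_id"] == content_id and e["type"] == "publish_succeeded"
    ]
    # Assumes occurred_at values share one ISO 8601 format, so text order is time order.
    return max(candidates, key=lambda e: e["occurred_at"], default=None)
```

If this returns None, there is no known-good state to roll back to, and that is worth knowing before anyone starts editing templates by hand.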

I’ve done the “keep the site up with duct tape” sprint. It’s a tax on future focus. The more you avoid it with structure and logs, the more your team invests energy where it matters.

When approvals stall and the story goes stale

Approvals that block the pipeline create hidden queues. People travel. Teams get busy. Content sits in limbo. Meanwhile, the topic cools off. By the time it ships, the angle is dated.

Use non-blocking callbacks with timeouts and escalation. If an approver doesn’t respond, requeue the item, notify a backup, and keep moving. Humans still make decisions. The pipeline manages the waiting. That difference keeps velocity without turning quality into an afterthought.
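A periodic sweep is one simple way to manage the waiting. In this sketch, the ApprovalRequest fields, the single backup approver, and the sweep function are assumptions; notifications are returned as strings rather than sent anywhere.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ApprovalRequest:
    content_id: str
    approver: str
    backup: str
    requested_at: datetime
    timeout: timedelta
    escalated: bool = False


def sweep(pending: list[ApprovalRequest], now: datetime) -> list[str]:
    """Requeue timed-out approvals to the backup approver; return notifications."""
    notifications = []
    for req in pending:
        timed_out = now - req.requested_at >= req.timeout
        if timed_out and not req.escalated:
            req.approver, req.escalated = req.backup, True
            req.requested_at = now  # restart the clock for the backup
            notifications.append(f"{req.content_id}: escalated to {req.approver}")
    return notifications
```

The sketch escalates once and then stops. What should happen when the backup also times out (park the item, alert a channel, fail the publish) is a policy decision it deliberately leaves open.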

The nuance here: not every approval needs the same pattern. Sensitive pieces can remain synchronous. Everything else benefits from asynchronous reviews and reminders.

Why smart teams hesitate to automate

They’re not wrong. Automation without guardrails multiplies mistakes. One misconfigured handler can publish 100 broken pages in a minute. The fix isn’t “do less automation.” It’s “do stricter automation.”

QA gates that actually block. Idempotent publishing that actually dedupes. Retries that actually stop after a threshold and record a reason. When people see the system catch issues, confidence goes up. Slowly at first, then all at once. For a broader primer, this headless CMS overview is a solid backdrop for why last‑mile rigor matters in decoupled stacks.

A Safer Architecture For The Last Mile

A safer last mile uses explicit contracts, idempotent writes, blocking QA, and non-blocking approvals. Webhooks notify into a queue. Workers process with version rules and backoff. Humans decide with context, not chaos. It’s simple, but it’s disciplined.

Design your event model and webhook contract

Define explicit event types, signatures, and a schema with event_id, content_id, version, idempotency_key, attempt, occurred_at, source, and reason. Verify origin with HMAC. Deliver to a queue, not directly to workers, and keep payloads backward compatible with versioned schemas.

Document retry semantics and duplicate delivery so every consumer is built for replays. If your contract says “events may arrive out of order,” your code will sort them or ignore stale ones. If it doesn’t, you’ll patch behavior in random places. That’s where drift begins.

Instruction: implement an event schema and HMAC signing. Route webhooks to a queue. Version payloads and document retry rules so consumers are built for replays and duplicates.

Implement idempotent publishing and versioning

Use an idempotency_key per logical publish, not per HTTP request. In the connector, upsert by a natural key like canonical_slug and enforce version checks. Lock on content_id to prevent races, then record outcomes using the same idempotency_key for correlation.

On conflict, choose last-write-wins only when the incoming version is newer. Otherwise, reject and alert with a clear remediation path, ideally including the stored version and the expected next version. This tiny bit of context prevents a Slack thread and a guessing game.

Instruction: use a canonical key and version-aware upsert. Apply a short lock and dedupe by idempotency_key. Reject stale writes with actionable error messages.
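Put together, the connector logic might look like the sketch below. Everything here is an assumption for illustration: the Connector class, the in-memory dicts standing in for the CMS and the outcome store, and a single process-wide lock used for brevity where a real connector would lock per content_id.

```python
import threading
from dataclasses import dataclass


class StaleVersionError(Exception):
    """Raised when an incoming write is not newer than what is stored."""


@dataclass(frozen=True)
class Outcome:
    content_id: str
    version: int
    status: str  # "created" or "updated"


class Connector:
    def __init__(self) -> None:
        self._store: dict[str, dict] = {}        # canonical_slug -> stored record
        self._outcomes: dict[str, Outcome] = {}  # idempotency_key -> prior outcome
        self._lock = threading.Lock()            # one lock for brevity (assumption)

    def publish(self, *, idempotency_key: str, content_id: str,
                canonical_slug: str, version: int, body: str) -> Outcome:
        with self._lock:
            # Same logical publish seen before: return the prior outcome, write nothing.
            if idempotency_key in self._outcomes:
                return self._outcomes[idempotency_key]
            stored = self._store.get(canonical_slug)
            if stored is not None and version <= stored["version"]:
                raise StaleVersionError(
                    f"stale version; incoming {version} <= stored {stored['version']}; "
                    f"expected >= {stored['version'] + 1}; reject"
                )
            status = "updated" if stored is not None else "created"
            self._store[canonical_slug] = {
                "content_id": content_id, "version": version, "body": body,
            }
            outcome = Outcome(content_id, version, status)
            self._outcomes[idempotency_key] = outcome
            return outcome
```

A retry with the same idempotency_key gets the original outcome back, and a late request carrying an older version is rejected with the stored and expected versions in the message.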

Build a QA gate service with blocking rules

Automate the checks humans are tired of catching: structural compliance, brand voice rules, KB grounding, metadata completeness, and LLM-friendly formatting. Return a score and a clear list of failures. Block publishing below threshold. Passing means the content is eligible to proceed, not guaranteed to publish.

This turns editors from fixers into approvers. They spend time on narrative decisions while the system polices structure and safety. And because it’s policy-as-code, evolving standards is a pull request, not a training session.

Instruction: implement policy-as-code checks. Return a score and failures. Only publish when the score passes your threshold.
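Policy-as-code can start as a list of small functions that each return a failure message or nothing. The three rules, their limits, and the default threshold below are made up for the example; real rules for voice or KB grounding would be more involved.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Check = Callable[[dict], Optional[str]]  # returns a failure message, or None if OK


def has_title(article: dict) -> Optional[str]:
    return None if article.get("title", "").strip() else "missing title"


def has_meta_description(article: dict) -> Optional[str]:
    length = len(article.get("meta_description", ""))
    return None if 50 <= length <= 160 else "meta_description must be 50-160 characters"


def has_enough_body(article: dict) -> Optional[str]:
    words = len(article.get("body", "").split())
    return None if words >= 50 else "body must have at least 50 words"


CHECKS: list[Check] = [has_title, has_meta_description, has_enough_body]


@dataclass
class QAResult:
    score: float          # fraction of checks passed, 0.0 to 1.0
    failures: list[str]
    passed: bool


def run_qa(article: dict, checks: list[Check] = CHECKS,
           threshold: float = 1.0) -> QAResult:
    """Run every check; block (passed=False) when the score is below threshold."""
    failures = [msg for check in checks if (msg := check(article)) is not None]
    score = (len(checks) - len(failures)) / len(checks)
    return QAResult(score=score, failures=failures, passed=score >= threshold)
```

The publish step only runs when passed is true. Changing a standard means editing or adding a check function, which is a reviewable diff.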

Add human approvals with non-blocking callbacks

Offer two patterns. Synchronous approvals for sensitive, low-volume pieces. Asynchronous callbacks for scale, with timeouts and requeue logic. If someone requests changes, produce a resubmission token tied to the same idempotency_key so re-approvals don’t create duplicates.

Use escalation paths sparingly but explicitly. The point isn’t to push everything through—it’s to prevent work from vanishing into a silent queue. For teams exploring aggregation layers, these gateway architecture notes complement the idea of a stable contract sitting in front of changing backends.

Instruction: implement callback endpoints, timeouts, and resubmission tokens. Keep the same idempotency_key through approval loops.
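One possible shape for a resubmission token is the idempotency_key plus a review round, signed so it can’t be forged. The token format, the secret, and both function names are assumptions for this sketch.

```python
import hashlib
import hmac

TOKEN_SECRET = b"replace-me"  # hypothetical; load from configuration in practice


def _sign(message: str) -> str:
    return hmac.new(TOKEN_SECRET, message.encode(), hashlib.sha256).hexdigest()


def issue_resubmission_token(idempotency_key: str, review_round: int) -> str:
    """Token format (an assumption): <idempotency_key>:<round>:<hex signature>."""
    message = f"{idempotency_key}:{review_round}"
    return f"{message}:{_sign(message)}"


def redeem(token: str) -> tuple[str, int]:
    """Return (idempotency_key, review_round); raise ValueError if invalid."""
    # rsplit keeps any colons inside the idempotency_key intact.
    key, round_str, signature = token.rsplit(":", 2)
    if not hmac.compare_digest(signature, _sign(f"{key}:{round_str}")):
        raise ValueError("invalid resubmission token")
    return key, int(round_str)
```

Because the token resolves back to the original idempotency_key, a revised draft re-enters the pipeline as the same logical publish rather than a new one.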

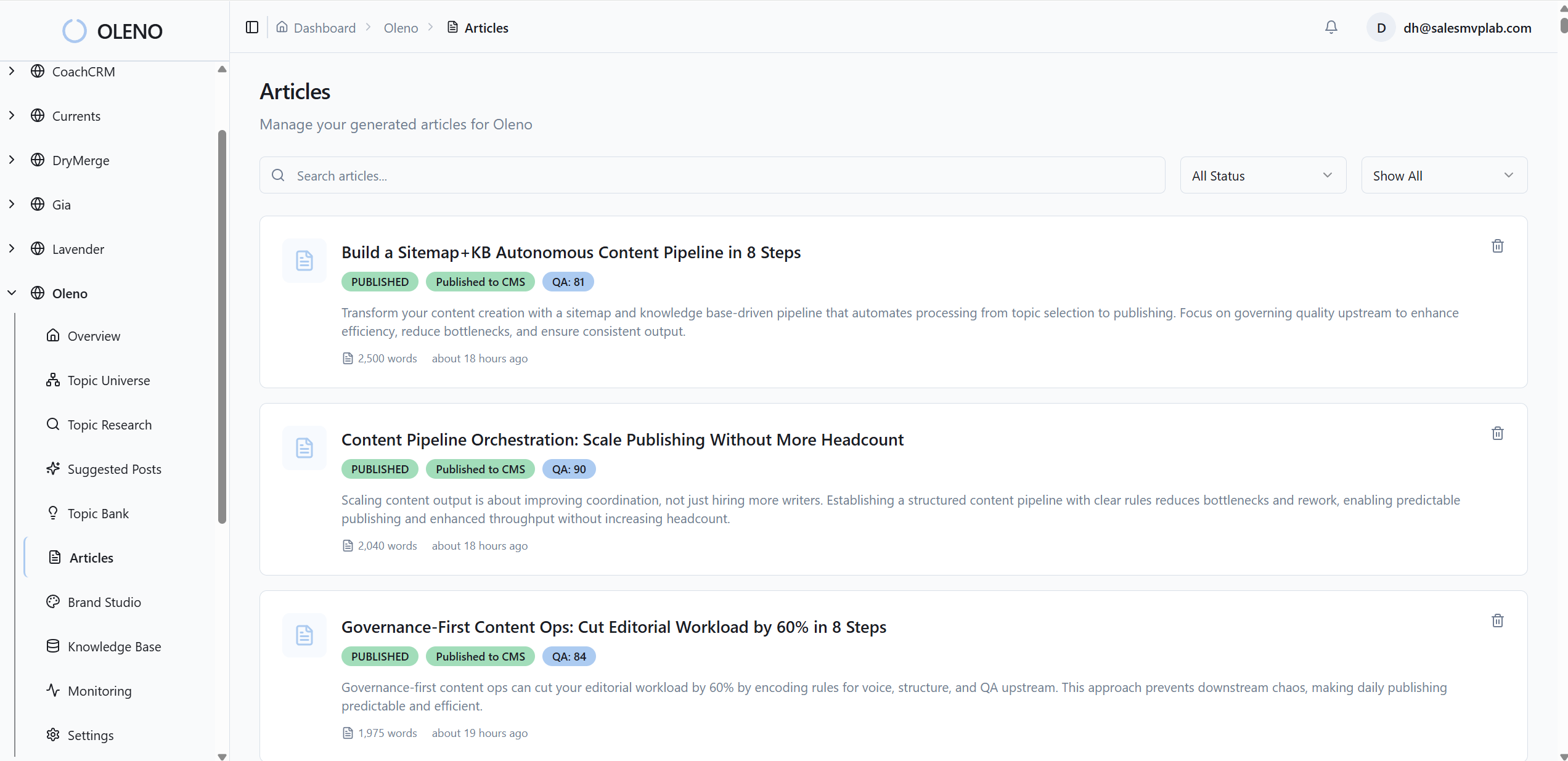

How Oleno Runs CMS-Agnostic Publishing Safely

Oleno runs a CMS-agnostic publishing pipeline with idempotent writes, policy-driven QA, and reliable retries backed by internal logs. It treats webhooks as notifications, not execution, and processes each publish with version context so retries can’t clobber newer edits. The goal isn’t flash—it’s fewer incidents and faster recoveries.

Idempotent CMS publishing with version-aware upserts

Oleno publishes directly to WordPress, Webflow, HubSpot, Storyblok, and others, and treats each publish as idempotent. It writes using canonical identifiers and version context, so late or retried requests don’t overwrite newer content. That design reduces duplicates and race conditions during high-volume windows.

In practice, this means fewer cleanup tasks for your team. When a connector sees the same idempotency_key, it resolves to the prior outcome. When it sees a stale version, it refuses to write and records the reason. You get determinism where it matters.

Policy-driven QA gates that block bad content

Before any publish, Oleno runs automated QA across structure, voice, knowledge-base grounding, and SEO/LLM formatting. Articles below threshold are revised and re-scored automatically. Nothing moves forward until it passes. This shifts work away from frustrating rework and toward higher-leverage edits when they’re truly needed.

Because QA is policy-as-code, updates are safe and auditable. You change rules once; the system enforces them everywhere. No “did we remember to check that” anxiety right before go-live.

Reliable retries and dead-letter handling

Publishing attempts are logged and retried with backoff. If a connector fails repeatedly, Oleno stops and records the failure in an internal log for safe triage. Editors don’t get stuck; they keep working while the system either recovers or flags the item for attention.

This is not an analytics dashboard. It’s a reliability layer: inputs, attempts, outcomes, and version history. Enough to reconstruct a timeline and take action without inventing new tools or processes.

Audit trail and connectors across popular CMSs

Oleno maintains internal, append-only logs of pipeline inputs, publish attempts, retries, and version history. That audit trail makes root-cause analysis straightforward and rollbacks predictable. Because connectors span popular CMSs, your orchestration layer stays consistent while tools evolve beneath it.

If you’ve been wrestling with duplicates, broken pages, or murky rollbacks, you don’t have to keep paying that tax. Let the system handle the last mile so your team can focus on the story. Try Oleno For Free.

Conclusion

You don’t need a bigger team to stop shipping duplicates. You need a stricter last mile. Contracts, queues, idempotency, QA gates, and non-blocking approvals—implemented once, enforced everywhere. When the orchestration layer owns truth and safety, the CMS can just render. That’s how you protect launch week and keep momentum intact.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions