Competitive Content Analysis: How to Stay Ahead

Competitive content analysis falls apart when teams treat it like a research task. It’s a positioning system, and when that system is weak, every comparison page, sales battlecard, and competitor article starts saying slightly different things.

For a Product Marketing Manager at a scaling SaaS company, this gets ugly fast. You’re not just trying to publish more content. You’re trying to keep product truth, market framing, and competitive positioning from drifting every time a new writer, PMM, or agency touches the work.

Key Takeaways:

- Competitive content analysis breaks when teams treat it like isolated research instead of governed positioning work

- The real bottleneck is not writing speed. It’s fragmented execution across PMM, content, SEO, and review

- Strong competitive content analysis starts with category framing, audience context, use cases, and product truth

- High-output teams build a repeatable system for competitor analysis before they publish comparison content

- In the GEO (generative engine optimization) era, consistency beats random bursts of optimized content

- Product Marketing Managers need a way to keep messaging accurate across every evaluation-stage asset

Why Competitive Content Analysis Breaks at Scale

Competitive content analysis breaks at scale because most teams are analyzing competitors without first locking their own story. You end up with a pile of observations, screenshots, and feature notes, but no stable frame for what matters and why. So every asset gets rebuilt from scratch.

I’ve seen this pattern a lot. A PMM starts a comparison page with good intentions. They open five competitor tabs, pull a few lines from the homepage, maybe scan pricing, maybe look at a few feature pages, and then try to summarize the market. It feels productive. But what they’re really doing is creating one more isolated opinion inside the company.

The research usually isn’t the real problem

The problem isn’t that teams can’t gather data. Most can. The problem is that competitive content analysis gets treated like a one-off content exercise instead of a governed GTM function. So content says one thing, sales says another, and product marketing has to keep cleaning it up after the fact.

That’s where scaling SaaS teams get burned. Once you have multiple contributors, the cost of inconsistency rises fast. One writer frames a competitor around features. Another frames them around audience. Another frames them around pricing. Another one writes a balanced comparison that sounds nothing like your sales calls. Now your market story is scattered.

Why this gets worse as teams grow

Growth does not clean this up. It usually makes it worse. More PMMs, more content contributors, more approvals, more context gaps. The coordination cost starts to exceed the creation cost, which is kind of insane when you think about it.

And this is the part that wears people down. You review a draft and it’s not technically terrible, but it’s off. The framing is soft. The product claims are fuzzy. The audience isn’t clear. So you rewrite it. Again. Sound familiar?

GEO raises the stakes

Competitive content analysis matters more now because LLMs read the whole surface area of your market presence, not just one page. If your comparison content keeps changing voice, argument, and definitions, you send a weak signal. Search engines used to reward plenty of tactical moves. GEO rewards consistency and fundamentals far more.

That’s why random competitive pages rarely compound. They may rank for a bit. They may even pull some traffic. But they don’t build a clear market position.

The Real Issue Is Fragmented Positioning, Not Missing Research

The real issue is not missing research. It’s fragmented positioning. Most teams think they need better competitive intelligence. What they actually need is a better system for deciding how that intelligence gets interpreted and expressed.

I remember hearing April Dunford on a panel years ago. Some guy was going on and on about tools and tactics. Grab your list here. Run this play there. Chain together this stack. Then April jumped in and basically said tactics without strategy are shit. She was right then, and she’s even more right now.

Competitive analysis without positioning turns generic

Competitive content analysis gets generic when the team hasn’t clearly defined the category, the enemy, the old way, the new way, and the audience-specific reason your approach matters. Without that, your writer is left doing their best impression of product marketing. That almost never ends well.

So what happens? You get safe language. Soft comparisons. Long sections that describe everyone fairly but say almost nothing. It reads polished. It has no teeth. And it won’t move pipeline because the buyer never gets a sharp frame for evaluating the market.

That’s one of the hidden problems with a lot of AI content tools and SEO tools. They are anchored in channels and outputs. They can help you produce content. They can’t decide what your company believes. They don’t know your market POV. They don’t know your product boundaries. They don’t know which competitor difference actually matters to a Senior PMM versus a CMO.

Most teams are solving the wrong layer

If you’re a Product Marketing Manager, you feel this every week. The issue shows up as rewrite cycles, launch delays, stale competitive pages, and assets that don’t quite match the sales story. But the root cause sits lower in the stack.

It’s usually one of these:

- no shared category framing

- no approved product truth

- no audience and persona context in the brief

- no use case mapping

- no rules for fair competitive claims

- no system enforcing consistency across assets

That’s why competitive content analysis feels heavier than it should. You’re not just analyzing competitors. You’re rebuilding your company’s positioning every single time.

Why manual review doesn’t save you

Some teams think the answer is more review. More PMM review. More legal review. More exec review. More edits. But review is not a system. It’s a patch.

Frankly, that patch gets expensive. Every extra reviewer adds delay. Every delay adds more drift because the market moves, the product changes, or the context gets lost between handoffs. You can brute force this for a while. You can’t scale it.

How Strong Teams Build Competitive Content Analysis That Compounds

Strong teams build competitive content analysis by creating one repeatable interpretation layer before they ever write. They decide what matters, to whom, and how they will talk about it. Then content becomes execution, not reinvention.

This is the shift. Instead of asking, “What do competitors say?” you ask, “How should our market, product, and buyer context shape what we notice and how we frame it?” That question changes everything.

Start with the market frame, not the competitor page

Competitive content analysis should begin with your own category point of view. If you don’t know the old way versus the new way, your comparisons will stay shallow. You’ll compare features because that’s the easiest thing to compare.

But buyers don’t only buy features. They buy a frame that helps them make sense of tradeoffs.

In practice, that means your team should define:

- What category are we really in?

- What broken old way are we arguing against?

- What does the better approach look like?

- Which differences actually matter for our buyer?

A good competitive page is not a feature checklist with a logo table slapped on top. It’s a decision aid. It helps the buyer understand why one way of operating makes more sense than another.

Anchor the analysis to audience and persona

Competitive content analysis gets much better when it is audience-specific. A generic comparison tries to talk to everyone. That usually means it persuades no one.

A scaling SaaS PMM cares about different things than a founder at a 20-person startup. A Senior PMM evaluating GTM content systems cares about message control, feature accuracy, launch speed, and the amount of cleanup required after draft creation. So your analysis should reflect that reality.

What I’ve seen work is simple. For each competitor analysis, force the team to answer:

- Who is this asset for?

- What job are they trying to do?

- What objections do they already have?

- Which product truths matter most in their evaluation?

That sounds obvious. But most teams skip it. Then they wonder why the article feels smart but doesn’t convert.
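
One way to make those questions hard to skip is to treat them as required fields in the brief. Here’s a minimal Python sketch of that idea. The `CompetitiveBrief` class, its field names, and the `missing_answers` helper are all hypothetical names made up for illustration, not part of any specific tool.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class CompetitiveBrief:
    """Hypothetical brief structure; field names are illustrative assumptions."""
    audience: str              # Who is this asset for?
    job_to_be_done: str        # What job are they trying to do?
    objections: List[str]      # What objections do they already have?
    product_truths: List[str]  # Which product truths matter most in their evaluation?


def missing_answers(brief: CompetitiveBrief) -> List[str]:
    """Return the names of brief fields that were left empty, in field order."""
    missing = []
    for f in fields(brief):
        value = getattr(brief, f.name)
        if isinstance(value, str):
            value = value.strip()  # treat whitespace-only answers as empty
        if not value:
            missing.append(f.name)
    return missing


# Example: a brief that names the audience but skips the job and the objections.
brief = CompetitiveBrief(
    audience="Senior PMM at a scaling SaaS company",
    job_to_be_done="   ",
    objections=[],
    product_truths=["Approved feature boundaries are documented"],
)
print(missing_answers(brief))  # ['job_to_be_done', 'objections']
```

The point is not the code itself. It’s that an incomplete brief gets caught before a writer starts, not during the third round of review.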

Build around use cases, not abstract capabilities

This is where a lot of competitive content goes wrong. It stays at the feature level. Feature vs feature. Tab vs tab. Bullet vs bullet. Buyers rarely think that way.

They think in workflows. They think in outcomes. They think in friction.

So instead of comparing “AI writer” to “AI writer,” compare how each approach handles a real PMM job:

- launching a new feature accurately

- building comparison pages without factual drift

- keeping positioning consistent across content types

- producing evaluation content without endless rewrites

Now your competitive content analysis becomes useful. It connects the market story to actual work. And when it does that, readers start seeing themselves inside the page.

Create an approved product truth layer

This matters more than people admit. A lot of competitive content fails because the writer doesn’t know what the product actually does, what it doesn’t do, and where the boundaries are. So they overstate. Or understate. Or they write vague mush that says nothing.

You need approved product definitions. Not just for your own product, but for how you describe the relevant comparison categories and feature boundaries. Otherwise, every asset creates new risk.

I learned this the hard way years ago. The more contributors you have, the more your product truth gets diluted unless it’s documented somewhere stable. You can feel the drift in the writing. It gets blurrier. Less confident. Less specific.
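
To make “documented somewhere stable” concrete, here’s a rough Python sketch, assuming a draft tags each product claim with an ID. The claim IDs, the wording in `APPROVED_CLAIMS`, and the `unapproved_claims` helper are all invented for a made-up product. The only idea being shown is that a draft can be checked against one approved list.

```python
# Hypothetical approved product truth layer for a made-up product:
# claim IDs mapped to the wording the team has signed off on.
APPROVED_CLAIMS = {
    "sso": "Supports single sign-on for team accounts.",
    "csv_export": "Exports reports as CSV files.",
}


def unapproved_claims(draft_claim_ids, approved=APPROVED_CLAIMS):
    """Return the claim IDs used in a draft that have no approved definition, sorted."""
    return sorted(set(draft_claim_ids) - set(approved))


# Example: the draft makes one approved claim and one nobody has signed off on.
print(unapproved_claims(["sso", "realtime_pricing"]))  # ['realtime_pricing']
```

Anything that comes back from a check like this is either a claim that needs approval or a claim that needs to come out of the draft.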

Make fairness part of the system

Good competitive content analysis is not about taking cheap shots. It’s about helping buyers evaluate tradeoffs clearly. That means you should be precise about where a competitor fits, where they don’t, and what context matters.

Some teams are scared to do this because they think fairness means sounding neutral. It doesn’t. You can be fair and still be sharp. In fact, that’s usually more credible.

A useful structure looks like this:

- where this competitor is a strong fit

- where their approach creates limitations

- where your approach differs structurally

- who should care about that difference

That kind of analysis earns trust. Buyers can smell when a page is dodging nuance.

Turn competitive work into a repeatable operating model

At some point, the team needs to stop producing isolated assets and start building a system. That means the process should be stable enough that a new PMM, content marketer, or writer can create on-brand competitive content without starting from zero.

A repeatable system usually includes:

- a shared category narrative

- audience and persona rules

- use case definitions

- approved product claims and limits

- competitive source material

- a review bar for fairness and accuracy

Once you have that, competitive content analysis stops being a fire drill. It becomes a durable GTM asset creation process.

Why this compounds in the GEO era

This is the overlooked part. Consistent competitive content analysis does more than publish pages. It teaches the market how to categorize you. It teaches search engines and LLMs what you stand for. It reinforces your vocabulary, your positioning, and your product definitions over time.

That compounding effect is why consistency beats random bursts of output. I’ve seen teams publish a lot and get very little from it. Then I’ve seen smaller teams publish with strong structure and clean narrative consistency, and the market starts to understand them much faster.
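
Vocabulary drift is one piece of that consistency you can check mechanically. The Python sketch below is only an illustration under stated assumptions: the `PREFERRED_TERMS` mapping and the variants in it are hypothetical, and a real team would fill in its own approved terms.

```python
import re

# Hypothetical vocabulary rules: preferred term -> variants the team wants to flag.
PREFERRED_TERMS = {
    "competitive content analysis": ["competitor content audit", "competitive audit"],
}


def find_term_drift(text, preferred=PREFERRED_TERMS):
    """Return {preferred term: [flagged variants found in text]}, case-insensitive."""
    lowered = text.lower()
    drift = {}
    for term, variants in preferred.items():
        found = [
            v for v in variants
            if re.search(r"\b" + re.escape(v) + r"\b", lowered)
        ]
        if found:
            drift[term] = found
    return drift


# Example: a page that uses a flagged variant instead of the preferred term.
print(find_term_drift("Our Competitive Audit shows where rivals fall short."))
# {'competitive content analysis': ['competitive audit']}
```

A check like this only catches wording. It won’t tell you whether the argument itself has drifted, which still takes a PMM’s judgment.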

If you want to see what this looks like in practice, Request a Demo and we’ll walk through how teams systematize competitive content analysis without adding another messy review loop.

What Oleno Does When You Need Accuracy Without Constant Rewrites

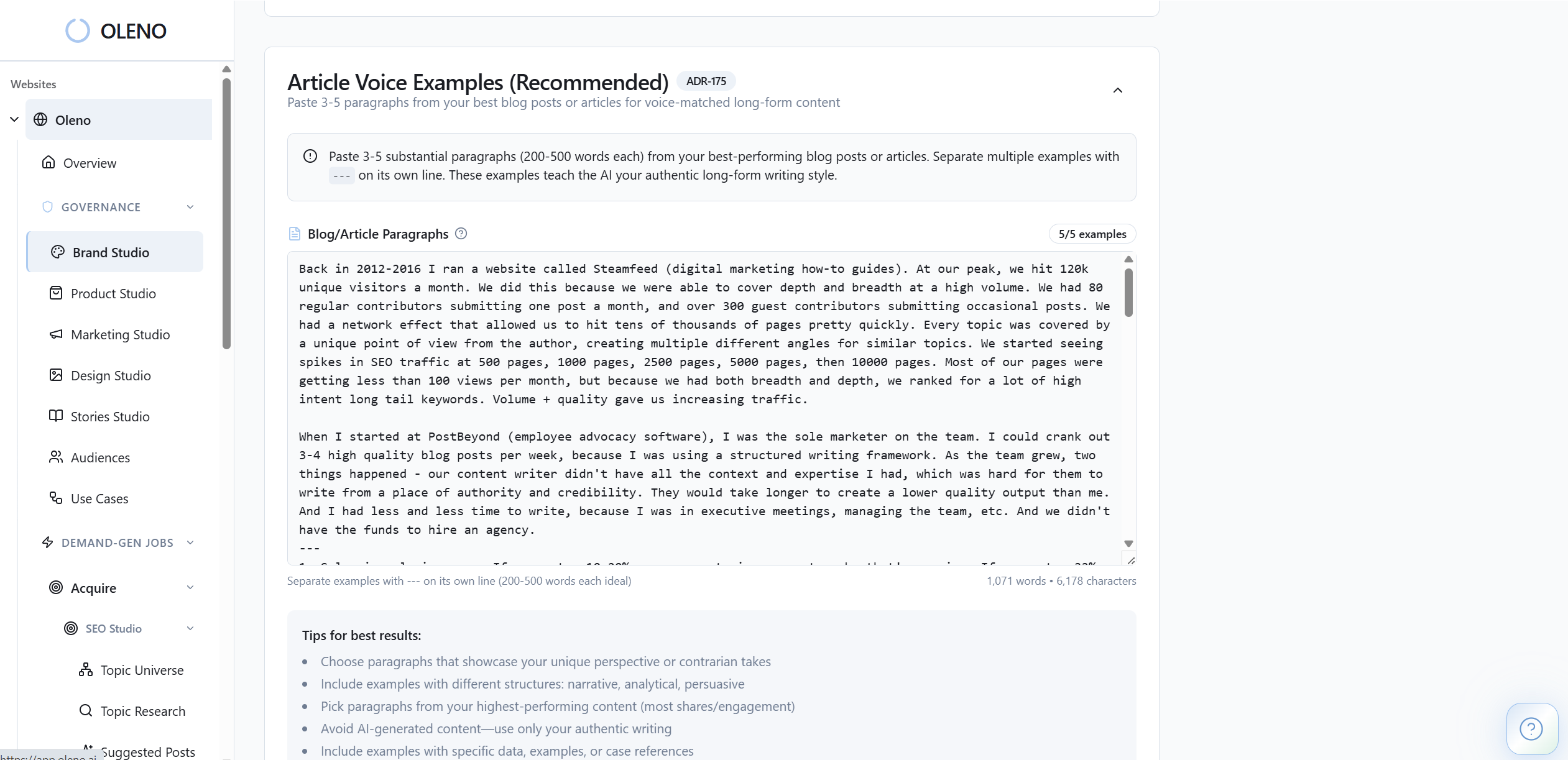

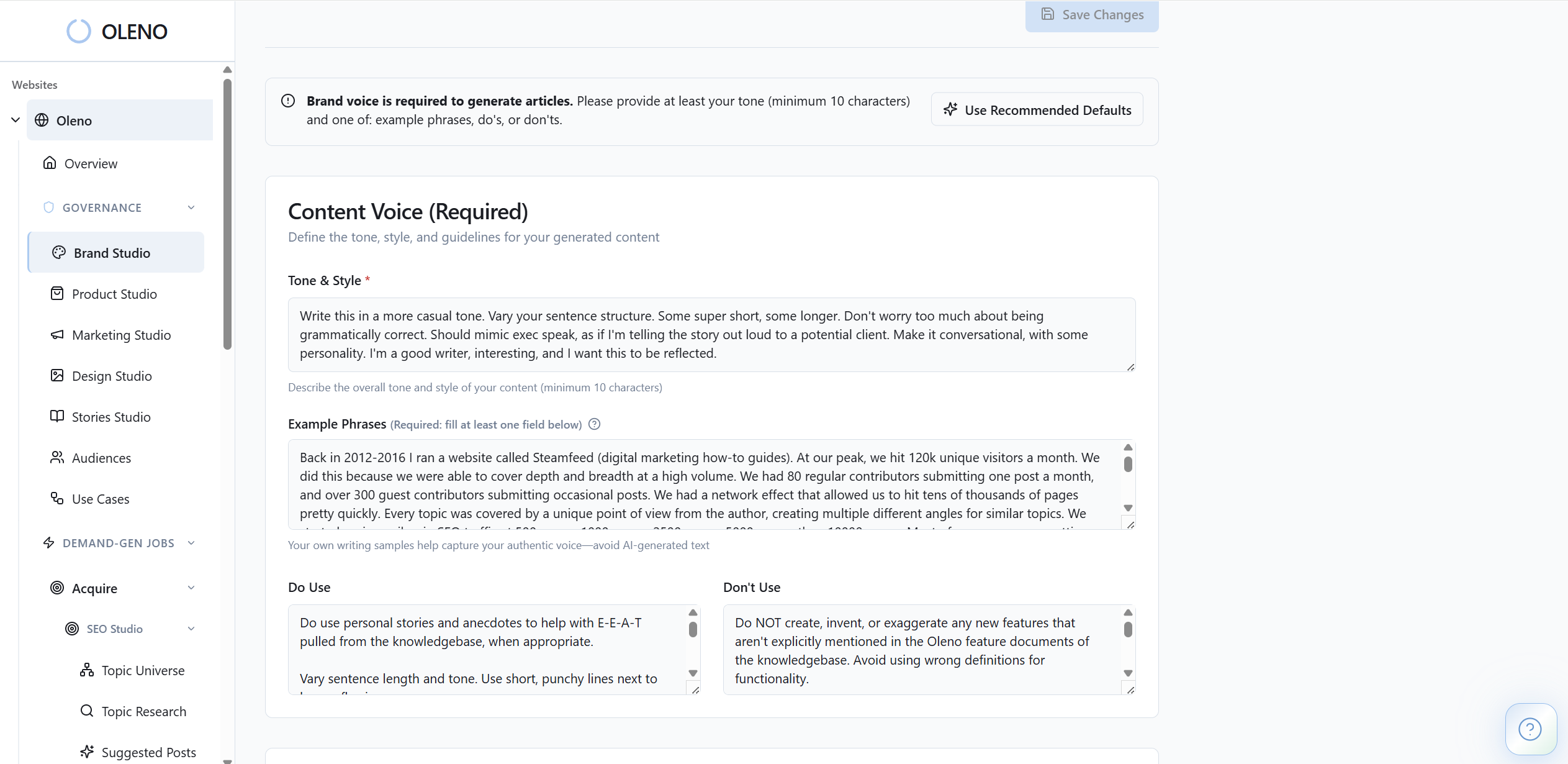

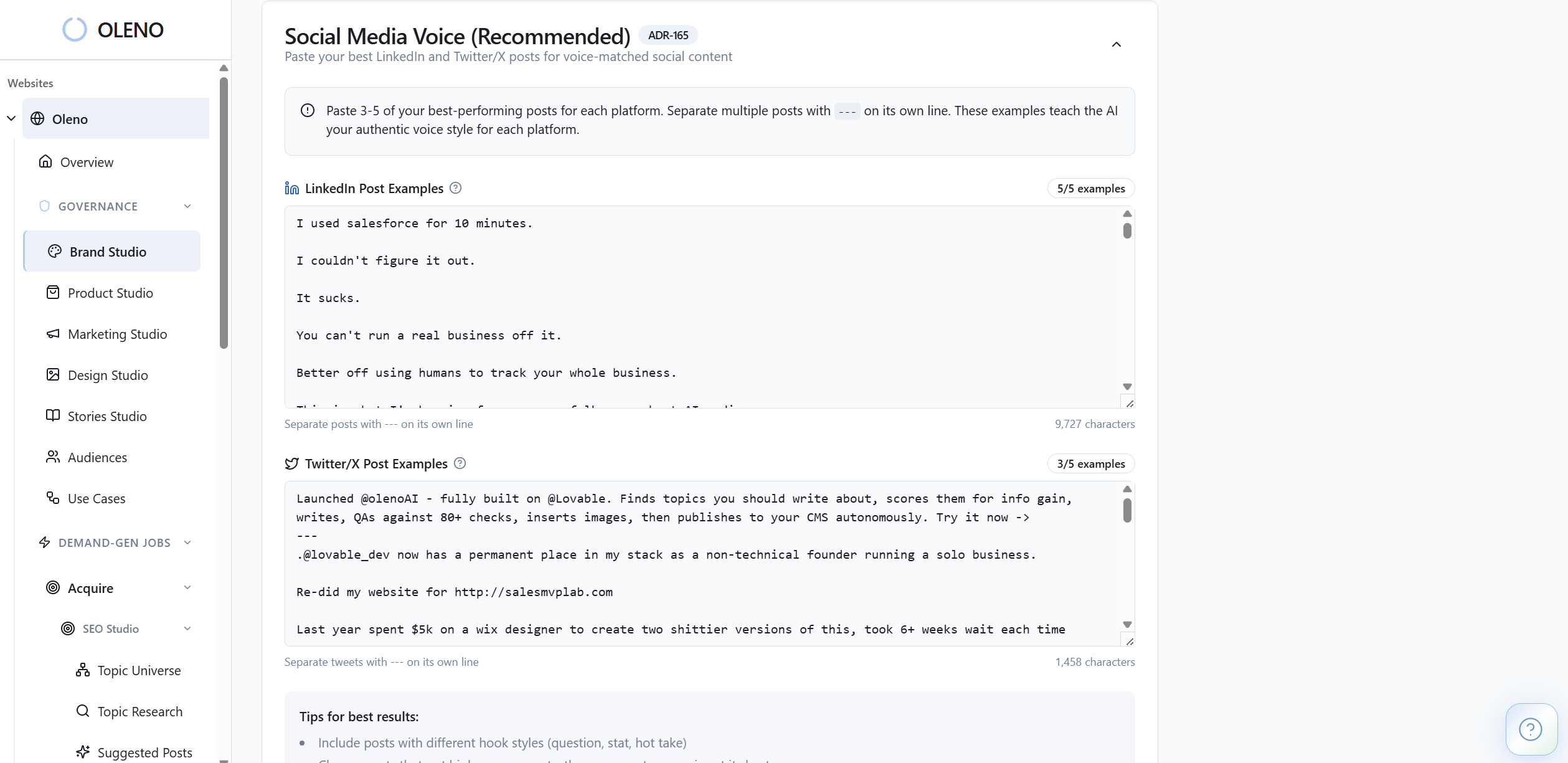

Oleno handles competitive content analysis by giving teams a governed system for positioning, product truth, audience context, and execution. That matters because most PMMs don’t lose time on writing alone. They lose time fixing drift after the draft is done.

The goal is not to replace product marketing judgment. It’s to stop wasting that judgment on the same corrections over and over.

Governance first, then competitive execution

Oleno starts with the layers most tools skip. Marketing Studio lets your team define key messages, category framing, and narrative structures so competitive assets argue from a real point of view instead of generic education. Product Studio stores approved product descriptions, feature boundaries, supported use cases, and limits, which keeps drafts from wandering into fuzzy or inaccurate claims.

Audience & Persona Targeting and Use Case Studio matter here too. They shape how the same competitive topic gets framed for a Product Marketing Manager versus another buyer, and they keep comparisons tied to real workflows instead of vague capability language. That’s a big deal if your team is publishing content across multiple segments and keeps paying a rewrite tax.

Competitive content with a stable fact base

For actual competitive content analysis, Oleno uses Competitive Studio. You define competitor domains and product URLs, and the system builds a competitive knowledge base from that material. Then it generates structured competitive briefs and drafts with fairness rules and competitive-specific QA checks built into the process.

That changes the work. Your PMM is no longer starting from a blank page every time a comparison needs to be written or refreshed. The system has the source context, the approved market narrative, and the product truth available before drafting starts. So the first draft is closer. Not perfect every time, but a lot closer.

What I like about this model is that it respects boundaries. Competitive Studio does not monitor competitor pricing in real time or create attack ads. Product Studio does not invent features. Quality Gate evaluates voice, structure, grounding, and clarity before content moves forward. So the process is built to reduce risk, not just produce more words.

If your team wants to see how that works in a real GTM workflow, Book a Demo. We can show you how Oleno turns competitive content analysis from recurring cleanup work into a repeatable operating system.

Build a Competitive Content System Before the Rewrite Tax Gets Worse

Competitive content analysis is not hard because competitor pages are hard to read. It’s hard because most teams are trying to produce sharp market-facing content without a system that holds positioning, product truth, and audience context together.

That’s why the work feels heavier every quarter. More contributors. More pages. More inconsistency. More rewrites.

The fix is to stop treating competitive content as isolated output and start treating it like governed demand-gen execution. If you want a clearer way to do that, Request a Demo and see how Oleno helps scaling SaaS teams keep competitive content analysis accurate, consistent, and useful.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS, in both sales and marketing leadership, for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions