Why Consistency Beats Volume in LLM-Driven Visibility

You felt this this week: a draft showed up looking fine on the surface, then stole 40 minutes of your night because it sounded like a company you don't run. That's why consistency beats volume when the machine deciding who gets cited is reading the pattern across 50 assets, not falling in love with your single best article.

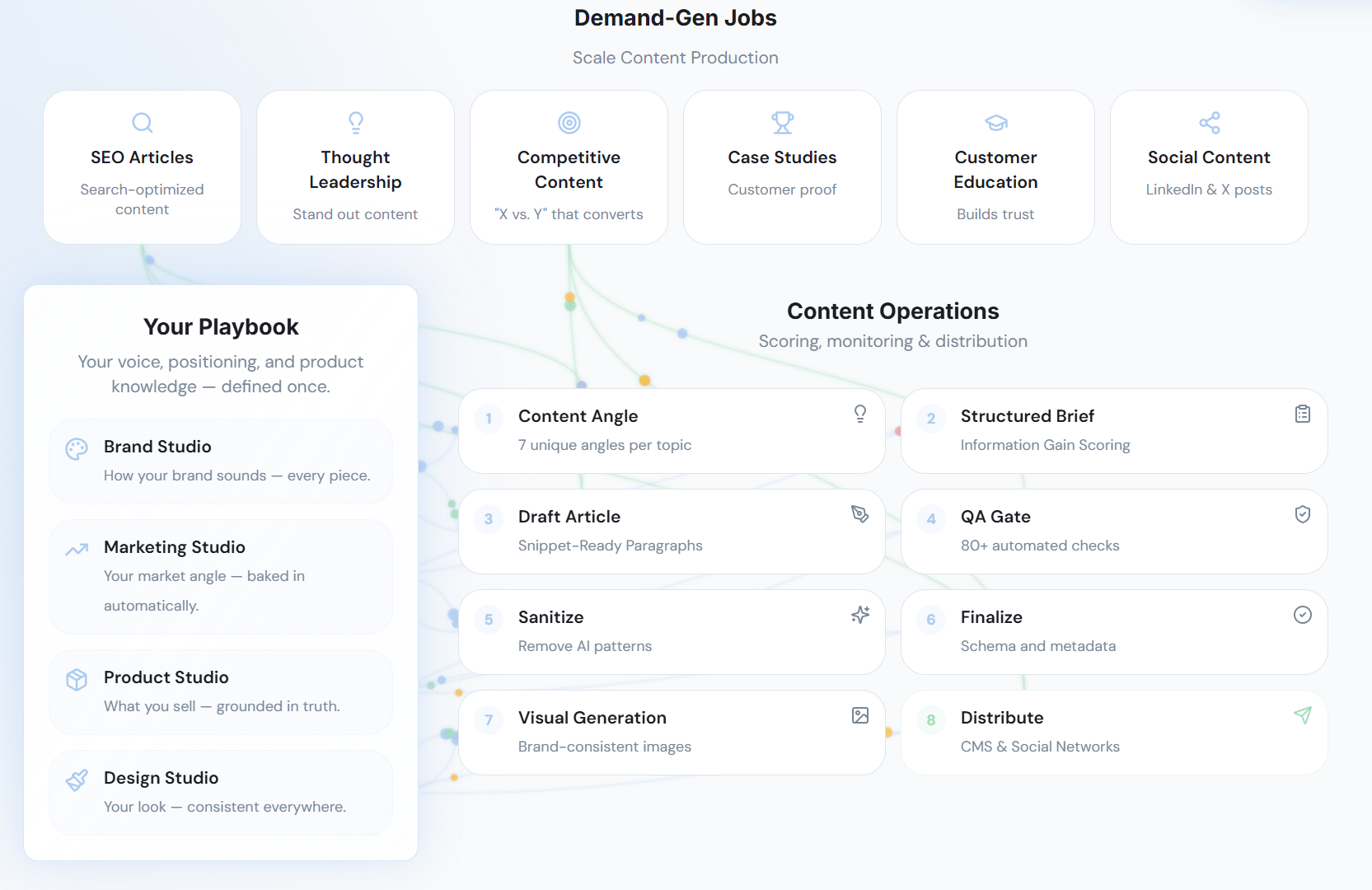

Demand-gen content execution platform is a governed marketing system that turns strategy into consistent, multi-channel demand generation output by applying brand rules, product truth, audience context, and publishing workflows across content creation at scale. Unlike an AI writing tool, a demand-gen content execution platform is built to carry strategic context from leadership all the way to published output.

The timing matters. This category showed up because SEO tactics alone stopped being enough once LLMs started choosing which brands looked trustworthy enough to summarize.

Key Takeaways:

- LLM visibility is pattern recognition, not article-by-article judgment

- The real enemy is The Strategy-Execution Trade-off, the false choice between speed and control

- More drafts can actually weaken your market signal if your message drifts

- Rewrites, approvals, and tone fixes are not minor inefficiencies. They're visibility debt

- Category leaders design consistency into the system before content gets created

- The teams that get cited most often usually sound like one company everywhere

More Content Does Not Mean More LLM Visibility

LLM-driven visibility comes from repeated clarity, not isolated bursts of output. If you came up in the SEO era, that feels backwards, because volume used to cover a lot of sins.

I get why that playbook stuck. Back in 2012-2016 I ran a website that hit 120k monthly visitors, and a big part of it was scale. We had 80 regular contributors, 300-plus occasional ones, and traffic spikes at 500, 1000, 2500, 5000, then 10000 pages. Most pages got under 100 visits a month. Still worked. Why? Because the catalog had breadth, depth, and enough point of view to hold together. Volume helped, sure. But even then, consistency beats volume when the underlying signal falls apart.

LLMs Reward Repeated Clarity, Not Random Bursts

78% of what matters to an LLM is not your best page. It's whether your definitions, claims, audience framing, and product language line up over time.

A search engine could rank one strong standalone page. An LLM does synthesis. Different game. It looks across your site, third-party mentions, product pages, category content, comparison pages, founder POV, and help docs. When those pieces reinforce the same market story, you're easier to cite. When they conflict, you go blurry.

Think of it like packet loss in a network. One dropped packet? Annoying, not fatal. But enough inconsistency across enough hops and the message arrives mangled. Same thing here. A content team can publish plenty and still send a corrupted signal into the market.

A Tuesday example makes this real. Your content manager drafts a blog in Docs at 2 PM. Sales is still using an old deck that frames the category differently. Product marketing updates a webpage on Wednesday with safer language because legal got nervous. By Friday, an LLM crawling the whole footprint doesn't see authority. It sees disagreement. That's the question the next section has to answer: if volume isn't the lever, what exactly is breaking?

The Old Volume Playbook Breaks When Machines Interpret Your Brand

Back when search was more keyword-driven, publishing 30 decent articles could outrun a team publishing 10 strong ones. In some parts of search, that still works. Fair concession. I am not arguing volume stopped mattering.

What changed is the evaluator. When the system deciding visibility is trying to infer who you are, what you stand for, and whether your explanation is stable enough to reuse, consistency starts carrying more weight than raw throughput. According to Google's documentation on AI Overviews and generative experiences, systems increasingly synthesize across sources rather than simply forwarding one blue link at a time (Google Search Central).

So what happens if your homepage says one thing, your blog says another, your comparison pages hedge, and your thought leadership sounds like a different company entirely? Ambiguity. And ambiguity is expensive.

Not because the content is terrible. Because the machine has to do interpretive labor on your behalf. And if a model has to guess what you mean, you're already losing ground. Which brings us straight to the real enemy.

The Strategy-Execution Trade-Off Feels Normal Because It Looks Like Progress

The Strategy-Execution Trade-off is the real enemy here. It's the false choice between strategic control and execution speed. Most CMOs don't call it that. They just live it.

You either keep standards high through manual review, which slows everything down, or you use AI and outside contributors to move faster, which creates drift that pulls senior people back into the edit loop. It looks productive. Drafts move. The calendar stays full. Content ships. But shipping isn't the same as compounding.

There's a day-in-the-life version of this that plays out constantly. A VP Marketing opens Asana at 8:10 AM and sees six assets marked "ready for review." By 11:30, they've rewritten positioning on two, softened claims on one, fixed product language on another, and left twelve comments asking what audience this was even for. The team feels busy. The leader feels trapped. The business gets output, but not a stronger signal.

If you want to see how a systems approach changes that, you can request a demo. The point isn't more content. It's a more coherent market signal.

That false trade-off is why so many teams look busy and still don't get seen.

The Problem Is Not Output. It's Signal Integrity

The market doesn't reward you for having a lot to say. It rewards you for being easy to understand. That's a very different problem.

Most teams think they have a content production issue. Usually they don't. They have a signal integrity issue. Strategy sits in one place. Execution happens somewhere else. Every handoff strips context away. So yes, consistency beats volume when the system reading your brand is really checking whether the signal survives the handoff.

Most Teams Don't Have a Content Problem. They Have a Signal Problem

What gets mistaken for a capacity issue is often a coherence issue. You have writers. Or freelancers. Or an agency. Or AI tools. Or all four. Still, the market isn't getting one clear point of view from your company.

Use the 3-Layer Drift Test:

- Does your category framing show up the same way on your homepage, blog, and sales deck?

- Would two different writers describe your product in almost the same words?

- Can your PMM review a draft in under 15 minutes without heavy rewrites?

If you answer no to two out of three, your problem isn't output. It's execution drift. That's a threshold, not a vibe.

Monday morning, this shows up fast. A content lead pulls up the homepage, a recent webinar deck, and last week's blog post. Three assets. Three different category descriptions. Then a PMM opens a draft and spends 27 minutes rewriting product framing that should have been locked before drafting started. Before lunch, you've diagnosed it: not a writing shortage, a signal shortage.

If the issue were pure capacity, more writers would solve it. Usually they don't. The next question is why strategy keeps dying on the way to execution.

Strategy Dies in the Handoff

When I started at PostBeyond, I could write 3-4 strong posts a week because I had the context in my head. As the team grew, the writer didn't have all that context, which is normal. Quality dropped. Speed dropped too. And I had less time because I was in exec meetings and managing more of the business. So the supposed scale move created more drag.

That's the handoff problem. Strategy is rich at the leadership layer and thin by the time it reaches the draft. The writer gets a brief. The agency gets a kickoff. The AI gets a prompt. None of those are the same as transferred judgment.

Not because people are bad at their jobs. Because the system wasn't built to carry context. Big difference.

Picture the relay race version. Leadership runs the first leg with a full map of the market. Then, somewhere between the brief, the freelancer, the prompt, and the review round, the baton gets swapped for a sticky note. Everyone is still running hard. They're just no longer carrying the thing that mattered.

That's why the next failure mode feels so sneaky.

Fast Drafting Without System Control Creates Noise

Prompting is useful for tasks. It isn't a full execution model.

Anthropic's own prompt engineering guidance says this pretty clearly in a quiet way: output quality depends heavily on context, examples, structure, and constraint (Anthropic docs). Which means the human team still has to supply the system around the prompt.

So if each draft is a standalone event, each one becomes another chance for your message to bend. Tone bends. Claims bend. Audience fit bends. Your point of view gets softer. Or broader. Or just generic enough to be harmless.

And harmless content almost never gets remembered.

One honest limitation here: strong writers can absolutely compensate for weak systems for a while. That's true. Especially on a small team with a founder still close to every word. But once publishing volume, channels, and contributors expand, heroics stop scaling. What felt like craftsmanship becomes dependence. And dependence is expensive.

The Cost of Inconsistency Compounds Faster Than Teams Expect

One weak draft is annoying. Fifty weak drafts create a market problem. That's the math a lot of teams miss.

They look at the writing budget. They should be looking at the rewrite budget, the review budget, the opportunity cost, and the visibility loss from mixed signals. This is where consistency beats volume when you stop counting published pieces and start counting signal degradation.

Every Rewrite Proves the System Never Carried the Strategy

A rewrite isn't just an editing event. It's evidence. Evidence that the strategic context never made it into execution in the first place.

Let's make it concrete. Say your VP Marketing spends 35 minutes cleaning up each draft and your team publishes 20 pieces a month across blog, product pages, social variations, and sales support content. That's almost 12 hours a month from one senior leader. Over a quarter, you're past 35 hours. Basically a week of executive time burned on translation.

Visible cost, though, is only half of it. The hidden cost hits harder. The leader stops delegating. The team slows down. Standards become trapped in one person's head.

Before: the team thinks review is a quality safeguard. After: you realize review is acting as a prosthetic for a broken system. That's the Rewrite Tax Rule—if senior review time exceeds 10 hours per month per function, you no longer have a content workflow; you have manual compensation.

And once that tax shows up, LLM visibility starts slipping for a reason most dashboards won't tell you.

Narrative Drift Makes You Harder for LLMs to Trust

What does an LLM do with a brand that describes the same category six different ways? Usually, not much.

LLMs need stable definitions. Stable contrasts. Stable product descriptions. Stable audience cues. If your site says you're analytics software, your category pages say enablement platform, your founder posts say workflow layer, and your comparison content sounds like a generic AI writer, you're asking the machine to reconcile your identity for you. Bad bet.

This is why consistency beats volume when LLMs decide who gets seen. They're not rewarding whoever posted most last week. They're rewarding whoever required the fewest interpretive leaps.

A concrete scenario: a buyer asks a model for the top tools in your category. The model pulls from your homepage, a partner mention, two blog posts, and a comparison page. If those assets use four different definitions, the model either hedges, summarizes vaguely, or excludes you in favor of a clearer brand. No warning. No email. Just invisibility.

Clear brands reduce model effort. Blurry brands increase it. Machines, like buyers, prefer the easier read.

Coordination Cost Eventually Exceeds Creation Cost

For scaling SaaS teams, this is where things get ugly. More contributors should create more output. Instead, more contributors often create more handoffs, approvals, clarification, and waiting.

I've seen this happen over and over. A team grows past 200 employees, adds PMM, content, SEO, demand gen, maybe a freelancer or agency, and suddenly no one is short on effort. They're short on alignment. Output rises a bit. Overhead rises faster.

You can see the old way against the category way pretty clearly:

| Dimension | Old Way | Category Way |

|---|---|---|

| Strategic control | Preserved through manual review bottlenecks | Defined once and applied across execution |

| Speed to publish | Fast only when standards slip | Scales without dropping the bar |

| Brand voice | Drifts across writers, prompts, and vendors | Stays stable across contributors and channels |

| Executive time | Gets consumed by rewrites and approvals | Gets used for direction and decisions |

| LLM visibility | Mixed signals weaken authority | Repeated signals strengthen citation odds |

| Budget efficiency | Output often needs rework or replacement | Work compounds instead of resetting |

That's not a workflow nuisance. That's visibility debt.

The Executive Editing Loop Is Where This Becomes Personal

The draft is never bad enough to throw out. That's the problem. It's just off enough to steal your night.

You open the doc at 9:40 PM. Structure is fine. Grammar is fine. Facts are mostly fine. But the positioning is soft, the tone is generic, the examples don't sound like your company, and the product framing is just wrong enough that you can't let it go live. So you rewrite it. Again. The issue isn't effort. It's that the system still needs your brain as middleware.

The Draft Isn't Broken Enough To Kill

Most senior marketers know this feeling immediately. You're not fixing typos. You're restoring intent.

That was the headache in the founder story too. Copying and pasting prompts and outputs into a CMS took 3-4 hours a day, which is bad enough. But the deeper issue wasn't the labor. It was that the system still depended on a human carrying all the judgment manually, every single time.

Short version: the machine wrote text. You still had to do the thinking.

At 9:40 PM, this gets painfully specific. A CMO opens a "final" draft in Google Docs, changes the headline, rewrites the intro, swaps three proof points, tightens the CTA, and fixes product language in comments because none of it sounds like the company. Forty-two minutes later, the doc is publishable. The team sees one approved article. The leader feels the cost in their chest.

That emotional drag matters because it changes behavior. People stop trusting the pipeline.

Senior Marketers Become Editors When Context Has No Home

There's a case for manual review, especially when you're early and positioning is still moving. That's fair. In early-stage companies, a founder or CMO probably should stay close to the message.

But once you know your category, your use cases, your audience language, and your product truth, continued manual translation becomes a systems failure. Not a leadership virtue. The role should shift from cleanup to calibration. If it doesn't, your smartest marketing person becomes a bottleneck with a title.

And bottlenecks don't scale, even when they're talented.

The exception proves the rule here: if you're pre-PMF, still testing category language, or changing product scope monthly, tighter executive editing makes sense. Absolutely. But if the message has been stable for two quarters and you're still rewriting every asset by hand, that's not diligence. That's design debt.

So what does the better model actually look like?

Category Leaders Design Consistency Before They Create Content

The shift is simple to say and harder to build. Category leaders stop treating content as a sequence of tasks and start treating it like an operating system.

A demand-gen content execution platform is designed for scaling B2B marketing teams that need speed without losing voice, product accuracy, or narrative coherence across channels. It emerged because strategy in docs and drafts in tools turned out to be a losing setup once LLM visibility started rewarding stable, repeatable brand signals.

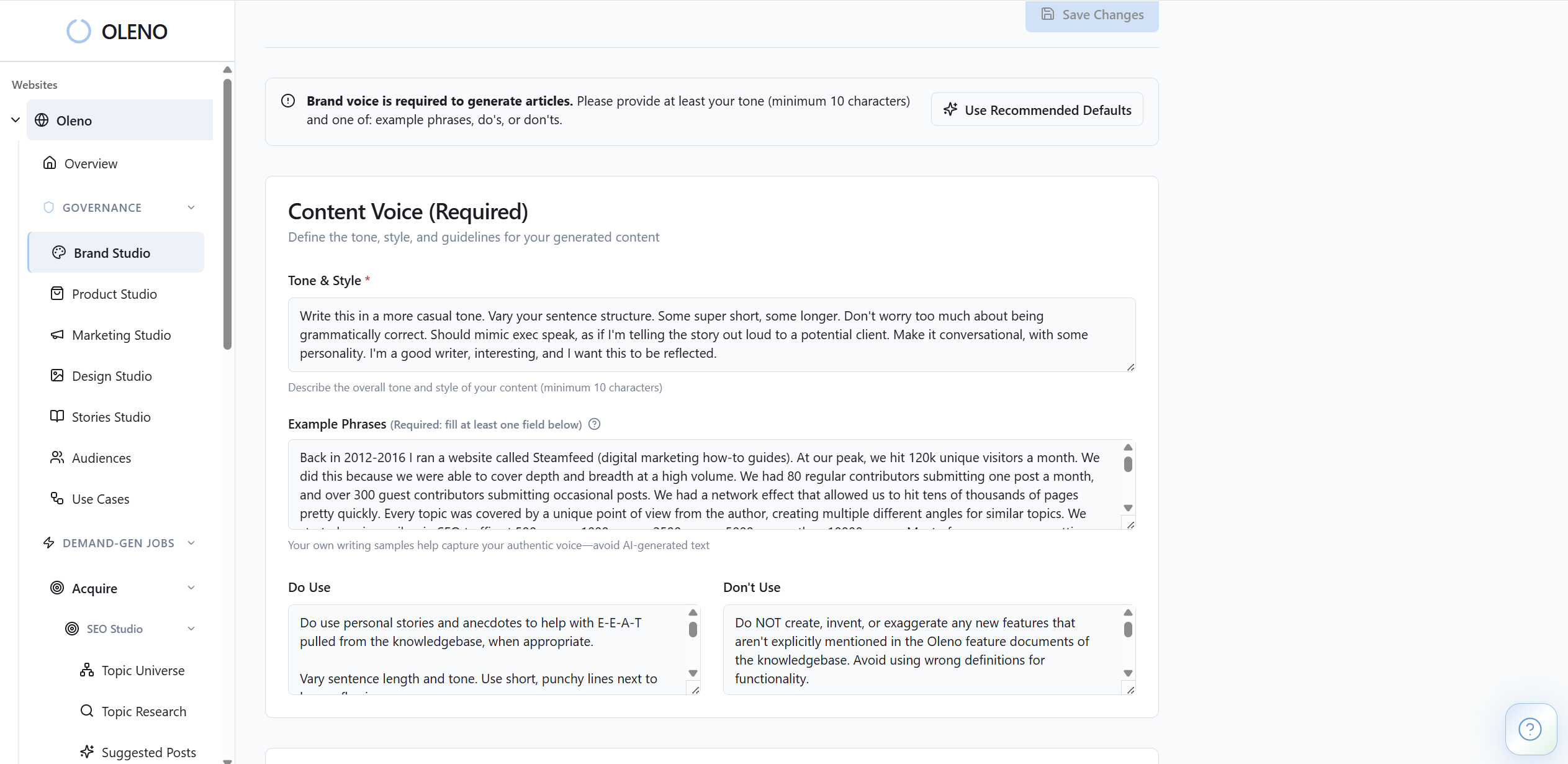

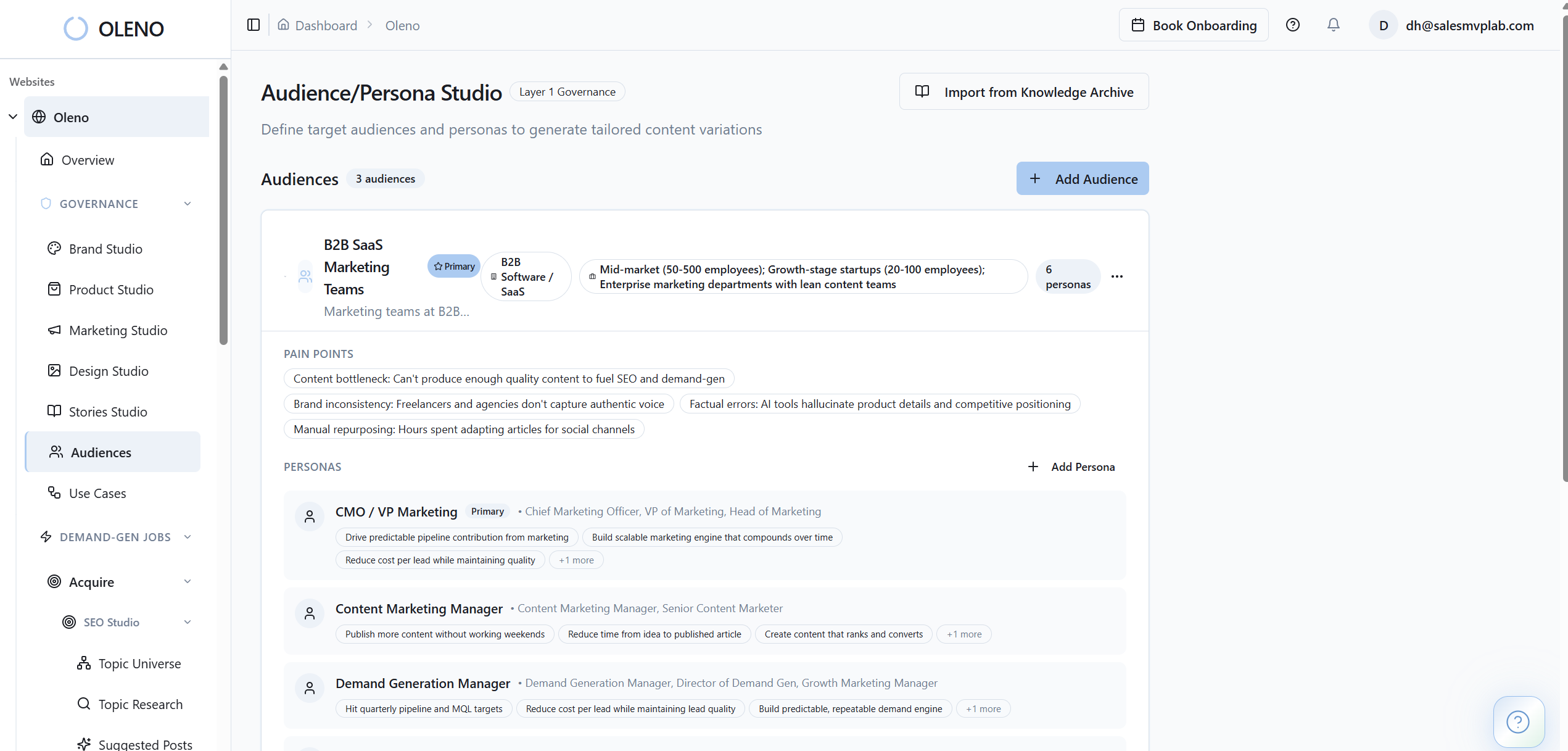

- Encoded Governance: Strategic decisions about voice, positioning, product truth, and audience fit are defined once and applied everywhere.

- Systemic Execution: Creation, review, variation, and publishing run as one system instead of disconnected tasks.

- Compounding Signal: Each asset reinforces the same market story, so buyers and LLMs can recognize and trust it faster.

Design Consistency Upstream Or You'll Pay for It Downstream

Consistency is decided before the first draft, not during the tenth rewrite.

If your team waits until editing to enforce standards, you've already lost efficiency. The useful rule here is the 80/20 Context Rule. If 80% of strategic judgment still lives in senior people's heads and only 20% is encoded into briefs, templates, product definitions, audience models, and review standards, output will drift no matter how strong your writers are.

This is where smart teams fool themselves. They think standards are clear because leadership can explain them verbally. But if the system can't apply those standards without the leader present, the standards are not operational yet.

Before, your team treats consistency like QA. After, they treat it like infrastructure. Big difference. Build guardrails upstream and publishing gets faster downstream. Skip that step and you pay in rewrites forever.

Strategy Has To Move From Docs Into Execution

One of the biggest mistakes I see is teams polishing strategy decks while execution keeps running on tribal knowledge.

The market story needs structure. Your category framing. Your point of view. Your audience differences. Your product boundaries. Your claims. Your examples. Your approved language. All of that has to move from static documents into the production process. Otherwise strategy becomes decorative.

Use the 15-Minute Review Threshold. If a senior marketer needs more than 15 minutes to review a draft on a known topic, the system isn't carrying enough context. They are.

On a Thursday afternoon, that threshold is easy to test. Pull a draft on a topic you've covered before. Start a timer. If the reviewer is still rewriting framing, examples, tone, and proof at minute 16, the process is not executing strategy. It's translating it manually.

If you want to see that kind of system in practice, you can request a demo. Seeing the structure usually makes the problem much more obvious.

The Best Brands Repeat One Market Story Across Every Surface

Buyers experience your brand one touchpoint at a time, but LLMs evaluate it as a whole.

That's why category leaders teach one market story everywhere. Not the exact same headline everywhere. Not robotic repetition. A stable core story with controlled variation. Same enemy. Same definition. Same product truth. Same audience logic. Same why-now.

At LevelJump, we could turn founder thinking into content fast by recording videos and transcribing them. Useful. But the structure wasn't there, and search intent wasn't there either. So we got output without enough discoverability. That taught me something important. Thought leadership without system design doesn't compound the way people think it will.

This is the paradox a lot of teams miss: variety helps humans stay interested, but stability helps markets remember you. You need both. Controlled variation on top of a fixed spine. That's how consistency beats volume when scale would otherwise blur the message.

The teams that win here are not necessarily the ones publishing the most. They're the ones making the market remember the same thing every time they show up.

How Oleno Makes This Operational

Oleno exists to remove the Strategy-Execution Trade-off. It gives marketing teams a way to keep control of their message without dragging senior people through every draft, rewrite, and publish step.

The simplest way to think about it is this: your team defines what is true, what matters, who you're speaking to, and how you want to sound. Then the system carries that forward across execution instead of asking humans to restate it from scratch every time.

Oleno Replaces Cleanup Work With Structured Control

Oleno does this by combining brand studio, marketing studio, product studio, audience & persona targeting, and use case studio at the front of the process. That means voice, category framing, product truth, audience context, and use-case detail are set up before drafting begins, not patched in after the fact.

Then the orchestrator runs job-based pipelines, while the quality gate checks whether the output actually meets the standard. If your problem today is that strategy lives in decks and edits, this is the bridge. The system starts carrying the context your senior team used to carry alone.

That changes the economics. One customer reaction captured it well: this felt like adding 3 people to the team. I like that framing because it gets to the point fast.

Before, senior marketers act like air traffic control for every asset. After, they set the flight rules once and spend their time on route changes, not every takeoff. That's how you turn judgment from a bottleneck into infrastructure.

Oleno Creates The Kind Of Consistency LLMs Can Recognize

Oleno's programmatic seo studio, category studio, buyer enablement studio, writing studio, and competitive studio let teams produce different types of content without resetting the narrative every time. That's a big deal. Different content formats usually create different kinds of drift.

Product studio matters here too, because factual drift is one of the fastest ways to create rework and lose trust. Stories studio plus ip studio matter because bland content is usually what happens when real company thinking never makes it into the draft in the first place.

One prospect read a single article and signed up off the strength of that piece alone. No sales call. No long demo chain. Just a reaction that the output cleared the slop test. That's not magic. That's what happens when the system remembers what your team means.

If you want to look at how that works in your own environment, book a demo.

The Brands That Get Seen Will Be The Ones That Stay Coherent

More content still matters. I'm not arguing for publishing less just to feel strategic. I'm arguing that consistency beats volume when LLMs decide who gets seen, because machines trust stable patterns more than isolated spikes.

That's the shift. Stop asking how to produce more drafts. Start asking how to make every published asset reinforce the same market signal. Once you do that, volume starts compounding again instead of making the blur worse.

One last way to say it. Publishing more while your message drifts is like pouring water into a leaky pipe: the pressure looks impressive right up until the line fails at the far end. Fix the system first. Then turn up the flow.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions