Content Cost Benchmarks: Calculate Cost‑Per‑Article to Scale Quality

Most teams plan content like a headcount problem. Feels controlled. Safe. Until velocity spikes, revisions pile up, and your “fixed” budget starts leaking from three different seams at once. I’ve lived that at startups and mid-market. You think you’re optimizing people. You’re really guessing at unit economics without a unit.

Back when I was running Steamfeed, volume and quality scaled because we inadvertently treated every post as a unit with a cost and a bar. At PostBeyond and later teams, we didn’t. I could write fast and well, but as soon as the work spread across roles, the rework tax showed up. That’s the lesson. Budget the unit (a published article at target SLOs), or you will pay for rework you never priced.

Key Takeaways:

- Budget per published article, not by headcount or retainer, to expose real tradeoffs

- Tie quality SLOs (structure, accuracy, voice, visuals, QA pass rate) directly to time and cost

- Model fixed, variable, and one-off costs, and show how one-off investments lower variable spend

- Quantify the hidden costs: revision loops, missed publish windows, and backlog churn

- Use a three-tier SLO model (bronze/silver/gold) and forecast at 1, 10, 100 articles per month

- Adopt a deterministic pipeline and a QA gate to reduce rework and stabilize unit economics

Why Budgeting Per Article Prevents Quality Collapse

Budgeting per article surfaces unit-level tradeoffs before quality slips and costs balloon. By attaching SLOs to each published piece, you see the true cost of revisions, SME pulls, design loops, and QA retests. Teams using per-article accounting can dial volume without quietly degrading quality during spikes.

The Hidden Math Behind Quality Drop

Unit costs look fine when you stare at salaries or retainers. They don’t when you count the extra edit rounds, SME time, and re-approvals hiding under “just one more pass.” This is the drift that hits you two months later as trust and rankings wobble. At Steamfeed, we saw the opposite: clear volume and quality targets led to compounding results, even when most posts were small. The unit mattered.

Here’s the honest pattern. You push velocity, and the system starts borrowing against quality. Structure gets looser, factual grounding gets thinner, visuals arrive late. Each small miss adds back-and-forth. Nobody logs those minutes to a specific article, so headcount still looks fixed. The invoice doesn’t. Per-article budgeting forces that drift into the light.

What Is Cost Per Article, And Why Does It Matter?

Cost per article is total production spend divided by published articles. Not drafts. Published pieces that met your SLOs. Include fixed, variable, and one-off investments. Add SLOs like edit hours, SME minutes, visuals per article, and QA pass threshold. Now you’ve got a service unit the board understands.
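The definition above fits in a few lines. This is a minimal sketch, not a finished model: the function name and the dollar figures are hypothetical placeholders, and the only thing it enforces is that the denominator counts published articles, not drafts.

```python
def cost_per_article(fixed: float, variable: float, one_off: float, published: int) -> float:
    """Total production spend divided by articles that published at target SLOs."""
    if published <= 0:
        raise ValueError("need at least one published article")
    return (fixed + variable + one_off) / published


# Hypothetical month: abandoned drafts still cost money, but only
# published pieces count in the denominator.
monthly = cost_per_article(fixed=8000, variable=6000, one_off=1000, published=20)
print(monthly)  # 750.0
```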

This isn't pedantry. It’s how you negotiate with vendors, forecast at 1, 10, or 100 posts a month, and protect quality. Want a quick anchor on market rates? Look at ranges like those in WebFX’s content marketing pricing overview. Then translate those ranges into your SLOs instead of buying generic “packages.” You’ll make better calls, and you’ll see the rework tax early.

Why Headcount And Retainers Mask Tradeoffs

Retainers look efficient until volume surges, then every “minor” change gets billed or deferred. In-house headcount looks cheap until you add four edit rounds, a late visual redo, and a QA retest. I’ve watched teams convince themselves they saved money, right up to the moment the launch moved a week.

Per-article accounting changes behavior. You can say, “At gold SLO, two edit rounds and SME review are funded. The third round is variance.” It’s not punitive. It clarifies where quality expectations need to shift, or where process needs to tighten, before the brand pays with trust.

Ready to baseline your unit costs quickly? For a fast yardstick, Try Generating 3 Free Test Articles Now.

Treat Content Production Like a Budgetable Service

Treat content like a service with inputs, outputs, and error budgets so you can forecast predictable spend at target SLOs. Traditional process improvements help, but they rarely link SLOs to dollars. A service model forces the budget and quality conversation into the same room.

What Traditional Approaches Miss

Most plans tighten process: better briefs, cleaner approvals, smarter ideation. Good. But they stop short of pricing quality. If your SLO is “KB-grounded accuracy,” where does that show up? Editor hours? SME minutes? A QA gate? If it’s not priced, it’s a wish, not a standard.

A service unit fixes that. Inputs are time and tools; outputs are published pieces at defined SLOs; error budgets define the allowed variance. You can now forecast cost at silver SLO for a cadence of 20 per month and know exactly what tightens or loosens when demand changes. And you can point to broader budget shifts in reports like CMI’s 2025 B2B content trends to put your model in context for leadership.

The Hidden Complexity Of Fixed, Variable, And One-Off Costs

Every program has three buckets. Fixed: salaries and minimum retainers. Variable: per-article writing, editing, SMEs, visuals, QA. One-off: tools, templates, brand rules, knowledge engineering. The trick is modeling how one-offs reduce variable spend and how fixed amortizes with volume.

For example, a structured brief and brand voice rules can cut edit time by 30 to 40 percent. A library of visual templates can remove the design bottleneck most teams pretend isn’t there. None of that matters if you can’t show finance how those one-offs lower the per-article price at 10, then 50 per month. When I’ve seen this done well, the “fixed” debate ends, because the variable line tells a better story.
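One way to show finance the payoff is a break-even count: how many articles it takes before a one-off investment pays for itself through saved edit time. The sketch below assumes a hypothetical $3,600 one-off and $240 of editing per article; only the 30 to 40 percent reduction range comes from the paragraph above.

```python
import math


def breakeven_articles(one_off_cost: float, edit_cost_per_article: float, reduction_pct: int) -> int:
    """Articles needed before saved edit time covers the one-off investment."""
    saving_per_article = edit_cost_per_article * reduction_pct / 100
    return math.ceil(one_off_cost / saving_per_article)


# ASSUMPTIONS: $3,600 for briefs and voice rules, $240 of editing per article.
low = breakeven_articles(3600, 240, 30)   # 30 percent cut in edit time
high = breakeven_articles(3600, 240, 40)  # 40 percent cut in edit time
print(low, high)  # 50 38
```

At 10 articles a month that is a four to five month payback; at 50 a month it is roughly one month. Swap in your own rates before showing it to anyone.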

The Costs You Are Not Counting Add Up Fast

Hidden costs stack quickly: revision loops, QA failures, and backlog churn inflate unit economics without warning. A simple model exposes where the money goes and when the bottlenecks appear. Then you can decide what to tighten, not just work harder.

Revision Loops And QA Failures Multiply Spend

Let’s pretend your base article costs $600. Two extra edit rounds add 1.5 editor hours and 0.5 SME hours; a QA retest tacks on more. At $120/hour for the editor and $200/hour for the SME, you’re at $180 + $100 = $280 in rework, plus QA time. In round numbers, that article now costs $900. Do this 25 times in a month, and the roughly $300 overrun per article has quietly created a $7,500 line item nobody forecasted.
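Here is that arithmetic written out so you can swap in your own rates. The rates and hours come from the example; the $20 QA retest is my assumption, picked only so the total lands on the round $900.

```python
EDITOR_RATE = 120  # dollars per hour, from the example
SME_RATE = 200     # dollars per hour, from the example
BASE_COST = 600    # base article cost, from the example

extra_editor_hours = 1.5
extra_sme_hours = 0.5
qa_retest = 20     # ASSUMPTION: not stated in the example; chosen to match the round $900

rework = extra_editor_hours * EDITOR_RATE + extra_sme_hours * SME_RATE  # 180 + 100
overrun = rework + qa_retest
cost_with_rework = BASE_COST + overrun
monthly_overrun = 25 * overrun  # 25 articles hit by the same loop

print(rework, cost_with_rework, monthly_overrun)  # 280.0 900.0 7500.0
```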

That’s not doomsday talk. It’s arithmetic. And it’s why unit accounting matters. If you need market guardrails, look at line-item breakdowns like MeasureMinds’ cost inputs and ranges. Use them as placeholders, then replace with your actual rates. Your model will get honest fast.

Scenario Modeling At 1, 10, And 100 Articles Per Month

At 1 per month, fixed costs dominate; your unit cost will be high. At 10, fixed amortizes; variable governs quality. At 100, bottlenecks amplify; QA rework and design throughput become the limiter. That pattern shows up every time.

Model three SLO tiers (bronze, silver, gold) and price the delta. Bronze: limited edit time, QA sample only. Silver: two edit rounds, SME spot-check, structured visuals. Gold: SME review funded, full QA pass, custom visuals. Then ask the question leadership actually cares about: what does it cost to sustain quality at our growth cadence?
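The amortization half of that pattern is easy to see with numbers. A rough sketch, assuming a hypothetical $10,000 of monthly fixed cost and $400 of variable cost per article; it deliberately ignores the bottleneck effects that kick in at high volume.

```python
FIXED_MONTHLY = 10_000      # ASSUMPTION: salaries plus minimum retainers
VARIABLE_PER_ARTICLE = 400  # ASSUMPTION: writing, editing, visuals, QA


def unit_cost(volume: int) -> float:
    """Per-article cost with fixed spend spread evenly across the month's output."""
    return FIXED_MONTHLY / volume + VARIABLE_PER_ARTICLE


for volume in (1, 10, 100):
    print(volume, unit_cost(volume))
# 1 10400.0
# 10 1400.0
# 100 500.0
```

Past 10 a month the fixed share stops being the story, and the variable line (where the tier you fund lives) decides the unit cost.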

Still wrangling this manually? There’s another route if you’re ready to systematize. Try Using an Autonomous Content Engine for Always-On Publishing.

When Quality Slips, Trust Erodes And Budgets Get Cut

Quality misses don’t just dent metrics. They burn time, credibility, and future budget. When content requires cleanup after publish, your team pays twice: once to fix it, again in lost momentum. That’s the real headache.

The Late-Night Fix That Derails Your Week

You publish. A factual miss surfaces. Leadership pings at 9 pm. You unpublish, revise, republish, and field customer questions. It’s not just reputation, it’s cross-functional hours: editor, SME, designer, and approver pulled into a fire drill. Tomorrow’s queue? Blown up.

I’ve been on both sides. As a sales leader receiving leads, I saw how a small content slip could throw the launch plan off by days. Nobody logs all those minutes to the article. Finance still pays for it. This is why error budgets should exist in content, not just software.

Who Pays For Rework?

Vendors point to the SOW. Freelancers bill by the hour. In-house teams absorb it and move on. Either way, marketing eats the cost and the narrative damage. If your unit is “published article at target SLOs,” rework shows up as variance. If your unit is “hours billed,” variance hides until the quarter closes, and you’re defending cuts.

The fix isn’t more heroics. It’s a system that blocks low-quality work from shipping in the first place, and a budget model that makes variance visible the moment it happens.

A Practical Framework To Benchmark Cost And Scale Quality

You can benchmark cost-per-article in a week. Build a line-item model, map SLOs to price points, and forecast across volumes. Then test levers deliberately. Small, controlled experiments beat big bets every time.

Build A Line-Item Model For Fixed, Variable, And One-Off Costs

Start with three tables. Fixed: salaries, retainers, minimums. Variable per article: writing, editing, SME time, visuals (or templates), QA sampling, publishing. One-off: tools, brand rules, knowledge base setup. Add columns for rate, hours, unit cost, and reduction impact. Short story: this is the sheet that finally explains your budget to finance.
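If a spreadsheet feels too loose, the variable table can be sketched as a small data structure. The field names mirror the columns above; every rate, hour, and reduction figure here is a made-up placeholder, not a benchmark.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    name: str
    rate: float             # dollars per hour
    hours: float            # hours per article
    reduction_pct: int = 0  # expected cut from a one-off investment

    @property
    def unit_cost(self) -> float:
        return self.rate * self.hours * (100 - self.reduction_pct) / 100


# ASSUMPTION: placeholder rates and hours; replace with your own data.
variable = [
    LineItem("writing", rate=80, hours=4),
    LineItem("editing", rate=120, hours=2, reduction_pct=30),  # brief + voice rules
    LineItem("sme", rate=200, hours=0.5),
    LineItem("qa", rate=60, hours=0.5),
]

variable_per_article = sum(item.unit_cost for item in variable)
print(variable_per_article)  # 618.0
```

Keeping the reduction on the line item it affects makes the link between a one-off investment and the variable line explicit.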

Now connect reductions to steps. A stronger brief can shave edit time. A knowledge base can cut SME minutes. Templates can remove the design bottleneck. For guardrails on line items, cross-check with guides like The Digital Elevator’s content cost breakdown. Use their ranges as sanity checks; then replace with your data.

Map Quality SLOs To Price Points

Define the SLOs you will fund: narrative compliance, KB-grounded accuracy, voice rules, visuals per article, QA pass threshold, cycle time. For each, set an acceptable error rate and the time or cost required. Your silver tier might include one hour of editor time and a 10 percent QA sample. Gold might add SME review and a full QA pass.
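To make the tiers concrete, price only the quality work each one funds. In this sketch the silver and gold definitions follow the paragraph above; the bronze numbers, the hourly rates, and the $40 cost of a full QA check are assumptions for illustration.

```python
EDITOR_RATE = 120   # ASSUMPTION: dollars per hour
SME_RATE = 200      # ASSUMPTION: dollars per hour
QA_FULL_CHECK = 40  # ASSUMPTION: cost of one full QA pass on one article

TIERS = {
    "bronze": {"editor_hours": 0.5, "sme_hours": 0.0, "qa_sample_pct": 5},
    "silver": {"editor_hours": 1.0, "sme_hours": 0.0, "qa_sample_pct": 10},
    "gold": {"editor_hours": 1.0, "sme_hours": 0.5, "qa_sample_pct": 100},
}


def quality_cost(tier: str) -> float:
    """Expected per-article spend on editing, SME review, and QA for a tier."""
    t = TIERS[tier]
    return (
        t["editor_hours"] * EDITOR_RATE
        + t["sme_hours"] * SME_RATE
        + t["qa_sample_pct"] * QA_FULL_CHECK / 100  # sampled QA averages out per article
    )


for name in TIERS:
    print(name, quality_cost(name))
# bronze 62.0
# silver 124.0
# gold 260.0
```

The gold minus silver delta ($136 with these placeholder numbers) is the figure to bring to the budget meeting.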

This is where disagreements get productive. If someone wants gold-level accuracy but bronze-level budget, the sheet tells the truth. That’s a good meeting.

Forecast Scenarios With A Sheet At 1, 10, And 100 Per Month

Add a volume input. Amortize fixed costs, apply variable per unit, and add a bottleneck multiplier at higher volumes, say 1.2x editor time above 50 per month without templates. Add experiment rows: micro-briefs, automated visuals, QA sampling. Produce per-article cost and total spend for bronze, silver, and gold.
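The forecast logic can be sketched as one function. The 1.2x editor multiplier above 50 per month without templates comes from the paragraph above; the function name and the dollar inputs are hypothetical.

```python
BOTTLENECK_PCT = 120       # 1.2x editor time, per the rule of thumb above
BOTTLENECK_THRESHOLD = 50  # articles per month


def forecast(volume: int, fixed: float, editor_cost: float,
             other_variable: float, has_templates: bool = False) -> tuple[float, float]:
    """Return (per-article cost, total monthly spend) for one tier at one volume."""
    bottlenecked = volume > BOTTLENECK_THRESHOLD and not has_templates
    editor = editor_cost * BOTTLENECK_PCT / 100 if bottlenecked else editor_cost
    per_article = fixed / volume + editor + other_variable
    return per_article, per_article * volume


# ASSUMPTIONS: $10,000 fixed, $120 editing and $300 other variable cost per article.
print(forecast(10, 10_000, 120, 300))                       # (1420.0, 14200.0)
print(forecast(100, 10_000, 120, 300))                      # (544.0, 54400.0)
print(forecast(100, 10_000, 120, 300, has_templates=True))  # (520.0, 52000.0)
```

Run it once per tier with that tier's editing and variable costs, and you have the bronze, silver, and gold rows for each volume.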

Give leadership three options instead of one ask. “At 20 silver-tier posts per month, we forecast $X per article. Gold would add $Y and fund SME review. Bronze would reduce edit time but accept a higher error budget.” They’ll appreciate choosing the tradeoff they’re actually paying for.

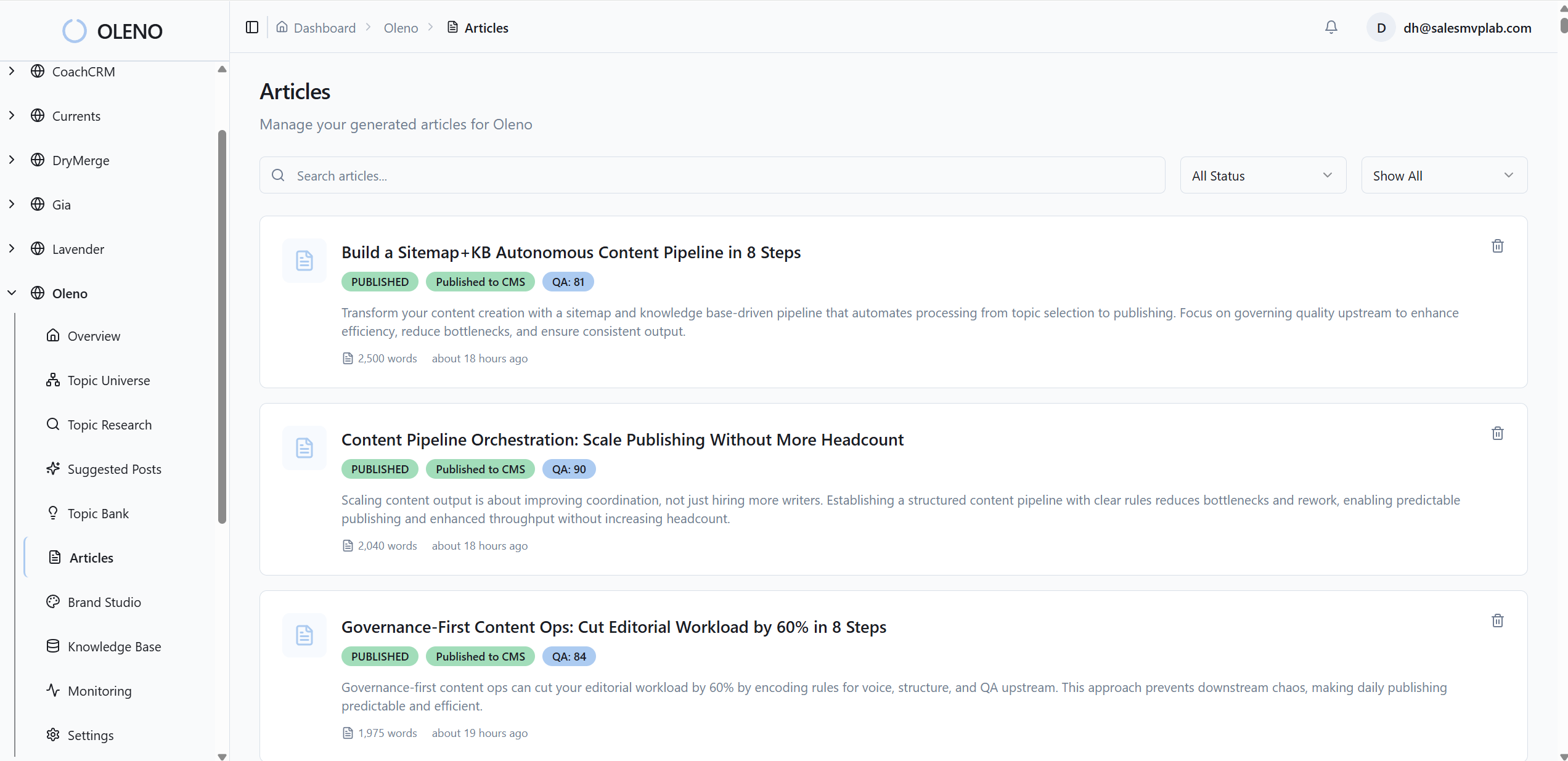

How Oleno Turns Quality SLOs Into Predictable Cost Per Article

Oleno runs a deterministic pipeline (topic, angle, brief, draft, QA, visuals, publish) that enforces quality before anything ships. By shifting quality from “review time” to “system enforcement,” teams cut rework, stabilize cycle time, and keep cost-per-article predictable as volume increases. It’s not a writing assistant. It’s the system that creates publish-ready content.

Quality SLOs Enforced Pre-Publish With A QA Gate

Oleno applies a pre-publish QA gate that checks narrative structure, voice alignment, KB-grounded accuracy, SEO formatting, and LLM clarity. Drafts that miss are revised automatically until they pass. Nothing publishes unless it meets the bar. That means the rework you’re used to paying after publish is handled before it ever goes live.

This is how you convert SLOs into cost control. If your silver tier funds two edit rounds and a 10 percent QA sample, Oleno’s QA gate enforces that expectation consistently. Variance doesn’t hide in someone’s calendar; it gets blocked by the system. That’s the difference between hoping for quality and guaranteeing the conditions for it.

Topic, Brief, Draft, Visuals, Publish Without Handoffs

Oleno executes the same sequence every time: Topic → Angle → Brief → Draft → QA → Visuals → Publish. No manual handoffs means fewer delays and fewer late changes. The brief locks structure before drafting, the knowledge base keeps claims grounded, and idempotent CMS publishing prevents duplicates that waste editorial time.

The downstream effect is predictable throughput. Your per-article cost stops wobbling when campaign pressure rises because the pipeline doesn’t improvise. If you’ve ever watched launch-day edits cascade into visual changes and schema tweaks, you know how fast a “tiny fix” becomes an expensive week.

Use Sampling And Templates To Reduce Variable Costs

Pair Oleno’s pipeline with your operating levers: statistical QA sampling on low-risk posts, micro-briefs to reduce SME minutes, and brand voice rules to shrink edit loops. Oleno’s voice and structure constraints do the heavy lifting upfront; your sampling policy decides where to spend human attention.
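A sampling policy can be as simple as the sketch below. It is my illustration of the operating lever, not Oleno functionality: high-risk posts always get human review, and a fixed percentage of low-risk posts is drawn at random. The function name and the article IDs are hypothetical.

```python
import math
import random


def review_queue(low_risk: list[str], high_risk: list[str],
                 sample_pct: int, seed: int = 0) -> list[str]:
    """High-risk posts always get human QA; low-risk posts are sampled."""
    k = math.ceil(len(low_risk) * sample_pct / 100)
    rng = random.Random(seed)  # seeded so the draw can be reproduced and audited
    return list(high_risk) + rng.sample(list(low_risk), k)


low = [f"post-{i}" for i in range(40)]  # hypothetical low-risk article IDs
high = ["launch-announcement", "pricing-update"]

queue = review_queue(low, high, sample_pct=10)
print(len(queue))  # 6: two high-risk posts plus 4 of the 40 low-risk posts
```

The seed is there so the same draw can be reproduced if someone asks why a post was or was not reviewed.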

Over time, this shows up exactly where your model expects: lower editor hours, fewer SME consults, and a steadier cycle time. Not zero. Steady. That consistency is what lets finance forecast confidently and lets you push volume without inviting a quality slide.

Want to see how the pipeline affects your numbers in real life? Try Oleno for Free and map outputs to your bronze/silver/gold SLOs.

Conclusion

If you budget content by headcount, you’ll get surprises. If you budget per published article with SLOs, you’ll get choices. The honest math forces quality and cost into the same conversation, and a deterministic pipeline keeps both steady as volume climbs. Pick your tier, fund it, and let the system do its job. Then scale without the Sunday-night headaches.

External references:

- Market rate guardrails: WebFX’s content marketing pricing overview

- Line-item ranges: The Digital Elevator’s content cost breakdown

- Budget context: CMI’s 2025 B2B content trends

- Cost inputs: MeasureMinds cost inputs and ranges

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions