Content Coverage Audit: 5-Step Framework to Find Topic Gaps

Most teams don’t have an ideas problem. They have a coverage problem they can’t see. I learned that the hard way. Back at Steamfeed, we hit 10k+ pages because we could cover depth and breadth at volume, but it only worked because we mapped what we had and what was missing. Later at PostBeyond and LevelJump, when we didn’t do that mapping, we slipped into “same but different” pieces that looked busy and produced confusion.

At Proposify, we ranked brilliantly—but for topics too far from the product. Great traffic, poor demand-gen alignment. The pattern shows up everywhere. Keyword lists are easy. Coverage math is harder. But once you do it—pillars, clusters, saturation states, cooldowns—the work stops cannibalizing itself, and your authority starts compounding. That’s the shift.

Key Takeaways:

- Map coverage, not just keywords: pillars, clusters, and saturation labels reduce redundancy

- Use a redundancy score across titles, H2s, and summaries to find merge candidates

- Enforce cooldowns per subtopic so updates don’t trip over new posts

- Score information gain before writing to prevent “same but different” drafts

- Build a 90-day plan from coverage gaps, not hunches, then protect a buffer

- Deterministic internal links and snippet-ready H2s improve retrievability and clarity

Why Topic Lists Keep You Stuck In Redundancy

Topic lists keep you stuck because they optimize for volume, not coverage. Without pillars, clusters, and saturation states, you’re guessing at what’s net-new versus repeat. The fix is simple: label every page, quantify overlap, and prioritize gaps—like consolidating five similar “sales onboarding” posts into one leader with fresh examples.

The metrics that actually matter for coverage

Coverage is a map, not a spreadsheet of keywords. You need pillars that mirror your product’s problem space, clusters inside each pillar, and saturation states per cluster: underserved, healthy, well-covered, saturated. When you tag pages this way, planning stops being opinionated. It becomes arithmetic. You’ll see where every new article fits—or doesn’t.

What moved the needle for us was treating “coverage” like an audit discipline with thresholds that drive decisions. You set limits for cluster size, minimum information gain, and cooldown windows. The minute you do that, cannibalization isn’t a surprise anymore; it’s something your system prevents. It’s not fancy. It’s consistent. That matters.

There’s a governance analogy here that’s helpful: thresholds reduce interpretation drift. You don’t need a perfect formula; you need a consistent one you’ll follow. For inspiration on threshold thinking, skim the Audit Quality Disclosure Framework—different domain, same principle: reduce subjective judgment with clear criteria.

Why conventional wisdom about keyword lists fails

Keyword lists bias your roadmap toward recency and volume. They rarely account for how similar pages overlap, or whether you have one strong cluster leader versus six mid-tier posts splitting signals. That’s why cannibalization creeps in despite “new” topics. It’s the same intent, sliced thin, over and over.

When we moved from a flat list to clusters, the pattern was obvious: five posts “teaching sales enablement onboarding” with near-identical H2s. Each piece had value in isolation. Together, they diluted authority. The solution was merging and adding fresher detail: updated screenshots, brand-specific examples, and unique sources. Same space, better consolidation.

If you need a mental model, think unified frameworks. They reduce rework by normalizing inputs and choices. Content is no different. See how audit pros think about reducing duplication via shared frameworks in this short take from ISACA. Borrow the mindset. It saves hours later.

How do you know you are repeating yourself?

Start by diffing titles, H2s, and summaries. If two pages answer the same question with similar structure, that’s overlap. Don’t debate it; compute it. Title similarity, shared H2 terms, and cosine similarity between summaries—roll those into a 0–1 redundancy score. Anything above 0.6 is a merge candidate.
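Here's a minimal sketch of that score in plain Python. The equal weights, the Jaccard measure for shared H2 terms, and the bag-of-words cosine are my assumptions for illustration; the only numbers taken from the text above are the 0 to 1 range and the 0.6 threshold.

```python
import math
import re
from collections import Counter
from difflib import SequenceMatcher

MERGE_THRESHOLD = 0.6  # from the article: above this, review for a merge


def tokens(text):
    """Lowercase alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def title_similarity(a, b):
    """Character-level similarity ratio between two titles (0 to 1)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def shared_h2_terms(h2s_a, h2s_b):
    """Jaccard overlap of the terms used across each page's H2s (0 to 1)."""
    terms_a = {t for h in h2s_a for t in tokens(h)}
    terms_b = {t for h in h2s_b for t in tokens(h)}
    if not terms_a or not terms_b:
        return 0.0
    return len(terms_a & terms_b) / len(terms_a | terms_b)


def cosine_similarity(text_a, text_b):
    """Cosine similarity of raw term-count vectors (0 to 1)."""
    va, vb = Counter(tokens(text_a)), Counter(tokens(text_b))
    dot = sum(va[t] * vb[t] for t in va)
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def redundancy_score(page_a, page_b, weights=(1 / 3, 1 / 3, 1 / 3)):
    """Weighted blend of the three signals; equal weights are an assumption."""
    parts = (
        title_similarity(page_a["title"], page_b["title"]),
        shared_h2_terms(page_a["h2s"], page_b["h2s"]),
        cosine_similarity(page_a["summary"], page_b["summary"]),
    )
    return sum(w * p for w, p in zip(weights, parts))


def is_merge_candidate(page_a, page_b):
    return redundancy_score(page_a, page_b) > MERGE_THRESHOLD
```

Each page is a dict with `title`, `h2s` (a list of strings), and `summary`. If your summaries are long, a TF-IDF or embedding-based cosine would likely separate pages better than raw counts; the point here is only that the score is computed, not debated.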

Once you have the score, decisions get cleaner. You can merge and redirect to consolidate equity, or refocus one page with a new angle: a use-case walkthrough, updated data, or product-specific guidance your other page doesn’t have. The real win isn’t the tidy URL tree. It’s that internal links and authority flow now have a single canonical target.

Ready to see what a coverage-first plan looks like in practice? If you’re already convinced, skip ahead and Generate 3 Free Test Articles.

The Root Cause You Miss: Coverage Blindness, Not Ideas

A coverage audit merges your sitemap and knowledge base, clusters content into pillars, and scores saturation and redundancy to reveal gaps. The point isn’t “more ideas,” it’s fewer, better choices. You’ll leave with a prioritized 90-day plan that fills high-value gaps while avoiding lookalike angles that confuse users and search.

What is a content coverage audit and why it matters?

Think inventory plus intent. You pull URL, title, H1, H2s, summary, publish date, canonical, and cluster guess into one table. Then you group into pillars and clusters and label saturation. Finally, compute redundancy and information gain. This turns planning into a sequence: identify gaps, de-duplicate, then brief to add net-new value.

When we worked founder-led, we could draft quickly from raw expertise. But without coverage math, we shipped smart takes on the wrong angles—or angles we’d already covered. Sales traffic spiked but didn’t convert because the narrative didn’t point back to the product’s core value. A coverage audit creates the map that fixes that.

It’s a mindset shift: the goal isn’t velocity; it’s compounding. You don’t need another “tips” post. You need the definitive cluster leader that other pieces support. Once you see your landscape as a system, authority stops being accidental.

What traditional inventories miss

Conventional audits jump straight to keep, update, delete. Useful, but incomplete. Without a cluster view, you’ll miss that five mid-funnel articles hit the same intent with slightly different titles. It looks like breadth; it’s actually drift. Add cluster labels, information gain scoring, and cooldown rules. Now you’re governing outcomes, not eyeballing drafts.

We learned this the long way. At Proposify, exceptional pieces sometimes outranked pages that should’ve captured demand. Not because the writing was weaker—the coverage was misaligned. A simple “this cluster is saturated” label would have saved content sprints that looked good in analytics but didn’t help the pipeline.

If you want a quick mental model for normalized inputs, the Unified Compliance Framework overview offers a helpful parallel: unify sources, normalize fields, then apply rules once. Same playbook for content audits.

The hidden complexity behind cluster mapping

Clusters aren’t neat parent-child trees. Some posts straddle pillars and intent stages. That’s normal. Use semi-automated grouping from titles, H2s, and summaries, then apply judgment. You’ll discover 10–15% of pages are truly multidimensional. Label them anyway. Imperfect clusters still reveal obvious saturation and low-hanging gaps.

Don’t let the edge cases stall you. Aim for “directionally right.” The moment you see saturation labels, priorities sharpen. The update you were planning next week may need a 90-day cooldown. Another page, lonely in an underserved cluster, should leap to the top. That’s the value: fewer debates, clearer sequencing.

The Hidden Cost Of Publishing The Same Ideas Twice

Repeated ideas burn hours, split internal links, and slow authority growth across clusters. Redundant pages trigger extra edits, create crawl confusion, and push users to weaker answers. Consolidation and information gain scoring prevent repeat work and put equity behind a single, stronger leader page.

Engineering hours lost to content rework

Let’s pretend you ship eight articles a month, each with two edit rounds. If 25% are redundant, you’ve got four to six extra edit cycles monthly. Call it 10–15 hours, minimum. Add design tweaks, screenshot swaps, and publishing fixes. That’s a chunk of your sprint you’ll never get back.
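To make the arithmetic explicit, here's a tiny sketch. The 2.5 hours per edit cycle is my assumption; it's simply the figure that makes four to six cycles land on 10 to 15 hours.

```python
def extra_edit_cycles(articles_per_month, edit_rounds, redundant_share):
    """Edit cycles spent on articles that duplicate existing coverage."""
    return articles_per_month * redundant_share * edit_rounds


HOURS_PER_CYCLE = 2.5  # assumption, not a measured number

cycles = extra_edit_cycles(articles_per_month=8, edit_rounds=2, redundant_share=0.25)
hours_low = cycles * HOURS_PER_CYCLE   # four cycles
hours_high = 6 * HOURS_PER_CYCLE       # six cycles, e.g. one more redundant article
```

At exactly 25% redundancy you get four wasted cycles; the upper end of the range is what happens when one more article in the month turns out to overlap.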

Now multiply the opportunity cost. Those hours could have gone to the underserved cluster that actually moves buyers. You didn’t just waste time—you slowed the roadmap. The fix isn’t heroic effort. It’s enabling the team with clearer coverage math, then measuring overlap before drafts exist.

The cascading impact on discoverability and demand

Redundancy splits internal links and dilutes crawl signals. It’s death by a thousand nearly identical pages. You might still rank here and there, but snippet eligibility drops, and the narrative rarely lands on the problem your product solves. This is how teams publish more and feel worse about outcomes. It’s not the writing. It’s the structure.

When we cleaned up overlaps, demand alignment improved—not because of a fancy campaign, but because every path led to a defined cluster leader with a focused angle. The job wasn’t to write harder. It was to stop competing with ourselves.

How do you quantify overlap fast?

Create a redundancy score. Combine title similarity, shared H2 terms, and cosine similarity of summaries into a single 0–1 metric. Anything above 0.6? Merge or differentiate. Redirects consolidate equity. Or keep one page live, but add new evidence, examples, and visuals so it actually says something net-new.

This is where threshold thinking helps again. You’re not debating every pair of pages; you’re following a rule. If the score is high, it’s a merge candidate. If it’s borderline, you add something substantial or you hold. It’s cleaner, faster, and kinder to the team.

Still wrestling with overlap and manual cleanup? There’s an easier path. Try An Autonomous Content Engine.

When The Team Feels It: The Rewrites, The Cannibalization, The Unclear Next Topic

You’ll feel redundancy in the rewrites and the “wait, didn’t we cover this?” debates. Updates fight new posts for the same query, traffic wobbles, and support gets questions routed to the weaker page. Cooldowns and cluster leaders prevent those headaches before they start.

When your biggest post competes with its own update

You refresh a proven winner. Then, two weeks later, a “new angle” ships that answers the same question. Both wobble. Internal links split, snippets drop, and sales sees a dip in quality leads. No one did anything “wrong.” Coverage blindness did it for you.

The fix is straightforward: a 90-day cooldown per subtopic and one canonical leader to consolidate updates. That way, the next “new angle” is either truly new or it’s a planned expansion to the leader page. The team breathes. The metrics stabilize. And your roadmap stops spinning in place.
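A cooldown check can be this small. The sketch below assumes you track a last-covered date per subtopic; the function names are hypothetical.

```python
from datetime import date, timedelta

COOLDOWN_DAYS = 90  # the per-subtopic window described above


def cooldown_ends(last_covered):
    """First date on which the subtopic can be covered again."""
    return last_covered + timedelta(days=COOLDOWN_DAYS)


def in_cooldown(last_covered, today):
    """True while the subtopic is still inside its 90-day window."""
    return today < cooldown_ends(last_covered)


def can_publish(subtopic, last_covered_by_subtopic, today):
    """A subtopic with no recorded coverage is always allowed."""
    last = last_covered_by_subtopic.get(subtopic)
    return last is None or not in_cooldown(last, today)
```

Run `can_publish` when a topic is proposed, not when the draft is done. That's what keeps the "new angle" from shipping two weeks after the refresh.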

The 3am incident no one saw coming

It’s launch day. The piece is solid. Then someone flags a near-duplicate from six months ago. Redirects help, but the morale hit is real. Writers feel whiplash, editors get dragged into forensic cleanup, and the calendar goes sideways. A simple cadence and handoff guardrail reduces these failure points—think audit discipline applied to content. The Internal Audit Competency Framework is a good model for building repeatable, low-drama routines.

Run The Coverage Audit In 5 Steps

A five-step coverage audit consolidates your sitemap and KB, clusters pages, calculates saturation and redundancy, scores information gain, and builds a 90-day plan with cooldowns. It’s not fancy. It’s repeatable. The result is fewer, stronger pages and a roadmap that compounds authority.

Step 1: Consolidate sitemap and KB into one inventory

Export your sitemap and pull knowledge base entries into a single sheet. Include URL, title, H1, H2s, summary, publish date, canonical, and a cluster guess. Add focus areas tied to your product and buyer journey. Keep it flat. If you can’t filter and sort in seconds, it’s too complicated.
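If a spreadsheet feels too loose, here's one way the flat inventory could look as a typed row that exports straight to CSV. The field names mirror the columns listed above; the class name, the `focus_area` column name, and the `" | "` separator for H2s are my own choices.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class InventoryRow:
    url: str
    title: str
    h1: str
    h2s: list            # list of H2 strings, flattened on export
    summary: str
    publish_date: date
    canonical: str
    cluster_guess: str
    focus_area: str = ""  # product / buyer-journey tag, optional


def to_csv(rows):
    """Serialize rows to one flat CSV string that any sheet tool can filter."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(InventoryRow)])
    writer.writeheader()
    for row in rows:
        record = asdict(row)
        record["h2s"] = " | ".join(row.h2s)
        record["publish_date"] = row.publish_date.isoformat()
        writer.writerow(record)
    return buffer.getvalue()
```

One row per URL, no nesting. If a column needs a second sheet to make sense, it probably doesn't belong in the inventory.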

I’ve done this with three people and a coffee. Don’t overthink tools. Consistency beats sophistication here. What matters is that the team trusts the source of truth. Every decision downstream starts from this table, so make it clean and complete.

Step 2: Cluster pages into pillars and subtopics

Create pillar labels that mirror your product’s problem space. Tag subtopics for each cluster. Use shared title terms, intent language in H2s, and quick manual scans to confirm. Flag multi-intent pages. Keep clusters small at first—4 to 12 pages is ideal. Add a cooldown column to mark last covered date per subtopic.

You’ll learn faster by labeling imperfectly than by waiting for perfect. The act of clustering surfaces obvious saturation. You’ll see three posts that look like different topics but share the same intent. That’s your first batch of consolidation candidates.
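For a first pass, a keyword match is usually enough to get "directionally right." The sketch below assumes a small hand-written vocabulary per pillar; the pillar names and terms are hypothetical, and a manual scan should still confirm the results.

```python
import re
from collections import defaultdict

# Hypothetical pillar vocabulary; replace with terms from your own problem space.
PILLAR_TERMS = {
    "sales-onboarding": {"onboarding", "ramp"},
    "sales-coaching": {"coaching", "feedback"},
}


def guess_pillars(title, h2s):
    """Pillars whose vocabulary appears in the title or H2s."""
    words = set(re.findall(r"[a-z0-9]+", " ".join([title, *h2s]).lower()))
    return sorted(p for p, terms in PILLAR_TERMS.items() if words & terms)


def cluster_pages(pages):
    """Group page URLs by pillar and flag pages that straddle more than one."""
    clusters = defaultdict(list)
    multi_intent = []
    for page in pages:
        pillars = guess_pillars(page["title"], page["h2s"])
        for pillar in pillars:
            clusters[pillar].append(page["url"])
        if len(pillars) > 1:
            multi_intent.append(page["url"])
    return dict(clusters), multi_intent


def size_flag(page_count, low=4, high=12):
    """Compare a cluster's size with the 4-to-12 range suggested above."""
    if page_count < low:
        return "small"
    if page_count > high:
        return "split"
    return "ok"
```

Pages that match no pillar simply don't appear in any cluster, which is a useful signal on its own: either the vocabulary is incomplete or the page is off-strategy.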

Step 3: Calculate saturation, overlap, and duplicate signal

Now add formulas. Saturation equals pages in cluster divided by target coverage count. Overlap equals the number of pages in a cluster that share 50% or more of their H2 terms with another page in that cluster. Duplicate signal equals (title similarity + summary similarity) / 2. Color code thresholds. Anything above 0.6 on duplicate signal enters a merge review.
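The same three formulas, sketched outside the spreadsheet. Two things here are my assumptions: the cutoffs that map a saturation ratio to a label, and reading "sharing 50% of H2 terms" as "at least half of a page's H2 terms also appear on some other page in the cluster."

```python
import re

MERGE_REVIEW_THRESHOLD = 0.6  # from the article


def h2_terms(h2s):
    """Set of lowercase terms used across a page's H2s."""
    return {t for h in h2s for t in re.findall(r"[a-z0-9]+", h.lower())}


def saturation(pages_in_cluster, target_coverage_count):
    """Pages in cluster divided by target coverage count."""
    return pages_in_cluster / target_coverage_count


def saturation_label(ratio):
    """Map a ratio to a state; these cutoffs are illustrative assumptions."""
    if ratio < 0.5:
        return "underserved"
    if ratio < 0.8:
        return "healthy"
    if ratio < 1.0:
        return "well-covered"
    return "saturated"


def overlap_count(cluster_h2s):
    """Pages with >= 50% of their H2 terms found on another page in the cluster."""
    term_sets = [h2_terms(h2s) for h2s in cluster_h2s]
    count = 0
    for i, terms in enumerate(term_sets):
        if not terms:
            continue
        for j, other in enumerate(term_sets):
            if i != j and len(terms & other) / len(terms) >= 0.5:
                count += 1
                break
    return count


def duplicate_signal(title_similarity, summary_similarity):
    """(title similarity + summary similarity) / 2."""
    return (title_similarity + summary_similarity) / 2


def needs_merge_review(title_similarity, summary_similarity):
    return duplicate_signal(title_similarity, summary_similarity) > MERGE_REVIEW_THRESHOLD
```

Pick label cutoffs once and keep them fixed for a quarter; moving them mid-audit reintroduces the interpretation drift the thresholds are supposed to remove.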

Once those colors light up, the roadmap writes itself. Merge here. Redirect there. Pause a subtopic for 90 days. You don’t need a meeting to justify it. The math just explained the next sprint for you.

Step 4: Score information gain and competitive gaps

Build a simple information gain rubric. Award points for new sources, original examples, product-specific guidance, and fresh questions answered. Compare against top SERP themes—not to copy, but to avoid restating. Your goal is to add what’s missing, not remix what’s already there.
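A rubric like this fits in a dozen lines. The four criteria come from the paragraph above; the one-point-each weighting and the minimum score of 2 are placeholders I've assumed, so tune them to your own bar.

```python
# One point per criterion is an assumption; weight them however your team prefers.
RUBRIC = {
    "new_sources": 1,
    "original_examples": 1,
    "product_specific_guidance": 1,
    "fresh_questions_answered": 1,
}

MIN_GAIN = 2  # hypothetical threshold for approving a brief


def information_gain(outline_flags):
    """Sum the points for every rubric criterion the outline satisfies."""
    return sum(points for name, points in RUBRIC.items() if outline_flags.get(name))


def worth_briefing(outline_flags):
    return information_gain(outline_flags) >= MIN_GAIN
```

Score the outline, not the draft. `outline_flags` is just a dict of criterion name to True or False that an editor fills in after checking the top SERP themes.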

Keep the rubric short so you can run it quarterly without groaning. If you can’t repeat it, you won’t enforce it. When we started scoring gain before writing, drafts got faster and edits got lighter. The angle was clear from the jump.

Step 5: Build a 90-day plan with cooldowns and brief templates

Sort by high information gain, low saturation, and strong demand fit. Apply a 90-day cooldown per subtopic to prevent accidental over-publishing. Generate briefs that enforce new information: required sources, unique examples, and section-level objectives. Schedule eight to twelve weeks of work. Leave one opportunistic slot per sprint. Protect the buffer, or you’ll lose it.
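Here's a rough sketch of that prioritization for a single sprint. The field names, the strict sort order (information gain first, then saturation, then demand fit), and the one-piece-per-subtopic rule are assumptions layered on the steps above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

COOLDOWN = timedelta(days=90)


@dataclass
class Candidate:
    topic: str
    subtopic: str
    info_gain: int       # higher is better
    saturation: float    # lower is better
    demand_fit: int      # higher is better
    last_covered: Optional[date] = None


def build_sprint(candidates, today, slots):
    """Rank eligible topics and fill all but one slot, keeping a buffer."""
    eligible = [
        c for c in candidates
        if c.last_covered is None or today - c.last_covered >= COOLDOWN
    ]
    ranked = sorted(eligible, key=lambda c: (-c.info_gain, c.saturation, -c.demand_fit))

    planned, seen_subtopics = [], set()
    for candidate in ranked:
        if candidate.subtopic in seen_subtopics:
            continue  # one piece per subtopic, so the plan respects its own cooldown
        seen_subtopics.add(candidate.subtopic)
        planned.append(candidate.topic)

    return planned[: max(slots - 1, 0)]  # last slot stays open as the opportunistic one
```

The function deliberately returns one fewer topic than there are slots. If the buffer isn't enforced in the plan itself, it tends to get filled in the first planning meeting.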

If you want a model for execution reliability, skim this rundown from AuditBoard on leveraging frameworks for execution. Different field, same outcome: fewer surprises, better handoffs, cleaner results.

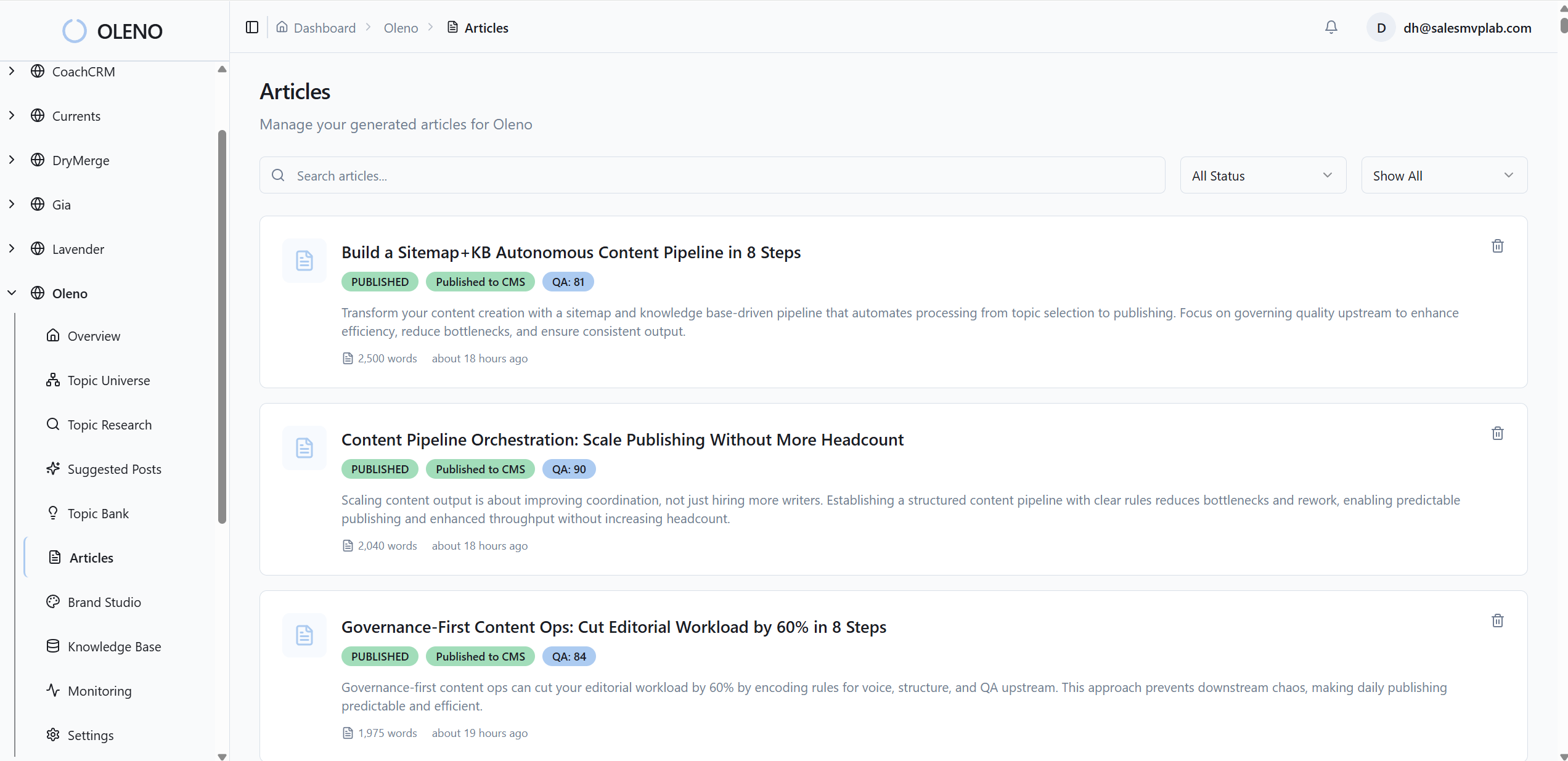

How Oleno Automates Coverage Audits And Prevents Redundancy

Oleno automates the whole coverage workflow—discovering topics from your KB and sitemap, clustering them, enforcing cooldowns, scoring information gain, then generating on-brand drafts with snippet-ready structure. Deterministic links, schema, visuals, and publishing are handled together so your team can focus on decisions, not glue work.

Topic Universe coverage tracking with enforced cooldowns

Oleno’s Topic Universe discovers relevant topics from your KB and sitemap, organizes them into clusters, and labels saturation state. It enforces a 90-day cooldown per subtopic, which curbs cannibalization and unnecessary refreshes. You get fewer rewrites, clearer priorities, and an internal linking map that compounds authority instead of splitting it.

I like how boringly predictable this becomes. Coverage changes? Labels update. A topic hits “saturated”? It won’t resurface for approval until cooldown. That’s how you stop creating two pages for one intent while still publishing daily.

Brief generation with information gain scoring

Every approved topic in Oleno becomes a structured brief with competitive research and an Information Gain Score. Low-differentiation outlines are flagged before writing. Writers get a clear angle, required sources, and section-level objectives. Drafting is faster, and editorial detours shrink because differentiation is enforced at the start—not begged for at the end.

This is the point where rework time drops. If the gain isn’t there, the draft never starts. If it is, the piece adds something the cluster lacks—fresh examples, product-specific guidance, or net-new questions answered.

Deterministic internal links and snippet-ready structure

Oleno injects internal links algorithmically after drafting, using only verified URLs from your sitemap and exact-match anchor text. Each H2 opens with a snippet-ready, three-sentence paragraph. Together, these improve retrievability and reduce drift—the kind that creates thin, repetitive sections users bounce from and search ignores.
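To show what "deterministic" can mean in practice, here's a generic sketch of exact-match link injection. It is not Oleno's implementation; the function name, the Markdown-style link output, and the first-occurrence-only rule are all assumptions for illustration.

```python
import re


def inject_links(text, anchors, verified_urls):
    """Link the first exact-match occurrence of each anchor phrase.

    anchors: dict of anchor phrase -> target URL
    verified_urls: set of URLs known to exist (e.g. taken from the sitemap)
    """
    allowed = {phrase: url for phrase, url in anchors.items() if url in verified_urls}
    if not allowed:
        return text

    # Longest phrases first so "sales onboarding" wins over "onboarding".
    ordered = sorted(allowed, key=len, reverse=True)
    pattern = re.compile(r"\b(?:" + "|".join(re.escape(p) for p in ordered) + r")\b")

    used = set()

    def link(match):
        phrase = match.group(0)
        if phrase in used:
            return phrase  # only the first occurrence gets a link
        used.add(phrase)
        return f"[{phrase}]({allowed[phrase]})"

    # Single pass over the text, so inserted links are never re-matched.
    return pattern.sub(link, text)
```

Same input, same output, every time. Anchors pointing at URLs that aren't in the verified set are skipped rather than guessed at.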

You don’t need to babysit anchors or argue about paragraph structure. The system makes structure consistent and link placement reliable.

Visuals, schema, and publishing handled together

Oleno’s Visual Studio generates brand-consistent hero and inline images and matches product screenshots to relevant sections. JSON-LD schema (Article, FAQ, BreadcrumbList) is generated automatically, then everything ships to WordPress, Webflow, or HubSpot via connectors. QA gates ensure tone, structure, and information gain are met before anything publishes.
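For readers who haven't seen it, this is roughly what a minimal Article JSON-LD payload looks like when built by hand. The helper is a hypothetical sketch using standard schema.org properties, not a description of how Oleno generates its schema.

```python
import json
from datetime import date


def article_jsonld(headline, url, author_name, published):
    """Build a minimal schema.org Article object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "author": {"@type": "Person", "name": author_name},
    }


def as_script_tag(payload):
    """Wrap the payload in the script tag that pages embed for JSON-LD."""
    return '<script type="application/ld+json">' + json.dumps(payload) + "</script>"
```

Generating this from the same inventory row that holds the title and publish date is what removes the "schema paste job" from the last mile.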

You’ll notice what’s missing: manual glue work. No last-mile scramble for images. No schema paste jobs. No copy-paste into the CMS that breaks formatting. Oleno handles it end to end so the 10–15 hours lost to frustrating rework start coming back to your roadmap.

Want to feel the difference in your next sprint? Try Oleno For Free.

Conclusion

You don’t need more topics. You need a map and a system. When you label clusters, measure redundancy, enforce cooldowns, and score information gain before writing, overlap drops and authority compounds. Whether you run the audit manually or let Oleno automate the loops, the outcome is the same: fewer, stronger pages that move the narrative—and the pipeline—forward.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified the writing frameworks that now power Oleno.

Frequently Asked Questions