Contrarian Case: Why Prompt-First AI Workflows Fail at Demand Generation

I get why prompt-first feels like a breakthrough. You type a few lines, get a draft in minutes, and suddenly your Notion board looks full. I’ve done it too. Early wins feel great. Then two weeks pass, the backlog grows, and your calendar turns into a holding pattern of edits, rewrites, and “quick syncs” that are anything but quick.

When I was the sole marketer at PostBeyond, I could write fast because I had the structure in my head. As the team grew, quality slipped because that structure wasn’t codified. Same thing at LevelJump when we were three people. We recorded the CEO, transcribed it, and turned it into posts. Velocity was high. Structure was missing. The stuff didn’t rank, didn’t convert, and didn’t add up to a narrative buyers could follow.

The uncomfortable truth is this: demand gen isn’t a document factory. It’s a system. It has to run continuously, hold a point of view, and stay accurate while volume increases. Prompts help create output. They don’t run systems.

Key Takeaways:

- Prompt-first creates visible activity, but shifts judgment back to people, which multiplies coordination

- Consistency breaks over 30 to 90 days without upstream rules, so quality erodes as volume rises

- The hidden cost isn’t drafting, it’s editorial rework, QA loops, and publishing friction

- A system-first approach encodes voice, claims, and structure so quality becomes automatic

- Standardized briefs and deterministic pipelines reduce drift and make cadence realistic

- Oleno turns strategy into repeatable output with governance, job-based execution, QA gates, and publishing control

Why Prompt-First Feels Productive But Stalls Demand

Prompt-first looks productive because you see drafts, fast. The catch is demand gen isn’t a pile of docs, it’s a continuous system that compounds only when it stays consistent. When every asset starts from a fresh prompt, you reset context, voice, and rules, which inflates rework and tanks reliability.

The output trap hides the system failure

The first week of prompts is intoxicating. Ten drafts appear. Feels like momentum. What you don’t see is how each draft drags judgment back onto humans. Someone has to check voice, pin the narrative, enforce claims, and fit it into an actual plan. Without a system, you’ve just created a review queue that never ends.

This is where teams mistake output for progress. Drafts are loud. System failures are quiet until they aren’t. The moment you try to sustain cadence, drift starts showing up in tone, structure, and messaging. As “Stop Letting AI Guess Why Your Prompts” argues, vague intent and missing context make models guess, which multiplies corrections later.

Why prompts shift judgment back to people

Prompts don’t decide what should exist across the funnel, or how it should be sequenced. People do. Editors carry accuracy. PMM carries the point of view. Ops carries publishing and channel specifics. As output scales, so do handoffs. That’s where the cost shows up, not in drafting time but in coordination overhead.

You feel this when every piece gets “one more pass” because someone caught a nuance the model couldn’t possibly know. Multiply that by 8 pieces per month and three reviewers, and your calendar starts bleeding. The prompt saved 25 minutes up front. It cost hours later. Simple math, painful reality.

What happens to consistency over 90 days?

Ask a simple question. If you prompt the same topic a month apart, do you get the same voice, claims, and frame? Rarely. Templates help a bit, but they don’t encode policy, so tone drifts and claims wobble. Teams compensate with more reviews, more meetings, and slower publishing. Compounding never kicks in, which is the real loss.

We’ve all seen vague briefs turn into incoherent outputs. That isn’t a model problem. It’s a specification problem. The pattern shows up in design too. “Vague Prototyping” documents how underspecified inputs create alignment churn. Content is no different. Without upstream rules, you pay in confusion and rework.

If you already see this happening and want to skip the theory, you can get a walkthrough of the system we use to prevent it. Request a Demo.

The Real Root Cause Is Coordination, Not Creativity

Prompt workflows treat content like isolated tasks. Demand gen is closer to an operating system with governance, job-based outputs, and operational control that runs daily. Without that system, each draft is a snowflake that needs shepherding, which forces leaders to coordinate instead of scale.

What traditional prompt approaches miss

Most prompt flows optimize the first mile. Faster drafts. Fresh angles. That’s useful for exploration. It isn’t enough for operations. Demand gen needs decisions that persist across assets: how you sound, what you believe, what claims are allowed, and what jobs matter for acquisition, education, and conversion.

Even prompt specialization hits a ceiling. Role-based prompting can reduce variance, but it still relies on a person to hold the system in their head. As “Prompt Engineering Jobs Are Obsolete in 2025” points out, the work shifts toward systems and data, not clever phrasing. That’s the inflection. Upstream rules beat downstream edits.

The hidden complexity behind brand safety and claims

High-stakes content needs guardrails. Approved language. Product truth. Banned terms. Disclaimers. Prompts don’t enforce any of it. Humans try, late in the process, which is when mistakes are expensive. That’s where you see legal redlines, confused prospects, and walk-backs that are avoidable with claim control encoded as policy.

When you centralize voice rules, claim boundaries, and product references, you stop arguing about phrasing and start producing consistent assets. It’s not about perfection. It’s about preventing preventable errors. That’s what frees up your editors to focus on nuance instead of whack-a-mole.

Who owns the system when prompts are ad hoc?

If the answer is “no one,” you have a reliability problem. Ownership can’t live inside prompts. It lives in a shared model of how you show up, which jobs you run, and how publishing happens. Without a named owner and encoded rules, leaders become traffic cops. Decisions slow. People get frustrated. The system stalls.

I’ve stepped into teams where strategy sat in decks, content lived in Google Docs, and execution lived in people’s heads. Everyone worked hard. Nothing compounded. It wasn’t an effort problem. It was a coordination problem.

The Compounding Cost Most Teams Underestimate

Draft speed is visible, so it gets the credit. Rework and coordination are quiet, so they get ignored. When you add up the edits, reviews, and publishing friction, the savings from fast drafts often vanish or turn negative.

Editorial rework eats the savings from faster drafts

Let’s pretend a 1,600-word article takes 25 minutes to draft, then 90 minutes to edit and 30 minutes to QA per iteration. Two iterations later, you “saved” 25 minutes and spent 4 hours. Net negative. The more variance the prompt introduces, the more you pay in edits that a system could have prevented.
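To make that arithmetic explicit, here is a minimal sketch using the illustrative figures from the paragraph above (25 minutes to draft, 90 to edit, 30 to QA, two iterations). The function and parameter names are made up for this example and are not part of any tool.

```python
def rework_minutes(edit_min: int, qa_min: int, iterations: int) -> int:
    """Total minutes spent on edit + QA across all review iterations."""
    return (edit_min + qa_min) * iterations


# Illustrative figures from the paragraph above, not measured data.
draft_min = 25
rework = rework_minutes(edit_min=90, qa_min=30, iterations=2)

print(f"Drafting: {draft_min} min")
print(f"Rework:   {rework} min ({rework / 60:.1f} h)")
print(f"Rework is {rework / draft_min:.1f}x the drafting time")
```

Swap in your own numbers. The point is that rework scales with the number of iterations, while the drafting time only happens once.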

You see the same patterns reported by practitioners who study prompt brittleness. Common failure modes include underspecified intent, missing constraints, and outdated context, which all show up as avoidable edits. The punchline is simple: speed without upstream structure creates noise. Noise creates rework. Rework erases savings.

Let’s pretend we do the math on a 3-person team

You, a writer, and a designer. Target is 8 pieces per month. If each piece triggers two 30-minute review calls and 2.5 hours of edits across stakeholders, that’s 3.5 hours per piece, or roughly 28 hours per month on coordination. That’s about six publishable drafts’ worth of time, gone to meetings and markup.
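The same back-of-the-envelope math can be sketched as a small function. This is a hedged illustration: the names are hypothetical, and it assumes each review call is counted once rather than once per attendee, so real calendar cost would be higher.

```python
def monthly_coordination_hours(
    pieces: int,
    calls_per_piece: int,
    call_minutes: int,
    edit_hours_per_piece: float,
) -> float:
    """Hours per month spent on review calls plus stakeholder edits."""
    call_hours_per_piece = calls_per_piece * call_minutes / 60
    return pieces * (call_hours_per_piece + edit_hours_per_piece)


# Figures from the scenario above: 8 pieces, two 30-minute calls, 2.5 h of edits each.
hours = monthly_coordination_hours(
    pieces=8, calls_per_piece=2, call_minutes=30, edit_hours_per_piece=2.5
)
print(f"{hours:.1f} hours per month")  # 8 * (1.0 + 2.5) = 28.0
```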

Now layer on publishing friction. CMS formatting, image specs, internal links, channel variants. Even small issues, like duplicate posts or broken images, can cost hours. The faster you prompt, the more these edge cases pile up, because variability sneaks bugs into the process. That’s not scale. That’s drift.

The Human Side Leaders Feel Every Week

When output wobbles, your calendar becomes the bottleneck. You get pulled into reviews, alignment calls, and last-mile publishing fixes. The team is working hard. You’re still shipping late. Not for lack of ideas, but because the system depends on you to hold it together.

When your calendar becomes the bottleneck

I’ve been there. Launch week, three stakeholder requests, and a dozen drafts that don’t line up. We tried prompt chains to speed it up. In practice, it added more review steps because each chain introduced a new failure mode. We shipped, but two weeks late. That delay wasn’t creative. It was coordination debt.

This is the pattern that burns people out. Leaders feel responsible for the quality bar, so they step in. Editors clean up messaging because they can. Ops pushes publishing across the line. Everyone is doing the right thing locally. The system, globally, is broken. You can feel it in your calendar.

The trust problem that kills velocity

When outputs are inconsistent, leaders stop trusting the engine. They add more approvals, ask for more examples, and create extra checkpoints. That slows everything and demoralizes the team. Trust doesn’t come from “try harder.” It comes from rules enforced by the system and visible pass/fail gates.

Buyers feel inconsistency too. They get mixed messages across channels, which lowers lead quality and drags out cycles. Fixing trust starts upstream. Voice rules. Claim control. Deterministic structure. Once those are in place, approvals can be lighter because the system does the heavy lifting.

If parts of this sound uncomfortably familiar, that’s usually the cue to shift from prompts to orchestration. If you want to see what that looks like in practice, Request a Demo.

A System-First Workflow That Scales Demand Gen

A system-first approach moves decisions upstream and encodes them, so quality becomes automatic and cadence becomes predictable. You still use prompts for exploration. You don’t rely on them for operations.

Encode rules upstream so quality becomes automatic

Decide once. Enforce everywhere. Voice and banned terms, positioning and product claims, required disclaimers, and narrative structure should live in policy that the engine applies by default. That removes the need for reviewers to police basics and reduces the risk of accidental overreach on claims.
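As a rough illustration of “decide once, enforce everywhere,” the sketch below stores banned terms and required disclaimers in one policy object and checks every draft against it. The `ContentPolicy` class, `check_draft` function, and sample terms are all hypothetical, chosen for this example. This is not how any particular product implements it.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentPolicy:
    """Hypothetical policy object: rules decided once, applied to every draft."""
    banned_terms: tuple[str, ...] = ()
    required_disclaimers: tuple[str, ...] = ()


def check_draft(text: str, policy: ContentPolicy) -> list[str]:
    """Return policy violations for a draft; an empty list means it passes."""
    violations: list[str] = []
    for term in policy.banned_terms:
        # Whole-word, case-insensitive match on the banned phrase.
        if re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE):
            violations.append(f"banned term: {term}")
    for disclaimer in policy.required_disclaimers:
        if disclaimer.lower() not in text.lower():
            violations.append(f"missing disclaimer: {disclaimer}")
    return violations


policy = ContentPolicy(
    banned_terms=("guaranteed", "best-in-class"),
    required_disclaimers=("Results vary by team.",),
)
draft = "Our platform delivers guaranteed pipeline growth."
for problem in check_draft(draft, policy):
    print(problem)
```

Because the rules live in data rather than in a reviewer’s memory, the same check runs on every draft, and changing a rule is a one-line edit instead of a team announcement.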

When you do this well, editors spend time on clarity and nuance instead of fixing preventable errors. You also cut legal cycles because content stays inside approved boundaries. The side effect is consistency. Publishing gets faster not because people rush, but because the process stops leaking.

Standardize briefs and structure so outputs snap into place

Briefs are your contract with the engine and the team. Lock headings, intent, key claims, sources, and the story spine. Use section-level drafting to reduce drift and make QA mechanical. When structure is deterministic, you can scale variants and reuse assets across channels without reinventing every time.
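One way to picture a brief as a contract is a locked heading list that every draft is compared against. The sketch below is an assumption-laden example: `Brief`, `structure_issues`, and the sample headings are invented here to show what “mechanical QA” could look like, not taken from a real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Brief:
    """Hypothetical brief: the intent plus the locked section order."""
    intent: str
    headings: tuple[str, ...]


def structure_issues(brief: Brief, draft_headings: list[str]) -> list[str]:
    """Compare a draft's headings to the brief; an empty list means it matches."""
    issues = [f"missing section: {h}" for h in brief.headings if h not in draft_headings]
    issues += [f"unexpected section: {h}" for h in draft_headings if h not in brief.headings]
    if not issues and list(brief.headings) != draft_headings:
        issues.append("section sequence differs from the brief")
    return issues


brief = Brief(
    intent="Explain why prompt-first workflows stall",
    headings=("Problem", "Root cause", "Fix"),
)
print(structure_issues(brief, ["Problem", "Fix", "Bonus tips"]))
print(structure_issues(brief, ["Problem", "Root cause", "Fix"]))
```

A check like this turns “does it follow the brief?” from a judgment call into a pass or fail, which is what lets reviewers spend their time on substance.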

Day to day, this looks simple. Fewer edit meetings, less reactive planning, and a steady cadence that holds even when launches or sales requests spike. You monitor output and exceptions, not every draft. That’s leverage for small teams that don’t have headcount to burn.

How Oleno Turns Strategy Into Repeatable Output

Oleno runs the operational layer of demand gen, so strategy and product truth turn into continuous execution. It starts with governance, runs jobs tied to the funnel, and enforces quality before publishing. The result is steadier output, fewer rewrites, and content that compounds.

Governance that encodes voice, claims, and product truth

Oleno starts with governance. You define voice rules, preferred language, and banned terms. You set positioning, approved claims, and product references. Oleno applies these rules everywhere, so drafts don’t drift off-message or cross claim boundaries. Editors stop policing basics and start refining substance.

Because governance changes slowly, consistency holds as volume grows. Your team isn’t trying to remember rules. The system carries them. That’s how you cut avoidable rework and reduce the risk of brand or legal issues without slowing down every piece for manual checks.

Job-based execution across the funnel

Oleno is organized around demand-gen jobs, not random content. Programmatic SEO for acquisition, category education for shaping the problem, evaluation content for comparisons and alternatives, and product explainers that connect features to value. Each job shares the same execution engine, so coverage grows intentionally.

Inputs are clear. Outputs are structured. Execution is deterministic. That prevents the grab bag effect where three unrelated posts go live and don’t add up to anything. With Oleno, assets reinforce each other because they’re produced inside the same rules and mapped to a shared narrative.

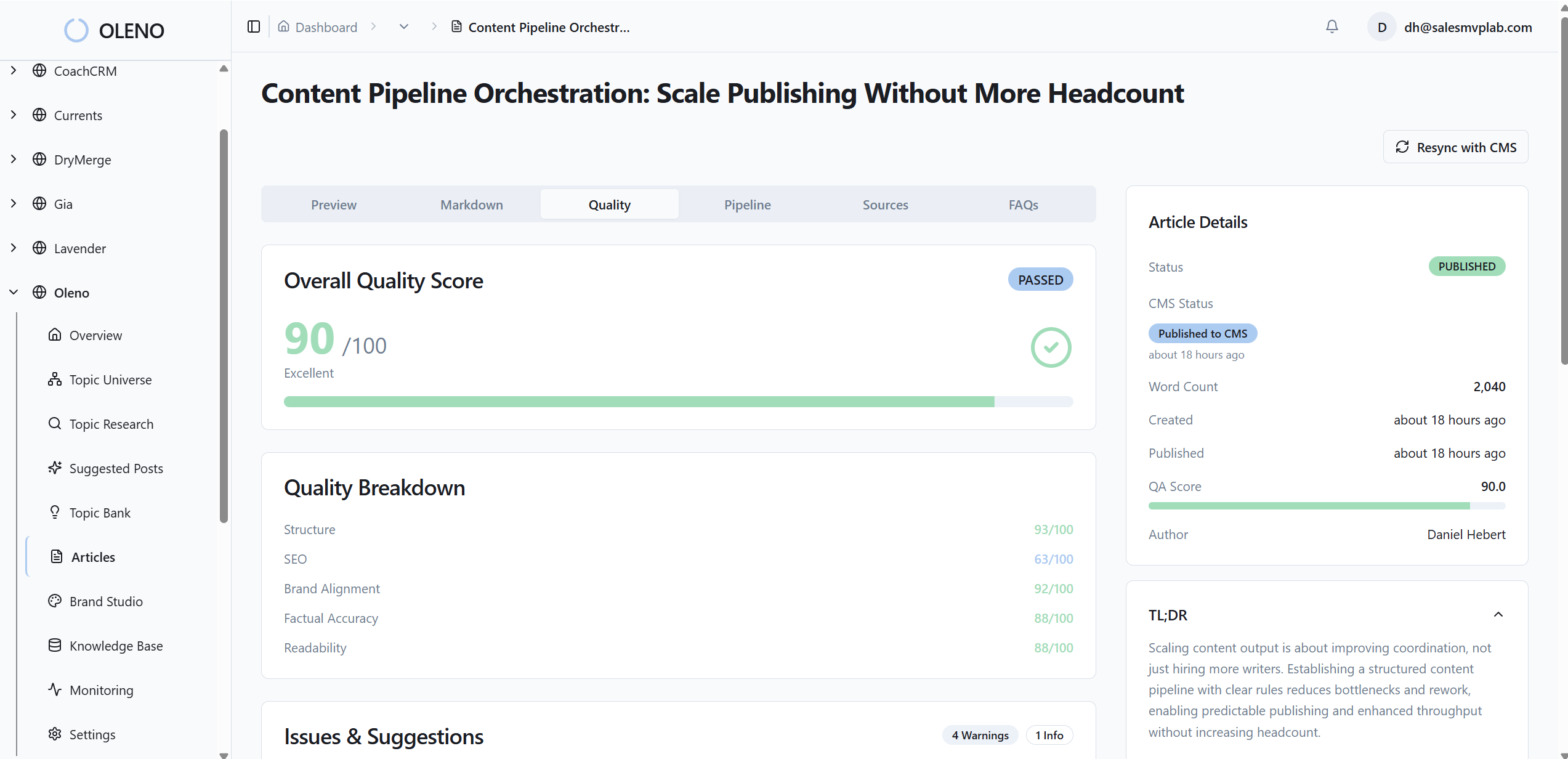

Automated QA gate and CMS publishing operations

Nothing ships unless it passes Oleno’s QA checks for voice alignment, structure, clarity, grounding, and safety. If an article fails, Oleno revises it against the rules until it passes. Then it publishes directly to your CMS with idempotency, so you don’t fight duplicates or half-published drafts.
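For readers who want a concrete picture of a QA gate followed by idempotent publishing, here is a generic sketch. It is not Oleno’s implementation or API: `InMemoryCMS`, `gate_and_publish`, the sample check, and the use of the slug as the idempotency key are all assumptions made for illustration.

```python
from typing import Callable

Check = Callable[[str], list[str]]          # returns failure messages, empty if OK
Reviser = Callable[[str, list[str]], str]   # returns a revised draft


class InMemoryCMS:
    """Stand-in for a real CMS client; stores one post per idempotency key."""

    def __init__(self) -> None:
        self.posts: dict[str, str] = {}

    def publish(self, key: str, body: str) -> bool:
        """Create the post once. Returns False if the key was already published."""
        if key in self.posts:
            return False
        self.posts[key] = body
        return True


def gate_and_publish(slug: str, draft: str, checks: list[Check],
                     revise: Reviser, cms: InMemoryCMS, max_attempts: int = 3) -> bool:
    """Revise until every check passes, then publish at most once per slug."""
    for _ in range(max_attempts):
        failures = [msg for check in checks for msg in check(draft)]
        if not failures:
            cms.publish(slug, draft)  # a repeat call with the same slug is a no-op
            return True
        draft = revise(draft, failures)
    return False  # never passed the gate, so nothing was published


def no_guarantees(text: str) -> list[str]:
    return ["contains 'guaranteed'"] if "guaranteed" in text.lower() else []


def soften(text: str, failures: list[str]) -> str:
    return text.replace("guaranteed", "consistent")


cms = InMemoryCMS()
first = gate_and_publish("why-prompts-stall", "We offer guaranteed results.",
                         [no_guarantees], soften, cms)
second = gate_and_publish("why-prompts-stall", "We offer guaranteed results.",
                          [no_guarantees], soften, cms)
print(first, second, len(cms.posts))
```

Running the job twice leaves exactly one post in the store, which is the property that prevents duplicates when a job retries. The `max_attempts` cap is a design choice in this sketch so a draft that can never pass does not loop forever.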

Distribution can reuse approved content across channels without inventing new messaging, and optional visuals follow your brand guidelines. The net effect is fewer last-mile headaches and a cadence you can trust. That addresses the hidden costs we walked through earlier and gives small teams room to breathe.

If you want to see how Oleno turns your strategy into an engine that runs every week, not just during sprints, Request a Demo.

Conclusion

Prompt-first is great for exploration. It’s fragile for operations. Demand gen needs a system that holds your voice, product truth, and narrative while output increases. When you move decisions upstream and let software enforce the rules, you cut rework, protect claims, and keep publishing steady. That’s how the work compounds instead of resetting every quarter.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions