Deterministic Content Systems: Map-to-Publish Playbook

Most teams try to fix content by writing more. I’ve done that sprint. It works until it doesn’t. You end up with a wall of drafts, still debating topics, still patching links, still wrangling images at the eleventh hour. You’re busy, but not building authority. That’s the tell.

Here’s the shift. Authority comes from a system that makes the important decisions for you, the same way every time. Strategy, differentiation, structure, visuals, and publishing run through one governed pipeline, not five committees and a heroic editor. When execution is deterministic, your team ships consistently and spends its energy on insight, not cleanup.

Key Takeaways:

- Replace tool sprawl with a rule-based pipeline from topic mapping to publish

- Enforce information gain before drafting to avoid thin, repetitive coverage

- Push accuracy into code: link injection, schema, alt text, and field mapping

- Standardize snippet-ready H2 openers to increase citation eligibility

- Use cooldowns and cluster coverage to prevent cannibalization and drift

- Make shipping binary with a QA gate: pass or retry, no “almost”

- Treat content as infrastructure that compounds, not one-off projects

Why Publishing More Drafts Will Not Build Authority

Publishing more drafts doesn’t create authority; coordinated execution does. When rules decide topic selection, differentiation, structure, and publishing, you reduce variance and rework. For example, standardizing snippet-ready section openers and schema makes your content easier for search and AI systems to cite.

The Real Bottleneck Is Disconnection, Not Writing

Your problem isn’t blank pages. It’s that strategy, drafting, visuals, and publishing live in separate tools with separate owners. Decisions drift. Variance creeps in. Then the rework begins: rewriting sections to fit a new angle, redesigning images to match a brand guide, republishing to fix broken links. I’ve seen it too many times.

What you actually need is a single pipeline that makes yes/no decisions without meetings. Topics advance only when coverage and saturation rules say they should. Briefs pass when information gain is clear, or they don’t. Links and schema are injected after the draft, not manually sprinkled. That’s how quality becomes predictable, and predictable starts to feel fast.

If you need a governance blueprint to steal, public-sector teams publish theirs for a reason. The operational patterns in GOV.UK’s A Guide to Using AI in the Public Sector translate well here: make decisions explicit, encode them in process, and test before you deploy.

What Is a Deterministic Content System, and Why Should You Care?

Deterministic means rules decide, not taste. Topic coverage and saturation determine priorities. Briefs must clear an information gain score before anything is written. Internal links and schema are injected programmatically. QA doesn’t “feel right”; it passes or fails against defined checks. Fewer debates, more shipping.

Care because variance is the tax on every team’s calendar. If your system can’t consistently produce snippet-ready H2 openers, brand-consistent images, and correct schema, you’re paying that tax multiple times per article. I’d rather you invest the cycles in stronger arguments and real examples, the stuff that actually moves pipeline.

Fragmentation makes small decisions expensive. Determinism makes them trivial. That contrast is where the compounding returns come from.

Ready to see what a governed pipeline looks like in practice, end to end? If you’re curious, you can Try Generating 3 Free Test Articles Now.

Variance, Not Velocity, Is Killing Your Pipeline

Velocity without coordination increases noise and cannibalization. Map your topic universe, label cluster saturation, and enforce cooldowns to keep coverage balanced. Think “idempotent runs” for content: the same input rules produce the same, reliable output. Ansible playbooks do this in ops; you can mirror it in content.

What Traditional Approaches Miss About Topic Coverage

Picking topics off a keyword list ignores your own map. Not all clusters need attention right now, and some should be avoided entirely for a while. Without a system that tracks coverage and saturation across pillars, you’ll naturally over-publish where you feel comfortable and under-publish where authority would grow.

A Topic Universe solves this. Clusters get labeled as underserved, healthy, well-covered, or saturated. Recency is enforced. You stop writing the same sales-enablement basics piece twice in a quarter because the cool new angle felt urgent. You build breadth and depth where it counts, not wherever a spreadsheet last landed.

There’s a reliable mental model for this: the way ops teams use playbooks to keep environments consistent. The structure in Ansible Playbooks: Introduction is a useful analog: declare inventory, run ordered tasks, check results, avoid drift.

How Do You Measure Coverage Without Rank Trackers?

Track what you can control. Your sitemap and knowledge base are ground truth. Score clusters on health and apply a 90-day cooldown per topic. Feed the gaps into a daily suggestion queue that never runs dry. The goal isn’t guessing at the SERP; it’s consistency in how your narrative grows.

When you do this, you’ll notice two shifts. First, fewer duplicate pitches in planning. Second, less internal debate because priorities are encoded, not argued. I’ve watched teams reduce weekly editorial time by half just by removing the “what should we write?” loop and replacing it with “which approved gap is next?”

The Hidden Costs of Non-Deterministic Publishing

Non-deterministic publishing quietly taxes your team a few minutes at a time, and the minutes add up. Broken internal links, missing JSON-LD, or off-brand visuals don’t always trigger alarms, but they drag performance and credibility. You control this by pushing correctness into code and validating before publish, not after.

Engineering Hours Lost to Manual Handoffs

Let’s pretend you ship 20 posts per month. If each one needs 45 minutes to fix links, schema, filenames, and alt text, that’s 15 hours of monthly rework. Add another 10 hours when designers hunt for screenshots. Multiply by two if your CMS strips formatting and you republish. Those hours aren’t strategic; they’re preventable.
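The same back-of-the-envelope math as a quick calculation. The function name and parameters are made up for illustration, and it assumes the "multiply by two" case doubles the whole total, which is one reading of the paragraph above.

```python
def monthly_rework_hours(posts, fix_minutes_per_post, screenshot_hours=0.0, republish=False):
    """Estimate monthly rework hours from per-post fix time plus screenshot hunting."""
    hours = posts * fix_minutes_per_post / 60 + screenshot_hours
    # Assumption: a republish cycle doubles the whole total.
    return hours * 2 if republish else hours


print(monthly_rework_hours(20, 45))                                             # 15.0
print(monthly_rework_hours(20, 45, screenshot_hours=10))                        # 25.0
print(monthly_rework_hours(20, 45, screenshot_hours=10, republish=True))        # 50.0
```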

The simple fix: move the work downstream into post-processing. Inject 5–8 internal links from a verified sitemap. Generate JSON-LD. Produce descriptive alt text and filenames. Do it in code after draft, before QA. When you eliminate manual handoffs, engineering touches publishing less, and the whole machine stops stalling on small things.

What Happens When Links and Schema Are Wrong?

Broken links create orphaned content and crawl waste. Missing or invalid JSON-LD quietly reduces your eligibility for rich results and snippet capture. Tools might not yell at you. Results will. Schema is not a creative exercise; it’s a correctness one.

Push structured data into your pipeline and treat it like a build artifact. Validate it. Ship it. If you want a practical reference for what “right” looks like, the examples in Google Search Central: Introduction to Structured Data are a good baseline. Do that by default, and you stop losing by mistake.
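Here is a minimal sketch of what "validate it like a build artifact" could look like. The required-property set is a hypothetical house rule, not Google's list of required fields, and a real pipeline would likely also run an external validator.

```python
import json

# Hypothetical house rule: properties we insist on for every Article block.
# This is an assumed internal checklist, not an official requirement list.
REQUIRED_ARTICLE_KEYS = {"@context", "@type", "headline", "datePublished", "author"}


def validate_article_jsonld(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the block passes."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level JSON-LD value must be an object"]
    missing = sorted(REQUIRED_ARTICLE_KEYS - set(data))
    problems = [f"missing property: {key}" for key in missing]
    if data.get("@type") != "Article":
        problems.append("@type must be 'Article'")
    return problems
```

An empty list means ship; anything else fails the build before the post ever reaches the CMS.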

Still stitching this together manually every week? It might be time to offload the rules and let the system handle the plumbing. If you’re there, Try Using an Autonomous Content Engine for Always-On Publishing.

When Rework Becomes the Job

Rework creeps in when structure lives in people’s heads, not in your pipeline. This shows up as endless edits, late design changes, and topic drift that no one intended. Move decisions upstream, encode them, and free your team to focus on the parts that actually require judgment.

The Founder-Led Content Trap I Keep Seeing

We were a team of three: CEO, VP Product, and me. We recorded videos, transcribed them, shipped posts. It worked for speed. It failed on structure. No topic map. No snippet-ready sections. No deterministic linking. Good ideas, weak performance. I’ve seen the same pattern across small SaaS teams more times than I can count.

The fix isn’t “write more.” It’s to build a system that keeps your best thinking intact while the pipeline handles the rules. Founders have the insight. The pipeline needs to handle differentiation, structure, and publishing so you don’t spend Saturdays retrofitting alt text or arguing over headings.

The 3 A.M. Content Fire Drill No One Wants

A post is “done” until someone notices the screenshots are wrong, alt text is empty, and the CMS stripped your schema. Now you’re back in the editor, browsers are open, and the thread is 30 messages deep. It’s not an emergency; it’s a missing rule.

Move those decisions upstream. Decide image placement and alt text generation in the pipeline. Map CMS fields and validate delivery. Send as draft or live, but make it deterministic. The late-night ping disappears when publishing is yes/no, not “it depends who touched it last.”

Map-to-Publish: A Deterministic 7-Step Runbook

A deterministic runbook turns “we should publish more” into reliable outputs. Seven steps: map topics, enforce cooldowns, generate briefs with information gain, draft to snippet-ready patterns, inject links and schema, gate quality, and publish via mapped connectors. Example: even a two-person team can run this weekly without meetings.

Step 1: Initialize the Topic Universe

Start with your site and knowledge base. Define pillars and compute cluster coverage and saturation. Label clusters as underserved, healthy, well-covered, or saturated. No brief starts without a cluster and saturation tag. That single constraint removes accidental duplicates before they get oxygen.

When this lives in your system, editorial meetings get shorter because prioritization is encoded. The Topic Universe becomes your source of truth: not just what you could cover, but what you should cover next based on the whole map, not one keyword.
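One way to picture the labeling step is the sketch below. The four labels come from this article; the cutoffs, the `Cluster` fields, and the published-to-planned ratio are assumptions for illustration, not a prescribed scoring method.

```python
from dataclasses import dataclass

# Hypothetical cutoffs: the four labels are from the article, the numbers are not.
LABEL_THRESHOLDS = [(0.25, "underserved"), (0.50, "healthy"), (0.80, "well-covered")]


@dataclass
class Cluster:
    name: str
    published: int  # articles already live in this cluster
    planned: int    # articles the pillar plan calls for


def saturation_label(cluster: Cluster) -> str:
    """Map a cluster's coverage ratio to one of the four saturation labels."""
    ratio = cluster.published / cluster.planned if cluster.planned else 1.0
    for cutoff, label in LABEL_THRESHOLDS:
        if ratio < cutoff:
            return label
    return "saturated"


print(saturation_label(Cluster("sales enablement", published=9, planned=10)))  # saturated
```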

Step 2: Apply Prioritization Rules and Cooldowns

Enforce a 90-day cooldown per topic. Use cluster scoring to prioritize gaps. Generate a daily suggestion queue. Approved topics roll forward automatically. You stop juggling spreadsheets and start moving methodically across pillars.

Cooldowns feel like a brake. They’re actually a steering wheel. They force coverage across your landscape, reduce cannibalization, and give each article space to earn its keep before you circle back with a refresh.
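A rough sketch of the cooldown filter and suggestion queue follows. The 90-day window is from this article; the tuple layout and the idea that a higher `gap_score` means a bigger gap are assumptions.

```python
from datetime import date, timedelta

COOLDOWN = timedelta(days=90)  # the 90-day window from the article


def suggestion_queue(topics, today):
    """Return topic names that are off cooldown, biggest gap first.

    topics: iterable of (name, gap_score, last_published) tuples, where
    last_published is a date or None for never-covered topics.
    """
    ready = [t for t in topics if t[2] is None or today - t[2] >= COOLDOWN]
    ready.sort(key=lambda t: t[1], reverse=True)
    return [name for name, _, _ in ready]


today = date(2025, 6, 1)
topics = [
    ("pricing pages", 0.9, date(2025, 5, 1)),      # still cooling down
    ("onboarding emails", 0.5, None),              # never covered
    ("sales enablement", 0.7, date(2025, 1, 1)),   # off cooldown
]
print(suggestion_queue(topics, today))  # ['sales enablement', 'onboarding emails']
```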

Step 3: Generate Automated Briefs With Information Gain Thresholds

Briefs include competitive research, an information gain target, and 3–5 authoritative external link candidates. Set a minimum threshold. Low-gain briefs don’t advance; promising ones do. Sections are outlined to support snippet-ready openers later.

This isn’t academic. It’s cost control. When you enforce differentiation before writing, you eliminate the “rewrite this to sound original” cycle. You also protect your brand from sounding like the nth summary of what’s already on page one.
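As a sketch of the gate, assume information gain is the share of a brief's planned points that competitors don't already cover. That definition and the 0.4 threshold are both placeholders; the article doesn't specify how the score is computed.

```python
MIN_INFORMATION_GAIN = 0.4  # hypothetical threshold


def information_gain(brief_points: set[str], competitor_points: set[str]) -> float:
    """Share of the brief's points that competitors have not already covered."""
    if not brief_points:
        return 0.0
    novel = brief_points - competitor_points
    return len(novel) / len(brief_points)


def brief_advances(brief_points, competitor_points, threshold=MIN_INFORMATION_GAIN) -> bool:
    """Binary gate: the brief either clears the threshold or it doesn't."""
    return information_gain(brief_points, competitor_points) >= threshold


covered = {"what is a cooldown", "why clusters matter"}
brief = {"what is a cooldown", "why clusters matter", "cooldown math", "queue design"}
print(information_gain(brief, covered))  # 0.5
print(brief_advances(brief, covered))    # True
```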

Step 4: Draft With Snippet-Ready Structure

Write to a fixed H2 opening pattern: a 40–60 word direct answer, supporting context, then a quick example. Keep paragraph sizing consistent. Use your chosen content archetype so the narrative holds together. Don’t inject links or schema in the draft.

This keeps creative energy on arguments, examples, and lived experience, where it belongs, while structure stays consistent. The outcome is cleaner for readers and friendlier for machines that quote sections out of context.
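The opener rule is easy to check mechanically. A minimal sketch, assuming sections arrive as plain text with blank lines between paragraphs (the function name is illustrative):

```python
def opener_is_snippet_ready(section_text: str, min_words: int = 40, max_words: int = 60) -> bool:
    """Check that the first paragraph under an H2 is a 40-60 word direct answer."""
    first_paragraph = section_text.strip().split("\n\n", 1)[0]
    return min_words <= len(first_paragraph.split()) <= max_words


section = " ".join(["word"] * 50) + "\n\n" + " ".join(["context"] * 120)
print(opener_is_snippet_ready(section))  # True
```

It only counts words; whether the opener actually answers the question is still a judgment call for the writer.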

Step 5: Run Deterministic Post-Processing

After the draft, inject 5–8 internal links from a verified sitemap using exact page titles as anchors. Generate JSON-LD for Article, FAQ, and BreadcrumbList where it fits. Create descriptive alt text and SEO-friendly filenames. All in code. No guesswork.

Think of this as your build step. The artifact is content that’s structurally sound and ready for QA, not a draft that “probably” has the right links and “maybe” the right schema.
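Here is a simplified sketch of that build step for a markdown draft. The sitemap is assumed to be a title-to-URL mapping, the function names are hypothetical, and the matching is deliberately naive: it links the first plain-text mention of each title and won't handle a title nested inside another link's text.

```python
import json
import re


def inject_internal_links(markdown: str, sitemap: dict[str, str], max_links: int = 8) -> str:
    """Link the first unlinked mention of each page title, up to max_links."""
    linked = 0
    for title, url in sitemap.items():
        if linked >= max_links:
            break
        # Skip mentions that are already the anchor text of a markdown link.
        pattern = re.compile(r"(?<!\[)\b" + re.escape(title) + r"\b(?!\]\()")
        markdown, count = pattern.subn(lambda m: f"[{title}]({url})", markdown, count=1)
        linked += count
    return markdown


def article_jsonld(headline: str, date_published: str, author: str) -> str:
    """Build a basic schema.org Article block as a JSON string."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author},
    }, indent=2)


draft = "Read about the Topic Universe and the Topic Universe again."
print(inject_internal_links(draft, {"Topic Universe": "/topic-universe"}))
```

Because the same draft and the same sitemap always produce the same output, a rerun is safe, which is the point of doing this in code.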

Step 6: Enforce a QA Gate With Refinement Loops

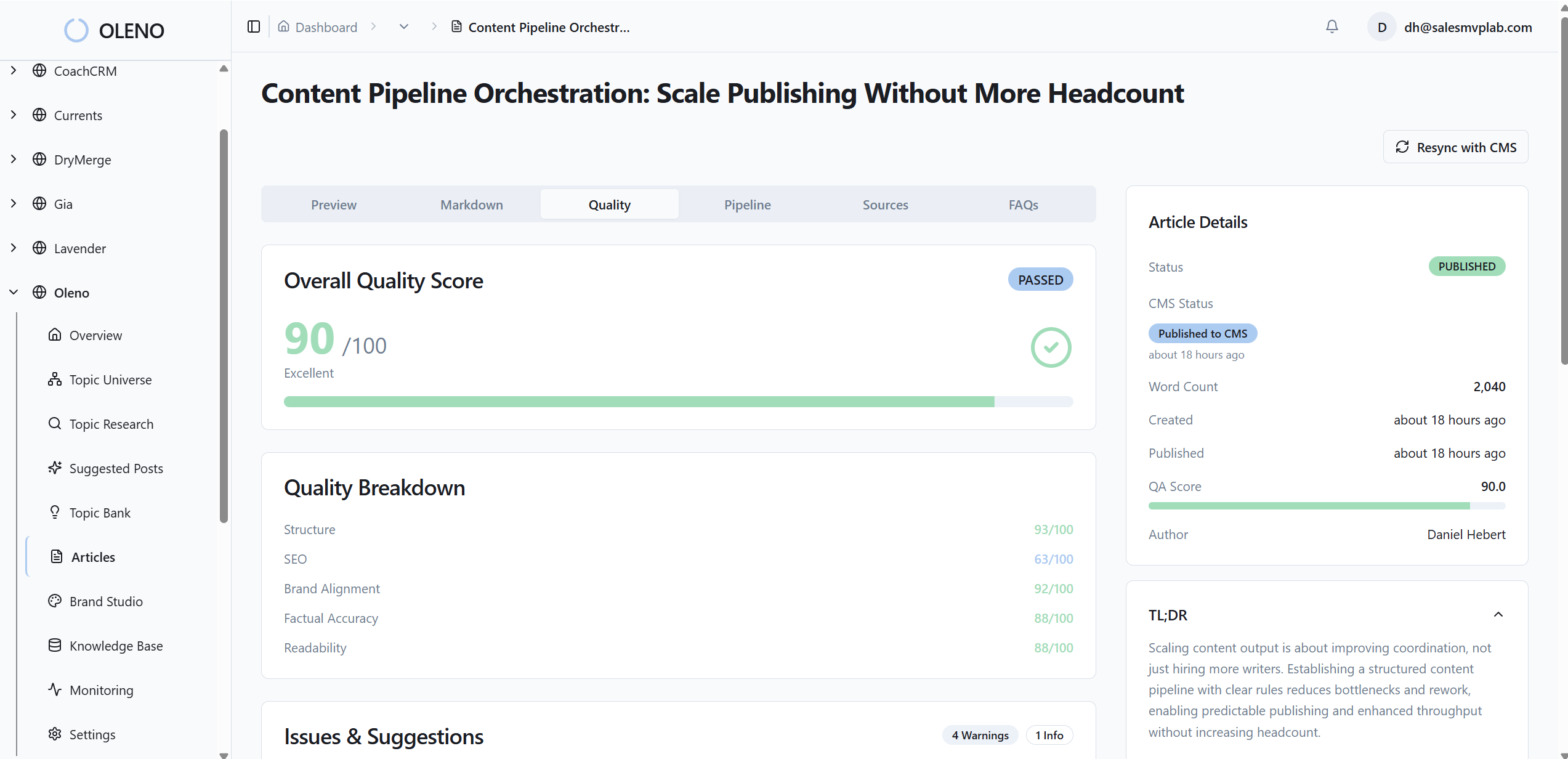

Score every article against 80+ pass/fail criteria: structure, brand alignment, information gain, snippet readiness, visual placement, and file hygiene. Misses trigger automated refinement and re-tests. Shipping is binary: either pass or retry. No manual approval queues.

When quality is a system, not a meeting, your calendar opens up. You get fewer subjective edits and more reliable throughput. And fewer 47-comment threads about commas.
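A stripped-down sketch of the gate-and-retry loop is below. The check names and the `refine` callback are placeholders, and the attempt cap is an assumption added so a piece that can't be fixed automatically doesn't loop forever.

```python
from typing import Callable

Check = Callable[[str], bool]


def run_qa_gate(article: str, checks: dict[str, Check],
                refine: Callable[[str, list[str]], str], max_attempts: int = 3):
    """Return (passed, article, failures). The outcome is binary: pass or retry."""
    failures: list[str] = []
    for attempt in range(max_attempts):
        failures = [name for name, check in checks.items() if not check(article)]
        if not failures:
            return True, article, []
        if attempt < max_attempts - 1:
            # Hand the failing check names to the refinement step, then re-test.
            article = refine(article, failures)
    return False, article, failures


checks = {"has_h2": lambda text: "## " in text}
add_heading = lambda text, failures: "## Heading\n" + text
print(run_qa_gate("body", checks, add_heading))  # (True, '## Heading\nbody', [])
```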

Step 7: Publish Through Mapped Connectors and Verify Delivery

Convert markdown to CMS-ready HTML, map fields, and send as draft or live. Prevent duplicates with idempotent checks. Verify publish logs and notify on success or failure. Keep raw markdown and version history for audit.

You don’t need a dashboard to know it worked; you need a predictable delivery step that either succeeded or retried with a log you can trust.
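To make the idempotent check concrete, here is a small sketch with an in-memory stand-in for the CMS. `InMemoryCMS` and its `upsert` method are invented for illustration and don't mirror any real connector's API; the slug is assumed to be the stable key.

```python
class InMemoryCMS:
    """Stand-in for a real CMS connector; a hypothetical interface."""

    def __init__(self):
        self.posts = {}

    def upsert(self, key, fields):
        """Store the post and report whether it was newly created."""
        created = key not in self.posts
        self.posts[key] = fields
        return created


def publish(cms, slug, title, html, status="draft"):
    """Publish once per slug: identical reruns are skipped, changes update in place."""
    fields = {"slug": slug, "title": title, "body": html, "status": status}
    if cms.posts.get(slug) == fields:
        return "skipped"
    return "created" if cms.upsert(slug, fields) else "updated"


cms = InMemoryCMS()
print(publish(cms, "map-to-publish", "Playbook", "<p>v1</p>"))  # created
print(publish(cms, "map-to-publish", "Playbook", "<p>v1</p>"))  # skipped
print(publish(cms, "map-to-publish", "Playbook", "<p>v2</p>"))  # updated
```

Running the same publish twice leaves exactly one post, so a retry after a timeout can't create a duplicate.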

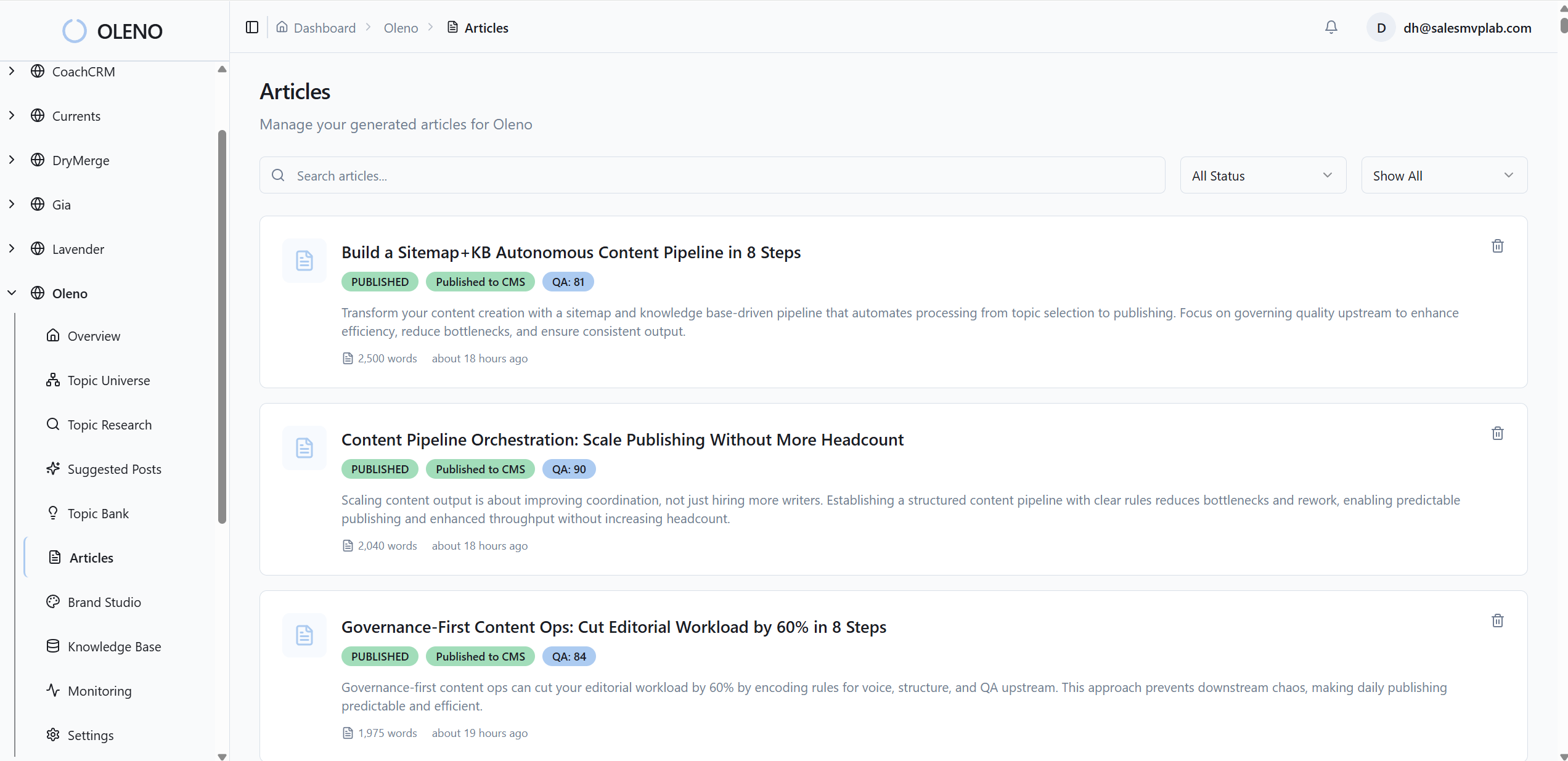

How Oleno Enforces a Map-to-Publish Pipeline End to End

Oleno operationalizes this entire runbook so you don’t have to duct-tape tools. It maps topics with cooldowns, enforces information gain before drafting, structures content for snippet eligibility, injects internal links and schema deterministically, runs an 80+ check QA gate, generates brand-consistent visuals, and publishes via mapped connectors, on repeat.

Oleno starts with Topic Universe. It turns your sitemap and knowledge base into clusters labeled for saturation, then enforces a 90-day cooldown per topic. You get daily suggestions prioritized by gaps, so “what’s next?” is answered by the system, not a meeting. Oleno’s brief generation bakes in competitive research and calculates an information gain score; low-differentiation outlines are flagged early, which prevents thin content from ever hitting draft.

After drafting, Oleno’s deterministic steps take over: internal links are injected from verified sitemaps with exact-match anchors, and JSON-LD for Article, FAQ, and BreadcrumbList is generated and validated automatically. Visual Studio creates brand-consistent images, matches product screenshots to relevant sections, and writes alt text and filenames. Then the QA gate evaluates structure, brand alignment, snippet readiness, and visual placement; misses trigger refinement loops until the piece passes. Final publishing uses connectors to WordPress, Webflow, or HubSpot with field mapping and duplicate prevention.

If your hidden costs today are link fixes, schema do-overs, and last-minute image scrambles, Oleno reduces that rework by pushing correctness into code and making shipping binary. Want to pressure-test your own workflow against a deterministic pipeline? You can Try Oleno for Free.

Conclusion

You don’t need more drafts. You need fewer decisions left to taste. Map your topics, enforce differentiation, standardize structure, push accuracy into code, and make shipping binary. That’s how content shifts from heroics to infrastructure: reliable, on-brand, and compounding over time. When the rules carry the weight, your team finally gets to focus on the story.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions