Editorial Canary Releases: Validate Content Quality with Staged Publishing

Publishing everything at once is why teams keep firefighting. Editorial canary releases fix that by staging exposure, watching signals, then scaling only when it is safe. The payoff is simple, and big. You keep your cadence high, catch expensive mistakes early, and stop living in rollback hell. If you have never tried editorial canary releases, you are leaving speed and safety on the table.

I learned this the hard way. Volume without guardrails looks great until it breaks trust. Voice drifts. Facts slip. SEO structure goes sideways. Then you lose hours patching live pages while the next batch waits. Staged publishing flips that equation. You validate in a small blast radius first, then expand when the data looks clean.

Key Takeaways:

- Define a minimal canary scope, such as a small audience or a limited index state, with clear KPIs before you publish

- Run automated gates both preflight and in-canary: plagiarism, RAG factual checks, SEO structure, and brand-voice classifiers

- Set monitoring thresholds and hard rollback triggers to prevent ranking or brand damage

- Use an automation recipe: queue, preflight, canary, bake window, promote or rollback, postmortem

- Scale the pattern across studios, such as SEO, Competitive, and Thought Leadership, with shared governance

- Expect 70% fewer publish-related regressions and about 80% faster remediation within the first month

Why Editorial Canary Releases Stop Costly Rework

Editorial canary releases stop costly rework by limiting initial exposure, measuring objective quality signals, and only promoting content when it passes. You treat content like software, push to a safe slice first, then watch. The result is fewer public mistakes and far less cleanup work.

What Is An Editorial Canary Release, Really?

Think of each article as a release candidate. You publish to a small audience or hold it in a limited index state, then measure plagiarism, factuality, structure, and early engagement. When signals clear your thresholds, you widen exposure. When they do not, you pause, fix, and re-run gates before the wider world sees it.
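To make the loop concrete, here is a minimal Python sketch of that lifecycle as a small state machine. The stage names and the single `signals_pass` flag are assumptions for illustration, not part of any specific tool.

```python
from enum import Enum


class Stage(Enum):
    """Hypothetical lifecycle stages for one article treated as a release candidate."""
    PREFLIGHT = "preflight"  # automated gates run before any exposure
    CANARY = "canary"        # small audience or limited index state
    FULL = "full"            # widened exposure after signals clear
    PAUSED = "paused"        # held back while someone fixes the problem


def next_stage(stage: Stage, signals_pass: bool) -> Stage:
    """Advance one step: widen on a pass, pause on a fail, re-run gates after a fix."""
    if stage is Stage.FULL:
        return Stage.FULL  # terminal in this sketch; post-promotion monitoring is out of scope
    if stage is Stage.PAUSED:
        # A fixed article goes back through preflight instead of jumping to full exposure.
        return Stage.PREFLIGHT
    if not signals_pass:
        return Stage.PAUSED
    return Stage.CANARY if stage is Stage.PREFLIGHT else Stage.FULL
```

Routing a paused article back to preflight, rather than straight to full exposure, is what keeps the "fix, and re-run gates" step honest.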

I like the software analogy because it is honest about risk. Shipping to one percent first makes problems cheap. A missing H2 or a broken canonical tag is a quick fix when only a sliver of traffic saw it. It becomes a ranking problem when the entire audience hits it. Even a simple two-hour bake window can catch issues that slip past manual review.

If you want a mental model, study canary releases in engineering. The concept is old, and it works. A plain-English overview from Cloudflare on canary releases shows how staged rollouts reduce risk without stalling delivery. Content can run on the same principle.

The Big Mistake: Publish Everything, Pray Later

Publishing everything now feels efficient. It is wrong. You push risk into the future, then pay a premium when it lands. Brand voice drifts one article at a time. SEO structure breaks in small ways that compound. Factual slips sneak into competitive posts and linger for weeks.

You pay for that mistake in three currencies. Time, because you rewrite live pages and re-run approvals. Trust, because customers notice when facts wobble. Ranking, because broken structure and schema cost you discovery. Canary releases force fast validation where it belongs, before full exposure, so problems cost minutes, not weeks.

When teams finally switch, they wonder why they waited. The cadence does not slow. It gets steadier. Everyone sleeps better when a rollout window can pause a miss before it snowballs.

The Root Cause Is Systems, Not Writers

Quality problems look like writer mistakes, but the root cause is a missing system. Without a single source of truth and automated gates, quality depends on who reviewed the draft on Tuesday. That is a coin flip, not a process. Editorial canary releases give you a repeatable way to enforce rules and contain risk.

Symptom Versus System: Where Quality Actually Breaks

Missed facts, weak structure, and voice drift look like individual misses. They are system failures. When brand voice lives in a PDF, product truth lives in Slack, and SEO rules live in someone’s head, errors repeat. You are fixing symptoms, not causes.

Strong systems set rules once, enforce them everywhere, then measure. Writers do better work when the guardrails are clear. Editors review faster when obvious checks are automated. Leaders trust the output when review does not depend on who had coffee. The loop is simple, and it is rare.

If you need a reference for what to measure, usability folks have been tracking content quality signals for years. The Nielsen Norman Group’s guidance on content usability maps cleanly to what we validate in a canary.

The Approval Chain Is Your Bottleneck

Six reviewers with shifting preferences is not quality control. It is a delay machine. You lose days to Slack drive-bys and subjective edits that conflict with last week’s feedback. Nothing is auditable. Everything is a debate.

Canary releases do not remove human judgment. They cage it. Objective gates do the heavy lifting, then a single governed checkpoint handles tone and sensitive claims. Pass or fail, then move. That cuts cycle time and reduces risk because decisions happen inside a clear frame. People stop arguing about taste and start aligning on rules.

Editorial Canary Releases By The Numbers: Risk, Cost, Signals

Canaries move discovery earlier, where fixes are cheap. Late fixes can easily cost 5 to 10 times more than early ones once you account for edits, re-approvals, republishing, and lost sessions. Objective signals and short bake windows keep throughput high while protecting brand and rankings.

Quantify The Cost Of Late Fixes

Add it up. Hotfix edits, re-approvals, and republishing. Re-indexing delays. Lost sessions from broken structure. Trust hits when errors go public. Even if your data is rough, the pattern is clear. Early catches cost minutes. Late catches cost days.

There is a ranking cost too. A broken canonical or missing schema can knock a page out of discovery for a week. That is real pipeline loss. The fix is not hard. You move validation earlier so you are not cleaning up after the fact. A canary window is cheap insurance.

Google documents the mechanics of crawl and index controls. If you need a refresher on how to stage exposure safely, read the Search Central overview of crawling and indexing. It is not magic. It is just controlled access and metadata used on purpose.

Which Signals Should Trigger A Rollback?

Start with machine-checkable metrics: a plagiarism score above threshold, factual claims that fail against your product knowledge base, missing H2 structure, broken schema, or bad canonicals. Then add human spot checks for voice and sensitive claims. If any hard threshold fails, pause or roll back. No debates, just rules everyone accepts.
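A minimal sketch of those rules in Python follows. The `CanarySignals` fields and the 10 percent plagiarism ceiling are hypothetical placeholders; swap in whatever your gates actually measure.

```python
from dataclasses import dataclass, field

PLAGIARISM_MAX_PCT = 10.0  # hypothetical threshold; tune to your own tolerance


@dataclass
class CanarySignals:
    """Hypothetical bundle of gate results for one canary."""
    plagiarism_pct: float
    failed_claims: int       # claims that did not match the product knowledge base
    has_h2_structure: bool
    schema_valid: bool
    canonical_ok: bool
    human_flags: list[str] = field(default_factory=list)  # voice or sensitive-claim notes


def hard_failures(s: CanarySignals) -> list[str]:
    """List every machine-checkable rule that failed, in a fixed order."""
    failures = []
    if s.plagiarism_pct >= PLAGIARISM_MAX_PCT:
        failures.append("plagiarism")
    if s.failed_claims > 0:
        failures.append("factual")
    if not s.has_h2_structure:
        failures.append("h2_structure")
    if not s.schema_valid:
        failures.append("schema")
    if not s.canonical_ok:
        failures.append("canonical")
    return failures


def decide(s: CanarySignals) -> str:
    """Any hard failure rolls back; human flags alone pause for review; otherwise promote."""
    if hard_failures(s):
        return "rollback"
    if s.human_flags:
        return "pause"
    return "promote"
```

Keeping hard failures and human flags separate mirrors the split above: machines decide the objective rules, and people only weigh in on voice and sensitive claims.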

The goal is not perfection. It is repeatability. A pass means promote. A fail means fix forward. When rules are clear, teams stop arguing and start shipping. That alone erases a ton of waste.

For technical staging, robots metadata is your friend. Google's documentation on the robots meta tag and the X-Robots-Tag HTTP header explains how to limit index state while you watch signals. Use it to keep canaries contained until they clear.
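As a sketch, assuming your CMS or edge layer lets you set response headers and head tags per page, the two controls Google documents look like this. The `stage` labels are hypothetical. Google can only honor `noindex` if the page is not blocked in robots.txt, because the crawler has to fetch the page to see the directive.

```python
def robots_controls(stage: str) -> tuple[dict[str, str], str]:
    """Return (HTTP headers, HTML meta tag) for a hypothetical publishing stage label."""
    if stage == "canary":
        # Either control alone is enough; the header also works for non-HTML files such as PDFs.
        return {"X-Robots-Tag": "noindex"}, '<meta name="robots" content="noindex">'
    # Promoted pages send no directive, so the crawler's defaults (index, follow) apply.
    return {}, ""
```

Promotion then becomes a matter of flipping the stage label and letting the page be recrawled.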

The Bake Time That Catches Hidden Problems

Give each canary a short observation window. Two to twenty-four hours covers most issues. Monitors watch error rates, link integrity, and SEO structure in the background. Long enough to surface problems, short enough to keep throughput high.

When the window closes clean, promote automatically. When it does not, roll back and open a repair task. No panic. No drama. Just a controlled loop that keeps quality high while you scale.
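The window logic is small enough to sketch. This assumes your monitors report failures as a list of labels and uses the two-to-twenty-four-hour range from above as a sanity check; `bake_decision` is a hypothetical name, not a library call.

```python
from datetime import datetime, timedelta, timezone


def bake_decision(started_at: datetime, now: datetime, window: timedelta,
                  monitor_failures: list[str]) -> str:
    """Roll back as soon as a monitor fails; promote once the window closes clean."""
    if not timedelta(hours=2) <= window <= timedelta(hours=24):
        raise ValueError("bake window outside the 2 to 24 hour range used in this sketch")
    if monitor_failures:
        return "rollback"  # the caller would also open a repair task here
    if now - started_at >= window:
        return "promote"
    return "wait"


# Example: a canary that started at 09:00 UTC with a two-hour window.
start = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
print(bake_decision(start, start + timedelta(hours=1), timedelta(hours=2), []))
```

A scheduler calling this on a timer is what lets people stop babysitting the clock.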

If you need to validate structured data quickly, lean on reference patterns. The Schema.org core docs make it easy to spot missing or broken fields that AI engines and search expect.
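A quick field check can run before the canary goes out. This sketch assumes a single JSON-LD object per page, and the expected field list is a hypothetical house rule: Schema.org itself does not mark any Article property as required.

```python
import json

# Hypothetical house rule: fields your team expects on every article page.
EXPECTED_ARTICLE_FIELDS = ("headline", "datePublished", "author", "image")
ARTICLE_TYPES = ("Article", "BlogPosting", "NewsArticle")


def missing_schema_fields(jsonld_text: str) -> list[str]:
    """Return the expected fields that are absent or empty in one JSON-LD object."""
    try:
        data = json.loads(jsonld_text)
    except json.JSONDecodeError:
        return ["invalid JSON"]
    if not isinstance(data, dict):
        return ["expected a single JSON-LD object"]  # arrays and @graph are out of scope here
    if data.get("@type") not in ARTICLE_TYPES:
        return ["@type"]
    return [name for name in EXPECTED_ARTICLE_FIELDS if not data.get(name)]
```

For anything beyond presence checks, a dedicated validator is the better tool; this only catches the obvious gaps early.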

The Human Cost Of Reactive Publishing

Reactive publishing feels worse as volume grows because inconsistency compounds. Each exception creates more exceptions. Editors fix the same mistakes across dozens of pages. Leaders lose patience, clamp down, and slow everything. Canary releases restore leverage by turning quality into a system, not a heroic act.

Why This Feels Worse As Volume Grows

At low volume, you can muscle through. At scale, debt shows up everywhere. Voice drifts, then you copy that drift into related pages. A broken pattern in one template becomes a broken pattern in twenty. Review queues get longer. People get tired. The work starts to feel endless.

A small canary window breaks that cycle. You catch the pattern early, patch once, and protect the rest. That control changes morale more than any pep talk. Teams shift from reacting to guiding. It is a different job.

Checklists help here. They are boring, and they work. The classic case for why checklists prevent errors is worth re-reading; see Harvard Business Review on checklists. Your automated gates are software checklists, enforced every time.

Rebuilding Executive Trust With Evidence

Executives do not want more meetings. They want proof. Show pass or fail gates, audit trails, and rollback outcomes. When leadership sees issues caught early, they approve faster because the system reduces risk. Trust returns. Review queues shrink. People stop living in crisis mode.

I have watched skeptical leaders flip after one month of clean canaries. It is hard to argue with fewer hotfixes and faster cycles. The story tells itself when the graphs bend in the right direction.

Designing Editorial Canary Releases For Content Ops

A solid editorial canary design defines your scopes, automates preflight QA, sets a short bake window, and codifies rollback rules. You are building a small, safe proving ground that maintains publishing speed while protecting brand and rankings.

Define Canary Scopes: Audience, Channel, And Index State

Decide where the canary lives. Options include a limited email segment, an unlisted URL with noindex, or restricted sitemap inclusion. Start small, pick one or two scopes, and write down the graduation rules. The goal is predictable safety you can monitor easily, not secrecy.
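For the restricted-sitemap scope, here is a sketch using only the Python standard library: canary URLs are simply left out until they graduate. The `pages` mapping and its stage labels are assumptions. Leaving a URL out of the sitemap does not stop Google from finding it through links, so pair this with noindex.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, str]) -> str:
    """Build a sitemap listing only URLs whose hypothetical stage label is 'promoted'."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, stage in pages.items():
        if stage != "promoted":
            continue  # canary URLs stay out of the sitemap until they graduate
        loc = ET.SubElement(ET.SubElement(urlset, "url"), "loc")
        loc.text = url
    return ET.tostring(urlset, encoding="unicode")


print(build_sitemap({
    "https://example.com/promoted-post": "promoted",
    "https://example.com/canary-post": "canary",
}))
```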

Two good starting points work for most teams: unlisted pages with noindex while you validate structure and facts, and small newsletter or social segments when you want early human engagement signals. Pick based on what you can watch quickly, not what sounds clever.
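For the newsletter-segment option, one common technique is deterministic hash bucketing, so the same subscriber always lands in the same slice for a given article. This is a sketch with hypothetical identifiers; `percent` is the share of the list that sees the canary.

```python
import hashlib


def in_canary_segment(subscriber_id: str, article_id: str, percent: float) -> bool:
    """Deterministically place roughly `percent`% of subscribers in one article's canary."""
    digest = hashlib.sha256(f"{article_id}:{subscriber_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # 0..9999, i.e. 0.01% resolution
    return bucket < percent * 100


# Example: pick a 1% canary slice of a hypothetical subscriber list.
subscribers = [f"user-{i}" for i in range(1000)]
canary = [s for s in subscribers if in_canary_segment(s, "post-42", 1.0)]
```

Salting the hash with the article ID rotates which readers see canaries, so the same small group does not absorb every early draft.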

As you get comfortable, widen your initial slice a bit. The first week is about control. The second is about flow. You will find the balance fast.

Automate Preflight QA: Plagiarism, Factuality, And SEO Structure

Wire automated checks before anything ships. Compare product and competitive claims against your knowledge base. Validate heading depth, internal links, canonicals, and schema. Run plagiarism scans. Gate releases on objective thresholds. Pass goes to canary. Fail returns to fixing.
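Real factual gates usually rely on retrieval and semantic matching. As a deliberately simplified stand-in, this sketch only flags claims that do not normalize to an exact match in a hypothetical list of approved facts. It is strict by design: anything it cannot match goes to a human.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation and whitespace so trivial formatting differences still match."""
    return re.sub(r"[^a-z0-9%]+", " ", text.lower()).strip()


def unmatched_claims(claims: list[str], approved_facts: list[str]) -> list[str]:
    """Return every claim with no exact normalized match among the approved facts."""
    approved = {normalize(fact) for fact in approved_facts}
    return [claim for claim in claims if normalize(claim) not in approved]


# Hypothetical knowledge-base entries and draft claims.
approved = ["Exports reports to CSV and JSON", "Includes 5 seats on the Starter plan"]
draft_claims = ["exports reports to CSV and JSON.", "Supports 50 languages"]
print(unmatched_claims(draft_claims, approved))  # → ['Supports 50 languages']
```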

Human QA still matters. Keep a short checklist for voice and sensitive claims that a model cannot judge well yet. The difference is you run that checklist once at a governed checkpoint, not six times in Slack over three days.

Add a simple readiness rule set so people know what “go” looks like:

- Plagiarism score under your threshold, for example under 10 percent

- All H2 and H3 structure present, no empty headings or template junk

- All product claims matched to approved facts in your KB

- Canonical and schema present, validated for the content type
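The structure and canonical rules can be checked against rendered HTML with Python's built-in `html.parser`. This sketch is an assumption about what your pages look like; it does not detect template junk, and the problem strings are illustrative.

```python
from html.parser import HTMLParser
from typing import Optional

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class StructureAudit(HTMLParser):
    """Collects headings, the canonical link, and JSON-LD script blocks from one page."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: list[tuple[int, str]] = []  # (level, visible text) in document order
        self.canonical: Optional[str] = None
        self.jsonld_blocks = 0
        self._open_level: Optional[int] = None
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag in HEADING_TAGS:
            self._open_level = int(tag[1])
            self._buffer = []
        elif tag == "link" and attributes.get("rel") == "canonical":
            self.canonical = attributes.get("href")
        elif tag == "script" and attributes.get("type") == "application/ld+json":
            self.jsonld_blocks += 1

    def handle_endtag(self, tag):
        if self._open_level is not None and tag == f"h{self._open_level}":
            self.headings.append((self._open_level, "".join(self._buffer).strip()))
            self._open_level = None

    def handle_data(self, data):
        if self._open_level is not None:
            self._buffer.append(data)


def structure_problems(page_html: str) -> list[str]:
    """Return readiness problems for headings, canonical link, and schema presence."""
    audit = StructureAudit()
    audit.feed(page_html)
    audit.close()
    levels = [level for level, _ in audit.headings]
    problems = []
    if 2 not in levels:
        problems.append("no H2 headings")
    if any(not text for _, text in audit.headings):
        problems.append("empty heading")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            problems.append(f"heading level skips from H{previous} to H{current}")
    if not audit.canonical:
        problems.append("missing canonical link")
    if audit.jsonld_blocks == 0:
        problems.append("missing JSON-LD schema")
    return problems
```

An empty result means the page meets these structural rules; claims and plagiarism still need their own gates.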

Monitoring, Bake Time, And Rollback Rules

Pick an observation window, set your monitors, and codify triggers. Two to twenty-four hours works in most cases. Trigger examples include a failed factual spot check, a broken canonical, missing citations, or a critical tone deviation. If any threshold is crossed, pull back instantly, fix forward, and re-run gates.

When the window closes clean, promote to full exposure automatically. That automation is the point. People should not babysit a clock. They should improve the rules.

For teams that want deeper automation, pair monitors with CI-style workflows. Simple pipelines in tools like GitHub Actions can run checks on commit and on publish. And if you need to confirm index status after promotion, the Search Console URL Inspection API is useful.
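Whatever runs your pipeline, a gate step mostly needs to turn check results into an exit code, since a CI step fails when its command exits non-zero. A minimal sketch, where `run_gates` and the check names are hypothetical:

```python
from typing import Callable

# A check returns a list of problem descriptions; an empty list means the gate passed.
Check = Callable[[], list[str]]


def run_gates(checks: dict[str, Check]) -> int:
    """Run every gate, print one PASS/FAIL line per gate, and return a process exit code."""
    any_failed = False
    for name, check in checks.items():
        problems = check()
        if problems:
            any_failed = True
            print(f"FAIL {name}: {', '.join(problems)}")
        else:
            print(f"PASS {name}")
    return 1 if any_failed else 0
```

In a CI job, a script could end with `sys.exit(run_gates({...}))` so the step fails whenever any gate reports a problem, and the printed lines double as a simple audit trail.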

Ready to pilot editorial canary releases without slowing down your cadence? Request a Demo

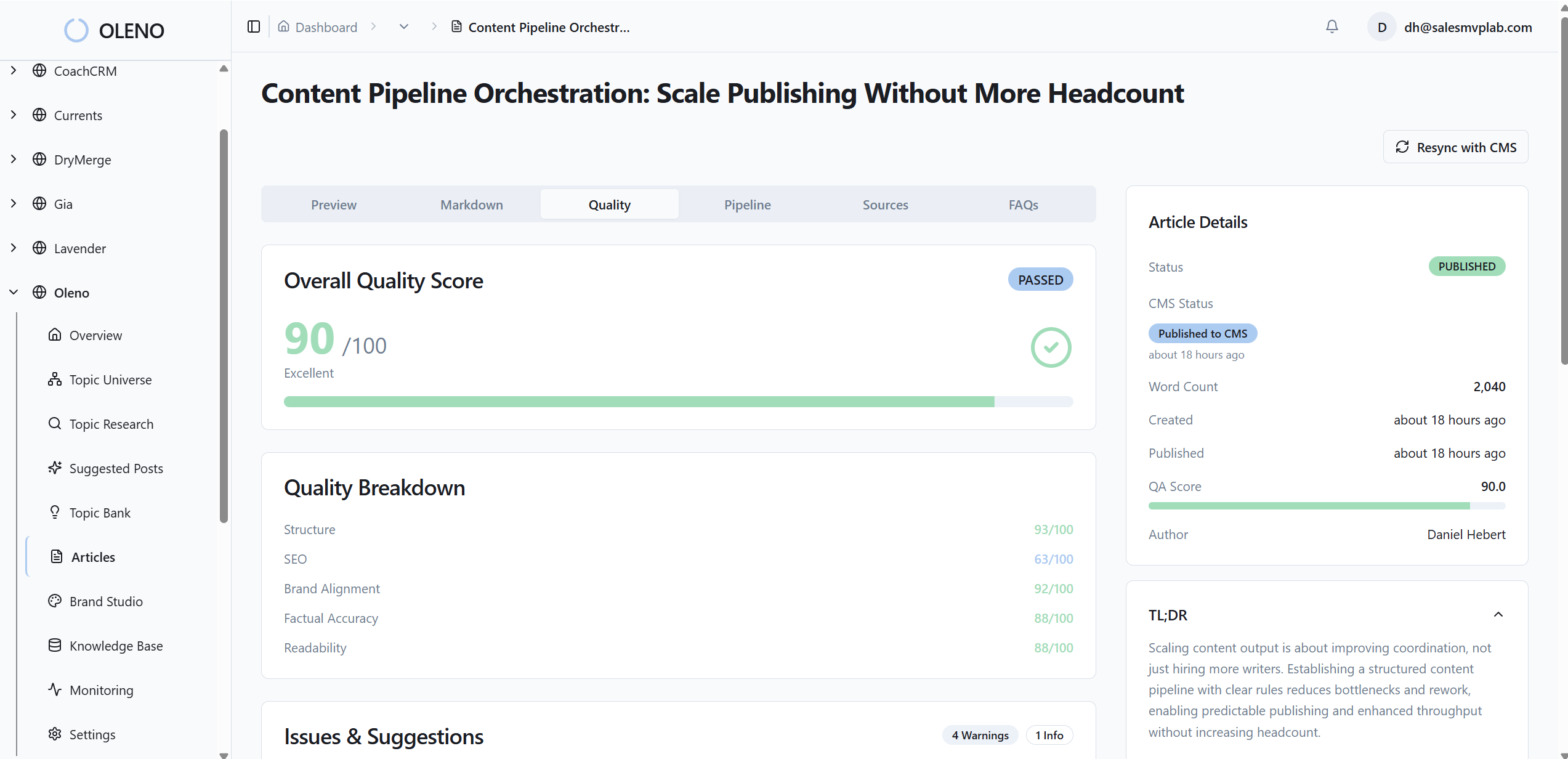

How Oleno Operationalizes Editorial Canary Releases At Scale

Oleno turns the canary method into a repeatable pipeline. Governance defines truth and voice, preflight gates enforce rules, canary scopes control exposure, and monitors handle promote or rollback. The outcome is fewer public mistakes, faster cycles, and visible audit trails leaders trust.

Governed QA Gates Before Anything Ships

Oleno enforces your rules up front. Brand voice, product claims, SEO structure, and citation checks run automatically against your governance settings. That removes opinion wars and catches errors before exposure. It addresses the late-fix cost problem described earlier, because issues surface while fixes are still cheap.

You define once, then reuse everywhere. Writers work inside clear rails. Editors spend time on judgment, not policing headers. Leaders get reports that show pass or fail by rule, not vibes.

Canary Scopes And Staged Publishing Built In

You set canary scopes once. Oleno can publish in restricted index states, hold in noindex, or limit distribution to a controlled audience. When content clears the bake window with clean signals, Oleno promotes automatically. If a threshold fails, it pauses rollout and opens a repair task, so errors stay within a small blast radius and you avoid the rework that follows.

That flow keeps throughput high. The cadence holds. Quality stops depending on who reviewed the draft that afternoon. It is a system now.

A 70 percent reduction in publish-related regressions in month one is a reasonable target when teams adopt staged publishing with governed gates. Want to see it in your numbers? Request a Demo

Real-Time Monitors, Rollbacks, And Audit Trails

Oleno watches for structural drift, link integrity, and factual mismatches in near real time. It triggers rollback rules, opens a repair task, and records every action for visibility. Leaders see pass or fail gates and rollbacks in dashboards, which rebuilds trust while shrinking review queues and cycle times.

Here is what the capability looks like in practice:

- Governed preflight: Voice, claims, SEO, and citations checked before canary

- Scoped rollout: Publish to limited index or audience, observe during a set window

- Automated promote or rollback: Clear thresholds decide, not opinions

- Audit trail: Every gate result and action logged for reviews and postmortems

- Studio scaling: Apply the same pattern across SEO, Competitive, and Thought Leadership

If you are ready to stop firefighting and make canaries your default path to publish, Oleno’s scoped rollout and rollback engine handles the heavy lifting. Book a Demo

Conclusion

If you publish everything at once, you will keep paying for late fixes. Staged publishing changes the math. You ship to a small slice first, watch signals, then scale when it is safe. The cadence holds. The risk drops. The team breathes again.

Start with one canary scope and three objective gates. Add a short bake window and hard rollback rules. Then automate what you can. A realistic first-month target is about a 70 percent drop in publish-related regressions and roughly 80 percent faster remediation. You will feel the difference fast.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions