How AI is Transforming Content Creation Processes

9 hours a week. That’s a realistic amount of founder or CMO time that content can quietly eat when AI speeds up writing but doesn’t fix the review loop. If you felt that this week, you’re not behind on AI. You’re stuck in a broken execution model.

Most of the talk about how AI is transforming marketing is focused on drafts. Faster first drafts. Faster idea generation. Faster outlines. Fine. Useful, even. But for a growth-stage SaaS team with 1-3 marketers, that usually creates a weird new problem: content gets easier to start and harder to trust.

You end up with more words. Not more pipeline.

Key Takeaways:

- AI is transforming content creation, but speed alone usually increases review work if governance is weak

- If a founder or CEO still reviews most articles line by line, you don’t have a scalable content system

- The real bottleneck is rarely writing itself; it’s context transfer from strategy to execution

- Growth-stage SaaS teams should treat 72 hours as the max acceptable review cycle for a standard article

- If AI output needs more than 30% of a draft rewritten, manual prompting is usually a bad operating model

- GEO raises the bar because consistency across dozens or hundreds of pieces matters more than random bursts of volume

- The teams that win encode voice, POV, product truth, audiences, and use cases once, then execute repeatedly

You can see what that looks like in practice if you request a demo.

Why AI Content Speed Usually Creates More Work

AI is transforming output speed, but for most B2B SaaS teams it’s also transforming a writing problem into an editing problem. The reason is simple: a fast draft still has to survive brand review, product accuracy review, and positioning review. If those three checks still sit with you, nothing really changed.

Faster drafting hides the real bottleneck

Most founders think content is slow because writing is slow. I don’t think that’s true for most post-PMF teams. The real drag is that strategy lives in the founder’s head, in old decks, in launch docs, in call notes, and maybe in a Notion page nobody fully trusts.

So the writer, freelancer, agency, or AI tool takes a swing. Then you step in and fix it. Again. And again.

I saw this years ago on a small SaaS team. I could personally ship 3-4 high-quality blog posts a week because I had the context. Once the team grew, the next writer took longer and the output got weaker. Not because they were bad. Because they didn’t have the full picture. That’s a very different problem.

A simple diagnostic works here. Ask yourself three questions:

- Does the first draft usually miss positioning?

- Do you have to correct product nuance manually?

- Do articles slow down when one executive is busy?

If the answer is yes to 2 out of 3, writing isn’t your bottleneck. Context transfer is.

The editing tax gets worse before teams notice it

At first, AI feels like a win. You get a draft in 10 minutes instead of 3 hours. Great. Then the quiet cost shows up.

A marketer pastes prompts into ChatGPT or Claude. The draft comes back generic. Someone tweaks the prompt. Then rewrites the intro. Then changes the examples. Then fixes what the product actually does. Then removes phrases that sound nothing like the company. Then someone else adds SEO structure. Then it goes into the CMS manually.

That’s not automation. That’s disguised labor.

If a 1,500-word draft takes more than 45 minutes to clean up, the math starts turning against you fast. If it takes more than 90 minutes, you’d often be better off using a tighter system or writing from scratch. Harsh, maybe. But fair.

Some teams prefer the prompt-and-polish model, and that’s valid when volume is low. If you publish 2 articles a month and the founder likes being hands-on, fine. Once you need 8, 12, or 20 pieces across SEO, product marketing, buyer enablement, and competitive content, that model starts leaking everywhere.

GEO punishes inconsistency more than people realize

SEO used to forgive a lot. You could rank with decent structure, enough backlinks, and enough topical coverage. GEO changes what good looks like.

LLMs don’t just look at one page. They synthesize patterns across many pages. So if one article says one thing about your category, another sounds like a freelancer wrote it, and a third gets your product boundaries slightly wrong, your brand signal gets muddy.

That’s the hidden problem with how AI is transforming content teams right now. It increases output variance. And variance is expensive.

One mid-market SaaS team had strong organic rankings with a genuinely talented content team. But the content was too detached from the product narrative, so it didn’t help demand gen much. They had traffic. They didn’t have enough direction. Ranking and revenue had drifted apart. That’s the part a lot of teams miss.

So what should replace the old prompt-heavy model?

The Real Problem Is Fragmented Execution

The real problem isn’t that AI writes badly. It’s that most teams use AI inside a broken operating model. They’re asking a drafting tool to solve a systems problem.

Strategy dies in the handoff

Founders usually know exactly how they want the company to sound. They know the market enemy. They know the nuance in the product. They know what prospects misunderstand on calls. They know why deals stall.

None of that guarantees it makes it into the article.

Picture a founder on a Tuesday night, 9:40 PM, editing a draft in Google Docs after three meetings, a board prep session, and two customer calls. The article is technically fine. But the framing is off, the examples are too broad, the product language is slightly wrong, and the CTA could belong to any SaaS company on earth. So the founder rewrites 40% of it. That happens a few times a month and suddenly content “doesn’t scale.”

That’s not a motivation problem. It’s a handoff problem.

A useful rule here: if your strategy exists mainly in decks, Slack threads, and executive brains, every new contributor will create translation loss. The fix is to encode the strategy where execution actually happens.

More contributors often make quality worse, not better

This sounds backward, but I’ve seen it too many times to ignore it. Teams hire a freelancer, then an agency, then maybe a content marketer. Output should go up. Sometimes it doesn’t. Sometimes it barely moves.

Why? Because every extra contributor adds one more layer of interpretation. And interpretation is where narrative drifts.

Back in the Steamfeed days, we were able to scale because there was both volume and depth, and because every contributor brought a real point of view to a clear topic map. We saw traffic spikes at 500 pages, 1,000 pages, 2,500 pages, 5,000 pages, then 10,000 pages. Most individual pages didn’t get much traffic. But the system worked because the coverage model worked.

Small SaaS teams don’t have that luxury. They don’t have 80 contributors. They have one founder, one marketer, maybe one freelancer. So if quality control depends on one executive doing final cleanup, the model breaks much earlier.

A hard threshold helps. If one person has to personally approve more than 8 pieces of content a month, quality control should move from manual memory to system rules. Past that point, leadership becomes the bottleneck.

AI exposes weak fundamentals

This is the part I think most people get wrong when they talk about how AI is transforming B2B marketing.

AI didn’t create the mess. It exposed it.

If your brand voice is fuzzy, AI exposes it. If your positioning is generic, AI exposes it. If your product truth is scattered across help docs and launch notes, AI exposes it. If your audience definition is weak, AI exposes it.

That’s why the same AI tool looks magical for one company and useless for another. One company has encoded thinking. The other has vibes.

And yes, there’s a case for using AI as a lightweight assistant. For ad hoc work, it’s useful. For reactive ideas, also useful. But if you want steady GTM content across blog, product marketing, category narrative, comparisons, and buyer enablement, prompts alone won’t hold the line.

The next question is obvious: what does hold the line?

What High-Output Teams Do Differently

The teams that scale content well don’t just ask AI for drafts. They build a system where strategy survives contact with execution. That’s the shift. Not more prompting. Better infrastructure for judgment.

Start with a diagnostic before you add tools

Before you buy anything or hire anyone, figure out which failure mode you actually have. Most teams skip this and waste a quarter.

Use this quick self-assessment:

- If publishing is under 4 pieces a month, your issue is probably prioritization, not production.

- If you publish 4-8 pieces a month but quality swings wildly, your issue is governance.

- If you publish 8+ pieces a month and review cycles keep slipping, your issue is orchestration.

- If traffic is fine but pipeline impact is weak, your issue is narrative alignment.

That last one matters a lot. A team can look healthy in GA4 and still be producing content that has no demand-gen spine. I’ve seen it. Good rankings. Weak commercial pull. It feels productive until someone asks what content is actually doing for revenue.

Honestly, that mismatch surprises founders more than almost anything else.
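For teams that like to see a rule written down, the self-assessment above can be sketched as a small Python function. This is only an illustration: the `ContentSnapshot` fields and the `diagnose` name are hypothetical, and the inputs are judgment calls you would fill in yourself. The text leaves the boundary at exactly 8 pieces a month open, so this sketch lets both governance and orchestration apply there.

```python
from dataclasses import dataclass


@dataclass
class ContentSnapshot:
    """Hypothetical monthly snapshot; every field is a self-reported judgment call."""
    pieces_per_month: int
    quality_swings: bool          # quality varies wildly between pieces
    review_cycles_slipping: bool  # reviews keep running past their deadline
    traffic_ok: bool
    pipeline_impact_weak: bool


def diagnose(s: ContentSnapshot) -> list[str]:
    """Map a snapshot to the failure modes named in the self-assessment."""
    issues = []
    if s.pieces_per_month < 4:
        issues.append("prioritization")
    if 4 <= s.pieces_per_month <= 8 and s.quality_swings:
        issues.append("governance")
    if s.pieces_per_month >= 8 and s.review_cycles_slipping:
        issues.append("orchestration")
    # Independent of volume: healthy traffic with weak commercial pull.
    if s.traffic_ok and s.pipeline_impact_weak:
        issues.append("narrative alignment")
    return issues


if __name__ == "__main__":
    snapshot = ContentSnapshot(
        pieces_per_month=6,
        quality_swings=True,
        review_cycles_slipping=False,
        traffic_ok=True,
        pipeline_impact_weak=True,
    )
    print(diagnose(snapshot))
```

A team can land in more than one bucket at once, which is why the function returns a list rather than a single label.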

Encode voice, positioning, and product truth before scaling volume

Most teams do the opposite. They try to scale first, then clean things up later. Bad move.

You want the core inputs defined before volume increases:

- voice and tone rules

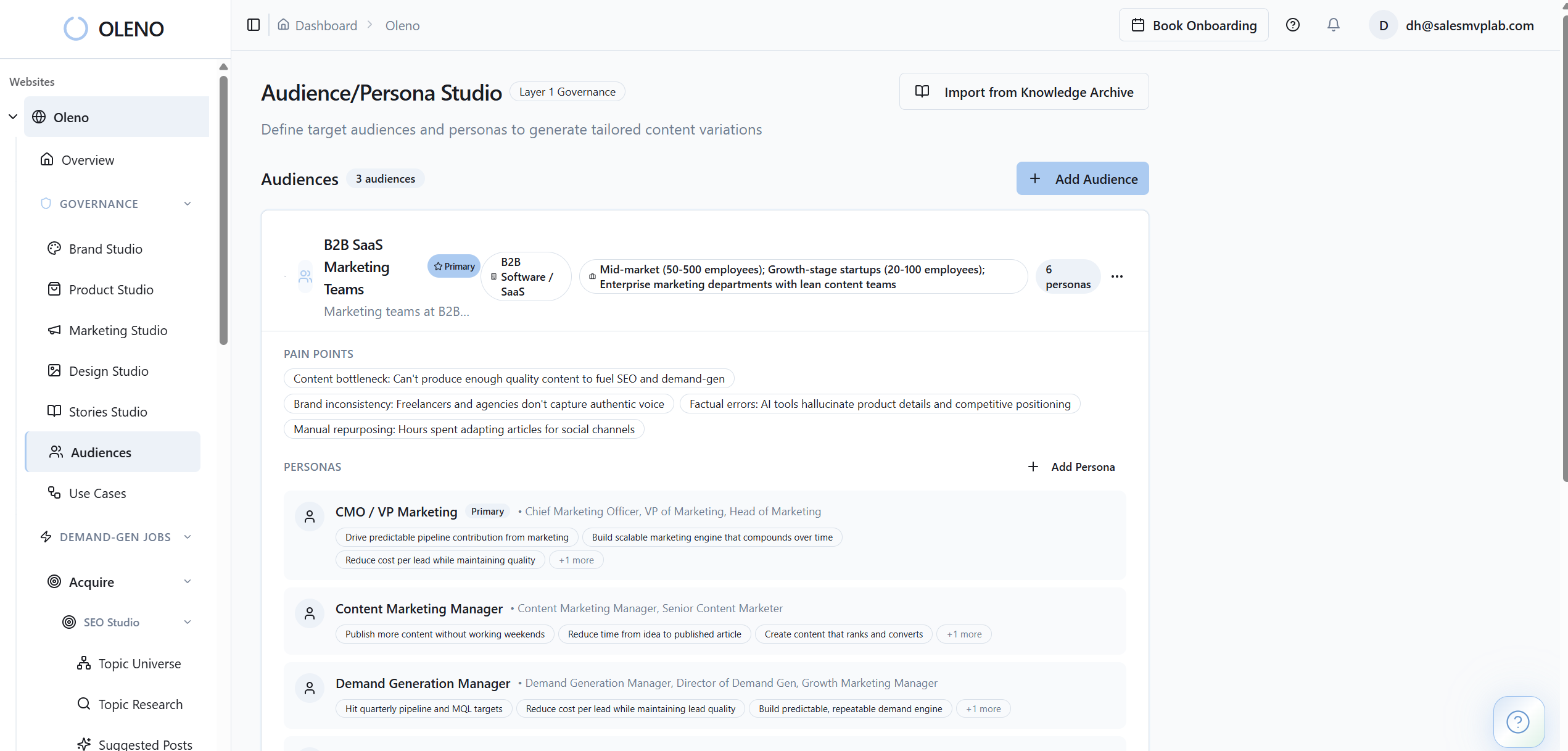

- audience segments

- persona goals and objections

- use cases and workflows

- product claims and boundaries

- category framing and key messages

Without that, every draft becomes a fresh debate.

Think of it like hiring a sales team without a pitch, ICP, or objection handling. You’ll still get activity. You just won’t get consistency. Content works the same way. The draft is the call. Governance is the sales playbook.

A practical rule: don’t try to scale past 8 articles a month until product claims, audience definitions, and category framing live in one governed place. Under that threshold, brute force can limp along. Over it, slippage compounds.

Separate planning from execution

A lot of marketing teams mash these together. They brainstorm topics in one sitting, assign whatever sounds good, and hope the mix works out over time. It rarely does.

Planning should answer:

- which audiences need coverage

- which personas are underserved

- which use cases matter now

- which funnel stages are thin

- which narratives need repetition

Execution should answer:

- what gets briefed this week

- how it gets drafted

- how quality gets checked

- what gets published next

That separation matters because AI is transforming content operations most effectively when it handles repeatable execution, not when it pretends to be your strategy lead.

If you don’t split those layers, your team ends up making strategic decisions inside random draft sessions. That’s expensive. And weirdly common.

Around this point, most founders start asking the same thing: okay, but what does the system actually look like in the real world? Fair question. If you want to see how that works in a way that doesn’t depend on endless prompt tuning, you can request a demo.

Set operational thresholds so quality doesn’t drift quietly

You need a few hard rules. Not a 40-page SOP. Just decision points.

Here are the ones I’d use:

- If review time exceeds 72 hours, remove a reviewer before adding a new one

- If more than 30% of a draft gets rewritten, fix the inputs, not the writer

- If one content type gets more than 50% of output for 2 months straight, your funnel coverage is drifting

- If published content older than 6 months contains outdated product or pricing context, queue a refresh

- If the same topic is reframed for 3 different audiences, build audience-targeted variations instead of one generic asset

Those rules sound simple because they are. But they create operational clarity. And clarity is what keeps volume from turning into sludge.
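If it helps to make the decision points concrete, here is a minimal Python sketch of the five rules above. The function name, parameter names, and action strings are all hypothetical, and it assumes you already track these numbers somewhere. It is not how any particular tool implements them.

```python
def check_thresholds(
    review_hours: float,
    rewritten_fraction: float,
    type_share_by_month: list[float],
    months_since_publish: float,
    has_outdated_product_context: bool,
    audience_reframes: int,
) -> list[str]:
    """Return the actions triggered by the operational thresholds.

    type_share_by_month holds one content type's share of total output
    per month (0.0 to 1.0), oldest first.
    """
    actions = []
    if review_hours > 72:
        actions.append("remove a reviewer before adding a new one")
    if rewritten_fraction > 0.30:
        actions.append("fix the inputs, not the writer")
    # "2 months straight": the two most recent months both exceed 50%.
    last_two = type_share_by_month[-2:]
    if len(last_two) == 2 and all(share > 0.50 for share in last_two):
        actions.append("rebalance funnel coverage")
    if months_since_publish > 6 and has_outdated_product_context:
        actions.append("queue a refresh")
    if audience_reframes >= 3:
        actions.append("build audience-targeted variations")
    return actions


if __name__ == "__main__":
    for action in check_thresholds(
        review_hours=80,
        rewritten_fraction=0.4,
        type_share_by_month=[0.4, 0.6, 0.7],
        months_since_publish=7,
        has_outdated_product_context=True,
        audience_reframes=3,
    ):
        print(action)
```

The point of writing it this way is that each rule is a plain comparison. Nobody has to remember the thresholds, and nobody has to argue about them in a review thread.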

Treat content like a factory with QA, not an art project with opinions

I don’t mean content should be bland. Quite the opposite. It should have stronger opinions. More specificity. More lived-in examples.

But the process around it should feel like manufacturing quality goods, not like hoping a genius shows up on Tuesday.

Good factories don’t inspect quality only at the end. They build checks into the line. Same deal here. If your only quality check is “founder reads it at the end,” you’ve placed the whole system on one tired human being.

That’s why the question of how AI is transforming content is really a question of how AI is transforming operations. The draft is one step. The real gain comes from reducing coordination, repeat edits, factual errors, and drift.

And once you see it that way, the product layer makes more sense.

How Oleno Turns AI Into a Content System

Oleno is built for teams that already know what they want to say, but can’t keep carrying the full execution load themselves. It’s not trying to replace strategy. It’s trying to make strategy stick.

Governance first, then execution

Oleno starts where most AI tools don’t. Not with a prompt box. With governance.

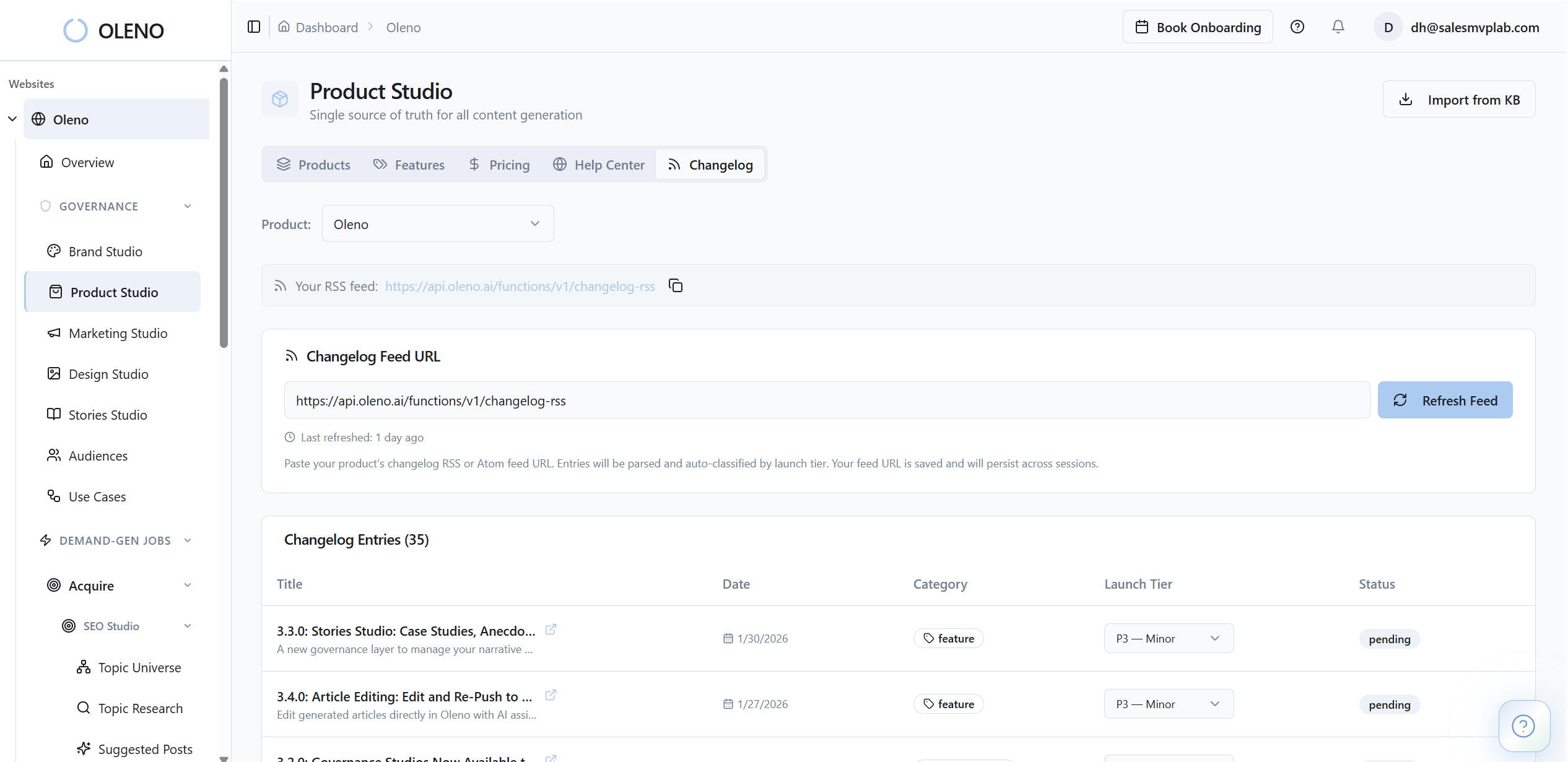

Brand Studio defines how the company should sound. Marketing Studio stores the core messages, category framing, and point of view. Product Studio holds approved product claims, boundaries, and use cases so content doesn’t wander into fiction. Audience & Persona Targeting and Use Case Studio shape who the content is for and what real workflow it should speak to.

That matters because the whole pain described earlier comes from translation loss. Oleno reduces that by loading the core context into the content process itself, instead of expecting a founder to manually re-explain it every time.

In plain English, the strategy doesn’t sit in a doc and hope for the best. It gets used.

Execution runs across multiple content jobs, not one-off drafts

Once the governance layer is set, Oleno can execute against different content needs through the right studio. Programmatic SEO Studio handles acquisition content at scale. Product Marketing Studio handles feature and workflow education. Category Studio supports opinionated market framing. Buyer Enablement Studio covers decision-stage content. Writing Studio handles more reactive moments when something happens in the market and you want a response fast.

That’s a big deal for a growth-stage SaaS team because the problem usually isn’t “we need one article.” The problem is “we need SEO content, launch content, comparison content, and founder-led POV, and we can’t hire three more people.”

That’s where the +3 headcount idea actually lands. Not as hype. As operating leverage.

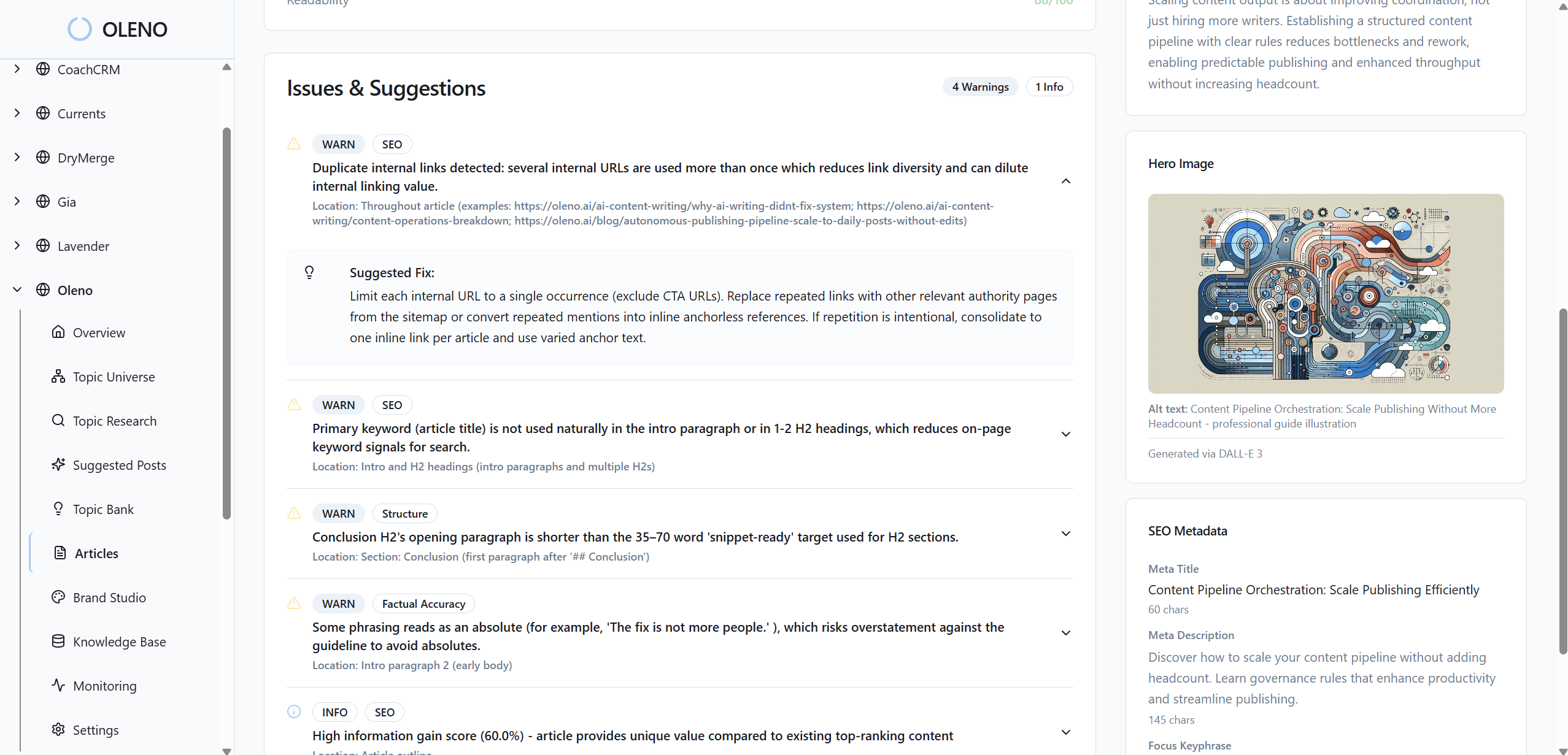

Quality control is built into the pipeline

This is probably the most important part. Oleno doesn’t assume draft speed equals publish readiness.

Quality Gate runs 80+ automated checks across voice, structure, grounding, clarity, and accuracy before content reaches review. And if published pieces drift over time, Content Refresh & Drift Monitoring can flag them when product claims, messaging, or positioning go stale. The Orchestrator then helps run the pipeline on a steady cadence, instead of relying on somebody remembering to kick off work manually.

So the old cost from earlier changes shape:

- repeated founder rewrites go down because inputs are governed

- ad hoc content scheduling gets replaced by a system

- product drift gets caught earlier

- output can move from 4-8 pieces a month toward 20-40+ in the right SEO use case without adding headcount

That doesn’t mean Oleno does your keyword research, technical SEO, attribution, or paid media. It doesn’t. You still need those surrounding disciplines. But it does remove the content execution bottleneck sitting in the middle of your GTM system.

If your team is at that point where strategy is clear but execution keeps slipping, book a demo.

Why AI Transformation Only Matters If It Compounds

Asking how AI is transforming marketing is the wrong question on its own. The better question is whether AI is transforming your operating model or just making more drafts for you to babysit.

If you’re a founder or CEO at a growth-stage SaaS company, that distinction matters a lot. You don’t need infinite content. You need content that sounds like your company, stays accurate, covers the funnel, and keeps shipping when you’re in meetings. That’s the bar.

AI can absolutely help you get there. Just not as a loose pile of prompts.

The teams that pull away over the next couple of years will be the ones that encode their thinking once, then let a system execute it over and over again. That’s how output compounds. That’s how trust compounds too.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions