How to Choose Between Oleno and Semrush Content Toolkit

If you're comparing Oleno and Semrush Content Toolkit, you're probably not just buying a writing tool. You're trying to figure out whether your agency needs better channel optimization, or a better system for turning client strategy into content that actually sounds right.

That choice matters more than it seems. Pick the wrong kind of platform and you don't just waste budget. You create frustrating rework, slower delivery, more review cycles, and that familiar agency headache where the team starts saying, "honestly, it's faster if I just rewrite it myself."

I've seen this pattern before. Back when I was running high-volume content programs, the hard part usually wasn't getting words on the page. It was getting the context right, across different audiences, offers, and points of view, without adding a ton more people to the process.

Key Takeaways:

- Semrush Content Toolkit is usually a better fit if your main goal is search research, topic discovery, and optimizing content for organic rankings.

- Oleno makes more sense when the bigger problem is turning positioning, product context, audience detail, and brand rules into repeatable client delivery.

- For agencies, the real evaluation criterion isn't "which tool writes more." It's which one reduces revision cycles across multiple accounts over the next 6 to 12 months.

- If you're managing distinct client voices, product nuances, and use-case-based messaging, a workflow built around strategy inputs will likely matter more than another SEO score.

- A short evaluation sprint of 2 to 3 weeks is usually enough to see whether a platform cuts review time or just moves the work around.

Why Agencies Get Stuck Choosing Between Search Tools And Strategy Systems

Most agency teams don't wake up wanting another content platform. They hit a wall first. Usually it starts when a few client accounts grow, expectations go up, and the old way of working stops holding together.

The surface complaint sounds like a production problem. You need more output. You need drafts faster. You need the team to publish more without hiring three more strategists and two more writers. Fair. But that usually isn't the whole problem.

The deeper issue is that a lot of content tools are anchored in channels and tactics, not in marketing strategy itself. That's the thing people miss. A tool can give you keywords, content ideas, optimization scores, and competitive pages to look at. Useful, yes. But if it doesn't hold the market point of view, audience pain, product reality, use cases, and brand rules together, your team still has to do the hard part manually.

I remember listening to a panel years ago at the DMZ in Toronto. One marketer kept talking through a stack of tactics, tool after tool after tool. Then another operator on the panel cut through it and basically said tactics without strategy are useless. That stuck with me. Because it was true then, and it's still true now. When positioning is clear, content gets easier. When positioning is fuzzy, every draft turns into a debate.

For agencies, that's where this decision gets expensive. Let's pretend you run 12 active client accounts. Each account has its own tone, offer, competitor set, and product language. If every draft needs 45 minutes of strategist correction and another 30 minutes of revision, that cost piles up fast. Not dramatic. Just real. And after a few months, your margin starts leaking through review cycles.

If you want to see whether Oleno fits your process, you can request a demo once you've mapped where those review loops are actually coming from.

What Actually Matters When Comparing Content Platforms For Agencies

The right criteria for this decision usually have less to do with raw writing output and more to do with how the system handles context. Agencies live and die on context. That's why a generic comparison often leads people in the wrong direction.

Search research matters if discovery is your main bottleneck

If your team struggles to find topics, understand search intent, cluster keywords, and identify ranking opportunities, then search tooling deserves a lot of weight. Semrush has a strong reputation in this part of the workflow. Agencies that lead with SEO retainers or high-volume search programs may care a lot about this.

That said, search research isn't the same thing as content execution. That's where people can get tripped up. A team can know exactly what to write about and still produce average content because the product story, audience pain, or category angle isn't clear enough in the draft.

Brand isolation matters if you manage multiple client voices

For agencies, voice problems are rarely about writing talent alone. More often, the team is juggling too many account-specific rules in their heads. One client wants direct, hard-edged positioning. Another wants cautious enterprise language. Another has six product nuances you can't get wrong.

When a system can't separate that context cleanly, the burden falls back on the strategist. And that's expensive labor. In my experience, that's also the work agencies underprice because it happens in bits and pieces all day long.

Product and audience context matters more than people think

This is where the split gets sharper. A lot of tools can support content production at the keyword and draft level. Fewer are built around the inputs that actually shape strong B2B marketing content.

For agency work, that usually means the platform should account for things like:

- product definitions and feature boundaries

- audience segments and personas

- use cases tied to buyer pains

- category point of view and positioning

- brand tone, style rules, and messaging constraints

One missed detail can throw the whole draft off.

Workflow cost matters more than seat cost

Buyers often focus first on subscription price. That's understandable. But for agencies, the bigger cost is usually labor wrapped around the tool. If a cheaper platform creates more editing, more QA, and more back-and-forth with account leads, it may not stay cheaper for long.

A simple way to think about it is this:

- estimate average drafts per month

- estimate average review minutes per draft

- multiply by the loaded hourly cost of strategist and editor time

- compare that number against software price

Sometimes the tool is not the cost center. The review loop is.
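Here's a rough sketch of that math in Python. Every number in it is a made-up placeholder, not a benchmark: the 75 review minutes simply reuse the 45 + 30 minutes from the earlier 12-account example, and the draft volume, hourly rate, and software price are assumptions you'd swap for your own.

```python
def monthly_review_cost(drafts_per_month: int,
                        review_minutes_per_draft: float,
                        loaded_hourly_rate: float) -> float:
    """Monthly labor cost of the review loop, in the currency of the hourly rate."""
    review_hours = drafts_per_month * (review_minutes_per_draft / 60)
    return review_hours * loaded_hourly_rate


# Hypothetical inputs: replace with your agency's own numbers.
drafts_per_month = 40          # assumed volume across all accounts
review_minutes_per_draft = 75  # 45 min strategist correction + 30 min revision
loaded_hourly_rate = 90.0      # assumed blended strategist/editor cost
software_price = 500.0         # assumed monthly subscription

review_cost = monthly_review_cost(
    drafts_per_month, review_minutes_per_draft, loaded_hourly_rate
)

print(f"Review labor per month: {review_cost:,.2f}")
print(f"Software per month:     {software_price:,.2f}")
print(f"Review labor is {review_cost / software_price:.1f}x the software price")
```

With these placeholder inputs, review labor comes to 50 hours a month. If your real numbers look anything like that, a tool that trims even a third of the review time changes the math more than a cheaper seat would.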

How To Evaluate Oleno And Semrush Content Toolkit Without Getting Lost In Demos

A good evaluation process is less glamorous than most buyers want. But it works. You don't need a massive RFP. You need a controlled test with real client work.

A two-week pilot usually reveals the truth faster than feature lists

Feature lists are fine. Buyers need them. But they rarely tell you what daily use will feel like. The cleaner way is to run both tools through the same sample workflow over 2 weeks.

Use 2 or 3 live client accounts if you can. Pick one account with straightforward SEO content, one with more product nuance, and one with a more demanding brand voice. Then evaluate the same jobs in each system:

- topic selection

- brief creation

- first draft quality

- revision effort

- final publish readiness

What you're looking for isn't who says they can do more. You're looking for where the work actually lands.

You should score output at the review layer, not just at draft generation

This matters a lot. Some content looks decent on first pass and still creates downstream issues. It may be factually loose. It may miss positioning. It may flatten product nuance. It may sound vaguely correct while being commercially weak.

So build a scorecard around review effort:

- minutes to approve first draft

- number of strategic corrections

- number of factual corrections

- number of voice corrections

- number of client-facing revision rounds

Frankly, this is where weak-fit tools usually get exposed.
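If your team tracks pilots in a notebook or a small script rather than a spreadsheet, the scorecard above could look something like this. It's a minimal sketch: the `DraftReview` class, its field names, and the sample numbers are all hypothetical, and neither platform exports data in this shape as far as I know.

```python
from dataclasses import dataclass


@dataclass
class DraftReview:
    """One reviewed draft, scored at the review layer (illustrative fields)."""
    minutes_to_approve: float
    strategic_corrections: int
    factual_corrections: int
    voice_corrections: int
    client_revision_rounds: int

    @property
    def total_corrections(self) -> int:
        return (self.strategic_corrections
                + self.factual_corrections
                + self.voice_corrections)


def summarize(reviews: list[DraftReview]) -> dict[str, float]:
    """Average the review-effort metrics across a set of pilot drafts."""
    if not reviews:
        raise ValueError("need at least one reviewed draft")
    n = len(reviews)
    return {
        "avg_minutes_to_approve": sum(r.minutes_to_approve for r in reviews) / n,
        "avg_corrections": sum(r.total_corrections for r in reviews) / n,
        "avg_client_rounds": sum(r.client_revision_rounds for r in reviews) / n,
    }


# Hypothetical pilot data for one tool on one client account.
pilot_reviews = [
    DraftReview(40, strategic_corrections=3, factual_corrections=1,
                voice_corrections=2, client_revision_rounds=2),
    DraftReview(20, strategic_corrections=1, factual_corrections=0,
                voice_corrections=1, client_revision_rounds=1),
]

summary = summarize(pilot_reviews)
for metric, value in summary.items():
    print(f"{metric}: {value:.1f}")
```

Keeping strategic, factual, and voice corrections as separate counts is deliberate. A tool that only generates voice fixes is a very different problem from one that keeps missing positioning.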

The strongest test is whether your strategists trust the output

You can measure rankings later. First, measure trust. If your strategists open a draft and feel worried about what they'll need to fix, that's signal. If they open it and think, "okay, this is workable," that's signal too.

Not everyone agrees with using trust as an evaluation criterion, and fair point. It can sound soft. But in agency environments, trust changes adoption. No one sticks with a platform that creates hidden cleanup work.

If you want to pressure test Oleno against your own process, the most useful next step mid-evaluation is to request a demo around one active client workflow, not a generic sandbox.

Common Buying Mistakes That Create More Rework Later

A lot of poor tool decisions are pretty understandable. People are busy. Demos are polished. Teams want relief fast. Still, a few mistakes show up over and over.

Buyers often confuse content optimization with marketing understanding

This is probably the biggest one. A platform can be strong at optimizing for a channel and still be weak at representing your client's market story. Those are different jobs.

If your agency mostly sells SEO production, this distinction may matter less. But if you sell strategy plus execution, it matters a lot. Because your client isn't paying you just to rank. They're paying you to position them well in-market.

Agencies underestimate the cost of context switching

Context switching is sneaky. It doesn't show up on a software pricing page. But it burns hours. When one strategist has to remember five audiences, three product lines, four competitive angles, and eight tone rules before touching a brief, that's a tax on the whole team.

We were surprised by this in some past content operations work. Not because the problem was new, but because it was bigger than it looked. The visible issue was slow drafts. The actual issue was fragmented context.

Teams buy for the manager and forget the operator

Leadership often buys based on broad capability and reporting. The people actually using the system care about something simpler. Can they get to a strong draft without fighting the tool?

If the operator experience is clunky, usage drops. Then the team goes halfway back to docs, spreadsheets, and manual checklists. Which means you end up paying for software and keeping the old mess alive at the same time.

Buyers ask who has more features instead of which system fits the work

More features can help. Or they can distract. What matters is fit.

If your work is primarily search-first planning, a search-heavy toolkit may fit well. If your work is translating positioning and product nuance into repeatable client content, then a system built around that reality may fit better. There isn't a universal answer. There is a job-to-be-done answer.

A Simple Decision Framework For Agency Strategists

If you're an agency strategist, you need a way to make this decision without turning it into a month-long debate. A lightweight framework usually works better than trying to settle it by opinion.

The right choice depends on where your current bottleneck lives

Start by being blunt about your bottleneck. Is it research? Is it first draft production? Is it QA? Is it client-specific messaging? Is it account-level scaling?

Use this checklist with your team:

- If topic discovery and keyword planning are your biggest weak spots, weight Semrush Content Toolkit more heavily.

- If your team already knows what to write, but struggles to turn strategy into good drafts across many accounts, weight Oleno more heavily.

- If most content problems show up after draft generation, score review effort higher than research depth.

- If client voice drift causes escalations or rewrites, prioritize systems that hold account-specific context better.

- If your agency margin is getting squeezed by strategist edits, track labor reduction before you track output volume.

One sentence worth sitting with: software rarely removes work, it usually relocates it.

A side-by-side matrix keeps the conversation objective

| Evaluation Area | Semrush Content Toolkit | Oleno |
|---|---|---|
| Search research and keyword discovery | Likely a stronger fit for teams centered on SEO planning and topic research | May matter less if your main issue is not research |
| Product, audience, and positioning context | May require more manual handling outside the tool | Better fit if your team needs strategy inputs carried into execution |
| Multi-client brand isolation | Depends on how your team manages account context outside the platform | Better fit if distinct client rules need to stay separated |
| Review-loop reduction | Varies based on how much manual context editors still need to add | Better fit if the goal is reducing strategist correction time |
| Agency scaling without proportional hiring | Helps at the research and optimization layer | Better fit if scaling is blocked by context transfer and QA labor |

That table won't pick for you. It's not supposed to. It should make the trade-offs harder to ignore.
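If your team wants a number to argue about instead of adjectives, one option is to turn the matrix into a weighted score. The sketch below assumes a 1-to-5 rating per evaluation area and weights that reflect where your bottleneck lives. The weights, the ratings, and the neutral `platform_a` / `platform_b` labels are invented for illustration; they are not an assessment of either product.

```python
def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of 1-5 ratings, normalized by the total weight."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(ratings[area] * w for area, w in weights.items()) / total_weight


# Hypothetical weights for an agency whose bottleneck is context and review,
# not research. Shift the weight toward search_research if discovery is the issue.
weights = {
    "search_research": 1,
    "positioning_context": 3,
    "brand_isolation": 2,
    "review_loop_reduction": 3,
    "scaling_without_hiring": 1,
}

# Hypothetical 1-5 ratings your team would fill in after the pilot.
ratings = {
    "platform_a": {
        "search_research": 5, "positioning_context": 2, "brand_isolation": 3,
        "review_loop_reduction": 2, "scaling_without_hiring": 4,
    },
    "platform_b": {
        "search_research": 2, "positioning_context": 4, "brand_isolation": 4,
        "review_loop_reduction": 4, "scaling_without_hiring": 3,
    },
}

results = {name: weighted_score(r, weights) for name, r in ratings.items()}
for name, score in results.items():
    print(f"{name}: {score:.2f}")
```

The useful part is the argument over the weights, not the final decimal. If the team can't agree on what deserves a 3 versus a 1, that disagreement is the real finding.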

Your final decision should be based on one measurable improvement

Pick one metric that matters enough to change behavior. For most agencies, I'd use one of these:

- review minutes per draft

- drafts approved in first round

- strategist hours per account per month

- turnaround time from brief to publish

Then run the pilot and let the result guide you. If one platform materially lowers a constraint you already feel every week, that's usually your answer.
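To keep that comparison honest, measure each pilot against your current baseline rather than against the other tool alone. Here's a small sketch using review minutes per draft as the chosen metric. The baseline, the pilot figures, and the platform labels are placeholders, not real results.

```python
def relative_change(baseline: float, pilot: float) -> float:
    """Fractional change versus baseline; negative means the metric went down."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (pilot - baseline) / baseline


# Hypothetical numbers: average review minutes per draft.
baseline_minutes = 75.0
pilot_minutes = {"platform_a": 60.0, "platform_b": 45.0}

changes = {
    name: relative_change(baseline_minutes, minutes)
    for name, minutes in pilot_minutes.items()
}

# For a "lower is better" metric, the most negative change wins.
best = min(changes, key=changes.get)

for name, change in changes.items():
    print(f"{name}: {change:+.0%} review minutes per draft")
print(f"Largest reduction: {best}")
```

For a metric where higher is better, such as drafts approved in the first round, you'd flip `min` to `max`.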

Apply The Framework To Your Own Evaluation

By this point, you probably know which direction you lean. Not with total certainty maybe, but enough to narrow the field. If your agency needs stronger search planning, Semrush Content Toolkit may be the more natural fit. If your bigger issue is scaling strategy-rich content across multiple clients without burying your team in edits, Oleno may line up better with the work.

The practical move now is simple. Run a live comparison using two or three client accounts, score the output where your team actually feels the pain, and make the decision from there. If you want to see how Oleno maps to that kind of agency evaluation, you can book a demo around your real workflow and pressure test it against the criteria above.

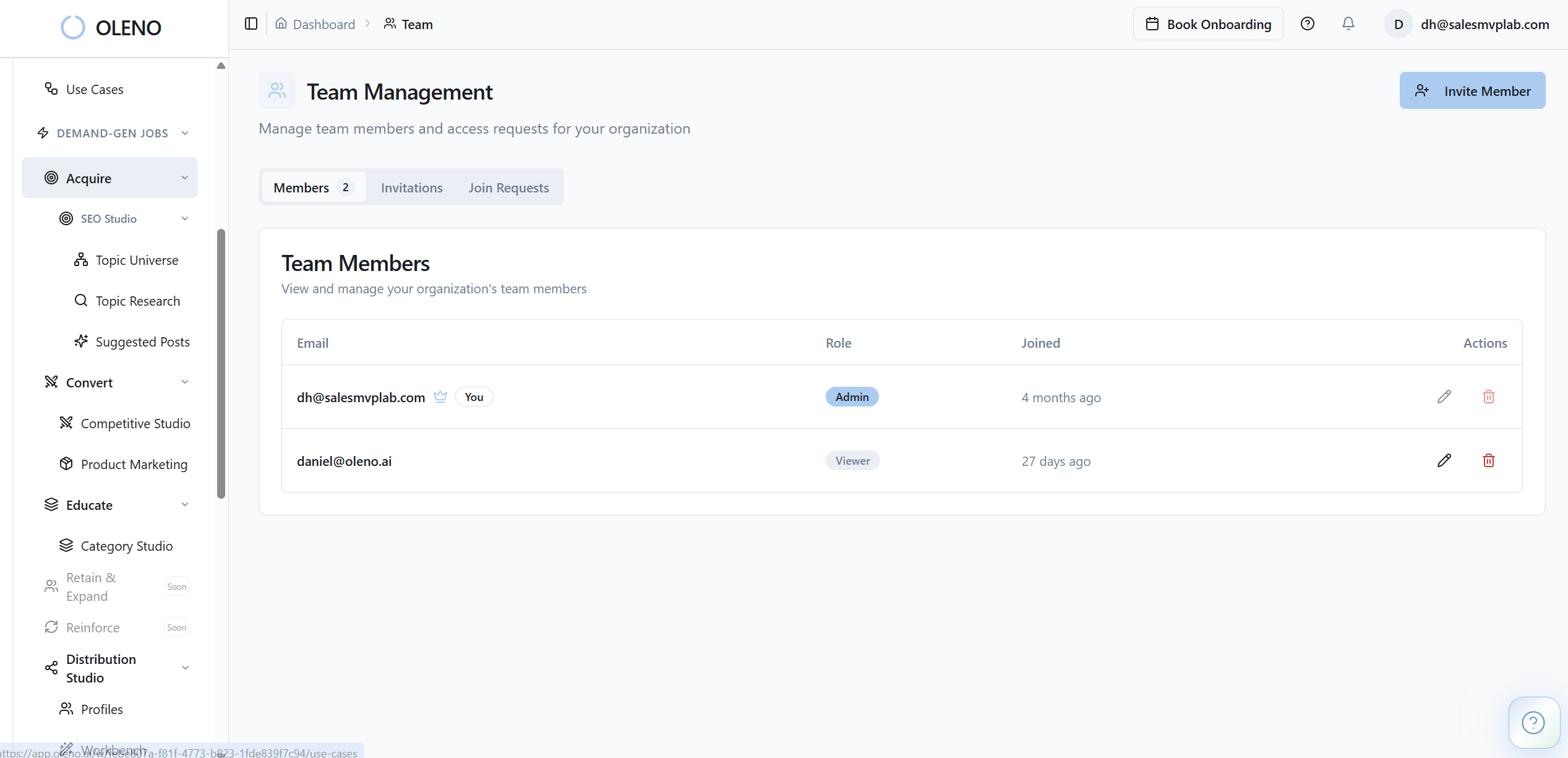

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions