How to Choose Oleno or Prompt-by-Prompt LLM Workflows

98% of bad AI content decisions happen before the first draft gets generated. If you're choosing between Oleno or prompt-by-prompt LLM workflows in 2026, you're not really choosing a writing method. You're choosing where your marketing thinking lives.

Prompt-by-prompt LLM workflows can work for a while. We all did it. You open ChatGPT or Claude, paste a long prompt, argue with the output, paste another prompt, fix the framing, add product context, rewrite the intro, then hand it to someone else who changes the positioning anyway. That's fine at very small scale. But once you have PMM, demand gen, content, and leadership all touching the same narrative, the headache isn't writing speed. It's coordination, context, and rework.

For a scaling SaaS marketing team, that's where this decision starts to matter. You're not buying words. You're trying to generate content that supports campaigns, reinforces positioning, and doesn't create frustrating rework three people later.

Key Takeaways:

- Prompt-by-prompt LLM workflows are usually fine for solo operators, but they start breaking once 4 or more contributors need shared context and repeatable output.

- The real evaluation criteria isn't "which tool writes better." It's where strategy, audience context, and product truth get stored and reused.

- If your team spends more than 30 minutes rewriting AI drafts for positioning and brand fit, the bottleneck is usually system design, not model quality.

- Buyers should evaluate this choice across six factors: context depth, reuse, review burden, handoff risk, output consistency, and executive visibility.

- In most mid-market SaaS teams, a 90-day test is enough to tell whether you're reducing rework or just hiding it upstream.

Why This Choice Gets Hard Once Marketing Gets More Complex

Choosing between Oleno or prompt-by-prompt LLM workflows gets harder when your team is no longer one marketer and one chat window. The decision becomes harder because content is now connected to pipeline, positioning, product launches, and category story. Once several people are involved, the cost of bad context compounds faster than the cost of bad writing.

Back when I was the sole marketer at a SaaS company, I could crank out 3 to 4 strong blog posts a week because all the context lived in my head. Product nuance, customer pain, positioning, objections, all of it. Then the team grew. And right away, output got slower, not faster. Not because people weren't good. Because they didn't have the same context, and I didn't have the time to keep transferring it over and over.

That's the trap with prompt-by-prompt workflows. They look cheap and flexible at first. And fair enough, for early teams they often are. But the real problem isn't that prompts are bad. It's that prompts are terrible at carrying an entire go-to-market system across multiple people, multiple content types, and multiple review loops.

Imagine your demand gen manager needs a campaign page, two nurture emails, a category article, a webinar landing page, and a sales follow-up asset. In a prompt-by-prompt setup, each asset starts over from scratch. New prompt. New context paste. New chance for drift. New chance to miss the actual message. By Friday, nobody's arguing about grammar. They're arguing about what the product actually is.

That's expensive. Let's pretend your team has four people touching content each week, and each person loses just 45 minutes to clarifying context, fixing drift, or rewriting weak AI framing. That's 3 hours a week. Over a quarter, that's roughly 36 hours gone. Almost a full work week. And that estimate is pretty conservative.

The core question is simple: are you evaluating a writing tool, or are you evaluating a system for marketing execution?

If you want to see how a system-based approach looks in practice, you can request a demo.

What Actually Matters When You Compare These Two Approaches

The right comparison criteria between Oleno and prompt-by-prompt LLM workflows have less to do with raw generation quality and more to do with operational fit. Buyers usually over-index on the demo draft. Underneath that draft are six things that matter much more once content volume and team count go up.

A Shared Context Layer Reduces Rework More Than Better Prompts Do

Context depth matters because LLM output is usually a reflection of input quality, not magic. A prompt-by-prompt workflow depends on each operator remembering to paste the right audience detail, product nuance, category framing, proof points, and brand constraints every single time. Miss one, and the draft may still sound polished while being strategically off.

That's why I like the 70/20/10 Rule for evaluation. Spend 70% of your attention on context structure, 20% on workflow fit, and 10% on raw writing style. Most buyers do the reverse. They obsess over whether sentence three sounds sharper, while ignoring the fact that the whole piece may be built on weak positioning.

A practical threshold helps here. If your team is repeatedly adding the same background into prompts 3 or more times per asset, your context model is probably broken. You're not saving time. You're just moving the work around. One person writes the prompt, another person rewrites the output, and a third person corrects the message.

Prompt workflows can still make sense if one experienced operator owns most output and knows the business inside out. That's a valid exception. But once you need reusable product, audience, and messaging context across functions, a system with persistent context starts to matter a lot more.

Review Burden Tells You More Than Draft Quality Ever Will

Review burden is one of the cleanest diagnostic signals in this entire decision. If a draft looks good on first read but still triggers positioning edits, product corrections, tone fixes, and CTA rewrites, then the writing wasn't actually good. It was cosmetically good.

You can use what I call the Two-Review Test. Count how many meaningful revisions happen after the first draft and after the second reviewer. If the same strategic issues keep showing up after reviewer two, your workflow is producing rework, not leverage. That's usually where prompt-by-prompt systems start to wobble.

A lot of teams think, "that's normal, marketing is iterative." And yes, some iteration is healthy. I don't think any serious team should expect zero edits. But there's a big difference between sharpening a point and rebuilding the argument. One is editing. The other is waste.

Here's the operational rule I'd use: if more than 40% of review comments are about message, positioning, audience fit, or product truth, don't solve that with better prompting alone. Solve it upstream by fixing how context gets structured and reused. Otherwise, every article becomes a negotiation between the reviewer and the model.

That gets tiring fast.

Consistency Across Assets Usually Beats Isolated Draft Brilliance

Consistency matters more than most teams admit, especially for category creation and thought leadership. One excellent article doesn't build market understanding. Fifty assets saying roughly the same thing, from slightly different angles, does.

This is where the comparison gets interesting. Prompt-by-prompt LLM workflows can absolutely produce a strong one-off asset. No question. Some marketers are really good at it. But repeated consistency across blog content, landing pages, nurture sequences, buyer enablement, and product marketing is harder because every output depends on the operator remembering the same underlying story.

Think of it like a relay race where each runner writes their own map. You might still finish. But you lose time at every handoff, and you drift off course more than you realize.

The consistency threshold I watch is 5 assets across 3 channels. If your category framing, pain language, and product positioning noticeably drift across that set, you're not evaluating content quality anymore. You're evaluating message control. For scaling SaaS teams, that's usually the real issue.

There's also a hidden buyer consequence here. Your prospects don't consume one asset in isolation. They read your article, click a landing page, hear a sales pitch, and maybe ask an LLM about your category. If each surface tells a slightly different story, trust weakens. Not dramatically. Just enough to hurt conversion.

Executive Visibility Becomes Important Once Content Ties to Pipeline

Most teams don't care about executive visibility until content volume gets expensive. Then suddenly the VP of Marketing wants to know what's shipping, which narratives are being pushed, and whether campaign content actually lines up with the plan. Reasonable ask.

Prompt-by-prompt workflows usually struggle here because the work is fragmented across personal docs, chat history, copied prompts, and local edits. You can still manage it, sure. Plenty of teams do. But the management overhead rises with every contributor and every campaign.

A better way to evaluate this is with the 3-Layer Visibility Check:

- Can leadership see what's being produced?

- Can they verify it matches strategic priorities?

- Can the team trace output back to source context?

If the answer is no to two or more, you're probably not dealing with a writing problem. You're dealing with an operating model problem.

This matters more in 2026 because AI-generated output is cheap. Attention isn't. Executive teams will care less about whether content can be generated and more about whether it can be trusted, reused, measured, and tied to pipeline narratives.

How To Evaluate The Right Fit For Your Team

The cleanest way to evaluate Oleno or prompt-by-prompt LLM workflows is to test them against your actual marketing process, not a sandbox writing prompt. Buyers make bad decisions when they compare two tools on a fake blog post. You need to compare them against the messy reality of campaign work, approvals, product nuance, and cross-functional handoffs.

Run A 90-Day Workflow Test Instead Of A One-Day Writing Test

A one-day test overweights first draft quality. A 90-day workflow test shows whether the system holds up under real marketing conditions. That's the better buying lens.

Use the 3x3 Evaluation Grid. Test 3 use cases over 3 months: one thought leadership asset, one campaign asset set, and one buyer enablement piece. Then score each approach on setup time, review cycles, context reuse, output consistency, and handoff friction. Don't just ask, "which draft do we like more?" Ask, "which approach keeps working after week six?"

Week six matters a lot. Early excitement hides bad process. We were surprised by this more than once in our own work. Some workflows feel fast on day one because nobody has hit the second-order problems yet. By week six, drift shows up, reviewers get annoyed, and your most strategic people get pulled back into editing.

Use a hard rule here: if an approach requires your most senior marketer to rescue message quality more than once per week, it isn't truly scalable for your team.

Score The System On Reuse, Not Just Output

Reuse is one of the strongest buying criteria because it compounds. A prompt that works once is useful. A system that carries product definitions, audience pain, use case nuance, category framing, and tone across dozens of assets is much more valuable once your team is busy.

I'd score this with a simple Reuse Ratio. Count how much of the context for a new asset is already available and usable before anyone starts drafting. If less than 60% of what you need is already structured and reusable, your team is still rebuilding the same foundation every time.

That's usually where prompt-by-prompt setups get exposed. You can create libraries, saved prompts, and docs. People do. And that can absolutely extend the life of the model. So I want to concede that point because it's fair. But libraries still rely heavily on humans knowing what to grab, when to grab it, and how to merge it correctly. That's a better organized manual process, not necessarily a true operating system.

You should also test reuse across roles. Can PMM use the same strategic inputs as content? Can demand gen pull campaign assets from the same narrative source? Can leadership inspect what message is being repeated? If not, then reuse exists in theory, not in practice.

If you want to compare that on a live workflow, you can request a demo.

Pressure-Test The Weakest Link, Not The Strongest User

Every buying team has that one person who can make any tool look good. They're fast, sharp, and know the business cold. Great. But don't evaluate on your strongest user. Evaluate on your median user, and pressure-test your weakest link.

Ask a demand gen manager to generate a campaign asset set. Ask a PMM to create category content. Ask a content marketer to build buyer-facing material using the same underlying strategy. Then compare how much correction each output needs. That's where you'll see whether the system transfers understanding or just rewards prompt craftsmanship.

I use the Bus Factor Rule here. If one key operator disappeared for two weeks, would the content system still produce usable work? If not, the knowledge still lives in a person, not the workflow. That may be acceptable for a very lean team. It gets risky for a mid-market team with quarterly pipeline pressure.

This is where buyers often change their minds. They start thinking they need the smartest prompt stack. Then they realize they actually need a lower-risk way to preserve marketing context across people and time.

The Common Buying Mistakes That Skew This Decision

Buyers usually get this decision wrong by focusing on visible output and ignoring hidden operating cost. The mistake isn't being excited by AI. That's rational. The mistake is testing for writing when the deeper issue is execution quality across a team.

Cheap Drafts Can Hide Expensive Coordination Costs

Prompt-by-prompt workflows often look cheaper because the software cost is lower and the barrier to entry is basically zero. That part is true. But cheap drafts can still create expensive operations.

Let's pretend your team saves $1,000 a month in software costs with a lighter prompt workflow. Sounds good. But if that same setup causes just 12 extra hours of PMM and content review per month, and those people are expensive, the savings may disappear pretty quickly. Not always. But often enough that buyers should model it.

This is what I call the Visible Cost vs Hidden Cost split. Visible cost is subscription spend. Hidden cost is review time, handoff friction, delayed launches, and narrative cleanup. Mature buyers should score both. If you measure only the visible line item, you'll likely undercount the real cost of ownership.

And honestly, this is where a lot of "AI didn't work for us" stories come from. The model probably worked fine. The operating design didn't.

Buyers Overestimate Prompt Portability Across Teams

A great prompt written by one smart operator rarely transfers as cleanly as people expect. That's because prompts are usually full of implied context. The author knows what matters, what to ignore, and where to push back. The next person doesn't.

That leads to a common mistake: the Prompt Playbook Illusion. Teams think they've documented the workflow because they saved prompts in Notion. But a saved prompt isn't the same as a shared system. One is instructions. The other is embedded context that different people can actually use consistently.

There's a legit counterpoint here. If your team is small, tightly aligned, and one person still owns most messaging, portability may not matter much yet. That's a fair exception. I wouldn't force a heavier system too early just to sound sophisticated. But once your message has to travel through multiple people and channels, prompt portability gets weaker than it looks.

You feel it when someone says, "the draft is fine, but it doesn't sound like us." Then someone else says, "the landing page doesn't match the article." Then sales says, "this isn't how we pitch it." Sound familiar?

Buyers Test In Ideal Conditions And Buy For Messy Ones

This might be the most common mistake of all. Buyers test under ideal conditions. They choose under pressure. Then they deploy in messy reality.

The ideal-condition test usually looks like this: one experienced marketer, one clean prompt, one quiet afternoon, one asset type, no competing deadlines. Of course the output looks decent. But that's not how mid-market SaaS teams actually work.

Real conditions are messier:

- multiple reviewers

- shifting priorities

- product nuance that changes mid-quarter

- different asset types

- campaign deadlines

- people joining the workflow who weren't in the initial setup

One thing matters here: resilience. If a system performs well only when your smartest marketer has time to babysit it, it's not really solving the scale problem. It's just creating a polished demo version of the problem.

A Practical Framework For Making The Call

The best way to decide between Oleno or prompt-by-prompt LLM workflows is to use a simple framework that matches your team shape, not internet opinions. Different teams need different levels of system support. The mistake is assuming the same setup works at every growth stage.

The Four-Signal Framework Separates Tool Fit From Team Fit

Use this Four-Signal Framework to make the decision:

| Signal | Prompt-By-Prompt May Fit | A System Like Oleno May Fit |

|---|---|---|

| Team Size | 1 to 2 core operators | 4 or more contributors touching content |

| Context Complexity | Simple offer, narrow message | Multiple personas, use cases, and product nuance |

| Review Burden | Mostly stylistic edits | Repeated strategic and positioning edits |

| Content Surface Area | One or two channels | Blog, campaigns, PMM, buyer enablement, and more |

This framework works because it focuses on operating conditions. Not preference. If you're a tiny team with one experienced content lead and a tight message, prompt-by-prompt can be totally reasonable. No need to overbuild. But if you have cross-functional content demands and recurring narrative drift, a more structured system usually becomes easier to justify.

I wouldn't treat this as rigid doctrine. There are always edge cases. Some small teams are surprisingly complex. Some bigger teams stay simple because one leader still controls all messaging. But for most scaling SaaS teams, those four signals give you a pretty reliable read.

Use The 2-2-1 Rule To Make A Fast Internal Decision

If you need a practical internal decision rule, use the 2-2-1 Rule:

- 2 or more teams contributing content

- 2 or more recurring review layers

- 1 shared category or positioning story that must stay consistent

If all three are true, you should at least evaluate a system approach seriously. That's where prompt-by-prompt workflows tend to create drag. If only one is true, the lighter approach may still be enough for now.

This gives buyers something they can actually use in a meeting. Not just abstract criteria. A simple rule.

And if you're still split, ask one final question: where do we want our marketing intelligence to live? In scattered prompts and individual operators, or in a repeatable system the team can use, inspect, and improve?

That's usually the real decision.

How Oleno Supports A More Structured Evaluation

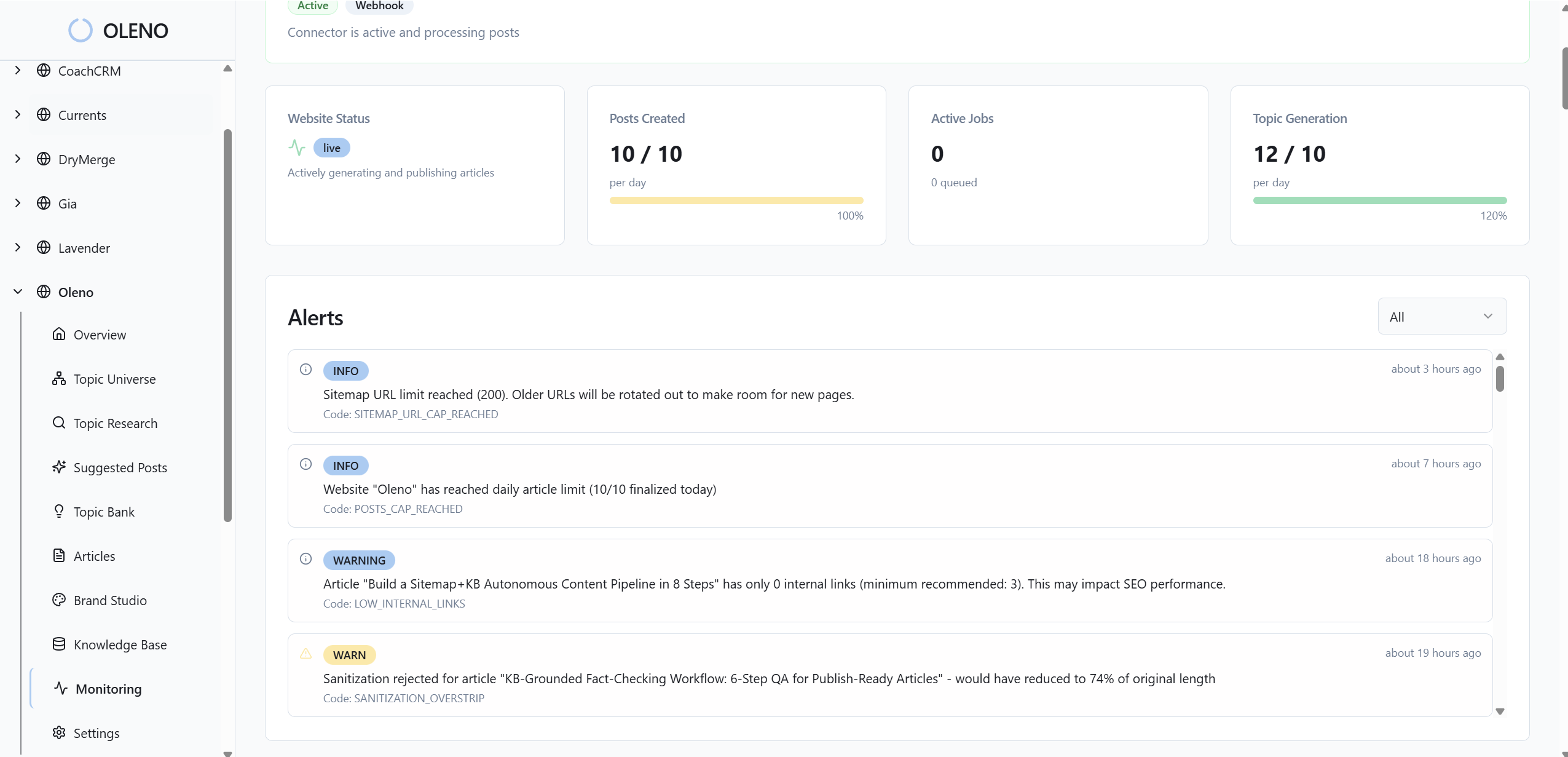

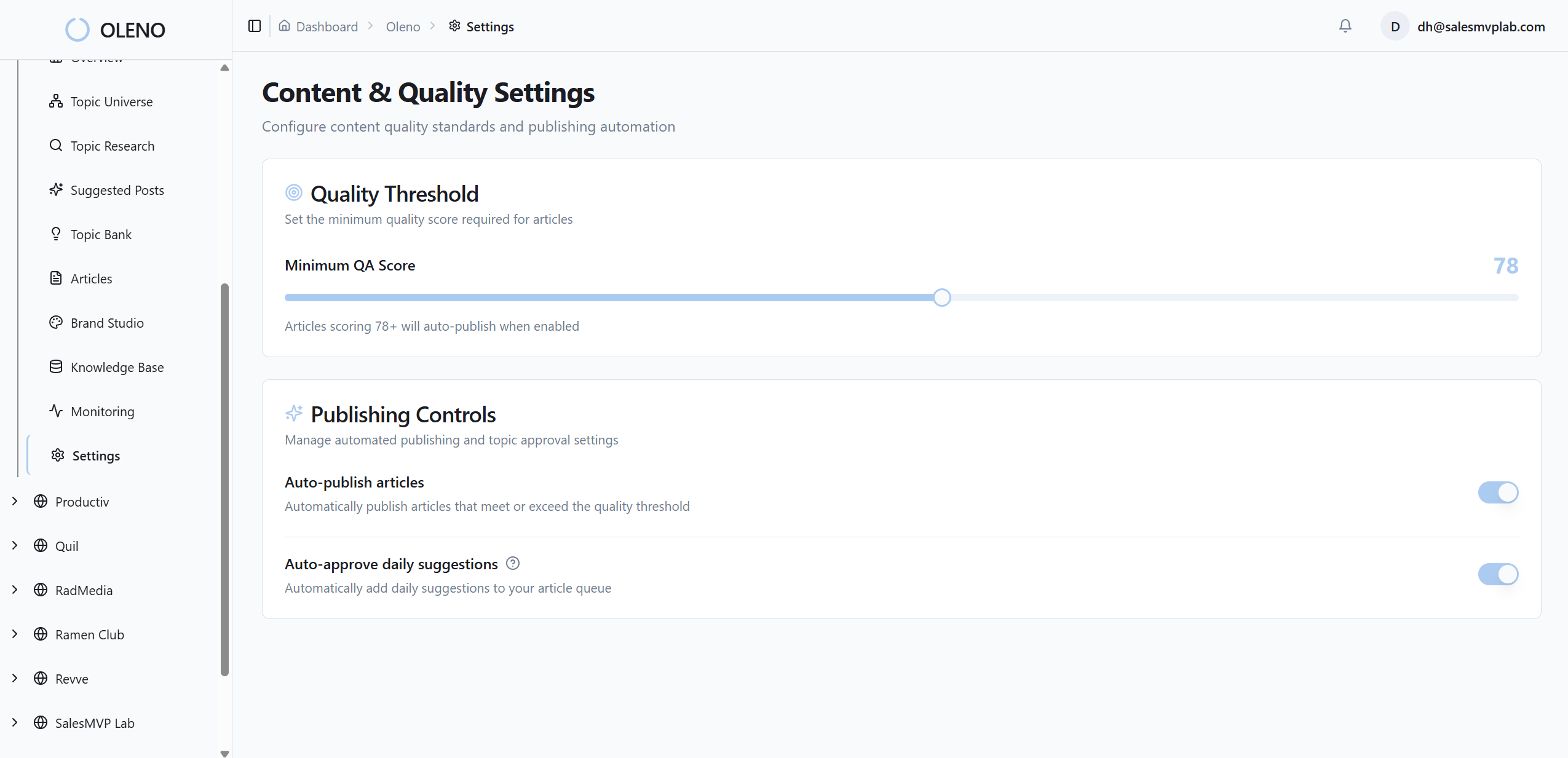

Oleno fits teams that want marketing context, workflow, and output to stay connected as content volume grows. The point isn't to replace strategy. It's to give strategy a place to live so execution doesn't keep restarting from zero.

For scaling SaaS teams doing category content, buyer enablement, and campaign production, that matters because the same core inputs need to show up everywhere. Audience and persona context. Product and use case definitions. Category framing. Story structure. Executive visibility into what is actually getting produced. Those are the kinds of evaluation criteria that tend to matter more in the long run than who wrote the fanciest prompt.

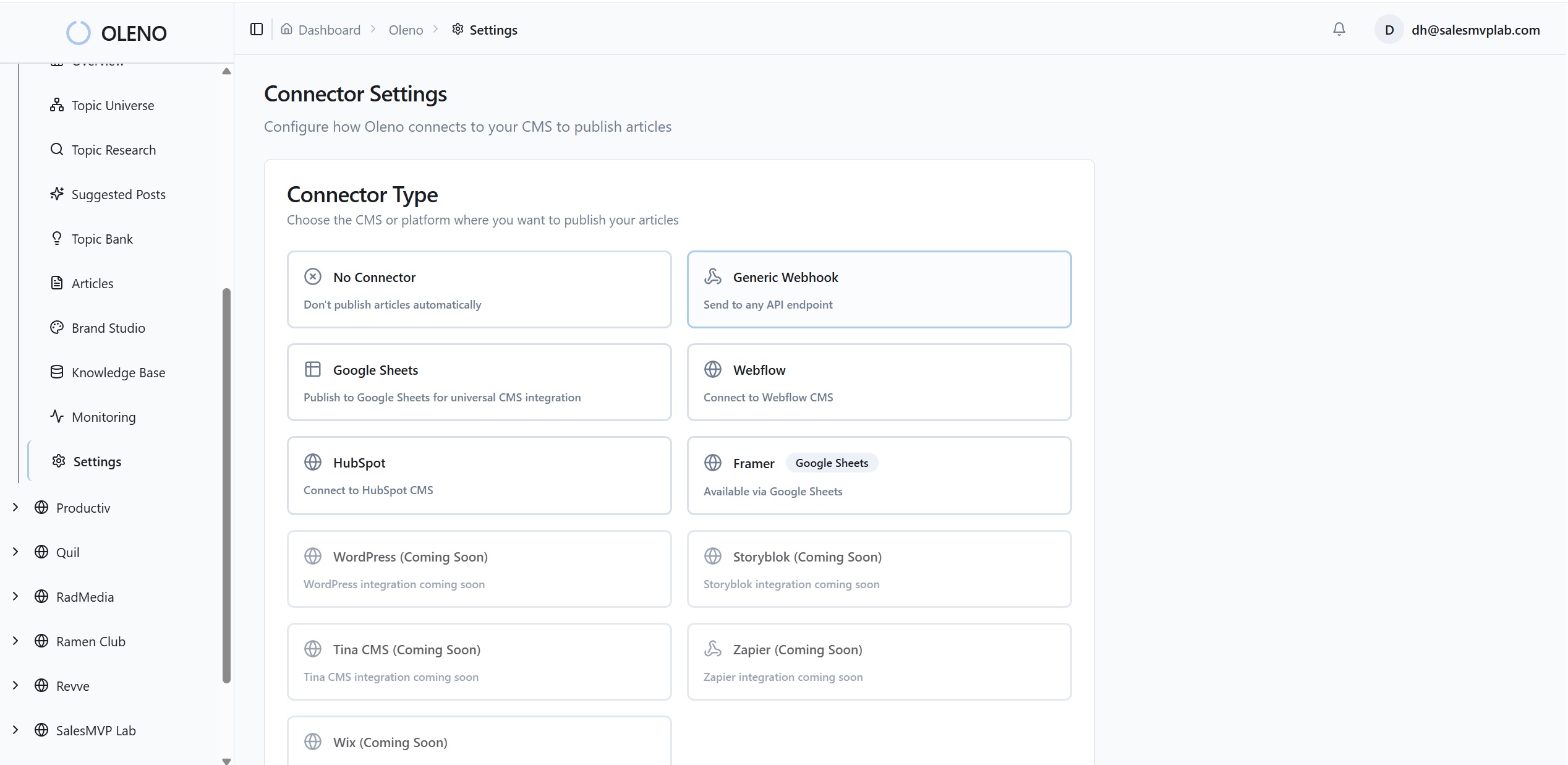

That's also where Oleno's product direction is relevant. The platform is built around structured marketing inputs and execution workflows, with areas like product studio, marketing studio, buyer enablement studio, category studio, storyboard, audiences and persona, and executive dashboard designed to keep strategy and production closer together. A buyer should still verify fit against their own process, of course. But if your current issue is rework tax and narrative drift, those are the types of workflow questions worth testing.

If that's the evaluation you're trying to run, book a demo and compare it against your current prompt workflow using your real campaign work, not a toy example.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions