Implement KB‑Grounded Orchestration: Migrate from Prompt-Based Drafts

Back when I ran a contributor network, we scaled to tens of thousands of pages by system, not heroics. We had structure, review patterns, and a cadence that didn’t fall apart when life got busy. Years later, leading GTM teams, I saw the other side: great ideas, good writers, and a publishing process that still created rework at the worst moments. Sound familiar?

Here’s the uncomfortable truth. The problem isn’t that your prompts are bad. It’s that prompts don’t run your operation. They produce drafts. Then your team fixes tone, chases facts, hunts links, and prays publishing doesn’t break. You don’t need a better spell; you need a governed pipeline that remembers your rules and makes the right things deterministic.

Key Takeaways:

- Replace prompt chains with a KB‑grounded pipeline that enforces rules upstream

- Treat content as a system: Topic → Angle → Brief → Draft → QA → Enhance → Publish

- Make accuracy‑critical tasks code‑driven: links, schema, publishing, retries

- Use a QA‑Gate with pass thresholds to prevent late‑night rewrites

- Apply persistent memory (brand voice + KB facts) at every stage

- Quantify rework costs; editing is the most expensive way to enforce rules

- Switch when edits exceed creation time or facts drift post‑publish

Variable Drafts Hide A Broken System

Most teams think prompt quality equals output quality. It doesn’t. Variability is the tell: tone shifts, structure drifts, facts wander. Reproducible systems beat clever inputs because process, not phrasing, governs reliability. If it looks fine in a demo but cracks at 10–50 articles, you’re seeing a system failure, not a writing issue.

Why single prompts collapse under real workloads

Prompt chains start from zero context every time. That means each run negotiates tone, structure, and facts from scratch. It might pass in isolation, then fail when you ask for 12 pieces by Friday. Your team becomes the “memory,” stitching consistency back in during edits, which is a slow, fragile way to scale.

At scale, what you’re really fighting is variance. Standard work reduces defects; ad hoc steps multiply them. The fix isn’t “better prompts,” it’s persistent rules your operation applies without someone babysitting every paragraph. You don’t want clever. You want boringly consistent. That’s how you avoid the frustrating rework that eats weeks.

What is KB grounding and why does it change outcomes?

KB grounding gives every stage the same factual spine. Retrieval pulls only vetted passages, generation must anchor to them, and QA verifies those citations again. You’re not hoping the model “remembers” your roadmap. You’re feeding it the canon and enforcing it.

This is why drift drops. Your product facts stop wandering, your phrasing stabilizes, and your articles sound like you even when you didn’t touch the draft. Evidence‑led systems reduce error risk because the source of truth is explicit and enforced, not implied. You can feel the difference the first time a VP reads without grabbing a red pen.

Why conventional prompt fixes miss root causes

Shorter prompts. Clever patterns. Tiny model switches. They reduce token costs and latency. They don’t create governance. You still have no audit trail, no pass thresholds, no deterministic links or schema, and no publishing guardrails. So you keep fixing everything downstream.

Governance lives upstream: rules, memory, QA, and code for accuracy‑critical tasks. That’s the shift—from craft to system. If you need a reference, think “standard work” over “best advice.” Codify the flow, then let the pipeline police structure while your team focuses on story, not commas. For a primer on why standard work creates repeatability, see the Lean Enterprise Institute’s definition of standardized work.

The Real Bottleneck You Can Actually Fix

Most leaders assume writing is the bottleneck. It usually isn’t. Fragmented steps create the real drag—topic selection, differentiation, structure, visuals, QA, and publishing. When these live in different tools (and heads), every handoff adds risk. Fix the pipeline, and drafts stop being the fire you chase.

What traditional approaches miss in multi-stage content

Writing is the visible effort. The invisible effort is all the glue. Topic discovery in a spreadsheet. Drafts in a doc. Images in a drive folder. QA in a checklist. Publishing via copy‑paste into the CMS. That fragmentation makes quality depend on the best‑organized person in the room.

The result is predictable: late edits, broken links, missing schema, and visuals bolted on at the end. You can’t scale “don’t forget” as a strategy. When the pipeline itself is designed—inputs defined, outputs validated, and gates enforced—speed increases without quality falling apart. The workload gets calmer. The outcomes get steadier.

How persistent memory changes everything

Prompts restate rules; memory enforces them. Persistent memory means your brand voice, banned terms, structure rules, and KB facts are applied at every stage. Not “remembered,” enforced. That’s the difference between variable drafts and a system you trust.

When memory follows the work, you stop explaining the same tonal nuance 14 times. Structure lands in the right order. Facts stay anchored. Even your alt text sounds like you. That’s a relief for leaders who are tired of reviewing basics and want to focus on narrative choices again.

When should you switch to a governed pipeline?

Three triggers: edits exceed creation time, product facts get corrected after publish, and links/schema keep slipping. Add a fourth—if publishing feels like crossing fingers, you’ve waited too long. A governed pipeline stabilizes quality before anyone opens the CMS.

Measure it for a month. Track time lost to tone fixes, fact checks, link audits, schema gaps, image hunts, and publish failures. If the review stack is bigger than the writing stack, you’ve got your ROI right there. Nothing beats seeing the rework math in black and white.

The Hidden Costs You Keep Paying Every Week

The costs hide in plain sight. Time you don’t measure. Slack threads you ignore. Calendar blocks that get pushed because rewriting “just this one section” becomes three hours. Editing is the most expensive way to enforce rules, and you pay for it every week.

Editorial rework math you can take to finance

Let’s pretend you draft in 20 minutes and edit in 40. Ten posts a month is 400 minutes, or about 6.7 hours, of edits. At $100/hour, that’s roughly $670 in cleanup before visuals, links, schema, and publish. Two brands? Double it. And that’s optimistic compared to what I’ve seen on small SaaS teams.
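If you want to rerun that math with your own numbers, here’s a minimal sketch. The function name and parameters are mine, made up for illustration. The inputs are the let’s-pretend figures: 40 edit minutes per post, ten posts, $100/hour, which works out to 400 minutes of editing.

```python
def monthly_edit_cost(edit_minutes_per_post, posts_per_month, hourly_rate, brands=1):
    """Return (edit hours, edit cost) for one month of posts."""
    hours = edit_minutes_per_post * posts_per_month * brands / 60
    return hours, hours * hourly_rate

hours, cost = monthly_edit_cost(40, 10, 100)
print(f"{hours:.1f} hours, ${cost:,.0f}")  # 6.7 hours, $667
```

Swap in the edit minutes you actually measure and the output becomes your baseline, not mine.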

This is why “just edit it” is a trap. You convert strategy time into production triage. And you still risk missing something under pressure. Standards reduce variation. Variation costs money. This isn’t theory; it’s operations 101. If you need the business case, that’s it.

Want to quantify the delta quickly? Spin up three governed pieces and compare edit minutes to your baseline month. If the number flatlines, keep your workflow. If it drops, you’ve found your budget line. If you’re ready to feel that drop, Try Generating 3 Free Test Articles Now.

The risk surface from hallucinations and broken links

Hallucinated claims and fabricated URLs are more than embarrassing. They erode trust with customers and leadership. The fix isn’t “be more careful.” It’s to remove the risk surface. Retrieval constrains facts. Code injects real internal links. Schema is generated and validated consistently.

This is cheaper than brand repair. Deterministic components exist to eliminate entire classes of mistakes. Don’t assign a human to catch problems a program can prevent. That’s how reliability works—make correctness the default, not the exception.

When publishing breaks, you pay twice

A failed publish burns time on the attempt and on the untangling. Duplicates, broken formatting, missing images—now your team is doing cleanup instead of creating. Add the cognitive load of “did we ship that twice?” and you see why morale dips.

Idempotent delivery, mapped fields, and safe retries prevent double work. Notifications fire only on meaningful events, so your inbox isn’t noise. This is standard operating procedure in good software systems; idempotency is table stakes. If you want a solid overview, see AWS’s guidance on idempotency for distributed systems.

The Costly Moment Right Before You Hit Publish

This is where it hurts. You think you’re done. Then you find voice drift, a missing FAQ schema, and a feature claim that isn’t accurate. So you rewrite under pressure. Quality gates upstream avoid this exact mess, and your evenings stop disappearing.

The 3 pm handoff that becomes a 9 pm rewrite

You send a draft for final review. Feedback starts light—word choice here, a subhead there. Then someone spots a factual wobble. Another notices off‑brand phrasing. Publishing is at 5 pm, and now you’re rewriting sections while chasing links and images you should’ve had earlier.

A pipeline that enforces structure, voice, and accuracy before anyone sees a draft changes this day entirely. Fixes happen where they’re cheapest—upstream. The handoff at 3 pm stays a handoff. Your 5 pm publish stays a publish.

When a VP flags a factual error on a live post

It stings. Trust slides. Teams slow down. I’ve been there on founder‑led teams where the CEO saw a live post and we had to pull it. Not because the idea was bad, but because one line overstated a capability. That’s a cultural tax you pay for weeks.

KB‑grounded checks and a deterministic enhancement pass reduce the odds of that pop‑up Slack. You’re not trusting memory. You’re trusting systems. Over time, that reliability shows up as confidence in your process—and frankly, more air cover for bigger ideas.

What would it feel like to trust your pipeline?

Picture Monday approvals, Thursday publish‑ready articles. No scavenger hunt for images. No link audits. No last‑minute QA spreadsheet. The content looks like it came from your brand, and it’s citable by humans and machines.

That confidence is worth real money. It’s also worth your weekends. If you’d rather trade scramble for stability, that’s your north star.

A Practical Migration Plan You Can Run This Quarter

You don’t need a platform purchase to start. You need a quarter and a plan. Map the work, move accuracy‑critical tasks to code, and enforce quality before drafts leave the pipeline. Then decide whether to keep building or buy what’s already working.

Step 1: Audit current prompts, failure modes, and editorial costs

Inventory inputs, prompts, and every post‑edit step. Tag failures by type: tone, structure, factual drift, fabricated links, schema gaps, publish errors. Record time per type for a month. This baseline becomes your ROI model and tells you which guardrails matter most first.

Be honest about bandwidth. At PostBeyond, I could ship 3–4 posts weekly solo because I had a framework. As the team grew, context fragmented and quality slipped. Measure your drift now; the data will make trade‑offs obvious later.
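One way to keep that baseline honest is to log every fix as a (failure type, minutes) pair and total it at month end. This is a minimal sketch that assumes that log format. The type names mirror the tags in Step 1, and the sample entries are invented for illustration, not real data.

```python
from collections import defaultdict

# Failure tags from the audit step; the identifiers themselves are hypothetical.
FAILURE_TYPES = {
    "tone", "structure", "factual_drift",
    "fabricated_link", "schema_gap", "publish_error",
}

def rework_by_type(log):
    """Sum logged rework minutes per failure type, largest first."""
    totals = defaultdict(int)
    for failure_type, minutes in log:
        if failure_type not in FAILURE_TYPES:
            raise ValueError(f"unknown failure type: {failure_type}")
        totals[failure_type] += minutes
    return sorted(totals.items(), key=lambda pair: pair[1], reverse=True)

# Invented sample entries: (failure type, minutes spent fixing it).
audit_log = [
    ("tone", 25), ("factual_drift", 40), ("tone", 20),
    ("schema_gap", 10), ("fabricated_link", 15),
]
ranked = rework_by_type(audit_log)
print(ranked[0])  # ('tone', 45)
```

The top of that ranking tells you which guardrail to build first.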

Step 2: Design the flow from topic to publish

Define a fixed sequence: Topic → Angle → Brief → Draft → QA → Enhance → Publish. Decide where KB retrieval and voice enforcement apply, and where code owns correctness (links, schema, publishing). Set pass thresholds and when to retry.

Document inputs/outputs per node. This is your standard work. Pipelines outperform prompts under load because expectations are explicit. That’s how you keep quality steady without adding project managers to herd cats.
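To make “inputs/outputs per node” concrete, here’s a rough sketch of the fixed sequence as data. The `Stage` class, the key names, and the stub lambdas are all assumptions for illustration; each lambda stands in for real work such as retrieval, drafting, or QA.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Stage:
    name: str
    requires: set   # keys the stage needs on the work item
    produces: set   # keys the stage must add
    run: Callable[[dict], dict]

def run_pipeline(stages, item):
    """Run stages in order, failing fast when a documented input or output is missing."""
    for stage in stages:
        missing = stage.requires - set(item)
        if missing:
            raise ValueError(f"{stage.name}: missing inputs {sorted(missing)}")
        output = stage.run(item)
        absent = stage.produces - set(output)
        if absent:
            raise ValueError(f"{stage.name}: missing outputs {sorted(absent)}")
        item = {**item, **output}
    return item

# Stub stages; the work item starts with a topic already chosen.
STAGES = [
    Stage("angle", {"topic"}, {"angle"}, lambda i: {"angle": "placeholder angle"}),
    Stage("brief", {"topic", "angle"}, {"brief"}, lambda i: {"brief": "placeholder brief"}),
    Stage("draft", {"brief"}, {"draft"}, lambda i: {"draft": "placeholder draft"}),
    Stage("qa", {"draft"}, {"qa_score"}, lambda i: {"qa_score": 90}),
    Stage("enhance", {"draft", "qa_score"}, {"enhanced"}, lambda i: {"enhanced": i["draft"]}),
    Stage("publish", {"enhanced"}, {"published"}, lambda i: {"published": True}),
]

result = run_pipeline(STAGES, {"topic": "KB-grounded orchestration"})
```

The point of the `requires` and `produces` sets is that a skipped step fails loudly at the handoff instead of quietly at publish.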

Step 3: Build KB retrieval with thresholds and evidence caching

Embed your KB, chunk it for retrieval, and set similarity/recency thresholds. Cache the passages used during drafting and pass them into QA for verification. Tighten thresholds for product facts; relax slightly for market framing so your narrative breathes.

Store retrieval events with the draft. This creates explainability—what the system pulled and why. It’s also how you avoid the late‑stage “is this true?” debate. The evidence is attached, not implied.
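Here’s a small sketch of per-type thresholds plus an evidence cache. Everything named here is an assumption: the threshold values, the `Passage` fields, and the two-number vectors, which stand in for real embeddings you’d compute elsewhere. The recency filter is left out to keep it short.

```python
import math
from dataclasses import dataclass

@dataclass
class Passage:
    id: str
    text: str
    kind: str      # "product" or "market"
    vector: list   # embedding computed elsewhere

# Assumed values: tighter for product facts, looser for market framing.
THRESHOLDS = {"product": 0.85, "market": 0.70}

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vector, kb, evidence_cache, draft_id):
    """Return passages that clear their threshold and record each hit as evidence."""
    hits = []
    for passage in kb:
        score = cosine(query_vector, passage.vector)
        if score >= THRESHOLDS[passage.kind]:
            hits.append(passage)
            evidence_cache.append(
                {"draft_id": draft_id, "passage_id": passage.id, "score": round(score, 3)}
            )
    return hits

# Toy KB with placeholder text.
kb = [
    Passage("p1", "placeholder product fact", "product", [0.9, 0.1]),
    Passage("p2", "placeholder product fact", "product", [0.8, 0.6]),
    Passage("m1", "placeholder market note", "market", [0.8, 0.6]),
    Passage("m2", "placeholder market note", "market", [0.6, 0.8]),
]
evidence_cache = []
hits = retrieve([1.0, 0.0], kb, evidence_cache, draft_id="draft-001")
print([p.id for p in hits])  # ['p1', 'm1']
```

Notice that `p2` and `m1` have the same similarity, but only the market passage gets through. The `evidence_cache` list is what you’d hand to QA along with the draft.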

Step 4: Automate brief generation and information-gain checks

Automate briefs that compare common coverage and highlight gaps. Score uniqueness. If the score is low, re‑angle before writing anything. Include section objectives, voice constraints, and proposed visuals. Attach 3–5 authoritative sources as candidates with context.

Scaffolding improves clarity and speeds writing because the hard thinking happens early. It also prevents copycat content that adds nothing new. You’ll feel the difference the first time an outline forces a better angle instead of letting a boilerplate section slide.
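A uniqueness score doesn’t have to be fancy to be useful. The sketch below is a deliberately crude stand-in: the share of planned sections that no competing outline already covers. The function names, the 0.4 cutoff, and the sample outlines are all assumptions. A real check would compare meaning, not exact headings.

```python
MIN_UNIQUENESS = 0.4  # assumed cutoff; tune it against your own audit

def uniqueness_score(planned_sections, competitor_outlines):
    """Share of planned sections (0.0 to 1.0) that no competing outline covers."""
    planned = {s.strip().lower() for s in planned_sections}
    covered = {s.strip().lower() for outline in competitor_outlines for s in outline}
    if not planned:
        return 0.0
    return len(planned - covered) / len(planned)

def needs_new_angle(planned_sections, competitor_outlines):
    return uniqueness_score(planned_sections, competitor_outlines) < MIN_UNIQUENESS

# Invented outlines for illustration.
planned = ["What is KB grounding", "Why prompts drift", "QA gates", "Idempotent publishing"]
competitors = [
    ["what is kb grounding", "why prompts drift"],
    ["QA gates", "prompt tips"],
]
score = uniqueness_score(planned, competitors)
print(score, needs_new_angle(planned, competitors))  # 0.25 True
```

Only one of four sections is new here, so the brief gets re-angled before anyone writes a word.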

Step 5: Implement a QA-Gate with pass thresholds and refine loops

Codify checks for structure, voice, KB accuracy, snippet readiness, visuals, and filenames. Set a minimum passing score of 85. Anything below triggers targeted refinements. Keep QA artifacts with the draft so you can trace decisions later.

This is where late rework dies. Quality gates reduce defects by design, not by hero edits. Teams relax because “done” actually means done. If you want patterns for scoring, map criteria to the failure modes from your audit.
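Here’s roughly what a gate with a refine loop can look like. The 85 threshold comes from the step above. The weights, the refinement cap, and the toy evaluator and refiner are assumptions for illustration, not a prescribed scoring model.

```python
PASS_SCORE = 85  # minimum passing score named in the step above

# Assumed weights per check; they sum to 100 so a clean draft scores 100.
WEIGHTS = {
    "structure": 25, "voice": 20, "kb_accuracy": 30,
    "snippet_readiness": 10, "visuals": 10, "filenames": 5,
}

def qa_score(results):
    """results maps each check name to a 0.0-1.0 result."""
    return sum(weight * results[check] for check, weight in WEIGHTS.items())

def qa_gate(draft, evaluate, refine, max_refinements=3):
    """Score the draft; refine only the failing checks until it passes or we give up."""
    history = []  # QA artifacts to store with the draft
    for attempt in range(max_refinements + 1):
        results = evaluate(draft)
        score = qa_score(results)
        history.append({"attempt": attempt, "score": score, "results": results})
        if score >= PASS_SCORE:
            return draft, True, history
        if attempt < max_refinements:
            failing = [check for check, value in results.items() if value < 1.0]
            draft = refine(draft, failing)
    return draft, False, history

# Toy evaluator and refiner, standing in for real checks.
def evaluate(draft):
    results = {check: 1.0 for check in WEIGHTS}
    if "synergy" in draft.lower():  # pretend this is a banned term
        results["voice"] = 0.0
    return results

def refine(draft, failing):
    return draft.replace("synergy", "teamwork") if "voice" in failing else draft

final, passed, history = qa_gate("We deliver synergy.", evaluate, refine)
print(passed, [entry["score"] for entry in history])  # True [80.0, 100.0]
```

The `history` list is the QA artifact: it shows what failed, what got refined, and when the draft cleared.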

Step 6: Add deterministic enrichments for links, schema, and visuals

After text passes QA, run code‑only passes. Inject only verified internal links from your sitemap, with anchor text matching page titles. Generate JSON‑LD for Article, FAQ, and BreadcrumbList. Place product visuals using rules and generate alt text. Keep this LLM‑free to eliminate fabrication.

Determinism matters here. Links are either real or not. Schema is either valid or not. Treat these as code paths and your error rate drops. For schema basics, see Google’s introduction to structured data.
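A code-only pass for links and Article schema can be this small. Treat it as a sketch under assumptions: the sitemap is a title-to-URL dict you’ve already verified, the draft is Markdown, and the function names and example.com URLs are placeholders. The JSON-LD uses standard schema.org Article properties, but you’d still validate the output before shipping.

```python
import json
import re

def inject_links(text, sitemap):
    """Link the first plain mention of each page title to its verified URL.

    Anchor text is the page title itself, so nothing is invented.
    """
    for title, url in sitemap.items():
        pattern = re.compile(rf"\b{re.escape(title)}\b")
        # A function replacement avoids backslash surprises in URLs.
        text = pattern.sub(lambda match: f"[{title}]({url})", text, count=1)
    return text

def article_jsonld(headline, author, date_published, url):
    """Build Article JSON-LD as a string ready for a script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)

# Placeholder sitemap entry and draft text.
sitemap = {"Pricing Guide": "https://example.com/pricing-guide"}
draft = "Read the Pricing Guide first. The Pricing Guide covers tiers."
linked = inject_links(draft, sitemap)
schema = article_jsonld(
    "Placeholder headline", "Placeholder Author", "2024-01-01",
    "https://example.com/placeholder-post",
)
```

Only the first mention gets linked, and a title that isn’t in the sitemap never becomes a link. One known gap in this sketch: it doesn’t guard against a title that appears inside another title’s anchor text.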

Step 7: Wire publish connectors, idempotent retries, and logging

Map CMS fields, choose draft or live, and prevent duplicates. Add retry logic for network errors and store version history. Email only on meaningful events. Log inputs, outputs, retrievals, QA scores, publish attempts, retries, and versions.
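Duplicate prevention plus safe retries fits in a few lines. This is a minimal sketch, not a real CMS client: `send` is whatever function actually calls your CMS, `TransientError` is a hypothetical exception for network-style failures, and the in-memory `published` dict stands in for durable storage.

```python
import hashlib
import time

class TransientError(Exception):
    """Hypothetical error type for failures that are worth retrying."""

def idempotency_key(slug, body):
    return hashlib.sha256(f"{slug}\n{body}".encode("utf-8")).hexdigest()

def publish(post, send, published, log, max_attempts=3, base_delay=0.5):
    """Publish once per key, retrying transient failures with exponential backoff."""
    key = idempotency_key(post["slug"], post["body"])
    if key in published:
        log.append({"key": key, "event": "skipped_duplicate"})
        return published[key]
    for attempt in range(1, max_attempts + 1):
        try:
            cms_id = send(post, key)
        except TransientError as exc:
            log.append({"key": key, "event": "transient_error",
                        "attempt": attempt, "error": str(exc)})
            if attempt == max_attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))
        else:
            published[key] = cms_id
            log.append({"key": key, "event": "published", "attempt": attempt})
            return cms_id

# Fake sender that times out once, then succeeds.
calls = []
def flaky_send(post, key):
    calls.append(key)
    if len(calls) == 1:
        raise TransientError("timeout")
    return "cms-123"

published, log = {}, []
post = {"slug": "kb-grounding", "body": "placeholder body"}
first = publish(post, flaky_send, published, log, base_delay=0)
second = publish(post, flaky_send, published, log, base_delay=0)
print([entry["event"] for entry in log])
# ['transient_error', 'published', 'skipped_duplicate']
```

Keying on slug plus body means an edited body counts as a new version. If updates should overwrite instead, key on the slug alone. Either way, the `log` list is the start of your audit trail.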

You want an audit trail, not a dashboard. That’s how you explain what happened and why without starting an incident thread. Do this once, and future publishes stop being a coin flip. If you prefer not to build all of this, Try Using An Autonomous Content Engine For Always-On Publishing.

How Oleno Implements KB-Grounded Orchestration End To End

This isn’t a tool you prompt. Oleno runs the system for you: strategy to publish, with memory and governance in every step. It writes like your brand, enforces differentiation, and makes accuracy‑critical steps deterministic. The goal isn’t faster drafts. It’s reliable shipping, every day.

Oleno discovers and clusters topics from your KB and sitemap, tracks saturation, and prioritizes what to write next. A 90‑day cooldown prevents over‑publishing the same idea. Topic Universe replaces ad hoc ideation with a defensible roadmap. Every approved topic becomes a brief with competitive research and an Information Gain Score, so repetition gets flagged before you spend a word.

Drafts are written with KB retrieval and Brand Studio voice constraints at every stage. That means banned terms, phrasing, and structural rules are enforced automatically. Each H2 opens with a snippet‑ready paragraph. Sections stand alone cleanly. This design increases the odds your content is both readable and referenced by machines, without turning your team into editors.

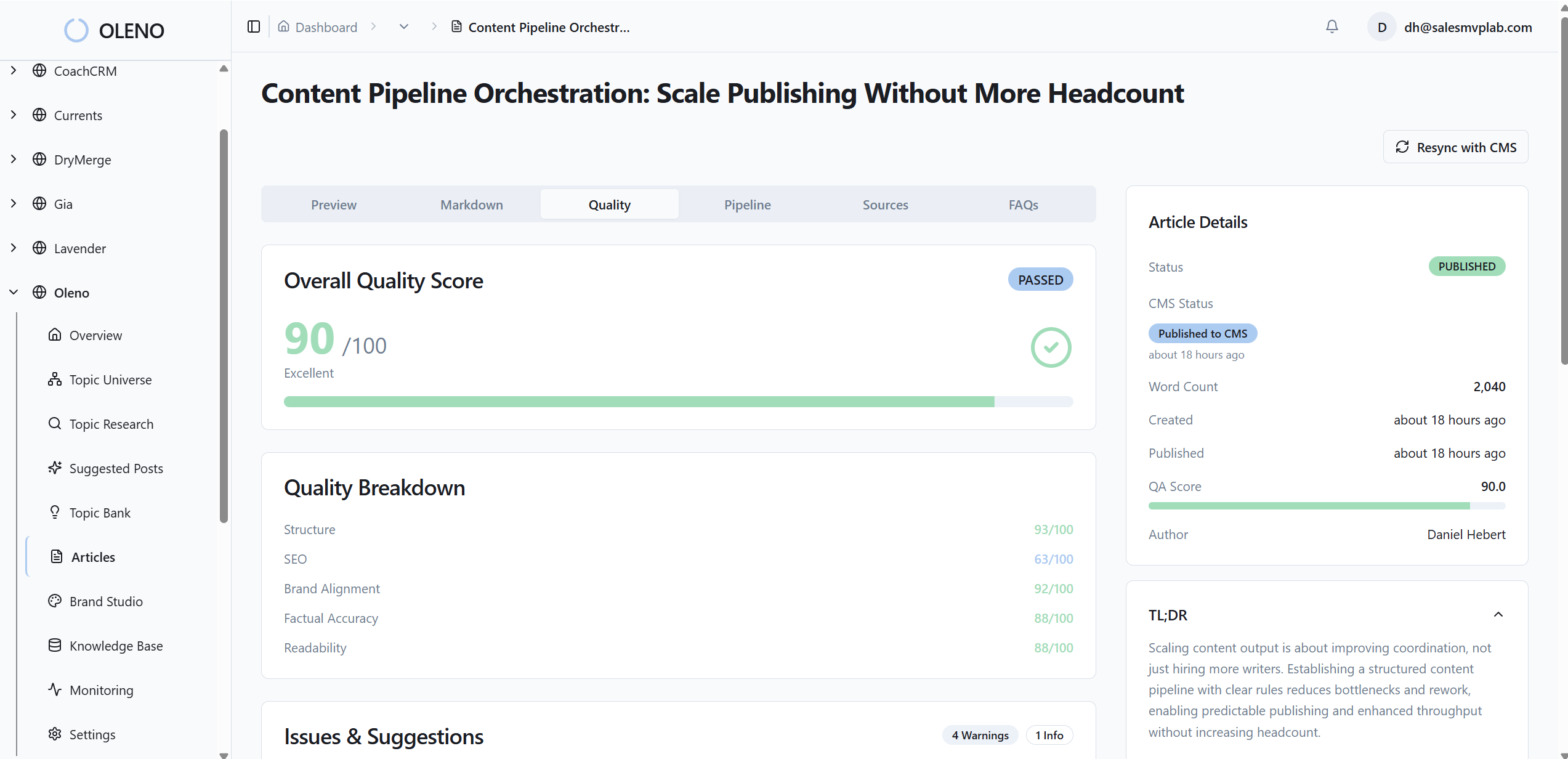

Quality is gated, not hoped for. Oleno’s QA‑Gate evaluates structure, information gain, brand alignment, snippet readiness, and visuals. The minimum passing score is 85. Low scores trigger targeted refinements until the draft clears. You don’t have to chase basics; the pipeline handles them, and your review time goes to narrative choices.

Accuracy lives in code. Internal links are injected only from verified sitemap URLs and anchor text matches page titles. JSON‑LD for Article, FAQ, and BreadcrumbList is generated and validated every time. Visual Studio places brand‑consistent images and product screenshots where they matter, with SEO‑friendly alt text and filenames. No fabricated links. No missing schema.

Publishing is mapped, idempotent, and calm. Oleno connects to WordPress, Webflow, and HubSpot, prevents duplicates, supports draft or live, and retries safely on transient failures. Email notifications fire for meaningful events only. System logs record inputs, outputs, retrieval events, QA scores, publish attempts, retries, and version history—so automation stays explainable.

If your reality today is “edit forever, then hope,” this is the new way to operate. Oleno doesn’t promise perfection. It enforces the rules good teams already want: repeatable strategy, grounded writing, clean structure, deterministic accuracy, and publishing that just works. Want to see the difference without rebuilding your stack? Try Oleno For Free.

Conclusion

You can keep tuning prompts and hiring editors to catch the same problems. Or you can move the work where it belongs—into a governed pipeline that enforces rules before anyone hits “send.” KB grounding provides the facts. Brand memory keeps the voice. Deterministic code removes entire classes of errors. The result isn’t louder output. It’s steadier outcomes. When your pipeline is trustworthy, your team gets to focus on the part only humans can do—ideas worth publishing.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions