Incremental Content Refresh Playbook: Improve Rankings for 1,000+ Pages

Most teams obsess over publishing velocity, then watch page after page quietly lose clicks. The ugly truth is simple. Treating content like a launch, not a product with upkeep, bleeds traffic. The incremental content refresh playbook fixes that. It is boring by design, fast, and safe. Run it weekly, and you cut decay while lifting CTR. I have seen a 30 percent reduction in decay and a 10 to 25 percent CTR lift in 8 to 12 weeks when teams commit to it.

You are not gambling on big rewrites. You are shipping atomic edits with a hypothesis, a canary, and a rollback plan. You learn faster. You waste less. And you protect the authority you already earned. If your backlog is full of net new articles while legacy pages slide, you are losing money in slow motion.

Key Takeaways:

- Build a triage score that ranks pages by business impact, risk, and opportunity, then work from the top every week.

- Ship atomic edits mapped to one metric and a clear hypothesis. Avoid full rewrites unless the page is structurally broken.

- Use canary cohorts and two-week monitoring windows to validate edits before scaling across templates.

- Automate the boring parts: diff-based briefs, refresh queues, QA gates, and rollback triggers tied to thresholds.

- Decide when to prune, merge, or fully rewrite with marginal ROI math, not vibes or opinions.

- Score SEO-grade QA on intent, facts, internal linking, schema, and above-the-fold clarity before you hit publish.

Why an Incremental Content Refresh Playbook Beats Net-New Pages

Most teams get bigger gains from maintenance than from net-new pages. Existing URLs carry links, history, and trust, so a small, targeted refresh moves rankings and CTR faster than starting at zero. A cadence of atomic edits compounds across hundreds of pages, and it protects head terms while you still publish new work.

The compounding math of maintenance

Publishing velocity feels productive, but neglected pages lose clicks quietly month after month. The decay is sneaky. It rarely trips an alarm until a quarter slips. Treat content like a product with lifecycle care: updates, QA, and monitoring. Small, high-confidence improvements shipped weekly halt decay and recapture demand. Stack that across 500 or 1,000 URLs, and your totals climb without extra headcount.

Google rewards freshness signals when they match intent, not just timestamps. A tighter intro that hits searcher intent, fresh stats, improved internal links, and updated schema are all lightweight levers. They are also low risk. You can move quickly, prove lift, and scale the edit.

Simple weekly maintenance targets work well:

- Fix intros that do not satisfy intent in the first 100 words.

- Refresh out-of-date stats and examples with current sources.

- Tighten title and H1 for clarity and intent match.

- Add missing schema that unlocks a SERP feature.

Where net new loses and refresh wins

New pages fight from zero. They need links, crawl budget, and time to earn trust. Many never land above page two. Meanwhile, stale winners drift from position three to nine, and you lose high-intent clicks you already owned. Refreshes convert existing equity into growth faster. Start with pages sitting near page one, recent featured snippet losses, and CTR gaps that lag the curve for position.

A focused refresh queue beats a bloated content calendar. It is cheaper and safer to recapture a falling snippet than to hope a new article outranks entrenched competitors. You also avoid cannibalization, a common mistake when teams pump out net new around the same intent.

Track SERP shifts, not just your site. Feature changes often create fresh opportunities. When image packs, FAQs, or local packs appear, a small change can win a new surface. That is leverage.

What “incremental” really means in practice

Incremental is one change mapped to one metric and one hypothesis. You are not rewriting the whole article. You are aligning the first 100 words to intent. Or adding a missing comparison section. Or fixing internal links into a key page. Or adding FAQ schema to match new SERP behavior. Each edit has a rollback plan and a measurement window.

The point is causality. You want to learn what moved the metric so you can scale it template-wide. Big-bang edits hide the signal in the noise. They also burn calendar time and slow your team.

Examples of atomic edits that move fast:

- Rewrite intro and title to match today’s dominant intent.

- Add a concise comparison block to target “vs” queries.

- Update statistics, sources, and examples from the last 12 months.

- Add or fix FAQ schema tied to real questions on the page.

Reference: Google documents freshness as a ranking signal when it adds value to the query, and that context matters. Read the overview in Google Search Central on ranking systems and freshness.

Stop Guessing, Let Signals Drive Your Incremental Content Refresh Playbook

Signals, not opinions, should decide what you refresh next. Build a rolling backlog from GSC, GA4, and visible SERP shifts. Weight every opportunity by impression volume, conversion impact, and risk. Review weekly so you avoid chasing noise while still catching early slides.

Build a triage queue from GSC, GA4, and SERP changes

A good queue has three inputs. From GSC, track position and CTR deltas by URL and query, plus impressions to size upside. From GA4, watch landing page sessions and conversions to tie effort to revenue. From the SERP, log feature changes and competitor moves. The queue should sort by business impact first, then SEO risk, then effort.

Your queue is a living thing. Add change notes and owners so everyone sees what moved and why. That shared context reduces rework and keeps junior folks effective.
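The sort order described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `QueueItem` fields, the 1 to 5 risk scale, and the choice to put lower-risk pages first when impact ties are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class QueueItem:
    url: str
    business_impact: float  # hypothetical unit, e.g. estimated monthly value at stake
    seo_risk: int           # assumed scale: 1 = low risk, 5 = high risk
    effort_hours: float
    owner: str = ""         # who is responsible for the change
    change_note: str = ""   # what moved and why


def sort_queue(items):
    """Order by business impact (high first), then risk (low first), then effort (low first)."""
    return sorted(
        items,
        key=lambda item: (-item.business_impact, item.seo_risk, item.effort_hours),
    )


queue = sort_queue([
    QueueItem("/pricing-guide", 500, 3, 2.0, owner="sam"),
    QueueItem("/glossary", 100, 2, 1.0, owner="ana"),
    QueueItem("/comparison", 500, 1, 0.5, owner="ana"),
])
for item in queue:
    print(item.url, item.business_impact, item.seo_risk, item.effort_hours)
```

Keeping the owner and change note on the same record as the score means the weekly review reads from one place.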

Useful references for setup:

- Learn the core filters in the Google Search Console Performance report.

How do you set thresholds that prevent thrash?

Write the rules so the team stops debating. You need explicit triggers that move a URL into the queue, a monitoring window, and a rollback tolerance. Weekly review is the right cadence for most sites. Daily creates noise and panic. Monthly is too slow and hides loss.

Starter thresholds that work:

- 20 to 30 percent traffic drop over 90 days on a revenue page.

- Position slide from 4 to 9 on a head query with high impressions.

- Featured snippet lost on a top cluster page.

- CTR that lags the expected curve by 2 to 4 points for its position.

Tie every trigger to a play you can run in under an hour. That is how you keep the flywheel moving.
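The starter thresholds above could be encoded roughly as follows. Treat it as a sketch under stated assumptions: the `PageSnapshot` fields, the 10,000 impression floor used to mean "high impressions", and the default cutoffs are illustrative choices, and the expected CTR for a position has to come from whichever curve you trust.

```python
from dataclasses import dataclass


@dataclass
class PageSnapshot:
    url: str
    is_revenue_page: bool
    traffic_change_90d: float    # -0.25 means a 25 percent drop over 90 days
    position_before: float
    position_now: float
    impressions: int
    lost_featured_snippet: bool
    ctr: float                   # observed CTR as a fraction, 0.05 = 5 percent
    expected_ctr: float          # expected CTR for the current position, as a fraction


def triggers(page, traffic_drop=0.20, ctr_gap_points=2.0, impression_floor=10_000):
    """Return the names of every starter threshold this page breaches."""
    hits = []
    if page.is_revenue_page and page.traffic_change_90d <= -traffic_drop:
        hits.append("traffic_drop")
    if (page.position_before <= 4 and page.position_now >= 9
            and page.impressions >= impression_floor):
        hits.append("position_slide")
    if page.lost_featured_snippet:
        hits.append("snippet_lost")
    if (page.expected_ctr - page.ctr) * 100 >= ctr_gap_points:
        hits.append("ctr_gap")
    return hits
```

A page that returns an empty list stays out of the queue, which is what keeps the weekly review from chasing noise.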

Canary cohorts for safer learning

Rolling changes across 200 pages at once is a risk you do not need. Pick 5 to 10 representative URLs as a canary cohort, matched by template and intent. Ship the same atomic edit, document before and after, then monitor for two weeks. If lift holds inside your error tolerance, scale to the next batch. If it stalls or dips, refine the edit or pick a new lever.

You will learn which edits actually move metrics on your site, not in theory. That knowledge compounds and lowers risk the longer you run the system.
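One way to make the scale-or-refine call mechanical is to compare CTR before and after for each canary URL and look at the median lift. The sketch below assumes a 5 percent relative lift as the bar for scaling. That number, the function names, and the use of the median are illustrative choices, not a tested standard.

```python
from statistics import median


def relative_lift(before, after):
    """Relative change in a metric, e.g. 0.10 for a 10 percent lift."""
    return (after - before) / before


def canary_decision(cohort, min_lift=0.05):
    """cohort is a list of (ctr_before, ctr_after) pairs, one per canary URL.

    Returns "scale" when the median relative lift clears min_lift,
    otherwise "refine".
    """
    lifts = [relative_lift(before, after) for before, after in cohort]
    return "scale" if median(lifts) >= min_lift else "refine"


cohort = [(0.040, 0.046), (0.050, 0.055), (0.030, 0.033), (0.060, 0.066), (0.020, 0.023)]
print(canary_decision(cohort))  # scale
```

The median is used here so one outlier URL does not decide the outcome for the whole cohort.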

If your team wants examples of measured SEO tests, the writeups from SearchPilot case studies show how small changes can drive meaningful lifts.

The Hidden Cost of Full Rewrites at Scale

Full rewrites change too many variables at once, so you cannot trust the outcome. Review times balloon, off-topic drift creeps in, and approvals pile up. Many teams then stop refreshing entirely after a bad month. That is the real failure mode.

Why big-bang edits fail more often than you think

When you change title, intro, headers, layout, links, and schema in one go, you erase causality. You also widen the blast radius if the edit misses intent. Managers get spooked, then pause the refresh program. The site keeps losing ground while you debate the next move.

Big swings also invite voice drift and factual mistakes. Editors will fix some of it, but not all. Rankings wobble, then confidence falls. I have watched teams burn a quarter on three giant rewrites that did not fix the actual problem.

Keep control by limiting scope. One change. One window. One call on scale or rollback.

The measurable waste of reactive fixes

Emergency edits pull attention from launches, campaigns, and sales support. You pay twice, once in production hours and again in lost opportunity while rankings slide. Put numbers on it so it is real. If a top cluster page loses 15 percentage points of CTR for a month on a query with 50,000 monthly impressions, you just missed 7,500 clicks. If that page converts at 1 percent, that is 75 leads gone before anyone reacted.
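The arithmetic in that example is easy to reproduce. The sketch below reads the CTR loss as 15 percentage points on 50,000 monthly impressions, which is the reading under which the 7,500 figure works out. The function names are made up for illustration.

```python
def missed_clicks(impressions, ctr_drop_points):
    """Clicks lost when CTR falls by the given number of percentage points."""
    return impressions * ctr_drop_points / 100


def missed_leads(clicks, conversion_rate_pct):
    """Leads lost at a given conversion rate, expressed in percent."""
    return clicks * conversion_rate_pct / 100


clicks = missed_clicks(50_000, 15)
leads = missed_leads(clicks, 1)
print(clicks, leads)  # 7500.0 75.0
```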

CTR gaps are easy to size. The curve is public, and outliers are common. A 2 to 4 point CTR improvement on high impression terms is a lot of traffic. That is why incremental wins beat net new most weeks.

For a sense of expected CTR by position, the Advanced Web Ranking CTR study is a solid baseline.

Match effort to upside with marginal ROI math

Estimate upside before touching a page. Use impression volume, current position, expected CTR curve, and conversion rate to estimate incremental value. If a single atomic edit can unlock a 2 to 4 point CTR lift on a high impression query, that often beats rewriting a low impression outlier that might never rank.

A quick way to size a page:

- Project clicks: impressions times target CTR for the position you can reach.

- Estimate incremental clicks: target clicks minus current clicks.

- Multiply by conversion rate and lead value to get to dollars.

- Stack against effort hours and risk, then decide.

If the math is thin, park it. Your queue has better options.
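The sizing steps above fit in one small function. This is a rough sketch: the parameter names, the example lead value of 200, and the "value per hour" ratio used to compare pages are assumptions for illustration, and risk is left as a separate judgment.

```python
def size_page(impressions, current_ctr_pct, target_ctr_pct,
              conversion_rate_pct, lead_value, effort_hours):
    """Estimate the incremental value of one edit on one page.

    CTR and conversion rate are given in percent (4 means 4 percent).
    """
    current_clicks = impressions * current_ctr_pct / 100
    target_clicks = impressions * target_ctr_pct / 100
    incremental_clicks = target_clicks - current_clicks
    incremental_value = incremental_clicks * conversion_rate_pct / 100 * lead_value
    return {
        "incremental_clicks": incremental_clicks,
        "incremental_value": incremental_value,
        "value_per_hour": incremental_value / effort_hours,
    }


# Hypothetical page: 50,000 impressions, CTR from 4 to 7 percent,
# 1 percent conversion, 200 per lead, one hour of effort.
print(size_page(50_000, 4, 7, 1, 200, 1))
```

Sorting candidates by `value_per_hour` gives a quick first cut, and pages with thin numbers drop to the bottom on their own.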

How an Incremental Content Refresh Playbook Calms the Chaos

A good refresh program reduces risk and stress. You ship smaller changes, catch mistakes earlier, and reverse course faster when metrics dip. Teams stop arguing and start executing. Confidence rises because results are visible and repeatable.

What does “SEO-grade QA” include?

QA is not spellcheck. It is a set of gates that reduce ranking risk and protect revenue pages. Validate intent alignment first: the intro must satisfy the dominant query. Check facts and sources for freshness. Confirm internal links into and out of the page are current and aligned with your cluster. Review schema coverage and eligibility for rich results. Then look at layout clarity above the fold. Can a scanner see value in five seconds?

Score each dimension, attach evidence, and block deploys that miss thresholds. That is how you keep pages eligible for features and avoid subtle losses that linger for months.

A practical QA checklist looks like this:

- Intent fit in title, H1, and first 100 words.

- Stats and examples updated within 12 months.

- Internal links aligned to priority pages and clusters.

- Correct schema applied and validated.

- Above-the-fold clarity with a clear promise and scannable structure.
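A gate over that checklist can be as simple as a score per dimension and a minimum for each. The sketch below assumes a 1 to 5 scoring scale and made-up minimums. Both are placeholders to adapt, not recommended values.

```python
# Hypothetical minimum scores per QA dimension, on an assumed 1-5 scale.
QA_THRESHOLDS = {
    "intent_fit": 4,
    "freshness": 3,
    "internal_links": 3,
    "schema": 4,
    "above_the_fold": 3,
}


def qa_gate(scores, thresholds=QA_THRESHOLDS):
    """Return (passed, failures). A missing score counts as a failure."""
    failures = [
        dimension
        for dimension, minimum in thresholds.items()
        if scores.get(dimension, 0) < minimum
    ]
    return (len(failures) == 0, failures)


passed, failures = qa_gate(
    {"intent_fit": 5, "freshness": 4, "internal_links": 3, "schema": 2, "above_the_fold": 4}
)
print(passed, failures)  # False ['schema']
```

Returning the failing dimensions along with the verdict gives the editor a concrete reason for the block.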

Why do rollbacks protect rankings?

Things break. A safe workflow always includes fast rollbacks tied to hard thresholds. Tag each deployment with a change note, the hypothesis, and the metric you expect to move. If position dips beyond tolerance, or CTR falls for two consecutive reads, revert instantly. Then investigate. Safe rollbacks protect revenue pages and build team confidence to keep shipping.

Rollbacks also speed up learning. You do not fear trying an edit when reverting is quick and clean. That is how you avoid paralysis after a miss.
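The two rollback conditions described above, a position dip beyond tolerance or CTR falling for two consecutive reads, can be checked with a small function. This is a sketch with assumed conventions: reads are in chronological order, the CTR list starts with the pre-deploy baseline, and the default tolerance of two positions is only an example.

```python
def should_rollback(baseline_position, position_reads, ctr_reads, position_tolerance=2.0):
    """Decide whether a deployed edit should be reverted.

    position_reads: post-deploy average positions, oldest first (higher is worse).
    ctr_reads: CTR values, oldest first, starting with the pre-deploy baseline.
    """
    position_dipped = (
        bool(position_reads)
        and position_reads[-1] - baseline_position > position_tolerance
    )
    # Two consecutive falls means the last three reads are strictly decreasing.
    ctr_fell_twice = (
        len(ctr_reads) >= 3
        and ctr_reads[-3] > ctr_reads[-2] > ctr_reads[-1]
    )
    return position_dipped or ctr_fell_twice


print(should_rollback(4.0, [4.5, 7.0], [0.05, 0.05, 0.05]))    # True, position dipped
print(should_rollback(4.0, [4.2, 4.1], [0.05, 0.048, 0.051]))  # False, CTR recovered
```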

The Incremental Content Refresh Playbook, Step by Step

You can run this as a weekly ritual with a small team. The steps are simple. The discipline is where most teams fail. Write the rules, stick to the windows, and keep score.

Define your signals, thresholds, and backlog rules first

Your rules live in a runbook everyone can follow. Define which metrics matter, how you calculate deltas, and when a page enters or exits the queue. Decide scoring, tie breakers, and business weighting. Publish it. With clear rules, triage becomes fast and junior folks can execute without debate.

A simple starting sequence:

- Pull GSC query and URL deltas weekly, filtered by impression floor and target countries.

- Apply triggers: slides, snippet losses, CTR gaps, or conversion dips.

- Score by business impact: impressions times value per click or down-funnel value.

- Pick a canary cohort: 5 to 10 URLs matched by template and intent.

- Write atomic edit briefs: hypothesis, lever, expected metric, and monitoring window.
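An atomic edit brief from the last step can be captured as a small record, so every change carries its hypothesis and review date. The field names and the 14-day default window below are illustrative assumptions based on the two-week monitoring window used in this playbook.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class EditBrief:
    url: str
    hypothesis: str        # why this edit should move the metric
    lever: str             # the single change being made
    expected_metric: str   # the single metric expected to move
    shipped_on: date
    monitoring_days: int = 14

    @property
    def review_on(self):
        """Date on which to make the scale-or-rollback call."""
        return self.shipped_on + timedelta(days=self.monitoring_days)


brief = EditBrief(
    url="/pricing-guide",
    hypothesis="Intro does not match comparison intent",
    lever="Rewrite first 100 words",
    expected_metric="CTR",
    shipped_on=date(2024, 1, 1),
)
print(brief.review_on)  # 2024-01-15
```

Freezing the record keeps the hypothesis from being edited after the results come in.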

What qualifies as an atomic edit?

Atomic edits are small, focused, and testable. One change, one lever, one metric. That is the rule. Rewrite the intro to match today’s top intent. Add a missing comparison block. Update facts and sources. Improve internal links to your money page. Add FAQ schema because the SERP now shows FAQs. Each edit should take under an hour including QA.

Select and run canary pages inside the same rhythm. Ship to the cohort, document before and after, then watch GSC for two weeks. If you see the expected lift inside your tolerance band, move to batch two. If not, you learned something. Adjust and try the next lever.

Stop chasing approvals. Start publishing validated edits with a tight loop.

How Oleno Operationalizes Your Incremental Content Refresh Playbook

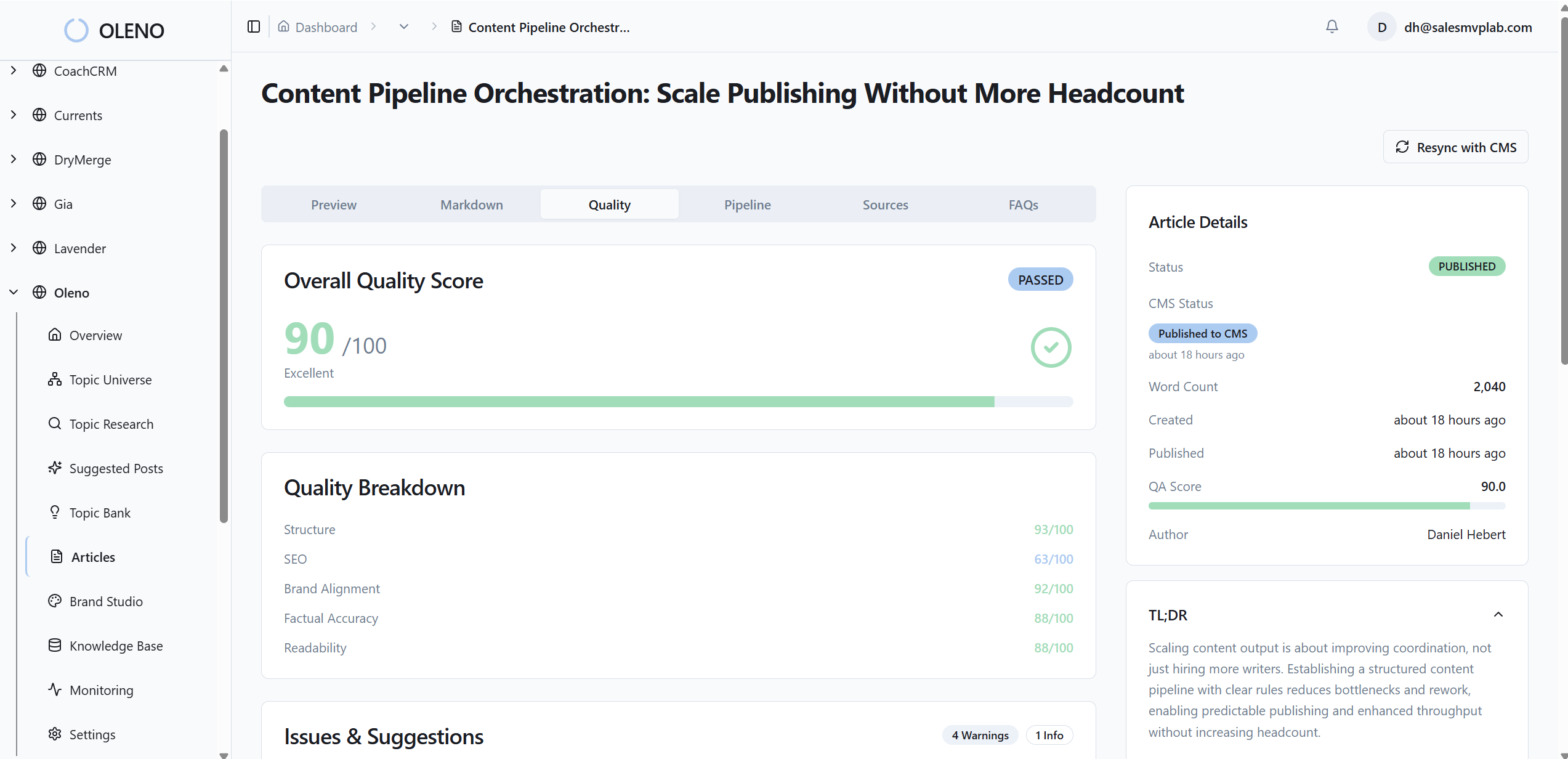

Oleno turns the manual refresh system into a reliable workflow. The platform ingests your signals, enforces your rules, and keeps the refresh queue moving even when priorities shift. You get faster cycles, safer deploys, and clearer learning.

Automated triage and refresh queues that never sleep

Oleno connects to GSC and GA4, flags threshold breaches, and pushes URLs into a ranked queue. Cohorts auto-group by template and intent, so you can test the same atomic edit where it matters. Suggested edit briefs come from your governance rules, not ad hoc prompting. That kills spreadsheet triage and the common mistake of reacting late to slow slides on high value pages.

With Oleno, owners, hypotheses, and monitoring windows are attached to every change. You see exactly what shipped, why it shipped, and whether it worked. That context shortens meetings and keeps execution steady.

SEO-grade QA gates tied to governance studios

Oleno’s Brand Studio and Product Studio keep edits on voice and on truth. QA checks validate intent fit, schema eligibility, internal linking, and above-the-fold clarity before anything goes live. Risky deployments are blocked with clear reasons and suggested fixes. Review cycles drop from hours to minutes, and avoidable failures stop reaching production.

Feature highlights that matter:

- Refresh queue with business-weighted scoring.

- Diff-based briefs that show exactly what will change.

- Schema and link checks that catch eligibility gaps.

- Change history with annotations for every deploy.

Cut review time from 23 minutes per page to 3 to 5 minutes, then scale the win across templates. That is what Oleno delivers.

Safe deploys are standard in Oleno. Canary controls ship to 5 to 10 URLs first, then auto-scale when lifts hold inside your tolerance. Every change is reversible, and rollback triggers are tied to your thresholds. The result is a refresh program your team trusts. You move fast without gambling on revenue pages.

Before you wrap this, lock in your next 90 days of refresh work with a system that does not miss quiet slides or bury learnings.

Conclusion

Publishing new pages feels productive, but the real gains come from disciplined maintenance. An incremental content refresh playbook gives you weekly wins that add up, fewer surprises, and clearer learning. Set hard thresholds, ship atomic edits through canaries, score SEO-grade QA, and protect your rankings with fast rollbacks. In the teams I have seen commit to it, that has meant roughly 30 percent less traffic decay and a 10 to 25 percent CTR lift in the first 8 to 12 weeks. Then keep going. The compounding does the rest.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I have worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions