Intent Audit: 7-Step Process to Map Pages to Search Intent and Fix Gaps

Most teams don’t miss pipeline because they can’t rank. They miss it because the page that ranks isn’t doing the job the searcher came to do. I’ve lived both sides: driving big traffic at Steamfeed, and watching content at Proposify pull in leads that stalled. Ranking is not the same as aligning to intent. Not even close.

Here’s the thing. Search intent isn’t a vibe; it’s an observable pattern in the SERP and in your query mix. When your “how-to” ranks and the page screams “book a demo,” people bounce. When the SERP flips to comparisons and your evergreen guide refuses to move, conversions slide quietly. You don’t need more content. You need an intent audit and a plan.

Key Takeaways:

- Traffic without intent fit looks busy and burns pipeline; align each page to one job

- Build audits on signals (SERP patterns, query clusters, behavior), not opinions

- Score every page with a weighted model, then assign one remediation action

- Fix cannibalization by choosing a primary intent per cluster and refactoring links

- Ship remediation in two-week sprints; measure CTR lift, micro-CTA clicks, and MQL impact

- Use systems (briefs, QA gates, CMS publishing) to reduce rework and keep momentum

Ready to operationalize this instead of debating it? Take the system approach: Try Generating 3 Free Test Articles Now.

Ranking Without Intent Fit Drains Pipeline

Ranking without intent fit drains pipeline because the page’s “job” doesn’t match the visitor’s job. You’ll see bounces, weak CTA clicks, and decent dwell that goes nowhere. For example, a pricing-heavy hero atop a how-to query looks ambitious, then tanks conversions.

The cost of pages that pull traffic but miss demand

Traffic without intent fit is the worst kind of vanity. It props up your dashboard while sales wonders why form fills are soft and MQLs feel misqualified. When I was leading sales at Proposify, we ranked for topics our audience loved reading, but too many were miles from proposals or signatures. Great for ego. Rough on pipeline.

The pattern is predictable. The query is research, the SERP is educational, and your page opens with product proof and a demo button above the fold. You’ll get time-on-page and maybe scroll, then an exit to a competitor’s comparison. You can see it in the behavior: high impressions, OK CTR, low micro-CTA clicks. It’s not the writing. It’s the job mismatch.

If you want a sanity check, compare your titles to the SERP archetype. If the SERP shows guides and definitions, your title can’t promise “pricing” without paying a CTR tax. Users follow information scent. Break the scent, and they backtrack. It’s not emotional. It’s pattern matching.

The Real Roots Of Intent Mismatch In Your Inventory

Intent mismatch comes from missing SERP patterns, sloppy cluster mapping, and letting two URLs fight for one query family. Traditional audits grade metadata; they rarely inspect the job the SERP is signaling. The fix is simple: add signals, assign a primary intent, then rewrite modules to match.

What traditional approaches miss

Traditional audits chase keywords and tick boxes. Useful, but surface-level. If you don’t look at the SERP archetype, People Also Ask patterns, and the query cluster tied to each URL, you’ll misread the job-to-be-done. Titles and H1s don’t tell the full story; SERPs do.

Here’s the deeper issue. Teams collect keywords, not intent-aligned clusters. Pages get built on “best X” terms while the SERP shows hands-on guides and definitions. Or worse, you create two pages for the same cluster and split signals. The SERP won’t reward indecision.
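Spotting two URLs that fight for one cluster is easy to automate once each URL carries a cluster label. Here is a minimal sketch, assuming you already have (URL, cluster) pairs from your own mapping; the function name, URLs, and cluster labels are hypothetical examples, not output from any tool.

```python
from collections import defaultdict


def find_cannibalized_clusters(rows):
    """Return clusters that more than one URL is trying to serve.

    rows: iterable of (url, cluster_label) pairs from your own mapping.
    """
    by_cluster = defaultdict(set)
    for url, cluster in rows:
        by_cluster[cluster].add(url)
    # Keep only clusters where signals are split across several URLs.
    return {c: sorted(urls) for c, urls in by_cluster.items() if len(urls) > 1}


# Hypothetical inventory rows for illustration only.
rows = [
    ("/blog/proposal-template-guide", "proposal template"),
    ("/blog/how-to-write-a-proposal", "proposal template"),
    ("/compare/proposal-tools", "proposal software comparison"),
]
conflicts = find_cannibalized_clusters(rows)
print(conflicts)
```

Every cluster this returns needs a decision about which URL owns it.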

You don’t need a complicated workflow. You need consistent inputs and rules. Scan results, classify the dominant page types, and label your cluster. Keep that label attached to the URL. This is why we lock an angle before drafting. Decide the story and the job up front. It reduces rework later. It also aligns with how Google teaches evaluators to think about user need in Google’s Search Quality Evaluator Guidelines.

The Hidden Costs You Can Measure Today

Misaligned intent costs you in conversions, hours, and opportunity. You can quantify all three with simple assumptions. Do the math once; you’ll stop arguing “is this worth it?” and start assigning owners.

Let’s pretend you have 100 pages and 30 are misaligned

Let’s keep it directional. Say 30 pages average 800 monthly clicks each, convert at 1%, and an MQL is worth $500. Realigning those pages nudges conversion to 1.8%. That 0.8-point lift on 24,000 monthly clicks yields ~192 incremental MQLs a month. Even if only half of those materialize at half the value, you’ve still recovered meaningful pipeline.
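If you want to rerun this with your own numbers, the arithmetic fits in a few lines. This is a sketch using the directional inputs from the example; the variable names are mine, and the "conservative" case is one reading of "half hit at half value" (half the MQLs materialize, each worth half as much).

```python
# Directional inputs from the example; swap in your own.
pages = 30
clicks_per_page = 800      # monthly clicks per misaligned page
baseline_cvr = 0.010       # 1% conversion today
realigned_cvr = 0.018      # 1.8% after realignment
mql_value = 500            # dollars per MQL

monthly_clicks = pages * clicks_per_page
incremental_mqls = round(monthly_clicks * (realigned_cvr - baseline_cvr))
full_value = incremental_mqls * mql_value

# Assumed reading of the conservative case: half the MQLs, at half the value.
conservative_value = (incremental_mqls / 2) * (mql_value / 2)

print(monthly_clicks)       # 24000
print(incremental_mqls)     # 192
print(full_value)           # 96000
print(conservative_value)   # 24000.0
```

Even the conservative case is $24,000 a month from pages that already rank.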

Now, you might push back: conversion isn’t everything. True. But these pages already rank. The cheapest win is matching the job the SERP is telling you to do. You don’t need net-new assets. You need module order, CTA type, and internal links that reinforce the right journey step.

One caveat. This isn’t about perfection. It’s about moving misfits into “fit enough to perform” and freeing budget for net-new coverage where you have gaps.

The Moments That Make Teams Change Course

Teams change when the metrics stay flat while the story doesn’t. You’ll see rankings hold and demos dip. You’ll see traffic steady but clicks go soft. These are intent alarms, not content quality alarms.

A quick story from my time leading sales

At Proposify, we ranked for topics sales leaders ate up: leadership, SDR management, productivity. Great reads. Bad fit. Our product helped teams send proposals and get signatures. The articles weren’t wrong; they were misaligned with what our buyers needed to do next. Leads came in. Momentum died in the middle.

That’s when we drew a line. Content had to map to the journey: researching proposals, evaluating vendors, understanding pricing, comparing tools, then onboarding. We didn’t stop writing thought leadership entirely; we tied it back to jobs our software actually helped with. Conversion climbed because the path made sense.

If you’ve ever watched a dashboard dip at 3am and couldn’t explain it, it’s often the SERP shifting under you. The SERP switches to comparisons; your guide stands still. The fix isn’t new CTAs. It’s a page-level intent reset and internal links that route users to the right sibling page.

Still seeing this pattern crop up quarter after quarter? Formalize how you record decisions. A little discipline goes a long way. Think documentation standards like Auditing Standard 1215 on Documentation, applied to content. Not overkill. Just traceability.

A 7-Step Intent Audit That Produces A Prioritized Fix List

An intent audit identifies each page’s job, quantifies the gap, and pairs it with a single action. The output is a prioritized fix list you can ship. Do this once per quarter, and you’ll stop rewriting the same pages twice.

Step 1: Inventory pages and collect signals

Start by pulling a clean inventory of URLs, titles, and their primary query clusters. Add Google Search Console clicks, impressions, CTR, and the actual query list per URL. Pull engagement metrics: bounce rate, time-on-page, micro-CTA clicks, and exit rate. Then review the live SERP for each page’s top queries, including People Also Ask and featured snippets.

Keep everything in one sheet, one row per URL. This matters because you’re going to attach decisions to the URL, not just the keyword. For each page, note the dominant SERP archetype (guide, comparison, product), dominant PAA verbs (how, vs, price), and snippet types. That gives you a consistent signal set you can re-check later.
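If your "sheet" lives in code rather than a spreadsheet, one row per URL might look like the sketch below. The class and field names are my own assumptions for illustration; the fields simply mirror the signals listed above, and the sample values are made up.

```python
from dataclasses import dataclass, field


@dataclass
class PageSignals:
    """One audit row per URL (hypothetical schema)."""
    url: str
    title: str
    primary_cluster: str
    clicks: int
    impressions: int
    bounce_rate: float
    micro_cta_clicks: int
    serp_archetype: str                                      # "guide", "comparison", or "product"
    paa_verbs: list[str] = field(default_factory=list)       # e.g. "how", "vs", "price"
    snippet_types: list[str] = field(default_factory=list)
    queries: list[str] = field(default_factory=list)

    @property
    def ctr(self) -> float:
        # Derived from clicks and impressions so it can't drift from them.
        return self.clicks / self.impressions if self.impressions else 0.0


# Made-up example row.
row = PageSignals(
    url="/blog/how-to-write-a-proposal",
    title="How to Write a Proposal",
    primary_cluster="proposal writing",
    clicks=800,
    impressions=40_000,
    bounce_rate=0.62,
    micro_cta_clicks=12,
    serp_archetype="guide",
    paa_verbs=["how"],
)
print(f"{row.ctr:.2%}")
```

Keeping the SERP archetype and PAA verbs on the same record as the metrics is what lets you re-check the same signal set next quarter.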

Step 2: Classify SERP and query clusters by intent

Label each page’s cluster: informational, commercial research, transactional, or navigational. Use rules, not vibes. If the SERP shows definitions, “what is,” and deep guides, it’s informational. If “best,” “vs,” and “pricing” dominate, it’s commercial or transactional. Flag mixed SERPs where two page types show up, because they need careful handling.
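"Rules, not vibes" can be as plain as counting marker phrases in the titles of the top results. The sketch below is one possible rule set, not a definitive one: the marker lists, the 60% dominance threshold, and the function name are all assumptions. It also does not try to separate commercial research from transactional, or to detect navigational queries.

```python
# Assumed marker phrases; tune these for your own space.
INFORMATIONAL_MARKERS = ("what is", "how to", "guide", "definition")
COMMERCIAL_MARKERS = ("best", " vs ", "pricing")


def classify_serp(titles, dominance=0.6):
    """Label a SERP from its result titles using simple phrase rules."""
    lowered = [t.lower() for t in titles]
    info = sum(any(m in t for m in INFORMATIONAL_MARKERS) for t in lowered)
    comm = sum(any(m in t for m in COMMERCIAL_MARKERS) for t in lowered)
    total = info + comm
    if total == 0:
        return "unclassified"
    if info / total >= dominance:
        return "informational"
    if comm / total >= dominance:
        return "commercial"
    return "mixed"  # two page types compete: flag for careful handling


# Made-up result titles for illustration.
guide_serp = [
    "What is a business proposal?",
    "How to write a proposal: a guide",
    "Proposal definition",
]
mixed_serp = [
    "What is proposal software?",
    "Best proposal software",
    "Proposify vs PandaDoc",
    "How to write a proposal",
]
print(classify_serp(guide_serp), classify_serp(mixed_serp))
```

The value is less in the exact phrases and more in the fact that two people running it on the same SERP get the same label.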

Then map your current page modules to the label. If you’re informational, are you leading with a product claim? If you’re commercial, are you burying comparison tables? The point is to mark “match” or “fight” per module. You’ll use this later when you write remediation specs.

Step 3: Score gaps with a weighted model

Create a 0–100 page score that blends urgency and impact. Example weights: urgency 30% using CTR delta vs the SERP average, revenue risk 30% using funnel contribution, content quality 25% using structural checks, and link equity 15% using internal/external link signals. The score is the sum of each weight multiplied by its normalized factor, scaled to 100.

Two reasons this helps. First, it breaks stalemates by putting numbers behind prioritization. Second, it lets you communicate trade-offs: why a page with fewer clicks might deserve attention because it sits next to a high-intent CTA. Keep the model simple enough that a new teammate can run it in an hour.
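Here is a minimal sketch of that weighted score using the example weights. How each factor gets normalized is up to you; I'm assuming every factor is scaled to 0–1 with higher meaning "more reason to act" (for content quality, that could be the share of structural checks failed). The function name and sample values are illustrative.

```python
# Example weights from the model above; they sum to 1.0.
WEIGHTS = {
    "urgency": 0.30,          # CTR delta vs the SERP average
    "revenue_risk": 0.30,     # funnel contribution
    "content_quality": 0.25,  # structural checks
    "link_equity": 0.15,      # internal/external link signals
}


def page_score(factors):
    """Weighted 0-100 score from factors already normalized to 0..1."""
    missing = WEIGHTS.keys() - factors.keys()
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= factors[name] <= 1.0:
            raise ValueError(f"{name} must be normalized to 0..1")
    return round(100 * sum(w * factors[name] for name, w in WEIGHTS.items()), 1)


# Made-up factors for one page.
example = {
    "urgency": 0.8,
    "revenue_risk": 0.5,
    "content_quality": 0.4,
    "link_equity": 0.2,
}
print(page_score(example))  # 52.0
```

Rejecting un-normalized inputs matters more than it looks: one raw click count slipped into a 0–1 model will swamp every other factor.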

Step 4: Choose the remediation pattern

Pick exactly one action per page. Merge when two URLs answer the same cluster and split signals. Rewrite when the page type is right but the modules are wrong. Add commercial sections when research pages need product context. Canonicalize or redirect true duplicates. Retire content when traffic and links are negligible and the topic is off.

If you’re unsure, follow the SERP. If page types are uniform, match them. If the SERP is mixed, either commit to one job or create two pages with clean internal links and a clear canonical. For duplicate consolidation, use Google’s guidance on Consolidate Duplicate URLs (Canonicalization) so signals flow where you want them.
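To force "exactly one action per page," it helps to write the rules as a single function that returns on the first match. The sketch below encodes the five patterns; the order of precedence, the flag names, and the "no_change" fallback are my assumptions, so reorder them to fit your own policy.

```python
def choose_remediation(
    *,
    true_duplicate: bool = False,
    negligible_traffic_and_links: bool = False,
    off_topic: bool = False,
    shares_cluster_with_another_url: bool = False,
    modules_match_intent: bool = True,
    research_page_needs_product_context: bool = False,
) -> str:
    """Return exactly one action; the first matching rule wins (assumed order)."""
    if true_duplicate:
        return "canonicalize_or_redirect"
    if negligible_traffic_and_links and off_topic:
        return "retire"
    if shares_cluster_with_another_url:
        return "merge"
    if not modules_match_intent:
        return "rewrite"
    if research_page_needs_product_context:
        return "add_commercial_sections"
    return "no_change"  # assumed fallback for pages that already fit


print(choose_remediation(shares_cluster_with_another_url=True))
print(choose_remediation(modules_match_intent=False))
```

Because the function returns early, a page that trips several rules still leaves with one action and one owner.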

Step 5: Draft the remediation spec per page

For each priority URL, write a one-page spec: target intent, SERP archetype, modules to add or remove, internal links to update, schema to include, and the success metric you’re watching. Include an example title and 3–5 H2s that mirror the SERP. Attach a simple “before vs after” internal link map. Assign a single owner.
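If you keep specs as structured records instead of documents, a small completeness check catches gaps before work starts. This is a sketch only; the class, field names, and checks are hypothetical and simply mirror the spec contents listed above.

```python
from dataclasses import dataclass, field


@dataclass
class RemediationSpec:
    """One-page spec for a priority URL (hypothetical schema)."""
    url: str
    target_intent: str
    serp_archetype: str
    example_title: str
    h2s: list[str]
    success_metric: str
    owner: str
    modules_to_add: list[str] = field(default_factory=list)
    modules_to_remove: list[str] = field(default_factory=list)
    internal_links_to_update: list[str] = field(default_factory=list)
    schema_types: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """List what is still missing before the spec can be handed off."""
        issues = []
        if not 3 <= len(self.h2s) <= 5:
            issues.append("expected 3-5 H2s")
        if not self.success_metric.strip():
            issues.append("name a success metric")
        if not self.owner.strip():
            issues.append("assign a single owner")
        return issues


# Made-up draft spec that is not ready yet.
draft = RemediationSpec(
    url="/blog/how-to-write-a-proposal",
    target_intent="informational",
    serp_archetype="guide",
    example_title="How to Write a Proposal (Step by Step)",
    h2s=["What a proposal includes", "Steps to write one"],
    success_metric="CTR on target queries",
    owner="",
)
print(draft.problems())
```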

This is the moment that prevents frustrating rework. When the spec is clear, designers, writers, and devs don’t reopen the same decision three times. We used this pattern on small teams because it cut meetings. Specs also become training data for future audits, so your rules get sharper over time.

Step 6: Plan the sprint and ship quick wins first

Split work into quick wins and deep rewrites. Quick wins are title adjustments, module reorders, schema updates, and internal link fixes that take under two hours each. Deep rewrites are multi-module rework and page-type changes that take over four hours. Stack rank by score, then by effort. Plan a two-week sprint with daily check-ins.

Publish in batches: enough to see signal, not so much that you can’t tell what moved. This isn’t about changing everything at once; it’s about creating clean tests. You’ll learn quickly which modules create lift for your audience in your space.
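The stack rank is a two-key sort: highest score first, lowest effort as the tiebreaker. Here is a sketch, assuming each item already has a score and an effort estimate in hours. The "needs_sizing" bucket for work between two and four hours is my own addition, since the rule above leaves that range undefined; the function name and sample items are illustrative.

```python
def plan_sprint(items):
    """Rank by score (desc), then effort (asc), and bucket by effort."""
    ranked = sorted(items, key=lambda i: (-i["score"], i["effort_hours"]))
    plan = {"quick_wins": [], "deep_rewrites": [], "needs_sizing": []}
    for item in ranked:
        if item["effort_hours"] < 2:
            plan["quick_wins"].append(item["url"])
        elif item["effort_hours"] > 4:
            plan["deep_rewrites"].append(item["url"])
        else:
            # Assumed bucket: 2-4 hours is neither a quick win nor a deep rewrite.
            plan["needs_sizing"].append(item["url"])
    return plan


# Made-up backlog for illustration.
backlog = [
    {"url": "/a", "score": 80, "effort_hours": 1},
    {"url": "/b", "score": 80, "effort_hours": 6},
    {"url": "/c", "score": 60, "effort_hours": 1.5},
    {"url": "/d", "score": 70, "effort_hours": 3},
]
plan = plan_sprint(backlog)
print(plan)
```

Each bucket keeps the ranked order, so the first quick win in the list is the first thing to ship.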

Step 7: Measure impact and close the loop

Set guardrails and outcomes. Guardrails: stable bounce and no loss in non-target queries. Outcomes: CTR lift on target queries, position stability, micro-CTA clicks, and MQLs where relevant. Run simple pre/post or holdout comparisons where you can. Re-scan SERPs monthly for top clusters and adjust specs when intent shifts.
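A simple pre/post comparison needs only the same metrics for two equal-length windows. The sketch below is one way to lay it out; the dictionary keys, the function names, and the two-point tolerance for "stable bounce" are assumptions, and a pre/post delta on its own doesn't prove the change caused the lift.

```python
def ctr(clicks, impressions):
    return clicks / impressions if impressions else 0.0


def pre_post_report(pre, post, max_bounce_increase=0.02):
    """Compare two equal-length windows for one URL's target queries."""
    lift = ctr(post["clicks"], post["impressions"]) - ctr(pre["clicks"], pre["impressions"])
    return {
        # Outcome: CTR change in percentage points.
        "ctr_lift_points": round(lift * 100, 2),
        # Outcome: change in micro-CTA clicks.
        "micro_cta_delta": post["micro_cta_clicks"] - pre["micro_cta_clicks"],
        # Guardrail: bounce may rise by at most the assumed tolerance.
        "bounce_guardrail_ok": post["bounce_rate"] - pre["bounce_rate"] <= max_bounce_increase,
    }


# Made-up windows for illustration.
pre = {"clicks": 800, "impressions": 40_000, "micro_cta_clicks": 12, "bounce_rate": 0.62}
post = {"clicks": 1_000, "impressions": 40_000, "micro_cta_clicks": 30, "bounce_rate": 0.63}
report = pre_post_report(pre, post)
print(report)
```

Where you can, run the same report on a holdout group of untouched pages so seasonality doesn't get credited to your rewrite.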

Then update your internal links as the SERP evolves. The goal isn’t to lock the site forever; it’s to keep page “jobs” aligned with what the market is asking right now. Small, regular maintenance beats big, sporadic overhauls.

If your team wants to stop debating and start shipping, you’ll want the system behind this audit to keep running after week one. That’s where automation helps. Want to see structured creation back up your audit? Try Using An Autonomous Content Engine For Always-On Publishing.

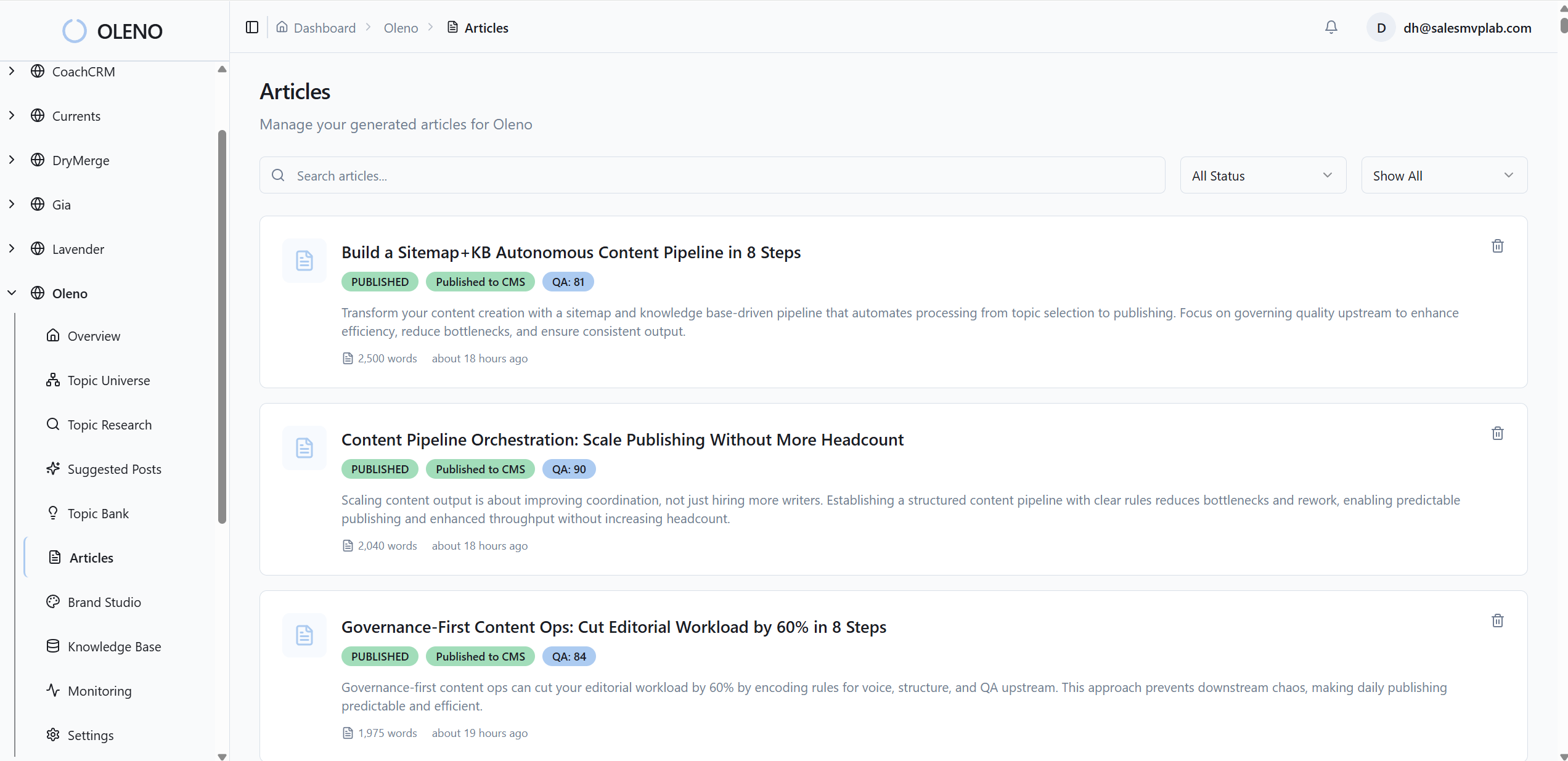

How Oleno Supports Intent Alignment Without Extra Headcount

Oleno reduces misalignment by deciding topics, locking angles that match intent, enforcing structure through a QA gate, and publishing directly to your CMS. It doesn’t track analytics or measure performance; it runs the creation system so your audit backlog actually ships. For example, Topic Discovery prevents duplicate coverage while QA blocks weak structure.

Structured topic discovery and angles that respect intent

Oleno determines what to write and then locks angles that align with search intent before a draft exists. That means fewer misfit pages get created in the first place. The system analyzes your sitemap, reads your knowledge base, and prevents duplicate or thin topics from entering the pipeline. You’re no longer guessing; you’re deciding with guardrails.

In practice, this changes the conversation. Instead of “we need another post on X,” it becomes “this cluster needs a comparison page and a supporting guide.” When the angle is set up front, draft generation moves faster and aligns to the SERP by design. That’s less rework, fewer meetings, and a cleaner site architecture.

Quality gate that enforces structure and voice

Every draft passes Oleno’s QA Gate before publishing. Checks include narrative structure, voice consistency, knowledge base grounding, SEO placement, LLM clarity, and filler detection. Articles below threshold are revised automatically; publishing is blocked until standards are met. The result isn’t perfection; it’s predictable quality.

This is how you avoid rewriting the same piece three times. The QA Gate enforces the layout you’ve decided in your audit specs. If your rule says informational pages can’t open with product proof, the system enforces it. Your brand voice shows up consistently. Your structure is clean enough for both readers and machines.

Direct CMS publishing that keeps the plan moving

Oleno publishes directly to WordPress, Webflow, Storyblok, HubSpot, Framer, and more, as draft or live. Publishing is idempotent, so re-running a job doesn’t create duplicate posts. No copy-paste. No formatting purgatory. This matters when you’ve mapped merges, redirects, and page-type changes. The faster you ship, the sooner you see signal.

Publishing cadence matches your daily post quota, so execution doesn’t stall on handoffs. You decide the sprint; Oleno runs it. Meanwhile, Social Studio can generate platform-specific social content drafts from your published articles (text only; no scheduling or analytics), so your team has reuse ready without adding overhead.

What Oleno does and does not replace

Oleno runs content creation as a system. It replaces manual editorial planning, prompt-based writing, draft review loops, and publishing handoffs. It does not replace strategy, brand decisions, or your analytics stack. You define the rules and targets. Oleno executes them consistently.

If the earlier math on rework hours and leaked conversions felt familiar, this is how you change it. Fewer misaligned drafts get created. Remediation ships faster. Structure and voice are enforced by code. Want to see how quickly it comes together in your environment? Try Oleno For Free.

Conclusion

Intent is a constraint, not a nice-to-have. Treat it that way and your “traffic” turns into pipeline you can recognize. Run the audit, assign one action per page, and ship in sprints. Then let a system keep you honest (angles locked, structure enforced, publishing on cadence) so your fixes stick when the SERP shifts again.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions