Legal & Compliance QA: Safe Pipeline for AI-Generated Marketing Content

AI lets you publish at warp speed. Legal compliance QA keeps that speed from turning into fines, takedowns, or redlines at 11 pm. If you bolt legal on at the end, AI just amplifies the risk. Treat legal compliance QA as rules baked into the system from brief to publish, not a late-stage opinion. That is the shift.

I learned this the hard way building content engines. You can crank volume for a while. Then the misses pile up. Claims wander. Disclosures get missed. Reviews drag. People get tired. When you encode governance and automate checks up front, velocity goes up while risk goes down. Sounds simple. It is not. But it is doable.

Key Takeaways:

- Encode legal compliance QA as deterministic rules applied from brief to publish

- Build a claims taxonomy with risk tiers and required substantiation per class

- Translate policy into a machine-enforceable lexicon plus semantic patterns

- Verify claims with retrieval-backed citations and block publish on unverifiable lines

- Route edge cases to the right owner with context, SLAs, and audit logs

- Track defect sources, rework costs, and time-to-publish so improvements stick

Why Legal Compliance QA Breaks at AI Speed

Legal compliance QA breaks at AI speed because teams treat it as a final manual gate instead of a system that runs the whole way through. Fast drafting multiplies output, but review capacity stays flat and judgment stays subjective. The mismatch creates rework, delays, and higher exposure on the exact assets you want live.

Treat compliance as a system, not a final gate

Compliance works when it is part of the pipeline, not a stop sign at the end. Define rules, constraints, and disclosures once, then apply them from brief to draft to publish. Writers and models should inherit the same standards automatically. Reviewers should focus on nuance, not basic policy policing.

When you wire rules into the process, violations shift upstream and shrink. Writers stop guessing. Editors stop firefighting. Legal stops rewriting. You get fewer escalations and faster cycles because the system blocks noncompliant output before humans even see it. That is a big unlock.

Claims and lexicon you can actually enforce

Teams get vague about claims, which is where risk hides. Map claim classes clearly: for example, product capabilities, performance promises, pricing, security, and lines specific to regulated verticals. For each, define what proof is required, what words are allowed, and which audiences or regions it applies to.

Pair that taxonomy with a policy lexicon and patterns. Maintain approved terms, prohibited phrases, required disclaimers, and sensitive entities per market. Use literal patterns for hard fails and semantic checks for paraphrased risks that slip past keywords. The goal is binary enforcement on the basics, not debates.
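Here is a minimal sketch of the literal half of that lexicon, assuming rules are stored as regular expressions scoped by market. The rule IDs, patterns, and markets are hypothetical examples, not a recommended policy.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class LexiconRule:
    rule_id: str
    pattern: str          # regular expression, matched case-insensitively
    kind: str             # "prohibited" or "required"
    markets: frozenset    # markets where the rule applies


# Hypothetical rules for illustration only.
RULES = [
    LexiconRule("no-guarantee", r"\bguaranteed?\b", "prohibited", frozenset({"US", "UK"})),
    LexiconRule("no-risk-free", r"\brisk[- ]free\b", "prohibited", frozenset({"US", "UK"})),
    LexiconRule("ad-disclosure", r"#ad\b", "required", frozenset({"US"})),
]


def check_lexicon(text: str, market: str) -> list[str]:
    """Return the IDs of the rules the text violates in the given market."""
    violations = []
    for rule in RULES:
        if market not in rule.markets:
            continue
        found = re.search(rule.pattern, text, flags=re.IGNORECASE) is not None
        # A prohibited phrase fails when present; a required one fails when absent.
        if rule.kind == "prohibited" and found:
            violations.append(rule.rule_id)
        elif rule.kind == "required" and not found:
            violations.append(rule.rule_id)
    return violations
```

The output is a list of rule IDs, so the result is binary per rule. Paraphrased risks still need the semantic layer.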

Redefining Legal Compliance QA as a System Problem

Legal compliance QA is a system execution problem, not an editing problem. Policies written in PDFs do not enforce themselves. Owners without accountability cannot move fast. Rules without versioning cannot prove what ran when. Treat the whole stack like code and the bottleneck changes shape.

Policies must be executable, not just documented

Policy prose needs to become rules the machine can run or a checklist a human can apply. Start by assigning each policy line to a claim type, a validation method, and an escalation path. If a rule cannot be executed, rewrite it until it can. That clarity kills a lot of back-and-forth.
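One way to test whether a policy line is executable is to store it as a record and check for gaps. This is a sketch under assumptions: the field names and the set of validation methods are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of ways a rule can be checked.
VALIDATION_METHODS = {"lexicon", "classifier", "retrieval", "human_checklist"}


@dataclass(frozen=True)
class PolicyRule:
    policy_text: str
    claim_type: Optional[str]
    validation_method: Optional[str]
    escalation_owner: Optional[str]


def executability_gaps(rule: PolicyRule) -> list[str]:
    """List the fields that keep a policy line from being executable."""
    gaps = []
    if not rule.claim_type:
        gaps.append("claim_type")
    if rule.validation_method not in VALIDATION_METHODS:
        gaps.append("validation_method")
    if not rule.escalation_owner:
        gaps.append("escalation_owner")
    return gaps
```

A line like "Avoid misleading statements" comes back with all three gaps, which is the signal to rewrite it.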

You will need templates for each content type. Ads have one set of checks, long-form has another, social has a third. Keep the rule sets scoped and versioned. When a reviewer grants an exception, capture it, then update the rule so the same issue is handled automatically next time.

Ownership, versioning, and audit like code

Pick single-threaded owners by domain. One for security claims. One for pricing. One for regulated categories. Owners maintain rules, approve exceptions, and tune the lexicon. Commit changes with notes and impact scopes. Keep an immutable audit log that shows which rule version ran on each asset at publish.
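A rough sketch of an audit log that records which rule version ran on each asset. It assumes an in-memory list and chains entries with SHA-256 hashes so edits are detectable. That makes it tamper-evident rather than truly immutable. A real log would also need timestamps and write-once storage. All names are hypothetical.

```python
import hashlib
import json


def _digest(entry: dict, prev_hash: str) -> str:
    """Hash an entry together with the hash of the entry before it."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, asset_id: str, rule_id: str, rule_version: str, outcome: str) -> dict:
        """Append one check result, linked to the previous entry by hash."""
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        entry = {
            "asset_id": asset_id,
            "rule_id": rule_id,
            "rule_version": rule_version,
            "outcome": outcome,
        }
        stored = dict(entry, prev_hash=prev_hash, hash=_digest(entry, prev_hash))
        self._entries.append(stored)
        return stored

    def verify(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev_hash = ""
        for stored in self._entries:
            entry = {k: v for k, v in stored.items() if k not in ("hash", "prev_hash")}
            if stored["prev_hash"] != prev_hash or stored["hash"] != _digest(entry, prev_hash):
                return False
            prev_hash = stored["hash"]
        return True
```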

Regulators and partners ask for evidence. A clean audit trail defuses a lot of risk. The structure also speeds change. When guidance shifts, one owner updates one rule set, then the pipeline enforces it within hours. Frameworks like the NIST AI Risk Management Framework align with this approach.

The Measurable Cost of Weak Legal Compliance QA

Weak legal compliance QA burns time, budget, and trust. Late violations trigger edits, re-approvals, and missed windows. Unsupported claims risk takedowns and settlements. The hidden cost is cycle time. The visible cost is cash. Quantify both and the business case funds itself.

Quantify rework, delays, and risk

Put numbers on it. Track hours per violation, number of assets delayed, and slip impact on launches. Add potential enforcement costs using public actions as reference points. The FTC’s Endorsement Guides make clear where disclosure misses create exposure.

Most teams are shocked when they add it up. A single late-cycle claim fix can cost 45 to 90 minutes across writer, editor, PM, and legal. Multiply by dozens of pieces per month. Then add revenue impact from missing campaign windows. Suddenly “just review it at the end” looks like the expensive option.
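To make the arithmetic concrete, here is a small calculation. The 45 to 90 minute range comes from the paragraph above. The figure of 36 late-cycle fixes per month is an assumption picked for illustration, so swap in your own count.

```python
def monthly_rework_hours(
    fixes_per_month: int, minutes_low: int = 45, minutes_high: int = 90
) -> tuple[float, float]:
    """Return the low and high estimate of rework hours per month."""
    return (fixes_per_month * minutes_low / 60, fixes_per_month * minutes_high / 60)


# Assumed volume: three dozen late-cycle fixes in a month.
low, high = monthly_rework_hours(fixes_per_month=36)
print(f"{low:.0f} to {high:.0f} hours per month")  # prints "27 to 54 hours per month"
```

That is most of a working week spent on fixes, before counting any missed campaign windows.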

Map defect sources, then prove it with evidence

Instrument your workflow to tag where violations originate. Are you missing ad disclaimers, inflating performance in blogs, or quoting stale pricing in case studies? For the top offenders, add an earlier control point with the right automated check. Fixing defect sources beats catching defects forever.
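A minimal sketch of that tagging, assuming each violation is logged with an asset type and a defect label. The sample records are made up.

```python
from collections import Counter

# Hypothetical violation records tagged at the point of detection.
violations = [
    {"asset_type": "ad", "defect": "missing_disclaimer"},
    {"asset_type": "ad", "defect": "missing_disclaimer"},
    {"asset_type": "blog", "defect": "inflated_performance"},
    {"asset_type": "ad", "defect": "missing_disclaimer"},
    {"asset_type": "blog", "defect": "inflated_performance"},
    {"asset_type": "case_study", "defect": "stale_pricing"},
]


def top_defect_sources(records: list[dict], n: int = 3) -> list[tuple]:
    """Count violations by (asset type, defect) and return the n most common."""
    counts = Counter((r["asset_type"], r["defect"]) for r in records)
    return counts.most_common(n)
```

The top entry tells you where the next upstream control point should go.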

Evidence wins arguments. If a rule creates noise, collect false positive examples, tune the pattern, and log the improvement. Publish monthly QA scores, violation trends, and time-to-publish. Transparency changes behavior. People focus when they see how misses create real cost.

When Legal Becomes the Bottleneck, Everyone Loses

When legal becomes the bottleneck, the whole pipeline slows to the speed of the riskiest asset. Writers start to fear the redline. Legal gets framed as a blocker. Managers juggle threads across tools. Stress spikes. Trust erodes. Missed dates pile up. Culture pays the bill.

The human reality inside the old workflow

You have seen the dance. Draft ships. Legal flags a vague claim. Writer rewrites. Product weighs in. Legal flags a missed disclosure in the new version. Everyone is frustrated, and the thread is split across three apps. Nobody is wrong, but the system is.

People adopt new systems faster when you name the pain. Acknowledge the mess. Show how better inputs, automated checks, and clear owners shrink the loops. Then measure the change so the team feels the win. Morale improves when review stops feeling like whack-a-mole.

Risk tiers and safe escalations

Not every asset needs the same scrutiny. Define risk tiers. Low-risk pieces run automated checks plus spot audits. High-risk assets route to domain experts with evidence pre-attached. Calibrate SLAs by tier so low-risk work does not get stuck behind a complex review.
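Tiering can start as a small lookup. In this sketch the claim classes treated as high risk and the SLA hours are assumptions; your own tiers will differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPolicy:
    review: str
    sla_hours: int


# Hypothetical tiers and SLAs.
TIERS = {
    "low": TierPolicy("automated checks plus spot audit", 4),
    "high": TierPolicy("domain expert review with evidence attached", 48),
}

# Assumed set of claim classes that always force the high tier.
HIGH_RISK_CLAIM_CLASSES = {"security", "pricing", "regulated"}


def assign_tier(claim_classes: set[str]) -> str:
    """An asset is high risk if it contains any high-risk claim class."""
    return "high" if claim_classes & HIGH_RISK_CLAIM_CLASSES else "low"
```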

Make escalations safe and specific. Send the violating text, the fired rule, proposed fixes, and links to approved wording. Avoid vague feedback. Clear, respectful loops reduce defensiveness and slash the back-and-forth. Guidance such as the CAP Code, which the ASA administers in the UK, can help shape tiering for UK markets.
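One way to keep escalations specific is to refuse to send one that lacks context. This is a sketch, with hypothetical field names that mirror the four items listed above.

```python
from dataclasses import dataclass, field


@dataclass
class Escalation:
    violating_text: str
    rule_id: str
    proposed_fixes: list[str] = field(default_factory=list)
    approved_wording_links: list[str] = field(default_factory=list)

    def missing_context(self) -> list[str]:
        """Name the fields a reviewer would have to go hunting for."""
        missing = []
        if not self.violating_text.strip():
            missing.append("violating_text")
        if not self.rule_id.strip():
            missing.append("rule_id")
        if not self.proposed_fixes:
            missing.append("proposed_fixes")
        if not self.approved_wording_links:
            missing.append("approved_wording_links")
        return missing
```

An escalation with an empty `missing_context()` list is ready to route. Anything else goes back to the sender first.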

A Safe Pipeline for AI Content with Embedded Legal Compliance QA

A safe AI content pipeline encodes governance up front, runs automated checks during creation, verifies claims against trusted sources, then routes edge cases to humans with full context. That mix cuts rework and raises publish velocity without raising risk. It is a system, not a tool.

Define the claim taxonomy first

Start with a claim map. List classes like capabilities, performance, pricing, security, endorsements, and regulated vertical statements. For each, define required substantiation, allowed phrasing, and regional or audience constraints. Store it in your governance layer so briefs, prompts, and drafts inherit the same rules.
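A claim map can live as plain data. The sketch below assumes two classes with made-up substantiation rules, phrasing, and regions, just to show the shape.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClaimClass:
    name: str
    required_substantiation: str
    allowed_phrasing: tuple[str, ...]
    regions: frozenset[str]


# Hypothetical entries for illustration only.
TAXONOMY = {
    c.name: c
    for c in [
        ClaimClass("performance", "benchmark report approved by product",
                   ("up to", "in internal tests"), frozenset({"US", "UK"})),
        ClaimClass("pricing", "current published price list",
                   ("starting at",), frozenset({"US"})),
    ]
}


def requirements_for(claim_class: str, region: str) -> ClaimClass:
    """Look up what a claim needs, or fail loudly if it is out of bounds."""
    entry = TAXONOMY.get(claim_class)
    if entry is None:
        raise KeyError(f"unknown claim class: {claim_class}")
    if region not in entry.regions:
        raise ValueError(f"{claim_class} claims are not approved for {region}")
    return entry
```

Briefs, prompts, and checks can all read from the same `TAXONOMY`, which is the point of defining it once.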

Lack of a shared taxonomy is the root of most legal review arguments. The writer thinks it is fine. Legal sees a risky implication. Product sees a roadmap promise. A clear taxonomy with examples turns arguments into decisions. The machine can enforce the basics. Humans handle the gray.

Automate checks with a hybrid engine

Hard fails need literal rules. Banned phrases, required disclaimers, sensitive entities by market. Use lexicons and patterns to catch those instantly. Contextual risks need semantics. Implied superiority, missing context, distorted benchmarks. Lightweight classifiers can flag those cases for review.

Run the engine on every draft and revision. Score output by risk. Fail on critical violations, flag medium risk items, and log everything. Over time, you will tune patterns, cut false positives, and shrink queues. Policy on rails feels a lot better than policy by surprise.
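Here is a rough sketch of the fail, flag, pass decision. The literal rule is a hypothetical example, and `naive_superiority_check` is a keyword stand-in for a trained classifier, not a real semantic model.

```python
import re
from typing import Callable

# Hypothetical hard-fail patterns.
LITERAL_RULES = {"no-guarantee": r"\bguaranteed?\b"}


def naive_superiority_check(text: str) -> float:
    """Stand-in for a classifier: returns a risk score between 0 and 1."""
    cues = ("best", "fastest", "better than", "#1")
    hits = sum(cue in text.lower() for cue in cues)
    return min(1.0, hits / 2)


def evaluate(
    text: str,
    semantic_check: Callable[[str], float] = naive_superiority_check,
    flag_threshold: float = 0.5,
) -> dict:
    """Fail on critical findings, flag medium ones, and return everything found."""
    findings = []
    for rule_id, pattern in LITERAL_RULES.items():
        if re.search(pattern, text, re.IGNORECASE):
            findings.append({"rule": rule_id, "severity": "critical"})
    score = semantic_check(text)
    if score >= flag_threshold:
        findings.append({"rule": "implied-superiority", "severity": "medium", "score": score})
    if any(f["severity"] == "critical" for f in findings):
        decision = "fail"
    elif findings:
        decision = "flag"
    else:
        decision = "pass"
    return {"decision": decision, "findings": findings}
```

Because `semantic_check` is passed in, you can swap the stand-in for a real model without touching the decision logic.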

RAG verification with human-in-the-loop

Verification removes a big chunk of ambiguity. Use retrieval to check product and pricing claims against a curated knowledge base of approved docs. Attach citations to each claim that checks out. When nothing can back a claim up, block publish and suggest compliant alternatives.
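A toy version of that check, assuming token overlap as the retrieval step. A production pipeline would more likely use embeddings and a vector index. The documents, plan names, and threshold are invented for illustration.

```python
import re

# Hypothetical approved knowledge base.
APPROVED_DOCS = {
    "pricing-sheet": "The Team plan costs 49 dollars per user per month.",
    "product-sso": "Single sign-on is available on the Enterprise plan.",
}


def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def verify_claim(claim: str, min_overlap: float = 0.6) -> dict:
    """Cite the best-matching approved doc, or block when nothing supports the claim."""
    claim_tokens = _tokens(claim)
    claim_numbers = set(re.findall(r"\d+", claim))
    best_id, best_score = None, 0.0
    for doc_id, doc in APPROVED_DOCS.items():
        # Every number in the claim must appear in the source, so a wrong
        # price cannot pass on word overlap alone.
        if not claim_numbers <= set(re.findall(r"\d+", doc)):
            continue
        score = len(claim_tokens & _tokens(doc)) / max(len(claim_tokens), 1)
        if score > best_score:
            best_id, best_score = doc_id, score
    if best_score >= min_overlap:
        return {"status": "verified", "citation": best_id}
    return {"status": "blocked", "citation": None}
```

The number check matters. Without it, a claim with the wrong price would overlap heavily with the pricing doc and pass.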

Route edge cases to domain owners with full context. Send the claim text, the triggered rule, retrieved sources, and suggested fixes. Track SLAs by tier. Close the loop by updating rules when humans grant exceptions. Then the same issue is auto-resolved next time. That is how queues shrink for good.
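Closing the loop can be as simple as writing each granted exception back into a versioned rule set. This is a sketch with hypothetical names, and it assumes exceptions are matched on exact phrases.

```python
from dataclasses import dataclass, field


@dataclass
class RuleSet:
    version: int = 1
    allowed_exceptions: dict[str, set[str]] = field(default_factory=dict)

    def grant_exception(self, rule_id: str, phrase: str) -> None:
        """Record a human-approved exception and bump the rule set version."""
        self.allowed_exceptions.setdefault(rule_id, set()).add(phrase.lower())
        self.version += 1

    def is_excepted(self, rule_id: str, phrase: str) -> bool:
        """True when this phrase was already approved for this rule."""
        return phrase.lower() in self.allowed_exceptions.get(rule_id, set())
```

Bumping `version` on every change is what lets the audit log show which rules were in force when an asset shipped.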

Curious whether this approach fits your stack without adding headcount? Request a Demo

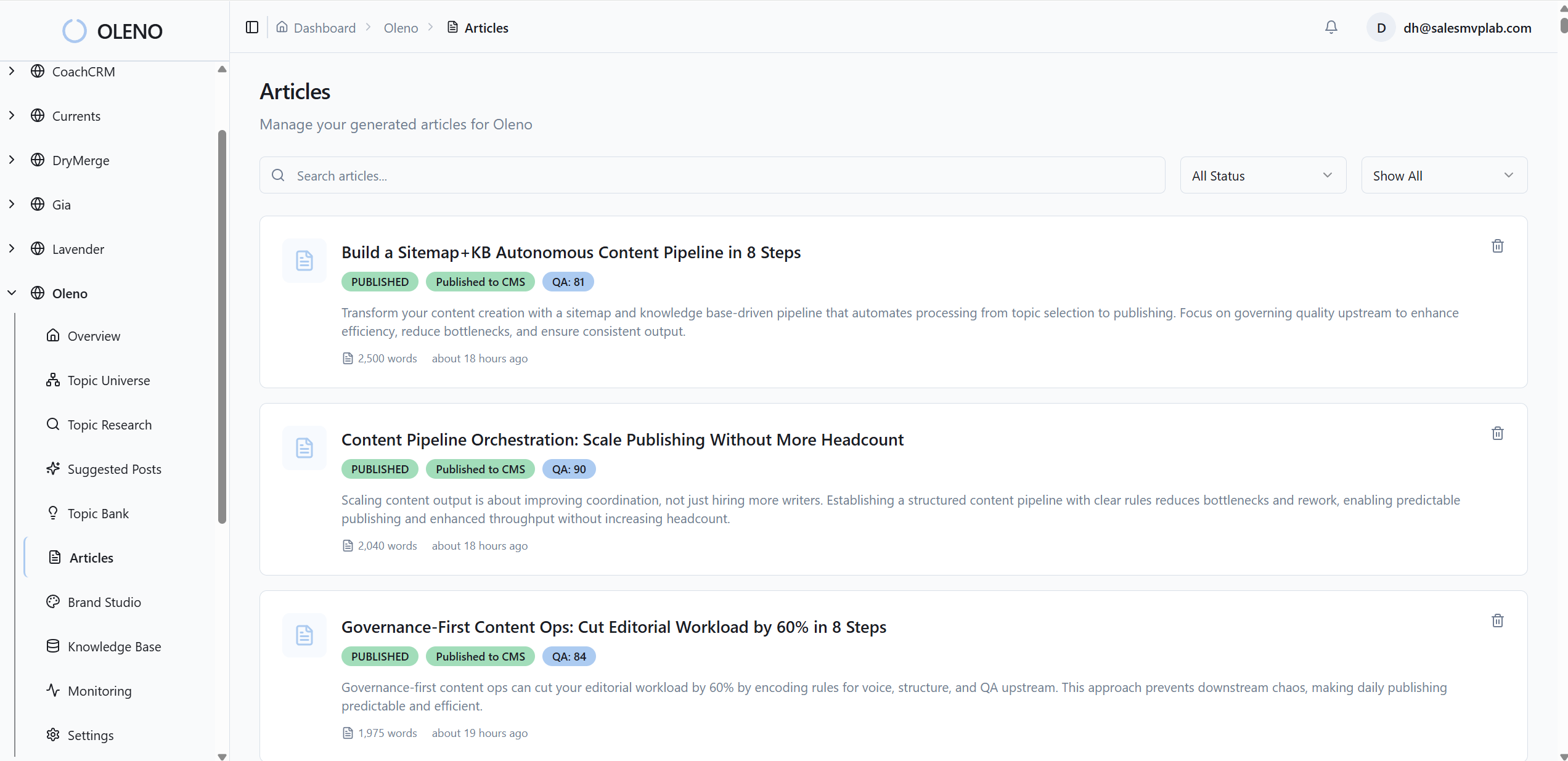

How Oleno Operationalizes Legal Compliance QA Without Slowing You Down

Oleno operationalizes legal compliance QA by encoding governance into studios, running hybrid checks on every draft, verifying claims with retrieval against your approved knowledge, and routing edge cases with SLAs and audit logs. The result is fewer late-cycle edits and faster, safer publishing.

Governance Studios that encode policy into execution

Oleno’s Brand, Marketing, and Product Studios translate your policies into rules the system can enforce. Define approved claims, boundaries, and required disclosures once, then apply them across every asset and channel. Drafts start inside the guardrails so reviewers focus on nuance, not basics.

You can scope rules by content type and region, maintain change notes, and tie rule versions to published assets. When guidance shifts, update one place and the pipeline adapts. That is how teams keep velocity up without playing telephone across tools.

Lexicon plus model checks with audit trails

Oleno runs a hybrid QA engine. Lexicons catch hard-fail phrases and mandatory disclosures. Model-based checks detect implied claims, comparative language, and risky context. Each decision includes a rationale, the rule version applied, and a direct link to the triggering text.

If your team measured 45 to 90 minutes per late-cycle fix, Oleno cuts that to minutes by preventing most issues upstream and bundling the rest with suggested fixes. The approach aligns with risk-minded practices found in resources like the NIST AI Risk Management Framework, adapted for marketing workflows.

60 to 80 percent less compliance-related review time within a quarter. That is what Oleno aims to deliver with encoded rules, automated checks, and clean escalations. Book a Demo

RAG-backed verification and SLA routing

Oleno verifies product and pricing claims against your governed knowledge base using retrieval. When a line checks out, the system attaches the exact sources that justify it. When it does not, the system blocks publish and suggests compliant alternatives. No more hunting for substantiation or waiting days for confirmation.

Not everything should be automated. Oleno routes flagged items to the right owner with context, proposed fixes, and SLAs. Approvals update the rule set so repeat issues are auto-resolved next time. Measured outcomes include fewer escalations per thousand words, shorter review queues, and an audit trail that satisfies partners and regulators.

Ready to replace the late-stage scramble with a governed pipeline that moves faster and risks less? Request a Demo

Conclusion

Treating legal review as a final manual step guarantees scaling problems. Encoding governance up front, automating checks, verifying claims with retrieval, and escalating with SLAs flips the script. Done well, the aim is to cut compliance-related review time and takedowns by roughly 60 to 80 percent while doubling or tripling safe publishing velocity.

If you want that outcome, focus on the system. Translate risk into a claims taxonomy. Make policies executable. Instrument your pipeline. Then let automation do the boring work so people can handle the hard calls. That is how you ship faster without raising risk.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions