Metadata-Driven Repurposing: Build an Automated CMS Pipeline

Most teams treat repurposing like copy, paste, tweak, hope. That’s why it feels slow, brittle, and risky the second volume goes up. Metadata-driven repurposing flips the work. You treat every piece like structured data, not a blob. You define the fields once, wire templates to those fields, and let your CMS and webhooks do the heavy lifting. Speed goes up. Drift goes down.

I’ve been the person jamming LinkedIn hooks into a Google Doc at 10 pm. It’s a grind. You’re guessing tone, guessing what matters for each channel, and begging reviewers to skim “one last time.” The fix isn’t more effort. It’s a system that puts intent, voice, and claims into metadata so the pipeline can format, render, and publish without heroics.

Key Takeaways:

- Treat content as data: define audience, intent, CTA, claims, sources, and risk flags as fields

- Map fields to channel templates so LinkedIn, X, email, and blog render from the same truth

- Add automated QA gates for voice, claim grounding, and provenance before anything ships

- Use webhooks and CMS APIs for one-click channel exports with idempotent retries

- Track SLIs like throughput, error rate, and approval time to see failure modes early

- Aim for 70% less manual repurposing time and 90% fewer production errors in 8 weeks

Metadata-Driven Repurposing Is the Only Way Out of Manual Chaos

Metadata-driven repurposing means you structure content so systems can render channel assets automatically. You capture audience, angle, takeaways, quotes, claims, and variants as fields, then bind them to templates. The result is speed without drift. For example, a blog’s H2 summary becomes a LinkedIn slide title with zero guesswork.

What “Metadata-Driven Repurposing” Means in Plain English

You’re turning one article into a record with labeled parts. Not just title and body, but the thinking behind it. Audience, problem, thesis, five key points, two approved quotes, three hooks, a CTA that fits the intent, plus any trust or legal flags. I know it sounds extra. It’s actually the only way to scale safely.

When those fields are explicit, templates can place them where they belong. Social needs a hook and a stat. Email needs a tight lead and a single CTA. Blog needs the argument and citations. The same source of truth feeds each shape, which is why tone stops wobbling and review time drops.

From Blob of Text to Structured Record

Unstructured text forces humans to remember the rules. That’s where teams fail. You can’t expect a copywriter to recall voice constraints, claim boundaries, and link style while racing a deadline. Put them in metadata. Then let your pipeline apply them every time, the same way.

If you want a reference point for naming fields, look at schema.org’s Article type. It’s not perfect for marketing, but it shows how a record can carry meaning. Build the marketing flavor you need on top of it. The point is the same: treat the article like a structured object.

Templates, Webhooks, and CMS APIs Working Together

Once you have fields, you can wire templates. A LinkedIn carousel template reads hook_options[0], slide_titles from H2 summaries, and stat_cards from the “stats” array. Your CMS stores the record, your serverless function transforms it, and a webhook hands it to scheduling or publishing.
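Here's a rough sketch of that binding step in Python. The field names `hook_options`, `slide_titles`, and the stats array come from the paragraph above; `h2_summaries`, `source_url`, the function name, and the sample values are assumptions made for illustration, not a real CMS or template API.

```python
from typing import Any


def render_linkedin_carousel(record: dict[str, Any]) -> dict[str, Any]:
    """Bind record fields to carousel slots. Field names are illustrative."""
    return {
        # The first hook option opens the post.
        "intro": record["hook_options"][0],
        # One slide per H2 summary, in article order.
        "slide_titles": list(record["h2_summaries"]),
        # Each stat becomes a card that keeps its source attached.
        "stat_cards": [
            {"text": stat["value"], "source_url": stat["source_url"]}
            for stat in record["stats"]
        ],
    }


# Placeholder record; in practice this would come from your CMS.
record = {
    "hook_options": ["Repurposing fails when rules live in heads.", "Stop copy-pasting."],
    "h2_summaries": ["Treat content as data", "Map fields to templates"],
    "stats": [{"value": "placeholder stat", "source_url": "https://example.com/source"}],
}
carousel = render_linkedin_carousel(record)
print(carousel["slide_titles"])
```

The template never touches the article body. It only reads named fields, which is what keeps the output the same every time.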

A content modeling primer like Contentful’s overview can help you think in components. The goal isn’t a fancy CMS. The goal is a pipeline that can produce consistent outputs without a human reformatting the same idea four different ways.

The Real Bottleneck Is Missing Schemas, Not Time or Headcount

Slow repurposing comes from undefined fields, not lazy people. If no one agrees on what a repurposable article contains, you can’t automate anything. Define the schema first, with clear ownership and constraints, and your CMS can ship channel assets reliably on a schedule.

Slow Repurposing Is a Symptom, Not the Cause

When a piece is “done,” what do you actually have? If the answer is a long Google Doc, you’re stuck. There’s no safe way to auto-generate a hook, quote card, or email intro if those elements don’t exist as fields with limits and rules.

You don’t need 80 fields either. Start lean. Start with audience, problem, thesis, five takeaways, two pull quotes, three hooks, one CTA, stats with sources, and any disclaimers. That’s enough to cover most channels at a high level. Add more only when you see repeat wins.
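That lean starting set could look something like this as a Python dataclass. It's a minimal sketch: the class names, attribute names, and the `problems` helper are hypothetical, and the counts simply mirror the list above.

```python
from dataclasses import dataclass, field


@dataclass
class Stat:
    value: str
    source_url: str  # every stat must carry its source


@dataclass
class ArticleRecord:
    audience: str
    problem: str
    thesis: str
    takeaways: list[str]
    pull_quotes: list[str]
    hooks: list[str]
    cta: str
    stats: list[Stat] = field(default_factory=list)
    disclaimers: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return human-readable schema violations; an empty list means valid."""
        issues = []
        for name, expected in (("takeaways", 5), ("pull_quotes", 2), ("hooks", 3)):
            actual = len(getattr(self, name))
            if actual != expected:
                issues.append(f"{name}: expected {expected}, got {actual}")
        for stat in self.stats:
            if not stat.source_url:
                issues.append(f"stat '{stat.value}' has no source_url")
        return issues


draft = ArticleRecord(
    audience="content ops leads",
    problem="manual repurposing is slow",
    thesis="treat content as data",
    takeaways=["a", "b", "c", "d"],  # one short on purpose
    pull_quotes=["q1", "q2"],
    hooks=["h1", "h2", "h3"],
    cta="Request a demo",
    stats=[Stat(value="placeholder stat", source_url="")],
)
print(draft.problems())
```

A record that returns an empty list is ready for templates. Anything else goes back to the writer with a specific reason.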

The Minimum Schema You Actually Need

Think in terms of the jobs each field enables. Hooks drive social. Takeaways drive slides. Quotes drive images. Stats with sources drive credibility. CTA variants align to funnel stage. When those are present and validated, you’re free to map them anywhere.

For discoverability and previews, follow basics like Google’s structured data guidelines and the Open Graph protocol. That’s not repurposing by itself, but it reinforces the habit of making meaning machine readable. Meaning you control.

What You Lose Without Metadata-Driven Repurposing

Manual repurposing wastes hours, adds risk, and kills momentum. You pay in hidden coordination cost, inconsistent tone, and slow approvals because nothing is labeled. Quantify that drag and the need for a schema-first approach becomes obvious to any exec with a calculator.

The Hidden Cost Model You’re Ignoring

Add it up. Ten minutes to write a LinkedIn hook. Fifteen more to rewrite it for X. Another twenty to rebuild the hero image. Then Slack pings for approvals that bounce around for an hour. Multiply by three channels and two posts a week. You just lost half a day, minimum.
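If you want to run the numbers yourself, here's the arithmetic as a quick Python sketch. It uses the minutes quoted above and shows two readings: one where the listed tasks already cover the channels for a single post, and one where every channel repeats the full cycle. The eight-hour workday and the variable names are assumptions.

```python
# Minutes per task, taken from the paragraph above.
linkedin_hook = 10
x_rewrite = 15
hero_image = 20
approvals = 60

per_post_minutes = linkedin_hook + x_rewrite + hero_image + approvals
posts_per_week = 2
channels = 3

# Reading 1: the tasks listed already span the channels for one post.
low_hours = per_post_minutes * posts_per_week / 60

# Reading 2: each channel repeats the full cycle.
high_hours = per_post_minutes * posts_per_week * channels / 60

print(per_post_minutes, low_hours, high_hours)  # 105 3.5 10.5
```

On the conservative reading that's 3.5 hours a week, roughly half of an eight-hour day. On the other it's more than a full day, which is why "half a day" is the floor.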

Industry surveys often show content creation time ballooning as volume rises. The exact number varies by team, but the pattern is clear in reports like the HubSpot State of Marketing. The point stands. Without structure, costs scale faster than output.

Quality Debt Shows Up as Rewrites and Retractions

When tone drifts or a claim isn’t grounded, trust erodes. That’s the real cost. A fix might look small, but the rework cycle is brutal. Rewrite, re-review, re-upload, and sometimes retract publicly. One avoidable mistake chips at credibility you spent years to earn.

Track error types by channel. Wrong tone. Broken link. Missing disclaimer. Misstated claim. Then ask a simple question. Which ones vanish if the claim, source URL, and risk flag live as required fields that a template can validate? Most of them.

Approvals Stall When Nothing Is Labeled

Approvers read the whole piece because they have no other option. Claims aren’t tagged, sources aren’t obvious, and risk flags don’t exist. So they re-litigate the entire argument every time. That’s slow, and it’s fragile.

Shift the load. Reviewers scan metadata first. If a high-risk claim is present, they check the source and line number. If the piece includes a legal disclaimer flag, they confirm the right version. The rest moves through on trust. You just turned approvals from novels into checklists.
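The checklist idea can be sketched in a few lines of Python. This assumes claims are stored with a risk level, a source URL, and a source line, and that the disclaimer carries a version. Every key name here (`claims`, `risk`, `claim_id`, `source_line`, `legal_disclaimer`) is a hypothetical choice, not a fixed standard.

```python
def review_checklist(record: dict) -> list[str]:
    """Turn record metadata into the short list a reviewer actually has to check."""
    items = []
    for claim in record.get("claims", []):
        # Only high-risk claims need a human to open the source.
        if claim.get("risk") == "high":
            items.append(
                f"Check claim {claim['claim_id']} against {claim['source_url']} "
                f"(line {claim['source_line']})"
            )
    disclaimer = record.get("legal_disclaimer")
    if disclaimer:
        items.append(f"Confirm disclaimer version {disclaimer['version']}")
    return items


record = {
    "claims": [
        {"claim_id": "c1", "risk": "low", "source_url": "https://example.com/a", "source_line": 4},
        {"claim_id": "c2", "risk": "high", "source_url": "https://example.com/b", "source_line": 12},
    ],
    "legal_disclaimer": {"version": "v2"},
}
checklist = review_checklist(record)
for item in checklist:
    print(item)
```

Two claims and a disclaimer produce two checklist lines. The low-risk claim moves through on trust.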

What It Feels Like When Repurposing Depends on People, Not Data

It feels like late nights, guesswork, and anxiety. You chase approvals in DMs, rewrite the same intro three times, and hope the tone lands on every channel. That’s not craftsmanship. That’s a missing system that forces people to carry rules in their heads.

The Friday Night Fire Drill You Know Too Well

Picture the ping. “Can we get this live by Monday on blog, LinkedIn, and newsletter?” The article exists, sort of. The hooks don’t. The quotes aren’t clipped. The CTA’s wrong for email. Now you’re scrambling, trying not to miss anything while the clock runs down.

I’ve been there. You start moving fast and the mistakes creep in. Wrong link. Off-brand phrasing. No citation. You don’t need heroics. You need the rules in metadata so the pipeline carries the weight, even when the plan changes late.

Why Momentum Dies Across Channels

Writers think in arguments, not output shapes. Forcing them to switch context across five formats wrecks flow. They lose the thread. Quality suffers. Morale dips. It’s a quiet tax you pay week after week.

Give them a schema with prompts. Three hook options. Two pull quotes. Section summaries. Stats with sources. They capture thinking once, then the pipeline renders each channel shape. Momentum returns because context switching goes away. Quality holds because the system enforces the rules.

Build a Metadata-Driven Repurposing Pipeline That Runs Itself

You make this real by designing a clean article model, mapping fields to channel templates, and defining a reliable JSON payload for your transforms and webhooks. Keep the model small, the mappings explicit, and the payload stable so downstream systems trust it.

Start With a Reusable Article Model

Design one schema that covers your core use cases. Keep it readable. Add constraints where mistakes happen often, like character limits for hooks and attribution rules for quotes. Assign field owners so someone is accountable for quality.

When you’re ready, add Channel Variant sets for LinkedIn, X, email, and YouTube. Those variants point to the same core fields, with a few channel-specific tweaks like max characters and link policy. That’s how you get reuse without losing context.

After you define it, you can summarize fields like this:

- Topic and persona, who you’re talking to and why it matters

- Problem, thesis, and five takeaways, the core argument

- Hooks, quotes, and stats with sources, what fuels assets

- CTA variants and tone controls, how you guide action

- Visual notes and risk flags, what protects trust
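The Channel Variant sets described above might be modeled like this. It's a minimal sketch: the `ChannelVariant` class, the field lists, the link policy labels, and the character limits are all placeholder assumptions, not platform rules. Set them from your own channel guidelines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelVariant:
    channel: str
    uses: tuple[str, ...]  # names of the core fields this channel reads
    max_chars: int         # placeholder limit, set per your own policy
    link_policy: str       # e.g. "inline" or "first_comment" (illustrative labels)


VARIANTS = {
    "linkedin": ChannelVariant("linkedin", ("hooks", "takeaways", "stats"), 3000, "first_comment"),
    "x": ChannelVariant("x", ("hooks", "stats"), 280, "inline"),
    "email": ChannelVariant("email", ("hooks", "thesis", "cta"), 600, "inline"),
}


def fields_for(record: dict, channel: str) -> dict:
    """Pull only the core fields a channel variant points at."""
    variant = VARIANTS[channel]
    return {name: record[name] for name in variant.uses}


core = {
    "hooks": ["h1", "h2", "h3"],
    "takeaways": ["t1", "t2", "t3", "t4", "t5"],
    "stats": [],
    "thesis": "treat content as data",
    "cta": "Request a demo",
}
x_fields = fields_for(core, "x")
print(sorted(x_fields))
```

Variants hold pointers and tweaks, never copies of the content. Change a hook in the core record and every channel picks it up.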

Map Long-Form Sections to Channel Templates

Document exactly how fields land in each channel. H2 summaries become slide titles. Pull quotes become image captions. Stats become cards. The hook_options field feeds social intros. The mapping lives with the template, not in someone’s head.

Then capture the rendering rules. Max characters. Emoji policy. Link style and placement. Capitalization choices. That guidance belongs in template metadata so transforms can enforce it consistently.

A simple mapping process looks like this:

- Identify the long-form source fields you’ll reuse most

- Define where each field appears inside each channel template

- Add validation rules for length, tone, and claim usage

- Test with three recent articles and adjust the mapping
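The mapping steps above can be sketched as two small tables and one validator. The slot names, the source field names, and the character limits are assumptions for illustration. Only the length rule is shown; tone and claim checks would plug in the same way.

```python
# Which source field feeds which template slot (names are illustrative).
MAPPING = {
    "linkedin_carousel": {
        "intro": "hook_options",
        "slide_titles": "h2_summaries",
        "image_captions": "pull_quotes",
        "cards": "stats",
    }
}

# Rendering rules live next to the template; limits here are placeholders.
RULES = {
    "linkedin_carousel": {
        "intro": {"max_chars": 200},
        "slide_titles": {"max_chars": 60},
    }
}


def validate(template: str, record: dict) -> list[str]:
    """Check that every slot has a source value and respects its length rule."""
    errors = []
    for slot, source_field in MAPPING[template].items():
        values = record.get(source_field)
        if not values:
            errors.append(f"{slot}: source field '{source_field}' is missing or empty")
            continue
        limit = RULES[template].get(slot, {}).get("max_chars")
        if limit is None:
            continue
        for value in values:
            if len(value) > limit:
                errors.append(f"{slot}: '{value[:20]}...' exceeds {limit} chars")
    return errors


record = {
    "hook_options": ["Short hook"],
    "h2_summaries": ["A fine title", "x" * 61],
    "pull_quotes": [],
    "stats": [{"value": "placeholder", "source_url": "https://example.com/source"}],
}
errors = validate("linkedin_carousel", record)
print(errors)
```

Running it against three recent articles, as the last step suggests, tells you quickly whether the limits are realistic or the mapping needs adjusting.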

Shape the JSON Payload for Reliability

Downstream systems need a stable contract. Pick a canonical payload shape and stick to it. Include ids, timestamps, language, content fields, channel variants, and render hints. Add claim_id arrays with source URLs and quote_attribution so QA can confirm provenance.
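One possible payload shape, sketched in Python. The top-level keys follow the list in the paragraph above; `schema_version`, the exact key spellings, and the `build_payload` helper are assumptions, so treat this as a starting contract to adapt rather than a standard.

```python
import json


def build_payload(record_id: str, updated_at: str, language: str,
                  content: dict, variants: dict, claims: list[dict]) -> str:
    """Serialize a record into one canonical, stable JSON string."""
    payload = {
        "schema_version": "1.0",  # hypothetical; bump it when the contract changes
        "id": record_id,
        "updated_at": updated_at,  # ISO 8601 timestamp supplied by the caller
        "language": language,
        "content": content,
        "channel_variants": variants,
        "render_hints": {"emoji": False},  # illustrative hint
        "claims": claims,
    }
    # Sorted keys and fixed separators keep the bytes identical across runs,
    # which matters once the payload is hashed or signed.
    return json.dumps(payload, sort_keys=True, separators=(",", ":"))


body = build_payload(
    record_id="article-123",
    updated_at="2024-01-01T00:00:00+00:00",
    language="en",
    content={"thesis": "treat content as data"},
    variants={"x": {"max_chars": 280}},
    claims=[{
        "claim_id": "c1",
        "source_url": "https://example.com/source",
        "quote_attribution": "Placeholder Person",
    }],
)
print(body)
```

Serializing the same inputs twice yields the same string, so downstream systems can compare or hash payloads safely.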

Plan for retries. Use idempotency keys and signatures so your webhooks can run safely without duplicates or tampering. If you want a refresher on structure, the JSON Schema primer is a solid base. For delivery patterns, read the W3C WebSub spec and common webhook security practices.
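Here's a minimal sketch of both ideas using only Python's standard library: an HMAC-SHA256 signature to detect tampering and an idempotency key so a retried delivery isn't processed twice. The key format, the `Receiver` class, and the in-memory set are assumptions. A real receiver would persist seen keys somewhere durable.

```python
import hashlib
import hmac


def idempotency_key(record_id: str, channel: str, body: bytes) -> str:
    """Same record, channel, and body always yield the same key."""
    digest = hashlib.sha256(body).hexdigest()
    return f"{record_id}:{channel}:{digest[:16]}"


def sign(secret: bytes, body: bytes) -> str:
    """HMAC-SHA256 signature of the raw payload bytes."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


class Receiver:
    """Toy webhook receiver: verifies the signature, then drops duplicates."""

    def __init__(self, secret: bytes) -> None:
        self.secret = secret
        self.seen: set[str] = set()

    def handle(self, key: str, body: bytes, signature: str) -> str:
        # compare_digest avoids leaking information through timing.
        if not hmac.compare_digest(sign(self.secret, body), signature):
            return "rejected"
        if key in self.seen:
            return "duplicate"
        self.seen.add(key)
        return "accepted"


secret = b"shared-secret"  # placeholder; load real secrets from config
body = b'{"id":"article-123"}'
key = idempotency_key("article-123", "linkedin", body)

receiver = Receiver(secret)
first = receiver.handle(key, body, sign(secret, body))
retry = receiver.handle(key, body, sign(secret, body))
forged = receiver.handle(key, b'{"id":"tampered"}', sign(secret, body))
print(first, retry, forged)  # accepted duplicate rejected
```

The sender can retry as often as it likes. The receiver publishes once and rejects anything whose bytes don't match the signature.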

Ready to cut manual repurposing by 70%? Oleno makes it simple. Request a Demo

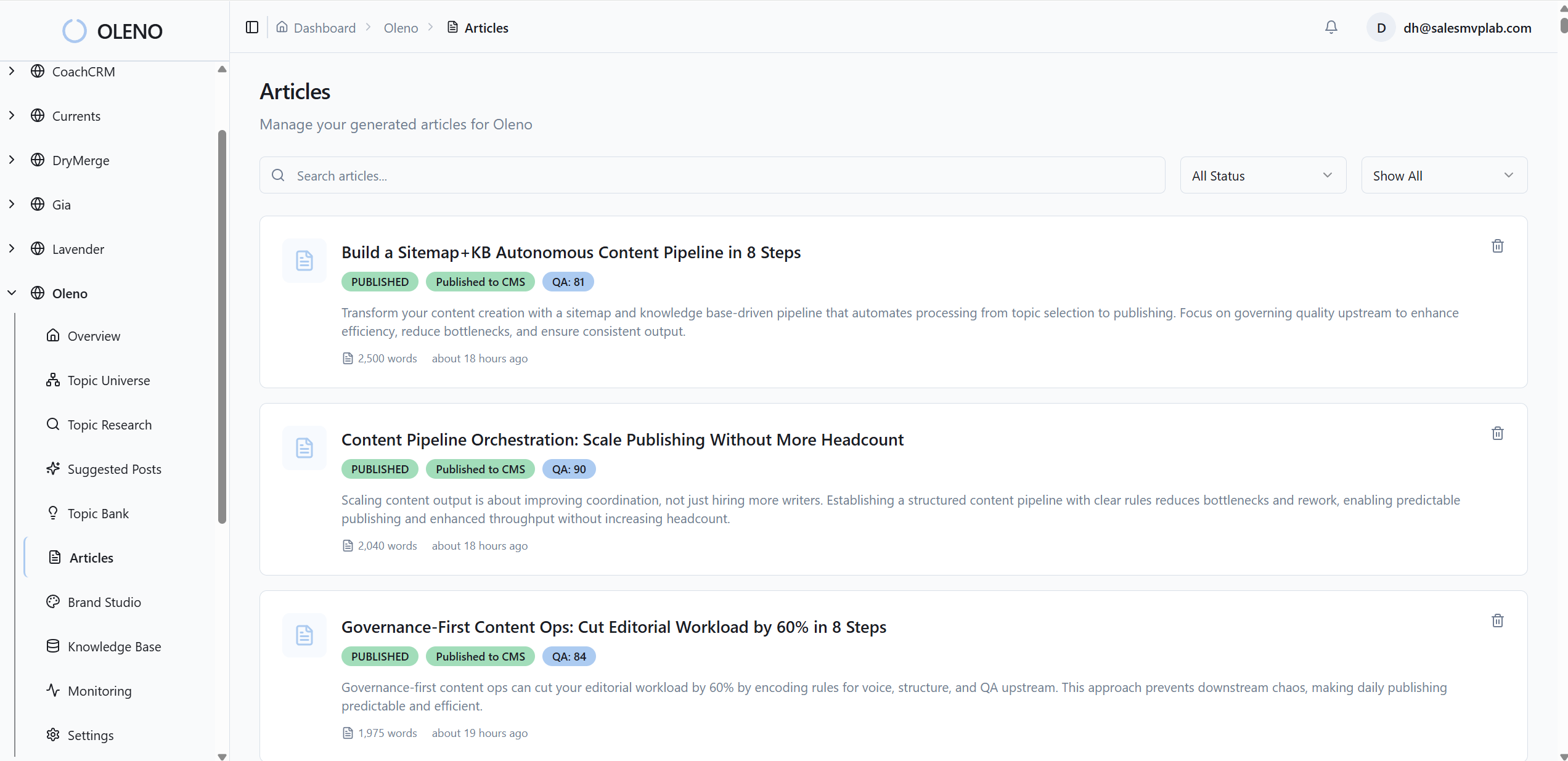

How Oleno Automates Metadata-Driven Repurposing End to End

Oleno turns your model into real outputs by enforcing governance at the source, running jobs that generate channel variants, and validating quality before anything ships. The system posts signed payloads to your CMS or queue, retries cleanly, and gives you dashboards that surface issues fast.

Governance Studios That Lock In Truth

Oleno’s Brand, Marketing, and Product Studios capture tone, POV, approved claims, risk boundaries, and structure rules as fields. Templates then pull those exact fields for each channel, which is why repurposed assets match voice and stay inside product truth.

You don’t rely on tribal memory. You rely on rules the system can read. That cuts rework because voice linting, claim grounding, and provenance checks happen before publish. The fragile handoffs you hate start to disappear.

You can see it in QA outcomes immediately:

- Voice linting catches off-brand phrasing with suggested fixes

- Claim grounding enforces source presence and correct attribution

- Provenance checks confirm quotes and stats link to the right evidence

- Field validation blocks missing hooks, CTAs, or disclaimers before publish

3x faster channel turns, fewer rewrites, and fewer mistakes. That’s what Oleno delivers. Request a Demo

Jobs, Webhooks, and Connectors That Ship It

Once your content record is approved, Oleno runs jobs that create channel variants, sign the JSON payload, and post to CMS APIs or scheduling tools through webhooks. Retries use idempotency keys, and status updates flow back into the record so ops can see exactly what happened.

If you work in WordPress, the REST API docs show the pattern Oleno plugs into. For monitoring and alerts, platforms like Datadog’s monitors are a helpful mental model for throughput, error rates, and SLA triggers. The point is reliability. You see problems early and fix them fast.
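To make the SLIs concrete, here's a small Python sketch that computes throughput, error rate, and approval time from a list of job records. The job shape and field names are hypothetical. This isn't Oleno's or Datadog's API, just the arithmetic behind the dashboard.

```python
from statistics import median


def summarize(jobs: list[dict], window_hours: float) -> dict:
    """Compute basic SLIs over the jobs seen in a time window."""
    total = len(jobs)
    failed = sum(1 for job in jobs if job["status"] == "failed")
    approvals = [
        job["approval_minutes"]
        for job in jobs
        if job.get("approval_minutes") is not None
    ]
    return {
        "throughput_per_hour": total / window_hours,
        "error_rate": failed / total if total else 0.0,
        "median_approval_minutes": median(approvals) if approvals else None,
    }


jobs = [
    {"status": "published", "approval_minutes": 10},
    {"status": "published", "approval_minutes": 20},
    {"status": "failed", "approval_minutes": None},
    {"status": "published", "approval_minutes": 30},
]
slis = summarize(jobs, window_hours=2)
print(slis)
```

Alert thresholds sit on top of numbers like these. An error rate that creeps up is your early warning, long before someone spots a broken post.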

Conclusion

Repurposing breaks when it depends on people, memory, and copy-paste. It starts working when you treat content as data with governance baked in. Define the schema, wire the templates, enforce QA at the source, and let the pipeline carry the weight.

If you do that, you can cut manual repurposing time by 70% and reduce production errors by 90% in roughly eight weeks. That’s not a wild claim. That’s what happens when the system owns formatting, distribution, and guardrails, and your team owns the thinking.

Want the governance studios, QA gates, and connectors handled for you? Oleno’s built for that. Book a Demo

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions