Orchestrated AI Content Pipelines: Publish-Ready Articles in 9 Steps

You can crank out a hundred AI drafts and still miss revenue. I learned that the hard way. Years ago, I ran a contributor network that hit 120k monthly visitors because we combined depth and breadth. Later, inside SaaS, I watched beautiful content fail to drive pipeline because it was disconnected from the product. Speed without a system creates more editing, more drift, and more risk.

The shift that finally clicked for me was simple. Treat content like an operational pipeline, not a series of tasks. When you wire strategy, research, draft, visuals, links, schema, QA, and publishing into one deterministic flow, the drafts start shipping as publish-ready articles. Not perfect. Just consistently good and citable.

Key Takeaways:

- Replace prompt-driven chaos with a measurable baseline and a 30-day audit

- Write policy-as-code for content quality and make QA the gate

- Centralize knowledge, voice, and sitemap rules to cut factual and formatting rework

- Map a topic universe with clustering, coverage labels, and cooldowns to stop cannibalization

- Use a nine-step pipeline with information gain scoring, retrieval scaffolds, and brand visuals

- Swap probabilistic steps with deterministic code for links, schema, and publishing

- Automate end to end so people tune rules and review exceptions, not rewrite drafts

Prompting Scales Words, Not Outcomes

Prompting creates words quickly, but it does not create a reliable pipeline that ships publish-ready articles. Each run starts from zero context, which leads to drift in voice, structure, and accuracy. You see it when a “final draft” needs an hour of fixes before it can go live.

Audit your prompt-driven workflow

Start by inventorying where your prompts live, who runs them, and what gets edited later. Build a simple ledger that captures inputs, model, output length, edit time, and whether each draft published or died in Docs. Baseline rework minutes and factual corrections per draft so you can quantify improvement. This mirrors what data teams do: pipeline quality drives results, as argued in Stanford HAI’s perspective on data-centric AI.
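Here is a minimal sketch of that ledger, assuming you log one row per draft. The field names (`edit_minutes`, `factual_corrections`, `published`) and the sample rows are hypothetical; swap in whatever your team already tracks.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class DraftRecord:
    """One row in the prompt-workflow ledger (hypothetical schema)."""
    prompt_id: str
    model: str
    output_words: int
    edit_minutes: float
    factual_corrections: int
    published: bool

def baseline(records: list[DraftRecord]) -> dict[str, float]:
    """Summarize rework so later runs can be compared against it."""
    if not records:
        raise ValueError("ledger is empty")
    return {
        "drafts": len(records),
        "avg_edit_minutes": mean(r.edit_minutes for r in records),
        "avg_factual_corrections": mean(r.factual_corrections for r in records),
        "publish_rate": sum(r.published for r in records) / len(records),
    }

# Placeholder rows, not real measurements.
ledger = [
    DraftRecord("intro-v1", "model-a", 1800, 70, 3, True),
    DraftRecord("intro-v1", "model-a", 1650, 95, 5, False),
    DraftRecord("howto-v2", "model-b", 2100, 60, 1, True),
]
print(baseline(ledger))
```

Rerun the same summary after each change to the pipeline and compare it against this first snapshot.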

Link your observations to structure, not taste. The common pattern is clear: more prompts do not equal more authority. A system does. If you need a primer on why speed stalls without a pipeline, read about orchestrated workflows and the real AI writing limits.

Trace drift sources and quantify rework

Tag issues by type: brand voice, section order, factual grounding, links, visuals, schema, and CMS formatting. Do not fix anything yet. Measure first. You want to see where edits concentrate so you can replace the variable steps with rules. That is how you move from “helpful drafts” to publish-ready outputs.
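To see where edits concentrate, a simple tally over tagged issues is enough. This sketch assumes each edit is logged as a `(draft_id, issue_type)` pair using the categories above; the tag strings and sample edits are illustrative.

```python
from collections import Counter

ISSUE_TYPES = {
    "brand_voice", "section_order", "factual_grounding",
    "links", "visuals", "schema", "cms_formatting",
}

def rework_hotspots(edits: list[tuple[str, str]]) -> list[tuple[str, float]]:
    """Rank issue types by their share of all logged edits."""
    unknown = {tag for _, tag in edits if tag not in ISSUE_TYPES}
    if unknown:
        raise ValueError(f"unknown issue types: {sorted(unknown)}")
    counts = Counter(tag for _, tag in edits)
    total = sum(counts.values())
    return [(tag, n / total) for tag, n in counts.most_common()]

edits = [
    ("post-1", "links"), ("post-1", "cms_formatting"), ("post-2", "links"),
    ("post-2", "section_order"), ("post-3", "links"), ("post-3", "cms_formatting"),
]
for tag, share in rework_hotspots(edits):
    print(f"{tag:16} {share:.0%}")
```

Rejecting unknown tags keeps the taxonomy fixed, so month-over-month comparisons stay meaningful.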

Curious what the time drain really looks like? Operational teams often find that rework clusters around formatting, links, and structure because the pipeline is undefined. Baseline this now, then watch those clusters shrink once you introduce guardrails.


Establish a 30-day baseline

Pick 20 to 30 articles and run your as-is process without heroics. Keep prompts static. Use the same people. Capture edit time, number of passes, and error classes. Do it for a full month so patterns emerge. Boring and consistent beats ad hoc wins.

Treat Content Like a System, Not a Series of Tasks

A reliable content operation looks like a pipeline with state, not a checklist of separate tools. Each stage has inputs, outputs, checks, and retries that are visible and repeatable. Think workflow orchestration patterns, applied to writing and publishing, not just data jobs, as seen in ACM’s work on orchestration design.

Define non-negotiables and pass or fail criteria

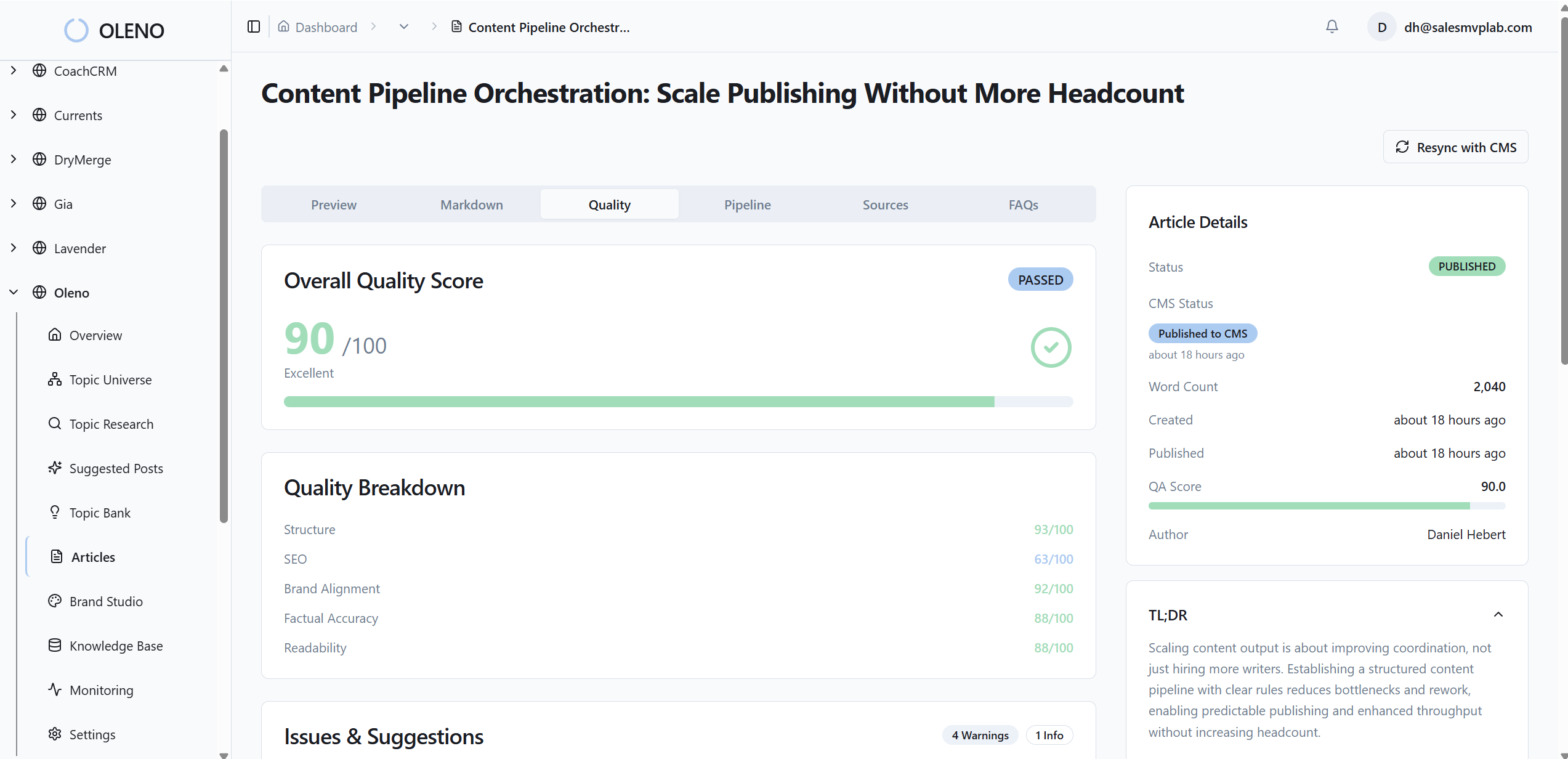

Policies work when they live in configuration, not slide decks. Set thresholds like a minimum QA score of 85, zero fabricated links, snippet-ready openers on every H2, valid JSON-LD attached, 5–8 internal links from verified sitemap pages, and 2–3 on-brand visuals. Store rules in a config that your pipeline reads programmatically.
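One way to keep those thresholds in configuration the pipeline reads is sketched below. The JSON keys, the `ArticleStats` fields, and the `check_policy` helper are hypothetical; only the numbers come from the list above.

```python
import json
from dataclasses import dataclass

POLICY_JSON = """
{
  "min_qa_score": 85,
  "max_fabricated_links": 0,
  "internal_links": [5, 8],
  "visuals": [2, 3],
  "require_snippet_openers": true,
  "require_valid_jsonld": true
}
"""

@dataclass
class ArticleStats:
    """Measurements produced by earlier pipeline stages (hypothetical)."""
    qa_score: int
    fabricated_links: int
    internal_links: int
    visuals: int
    all_h2_have_openers: bool
    jsonld_valid: bool

def check_policy(stats: ArticleStats, policy: dict) -> list[str]:
    """Return the violated rules; an empty list means the article passes."""
    failures = []
    if stats.qa_score < policy["min_qa_score"]:
        failures.append("qa_score below minimum")
    if stats.fabricated_links > policy["max_fabricated_links"]:
        failures.append("fabricated links present")
    lo, hi = policy["internal_links"]
    if not lo <= stats.internal_links <= hi:
        failures.append(f"internal links not in {lo}-{hi}")
    lo, hi = policy["visuals"]
    if not lo <= stats.visuals <= hi:
        failures.append(f"visuals not in {lo}-{hi}")
    if policy["require_snippet_openers"] and not stats.all_h2_have_openers:
        failures.append("missing snippet-ready opener")
    if policy["require_valid_jsonld"] and not stats.jsonld_valid:
        failures.append("JSON-LD missing or invalid")
    return failures

policy = json.loads(POLICY_JSON)
print(check_policy(ArticleStats(88, 0, 6, 2, True, True), policy))  # -> []
print(check_policy(ArticleStats(80, 1, 4, 2, True, False), policy))
```

Because the thresholds live in data, changing a rule is a config edit and a review, not a code change.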

Make failure behavior explicit. Example: if qa_score < 85, refine the draft, re-score, and after three retries, escalate. People approve rules, not drafts. This removes “frustrating rework” and late-night fixes because the gate enforces the standard. You can go deeper on why system-first beats tool-first here: autonomous systems and autonomous content operations.
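That failure behavior reads naturally as a bounded loop. In this sketch, `score` and `refine` are hypothetical stand-ins for your QA scorer and refinement step; only the threshold (85) and the three-retry limit come from the rule above.

```python
from typing import Callable

MIN_SCORE = 85
MAX_RETRIES = 3

class EscalateToHuman(Exception):
    """Raised when a draft cannot pass the gate within the retry budget."""

def qa_gate(draft: str,
            score: Callable[[str], int],
            refine: Callable[[str, int], str]) -> str:
    """Refine and re-score until the draft passes or the retries run out."""
    current = score(draft)
    retries = 0
    while current < MIN_SCORE:
        if retries == MAX_RETRIES:
            raise EscalateToHuman(f"score {current} after {retries} retries")
        draft = refine(draft, current)  # refinement sees the failing score
        current = score(draft)
        retries += 1
    return draft

scores = iter([70, 80, 90])  # fake scorer output for the example
result = qa_gate("draft", lambda d: next(scores), lambda d, s: d + " (refined)")
print(result)  # -> draft (refined) (refined)
```

A person only sees the draft when `EscalateToHuman` fires, which is the "people approve rules, not drafts" split in practice.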

What an orchestrated content pipeline looks like

The pipeline runs a deterministic sequence with state: strategy, brief, draft, visuals, links, schema, QA, publish. It is opinionated on structure, for good reason. Open every H2 with a snippet-ready paragraph. Use section-level retrieval. Enforce voice constraints. Inject internal links deterministically. Validate schema. Publish idempotently.
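A minimal sketch of "a deterministic sequence with state": stages run in a fixed order, each records its output in a shared state dict, and a rerun skips stages that already completed. The stage handlers here are placeholders, not a real implementation of any step.

```python
from typing import Callable

STAGES = ["strategy", "brief", "draft", "visuals", "links", "schema", "qa", "publish"]

def run_pipeline(state: dict, handlers: dict[str, Callable[[dict], object]]) -> dict:
    """Run each stage once, in order, recording results in `state`.

    Completed stages are skipped on rerun, so a crash mid-pipeline can
    resume without redoing (or re-publishing) earlier work.
    """
    done = state.setdefault("completed", [])
    for stage in STAGES:
        if stage in done:
            continue
        state[stage] = handlers[stage](state)
        done.append(stage)
    return state

calls = []

def make_handler(name: str) -> Callable[[dict], object]:
    def handler(state: dict) -> str:
        calls.append(name)          # record execution order for the example
        return f"{name}-output"
    return handler

handlers = {name: make_handler(name) for name in STAGES}
state = run_pipeline({}, handlers)
run_pipeline(state, handlers)       # second run is a no-op
print(calls == STAGES)  # -> True
```

In a real system the state would be persisted between runs; an in-memory dict just keeps the sketch self-contained.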

The people role shifts. They tune rules, review exceptions, and decide what to write next. They do not glue steps together manually, and they do not babysit drafts.

Governance and failure behavior

Define owners for the rules. Decide how new rules are proposed, tested, and rolled out. Keep a small, living set of guardrails. Most teams only need a dozen rules to eliminate 80 percent of the cleanup. Small, clear, enforced. That is governance that helps, not bureaucracy that slows.

The Hidden Costs Quietly Draining Your Content Budget

The biggest cost is not the draft. It is the swirl after the draft that no one tracks carefully. When knowledge is scattered and voice rules live in brains, you pay in edits, delays, and do-overs. Centralizing knowledge, tone, and sitemap truth reduces that drag quickly.

Initialize strategy and ingest the knowledge base

Pull product docs, site copy, playbooks, and approved facts into one place. Chunk, embed, and tag them by entity, such as feature, claim, and source. Attach brand voice constraints and banned terms. Keep do-and-don’t phrases, tone sliders, and rhythm rules in code so the model does not forget them. Validate your sitemap and pick canonical sources per topic.
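Chunking and tagging can start simple. This sketch splits each document into overlapping word windows and tags every chunk with the entities it mentions. The window sizes, the entity dictionary, and the "Smart Scheduler" product copy are hypothetical, and the embedding step is left out because it depends on your model.

```python
import re
from dataclasses import dataclass, field

@dataclass
class Chunk:
    source: str
    text: str
    entities: set[str] = field(default_factory=set)

def chunk_document(source: str, text: str, size: int = 120, overlap: int = 20) -> list[Chunk]:
    """Split text into overlapping windows of `size` words (sizes are assumptions)."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [Chunk(source, " ".join(words[i:i + size]))
            for i in range(0, max(len(words) - overlap, 1), step)]

def tag_entities(chunk: Chunk, entity_terms: dict[str, list[str]]) -> Chunk:
    """Tag a chunk with every entity whose terms appear as whole words."""
    lowered = chunk.text.lower()
    for entity, terms in entity_terms.items():
        if any(re.search(rf"\b{re.escape(t.lower())}\b", lowered) for t in terms):
            chunk.entities.add(entity)
    return chunk

doc = "The Smart Scheduler books demos automatically. " * 30  # placeholder copy
chunks = [tag_entities(c, {"feature:scheduler": ["smart scheduler"]})
          for c in chunk_document("docs/scheduler.md", doc)]
print(len(chunks), chunks[0].entities)
```

Keeping the source path on every chunk is what later lets a claim point back to its canonical page.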

This cuts factual corrections because claims are grounded in centralized knowledge during drafting. It also resolves link confusion because the canonical URL is declared up front. If you want to see where the overhead hides, here is a breakdown of the messy steps that creep in without a system: content operations breakdown.

Quantify cleanup and drift with simple math

Let’s pretend you spend 75 minutes editing per draft, split into 25 on facts, 20 on structure, 15 on voice, and 15 on publishing fixes. That is 1.25 hours of preventable work. At 20 posts per month, you burn 25 hours. If your blended cost is 80 dollars per hour, that is 2,000 dollars monthly on avoidable edits. Operational waste scales with volume. Data teams see this pattern often, which is why efficiency baselines matter, as discussed in industry summaries on pipeline efficiency.
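The same arithmetic as a small function, so you can plug in your own baseline numbers. The category names mirror the example above; the inputs are the hypothetical figures from that paragraph.

```python
def monthly_rework_cost(minutes_by_category: dict[str, float],
                        posts_per_month: int,
                        hourly_cost: float) -> tuple[float, float]:
    """Return (hours, dollars) of avoidable editing per month."""
    hours_per_post = sum(minutes_by_category.values()) / 60
    hours = hours_per_post * posts_per_month
    return hours, hours * hourly_cost

hours, dollars = monthly_rework_cost(
    {"facts": 25, "structure": 20, "voice": 15, "publishing": 15},
    posts_per_month=20,
    hourly_cost=80,
)
print(f"{hours:.0f} hours, ${dollars:,.0f} per month")  # -> 25 hours, $2,000 per month
```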

You Don’t Have Time to Babysit Drafts Forever

You need a system that proposes topics intentionally, spreads coverage across clusters, and prevents cannibalization. The goal is fewer last-minute edits and fewer “why did we write this” moments. You want predictable inputs and clean, publish-ready outputs.

Map a topic universe with clustering and cooldowns

Discover topics from KB entities, sitemap paths, and focus areas. Cluster by semantic similarity, then label coverage levels like underserved, healthy, or saturated. Enforce cooldowns, for example 90 days, so you do not stomp on a topic again too soon. This reduces duplicate angles and aligns production with authority building. Research on data clustering methods shows why structure before action matters; see the methodology in Nature’s dataset clustering work.
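A rough sketch of the three mechanics in that paragraph. It assumes you already have an embedding vector per topic from any model, groups topics greedily by cosine similarity (a simple stand-in for whatever clustering method you prefer), labels coverage by published count, and enforces the 90-day cooldown. The similarity threshold, coverage cutoffs, and sample vectors are illustrative assumptions.

```python
import math
from datetime import date, timedelta
from typing import Optional

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; assumes non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def greedy_clusters(topics: dict[str, list[float]], threshold: float = 0.8) -> list[list[str]]:
    """Put each topic in the first cluster whose seed topic is similar enough."""
    clusters: list[list[str]] = []
    for name, vec in topics.items():
        for cluster in clusters:
            if cosine(vec, topics[cluster[0]]) >= threshold:
                cluster.append(name)
                break
        else:  # no similar cluster found: this topic seeds a new one
            clusters.append([name])
    return clusters

def coverage_label(published_in_cluster: int) -> str:
    """Illustrative cutoffs; tune them to your own site."""
    if published_in_cluster < 3:
        return "underserved"
    if published_in_cluster <= 8:
        return "healthy"
    return "saturated"

def off_cooldown(last_published: Optional[date], today: date, days: int = 90) -> bool:
    """True if the topic was never covered or its cooldown has elapsed."""
    return last_published is None or today - last_published >= timedelta(days=days)

topics = {
    "crm data hygiene": [0.9, 0.1, 0.0],
    "cleaning crm records": [0.85, 0.15, 0.05],
    "sales call scripts": [0.1, 0.9, 0.2],
}
print(greedy_clusters(topics))
# -> [['crm data hygiene', 'cleaning crm records'], ['sales call scripts']]
print(off_cooldown(date(2024, 1, 1), date(2024, 3, 1)))  # -> False (60 days)
```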

Approve a daily queue and kill random acts

Overproduce suggestions, under-approve work. Select a small queue daily and let the pipeline run. That kills randomness. Quick story. When I was the only marketer at a SaaS company, I could write three to four posts a week because I followed a framework. As the team grew, context drifted and quality sagged. A small, governed queue would have protected both speed and credibility.
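"Overproduce suggestions, under-approve work" can be encoded as a small selection rule. This sketch assumes each suggestion already carries a cluster, a coverage label, and a cooldown flag; it skips saturated or cooling topics, takes at most one topic per cluster, prefers underserved clusters, and caps the queue. The field names, sample ideas, and default cap of three are assumptions.

```python
from dataclasses import dataclass

PRIORITY = {"underserved": 0, "healthy": 1, "saturated": 2}

@dataclass
class Suggestion:
    title: str
    cluster: str
    coverage: str        # "underserved" | "healthy" | "saturated"
    off_cooldown: bool

def daily_queue(suggestions: list[Suggestion], cap: int = 3) -> list[Suggestion]:
    """Pick at most `cap` topics, one per cluster, underserved clusters first."""
    eligible = [s for s in suggestions if s.off_cooldown and s.coverage != "saturated"]
    eligible.sort(key=lambda s: PRIORITY[s.coverage])  # stable: keeps input order within a tier
    queue, used_clusters = [], set()
    for s in eligible:
        if s.cluster in used_clusters:
            continue
        queue.append(s)
        used_clusters.add(s.cluster)
        if len(queue) == cap:
            break
    return queue

ideas = [
    Suggestion("CRM hygiene checklist", "crm", "healthy", True),
    Suggestion("Call script teardown", "calls", "underserved", True),
    Suggestion("Second call script post", "calls", "underserved", True),
    Suggestion("Pipeline review cadence", "pipeline", "underserved", False),
    Suggestion("Yet another pricing listicle", "pricing", "saturated", True),
]
print([s.title for s in daily_queue(ideas)])
# -> ['Call script teardown', 'CRM hygiene checklist']
```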

Instead of ad hoc ideation, connect your discovery to both search and assistant surfaces. Here is how to align clusters to both worlds without extra meetings: dual discovery.

If you are tired of playing air-traffic controller for drafts, try using an autonomous content engine for always-on publishing.

A Deterministic 9-Step Pipeline That Actually Ships

A dependable pipeline follows nine steps that move from strategy to publish without manual handoffs. The trick is to keep creativity in the prose while making everything correctness-sensitive deterministic. That balance unlocks speed without chaos.

Generate briefs and score information gain

Analyze top-ranking content to spot common coverage, shallow sections, and missing angles. Assign an information gain score from zero to one hundred. If it is under seventy, re-angle or add unique data. Your brief should include thesis, audience, outline, claims grounded in the KB, external link candidates, schema intent, visuals plan, and internal link targets.
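The article does not say how the score is computed, so here is one hypothetical proxy: the share of your planned subtopics that top-ranking pages do not already cover, scaled to 0 to 100, gated at 70 as in the text. The formula, the sample subtopics, and the `Brief` field types are illustrative, and subtopic extraction is assumed to happen upstream.

```python
from dataclasses import dataclass, field

GAIN_THRESHOLD = 70

def information_gain(planned: set[str], competitor_pages: list[set[str]]) -> int:
    """Hypothetical proxy: percent of planned subtopics no competitor page covers."""
    if not planned:
        return 0
    covered = set().union(*competitor_pages)
    novel = planned - covered
    return round(100 * len(novel) / len(planned))

@dataclass
class Brief:
    thesis: str
    audience: str
    outline: list[str]
    kb_claims: list[str]
    external_links: list[str] = field(default_factory=list)
    schema_intent: str = "Article"
    visuals_plan: list[str] = field(default_factory=list)
    internal_link_targets: list[str] = field(default_factory=list)

planned = {"baseline audit", "policy as code", "retry budget", "cooldowns", "idempotent publish"}
competitors = [{"baseline audit", "prompt tips"}, {"policy as code", "tool roundup"}]
score = information_gain(planned, competitors)
print(score, "re-angle" if score < GAIN_THRESHOLD else "proceed")  # -> 60 re-angle
```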

This is where you avoid repeating what already exists. The uniqueness score is a forcing function, not a vanity metric. It rewards depth and fresh angles.

Build retrieval scaffolds and generate grounded drafts

Use section-level retrieval with KB context for each H2 or H3. Constrain the draft with voice rules, banned terms, and a structure that starts every section with a snippet-ready opener. Retrieval-augmented generation keeps claims grounded, which reduces factual cleanup, as discussed in research such as arXiv’s overview of retrieval and grounding.
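A minimal retrieval scaffold, sketched with plain keyword overlap instead of embeddings so it runs without a model; in practice you would likely swap in vector search. It ranks KB chunks per section heading, keeps the top few, and assembles a section prompt that carries the voice rules and banned terms. Every name and the prompt wording are hypothetical.

```python
import re

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(heading: str, kb: list[str], k: int = 2) -> list[str]:
    """Rank KB chunks by word overlap with the heading (stand-in for vector search)."""
    query = tokens(heading)
    scored = [(len(query & tokens(chunk)), chunk) for chunk in kb]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: -s[0])
    return [chunk for _, chunk in ranked[:k]]

def section_prompt(heading: str, kb: list[str], voice_rules: list[str], banned: list[str]) -> str:
    """Build a narrow, section-level prompt grounded only in retrieved facts."""
    context = "\n".join(f"- {c}" for c in retrieve(heading, kb))
    return (
        f"Section: {heading}\n"
        f"Use only these facts:\n{context}\n"
        f"Voice rules: {'; '.join(voice_rules)}\n"
        f"Never use: {', '.join(banned)}\n"
        "Open with a snippet-ready paragraph that answers the heading directly."
    )

kb = [
    "Internal links come only from the verified sitemap.",
    "QA gate minimum score is 85.",
    "Visuals include a hero and two to three inline images.",
]
print(retrieve("How the QA gate scores drafts", kb, k=1))  # -> ['QA gate minimum score is 85.']
```

Retrieving per section rather than per article is what keeps the context narrow, which is the first of the two tips that follow.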

Two practical tips. Keep retrieval narrow and relevant to the section. Validate snippet-ready openers during QA, with the three-sentence pattern baked in.
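The three-sentence pattern is not spelled out here, so this validator encodes one reading of it as an assumption: the first paragraph under each H2 has at most three sentences and stays under a word budget. It also assumes drafts use markdown `## ` headings; the 60-word budget and the sample draft are illustrative.

```python
import re

MAX_SENTENCES = 3
MAX_WORDS = 60

def h2_openers(markdown: str) -> dict[str, str]:
    """Map each '## ' heading to the first non-empty paragraph beneath it."""
    openers = {}
    for block in re.split(r"^## ", markdown, flags=re.MULTILINE)[1:]:
        heading, _, body = block.partition("\n")
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        openers[heading.strip()] = paragraphs[0] if paragraphs else ""
    return openers

def opener_problems(markdown: str) -> list[str]:
    """List H2 sections whose opener breaks the assumed pattern."""
    problems = []
    for heading, para in h2_openers(markdown).items():
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", para) if s]
        if not sentences:
            problems.append(f"{heading}: missing opener")
        elif len(sentences) > MAX_SENTENCES:
            problems.append(f"{heading}: {len(sentences)} sentences")
        elif len(para.split()) > MAX_WORDS:
            problems.append(f"{heading}: opener over {MAX_WORDS} words")
    return problems

draft = """## What is a QA gate?

A QA gate blocks publishing until a draft meets the policy. It scores structure, voice, links, and schema. Drafts below 85 go back for refinement.

## Why cooldowns matter

Cooldowns stop repeats. They protect rankings. They spread coverage. They also reduce fatigue.
"""
print(opener_problems(draft))  # -> ['Why cooldowns matter: 4 sentences']
```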

Automate brand visuals with semantic placement

Reference a brand asset library that includes colors, logos, style references, and tagged product screenshots. Generate a hero plus two to three inline images. Match product screenshots to solution sections using semantic similarity. Place visuals where they reinforce understanding, then generate alt text and SEO-friendly filenames automatically.
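Here is a sketch of the placement and naming half, the part that should be deterministic. It assumes you already have embedding vectors for each section and each tagged screenshot, assigns a screenshot to its most similar section only above a cutoff, and derives an SEO-friendly filename from the article and screenshot labels. The cutoff, the toy vectors, and the naming scheme are assumptions; alt text generation is left to your model.

```python
import math
import re

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; assumes non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def slugify(text: str) -> str:
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")

def place_screenshots(sections: dict[str, list[float]],
                      shots: dict[str, list[float]],
                      min_similarity: float = 0.75) -> dict[str, str]:
    """Map each screenshot to its best-matching section, or skip it if nothing fits."""
    placement = {}
    for shot, vec in shots.items():
        best = max(sections, key=lambda s: cosine(vec, sections[s]))
        if cosine(vec, sections[best]) >= min_similarity:
            placement[shot] = best
    return placement

def seo_filename(article_title: str, shot_label: str, ext: str = "png") -> str:
    return f"{slugify(article_title)}-{slugify(shot_label)}.{ext}"

sections = {"Set up the QA gate": [1.0, 0.0], "Publish to your CMS": [0.0, 1.0]}
shots = {"qa settings screen": [0.9, 0.2], "random logo": [0.6, 0.6]}
print(place_screenshots(sections, shots))  # -> {'qa settings screen': 'Set up the QA gate'}
print(seo_filename("Orchestrated AI Content Pipelines", "qa settings screen"))
```

Skipping weak matches instead of forcing a placement is the design choice that keeps an off-topic image out of a section.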

Creativity belongs in the images you choose to generate. Correctness belongs in how you place them. Keep those lanes separate.

You can see how a brief-first, retrieval-backed flow plugs into a full loop here: ai content writing.

How Oleno Automates the 9 Steps End to End

A fully automated system needs code where correctness matters and guardrails that enforce quality before publish. Oleno takes the nine steps above and runs them as a closed loop that outputs CMS-ready articles consistently, not occasionally.

Inject deterministic internal links and JSON-LD schema

Remember the link and schema cleanup that eats your afternoons? Oleno removes it. Internal links are injected after the text and visuals are in place, selected only from your verified sitemap, and placed at natural sentence boundaries. Zero fabricated URLs. JSON-LD is generated for Article, FAQ, and BreadcrumbList, validated, and attached as metadata.
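This is not Oleno's code, just a minimal illustration of deterministic linking under stated assumptions: anchor phrases map to URLs, a URL is used only if it appears in the verified sitemap, each phrase is linked at its first whole-word match, and a sentence gets at most one link. The markdown link syntax, the anchor map, and the example.com URLs are hypothetical, and the function works on one paragraph at a time.

```python
import re

def inject_links(paragraph: str, anchors: dict[str, str],
                 sitemap: set[str], max_links: int = 8) -> str:
    """Link the first occurrence of each anchor phrase to a verified sitemap URL."""
    sentences = re.split(r"(?<=[.!?])\s+", paragraph)
    used = 0
    for phrase, url in anchors.items():
        if url not in sitemap:
            continue  # never emit a URL the sitemap does not contain
        if used == max_links:
            break
        pattern = re.compile(rf"\b{re.escape(phrase)}\b", re.IGNORECASE)
        for i, sentence in enumerate(sentences):
            if "](" in sentence:
                continue  # one link per sentence keeps placement natural
            new, n = pattern.subn(lambda m: f"[{m.group(0)}]({url})", sentence, count=1)
            if n:
                sentences[i] = new
                used += 1
                break
    return " ".join(sentences)

sitemap = {"https://example.com/qa-gate", "https://example.com/schema"}
anchors = {
    "QA gate": "https://example.com/qa-gate",
    "JSON-LD": "https://example.com/schema",
    "cooldowns": "https://example.com/not-in-sitemap",
}
para = "The QA gate blocks weak drafts. JSON-LD is validated before publish. Cooldowns prevent repeats."
print(inject_links(para, anchors, sitemap))
```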

This is essential. Deterministic linking and schema cut the two most annoying last-mile tasks. That repeatable accuracy frees your time for bigger calls.
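For the schema half, here is a sketch that builds Article and BreadcrumbList JSON-LD with the standard library and runs a few structural checks before attaching it. The property names follow the schema.org vocabulary; the `validate` checks are a minimal assumption rather than a full validator, the URLs and date are placeholders, and FAQ markup would follow the same pattern with `FAQPage`. Again, this is an illustration, not Oleno's implementation.

```python
import json

def article_jsonld(title: str, url: str, author: str, published: str,
                   crumbs: list[tuple[str, str]]) -> list[dict]:
    """Build Article and BreadcrumbList objects using schema.org property names."""
    article = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": title,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "mainEntityOfPage": url,
    }
    breadcrumbs = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": item}
            for i, (name, item) in enumerate(crumbs, start=1)
        ],
    }
    return [article, breadcrumbs]

def validate(blocks: list[dict]) -> list[str]:
    """Minimal structural checks (an assumption, not a full schema.org validator)."""
    errors = []
    for block in blocks:
        for key in ("@context", "@type"):
            if key not in block:
                errors.append(f"missing {key}")
        if block.get("@type") == "Article" and not block.get("headline"):
            errors.append("Article without headline")
        if block.get("@type") == "BreadcrumbList" and not block.get("itemListElement"):
            errors.append("empty BreadcrumbList")
    return errors

blocks = article_jsonld(
    "Orchestrated AI Content Pipelines", "https://example.com/blog/pipelines",
    "Daniel Hebert", "2024-01-15",  # placeholder date
    [("Blog", "https://example.com/blog"), ("Pipelines", "https://example.com/blog/pipelines")],
)
print(validate(blocks))  # -> []
script_tag = f'<script type="application/ld+json">{json.dumps(blocks)}</script>'
```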

Enforce QA gate and publish via CMS connectors

The QA gate is where the output becomes trustworthy. Oleno runs 80-plus checks across structure, information gain alignment, brand voice, snippet readiness, visual placement, alt text, filenames, links, and schema validity. The minimum passing score is 85. Refinement loops run automatically until the draft passes or reaches the retry limit. Then publishing happens through mapped connectors to WordPress, Webflow, HubSpot, or Google Sheets, with duplicate prevention and idempotency keys.
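Idempotent publishing is the piece most worth copying into any pipeline. A minimal sketch, not Oleno's implementation, assuming a hypothetical `publish_fn` that wraps your CMS call: the idempotency key is derived deterministically from the destination and the article slug, and a key that has already succeeded is never published twice. A real system would persist the key ledger instead of holding it in memory.

```python
import hashlib
from typing import Callable

class IdempotentPublisher:
    def __init__(self, publish_fn: Callable[[dict], str]):
        self._publish_fn = publish_fn          # hypothetical CMS wrapper returning a post ID
        self._published: dict[str, str] = {}   # idempotency key -> post ID (in memory only)

    @staticmethod
    def key_for(destination: str, slug: str) -> str:
        return hashlib.sha256(f"{destination}:{slug}".encode()).hexdigest()

    def publish(self, destination: str, article: dict) -> str:
        key = self.key_for(destination, article["slug"])
        if key in self._published:
            return self._published[key]        # duplicate request: return the existing post
        post_id = self._publish_fn(article)
        self._published[key] = post_id         # recorded only after the CMS call succeeds
        return post_id

cms_calls = []

def fake_cms(article: dict) -> str:
    cms_calls.append(article["slug"])
    return f"post-{len(cms_calls)}"

publisher = IdempotentPublisher(fake_cms)
first = publisher.publish("wordpress", {"slug": "orchestrated-pipelines"})
again = publisher.publish("wordpress", {"slug": "orchestrated-pipelines"})
print(first, again, len(cms_calls))  # -> post-1 post-1 1
```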

Transformation callback time. The on-call edits, the formatting nits, the link linting, the schema misses. Oleno absorbs them. You get publish-ready articles that align to your brand, carry real information, and look consistent. If you want to see this difference quickly, Try Oleno for free.

And a quick detail that matters at scale. Notifications tell you when a draft is ready or when publish succeeds. No dashboards or performance tracking in the product. Just delivery signals so you know the pipeline is moving.

Conclusion

If your current workflow begins at the prompt, you are optimizing for speed, not outcomes. The better bet is a pipeline that encodes how your content should look, sound, and ship. Start with a 30-day baseline. Set rules in code. Centralize knowledge and voice. Map a topic universe. Then run the nine steps with retrieval scaffolds, information gain scoring, snippet-ready structure, brand visuals, deterministic links, schema, and a hard QA gate.

You will still edit. You will still make judgment calls. You just will not babysit drafts. Your team tunes the system and approves exceptions, which means more publish-ready articles with fewer headaches and less “frustrating rework.” Content becomes infrastructure. Not a never-ending project.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions