Orchestrated Demand Gen: Build a Repeatable System That Scales Content

Most teams don’t fail at demand gen because they lack ideas. They fail because the work is fragmented across tools, people, and a rotating queue of “urgent” priorities. You can publish a few strong pieces and still have a broken system. I’ve done that. The lift always resets next quarter, and you’re back at zero.

AI poured gas on this. Drafts are faster. Reviews are slower. Coordination gets worse. If the play is still a patchwork of prompts, docs, and meetings, you’re not running demand gen. You’re coordinating activity. And activity doesn’t compound.

Key Takeaways:

- Treat demand gen like an operating system: governance, jobs, operations

- Encode voice, product truth, and allowed claims up front to cut review cycles

- Shift from formats to “jobs” (Acquire, Educate, Convert, Retain) with clear inputs and outputs

- Run a deterministic pipeline from discover to publish with hard QA gates

- Measure operational reliability (cadence, coverage, quality trend), not just traffic

- Orchestration beats prompting because it reduces judgment load and drift

Why Activity Masquerades As Demand Gen

Most teams confuse content output with a demand-gen system because output is visible and systems are not. A real system keeps narrative consistent, ships on a cadence, and compounds coverage across the funnel over months. Activity resets. A system persists. If it stops when one person steps away, it wasn’t a system.

The activity trap most teams fall into

You can crank out drafts, run a flashy campaign, and see a spike. Still not a system. What you’re seeing is fragmented execution across content, SEO, design, and publishing. Each piece on its own might be solid. Together, it’s inconsistent. The cost shows up as drift, rework, and a calendar that never stabilizes.

AI sped up writing, but it didn’t fix execution. It multiplied the number of things you can create without solving what to create, why it exists, or how it gets published on time. Most teams still rely on human judgment at every step. That judgment becomes the choke point the minute volume increases. You feel that as review bottlenecks and missed handoffs.

I’ve been on both sides. At Steamfeed, we had volume, but it only worked because we also had structure. Different people, shared rules. When you don’t write the rules down, you start over every week. And starting over is the enemy of compounding.

Faster drafts didn’t fix the problem: systems win, not units

Prompts create units. A system creates reliability. When you push speed into draft generation, you pull judgment back onto people. That means more reviewing, rewriting, and meetings. Creation gets faster while publishing slows down. It’s a hidden tax.

A system has three ingredients: governance, job-based execution, and operations. Governance defines how you show up and what’s true. Jobs map to the funnel with clear inputs and outputs. Operations keep cadence steady and publishing controlled. That’s the difference between noisy output and repeatable demand gen. If you need a definition anchor, Microsoft’s explanation of demand generation ties it back to business outcomes (pipeline and revenue), not content units.

Ready to skip the theory and see it run end to end? Request A Demo.

Run Demand Gen Like Operations With Clear Owners

Running demand gen like operations means policies are encoded, jobs are defined, and cadence is enforceable. You set the rules once and reuse them daily. That shift cuts review cycles, reduces drift risk, and makes publishing predictable. Think less “campaign scramble” and more “steady system.”

Define governance first, not output

Before you brief another piece, define five artifacts as your single source of truth: brand voice rules, positioning and POV, product truth and allowed claims, visual guidelines, and approved knowledge sources. Then make them machine-readable. When those rules travel with every asset, reviewers stop rewriting sentences and start confirming exceptions.

Here’s the mechanism. Voice rules prevent style debates. Positioning and POV keep angles aligned to your narrative. Product truth and claims stop invented features or fuzzy promises. Visual rules remove back-and-forth with design. Approved knowledge sources guard against random external facts sneaking in. You’re shifting effort upstream and amortizing it across all outputs.

Keep it pragmatic. I like a one-page summary for humans and a structured version for the system. If you want a helpful way to connect policy to execution, the Spend Matters intake and orchestration guide explains how “policy up front” reduces review touches and surprises downstream.

Map work to jobs across the funnel

Stop shipping formats. Start running jobs. Define four demand-gen jobs: Acquire, Educate, Convert, Retain. Each job has inputs, structured outputs, and pass-fail checks. The goal is auditability. When priorities shift, jobs self-correct because rules don’t rely on memory.

For Acquire, inputs might include site structure and areas where you want coverage. Outputs are publish-ready programmatic SEO pages with locked structure in your voice. For Educate, inputs could be narrative frameworks and product beliefs. Outputs are POV pieces that teach the new way. Convert uses competitive and product truths to produce comparisons and use-case explainers. Retain turns customer stories and adoption content into ongoing proof.

When jobs are defined like this, you can answer basic operational questions: what’s running, what’s blocked, what passed QA, and what published. It stops being personal. It becomes process.

The Costs You Do Not See In Ad-Hoc Content

Ad-hoc content creates a hidden cost structure you rarely see on a dashboard. The bill looks like review tax, coordination drag, drift-driven rework, and cadence decay. Each piece feels small. Together, they stall pipeline influence next quarter. This is where teams quietly lose.

The review tax and coordination drag

Let’s pretend you ship 20 assets a month. Three reviewers per piece, 30 minutes each. That’s 30 hours of senior time. At a $150 blended rate, you’re spending $4,500 monthly just to move drafts through a checkpoint. I’m being conservative. Many teams spend more.
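If you want to run that math with your own numbers, here is a minimal sketch. The function name and parameters are mine, purely for illustration; the inputs are the hypothetical figures from the paragraph above.

```python
def monthly_review_cost(assets, reviewers_per_asset, minutes_per_review, hourly_rate):
    """Return (review hours, review cost) for one month of assets."""
    hours = assets * reviewers_per_asset * minutes_per_review / 60
    return hours, hours * hourly_rate


# Figures from the example: 20 assets, 3 reviewers each, 30 minutes per review, $150/hour.
hours, cost = monthly_review_cost(20, 3, 30, 150)
print(f"{hours:.0f} hours, ${cost:,.0f} per month")  # 30 hours, $4,500 per month
```

Swap in your own volume and rate. The point is that the cost scales linearly with every asset and every reviewer you add.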

Governance and QA gates cut this cost by changing what gets reviewed. Reviewers focus on exceptions, not every sentence. Quality checks block obvious misses early, so senior people see fewer drafts and spend less time per draft. It’s boring process work, but boring is good here. The Adobe perspective on orchestrated campaigns connects this standardization to shorter cycle times.

Rework from narrative drift and SEO detachment

At Proposify, we ranked well on Google. The content team did great work. The problem was detachment from the product. We wrote about SDR management while we sold proposal software. So we got traffic without demand. Sales felt it. Marketing felt it. Nobody was happy.

Drift shows up as rework. You fix intros to align with POV. You re-angle posts to map to evaluation. You prune pages that pull unqualified traffic. That’s time you could spend compounding coverage. Narrative frameworks and job definitions prevent the drift by making “what belongs” explicit.

What happens when cadence slips for 30 days?

Cadence drives compounding. Suppose organic contributes 30 percent of pipeline. A 30-day slip means fewer refreshes, fewer internal links, and rank softening on pages you already earned. It’s not catastrophic, but it’s costly. A conservative 10 percent drop in assisted pipeline next quarter is common when cadence breaks.

You don’t fix cadence with heroics. You fix it with deterministic workflows and a publishing schedule that’s enforceable. Teams who standardize the pipeline from discover to publish keep shipping even when launches or sales requests pull focus. That’s the quiet advantage.

When The System Breaks, People Feel It

You don’t need a dashboard to know when the system is brittle. You feel it in late-night fire drills, endless review loops, and that uneasy pause before you click publish. Reliability isn’t a nice-to-have. It’s how a small team avoids burnout and still ships.

The 3am incident no one wants again

Picture this. A publish fails, a claim is off by a hair, legal flags it after it goes live. Now it’s a scramble. The root cause isn’t one mistake. It’s a fragile pipeline with manual steps and no hard gates. Brittle systems create drama. And drama kills cadence.

Policy-as-code, QA gates, and idempotent publishing calm this down. The QA gate checks voice, structure, and grounding before anything can move forward. Idempotent publishing prevents duplicates if a job retries. This isn’t fancy. It’s basic operational design. If you want a mental model, Oracle’s view on orchestration frames how reliable flows reduce failure modes across steps.
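To make "idempotent publishing" concrete, here is a rough sketch. `InMemoryCMS` is a hypothetical stand-in, not a real CMS client, and deriving the key from slug plus body is my assumption; a real setup might key on slug alone or on a job ID.

```python
import hashlib


class InMemoryCMS:
    """Hypothetical stand-in for a CMS client (not a real library)."""

    def __init__(self):
        self.posts = {}  # idempotency key -> stored post

    def publish(self, slug, body):
        # Assumption: the same slug and body always produce the same key,
        # so a retried job maps onto the post it already created.
        key = hashlib.sha256(f"{slug}\n{body}".encode("utf-8")).hexdigest()
        if key not in self.posts:
            self.posts[key] = {"slug": slug, "body": body}
        return key


cms = InMemoryCMS()
first = cms.publish("orchestrated-demand-gen", "Draft body")
retry = cms.publish("orchestrated-demand-gen", "Draft body")  # simulated retry
print(first == retry, len(cms.posts))  # True 1
```

The retry returns the same key and leaves one post in the store, which is the whole idea: running a job twice has the same effect as running it once.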

Founder-led content that cannot scale

I’ve lived the founder-led phase. At PostBeyond, I could crank out 3–4 high-quality posts a week using a tight framework. It worked, until my role expanded. Quality dipped or speed cratered. Same story at LevelJump: three people wearing ten hats, no time to write, and no way to transfer the voice without slowing to a crawl.

The lesson is simple. Encode the voice, claims, and structure so one strategic writer can scale output without watering down the narrative. Put a single person in charge of system health, not just content. Run a weekly 15-minute review for cadence, quality trend, and coverage. It’s not glamorous. It works.

Still firefighting this manually? Give your team a breather and see a working system. Request A Demo.

The New Way To Build A Repeatable Demand Gen Engine

The new way starts with rules, not drafts. Then it runs jobs tied to the funnel through a deterministic pipeline with hard QA gates. You measure reliability over time, not just clicks. It’s less about creative bursts and more about compounding outcomes.

Set five governance artifacts that travel with every asset

Document these once: brand voice rules, positioning and POV, product truth and allowed claims, visual guidelines, and approved knowledge sources. Then make them machine-readable so they travel through discover, brief, draft, and publish. Review shifts to exceptions and accuracy checks, not voice policing or “does this fit our story” debates.

Make it specific. Include banned terms, must-include claims, CTA placement, and image rules. Add examples of “good paragraph” vs “not our voice.” Encode product boundaries so the system won’t publish invented features. If this sounds heavy, it isn’t once set. You’re front-loading decisions you used to make ad hoc, every week.
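What "machine-readable" could look like, as a minimal sketch. The `Governance` fields, the `check_draft` helper, and the sample terms are all hypothetical, and plain substring matching is a deliberate simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Governance:
    """Hypothetical rule set; the field names are illustrative only."""
    banned_terms: tuple = ()
    required_claims: tuple = ()


def check_draft(text, rules):
    """Return a list of violations; an empty list means the draft passes."""
    lowered = text.lower()
    violations = []
    for term in rules.banned_terms:
        if term.lower() in lowered:
            violations.append(f"banned term: {term}")
    for claim in rules.required_claims:
        if claim.lower() not in lowered:
            violations.append(f"missing claim: {claim}")
    return violations


rules = Governance(
    banned_terms=("revolutionary",),
    required_claims=("publishes to your CMS",),
)
print(check_draft("A revolutionary tool for content teams.", rules))
# ['banned term: revolutionary', 'missing claim: publishes to your CMS']
```

A reviewer who gets that list is confirming two exceptions, not rereading the whole draft.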

Define four demand-gen jobs with inputs and outputs

Write job specs for Acquire, Educate, Convert, and Retain. For each job, define inputs (knowledge sources, POV angles, site structure), outputs (assets and formats), distribution rules, and pass-fail checks (voice, claims, structure, alignment). This is where narrative frameworks live. You want to be able to audit whether the engine did its job.

A quick example. Acquire: input site sections and intent clusters, output long-form pages with locked H2/H3 structures and internal link targets, pass-fail includes duplication protection and LLM-readable structure. Convert: input product truth and competitive rules, output comparison pages with fairness constraints and structured tables, pass-fail includes safety rules for claims.
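A job spec like the Acquire example can be written down as data plus checks. This is a sketch under my own assumptions: `JobSpec`, `audit`, and the two checks (a markdown H2 and a relative internal link) are hypothetical simplifications of the pass-fail rules described above.

```python
from dataclasses import dataclass


@dataclass
class JobSpec:
    """Hypothetical job spec: named inputs, outputs, and pass-fail checks."""
    name: str
    inputs: tuple
    outputs: tuple
    checks: dict  # label -> function(draft text) -> bool


def audit(job, draft):
    """Run every check and return a label -> passed mapping."""
    return {label: check(draft) for label, check in job.checks.items()}


acquire = JobSpec(
    name="Acquire",
    inputs=("site sections", "intent clusters"),
    outputs=("long-form page",),
    checks={
        # Assumption: drafts are markdown, so an H2 line starts with "## ".
        "has_h2_structure": lambda d: any(line.startswith("## ") for line in d.splitlines()),
        # Assumption: internal links are relative markdown links like [text](/path).
        "has_internal_link": lambda d: "](/" in d,
    },
)

draft = "# Title\n## Section\nSee [pricing](/pricing)."
print(audit(acquire, draft))  # {'has_h2_structure': True, 'has_internal_link': True}
```

The audit result is what makes the job answerable: you can see which check failed without asking the person who wrote the draft.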

Implement a deterministic pipeline with QA gates and a 15-minute health review

Adopt a consistent flow: Discover, Angle, Brief, Draft, QA, Enhance, Visuals, Publish. Lock structure. Require knowledge grounding at the brief stage so drafts don’t wander. Add QA gates for narrative compliance, accuracy and grounding, and structural readability. Block publish until all pass.
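Here is a minimal sketch of that flow. The stage names come from the list above; the asset flags (`on_narrative`, `grounded`, `structured`) and the `run_pipeline` function are hypothetical placeholders for whatever your real checks produce.

```python
STAGES = ("Discover", "Angle", "Brief", "Draft", "QA", "Enhance", "Visuals", "Publish")

# Hypothetical gates: each reads a flag that a real check would have set on the asset.
QA_GATES = {
    "narrative": lambda asset: asset.get("on_narrative", False),
    "grounding": lambda asset: asset.get("grounded", False),
    "structure": lambda asset: asset.get("structured", False),
}


def run_pipeline(asset):
    """Walk the stages in fixed order and stop at QA if any gate fails.

    Returns (completed stages, failed gate names).
    """
    completed = []
    for stage in STAGES:
        if stage == "QA":
            failed = [name for name, gate in QA_GATES.items() if not gate(asset)]
            if failed:
                return completed, failed
        completed.append(stage)
    return completed, []


print(run_pipeline({"on_narrative": True, "grounded": False, "structured": True}))
# (['Discover', 'Angle', 'Brief', 'Draft'], ['grounding'])
```

The ungrounded draft never reaches Publish, and the return value says exactly which gate stopped it.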

Then run a weekly 15-minute review with three graphs: cadence, quality trend, and coverage by job. The goal isn’t policing people. It’s catching drift early and keeping compounding on track. If you prefer a cross-functional angle, the Spend Matters intake and orchestration guide is useful for designing control gates that map policy to flow.
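The three graphs can come from one small summary over publish records. A sketch, assuming each record carries a week label, a job name, and a 0-100 quality score; that record shape, the `health_summary` name, and the sample data are invented for illustration.

```python
from collections import Counter
from statistics import mean


def health_summary(records):
    """Summarize cadence, coverage by job, and quality trend from publish records."""
    cadence = Counter(r["week"] for r in records)   # assets published per week
    coverage = Counter(r["job"] for r in records)   # assets published per job
    quality_trend = {
        week: mean(r["quality"] for r in records if r["week"] == week)
        for week in sorted(cadence)
    }
    return {
        "cadence": dict(cadence),
        "coverage": dict(coverage),
        "quality_trend": quality_trend,
    }


# Made-up sample records, only to show the shape of the output.
records = [
    {"week": "W1", "job": "Acquire", "quality": 80},
    {"week": "W1", "job": "Educate", "quality": 90},
    {"week": "W2", "job": "Acquire", "quality": 70},
]
summary = health_summary(records)
print(summary["cadence"])   # {'W1': 2, 'W2': 1}
print(summary["coverage"])  # {'Acquire': 2, 'Educate': 1}
```

In this sample, cadence dropped from two assets to one and Convert and Retain have no coverage at all. That is the kind of drift a 15-minute review should catch.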

How Oleno Runs This Playbook End To End

Oleno turns demand gen from a set of activities into an operating system. You define how you want to show up once, and Oleno runs the work on a predictable cadence. Strategy stays human. Execution becomes reliable. That’s the leverage small teams need.

Governance setup and enforcement

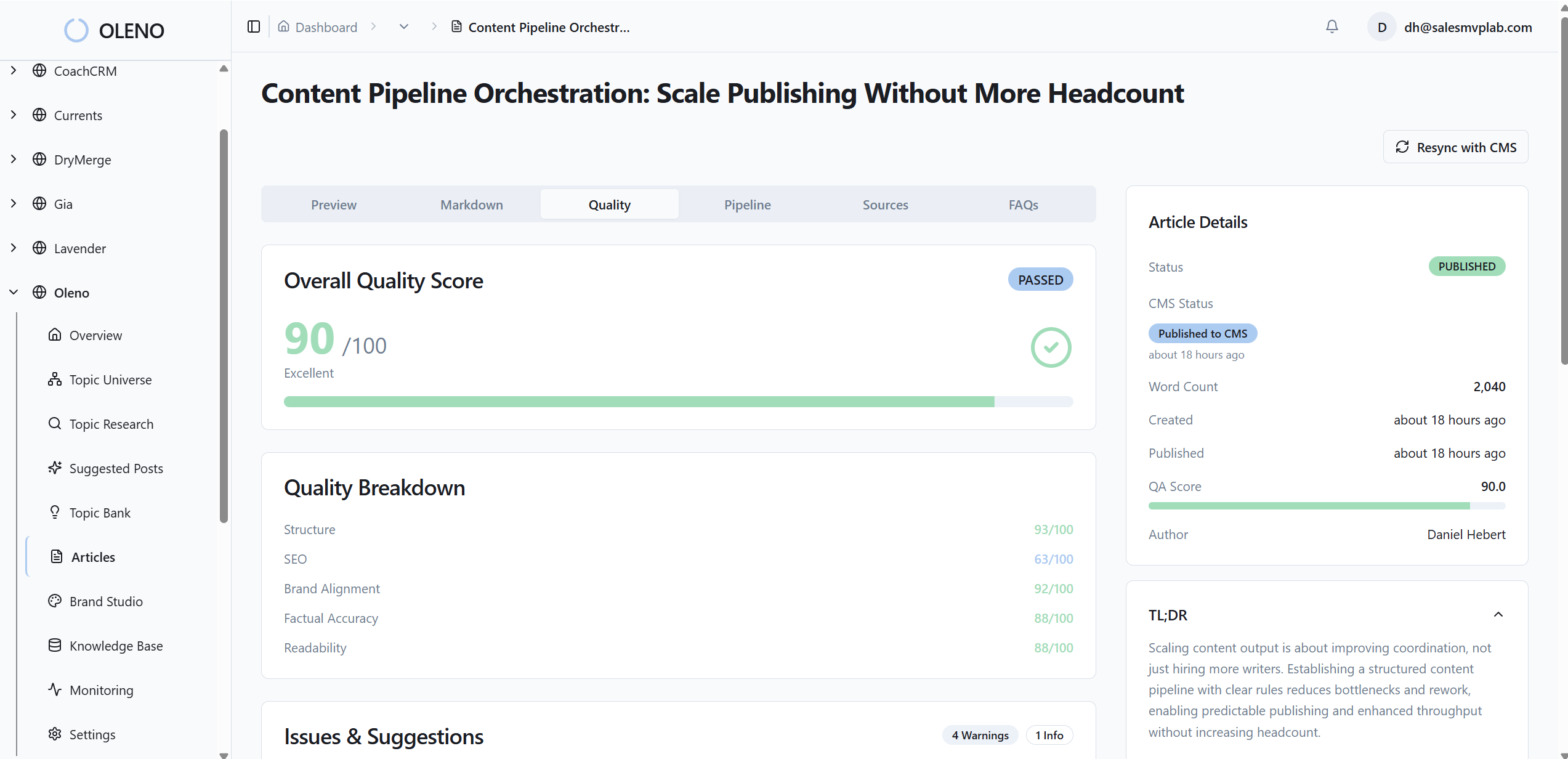

Oleno encodes your brand voice, positioning and POV, product truth and allowed claims, visual guidance, and approved knowledge. Those rules apply everywhere, automatically. Oleno’s quality enforcement checks voice, narrative structure, accuracy, and grounding before anything can publish, so review time drops and exceptions drive discussion.

Because governance is a first-class citizen, the output doesn’t drift as volume grows. You’re not relying on memory or templates that change week to week. You’re relying on rules you control. That’s how Oleno maintains consistency without growing headcount or coordination overhead.

Studios, publishing, and operations in one engine

Oleno runs job-based studios mapped to the funnel. Programmatic SEO for Acquire. POV and frameworks for Educate. Competitive and product content for Convert. Customer proof for Retain. Every studio shares the same engine and flow: Discover, Angle, Brief, Draft, QA, Enhance, Visuals, Publish. That keeps cadence predictable even as your mix changes.

On publishing, Oleno pushes directly to your CMS as draft or live, with idempotent publishing so duplicates don’t sneak in. Visuals can be generated to your brand spec with SEO-safe filenames. Optional distribution reuses approved content across channels with scheduling and cadence rules. System health views show output and cadence trends, common failure patterns, and a quality signal the team can trust. This isn’t traffic analytics. It’s operational visibility, so you can see whether demand-gen execution is actually happening.

If you’re ready to see how governance, studios, and operations come together without adding headcount, Oleno was built for that. Request A Demo.

Conclusion

You don’t need more ideas. You need a system that runs whether or not you’re in the room. When governance travels with every asset, jobs tie output to the funnel, and operations enforce cadence and quality, a small team can ship steady, opinionated demand gen. That’s the point here. Build the rules once. Let the system do the work.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've worked in B2B SaaS sales and marketing leadership for 13+ years, and I specialize in building revenue engines from the ground up. Over the years, I've codified the writing frameworks that now power Oleno.

Frequently Asked Questions