Outcome-Based Content Measurement: 6 KPIs & Tracking Plan

You can rack up a lot of traffic and still be stuck answering the same question in exec reviews: is this moving the business? I have lived that tension. At Proposify, we ranked like crazy, but too much content sat miles away from the product story. It created awareness without authority, and it didn’t show up in pipeline where it counted.

On the flip side, when we ran Steamfeed back in the day, depth plus breadth plus a system gave us compounding gains. Not because pages went viral, but because we steadily closed topic gaps and owned more of the conversation we cared about. Focus on this: Count outcomes you can influence, not surface-level engagement that feels good and goes nowhere. If you want to see how a governed content system supports this without turning into another dashboard, Oleno fits right there.

Key Takeaways:

- Track outcomes you can act on, not just pageviews you can’t explain

- Map one KPI per funnel stage, then tie decisions to shifts in those KPIs

- Close coverage gaps in clusters that match your product story

- Instrument snippet-ready sections to grow answer share on priority queries

- Trend organic assists and content-driven MQLs by cluster, not by page

- Shorten time-to-first-action with micro-CTAs and TL;DRs, then re-measure

- Run a 90-day plan with fixed definitions, simple tagging, and CRM handshakes

Pageviews Don’t Prove Authority, Outcomes Do

Outcome-based measurement proves progress by tying content to authority, discovery, and conversion assists. You start by closing coverage gaps in the universe you want to own, then track how often your answers surface and help deals move. For example, watch snippet share on your top questions and organic assists to pipeline by cluster.

Why coverage beats clicks

Clicks come from anywhere. Authority compounds when your coverage gets intentional and connected to your solution. Build clusters that mirror your product pillars, then publish angles that add new information, not repeats. If you want a quick contrast on speed versus outcomes, the reality of AI writing limits is a useful reminder that volume without structure rarely moves the needle.

KPI 1: Coverage gap reduction

Identify your pillars, map queries to each, and set a baseline of covered versus uncovered topics. Each month, calculate net gaps closed (new topics covered minus topics still missing) and tie your work to product-relevant clusters. Use a lightweight sheet with pillar, target queries, intent, owning URL, status, and date covered. Only mark “covered” when the page satisfies the job to be done, not when it mentions the term.

Add a 90-day cooldown field so you avoid whiplash rewrites when a post underperforms early. Log hypotheses about angle, structure, and examples, then revisit with evidence. The cooldown protects focus and makes your readouts understandable.
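As a rough illustration, the tracking sheet and cooldown check described above could be modeled like this. The field names mirror the sheet columns; the sample rows, the `TopicRow` class, and the helper names are hypothetical. The sketch reports the components separately so you can derive the net figure using whichever definition you lock.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

COOLDOWN_DAYS = 90  # the cooldown window named in the text


@dataclass
class TopicRow:
    pillar: str
    query: str
    intent: str
    owning_url: Optional[str]
    status: str                    # "covered" or "gap"
    date_covered: Optional[date]


def coverage_summary(rows: List[TopicRow], month_start: date, month_end: date) -> dict:
    """Count covered topics, remaining gaps, and gaps closed inside the month."""
    covered = [r for r in rows if r.status == "covered"]
    closed = [r for r in covered
              if r.date_covered and month_start <= r.date_covered <= month_end]
    return {
        "covered": len(covered),
        "still_missing": len(rows) - len(covered),
        "closed_this_month": len(closed),
    }


def in_cooldown(row: TopicRow, today: date) -> bool:
    """True while a covered topic is still inside its 90-day cooldown."""
    if row.date_covered is None:
        return False
    return today < row.date_covered + timedelta(days=COOLDOWN_DAYS)


# Hypothetical rows, for illustration only.
rows = [
    TopicRow("proposals", "how to write a proposal", "informational",
             "/blog/write-proposal", "covered", date(2024, 5, 10)),
    TopicRow("proposals", "proposal pricing models", "commercial", None, "gap", None),
    TopicRow("e-sign", "what is an e-signature", "informational",
             "/blog/e-signature", "covered", date(2024, 3, 1)),
    TopicRow("e-sign", "e-signature legality", "informational", None, "gap", None),
]

summary = coverage_summary(rows, date(2024, 5, 1), date(2024, 5, 31))
print(summary)
```

A spreadsheet formula does the same job. The point is that "covered" and "in cooldown" are explicit fields, not judgment calls made during a review.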

What is outcome-based content measurement?

Outcome-based measurement shifts you from counting surface engagement to tracking authority signals, discovery share, and conversion assists. Define outcomes first, then choose KPIs you can instrument with light tagging and a CRM handshake. Keep it simple, one KPI per stage, so everyone understands what will change if the number moves. Research on KPI discipline, like MIT Sloan’s perspective on strategic measurement, supports clarity over volume. The same goes for the outcomes-first stance explored by MarketingProfs on outcome-based marketing.

Curious what this looks like in practice? You can Request a demo now. It shows how snippet-ready structure and gap-aware briefs make measurement cleaner.

Tie Every Metric To A Funnel Outcome You Can Influence

You reduce noise by mapping one KPI per stage across awareness, consideration, acquisition, and retention. Each KPI should represent movement you can trigger with content changes, not historical trivia. Make the mapping clear on one slide so sales and leadership can follow it in under a minute.

How do you map outcomes to funnel stages?

Lay out a one-row-per-stage matrix with the audience job, content types that serve it, and the single KPI that proves movement. Awareness aims for answer share, consideration for revisit and depth, acquisition for qualified hand-raisers, and retention for activation reads. Build a column for decision triggers so a KPI shift automatically prompts a content response. If you need a helpful primer that connects structured writing to outcome clarity, this hub on AI content writing is a solid starting point.

KPI 2: Snippet share (of targeted queries)

Build a list of your highest-priority questions pulled from briefs and sales input. Track whether your content earns featured snippets or People Also Ask placements for those terms. Weekly, sample a set of terms and mark “snippet present: us, competitor, none,” then aim for steady gains in “us.”

Instrument your content to open sections with direct answer paragraphs using clear definitions, numbered steps where appropriate, and FAQ sections when relevant. If share stalls, fix structure first. Check that H2s open with a direct, 3-sentence answer and that the section stands on its own. For a broader view on capturing answers across search and AI surfaces, see the concept of dual discovery.
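The weekly tally is simple enough to script. Here is a minimal sketch, assuming you record one label per sampled query using the three values from the text. The query strings and the `snippet_share` helper are hypothetical.

```python
from collections import Counter

VALID_OWNERS = {"us", "competitor", "none"}


def snippet_share(observations: dict) -> dict:
    """Return the fraction of sampled queries held by each owner label."""
    counts = Counter(observations.values())
    unknown = set(counts) - VALID_OWNERS
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return {}
    return {owner: counts.get(owner, 0) / total for owner in sorted(VALID_OWNERS)}


# Hypothetical weekly samples: query -> who holds the snippet.
week_1 = {
    "what is a proposal": "us",
    "proposal vs quote": "competitor",
    "how to price a proposal": "none",
    "proposal follow-up email": "competitor",
}
week_2 = {
    "what is a proposal": "us",
    "proposal vs quote": "us",
    "how to price a proposal": "none",
    "proposal follow-up email": "competitor",
}

print(snippet_share(week_1)["us"], snippet_share(week_2)["us"])
```

Keeping the sampled query list fixed between weeks makes the week-over-week change in "us" comparable.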

KPI 3: Organic assisted conversions

Define an “assist” as any visit from organic content within a lookback window that precedes a form fill or product action. Create a segment for content pages and standard UTMs. Use position-based or multi-touch attribution, then trend assists by cluster, not page. Keep the setup lightweight so you can maintain it.

Report assists as a rolling 28-day trend with a simple moving average. Flag clusters assisting at two times the median, because they deserve more coverage and internal linking support. If you want an academic angle on outcome-focused measurement, this SAGE journal article on outcome orientation offers helpful context.
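To make the assist definition and the two-times-median flag concrete, here is a small sketch. The 30-day lookback default is an assumption, since the text leaves the window length to you. The cluster names, counts, and helper names are hypothetical.

```python
from datetime import date, timedelta
from statistics import median


def is_assist(visit_day: date, conversion_day: date, lookback_days: int = 30) -> bool:
    """An organic content visit counts if it precedes the conversion within the lookback.

    The 30-day default is an assumption, not a value from the article.
    """
    gap = (conversion_day - visit_day).days
    return 0 <= gap <= lookback_days


def rolling_assists(daily_counts: dict, as_of: date, window: int = 28):
    """Return (total, simple moving average per day) over the trailing window."""
    days = [as_of - timedelta(days=i) for i in range(window)]
    total = sum(daily_counts.get(d, 0) for d in days)
    return total, total / window


def flag_strong_clusters(totals_by_cluster: dict, multiple: float = 2.0) -> list:
    """Clusters whose assists are at least `multiple` times the median cluster."""
    med = median(totals_by_cluster.values())
    if med <= 0:
        return []
    return sorted(c for c, n in totals_by_cluster.items() if n >= multiple * med)


# Hypothetical data.
daily = {date(2024, 6, 1): 2, date(2024, 6, 28): 5, date(2024, 5, 1): 7}
totals = {"pricing": 4, "templates": 5, "e-sign": 12, "integrations": 3}

print(rolling_assists(daily, date(2024, 6, 28)))
print(flag_strong_clusters(totals))
```

The flagged list is the shortlist for extra coverage and internal linking. Everything else stays on its current cadence.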

The Status Quo Wastes Time And Still Won’t Answer “Is This Working?”

Vanity metrics soak up hours and still do not give you a decision. You need a measurement loop that closes coverage gaps, captures answers, assists conversions, and shortens time-to-first-action. That loop makes your content system accountable and your reviews short.

Imagine you chased pageviews

Imagine you hit 100k pageviews next quarter. Feels good. But if those pages do not close coverage gaps or assist conversions, you burned a quarter and a budget. Back-of-the-envelope, 100k views times 1 percent CTR to demo equals 1,000 clicks. If none are qualified, that is a mirage.

Translate the hidden costs: reporting time, tool sprawl, and frustrating rework when leadership asks, “so what?” Replace the vanity loop with an outcomes loop. Measure gaps closed, answers captured, and assists created. Note: if a metric does not trigger a decision, cut it.

For a practical shift from vanity metrics to coordinated execution, this perspective on the shift toward orchestration is useful, and the content operations breakdown shows where misalignment creeps in.

KPI 4: Content-driven MQLs

Agree on MQL criteria with sales, then count an MQL as content-driven when the record has content first-touch or last-touch within 14 days of qualification. Add a hidden field for referring URL on forms. In your CRM, map content source fields and standardize values so your rollups are clean.

Create a weekly export with MQL ID, dates, first-touch URL, last-touch URL, and cluster label. Pivot by cluster and asset type. The goal is directionally correct signal you can act on, not perfect attribution. For additional framing on outcome-centric KPIs, see MarketingProfs on outcome-based marketing and this view from Journyx on outcome-based performance management.
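The 14-day rule and the cluster pivot can be sketched in a few lines. This assumes "within 14 days of qualification" means the touch happened in the 14 days before the MQL date. The export rows and helper names are hypothetical.

```python
from collections import Counter
from datetime import date
from typing import Optional

WINDOW_DAYS = 14  # the window named in the text


def is_content_driven(qualified_on: date,
                      first_touch_on: Optional[date],
                      last_touch_on: Optional[date],
                      window_days: int = WINDOW_DAYS) -> bool:
    """True if either content touch falls in the window before qualification."""
    for touch in (first_touch_on, last_touch_on):
        if touch is not None and 0 <= (qualified_on - touch).days <= window_days:
            return True
    return False


def pivot_by_cluster(export_rows: list) -> Counter:
    """Count content-driven MQLs per cluster label."""
    return Counter(
        row["cluster"] for row in export_rows
        if is_content_driven(row["qualified_on"], row["first_touch_on"], row["last_touch_on"])
    )


# Hypothetical weekly export.
export_rows = [
    {"mql_id": "M1", "qualified_on": date(2024, 6, 20),
     "first_touch_on": date(2024, 6, 10), "last_touch_on": date(2024, 6, 18),
     "cluster": "pricing"},
    {"mql_id": "M2", "qualified_on": date(2024, 6, 20),
     "first_touch_on": date(2024, 4, 1), "last_touch_on": None,
     "cluster": "templates"},
    {"mql_id": "M3", "qualified_on": date(2024, 6, 25),
     "first_touch_on": date(2024, 5, 1), "last_touch_on": date(2024, 6, 24),
     "cluster": "pricing"},
]

print(pivot_by_cluster(export_rows))
```

If sales reads the rule differently, change the one comparison inside `is_content_driven` and keep it fixed for the full 90 days.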

The hidden costs of vanity metrics

Vanity metrics trigger churn in your content calendar because they reward activity, not progress. Teams chase spikes, then patch gaps with hurried rewrites. You pay with context switching, scattered briefs, and edits that never resolve the underlying mismatch between topic and product story. An outcomes-first model ends that cycle and gives you one slide anyone can read.

Make The KPIs Executive-Simple And Team-Actionable

Simple beats perfect because teams act on simple. Choose the six KPIs in this plan, assign one owner per number, and write down the decision trigger next to each. Your standups get shorter, your pipeline gets clearer, and your backlog stops thrashing.

KPI 5: Time-to-first-action (TTFA) from content

Track the median time between a visitor’s first content session and their first meaningful action, for example subscribe, demo click, or signup. Use events in analytics and a timestamped user ID tied to your CRM. Shorter TTFA means content is prompting earlier micro-commitments.

Make it actionable. Define “first action” per journey, then create a rolling 28-day median and set a yellow-line threshold. If TTFA lengthens, review page load, clarity of next action, and CTA framing on your top two traffic pages. Add micro-CTAs inside high-scroll sections, embed TL;DRs at the top, and surface a relevant next read. Re-measure in two weeks.
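Here is one way the rolling median and yellow line might look in code. This is a minimal sketch, assuming you can export a first-session timestamp and a first-action timestamp per user. The 12-hour threshold and the sample journeys are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median

YELLOW_LINE_HOURS = 12.0  # hypothetical threshold; set your own


def ttfa_hours(first_session: datetime, first_action: datetime) -> float:
    """Hours between the first content session and the first meaningful action."""
    return (first_action - first_session).total_seconds() / 3600


def rolling_median_ttfa(journeys: list, as_of: datetime, window_days: int = 28):
    """Median TTFA for users whose first action landed inside the trailing window."""
    start = as_of - timedelta(days=window_days)
    hours = [
        ttfa_hours(session, action)
        for session, action in journeys
        if action is not None and start < action <= as_of
    ]
    return median(hours) if hours else None


# Hypothetical journeys: (first content session, first action or None).
journeys = [
    (datetime(2024, 6, 1, 9), datetime(2024, 6, 1, 10)),   # 1 hour
    (datetime(2024, 6, 2, 9), datetime(2024, 6, 3, 9)),    # 24 hours
    (datetime(2024, 6, 5, 12), datetime(2024, 6, 5, 15)),  # 3 hours
    (datetime(2024, 6, 6, 8), None),                       # no action yet
    (datetime(2024, 4, 1, 8), datetime(2024, 4, 2, 8)),    # outside the window
]

current = rolling_median_ttfa(journeys, datetime(2024, 6, 28))
needs_review = current is not None and current > YELLOW_LINE_HOURS
print(current, needs_review)
```

Users with no action yet are excluded here, not counted as infinitely slow. That choice keeps the median stable but hides non-converters, so track conversion rate alongside it.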

Who benefits most from this KPI set?

Marketing gets clarity on what to expand or pause. Sales gets enablement content that actually assists. Leadership gets a single-slide story: gaps closed, answers captured, assists created, and time to action improved. Writers benefit because briefs sharpen, angles get crisp, and frustrating rework drops.

If you want a research lens on measuring real outcomes, this review on measurement and outcomes in organizations can help you frame choices without bloating your dashboard.

Turning outcomes into momentum

When the numbers move, move with them. If snippet share rises on a cluster, add two more angles while you have momentum. If assists fall below median, check intent and CTAs before you rewrite. If TTFA improves, examine what changed and apply it to the next three pieces. Small loops, repeated.

A No-Drama Implementation: Tagging, Events, And Data Validation

Implementation does not need a new tool stack. Standardize UTMs, define a tiny event schema, sync a few fields into your CRM, and run a weekly validation pass. That is enough to get clean readouts for your six KPIs.

How do you tag content without new tools?

Standardize UTMs with a consistent source and medium, campaign set to content, content set to the page slug, and term set to the cluster. Add a content_group dimension for pillars. Bake UTMs into templates for CTA buttons and internal links. Define events with lowercase names and clear parameters, then document them on a one-pager the whole team can use.
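A small helper keeps the UTM convention consistent across templates. This sketch reads the rule above as campaign fixed to "content", utm_content carrying the slug, and utm_term carrying the cluster. The source and medium values, the URL, and the `add_utms` name are assumptions for illustration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utms(url: str, source: str, medium: str, slug: str, cluster: str) -> str:
    """Append the standard UTM set to a URL, preserving any existing query params."""
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))
    params.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": "content",  # fixed value per the convention above
        "utm_content": slug,        # the article slug
        "utm_term": cluster,        # the topic cluster
    })
    return urlunsplit(parts._replace(query=urlencode(params)))


tagged = add_utms(
    "https://example.com/demo?ref=nav",
    source="blog", medium="cta",
    slug="outcome-kpis", cluster="measurement",
)
print(tagged)
```

Generating links through one function, not by hand, is what keeps rollups by cluster clean later.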

Suggested event schema:

- view_content: url, cluster

- click_cta: label, url

- start_trial: plan

- book_demo: product_area

- subscribe: list
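The schema above is small enough to check automatically before events ship. Below is a minimal validation sketch. The event names and parameters come from the list above, while the `validate_event` helper and its messages are hypothetical.

```python
EVENT_SCHEMA = {
    "view_content": {"url", "cluster"},
    "click_cta": {"label", "url"},
    "start_trial": {"plan"},
    "book_demo": {"product_area"},
    "subscribe": {"list"},
}


def validate_event(name: str, params: dict) -> list:
    """Return a list of problems; an empty list means the event matches the schema."""
    problems = []
    if name != name.lower():
        problems.append("event name must be lowercase")
    expected = EVENT_SCHEMA.get(name.lower())
    if expected is None:
        problems.append(f"unknown event: {name}")
        return problems
    for key in sorted(expected - set(params)):
        problems.append(f"missing parameter: {key}")
    for key in sorted(set(params) - expected):
        problems.append(f"unexpected parameter: {key}")
    return problems


print(validate_event("click_cta", {"label": "Book a demo", "url": "/demo"}))
print(validate_event("view_content", {"url": "/blog/outcome-kpis"}))
```

Running a check like this in the weekly validation pass catches drift, such as a renamed parameter, before it corrupts a trend line.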

Note: Do not add events you will never analyze. For broader KPI discipline that plays well with simple setups, see MIT Sloan’s take on strategic measurement and our view on content scale KPIs.

CRM-sync checklist for content events

Create fields for first_content_touch_url, last_content_touch_url, and first_content_touch_date. Map them from analytics via user ID or email capture at conversion. Start with last-touch if stitching is hard, then expand. Build a nightly batch to upsert content fields on new leads and update existing contacts, with guardrails that prevent overwriting a known first-touch.
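The first-touch guardrail is the part most worth getting right. Here is a sketch of the upsert logic, using an in-memory dict as a stand-in for the CRM. The field names come from the checklist above; the `upsert_content_touch` helper, the email key, and the sample values are hypothetical, and a real batch would call your CRM's own API instead.

```python
def upsert_content_touch(crm: dict, email: str, touch_url: str, touch_date: str) -> dict:
    """Create or update a contact's content fields without overwriting first-touch.

    Assumes touches are processed in chronological order, so the incoming
    touch is always the most recent one.
    """
    record = crm.setdefault(email, {})
    if not record.get("first_content_touch_url"):
        # Guardrail: only set first-touch when none is known yet.
        record["first_content_touch_url"] = touch_url
        record["first_content_touch_date"] = touch_date
    record["last_content_touch_url"] = touch_url
    return record


# Hypothetical nightly batch against an in-memory stand-in for the CRM.
crm = {
    "ana@example.com": {
        "first_content_touch_url": "/blog/write-proposal",
        "first_content_touch_date": "2024-05-01",
        "last_content_touch_url": "/blog/write-proposal",
    }
}
upsert_content_touch(crm, "ana@example.com", "/blog/pricing-models", "2024-06-10")
upsert_content_touch(crm, "ben@example.com", "/blog/e-signature", "2024-06-11")
print(crm)
```

The existing contact keeps its original first-touch while last-touch moves forward, and the new lead gets both set from the same visit.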

Align definitions with sales ops to keep a single definition of done. If MQL criteria change, update the content attribution rule along with it so your time series stays clean.

Data sources and reconciliation (GSC + analytics + CRM + internal logs)

Combine Search Console for discovery questions, analytics for sessions and events, CRM for revenue stages, and internal publish logs for timeline context. Decide, in writing, which system is the source of truth per question to stop repeat debates. Use internal publish logs to anchor before and after windows. They are not analytics, but they make interpretation far easier.

Ready to eliminate tool thrash while you keep publishing daily? You can try using an autonomous content engine for always-on publishing.

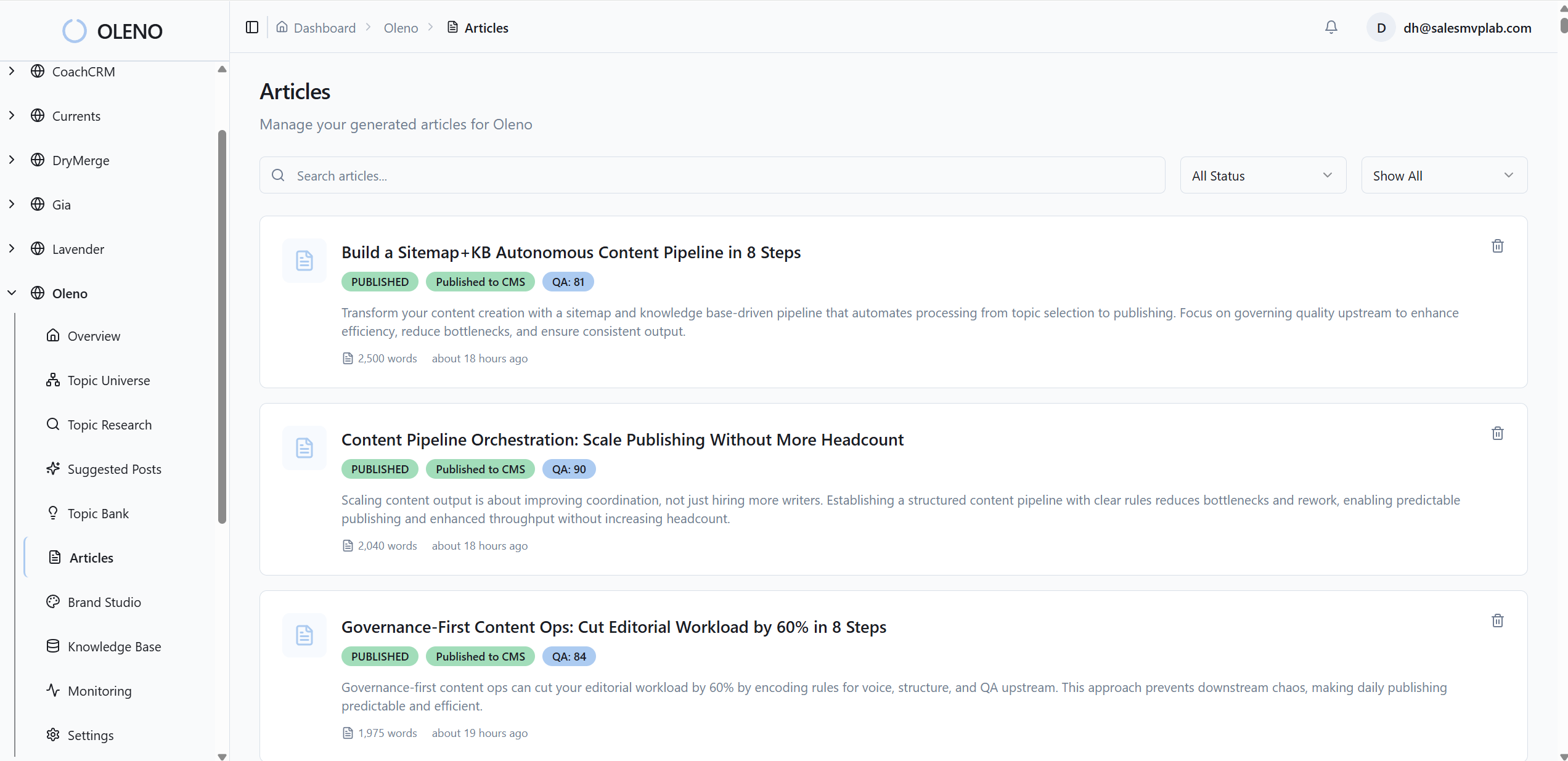

How Oleno Supports Outcome-Based Measurement (Without Becoming Analytics)

Oleno supports outcomes by enforcing the inputs that drive them. It prioritizes gaps with Topic Universe, structures content for answer capture, and protects measurement windows with a 90-day cooldown. It does not track performance. It makes sure what ships is correct, differentiated, and on-brand so your KPIs improve for understandable reasons.

90-day tracking plan you can run in a spreadsheet

Baseline in week zero by recording six KPIs per cluster, then set cadence: weekly for snippet share and TTFA, bi-weekly for assists, and monthly for gap reduction and content-driven MQLs. Lock definitions for 90 days to avoid shifting goalposts. Decision triggers can be simple. For example, expand a cluster if snippet share rises more than 20 percent in 30 days, pause and review if assists fall below median twice, and fix TTFA with micro-CTAs and TL;DRs if it worsens by 15 percent.
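Those example triggers translate directly into a small rule check you could run per cluster. The sketch below assumes the percentages are relative changes against the earlier reading and that "twice" means two readings below the median. The `decide` helper and its action labels are hypothetical.

```python
def decide(snippet_then: float, snippet_now: float,
           times_assists_below_median: int,
           ttfa_then: float, ttfa_now: float) -> list:
    """Map KPI shifts for one cluster to the editorial responses named above."""
    actions = []
    # Snippet share up more than 20 percent (relative) over the 30-day comparison.
    if snippet_then > 0 and (snippet_now - snippet_then) / snippet_then > 0.20:
        actions.append("expand cluster")
    # Assists below the median in two readings.
    if times_assists_below_median >= 2:
        actions.append("pause and review")
    # TTFA worse (longer) by 15 percent or more.
    if ttfa_then > 0 and (ttfa_now - ttfa_then) / ttfa_then >= 0.15:
        actions.append("add micro-CTAs and TL;DRs")
    return actions


print(decide(0.25, 0.35, 0, 10.0, 10.5))
print(decide(0.30, 0.30, 2, 10.0, 12.0))
```

Writing the thresholds down as code, or as spreadsheet formulas, is what keeps them fixed for the 90 days.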

Here is the transformation. Remember the reporting churn and vague pageview wins? Oleno’s Topic Universe directs what to write next, its snippet-ready structure increases your odds of answer capture, and the 90-day cooldown keeps measurement interpretable. For a deeper take on system design, see autonomous content systems and this view on performance KPIs that predict ROI.

Where Oleno fits in the plan:

- Topic Universe identifies underserved clusters and prevents over-publishing

- Brief Generation performs competitive research and applies an Information Gain Score so every draft adds something new

- The snippet-ready paragraph rule opens every H2 with a direct answer to support snippet eligibility

- Visual Studio adds brand-consistent images and product visuals where they matter, especially in solution sections

- Deterministic internal linking uses verified URLs and exact-match anchors from your sitemap, no fabricated links

- Schema generation and QA-Gate ensure structure, clarity, and on-brand voice before anything ships

Turning KPI shifts into editorial decisions

Rising gap reduction but flat assists means your topics are right, and your offers or CTAs need work. Add micro-CTAs and product visuals first on those pages, then re-measure in two weeks. Strong snippet share but weak MQLs says you are answering but not differentiating, so add comparison angles, deeper how-it-works sections, and internal links to higher-intent assets. Falling TTFA with rising assists is momentum. Publish more in that cluster and redistribute internal links to support it.

Oleno helps you act without adding dashboards. Topic Universe expands the right clusters. Briefs enforce differentiation before writing. Internal links and schema keep accuracy deterministic. And yes, the system publishes into your CMS reliably so your weekly standups stay focused on decisions, not fixes.

When should you re-cover vs. retire a topic?

Re-cover when the topic is strategic, snippet share is close but not captured, or assists show promise. Retire when the topic is off-solution, duplicates coverage, or fails triggers across two cycles. The 90-day cooldown prevents reactive rewrites that confound cause and effect. Oleno enforces that cooldown at the topic level, which makes your proof points cleaner in that one-slide exec view.

Remember, Oleno is not an analytics suite. It does not monitor ranks or traffic, and it does not claim visibility measurement. It operates the content system so your six KPIs have a fair shot at moving. If you want to see the pipeline that produces consistent, brand-complete articles, you can Request a demo.

Conclusion

Outcomes create clarity. When you reduce measurement to six KPIs that you can instrument and act on, your calendar calms down and your reviews get shorter. You close coverage gaps that match your product story. You earn more answers on the questions that matter. You assist more deals, earlier, with fewer handoffs and less rework.

Keep the plan simple for 90 days. Treat KPI shifts as prompts for editorial decisions, not debates. And run a system that enforces the inputs behind results. That is where Oleno helps, not by telling you what happened, but by ensuring what ships is structured, differentiated, and unmistakably on brand.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions