Provenance-First Retrieval: Build a KB-Backed Citation Layer

I’ve shipped a lot of content under pressure. Sales needs the post for a campaign. Legal wants sources for two lines. Design’s waiting on the hero. The article looks solid… until someone asks, “Where did this stat come from?” Now you’re spelunking through tabs at 3pm, rewriting a paragraph at 4:30, and punting the publish at 5:10. Been there.

Here’s the pattern I learned the hard way: bolt-on citations often break under audit. You can’t glue trust on top of a draft. You have to design it in. That’s what “provenance-first retrieval” actually means: build your KB and your drafting pipeline so every factual span maps to a stable, verifiable source before it leaves the system.

Key Takeaways:

- Treat provenance as a first-class objective, not an afterthought

- Model entities, versions, and passage-level IDs to make claims traceable

- Optimize retrieval for supportability (entailment, authority), not just similarity

- Quantify rework: small gaps compound into hours of editing and risk

- Implement a claim → evidence → citation flow with QA checks before publish

Why Bolt-On Citations Collapse Under Audit

Most teams try to add citations after drafting. That’s fragile because the text wasn’t written against specific evidence in the first place. When reviewers ask for proof, you’re reverse-engineering support under deadline pressure. A provenance-first pipeline writes with evidence in hand, which makes audits fast and predictable.

The hidden cost of retrieval as a context dump

Dumping a giant context into the model feels helpful. It isn’t. The model writes plausible copy, but you didn’t bind claims to specific passages or versions, so verification is guesswork later. The fix is simple in concept: require every factual span to carry a canonical source ID at draft time.

In practice, that means your retrieval step can’t be a blob. It needs constrained, passage-level retrieval with clearly modeled entities and versions. The point isn’t more context. It’s sharper context, mapped to IDs editors can open and validate in seconds. That’s how you reduce drift and slash rework.

What is provenance-first and why does it matter?

Provenance-first means you go claim → evidence → citation, in that order. The system retrieves at the passage level, binds a sentence to a stable source ID, and emits a citation with the draft. QA can then ask the only question that matters: can this sentence be verified against the KB, yes or no.
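To make that yes/no question concrete, here’s a minimal sketch. The `Citation` and `Sentence` types, the `can_verify` name, and the KB shape (evidence ID mapped to a dictionary of version hash and passage text) are all assumptions for illustration, not a prescribed schema. It only checks that the citation resolves to the cited version and that the snippet really appears there; it doesn’t judge whether the passage supports the claim.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Citation:
    evidence_id: str   # hypothetical format: "org:doc:sec:para"
    version_hash: str  # version of the passage the sentence was written against
    snippet: str       # human-readable excerpt shown to editors


@dataclass
class Sentence:
    text: str
    is_factual: bool
    citation: Optional[Citation] = None


def can_verify(sentence: Sentence, kb: dict[str, dict[str, str]]) -> bool:
    """Yes/no: does the sentence's citation resolve in the KB at the cited version?"""
    if not sentence.is_factual:
        return True  # nothing to verify
    cite = sentence.citation
    if cite is None:
        return False  # factual span with no evidence bound to it
    passage = kb.get(cite.evidence_id, {}).get(cite.version_hash)
    # The snippet must literally appear in the cited version of the passage.
    return passage is not None and cite.snippet in passage
```

A factual sentence with no citation, an unknown evidence ID, or a snippet that doesn’t match the stored version all come back as a plain `False`, which is exactly what a reviewer needs to see.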

This approach raises the trust floor. You’re not hoping the right source shows up after the fact. You’re constraining generation to known, versioned evidence. If you want a reference point, the focus on robust grounding and verifiability shows up across recent industry work.

When do bolt-on citations break in the real world?

You’ll see three failure modes fast. Long contexts pull in confounders that look right, but aren’t. URL-only sources rot or change, so your stat evaporates. And editors can’t trace spans back to stable passages, which forces last-minute rewrites. If legal’s involved, the edit loop gets expensive quickly.

I’ve watched teams try to patch this with “find a similar link” habits. It works until it doesn’t. The problem isn’t diligence, it’s architecture. Without stable evidence IDs tied to sentences, you’re carrying verification debt into every review.

Ready to see what deterministic drafting looks like when trust is designed in? Try a short run and pressure-test it against your process. Try Generating 3 Free Test Articles Now.

The Hidden Root Cause of Untraceable Claims

Untraceable claims don’t start in editing. They start in the KB and retrieval model. If your knowledge base is just files and chunks, you’ll struggle to verify anything precisely. Model entities, versions, and passage-level metadata so sentences can point to stable IDs that survive updates.

KB modeling flaws that block verification

A folder of PDFs split into arbitrary chunks won’t cut it. You need canonical entities (products, orgs, concepts), versioned documents, and segments with section and paragraph IDs. Store metadata like section_id, paragraph_index, and anchor text. Now the claim can point to, say, org:doc:sec:para plus a version_hash.
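Here’s one way that addressing scheme could look in code. Treat it as a sketch under assumptions: the `Passage` class, the 12-character truncated SHA-256 for `version_hash`, and the eight-word anchor are my illustrative choices, and it assumes the ID components never contain a colon.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    org: str
    doc: str
    section_id: str
    paragraph_index: int
    text: str

    @property
    def evidence_id(self) -> str:
        # Address of the passage; it stays the same when the text is edited.
        return f"{self.org}:{self.doc}:{self.section_id}:{self.paragraph_index}"

    @property
    def version_hash(self) -> str:
        # Content hash; it changes whenever the passage text changes.
        return hashlib.sha256(self.text.encode("utf-8")).hexdigest()[:12]

    @property
    def anchor_text(self) -> str:
        # First few words, handy for locating the passage in the source document.
        return " ".join(self.text.split()[:8])


# Two versions of the same paragraph: same address, different version hash.
v1 = Passage("acme", "pricing-guide", "sec-2", 3, "The starter plan includes five seats.")
v2 = Passage("acme", "pricing-guide", "sec-2", 3, "The starter plan includes ten seats.")
print(v1.evidence_id)                      # acme:pricing-guide:sec-2:3
print(v1.version_hash == v2.version_hash)  # False
```

Separating the address from the content hash is the design choice that matters: the address tells you where to look, and the hash tells you whether what you cited is still what’s there.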

Why this matters: updates won’t scramble your references. When a doc changes, the versioned passage you cited remains stable, and your pipeline can decide whether to refresh or keep the archived reference. Research on structured provenance retrieval points to this same need for granular, addressable evidence.

How do confounders creep into long contexts?

Confounders sneak in when retrieval is broad and unchecked. Over-permissive embeddings, weak filters, and large n-passages create noise that looks relevant. The fix is a retrieval stack tuned for supportability: hybrid search, strict chunk boundaries, attribute filters, and re-ranking that favors entailment and source authority.

I’d rather retrieve fewer, stronger passages than drown the model in “maybe” matches. Score candidates on whether they actually support the claim. Penalize ambiguous snippets. And cap top-k tightly. You’ll see fewer hallucination-adjacent sentences and far cleaner citations.
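A rough sketch of that scoring idea follows. The entailment and authority scores are assumed to come from elsewhere (an entailment model and a source-quality prior you maintain), and the weights, threshold, and names like `rerank_for_support` are illustrative, not tuned values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    evidence_id: str
    similarity: float  # retrieval similarity, 0..1
    entailment: float  # assumed output of an entailment model, 0..1
    authority: float   # assumed source-authority prior, 0..1


def support_score(c: Candidate) -> float:
    # Illustrative weights: entailment dominates, similarity is a tiebreaker.
    return 0.6 * c.entailment + 0.25 * c.authority + 0.15 * c.similarity


def rerank_for_support(candidates: list[Candidate],
                       top_k: int = 3,
                       min_entailment: float = 0.5) -> list[Candidate]:
    # Drop ambiguous snippets outright, then keep a tightly capped top-k.
    supported = [c for c in candidates if c.entailment >= min_entailment]
    return sorted(supported, key=support_score, reverse=True)[:top_k]
```

Notice that a passage with very high similarity but weak entailment never makes the cut. That’s the point: “looks relevant” is not the same as “supports the claim.”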

Evidence IDs beat ad hoc URLs

URLs help readers. Evidence IDs help systems. Use fields like doc_id, section_id, paragraph_id, and version_hash. Keep the pretty URL and human-readable snippet alongside the IDs, but don’t make the URL your source of truth. If the link rots or the page shifts, your evidence graph still points to the correct version.

If you want a secondary reference for rigor, the provenance literature emphasizes stable identifiers and lineage tracking across updates. Different domain, same lesson: identity and versioning are the backbone.

The Costs of Missing Provenance in Production

Missing provenance burns time and trust. Editors spend hours hunting sources, legal blocks ship dates, and leadership loses confidence in automation. You don’t need perfect citations. You need stable mappings that let reviewers verify fast and push back when support is weak.

Rework, risk, and lost credibility you can avoid

Without provenance, your edit loop balloons. An editor questions a line, the writer looks for a source, the source doesn’t quite match, and suddenly you’re debating phrasing instead of publishing. Legal adds a round, the CMS schedule slips, and your “automation” quietly becomes a manual workflow again.

There’s also reputational drag. A single contradiction in public triggers inboxes, follow-up edits, and sometimes a retraction. In systems terms, you’re introducing variance where you could have determinism. Research on provenance systems talks about audit trails for exactly this reason: verifiable lineage has real operational value.

Let’s pretend you publish 50 articles a month

Let’s do back-of-the-napkin math. Say each article contains 20 factual claims, so 50 articles carry 1,000 claims a month. If 20 percent lack firm sources, that’s 200 claims per month in rework. At 5 minutes per claim to find, validate, and update, that’s 1,000 minutes: roughly 17 hours of editing and risk every month.
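If you want to plug in your own numbers, the napkin math fits in a few lines. The function name and parameters are just labels I made up for this estimate.

```python
def monthly_rework_hours(articles: int,
                         claims_per_article: int,
                         unsourced_rate: float,
                         minutes_per_claim: float) -> float:
    """Hours per month spent finding, validating, and updating unsourced claims."""
    total_claims = articles * claims_per_article
    unsourced_claims = total_claims * unsourced_rate
    return unsourced_claims * minutes_per_claim / 60


# 50 articles x 20 claims = 1,000 claims; 20 percent unsourced = 200 claims.
hours = monthly_rework_hours(50, 20, 0.20, 5)
print(f"{hours:.1f} hours per month")  # 16.7 hours per month
```

Bump the per-claim time to 6 minutes and the same volume costs 20 hours, which is why small per-claim overheads are worth measuring.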

Stretch that across a quarter and you’ve delayed new creation to clean up the past. And this is conservative: five minutes is kind if you’re juggling reviews, Slack pings, and CMS updates. The real bill usually includes context switching and a little frustration tax.

The cascade into QA, publishing, and brand trust

When provenance is weak, QA scores dip, retries spike, and your schedule slips. People start bypassing the system “just this once,” which breaks the system. Readers spot inconsistencies, your support team fields questions, and now this week’s pipeline is competing with last week’s clean-up.

This isn’t theory. I’ve seen it in small teams and in larger orgs. The difference between predictable and chaotic publishing often comes down to whether your pipeline can answer one question quickly: where did this claim come from?

Still repairing drafts by hand? There’s a simpler path. Try Using an Autonomous Content Engine for Always-On Publishing.

The Editorial Friction No One Budgets For

You can feel this pain in your calendar. The 3pm tweak that turns into a 6pm rewrite. The exec review where one stat derails the meeting. The “we’ll fix it post-publish” promise that boomerangs next week. Provenance-first design is about preventing those moments, not adding ceremony.

The 3pm edit that turns into a 3-hour scavenger hunt

You know the drill. One stat, no clear source. You search. You paste a link. Legal wants the exact passage. Now you’re screenshotting PDFs. Design’s waiting on final copy. Multiply this by two, and your afternoon’s gone.

Design for that moment. Put evidence IDs, snippets, and anchors next to the sentence in the editor. Make it a click to open the exact passage. When the source is right there, the “hunt” disappears and the edit takes 30 seconds, not 30 minutes.

When an exec asks, where did this stat come from?

You don’t want to say, “We think it’s from a report.” You want to click “open citation,” show the passage, show the version, and move on. If the live page changed, point to the archived snapshot. If the claim is weak, swap it for one that’s supported.

Provenance lowers the temperature in high-visibility reviews. You’re not defending the text, you’re showing the evidence. For modeling practices that support this, the IPAW 2016 paper on provenance modeling is a useful reference point.

Who carries the risk when sources vanish?

If a link dies, the risk lands on you. Mitigate with version hashes, archived snapshots, and routine link checks tied to your citation layer. When a source changes, flag affected claims and queue a review. Editors decide whether to rephrase, replace, or remove. The pipeline stays accountable without grinding to a halt.
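Flagging affected claims can be as simple as comparing the version each claim was written against with what the KB holds today. This is a sketch with hypothetical names (`BoundClaim`, `claims_needing_review`), and it assumes you can produce a map of evidence ID to current version hash from your KB.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundClaim:
    article_id: str
    sentence: str
    evidence_id: str
    version_hash: str  # version the claim was written against


def claims_needing_review(claims: list[BoundClaim],
                          current_versions: dict[str, str]) -> list[BoundClaim]:
    """Return claims whose cited passage has changed or disappeared."""
    flagged = []
    for claim in claims:
        live_hash = current_versions.get(claim.evidence_id)  # None if the passage is gone
        if live_hash != claim.version_hash:
            flagged.append(claim)
    return flagged
```

The function only builds the review queue. Whether to rephrase, replace, or remove stays an editorial decision.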

I learned this running lean teams. We didn’t have time to chase ghosts. A small amount of structure saved us from a lot of last-minute “where did this come from?” anxiety.

A Practical Architecture for Provenance-First Retrieval

A workable design ties claims to evidence automatically, emits citations with the draft, and blocks publish if support is missing. You don’t need a moonshot, just a reliable flow that makes verification fast and boring. Here’s the pattern I recommend.

Claim to evidence to citation flow, end to end

Start by detecting factual spans during drafting. Light tagging is enough; don’t overengineer. For each span, retrieve granular passages with hybrid search and a re-ranker tuned for entailment and authority. Bind the span to an evidence_id and store a version_hash plus a human-readable snippet.

Emit canonical citations with the draft and require QA to verify that every span has valid support. If a claim fails verification, send it back for remediation automatically. For additional background on industrializing this loop, related industry work highlights similar constraints.
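The flow above can be sketched end to end in a few functions. Everything here is an assumption for illustration: the regex is a deliberately crude stand-in for “light tagging,” `retrieve` is whatever retrieval function you plug in (it returns a dictionary with `evidence_id`, `version_hash`, and `snippet`, or `None`), and the names are mine.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Crude heuristic: a sentence with a digit or a percentage is treated as factual.
FACTUAL_HINT = re.compile(r"\d|percent|%")


@dataclass
class Span:
    text: str
    evidence_id: Optional[str] = None
    version_hash: Optional[str] = None
    snippet: Optional[str] = None


def detect_factual_spans(draft: str) -> list[Span]:
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    return [Span(s) for s in sentences if FACTUAL_HINT.search(s)]


def bind(spans: list[Span], retrieve: Callable[[str], Optional[dict]]) -> None:
    # Attach evidence to each span in place; spans with no hit stay unbound.
    for span in spans:
        hit = retrieve(span.text)
        if hit is not None:
            span.evidence_id = hit["evidence_id"]
            span.version_hash = hit["version_hash"]
            span.snippet = hit["snippet"]


def unsupported(spans: list[Span]) -> list[Span]:
    return [s for s in spans if s.evidence_id is None]


def ready_to_publish(spans: list[Span]) -> bool:
    # The gate: publish is blocked while any factual span lacks evidence.
    return not unsupported(spans)
```

In a real pipeline, whatever `unsupported` returns becomes the remediation queue, and the draft only moves forward once `ready_to_publish` is true.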

Model the KB for provenance, entities and versions

Define canonical entities, versioned documents, and passage-level metadata. Use IDs like org:doc:section:para, and store version_hash, updated_at, anchor text, and snippet. Keep both the display_url and a stable archived_url. This gives you stable references and a clean way to surface citations in the editor.

When content updates, you can maintain the old reference while scheduling a refresh. Editors see exactly what changed and why. It’s a small amount of upfront modeling that pays back every time you edit or audit.

Configure retrieval for precision with healthy recall

Use hybrid retrieval (BM25 + vectors) with filters on entity, section type, and recency. Chunk by semantic boundaries to avoid mid-sentence splits, cap chunk length to reduce drift, and re-rank for entailment, not just similarity. Keep top-k small, then consolidate evidence to prefer diverse sections over redundant hits.
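Here’s a small sketch of the merge-and-consolidate step. I’m assuming reciprocal rank fusion (RRF) to combine the BM25 and vector rankings, which is one common option rather than the only way to do hybrid search, plus a simple one-passage-per-section cap for diversity. Evidence IDs are assumed to follow the org:doc:section:para shape.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of evidence IDs; k=60 is the conventional RRF constant."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, evidence_id in enumerate(ranking, start=1):
            scores[evidence_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(scores, key=lambda eid: (-scores[eid], eid))


def section_of(evidence_id: str) -> str:
    # "org:doc:sec:para" -> "org:doc:sec"
    return evidence_id.rsplit(":", 1)[0]


def diverse_top_k(fused: list[str], top_k: int = 3, per_section: int = 1) -> list[str]:
    """Keep a small top-k, preferring different sections over redundant hits."""
    picked: list[str] = []
    seen: dict[str, int] = defaultdict(int)
    for evidence_id in fused:
        section = section_of(evidence_id)
        if seen[section] < per_section:
            picked.append(evidence_id)
            seen[section] += 1
        if len(picked) == top_k:
            break
    return picked


bm25_hits = ["a:d:s1:1", "a:d:s1:2", "a:d:s2:1"]
vector_hits = ["a:d:s1:2", "a:d:s3:4", "a:d:s1:1"]
fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(diverse_top_k(fused))  # ['a:d:s1:2', 'a:d:s3:4', 'a:d:s2:1']
```

Entity, section-type, and recency filters would run before this step, and the entailment re-rank after it. This piece only covers fusing the two rankings and trimming redundancy.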

You’ll get fewer false positives and cleaner support. If a claim can’t be supported, you’ll find out in drafting, where it’s cheap to fix, instead of in legal, where it’s not.

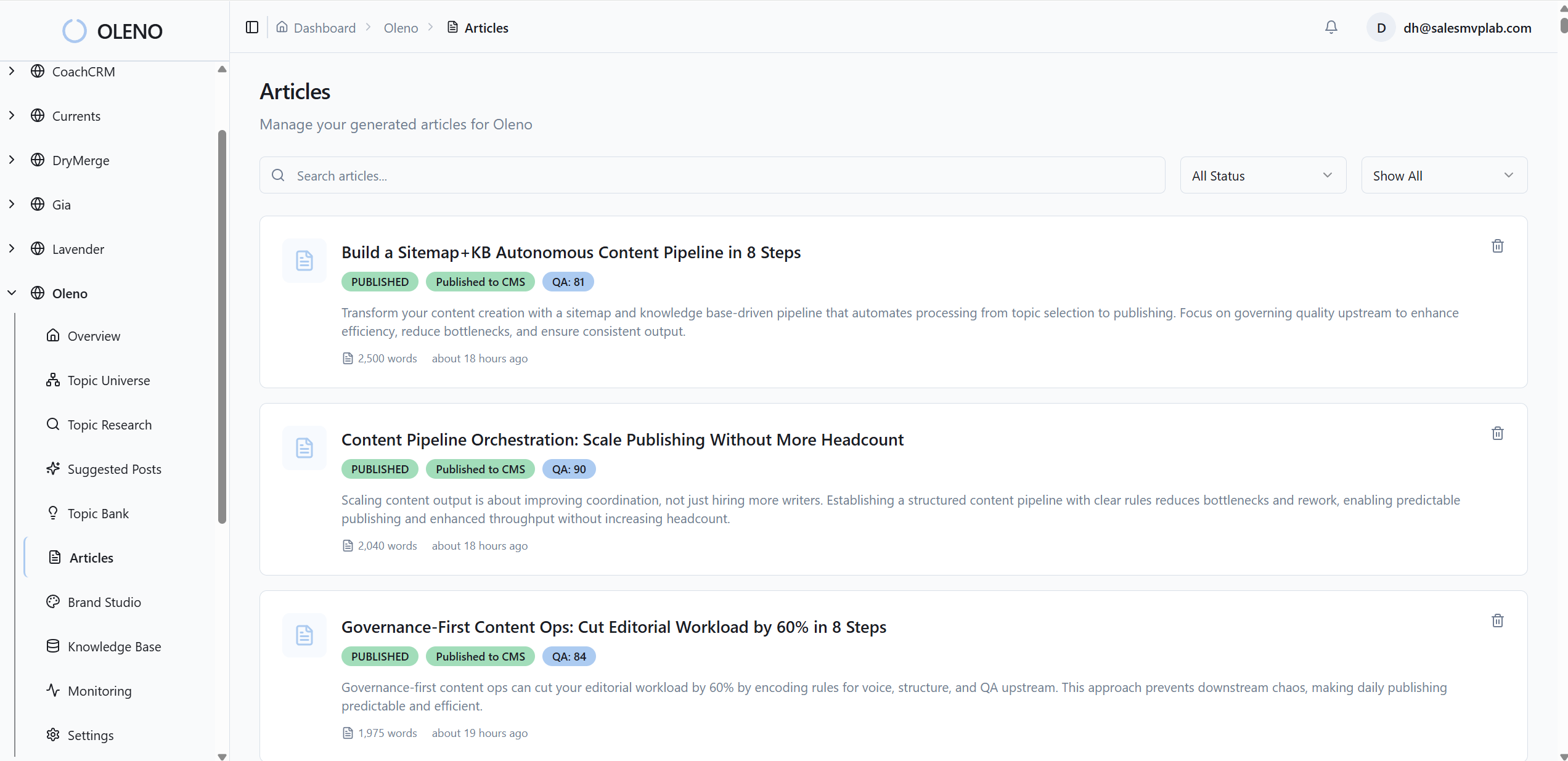

How Oleno Enforces KB-Backed Provenance in Your Pipeline

Provenance-first isn’t a slide. It’s a system choice. Oleno creates drafts grounded in your knowledge base, enforces quality with a QA Gate, and publishes to your CMS on a fixed cadence, so the provenance layer shows up where it matters: in the draft, in QA, and in the final publish.

KB-grounded drafting that limits unverifiable claims

Oleno drafts against your knowledge base by default. When you model entities, versions, and passage-level metadata, those IDs can ride along with factual spans in the draft. The immediate benefit is fewer stray claims. The narrative stays tight because the system writes within the bounds of what can be supported.

I’ve seen teams go from “we think this is right” to “open the evidence” in a week simply by configuring the KB with stable IDs and connecting them to drafting validators. You don’t eliminate judgment. You reduce the wild goose chases.

QA Gate checks that block unverifiable assertions

Oleno’s QA Gate already validates narrative structure, voice, clarity, SEO placement, LLM readability, and knowledge-base grounding. Extend that gate to require an evidence_id and snippet for each factual span. If a claim lacks support, Oleno routes the draft back for remediation and re-tests it automatically.

This is where the rework disappears. Instead of editors playing detective at 3pm, the system prevents unverifiable claims from reaching them in the first place. It’s quality as a gate, not a suggestion.

System logs and version history for audits, plus CMS publishing with stable fields

Oleno maintains internal logs of KB retrieval events, QA scoring, retries, and version history. Put those next to your provenance tokens in an audit view and you can answer “what changed and when” without spreadsheets. When a source updates, you’ll see which articles depend on that version and decide whether to refresh or archive.

Publishing is idempotent, so you can safely re-run updates when sources evolve. Add citation fields (evidence_id, display_url, snippet) to your CMS template and publish them alongside the article. Editors get a clean UI, readers see references, and your system stays synchronized as content and sources change. For the modeling angle behind this, provenance retrieval research aligns with these practices.

Here’s the bottom line. If you’re still hunting sources on deadline, you don’t have a provenance-first pipeline yet. Oleno helps you set it up the right way, then runs it: drafting against your KB, enforcing QA, logging decisions, and publishing with stable citation fields. Want to feel the difference in a week, not a quarter? Try Oleno For Free.

Conclusion

You can keep gluing links onto finished drafts and hoping reviews go smoothly. Or you can design provenance into the system and make trust the default. Model entities and versions. Bind claims to passage-level IDs. Require QA to verify support before publish. When you do, audits get faster, edits get lighter, and publishing gets predictable. That’s the shift: from writing words to running a verifiable content system.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions