QA-Gate Governance: Reduce Content Rework 50% in 90 Days

Rework feels like a writing problem. It isn’t. It’s a system problem wearing a writer’s hat. When rules live in docs and not in code, quality depends on who had coffee and who’s under a deadline. I’ve seen good teams look bad because the workflow let small errors slip through.

We fixed this by treating quality as a gate, not a hope: a pass/fail checkpoint that blocks publishing until the non-negotiables are met. The result wasn’t perfect, but it was consistent. And consistency beats heroic editing every time.

Key Takeaways:

- Move rules from “guidelines” to “gates” so quality is enforced, not requested

- Automate deterministic checks (structure, links, schema, alt text) to cut rework

- Define acceptance criteria tied to outcomes, not vibes

- Track rework rate, QA pass rate, and time-to-publish to prove impact

- Pilot, tune, then enforce pass-to-publish in 90 days

- Use code-based publishing to prevent duplicates and broken layouts

If you’d rather skip theory and see a governed gate in action, you can Try Generating 3 Free Test Articles Now.

Why Rework Persists Without a QA-Gate

Rework persists because most teams rely on guidelines and memory instead of enforceable gates. Guidelines drift, especially under deadlines, and quality becomes negotiable. A QA-Gate turns rules into a pass/fail checkpoint so drafts ship only after criteria such as structure, voice, and schema have been verified.

The System Problem That Looks Like a Writing Problem

The story usually goes like this. You hire a solid writer, give them a brand guide, and expect quality to “just happen.” It does for a week. Then the calendar fills, briefs get rushed, and the guide becomes optional reading. Rework climbs. Not because the writer got worse, but because the system gave them a hundred ways to miss.

When quality is enforced by people instead of code, interpretation creeps in. One editor loves long intros. Another trims aggressively. One person adds schema. Another forgets. The same draft can pass or fail based on who’s busy. That’s not a talent issue. That’s governance missing in action.

Here’s the fix: put rules where they can’t be ignored. Move structure checks, KB grounding, and schema validation into a gate that blocks publishing until they pass. The work still breathes. The floor gets raised.

What Is a QA-Gate in Content, Practically Speaking?

A QA-Gate is a yes/no checkpoint before anything ships. You codify non-negotiables, then block publish until they pass. That includes structural hierarchy, snippet-ready H2 openers, brand tone constraints, KB-grounded claims, verified internal links, JSON-LD schema, visuals, and accessibility.

Think fewer redlines, more guardrails. The gate doesn’t decide if a metaphor sings. It decides if the article is structured to be cited, if claims are grounded, if links are verified, and if alt text exists. Humans keep judgment where it belongs: story, argument, nuance. Machines handle repeatable checks.
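To make the yes/no idea concrete, here’s a minimal sketch in Python, assuming drafts arrive as markdown strings. The names (`run_gate`, `publish`, `EXAMPLE_CHECKS`) and the two toy checks are hypothetical; a real gate would plug in the structure, link, schema, and accessibility checks described above.

```python
from dataclasses import dataclass
from typing import Callable

# A check takes the draft text and returns True (pass) or False (fail).
Check = Callable[[str], bool]


@dataclass
class GateResult:
    passed: bool
    failures: list[str]


def run_gate(draft: str, checks: dict[str, Check]) -> GateResult:
    """Run every check; the gate passes only if all of them pass."""
    failures = [name for name, check in checks.items() if not check(draft)]
    return GateResult(passed=not failures, failures=failures)


def publish(draft: str, checks: dict[str, Check]) -> str:
    """Block publish and name the failed checks, or let the draft through."""
    result = run_gate(draft, checks)
    if not result.passed:
        return "blocked: " + ", ".join(result.failures)
    return "published"


# Hypothetical example checks for illustration only.
EXAMPLE_CHECKS: dict[str, Check] = {
    "has_h2": lambda d: d.startswith("## ") or "\n## " in d,
    "no_todo_markers": lambda d: "TODO" not in d,
}
```

Reporting every failure at once, rather than stopping at the first, is a deliberate choice: the writer fixes everything in one pass instead of discovering problems one round trip at a time.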

Software folks have done this for years with code quality gates. The same idea applies here. If you want the primer, SonarQube’s explanation of quality gates lays out the pass/fail mindset cleanly.

What Happens When Rules Live in Docs, Not Code?

They drift. People interpret rules differently or skip steps when they’re busy. You get inconsistent structure, vague claims, missing schema, and broken internal links. Then the fixes are manual, which eats your week.

When you convert rules to code, the same checks run every time. Structure? Verified. Links? Pulled from a real sitemap, not someone’s memory. Schema? Generated and validated, not guessed. Accessibility? Checked, not hoped for. It’s not glamorous. It is effective.
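Here’s what “pulled from a real sitemap” can look like, as a sketch under a few assumptions: the sitemap is a standard sitemaps.org `urlset` (not a sitemap index), drafts use markdown links, and internal links are identified by a URL prefix you supply. Function names are hypothetical, and this only checks that a URL exists; matching anchor text to page titles would be a separate check.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Markdown links, skipping images (which start with "!").
MD_LINK = re.compile(r"(?<!!)\[([^\]]+)\]\(([^)\s]+)\)")


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Collect every <loc> URL from a sitemaps.org urlset document."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall(".//sm:loc", SITEMAP_NS)
        if loc.text
    }


def unverified_links(draft: str, known: set[str], site_prefix: str) -> list[str]:
    """Return internal links in the draft that are not in the sitemap."""
    bad = []
    for _anchor, url in MD_LINK.findall(draft):
        if url.startswith(site_prefix) and url not in known:
            bad.append(url)
    return bad
```

External links are ignored on purpose here; they need a different policy (allowlists, liveness checks) than internal ones.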

If you want a second perspective, this testing lens is helpful: What quality gates are and why they help. Different domain, same discipline.

The Root Cause You Can Actually Fix

The root cause is the lack of deterministic checks in the pipeline. Opinionated reviews stretch forever; code-based validations give you a predictable floor. Put machines on structure, links, schema, and accessibility; keep humans on narrative, insight, and voice.

Deterministic Checks Replace Opinionated Reviews

You don’t need a committee to verify a heading structure. Or whether a link exists. Or if schema validates. Machines are better at this, and they don’t get tired. Start with high-frequency failure points: internal links from a verified sitemap with exact-match anchors, JSON-LD schema generation, duplicate detection on titles, and banned-terms linting.
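Two of those failure points, duplicate titles and banned terms, are easy to sketch. This assumes a list of existing titles and a banned-terms list are available from somewhere (your CMS, a shared file); the normalization rules and function names are illustrative choices, not a standard.

```python
import re
import unicodedata


def normalize_title(title: str) -> str:
    """Case-fold and strip accents and punctuation so near-identical titles collide."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^\w\s]", "", text.casefold())
    return " ".join(text.split())


def is_duplicate_title(title: str, existing_titles: list[str]) -> bool:
    seen = {normalize_title(t) for t in existing_titles}
    return normalize_title(title) in seen


def banned_terms_found(draft: str, banned: list[str]) -> list[str]:
    """Return banned terms that appear as whole words, ignoring case."""
    return [
        term
        for term in banned
        if re.search(rf"\b{re.escape(term)}\b", draft, flags=re.IGNORECASE)
    ]
```

Whole-word matching avoids flagging a banned term that happens to sit inside a longer, harmless word.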

Then elevate the baseline: snippet-ready openers on every H2, alt text present on all images, canonical fields mapped, and brand tone constraints enforced. Opinion still matters. Just not on whether an anchor text matches a page title.
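A rough sketch of two baseline checks on a markdown draft: every H2 followed by a short prose opener, and every image carrying alt text. “Snippet-ready” is approximated here as “the first non-blank line after the heading is a paragraph of at most 60 words”; that limit and the function names are assumptions for illustration.

```python
import re

IMAGE = re.compile(r"!\[([^\]]*)\]\(([^)\s]+)\)")


def images_missing_alt(draft: str) -> list[str]:
    """Return image sources whose markdown alt text is empty or whitespace."""
    return [src for alt, src in IMAGE.findall(draft) if not alt.strip()]


def h2_without_opener(draft: str, max_words: int = 60) -> list[str]:
    """Return H2 headings not followed by a short prose paragraph."""
    lines = draft.splitlines()
    failing = []
    for i, line in enumerate(lines):
        if not line.startswith("## "):
            continue
        # First non-blank line after the heading, or "" if there is none.
        opener = next((l.strip() for l in lines[i + 1:] if l.strip()), "")
        # Headings, list items, and images don't count as a prose opener.
        is_prose = bool(opener) and not opener.startswith(("#", "-", "*", "!["))
        if not is_prose or len(opener.split()) > max_words:
            failing.append(line[3:].strip())
    return failing
```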

I’ve seen teams drop rework by simply refusing to debate link choices. The rule picked the link. Humans moved on to the story.

Where Should Governance Be Code, Not Guidelines?

Anything repeatable and verifiable belongs in code. That includes structural hierarchy, snippet-ready H2 openers, internal link validation, schema injection, alt text presence, canonical mapping, and duplicate detection. Guidelines should cover judgment: narrative clarity, angle strength, and whether this adds something new.

This split protects your editors from being the rules police. It narrows their job to the work that moves the needle: insight, clarity, and resonance. It also makes handoffs less brittle. The gate enforces the basics so feedback is about ideas, not commas.

How Do You Define Acceptance Criteria That Matter?

Start from outcomes, not aesthetics. Fewer edits. Faster time-to-publish. Lower error rate post-publish. Then write 20–30 checks tied to those outcomes. Examples: information gain above a threshold, internal links must match verified pages, schema validates, brand tone meets constraints, and every image has alt text.

Make the pass threshold explicit. For instance, “scores 85 or above across weighted checks.” Add a rationale for each check so no one argues its existence, only its weight. For more structure inspiration, skim Perforce’s overview of quality gates. The logic translates well.
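The weighted threshold is simple arithmetic. Here’s a sketch assuming each check returns pass/fail and carries a weight; the check names and weights are hypothetical. Tuning `WEIGHTS` changes how much each check counts without changing which checks exist, which is the lever described above.

```python
def weighted_score(results: dict[str, bool], weights: dict[str, float]) -> float:
    """Return 0-100: the share of total check weight earned by passing checks."""
    total = sum(weights.values())
    # A check with no recorded result counts as a fail.
    earned = sum(w for name, w in weights.items() if results.get(name, False))
    return 100 * earned / total


def passes_gate(
    results: dict[str, bool], weights: dict[str, float], threshold: float = 85.0
) -> bool:
    """Pass when the weighted score meets the threshold."""
    return weighted_score(results, weights) >= threshold


# Hypothetical weights for illustration.
WEIGHTS = {"schema_valid": 30, "links_verified": 30, "alt_text": 25, "tone": 15}
```

With these weights, failing only the tone check scores exactly 85 and passes; failing alt text as well drops the score to 60 and blocks the draft.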

The Hidden Costs You Can Measure This Quarter

You can measure rework in hours, days, and missed opportunities. Track rework rate, QA pass rate, and time-to-publish. Those three metrics tell the story. Rework should fall, the first-attempt pass rate should rise, and time-to-publish should compress.

The Rework Tax You Pay Every Week

Let’s pretend you ship 12 articles a month. Each gets two rounds of edits at 45 minutes per round. That’s 18 hours of editing. Add CMS cleanup at 15 minutes per post, another 3 hours, and you’re at 21 hours a month on rework alone. That ignores context switching, which taxes everyone.
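If you want to plug in your own numbers, the calculation is a one-liner. The function name is hypothetical; like the estimate above, it leaves out context switching.

```python
def monthly_rework_hours(
    articles: int, edit_rounds: int, minutes_per_round: int, cms_cleanup_minutes: int
) -> float:
    """Hours per month spent on edit rounds plus CMS cleanup."""
    minutes = articles * (edit_rounds * minutes_per_round + cms_cleanup_minutes)
    return minutes / 60


print(monthly_rework_hours(12, 2, 45, 15))  # 21.0
```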

What would you do with those 21 hours back? Record founder stories. Brief a new cluster. Improve distribution. Rework isn’t just annoying, it’s a budget line hiding in your calendar. A QA-Gate gives you a lever to move it.

Time-To-Publish Slippage And The Opportunity Cost

Publishing delays compound. If your average publish slips from 3 days to 8, you’ve lost five days of discovery and internal momentum per article. Multiply by 12 posts, and that’s 60 article-days of visibility given up in a single month. You can’t buy those days back.
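The same math as a sketch, counting lost visibility in article-days (days per post, summed across posts). The function name is hypothetical.

```python
def visibility_days_lost(posts: int, baseline_days: float, actual_days: float) -> float:
    """Article-days of visibility lost when publishing slips past the baseline."""
    slip = max(0.0, float(actual_days) - float(baseline_days))
    return posts * slip


print(visibility_days_lost(12, 3, 8))  # 60.0
```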

Shortening the path, by removing manual checks and brittle handoffs, shortens the opportunity gap. The work gets into the world when it still feels fresh to the team. That matters more than people admit.

What Metrics Prove Quality Drift?

Track three things weekly:

- Rework rate: percent of drafts needing more than one edit pass

- QA pass rate: percent of drafts that pass all checks on the first attempt

- Time-to-publish: draft-to-live, measured in hours
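The three metrics above can be computed from a simple per-draft record. This sketch assumes you log edit passes, first-attempt QA results, and draft/live timestamps somewhere; the record shape and names are hypothetical. It reports the median time-to-publish, a choice that keeps one stuck article from skewing the week.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class DraftRecord:
    edit_passes: int        # edit passes before the draft went live
    passed_first_qa: bool   # passed every gate check on the first attempt
    drafted_at: datetime    # draft complete
    published_at: datetime  # live


def weekly_metrics(records: list[DraftRecord]) -> dict[str, float]:
    """Compute the three weekly metrics for one batch of drafts."""
    if not records:
        raise ValueError("no drafts recorded for this week")
    n = len(records)
    hours = [(r.published_at - r.drafted_at).total_seconds() / 3600 for r in records]
    return {
        # Share of drafts needing more than one edit pass.
        "rework_rate_pct": 100 * sum(r.edit_passes > 1 for r in records) / n,
        # Share of drafts that passed all checks on the first attempt.
        "qa_pass_rate_pct": 100 * sum(r.passed_first_qa for r in records) / n,
        # Draft-to-live time in hours.
        "median_time_to_publish_h": median(hours),
    }
```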

Trend lines matter more than absolutes. You want rework down, pass rate up, and time compressed. If you’re running gates across a portfolio, note that stage gates are a known discipline in other orgs; see the HHS Stage Gate Reviews practice guide for a governance angle that maps well to content.

If those numbers make you wince, you’re not alone. If you want help reducing them without adding headcount, Try Using An Autonomous Content Engine For Always-On Publishing.

The Human Friction You Feel Every Week

The cost isn’t just time. It’s energy. It’s credibility hits when layout breaks. It’s that knot in your stomach when legal pings you about a claim you didn’t even write. Governance lowers that stress by removing the avoidable mistakes.

The Ping Pong That Burns A Week For Everyone

You know the dance. Draft goes to editor. Comes back with 22 comments. Writer fixes 18, misses 4. Second pass. Then legal flags a term. Then a VP wants a new screenshot. No one’s lazy. You’re paying interest on process debt.

When you put explicit criteria in a gate, most of that ping pong disappears. The first pass is cleaner because the floor is higher. Editors argue the argument, not the scaffolding. Legal gets exactly the terms they pre-approved. You get your week back.

I’ve lived this. At one startup, we moved from “please remember” to “the gate checks it.” The edits went from structural nitpicks to “tighten this argument.” It wasn’t glamorous. It was better.

When A Publish Breaks Layout And Trust With Sales

We shipped a great piece once. CMS mapping was off. Images broke on mobile. Sales stopped linking to the blog for a week because they were worried about quality. That’s not a technical glitch. That’s a brand hit.

Idempotent, verified publishing rules prevent that. Field mapping is consistent. Visuals are embedded with the right metadata. Duplicate publishes are blocked by design. Fewer fire drills. More shipping.

Ship a QA-Gate That Cuts Rework in 90 Days

A 90-day rollout works because you can pilot, tune, then enforce. Start with acceptance criteria. Automate deterministic checks. Wire a draft → QA → publish pipeline. Set roles and SLAs. Measure pass rate, rework, and time-to-publish weekly.

Define Acceptance Criteria, 20 To 30 Checks That Matter

Write criteria that reduce editing. Think outcomes, not preferences. Include structure and snippet-ready H2 openers, information gain thresholds, brand tone compliance, KB-grounded assertions, banned terms, visuals with alt text, JSON-LD presence, canonical mapping, and internal link verification.

Set a pass threshold. For example, “85 or above across weighted checks.” Add a short rationale for each. That one step kills half the debates. It also gives you a lever when you need to tune weights without relitigating the entire system.

Automate The Gate With A Draft → QA → Publish Pipeline

Build a CI-like flow. A draft enters QA. If it fails, the pipeline refines and retries automatically. Keep system logs of inputs, outputs, retrieval events, QA scores, and version history. Those logs are operational, not analytics, and they make retries predictable.
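A minimal sketch of the refine-and-retry loop, assuming you supply a `score` function (for example, the weighted score from earlier) and a `refine` function that revises a failing draft. Both are placeholders, and the log here is just a list of dicts standing in for whatever operational store you use.

```python
from typing import Callable, Optional


def qa_loop(
    draft: str,
    score: Callable[[str], float],
    refine: Callable[[str, float], str],
    threshold: float = 85.0,
    max_attempts: int = 3,
    log: Optional[list] = None,
) -> tuple[str, bool]:
    """Score a draft; if it fails, refine and re-score, up to max_attempts times.

    Returns (final_draft, passed). Every attempt appends the draft version and
    its score to `log`, so retries can be explained later.
    """
    log = [] if log is None else log
    for attempt in range(1, max_attempts + 1):
        s = score(draft)
        log.append({"attempt": attempt, "score": s, "draft": draft})
        if s >= threshold:
            return draft, True
        if attempt < max_attempts:
            draft = refine(draft, s)
    # Out of attempts: hand off to a human rather than publish.
    return draft, False
```

Capping attempts matters: a draft that can’t converge should land in a person’s queue, not loop forever.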

Publishing runs only when links, schema, and quality thresholds pass. Make delivery idempotent so a retry never publishes twice. Map fields once. Prevent duplicates by design. This is where rework drops, not because people try harder, but because the system leaves less to chance.
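One way to make delivery idempotent, sketched under assumptions: posts are keyed by slug, and an in-memory dict stands in for a durable store and the real CMS call. A retry with identical content does nothing; changed content updates the existing post instead of creating a second one. The class and its counters are hypothetical.

```python
import hashlib


class IdempotentPublisher:
    """Create each slug at most once; retries never produce a duplicate post."""

    def __init__(self) -> None:
        self._posts: dict[str, tuple[str, str]] = {}  # slug -> (post_id, content hash)
        self.creates = 0
        self.updates = 0

    def publish(self, slug: str, body: str) -> str:
        digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
        if slug in self._posts:
            post_id, old_digest = self._posts[slug]
            if old_digest != digest:
                # Same post, new content: update in place (CMS update call goes here).
                self.updates += 1
                self._posts[slug] = (post_id, digest)
            # Identical retry: nothing is re-sent.
            return post_id
        # First publish for this slug (CMS create call goes here).
        self.creates += 1
        post_id = f"post-{self.creates}"  # stand-in for the CMS-assigned id
        self._posts[slug] = (post_id, digest)
        return post_id
```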

Plan The 90-Day Rollout With Pilot And Enforcement Checkpoints

Weeks 1–3: pilot on 5–10 articles. Tune criteria and weights. Weeks 4–6: expand to 50% of content. Train the team on new handoffs. Weeks 7–9: enforce pass-to-publish on all net-new posts. Weeks 10–12: include updates and republishing rules.
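If the gate runs in code, the rollout can live there too. Here’s a sketch of one reading of the schedule: weeks 1–6 report failures without blocking, weeks 7–9 block net-new posts, and from week 10 onward updates and republishes are blocked as well. The function name and that interpretation are assumptions.

```python
def gate_blocks_publish(week: int, is_update: bool) -> bool:
    """Whether a failing gate blocks publish in a given rollout week."""
    if week < 1:
        raise ValueError("rollout weeks start at 1")
    if week <= 6:
        # Pilot and expansion: report failures, keep tuning, don't block.
        return False
    if week <= 9:
        # Enforce pass-to-publish on net-new posts only.
        return not is_update
    # Week 10 onward: updates and republishes are gated too.
    return True
```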

Keep it boring and explicit. Make the RACI clear. Keep SLAs tight during the pilot (24 hours max). Add a rollback policy for any post that slips through. Annotate rule changes so you can correlate shifts with pass rates. If you like a governance template, the Queensland Digital Investment framework shows how to make decisions auditable.

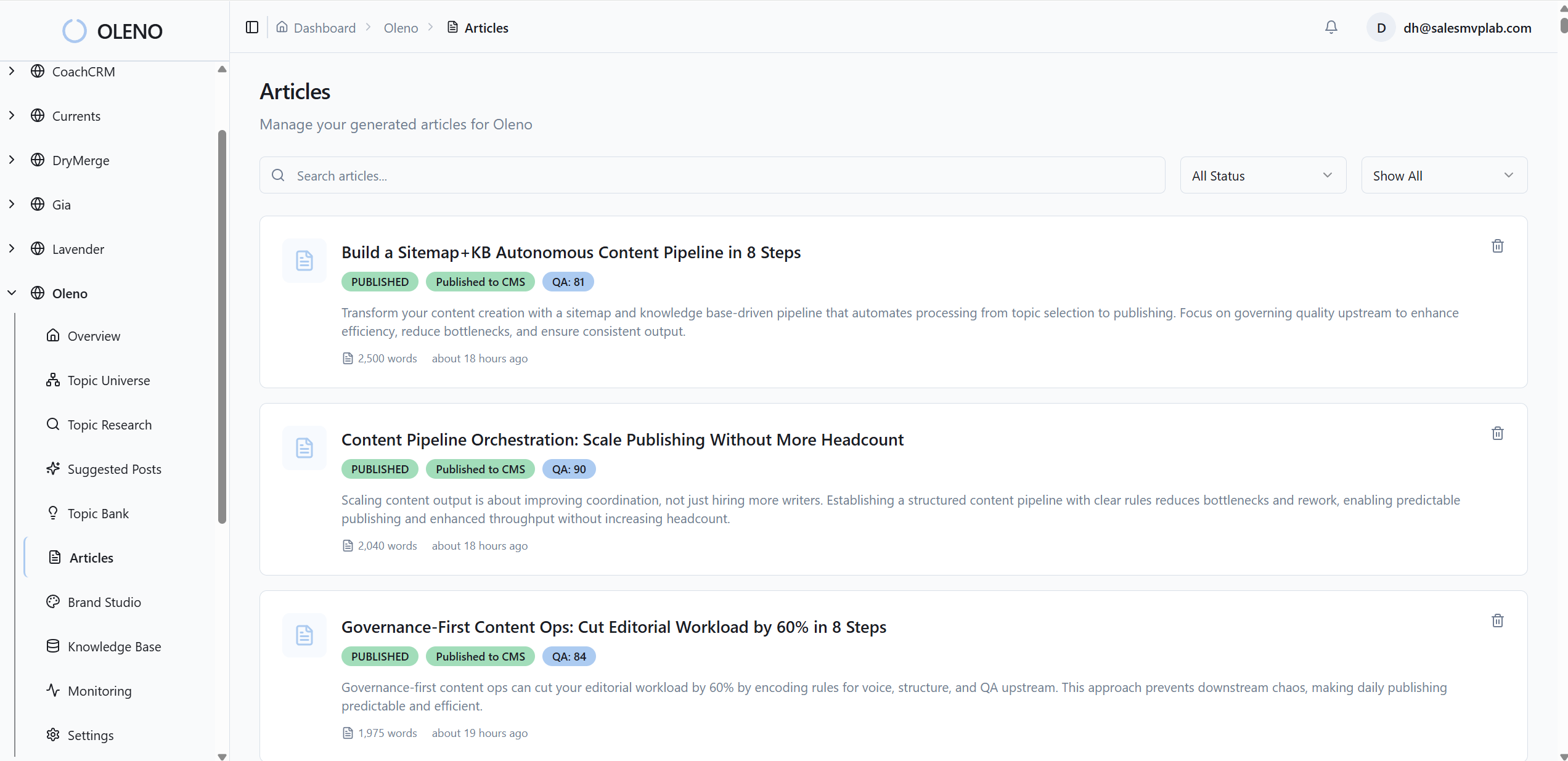

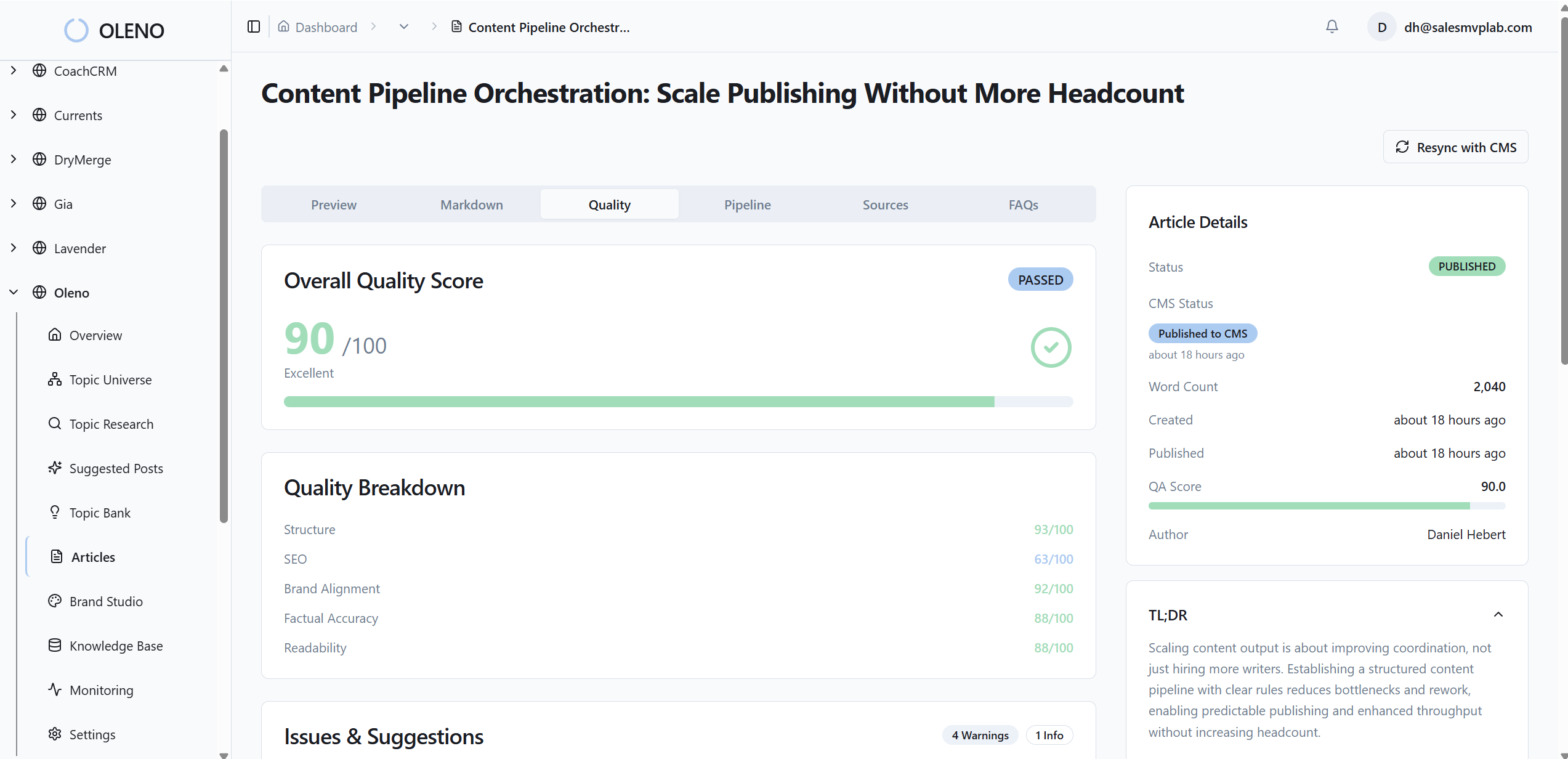

How Oleno Automates QA-Gate Governance End to End

Oleno turns this blueprint into a deterministic system. It evaluates drafts against 80+ criteria, injects verified links and schema, generates brand-consistent visuals, and publishes to your CMS with mapped fields and duplicate protection. It’s not a dashboard. It’s an engine that enforces the floor so your team can focus on the story.

QA-Gate With 80+ Criteria Raises Baseline Quality

Oleno evaluates structure, clarity, brand alignment, information gain, snippet readiness, visuals, links, and schema. Articles don’t publish until thresholds are met. If a draft fails, Oleno refines and re-tests automatically, removing AI-sounding language and normalizing tone so your editors don’t have to.

This lowers the edit ping pong because quality becomes a precondition, not a suggestion. The system keeps operational logs for retries and version history (again, internal only), so work is explainable and consistent. You get fewer manual reviews and a tighter time-to-publish without lowering the bar.

Deterministic Linking, Schema, Visuals, And Idempotent Publishing

Oleno injects internal links from verified sitemaps with exact-match anchors. Fabricated or stale URLs aren’t possible. It generates JSON-LD for Article, FAQ, and BreadcrumbList automatically and validates it before publish. Visual Studio produces brand-consistent hero and inline images, prioritizes solution sections, and writes alt text and filenames for accessibility.
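For a sense of what schema generation plus a pre-publish check involves, here’s a generic sketch of schema.org BreadcrumbList JSON-LD with a bare structural check. It is not Oleno’s implementation; the function names are hypothetical, and the check only confirms shape and positions, not full schema.org validity.

```python
import json


def breadcrumb_jsonld(crumbs: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from (name, url) pairs, root first."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(crumbs, start=1)
        ],
    }
    return json.dumps(data, indent=2)


def basic_breadcrumb_check(jsonld: str) -> bool:
    """Minimal check: parses, has the right type, positions run 1..n."""
    data = json.loads(jsonld)
    items = data.get("itemListElement", [])
    positions = [item.get("position") for item in items]
    return (
        data.get("@type") == "BreadcrumbList"
        and bool(items)
        and positions == list(range(1, len(items) + 1))
    )
```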

On delivery, Oleno converts markdown to CMS-ready HTML, maps fields, embeds visuals and metadata, supports draft or live modes, and prevents duplicate publishing by design. Delivery failures trigger notifications so you can fix issues quickly. Fewer broken layouts. Fewer post-publish cleanups. If you prefer a quality gates mental model, the QualityClouds overview is a useful parallel.

If you’re ready to let the system handle the structure while your team focuses on the story, Try Oleno For Free.

Conclusion

You don’t edit your way out of rework. You govern your way out. Move rules from docs into a gate. Let deterministic checks handle the floor so people can raise the ceiling. Pilot, tune, and enforce within 90 days. And if you want the system to run every day without handoffs, Oleno is built to do exactly that, consistently and on-brand.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. Been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions