What High-Quality AI Content Marketing Actually Looks Like

Your AI content probably isn't failing because the model is weak. You felt it this week when the draft looked polished, sounded fine, and still couldn't be published without you rebuilding the angle, the proof, and half the product claims.

AI content marketing has a weird problem right now. The output is faster, but the work around the output is getting heavier. Marketers are still shaping the brief, checking the product context, fixing brand voice, rewriting generic sections, pulling proof, repurposing for social, and making sure nothing stray gets copied into the CMS.

So the real question isn't whether AI can write. Of course it can. The question is whether your content system makes good editorial decisions before the draft exists. If it doesn't, you're just producing faster slop.

Key Takeaways:

- High-quality AI content requires marketers to stay in the editor's seat.

- The right comparison is platform versus writer, not human versus AI.

- Better prompts won't fix weak inputs, thin product grounding, or missing human review.

- Strong AI content marketing needs a repeatable editorial workflow across brief, draft, review, publish, and repurposing.

- AI content should be created to perform in AI engines as well as traditional search.

- Lean teams scale output by reducing rework, not by removing editorial control.

Why AI Content Marketing Fails Before the Draft

AI content marketing fails when teams treat the model like a cheaper writer instead of building the editorial system around it. The failure usually starts before the draft exists. Weak inputs, missing product context, and unclear review decisions create a polished article that still can't be trusted.

The Model Isn't the Bottleneck Anymore

Most marketers we talk to already use ChatGPT, Claude, Perplexity, or some mix of all three. The first draft is no longer the hard part. Getting something coherent onto the page is easy now. Getting something specific, accurate, useful, and safe to publish under your brand is the part that still costs real time.

A content manager opens a draft at 4:30 PM and sees the usual pattern. The intro is too broad. The product section claims something the product doesn't do. The proof is vague. The social repurposing angle is detached from the blog argument. Nothing is broken enough to throw away, but nothing is strong enough to ship. That is the worst kind of draft because it looks close.

We saw this with our own content work before we built a system around it. The pain wasn't "AI can't write." The pain was repeating the same strategy, voice, product truth, and angle instructions every time we started a new piece. It's like a SaaS release without specs, QA, or a changelog: the output might compile, but nobody should ship it.

Faster Output Can Hide Lower Quality

The dangerous part of AI content marketing is that speed feels like progress. A team that used to publish four articles a month can suddenly produce twenty drafts. The calendar looks healthier. The content pipeline looks busy. Then the hidden costs show up in review.

If every article needs a senior marketer to repair the argument, you're not scaling. You're moving the bottleneck. That senior marketer now spends the week fixing drafts instead of choosing better topics, interviewing customers, sharpening the market point of view, or building distribution. Frankly, we've seen a lot of teams call that "AI adoption" when it's really just rework with nicer formatting.

There is a fair counterpoint here. For low-stakes content, a generic draft might be enough. If the byline doesn't matter and the topic has no product risk, a writing assistant can do the job. B2B SaaS content is different because product accuracy, buyer trust, and pipeline relevance all sit inside the same article. If one breaks, the piece loses credibility fast.

If you're trying to scale the system without turning your editor into a repair shop, request a demo and we'll show you where the review work actually moves.

Generic Inputs Create Generic Content

Generic AI output usually comes from generic source material. The model was given a keyword, a loose persona, maybe a few tone notes, and then asked to produce authority. That doesn't work. Authority comes from what the system knows before it writes.

A quick diagnostic helps. Pull your last five AI-assisted articles and check three things: how many product claims were traceable to a source, how many sections contained a specific buyer scenario, and how many sentences could appear on a competitor's blog without changing anything. If more than 30% of an article's sentences are brand-swappable, the issue isn't prose. The issue is missing editorial inputs.
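The brand-swappable check above can be sketched in a few lines of Python. This is a minimal sketch, assuming a reviewer has already tagged each sentence by hand. The `ReviewedSentence` type, the function names, and the sample sentences are hypothetical and exist only for illustration. Only the 30% threshold comes from the diagnostic itself.

```python
from dataclasses import dataclass

# Threshold from the diagnostic: more than 30% brand-swappable sentences.
SWAPPABLE_THRESHOLD = 0.30


@dataclass
class ReviewedSentence:
    text: str
    brand_swappable: bool  # a reviewer's judgment, not something computed here


def brand_swappable_share(sentences: list[ReviewedSentence]) -> float:
    """Return the fraction of sentences a reviewer marked as brand-swappable."""
    if not sentences:
        return 0.0
    flagged = sum(1 for s in sentences if s.brand_swappable)
    return flagged / len(sentences)


def needs_better_inputs(sentences: list[ReviewedSentence]) -> bool:
    """True when the brand-swappable share is above the 30% threshold."""
    return brand_swappable_share(sentences) > SWAPPABLE_THRESHOLD


# Hypothetical review of a four-sentence excerpt.
article = [
    ReviewedSentence("AI is changing how teams work.", True),
    ReviewedSentence("Content is more important than ever.", True),
    ReviewedSentence("Our review happens at the brief, not after the draft.", False),
    ReviewedSentence("The release note is the source for every feature claim.", False),
]

share = brand_swappable_share(article)
print(f"{share:.0%} brand-swappable, needs better inputs: {needs_better_inputs(article)}")
```

The tagging stays manual on purpose. The script only does the counting, so the judgment about what sounds generic remains with the editor.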

Strange source inputs create strange outputs too. We've seen teams accidentally mix old planning docs, campaign notes, and unrelated buyer language into the same AI workspace. Then lines like "outsource to find what works," "Bring it in-house to scale," and "For many companies, customers and users are two different things" float into places they don't belong. The model didn't fail. The system fed it bad context.

How to Build an Editorial System for AI Content

A strong AI content system separates production work from editorial decisions. The marketer decides the angle, sources, structure, claims, and distribution logic. The AI does the production work between those decisions, which is how lean teams raise output without giving up control.

Diagnose Whether You Have a Writing Tool or a Content System

Start with the review pattern, not the tool category. If your team spends most of its time prompting, copying, pasting, fact-checking, and rebuilding structure, you're using AI as a writer. If your team spends most of its time making upstream decisions and approving structured outputs, you're moving toward a content system.

Ask five questions before adding another tool. Does the process store brand voice across pieces? Does it store product context in a place the AI reads every time? Does human review happen before the brief, before the outline, before the draft, and before publish? Does the workflow include content repurposing after the article ships? Does the system create content for AI search engines and traditional search, or is it still chasing keyword density alone?

The threshold is pretty simple. If a marketer has to re-explain positioning more than once per week, the system is too manual. If a product claim gets checked only after the draft is written, fact grounding is too late. If repurposing starts from a blank page after publish, distribution was never part of the content workflow.

A useful audit looks like this:

- Inputs: list the strategy, product, voice, proof, and buyer sources the AI receives.

- Decisions: mark every point where a human approves or changes direction.

- Outputs: track where the article goes after publish, including social and email.

- Failures: record where rework happens most often.
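The four audit buckets above could be kept as a simple record. The sketch below is one possible shape, not a prescribed format. `WorkflowAudit`, `top_rework_stage`, and the sample values are hypothetical names chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class WorkflowAudit:
    inputs: list[str] = field(default_factory=list)     # sources the AI receives
    decisions: list[str] = field(default_factory=list)  # points where a human approves or redirects
    outputs: list[str] = field(default_factory=list)    # where the article goes after publish
    failures: list[str] = field(default_factory=list)   # stage logged for each piece of rework

    def top_rework_stage(self) -> Optional[str]:
        """Return the stage with the most recorded rework, or None if none is logged."""
        if not self.failures:
            return None
        return Counter(self.failures).most_common(1)[0][0]


# Hypothetical audit of one month of articles.
audit = WorkflowAudit(
    inputs=["strategy doc", "product notes", "voice guide"],
    decisions=["brief approval", "outline approval"],
    outputs=["blog", "LinkedIn", "email"],
    failures=["draft", "draft", "brief"],
)

print(audit.top_rework_stage())
```

Logging one entry per rework incident keeps the record cheap to maintain, and the most frequent stage shows where review should move earlier.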

Put Human Review Before the Expensive Parts

Human review is most useful before the draft becomes expensive to fix. A weak angle caught at the brief stage takes minutes to repair. The same weak angle caught after a 2,000-word draft takes an hour, sometimes more. Every marketer knows this, yet most AI workflows still push review to the end.

The operating rule we like is: review the decision before reviewing the prose. The marketer should shape the research direction, then the brief, then the outline, then the draft edits. That keeps the human focused on judgment. It keeps the AI focused on production. Not glamorous. Very effective.

Some teams resist this because pauses feel slower. That's valid. If you only need a one-off article, pausing four times may feel like overkill. At content cadence, those pauses save time because they prevent the same mistake from multiplying across ten articles. Catching the wrong audience in one brief is annoying. Catching it after ten published posts is a mess.

Ground Product Claims Before the Model Writes

Product grounding has to happen before drafting because factual accuracy is hard to bolt on later. If the AI doesn't know what your product does, what it doesn't do, what changed in the last release, and which claims are approved, it will fill the gap with plausible language. Plausible is the problem.

For B2B SaaS marketers, product context should include release notes, feature boundaries, customer stories, pricing facts, use cases, competitive limits, and approved proof. Not all of that belongs in every article. The system needs access to it so the right pieces can be pulled into the right section. Google's own guidance on creating helpful, reliable content pushes in the same direction: useful content has to be written for people, grounded in real value, and not produced mainly to satisfy search systems.

Run a claim audit on one draft. Highlight every sentence that says your product, market, customers, or competitors do something. Each highlighted sentence needs a source. If you can't point to a product page, help doc, release note, customer story, sales insight, or internal product truth, either rewrite the sentence or remove it. No source, no claim.
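The "no source, no claim" rule can be sketched as a small filter. This is a minimal sketch, assuming a human has already highlighted the claim sentences and noted where each one comes from. The `Claim` type, the `split_by_source` function, and the example sentences are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    sentence: str
    source: Optional[str] = None  # e.g. "release note" or "help doc"; None if untraceable


def split_by_source(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Separate claims with a source from claims that must be rewritten or removed."""
    sourced = [c for c in claims if c.source]
    unsourced = [c for c in claims if not c.source]
    return sourced, unsourced


# Hypothetical highlighted sentences from one draft.
draft_claims = [
    Claim("The product publishes to the CMS.", source="help doc"),
    Claim("Customers cut review time in half."),  # no source recorded
    Claim("The latest release added a new integration.", source="release note"),
]

sourced, unsourced = split_by_source(draft_claims)
for claim in unsourced:
    print(f"Rewrite or remove: {claim.sentence}")
```

An empty source string is treated the same as a missing one, so a claim only passes when someone has actually written down where it came from.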

Design for AI Engines and Search Together

AI content should be created to perform in AI engines as well as traditional search. That means the article needs clean answers, specific claims, strong structure, and original framing. Keyword density still matters, but it isn't enough to win when buyers ask Google AI Overviews, ChatGPT, or Perplexity for a synthesized answer.

The practical shift is small but important. Write sections so they can be quoted. Put the answer in the first sentence after the heading. Use descriptive headings that match real buyer questions. Keep proof close to claims. Avoid keyword-stuffed copy that reads like it was built for a crawler instead of a person. Google's own AI features documentation makes the retrieval layer obvious: AI surfaces depend on content that can be understood, extracted, and shown in response to a query.

We might be wrong on the exact timeline, but we don't think we're wrong on the direction. Search visibility is splitting. Classic SEO still matters. AI search engines now matter too. The teams that win won't write two different versions of the same article. They'll build one stronger article that works for both.

If you're already publishing with AI and want to see where your current workflow is leaking quality, request a demo and we'll walk through the process with you.

Repurpose the Core Idea Without Watering It Down

The distribution workflow breaks when repurposing is treated as a summarization task. A blog post becomes five bland LinkedIn bullets, an email intro, and a few social captions that repeat the title. The core idea gets thinner each time it moves channels. That is how a sharp article turns into forgettable promotion.

A better system keeps the argument intact while changing the shape. The blog post carries the full reasoning. LinkedIn pulls the strongest opinion, the buyer symptom, or the lesson story. Email frames the problem in the reader's day-to-day language. Sales enablement turns the same idea into a conversation starter for reps. Same spine. Different surface.

Use a simple channel test. If the repurposed asset doesn't preserve the article's main claim, it shouldn't ship. If the post needs the reader to click through before it makes sense, rewrite it. If the email sounds like a summary instead of a reason to care, go back to the pain. Content repurposing works when each channel earns attention on its own.
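The channel test can be written down as a short checklist function. This is a sketch under stated assumptions: all three answers are a reviewer's judgment, the summary check is applied to every channel rather than email alone, and `RepurposedAsset` and `channel_test` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class RepurposedAsset:
    channel: str
    preserves_main_claim: bool  # does it carry the article's main claim?
    stands_alone: bool          # does it make sense without clicking through?
    reads_as_summary: bool      # does it summarize instead of giving a reason to care?


def channel_test(asset: RepurposedAsset) -> list[str]:
    """Return the problems a reviewer flagged; an empty list means the asset can ship."""
    problems = []
    if not asset.preserves_main_claim:
        problems.append("does not preserve the main claim: do not ship")
    if not asset.stands_alone:
        problems.append("needs a click-through to make sense: rewrite")
    if asset.reads_as_summary:
        problems.append("reads as a summary: go back to the pain")
    return problems


# Hypothetical LinkedIn post that keeps the claim but leans on the link.
post = RepurposedAsset("LinkedIn", preserves_main_claim=True,
                       stands_alone=False, reads_as_summary=False)

for problem in channel_test(post):
    print(f"{post.channel}: {problem}")
```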

How Oleno Turns Editorial Control Into a Platform

Oleno turns AI content marketing into a governed workflow where marketers make the key decisions and AI handles the production work. The platform stores strategy, voice, product truth, and proof once, then applies that context across research, brief, outline, draft, edit, publish, and repurposing.

Strategy Memory Replaces Re-Prompting

Oleno is built around the idea that the marketer stays in control and the system carries the context. Brand & Voice Memory stores how your content sounds. Positioning & Messaging Control stores what you believe, who you sell to, what you lead with, and which buyers you avoid. Product Truth Library stores the product facts the draft is allowed to use.

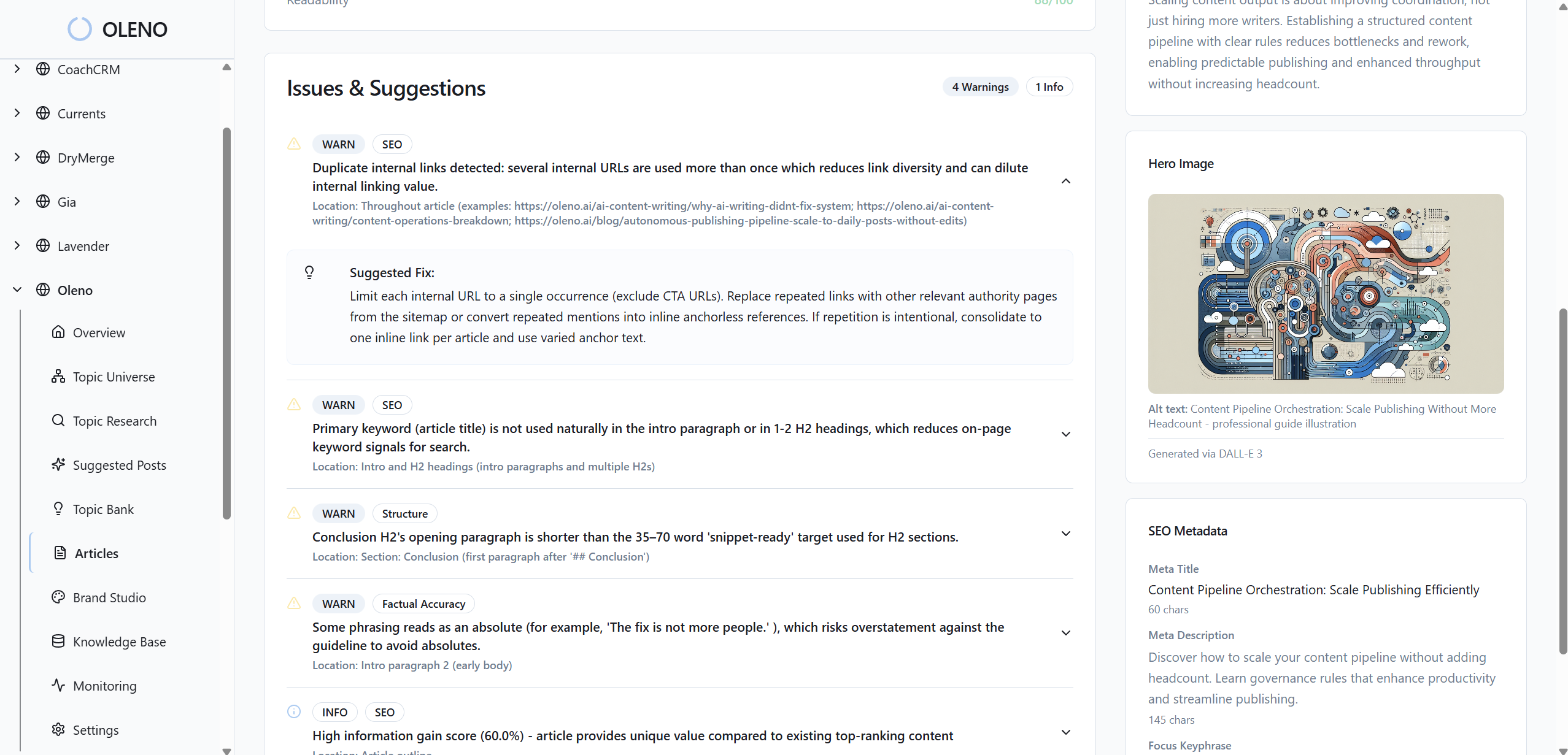

That matters because AI content marketing quality usually breaks in the same places: voice drift, invented product claims, weak proof, and generic positioning. Oleno addresses those failure modes before the draft exists. The marketer shapes the angle at Compose, reviews sources in Research, edits the Brief, approves the Outline, and then reviews the Draft. The AI does the work between those points.

Oleno isn't a fit if you want content running with no human review. Fair enough. Some teams want that. Marketers who care about the byline usually want something else: a system that keeps them making the calls without forcing them to become the production layer.

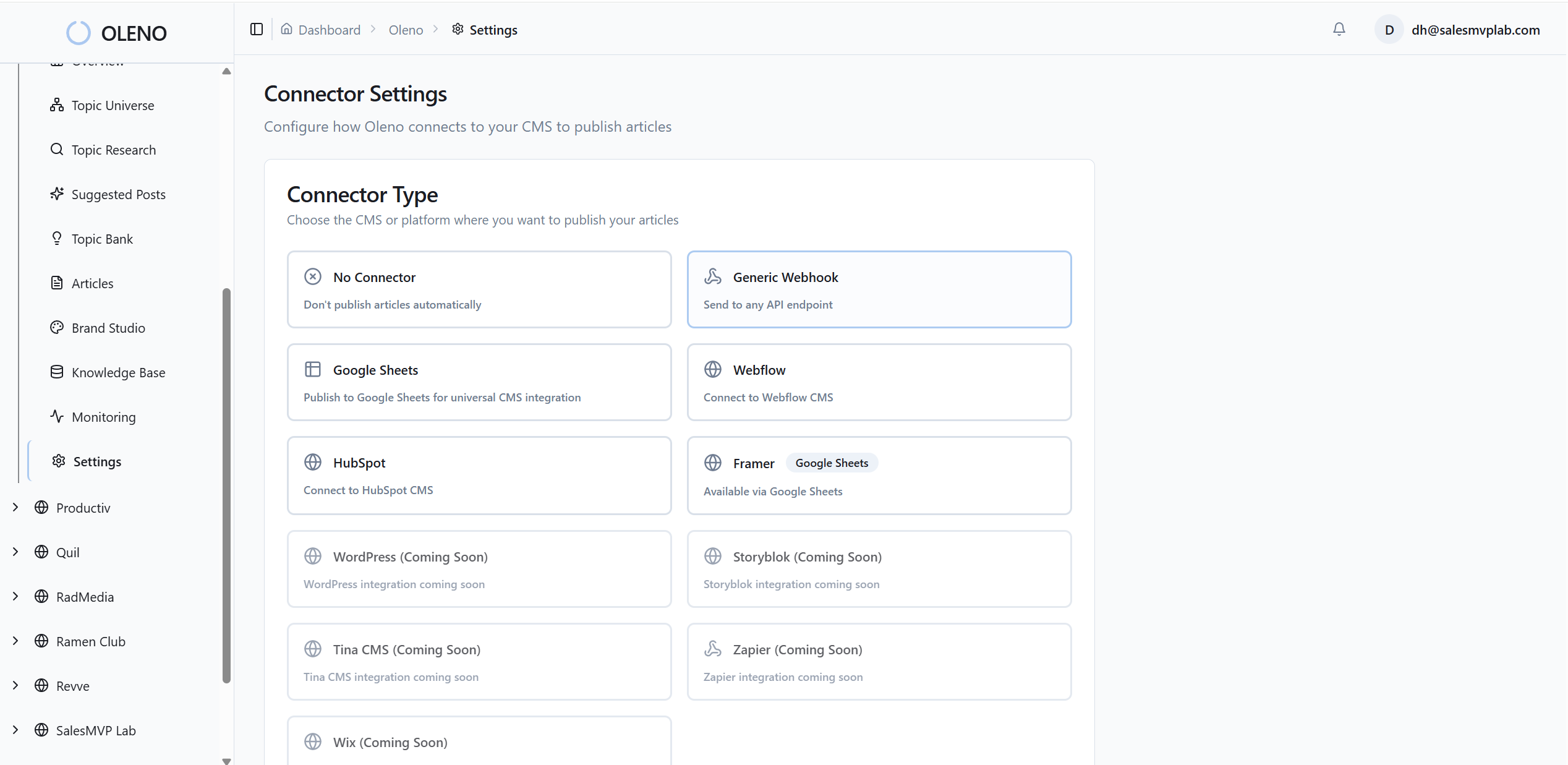

Publishing and QA Stay Inside the Workflow

Oleno also carries the content past the draft. Publish pushes into WordPress, Webflow, Storyblok, HubSpot, Tina, Wix, Framer, Google Sheets, Webhook, and Zapier. It handles CMS publishing details like image rehosting, Yoast metadata mapping for WordPress, Gutenberg figure blocks, and idempotent updates by external_id. The marketer isn't also the integration layer.
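For readers unfamiliar with the term, an idempotent update keyed by an external ID means that publishing the same article twice changes the existing entry instead of creating a duplicate. The sketch below illustrates the general idea with an in-memory store. It is not Oleno's implementation or any CMS's API, and `InMemoryCMS`, `upsert`, and the sample ID are hypothetical.

```python
class InMemoryCMS:
    """Toy stand-in for a CMS, used only to illustrate idempotent updates."""

    def __init__(self) -> None:
        self._entries: dict[str, dict] = {}

    def upsert(self, external_id: str, fields: dict) -> str:
        """Create the entry if the ID is new, otherwise overwrite the existing one."""
        action = "updated" if external_id in self._entries else "created"
        self._entries[external_id] = dict(fields)
        return action

    def count(self) -> int:
        return len(self._entries)


cms = InMemoryCMS()
first = cms.upsert("article-001", {"title": "Draft title"})
second = cms.upsert("article-001", {"title": "Final title"})

print(first, second, cms.count())
```

Publishing the same ID twice leaves one entry with the latest fields, which is the property that makes a retry or a republish safe.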

The Quality Gate scores each draft for factual grounding, voice match, structure, link health, and SEO density before the marketer sees it. Oleno also supports Repurposing to Social, so the long-form article can become LinkedIn and X posts in the same brand voice without turning into a thin summary. That closes the loop between creation and distribution.

The old comparison was human versus AI. Wrong frame. The better comparison is platform versus writer, because the real question is whether your workflow can hold strategy, product accuracy, editorial control, search structure, and publishing across many pieces. If you want to see how Oleno handles that workflow, book a demo.

Build the System Before You Scale

High-quality AI content marketing doesn't come from asking the model to try harder. It comes from deciding where humans must intervene, what product inputs the system must read, how claims get grounded, and how distribution happens after publish. Once those decisions are clear, AI becomes useful in the right way.

The marketers who win with AI won't be the ones who remove themselves from the process. They'll be the ones who remove the repetitive production work while keeping control over the angle, proof, structure, and final judgment. Build that system first. Then scale.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions