What to Look for in Content Execution Without Management Overhead

Growth-stage SaaS teams adopt new software all the time, but they rarely adopt tools that create a new layer of management work. If your team has 1 to 3 marketers, you've probably felt this pain this week: content needs to ship, strategy is clear in your head, and somehow the draft still comes back wrong.

That gap is why growth-stage SaaS teams adopt new content systems in the first place. Not because they want more software. Because they can't keep burning hours on frustrating rework, endless context-setting, and review cycles that make every article feel custom-built from scratch.

You can make the wrong call here pretty easily. A lot of content tools look useful in a demo, then quietly push more coordination work back onto the Head of Marketing. And for a lean team, that's the part that hurts most. You're not buying software in a vacuum. You're buying back focus, or you're adding another headache.

Key Takeaways:

- Growth-stage SaaS teams usually adopt a content platform when the cost of review cycles starts crowding out strategy work.

- If your team needs a manager to constantly translate positioning into briefs, prompts, and edits, you don't have a repeatable system yet.

- A useful evaluation criterion is simple: can the system reduce handoffs within 30 days, without requiring a new content manager to run it?

- The real comparison isn't AI tool versus agency. It's whether either option can hold strategy, voice, and product truth steady across repeated output.

- Management overhead often shows up as hidden labor: extra approvals, more rewrite rounds, and founder or marketing lead time getting pulled back into execution.

Why Lean SaaS Teams Start Looking For A Different Content System

Growth-stage SaaS teams start looking for a different content system when content becomes a pipeline dependency instead of a side project. The issue usually isn't a total lack of ideas. It's that execution keeps breaking somewhere between strategy and the finished draft. That break creates delay, rework, and a lot more management than a small team can afford.

I've seen this pattern before in different forms. Back when I was the sole marketer at a SaaS company, I could write fast because all the context lived in my head. As soon as the team grew, that changed. The writer didn't have the same product nuance, I had less time, and every piece needed more explaining than it should have. Sound familiar?

A lot of buyers frame the problem as output. They say they need more content, more writers, more throughput. I don't think that's quite right. The root issue is usually translation loss. Strategy lives in founder calls, sales notes, positioning docs, product launches, and random Slack threads. Then someone downstream has to reconstruct all of it into publishable content.

That's where the Editing Tax shows up. One draft comes back generic. Another gets the facts mostly right but misses the buying triggers. A third sounds polished, but not like your company. None of these failures look massive on their own. Stack them across 8 or 10 assets in a month, and now your Head of Marketing is doing editor, product marketer, and quality control work at the same time.

Let's pretend a marketing lead spends 45 minutes briefing, 60 minutes editing, and 20 minutes chasing approval per asset. At 12 pieces a month, that's 25 hours gone before you even count planning. That's not a content problem anymore. That's a management load problem.
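If you'd rather run that math on your own numbers, here's a minimal sketch. The function name and the task labels are made up for illustration; the minutes and the 12-asset volume are the same pretend figures used above.

```python
def monthly_management_hours(minutes_per_asset, assets_per_month):
    """Hours per month spent on per-asset management tasks (not writing)."""
    return sum(minutes_per_asset.values()) * assets_per_month / 60


# Pretend figures from the example above; swap in your own.
per_asset = {"briefing": 45, "editing": 60, "approval_chasing": 20}
hours = monthly_management_hours(per_asset, assets_per_month=12)
print(f"{hours:.1f} hours per month")  # 25.0 hours per month
```

Change any one input and you can see which task is eating the most time before you look at a single tool.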

If you want to see whether Oleno fits your situation, the fastest route is to request a demo and pressure-test it against your current review process, not just the draft quality.

What Actually Matters When You Evaluate A Platform Like This

The right evaluation criteria for a lean SaaS team are operational, not cosmetic. You need to know whether the system reduces coordination, preserves strategic context, and produces work that doesn't boomerang back to leadership for cleanup. Fancy output in a sample draft matters less than repeatability across 20 drafts.

Strategic context has to survive the handoff

A lot of platforms look fine when they're generating one polished sample. The harder question is whether your strategy survives repeated use. Positioning, audience nuance, product truth, differentiation, and use case focus all have to stay intact after the tenth article, not just the first.

I think this is where a lot of teams misread the category. They compare writer quality, or prompt quality, when they should be comparing context retention. If the system requires you to restate your positioning every time, it isn't really carrying the load. You're still the operating system.

A useful rule here is the 80% Context Test. If a new campaign or article still needs you to manually inject more than 20% of the strategic context each time, management overhead hasn't really gone down. It's just moved around.

For background on how this problem shows up across content operations, see the Content Marketing Institute's research on process maturity and performance. The breakdown it documents in manual workflows is similar to what many teams see.

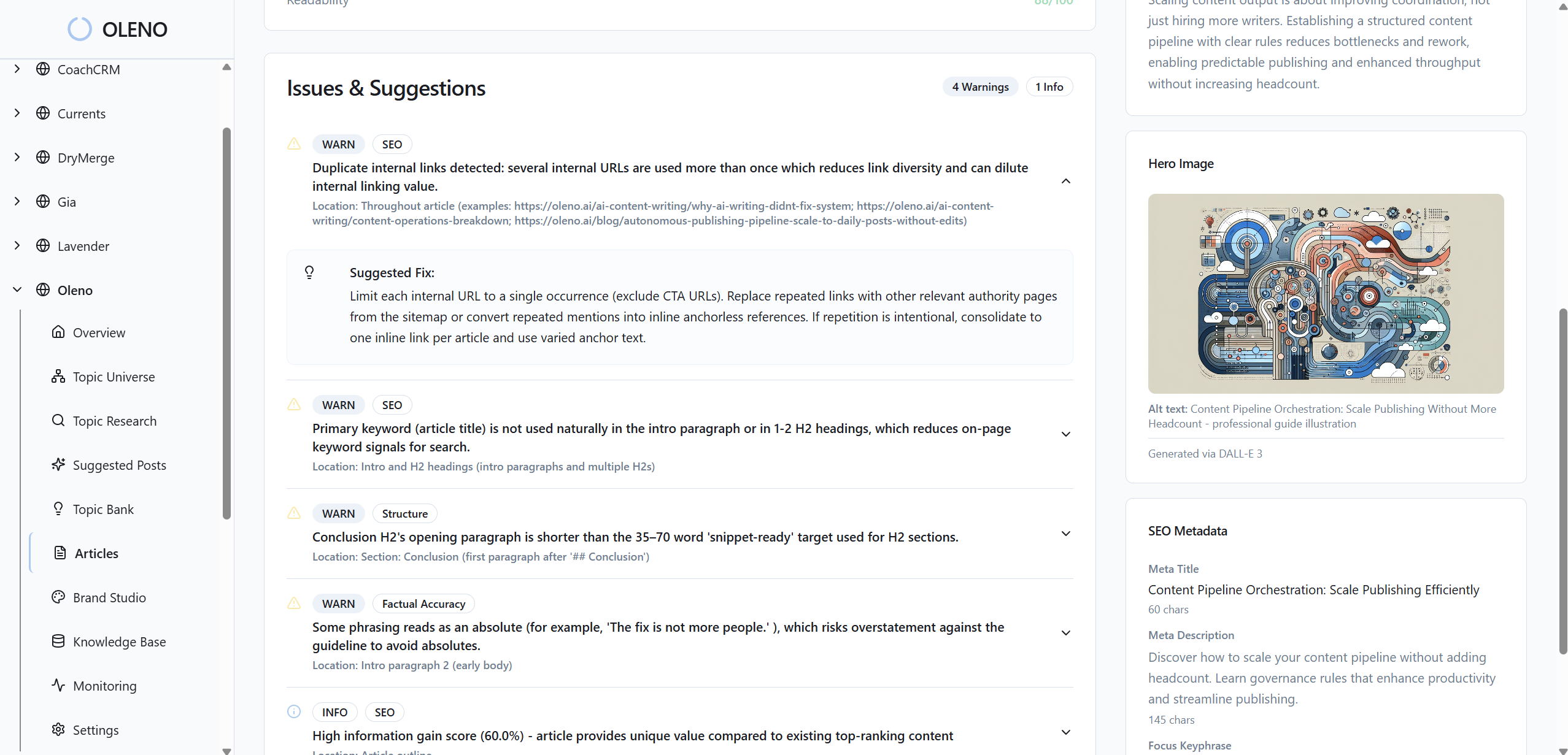

Draft quality needs to reduce review cycles, not just impress in isolation

Draft quality matters because poor first drafts create downstream labor. That sounds obvious, but buyers still get distracted by output that reads smooth while missing key substance. A nice-sounding article that gets product details wrong is still expensive.

The frame I like is the Slop Test. Can a draft pass basic scrutiny from someone close to the business without triggering a rewrite spiral? If not, the tool may generate words quickly, but it won't reduce management work.

There's a fair counterpoint here. Some teams are happy to use rough first drafts and polish them manually. That's valid, especially if they have a strong editor and low volume. But if you're a growth-stage SaaS team trying to build a repeatable engine, rough output becomes a tax pretty fast.

So ask a blunt question during evaluation: does this reduce review rounds from three to one, or does it just move the heavy lift into editing? That's the actual buying question.

The system should fit a 1 to 3 person team

Small marketing teams don't need more moving parts. They need fewer. That's why usability in this category isn't about pretty dashboards. It's about how much ongoing supervision the system requires.

Picture a Head of Marketing on a Tuesday. Pipeline review in the morning. Product launch prep after lunch. Paid performance check-in at 3. If the content platform needs babysitting between all that, adoption won't stick. It may get used for a month. Then it becomes shelfware.

My rule of thumb is the Weekly Touch Threshold. If the platform needs more than 2 hours a week of management just to keep content moving, it's probably a poor fit for a lean team. Some setup work is fine. Ongoing dependency is where things get expensive.

For buyers comparing software adoption risk more broadly, Gartner has been pointing at the same issue for years: tools fail internally when operational complexity outpaces team capacity.

How To Evaluate Whether Oleno Fits Your Team

You can evaluate Oleno without turning the process into a six-week buying project. The cleanest path is to test whether it reduces the three most expensive failures in your current setup: context loss, draft rework, and execution management. If those don't improve, nothing else matters much.

Start with a workflow audit before you look at output

Before you judge any platform, map how content gets made today. Count the handoffs. Count the review rounds. Count how many times someone has to explain the same positioning or product detail again.

Most teams skip this. Then they compare tools on surface impressions.

Use what I call the Handoff Count method:

- List every person involved from topic idea to publish.

- Mark every place where context has to be restated.

- Track how many review loops happen on a normal asset.

- Add up total time spent coordinating, not just writing.

If you see 5 or more handoffs on a standard article, that's a strong sign the system is management-heavy already. A new platform should cut that number, not mask it.
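Here's one rough way to tally a Handoff Count audit. Treat it as a sketch under my own assumptions: the `WorkflowStep` fields, the `audit` function, and the sample steps are all hypothetical, and I'm counting a handoff as any point where the work changes owner between consecutive steps. Only the 5-handoff threshold comes from the rule above.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStep:
    owner: str
    context_restated: bool      # did someone re-explain positioning or product detail?
    is_review_loop: bool
    coordination_minutes: int   # time spent coordinating, not writing


def audit(steps):
    # Assumption: a handoff is any change of owner between consecutive steps.
    handoffs = sum(1 for prev, cur in zip(steps, steps[1:]) if prev.owner != cur.owner)
    return {
        "people": len({s.owner for s in steps}),
        "handoffs": handoffs,
        "context_restatements": sum(s.context_restated for s in steps),
        "review_loops": sum(s.is_review_loop for s in steps),
        "coordination_hours": sum(s.coordination_minutes for s in steps) / 60,
        "management_heavy": handoffs >= 5,
    }


# Hypothetical path for one standard article, from topic idea to publish.
steps = [
    WorkflowStep("marketing lead", False, False, 15),  # topic idea
    WorkflowStep("writer", True, False, 45),           # brief and draft
    WorkflowStep("marketing lead", False, True, 60),   # first review
    WorkflowStep("writer", True, False, 20),           # revisions
    WorkflowStep("founder", True, True, 30),           # strategic review
    WorkflowStep("marketing lead", False, True, 30),   # final pass and publish
]

result = audit(steps)
print(result["handoffs"], result["management_heavy"])  # 5 True
```

Three people, five handoffs, three restatements of context. That's a small team, and it's already over the line.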

Run a live test with one real use case

Don't evaluate on generic prompts. Use a real launch, a real comparison article, or a real buyer enablement page your team actually needs. Growth-stage SaaS teams adopt platforms based on whether they work under live conditions, not sandbox conditions.

This matters more than most vendors admit. A fake sample can look clean because the constraints are clean. Real work isn't. Real work includes half-finished messaging, product nuance, sales objections, and stakeholders who all care about different details.

A good test set usually includes:

- one product or feature launch asset

- one comparison or competitive asset

- one lower-funnel buyer piece

- one piece that would normally need heavy editing

One thing worth watching closely: whether the second and third outputs get stronger because the system holds context better, or whether every new asset starts from zero again.

If you want to run that test against your own backlog, you can request a demo and use a live campaign instead of a made-up sample set.

Score for management reduction, not feature volume

Feature lists are where software evaluations go sideways. Buyers start tallying capabilities that may never matter in practice. I prefer a simpler scorecard.

Use the 4-Part Lean Team Score:

| Criteria | Question | Pass Signal |
|---|---|---|
| Context Retention | Does strategy carry into output without repeated manual setup? | Team restates less each cycle |
| Draft Quality | Does first-pass output reduce edit time? | Review rounds drop within first month |
| Workflow Load | Does the system reduce coordination work? | Fewer handoffs and approvals |
| Team Fit | Can a small team run it without adding a manager? | Under 2 hours weekly oversight |

Could you add more criteria? Sure. Security, procurement, integration fit, and internal reporting may matter too. But for this specific buying decision, those four questions usually expose whether the tool is a fit or a future headache.
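If you want the 4-Part Lean Team Score to produce a simple pass count, something like this would do. It's a sketch, not a formal scoring model: the dictionary keys and sample readings are hypothetical, and I've simplified the Workflow Load signal to handoffs only. The under-2-hours check comes straight from the Team Fit row.

```python
CRITERIA = ("context_retention", "draft_quality", "workflow_load", "team_fit")


def lean_team_score(observed):
    """Return (number of criteria passed, list of criteria that failed)."""
    signals = {
        "context_retention": observed["restatements_now"] < observed["restatements_before"],
        "draft_quality": observed["review_rounds_now"] < observed["review_rounds_before"],
        # Simplification: approvals are left out, only handoffs are compared.
        "workflow_load": observed["handoffs_now"] < observed["handoffs_before"],
        "team_fit": observed["weekly_oversight_hours"] < 2,
    }
    failed = [name for name in CRITERIA if not signals[name]]
    return len(CRITERIA) - len(failed), failed


# Hypothetical readings taken before the trial and one month in.
observed = {
    "restatements_before": 4, "restatements_now": 2,
    "review_rounds_before": 3, "review_rounds_now": 1,
    "handoffs_before": 6, "handoffs_now": 6,
    "weekly_oversight_hours": 1.5,
}

passed, failed = lean_team_score(observed)
print(passed, failed)  # 3 ['workflow_load']
```

A result like this one is useful because it tells you where to push in the next conversation: drafts got better, but the coordination work didn't shrink.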

For a useful outside benchmark on software evaluation discipline, the procurement guidance from Harvard Business Review on measuring hidden operational costs is worth a read.

Common Buying Mistakes That Add More Overhead

The biggest buying mistakes happen when teams evaluate content software as if they were buying a writing assistant, not an execution system. That leads them to overweight surface-level output and underweight workflow impact. Then six months later, the same Head of Marketing is still stuck in review hell, just with a new login.

Buyers often mistake speed for leverage

Fast draft generation can look like leverage. Sometimes it is. But speed without context control usually creates faster mistakes, not better execution.

I've seen teams fall into this because the first demo feels like relief. Content appears quickly. The blank page problem goes away. Then the real work starts. They still have to correct positioning, fix examples, tighten claims, and remove generic phrasing. So the writer went faster, but the manager got busier.

That's the Speed Trap. If draft time drops by 70% but edit time doubles, your total operating burden may not improve much. Maybe it still helps a little. Maybe not. You need to measure the full chain.
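To see why the full chain matters, here's a quick sketch of the Speed Trap math. The baseline minutes are hypothetical and the function name is mine; the only inputs taken from the text are the 70% drop in draft time and the doubling of edit time.

```python
def burden_change(draft_minutes, edit_minutes, draft_factor, edit_factor):
    """Fractional change in total time per asset after a tool is adopted."""
    before = draft_minutes + edit_minutes
    after = draft_minutes * draft_factor + edit_minutes * edit_factor
    return (after - before) / before


# Draft time drops by 70% (factor 0.30), edit time doubles (factor 2).
# Baseline minutes are made up to show two different starting mixes.
draft_heavy = burden_change(draft_minutes=120, edit_minutes=60, draft_factor=0.30, edit_factor=2)
edit_heavy = burden_change(draft_minutes=60, edit_minutes=90, draft_factor=0.30, edit_factor=2)

print(f"{draft_heavy:+.0%}")  # -13%
print(f"{edit_heavy:+.0%}")   # +32%
```

Same tool, same speedup. If most of your time was already going into editing, the total gets worse, and the extra minutes land on the manager.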

Agency replacement logic can be too narrow

A lot of teams buy with one question in mind: can this replace our agency or freelancers? Fair question. Budget pressure is real.

But that lens can be too narrow. The deeper issue is whether the system gives the in-house team a repeatable way to produce demand-gen content without constant translation and oversight. Replacing outside spend is nice. Reducing internal management drag is often the bigger win.

And to be fair, agencies still make sense in some scenarios. If you're early, unclear on positioning, or need big campaign concepts, outside help can be useful. But once strategy is mostly clear and execution is the bottleneck, paying for more external production without fixing the context gap tends to recreate the same problem in a different shape.

Teams underrate the cost of executive review time

This one gets ignored a lot because nobody books it as line-item spend. Founder edits. VP review. Product marketing cleanup. Marketing lead rewrites. It all feels normal, so nobody calls it overhead.

But it is.

Let's pretend your founder spends 30 minutes reviewing each strategic asset, and your Head of Marketing spends 75 minutes getting it into final shape. Across 10 pieces, that's more than 17 hours a month from two expensive people. If the platform doesn't pull that number down, the business case gets shaky.

That hidden cost is why some teams adopt Oleno. Not because they want more content software. Because they want leadership time back.

A Simple Framework To Decide If Oleno Is Worth It

A useful decision framework should tell you when to buy, when to wait, and what evidence counts. You don't need a giant spreadsheet for that. You need a few hard checks that keep the decision honest.

The 3-Bucket test makes the decision clearer

I like putting buyers into three buckets:

| Bucket | What You See | Likely Decision |
|---|---|---|
| Not Ready | Positioning still changes every month, low content volume, no defined workflow | Wait and tighten strategy first |
| Ready But Fragile | Clear strategy, weak execution, heavy review burden, small team | Strong fit to evaluate now |
| Operationally Mature | Documented systems, dedicated content ops, low review drag already | Evaluate for efficiency, not rescue |

This works because it separates category fit from timing. Not every growth-stage SaaS team should buy now. Some should wait. That's a healthy answer, and I think buyers trust content more when it admits that.

If you're in the middle bucket, that's usually where the strongest case exists. Strategy is clear enough to encode. Volume pressure is real. And management overhead has started to choke execution.

The 30-60-90 rule keeps evaluation grounded

Use a 30-60-90 lens during the buying process:

- In 30 days, you should know whether setup reduces context repetition.

- In 60 days, you should know whether review cycles are shrinking.

- In 90 days, you should know whether output consistency improves without more manager oversight.

If none of those move, something is off. Maybe it's the platform. Maybe it's internal process. Maybe the team isn't ready yet. But at least you'll know quickly.

I wouldn't overcomplicate this with twenty KPIs. Start with four:

- handoffs per asset

- average review rounds

- manager hours per month on content cleanup

- number of publishable assets shipped

That's enough to make a grown-up buying decision.
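If you track those four numbers at each checkpoint, a few lines of code will tell you which ones actually moved. This is a sketch with made-up readings; the key names and the `moved` helper are hypothetical, and I'm assuming lower is better for everything except assets shipped.

```python
# Hypothetical readings at day 0, 30, 60, and 90; replace with your own tracking data.
checkpoints = {
    0:  {"handoffs_per_asset": 6, "avg_review_rounds": 3.0, "manager_cleanup_hours": 25, "assets_shipped": 8},
    30: {"handoffs_per_asset": 5, "avg_review_rounds": 3.0, "manager_cleanup_hours": 22, "assets_shipped": 8},
    60: {"handoffs_per_asset": 4, "avg_review_rounds": 2.0, "manager_cleanup_hours": 15, "assets_shipped": 10},
    90: {"handoffs_per_asset": 3, "avg_review_rounds": 1.5, "manager_cleanup_hours": 10, "assets_shipped": 12},
}

# Assumption: these three should go down, while assets shipped should go up.
LOWER_IS_BETTER = {"handoffs_per_asset", "avg_review_rounds", "manager_cleanup_hours"}


def moved(baseline, current):
    """Return the KPIs that improved relative to the baseline."""
    improved = []
    for kpi, start in baseline.items():
        now = current[kpi]
        better = now < start if kpi in LOWER_IS_BETTER else now > start
        if better:
            improved.append(kpi)
    return improved


for day in (30, 60, 90):
    print(day, moved(checkpoints[0], checkpoints[day]))
```

An empty list at day 90 is the "something is off" signal. A list that fills in over time is what a working rollout tends to look like.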

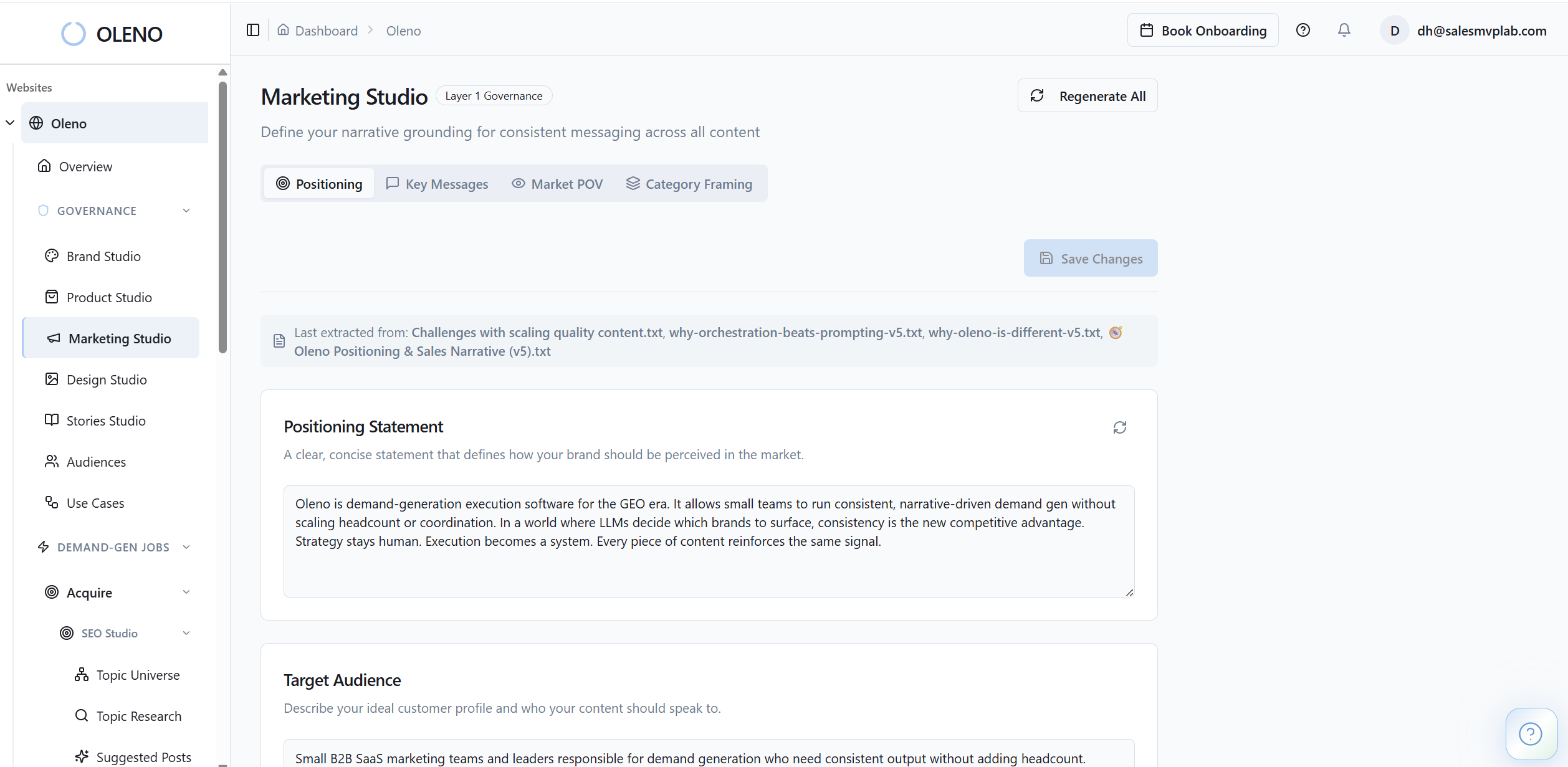

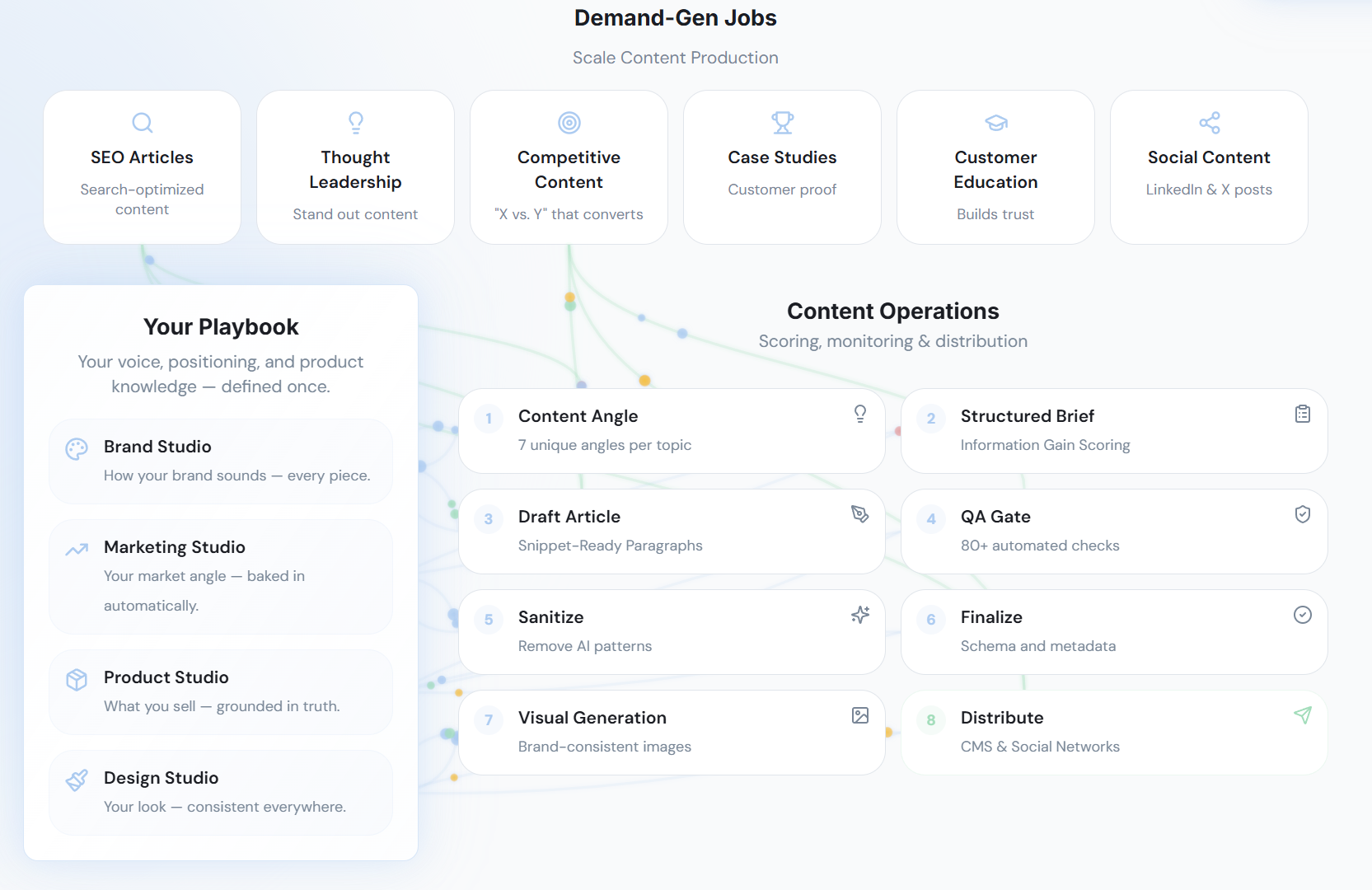

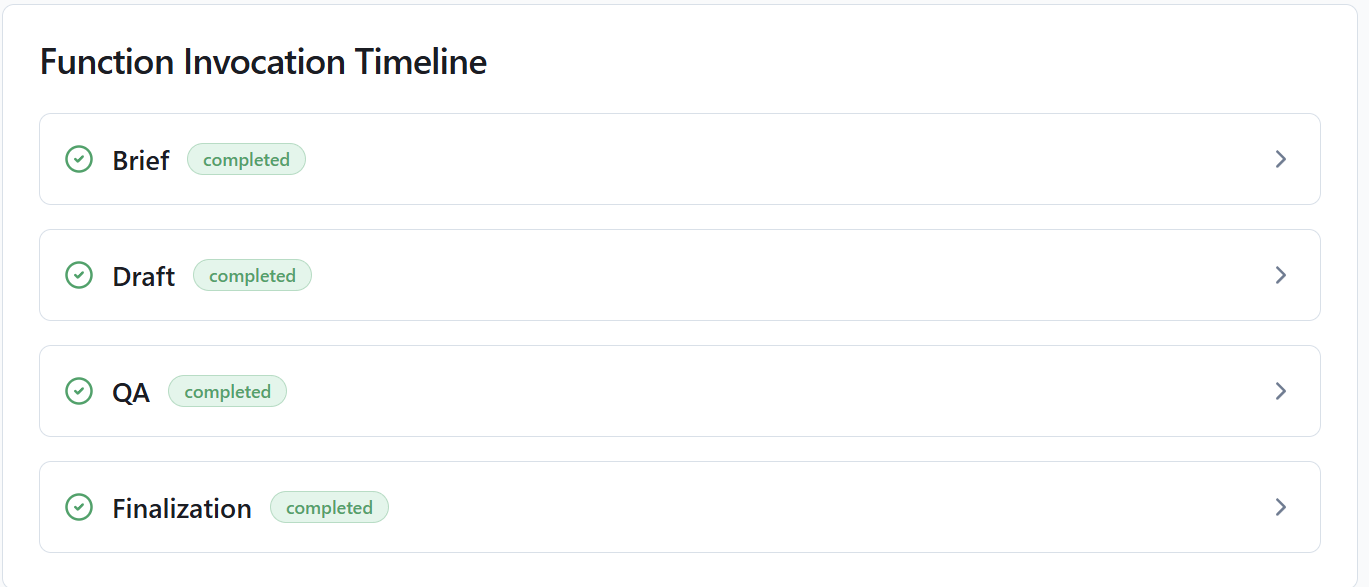

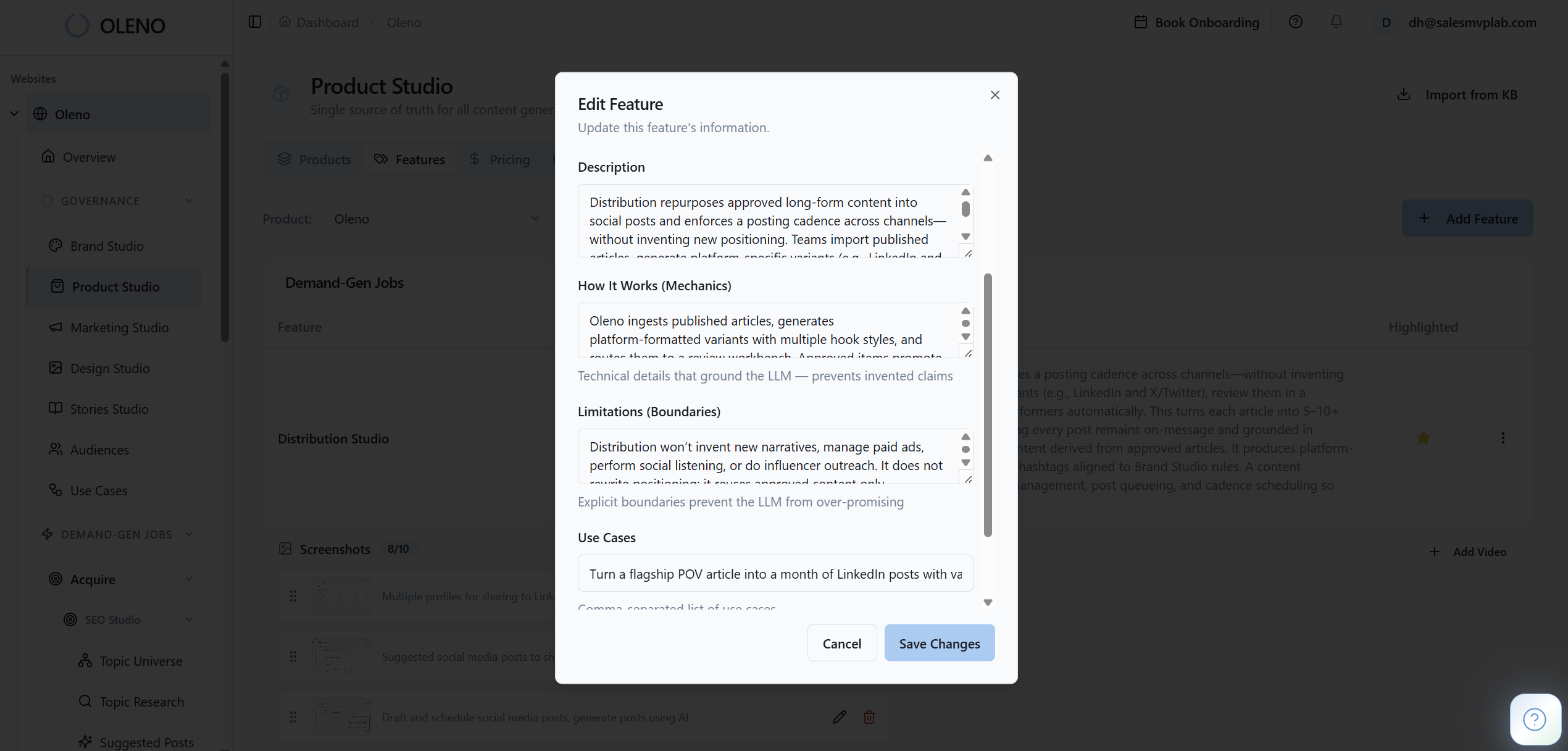

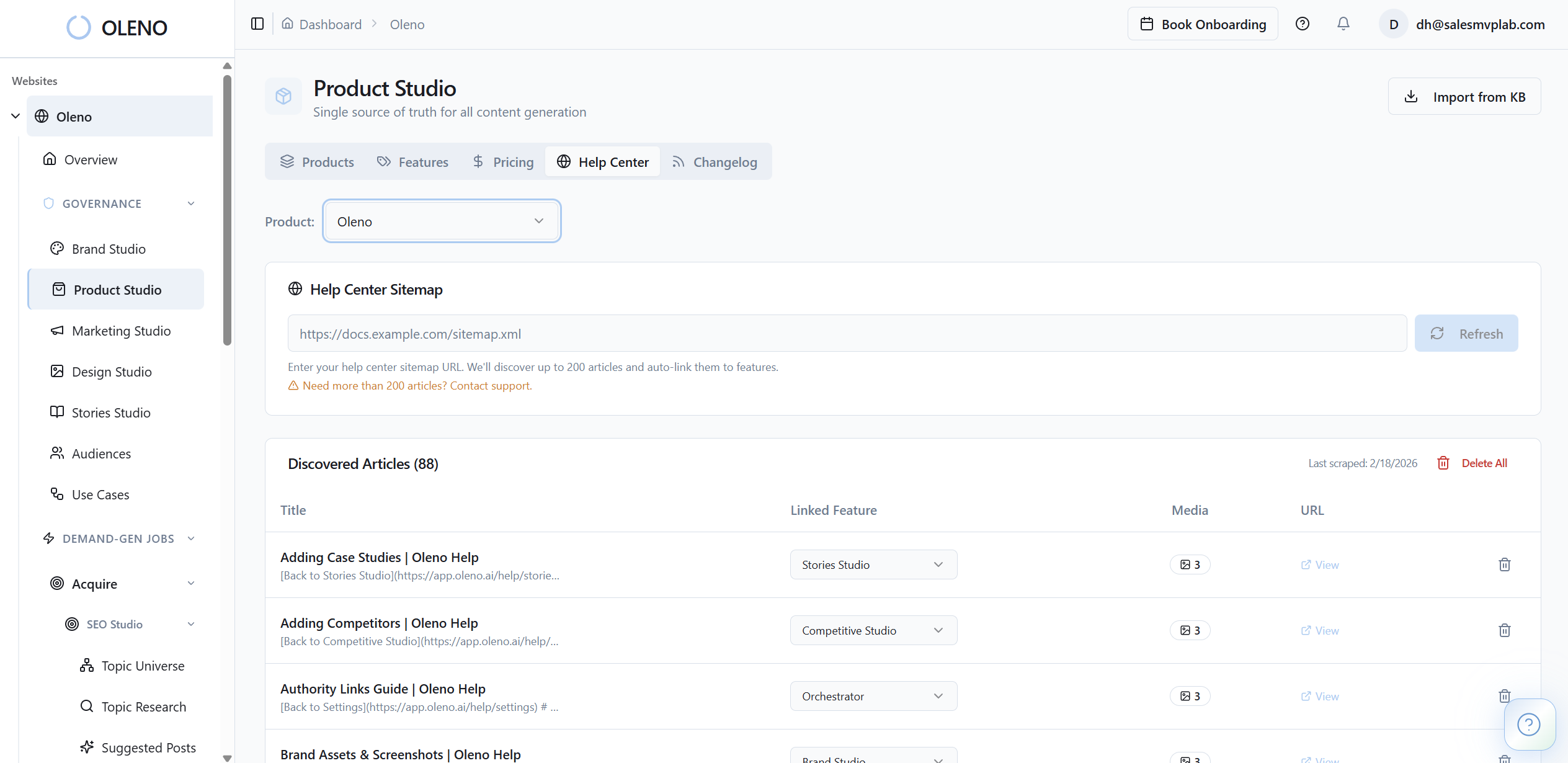

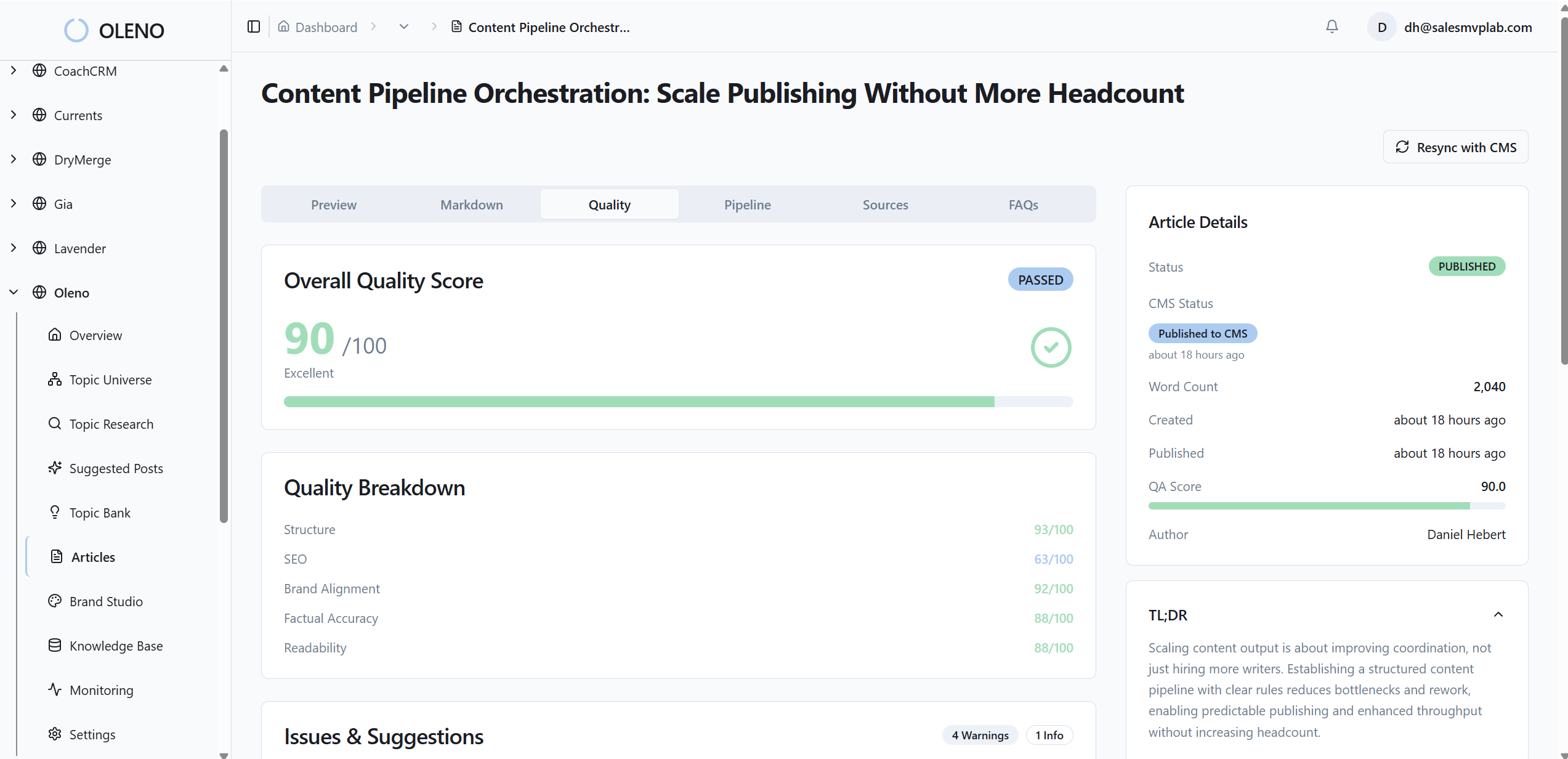

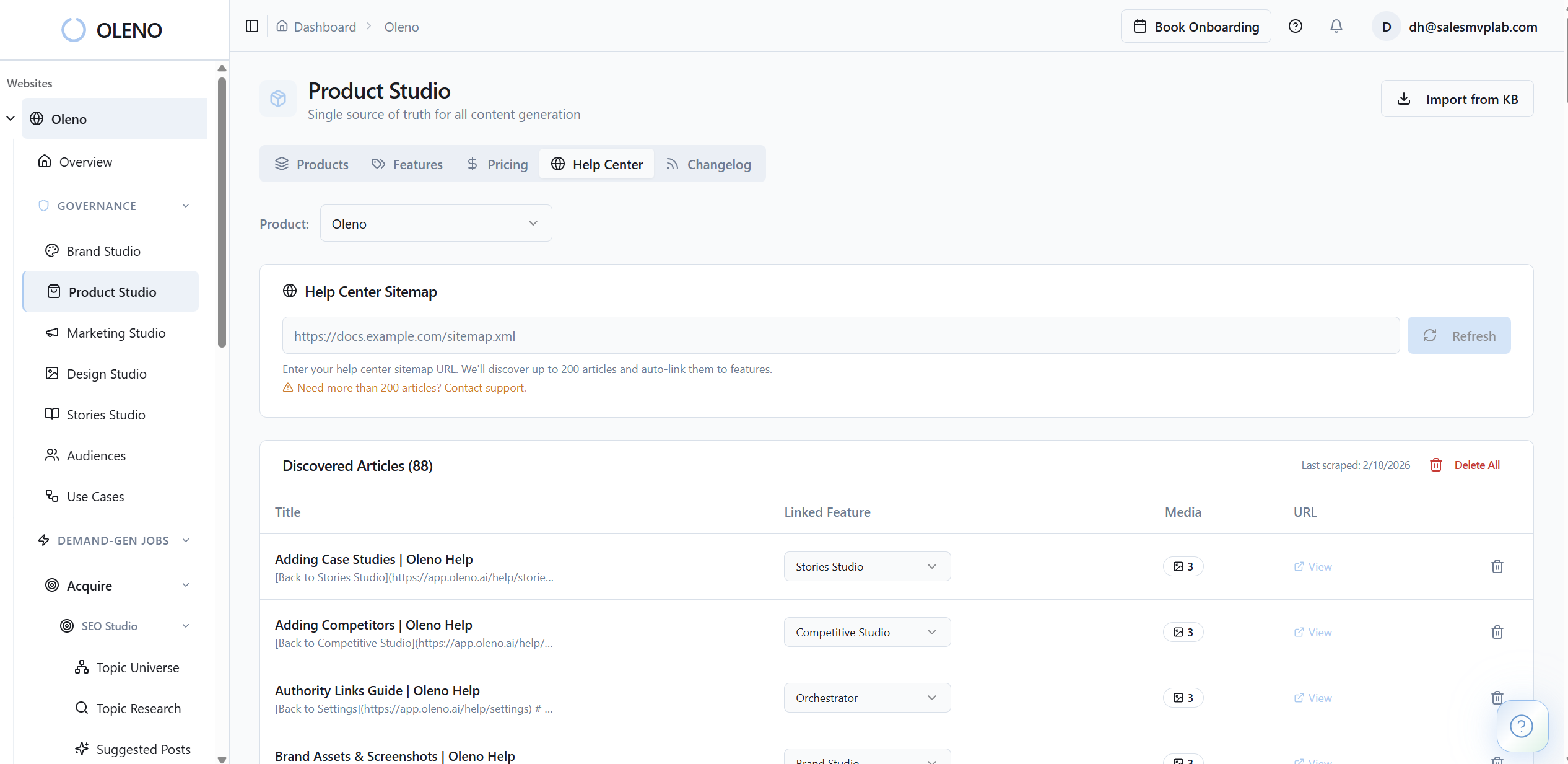

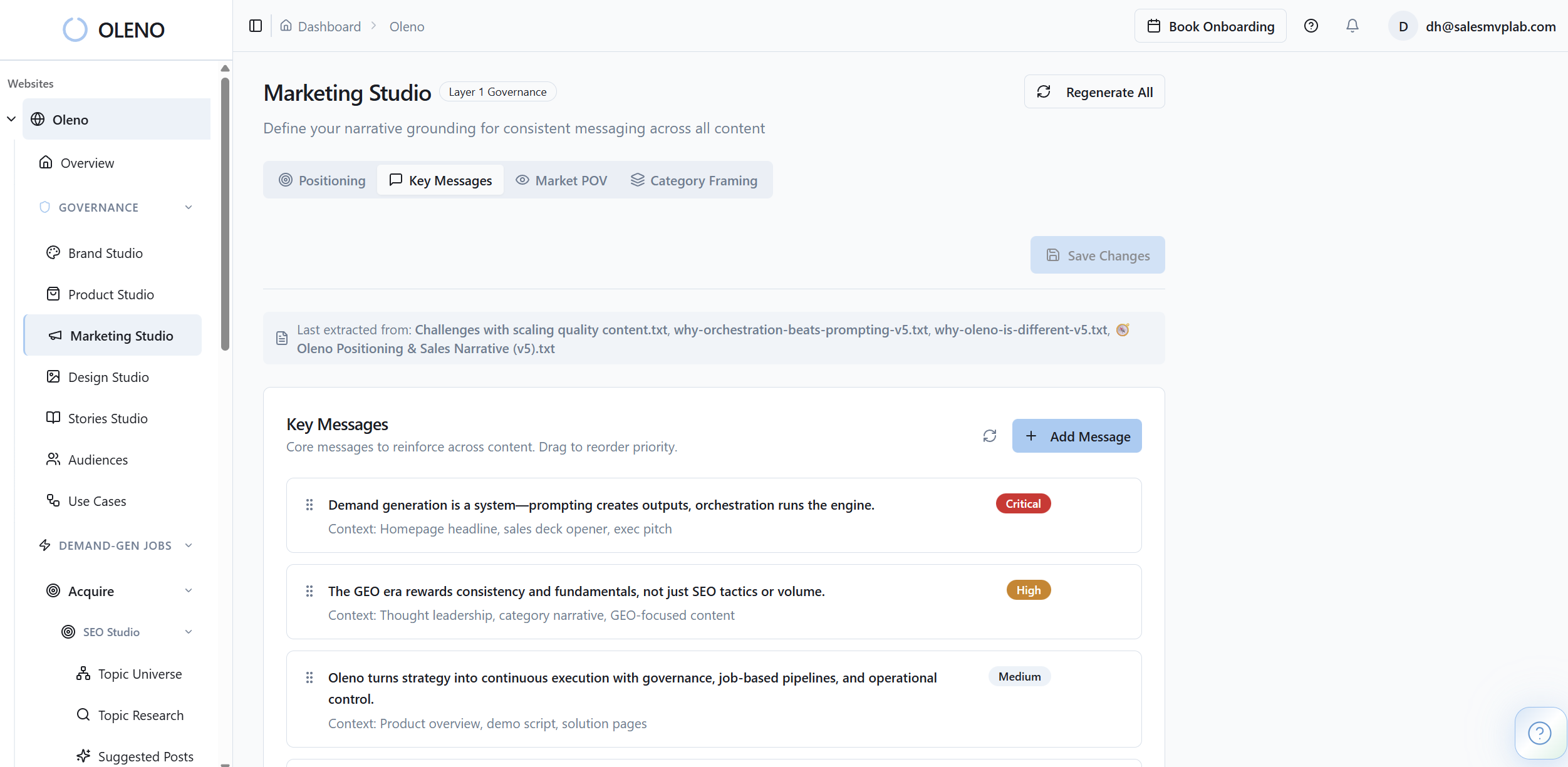

Apply The Framework To Oleno

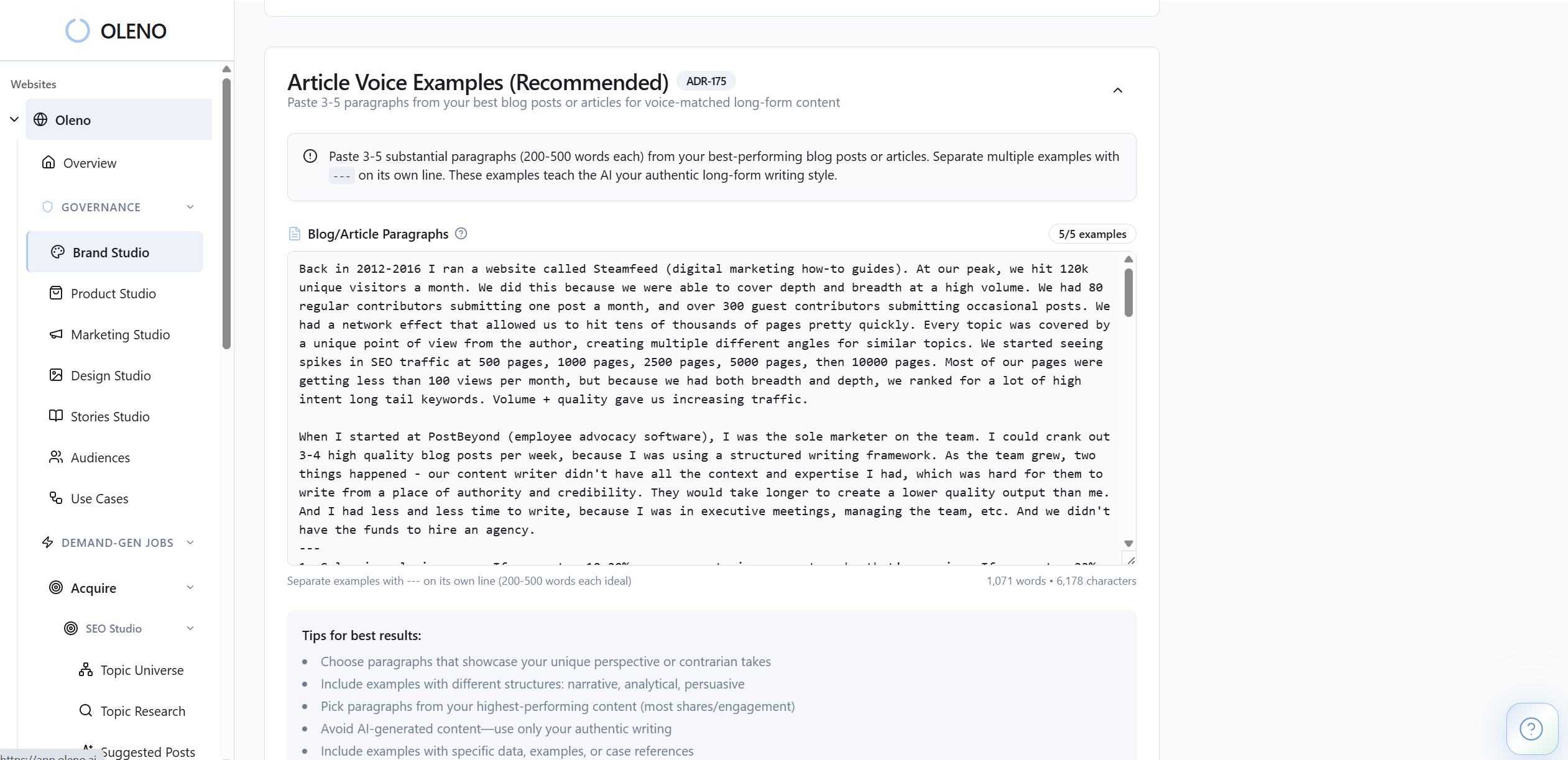

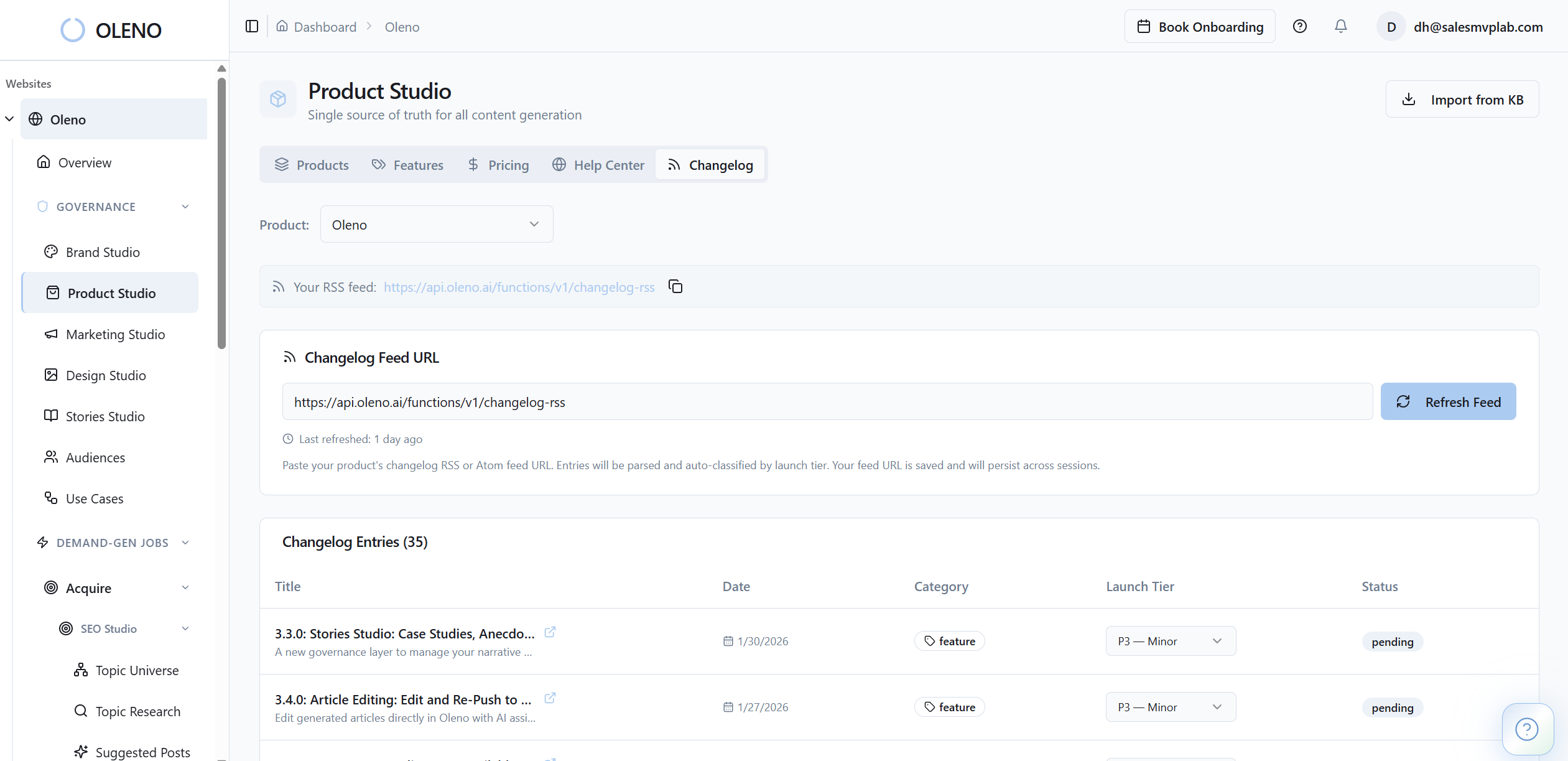

Oleno tends to make sense for growth-stage SaaS teams that already know what they want to say, but can't keep that truth intact through execution. The platform is positioned around planning, quality control, publishing flow, and maintaining brand and product context across repeated output. For a lean team, that matters because the goal isn't just to generate more drafts. It's to reduce the management work wrapped around them.

The practical question is whether Oleno can carry enough strategic context that your Head of Marketing stops acting like a translator between leadership, product, and whoever creates the content. That's the test. If it can reduce that translation layer, cut the Editing Tax, and keep output aligned across launch content, comparison pages, and buyer content, the adoption case gets stronger.

You should also evaluate this honestly against your current stage. If your positioning changes every week, or your team isn't producing enough content for process friction to matter yet, waiting may be smarter. But if your team has the strategy, the backlog, and the recurring headache of rework, it's probably worth putting Oleno through a live operating test.

A simple next step is to bring one real campaign, one comparison page, and one buyer enablement asset into a working session and see how the system handles them. If you want to do that with your actual workflow, book a demo and evaluate it against the four criteria above: context retention, draft quality, workflow load, and small-team fit.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS, in both sales and marketing leadership, for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which now power Oleno.

Frequently Asked Questions