Content Analytics Playbook: Attribute and A/B Test to Boost Conversions

I’ve lost count of how many content reviews I’ve sat through where the headline slide is monthly sessions. It’s comforting. It’s also misleading. The hard truth: traffic is a context metric. Conversions and revenue are the outcome. That’s what your CFO cares about. That’s what you can actually defend.

When we treated “more pageviews” as the goal, we won a few dashboards and lost the pipeline. I’ve been guilty of this. At Proposify, we ranked for broad topics, looked great in Search Console, and… demos stalled. That’s the lesson here. Measure the path from article to action, then optimize that path relentlessly.

Key Takeaways:

- Define 3–5 outcome KPIs tied to revenue and build up from there

- Treat measurement as an experiment pipeline, not a dashboard ritual

- Instrument content touchpoints and prove influence with clear rules

- Quantify waste from bad data, bots, and misattribution before fixing

- Make reversals normal: retest big wins before rolling out system-wide

- Use systems to encode learnings into briefs, structure, visuals, and links

Why Traffic-Only Reporting Misleads Content Teams

Traffic-only reporting misleads because it measures audience size, not progress toward revenue events. Conversion KPIs (trial starts, demos, expansions) tell you whether content actually moves pipeline. For example, at equal traffic, an article with a 2% assisted demo rate produces 20 times the demos of a viral post at 0.1%.

The metrics that actually matter for content-led growth

If you want content to drive real growth, define a small set of conversion KPIs and make traffic the supporting actor. Pick outcomes you can defend in a board meeting: free trial starts, sales-qualified demo requests, expansion upgrades. That’s it. Everything else should roll up to these numbers.

Then instrument the path from article to action. Which sections get read? Which CTAs get clicked? Where do people stall? Once those events are clean, you can talk conversion rate, assisted conversions, and incremental lift by content type. I like to keep the report tight: outcome, drivers, and what we’re changing next.

A lot of teams borrow KPIs from checklists. You don’t need to. A simple, outcome-first frame often beats a big toolkit. If you want a reference, the structure in the Content Strategy Playbook maps neatly to this outcome-first approach.

What is a conversion contact and why define it?

A “conversion contact” is your rule for when content gets legitimate credit for a revenue-facing action. Without that definition, every report becomes a debate. Set a lookback window (30–90 days is reasonable), define the required events (article view plus CTA click), and cap credit at one per session.

Document it in your analytics runbook. Be specific. What qualifies? Which events are required? How do you treat cross-device or cross-domain journeys? This is less about being perfect and more about being consistent so actions follow from data, not opinions.
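If it helps to see the rule written down, here is a minimal sketch of a conversion contact check. The 60-day lookback, the event names `article_view` and `cta_click`, and the `Event` shape are assumptions for illustration, not a prescribed schema; swap in whatever your runbook defines.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta

LOOKBACK = timedelta(days=60)  # assumed; the text suggests 30-90 days
REQUIRED_EVENTS = {"article_view", "cta_click"}  # hypothetical event names


@dataclass(frozen=True)
class Event:
    session_id: str
    name: str
    timestamp: datetime


def credited_sessions(events: list[Event], conversion_time: datetime) -> set[str]:
    """Return the session IDs that earn one conversion contact each."""
    window_start = conversion_time - LOOKBACK
    seen: dict[str, set[str]] = {}
    for event in events:
        # Only events inside the lookback window, before the conversion, count.
        if window_start <= event.timestamp <= conversion_time:
            seen.setdefault(event.session_id, set()).add(event.name)
    # Returning a set of session IDs caps credit at one per session.
    return {sid for sid, names in seen.items() if REQUIRED_EVENTS <= names}
```

A session with two CTA clicks still earns a single contact, and a session outside the window earns none. Cross-device stitching is deliberately left out here; that rule belongs in the runbook first.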

When you define this cleanly, conversations with leadership stop being “do we think content did this?” and start being “what do we ship next to improve it?” Small change. Big effect.

Why teams chase views and miss pipeline

It’s tempting to celebrate rising sessions. I get it. You’re under pressure, the line is moving up and to the right, and that graph feels like progress. The problem is that attention is not intent. Attribution by pageview encourages popularity, not impact.

Flip the model. Focus on conversion influence, not views. Your writers need to know which paragraphs, visuals, and CTAs pull prospects forward. The difference is night and day: we stop writing for applause and start writing for advancement. As one editor told me, “I want to win demos, not pageviews.”

Ready to skip the vanity metrics and operationalize what works? Try Oleno for Free.

Treat Measurement As An Experiment Pipeline, Not A Dashboard

Dashboards summarize history; they rarely prescribe the next move. An experiment pipeline takes hypotheses in, ships instrumented changes, and outputs decisions that update briefs and priorities. For example, a weekly cadence with decision thresholds turns insights into edits, not slides.

What traditional dashboards miss

Dashboards are great for “what happened.” They’re weak at “what should we change right now?” You need a loop that turns observations into actions: hypothesis, instrumented variant, threshold, decision, rollout. Weekly rhythm. One backlog. Winners become rules in your brief template, not a line item in “QBR Highlights.”

The shift seems small. It isn’t. When your pipeline is run like this, you get compounding effects. Writers internalize which moves matter. Editors know what to look for. And the backlog tracks decisions, not ideas. If you want a primer on touchpoint-level thinking, Adobe’s Introduction to Content Analytics is a straightforward starting point.

The minimum viable measurement system

Start lean so you can actually run the loop. Standard UTM taxonomy, canonical rules, a lightweight event schema for long-form, and a weekly review ritual. Ship one A/B test at a time. Roll the winner into the brief. Queue the next test. Less scatter, more signal.
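A standard UTM taxonomy only helps if something enforces it. Here is a rough sketch of a pre-publish check, assuming a small allow-list and three required parameters. The allowed values below are hypothetical; the point is the shape of the check, not the specific taxonomy.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical taxonomy; replace with the values your team has agreed on.
ALLOWED = {
    "utm_source": {"newsletter", "linkedin", "google"},
    "utm_medium": {"email", "social", "cpc"},
}
REQUIRED = ("utm_source", "utm_medium", "utm_campaign")


def utm_problems(url: str) -> list:
    """List taxonomy violations for one tagged URL (an empty list means clean)."""
    params = parse_qs(urlparse(url).query)
    problems = []
    for key in REQUIRED:
        values = params.get(key)
        if not values:
            problems.append(f"missing {key}")
            continue
        value = values[0]
        # Mixed case splits one source into several rows in most reports.
        if value != value.lower():
            problems.append(f"{key} is not lowercase: {value}")
        allowed = ALLOWED.get(key)
        if allowed is not None and value.lower() not in allowed:
            problems.append(f"{key} not in taxonomy: {value}")
    return problems
```

Run it over every link in a draft before publishing and fail the QA pass if any list comes back non-empty.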

Keep decision thresholds simple: expected lift, sample size math, and a “no rollout without confirmatory run” rule for big placements. After four stable weeks, add complexity if you must. Most teams won’t need to. The loop itself creates the discipline.
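Those thresholds can be written as a tiny decision function so the weekly review produces the same call no matter who runs it. This is a sketch under assumptions: the 10% minimum lift, the field names, and the four outcomes are mine, and it presumes significance was already checked when the required sample size was set.

```python
from dataclasses import dataclass


@dataclass
class ExperimentResult:
    observed_lift: float      # relative lift, e.g. 0.12 for +12%
    visitors_per_arm: int
    required_per_arm: int     # from your sample size calculation
    is_big_placement: bool
    confirmed: bool = False   # True once a confirmatory run also won


def decide(result: ExperimentResult, min_lift: float = 0.10) -> str:
    """Map one test result to a backlog decision."""
    if result.visitors_per_arm < result.required_per_arm:
        return "keep running"          # underpowered: do not read the result yet
    if result.observed_lift < min_lift:
        return "reject"
    if result.is_big_placement and not result.confirmed:
        return "rerun to confirm"      # no rollout without a confirmatory run
    return "roll out"
```

The order of the checks matters: sample size comes first, so nobody gets to celebrate an early spike.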

The Hidden Cost Of Vanity Metrics And Guesswork

Vanity metrics drain time and attention because they create false confidence and rework. You pay in engineering hours, bad decisions from bot-inflated data, and misallocated budget due to misattribution. For example, a full quarter of “traffic wins” can hide a flat pipeline.

Engineering hours lost to debugging tracking

Let’s pretend your team ships 12 articles this quarter. You then spend 20 hours a month chasing broken events, inconsistent UTMs, and odd CMS caching. Internal rate is $120/hour. That’s $7,200 per quarter with nothing new learned. Worse, trust takes a hit. People start ignoring the data.

Standardize events and cut this in half. Centralize your event schema. Add a pre-publish QA pass that validates that each event fires once per action, parameters persist across redirects, and internal traffic is excluded. Quick win. Those hours get reinvested into tests that actually change outcomes.

Let’s estimate the waste from misattribution

Last-touch-only models almost always under-value long-form content. Say content actually contributes 20% of assisted conversions, but your model credits 5%. On a $500,000 pipeline, content is driving about $100,000 while getting credit for $25,000, so roughly $75,000 of influence is invisible to your budget decisions. That’s material. It’s also fixable.
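Both back-of-envelope estimates in this section are easy to rerun with your own numbers. A minimal sketch, using the illustrative figures from the text (20 hours a month at $120/hour, and a $500,000 pipeline credited at 5% instead of 20%); the function names are mine.

```python
def debugging_cost(hours_per_month: float, hourly_rate: float, months: int = 3) -> float:
    """Quarterly cost of time spent chasing tracking problems."""
    return hours_per_month * months * hourly_rate


def uncredited_pipeline(pipeline: float, true_share_pct: float, credited_share_pct: float) -> float:
    """Pipeline value that content drives but the attribution model does not credit."""
    return pipeline * (true_share_pct - credited_share_pct) / 100


print(debugging_cost(20, 120))                 # 7200
print(uncredited_pipeline(500_000, 20, 5))     # 75000.0
```

The true share is the hard input. Treat it as a hypothesis until a holdout or multi-touch analysis backs it up.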

Run structured holdouts or multi-touch rules with clear evidence requirements. Social often gets the last click; content earns the heavy lifting upstream. If you need a sanity check on attribution thinking, the framing in Adobe Content Analytics is a useful counterweight to pageview-obsessed reporting.

When You Cannot Prove What Worked, Everything Feels Arbitrary

When you can’t explain what worked, strategy devolves into opinion battles. Confidence slips. So does momentum. Define proof rules now (events, lookbacks, thresholds) so the next leadership conversation is about next actions, not whose slide wins.

When leadership asks which pages drive signups

We’ve all been there. “Which pages drive signups?” Without event-level attribution, you point to traffic spikes and a few anecdotes. Unsatisfying. Instead, define the proof rules in advance: which events count, what “assisted” means, what the lookback window is, and how you’ll treat cross-session journeys.

Then bring the receipts. “These five articles drove a 1.8% assisted demo rate last 60 days; this CTA block contributed 40% of clicks.” Not perfect. Defensible. And now you can ask for the one thing that matters: permission to roll the winner across the cluster.
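To produce a number like that assisted demo rate, you need one agreed definition of “assisted.” Here is a sketch that assumes the simplest one: a visitor counts as assisted for an article if they viewed it and also requested a demo inside the lookback window. The input shapes and names are hypothetical, and your own proof rules may be stricter.

```python
from collections import defaultdict


def assisted_rate_by_article(views, converters):
    """Share of each article's visitors who went on to request a demo.

    views: iterable of (visitor_id, article_slug) pairs inside the lookback window.
    converters: set of visitor_ids with a demo request in the same window.
    """
    visitors = defaultdict(set)
    for visitor_id, slug in views:
        visitors[slug].add(visitor_id)  # a set counts each visitor once per article
    return {slug: len(ids & converters) / len(ids) for slug, ids in visitors.items()}
```

Repeat views by the same visitor do not inflate the denominator, which keeps the rate comparable across articles with very different re-read behavior.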

A quick story: ranking without demand

At Proposify, we ranked incredibly well for topics far from our product. The content was fun, the traffic graphs looked great, and sales didn’t move. It wasn’t the team’s effort. It was the gap between what we published and what the product solved. That lesson stuck with me.

Optimize for the conversion path you can influence. Not the biggest keyword. Your editorial voice still matters. Just make sure it points somewhere you actually want people to go.

Still living in dashboard land and gut calls? Take a lighter path. Try Generating 3 Free Test Articles Now.

A Practical Content Analytics Playbook You Can Run This Quarter

This quarter’s playbook: define outcome KPIs, instrument content touchpoints, run one disciplined test at a time, and protect data quality. For example, a single-variable CTA test with proper sample size and bot filtering can produce a reliable lift you’ll trust.

Define outcome KPIs and map content touchpoints

Pick 3–5 outcome KPIs tied to revenue: trial starts, demos, expansions. Map each to proving events on long-form pages: section view on pricing explainer, primary CTA click, form submit. Write this as a one-page spec with owners, definitions, and lookback windows.

Share it with writers. They need to know what the analytics team counts as progress. I’ve found that once the team sees how paragraphs tie to events, they start writing differently. More clarity. More intent. Less fluff. And you can measure movement in a straight line.

Design A/B tests for long-form content with sample size math

Test one variable per run: headline, CTA placement, inline visual, or social proof block. Calculate sample size from your baseline conversion rate and minimum detectable effect. Defaults that work: 80% power, 95% confidence (a 5% significance level). If the test is underpowered, pause and accumulate traffic. Don’t guess.
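For the sample size math, here is a sketch using the standard normal-approximation formula for comparing two proportions with a two-sided test. It relies only on Python’s standard library. Expressing the minimum detectable effect as a relative lift is my assumption; if your team thinks in absolute points, adjust how `p2` is computed.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline: float, relative_mde: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in each variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Example: 2% baseline CTA conversion, hoping to detect a 25% relative lift.
print(sample_size_per_arm(0.02, 0.25))
```

At a 2% baseline, detecting a 25% relative lift takes well over ten thousand visitors per arm. That is usually the moment teams realize why low-traffic articles need bigger swings or longer runs.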

Segment only when you have volume to support it. Source and device splits can create useful nuance, but only if you can hit your thresholds. Otherwise, you’ll “discover” patterns that disappear the minute you roll out. It happens more than teams admit.

Protect data quality with QA and bot filtering

Publish with a measurement checklist. Validate that events fire once per action, attribution parameters pass through redirects, and internal traffic is excluded. Turn on bot filters. Drop impossible scroll speeds and zero-duration sessions. Short holdout tests can sanity check suspicious wins.
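Two of those checks are simple enough to automate. The sketch below drops implausible sessions and flags events that fired more than once for the same action. The scroll-speed ceiling is an assumed placeholder, not a benchmark, and the `Session` fields and event tuple shape are hypothetical; tune and rename them against your own logs.

```python
from collections import Counter
from dataclasses import dataclass

MAX_SCROLL_PX_PER_SEC = 5000  # assumed ceiling; calibrate on known-human sessions


@dataclass
class Session:
    session_id: str
    duration_sec: float
    scroll_px: float
    is_internal: bool = False


def is_plausible(session: Session) -> bool:
    """Keep only external sessions with non-zero duration and human scroll speed."""
    if session.is_internal or session.duration_sec <= 0:
        return False
    return session.scroll_px / session.duration_sec <= MAX_SCROLL_PX_PER_SEC


def duplicate_fires(events):
    """events: iterable of (session_id, action_id, event_name) tuples.

    Returns the tuples that appear more than once, i.e. double-fired events.
    """
    counts = Counter(events)
    return sorted(key for key, count in counts.items() if count > 1)
```

Filter sessions with `is_plausible` before computing any conversion rate, and treat a non-empty `duplicate_fires` result as a publish blocker.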

When results look too good, sample raw logs. You don’t need to do this weekly. Just when something feels off. A small dose of skepticism prevents a lot of rework later.

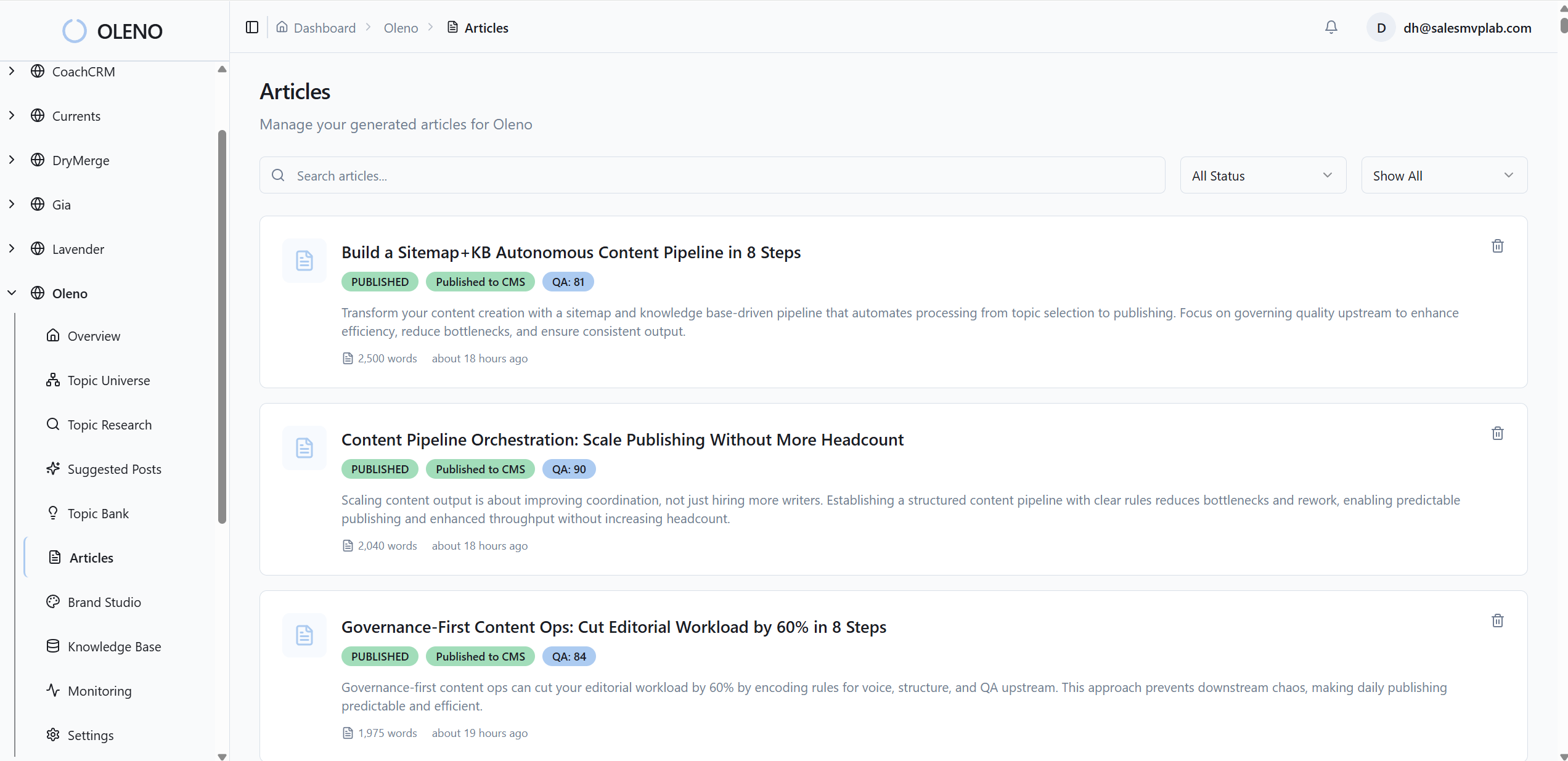

How Oleno Turns Experiment Insights Into Better Content Decisions

Oleno turns your proven insights into repeatable execution by updating strategy, briefs, structure, visuals, and internal links automatically. It’s not an analytics platform; you bring the measurement. Oleno ensures the wins show up everywhere they should, every time.

Translate experiment winners into Topic Universe priorities

Use your analytics stack to identify winning topics and sections. Then feed those into Topic Universe so high-impact gaps move up the queue. Oleno prioritizes where authority should grow next based on configured focus areas and your knowledge base, not guesswork.

The benefit is simple: fewer low-impact drafts and more coverage where conversions actually happen. When the strategy layer is alive to your data, publishing stops being random. It compounds. Oleno keeps that loop running without manual coordination.

Encode insights into briefs with Information Gain scoring

Turn wins into rules. If testimonial placement lifted demo requests, bake that into the brief template. Oleno’s Information Gain scoring helps ensure each outline adds something new versus what already exists. Low-differentiation briefs get flagged before writing begins.

Writers start from a brief that reflects what moved the needle. That’s the goal. No heroics. Just consistent, differentiated articles that align with how prospects actually convert.

Deterministic internal linking supports your attribution paths

If your measurement shows a reliable path (say, feature explainer → comparison guide → primary CTA), make the path consistent. Oleno injects internal links deterministically using only verified sitemap URLs, with exact-match anchor text and rules-based placement.

Because links are code-driven, not ad hoc, the paths you want people to follow remain intact across articles. That reduces randomness in journeys and makes your assisted conversion math more stable.

Snippet-ready structure and product visuals align with conversion hotspots

Sections that drive engagement should be crystal clear. Oleno opens every H2 with a snippet-ready, three-sentence answer, designed for scanning and citation. Visual Studio adds brand-consistent images and prioritizes product visuals in the solution areas where they help readers decide.

Alt text and filenames are generated automatically, and visuals are placed intentionally, not randomly. The result is predictable clarity where it matters most: the exact blocks your tests show are doing the work.

Important note: Oleno doesn’t measure performance. It operationalizes your learnings, across Topic Universe, Information Gain, snippet-ready structure, deterministic links, schema, visuals, QA, and publishing, so the system keeps improving.

Want to see this system turn your winning ideas into consistent outputs? Try Using an Autonomous Content Engine for Always-On Publishing.

Conclusion

Here’s the punchline. Make traffic the context, not the headline. Build a small, disciplined experiment pipeline. Prove influence with clean events and clear rules. Then let a system carry your wins forward, into briefs, structure, visuals, and links, so each new article starts smarter than the last. That’s how content compounds into pipeline, quarter after quarter.

About Daniel Hebert

I'm the founder of Oleno, SalesMVP Lab, and yourLumira. I've been working in B2B SaaS in both sales and marketing leadership for 13+ years. I specialize in building revenue engines from the ground up. Over the years, I've codified writing frameworks, which are now powering Oleno.

Frequently Asked Questions